The world was introduced to the concept of shape-changing robots in 1991, with the T-1000 featured in the cult movie Terminator 2: Judgment Day. Since then (if not before), many a scientist has dreamed of creating a robot with the ability to change its shape to perform diverse tasks.

And indeed, we’re starting to see some of these things come to life – like this “magnetic turd” from the Chinese University of Hong Kong, for example, or this liquid metal Lego man, capable of melting and re-forming itself to escape from jail. Both of these, though, require external magnetic controls. They can’t move independently.

But a research team at MIT is working on developing ones that can. They’ve developed a machine-learning technique that trains and controls a reconfigurable ‘slime’ robot that squishes, bends, and elongates itself to interact with its environment and external objects. Disappointing side note: the robot’s not made of liquid metal.

TERMINATOR 2: JUDGMENT DAY Clip – “Hospital Escape” (1991)

“When people think about soft robots, they tend to think about robots that are elastic, but return to their original shape,” said Boyuan Chen, from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and co-author of the study outlining the researchers’ work. “Our robot is like slime and can actually change its morphology. It is very striking that our method worked so well because we are dealing with something very new.”

The researchers had to devise a way of controlling a slime robot that doesn’t have arms, legs, or fingers – or indeed any sort of skeleton for its muscles to push and pull against – or indeed, any set location for any of its muscle actuators. A form so formless, and a system so endlessly dynamic… These present a nightmare scenario: how on Earth are you supposed to program such a robot’s movements?

Clearly any kind of standard control scheme would be useless in this scenario, so the team turned to AI, leveraging its immense capability to deal with complex data. And they developed a control algorithm that learns how to move, stretch, and shape said blobby robot, sometimes multiple times, to complete a particular task.


Reinforcement learning is a machine-learning technique that trains software to make decisions using trial and error. It’s great for training robots with well-defined moving parts, like a gripper with ‘fingers,’ that can be rewarded for actions that move it closer to a goal – for example, picking up an egg. But what about a formless soft robot that’s controlled by magnetic fields?
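To make the reward idea concrete, here is a minimal Python sketch for the gripper case. It is only an illustration: the names `progress_reward` and `best_move` are hypothetical, and real reinforcement learning trains a policy over many episodes rather than greedily scoring a handful of candidate moves as this toy does.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def progress_reward(before: Point, after: Point, goal: Point) -> float:
    """Positive when a move brought the gripper closer to the goal, negative otherwise."""
    return math.dist(before, goal) - math.dist(after, goal)


def best_move(position: Point, goal: Point, moves: Sequence[Point]) -> Point:
    """Try every candidate move and keep the one that earns the highest reward."""

    def apply(move: Point) -> Point:
        return (position[0] + move[0], position[1] + move[1])

    return max(moves, key=lambda m: progress_reward(position, apply(m), goal))


if __name__ == "__main__":
    goal = (3.0, 4.0)
    candidates = [(1.0, 0.0), (0.0, 1.0), (-1.0, 0.0)]
    print(best_move((0.0, 0.0), goal, candidates))
```

With a few well-defined joints, the list of candidate moves stays small. With thousands of independent muscle points, it does not, which is the problem the researchers describe.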

“Such a robot could have thousands of small pieces of muscle to control,” Chen said. “So it is very hard to learn in a traditional way.”

A slime robot requires large chunks of it to be moved at a time to achieve a functional and effective shape change; manipulating single particles wouldn’t result in the substantial change required. So, the researchers used reinforcement learning in a nontraditional way.

Huang et al.

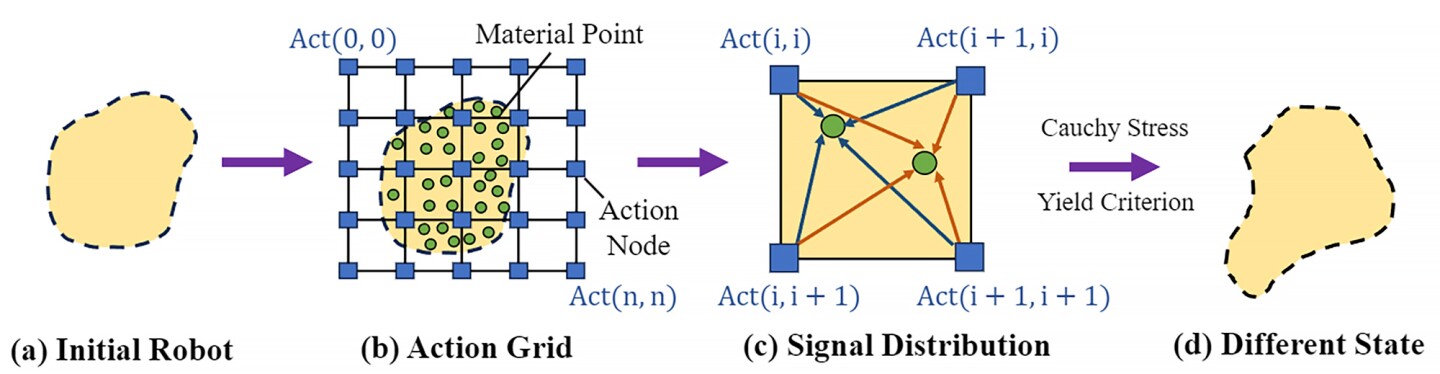

In reinforcement learning, the set of all valid actions, or choices, available to an agent as it interacts with an environment is called an ‘action space.’ Here, the robot’s action space was treated like an image made up of pixels. Their model used images of the robot’s environment to generate a 2D action space covered by points overlaid with a grid.

In the same way nearby pixels in an image are related, the researchers’ algorithm understood that nearby action points had stronger correlations. So, action points around the robot’s ‘arm’ will move together when it changes shape; action points on the ‘leg’ will also move together, but differently from the arm’s movement.
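One way to picture an image-like action space is as a small grid of actuation values that each piece of the robot looks up by position. The Python sketch below assumes positions normalized to the unit square and a 2D actuation vector per cell; the names `cell_of` and `actions_for_particles` are hypothetical and are not taken from the researchers’ code.

```python
from typing import List, Tuple

Action = Tuple[float, float]      # assumed 2D actuation vector for one grid cell
ActionGrid = List[List[Action]]   # rows x cols "image" of actions


def cell_of(x: float, y: float, rows: int, cols: int) -> Tuple[int, int]:
    """Map a position in the unit square to the (row, col) of its grid cell."""
    row = min(int(y * rows), rows - 1)
    col = min(int(x * cols), cols - 1)
    return row, col


def actions_for_particles(
    grid: ActionGrid, particles: List[Tuple[float, float]]
) -> List[Action]:
    """Give every particle the action stored in the grid cell it falls into."""
    rows, cols = len(grid), len(grid[0])
    return [grid[r][c] for r, c in (cell_of(x, y, rows, cols) for x, y in particles)]


if __name__ == "__main__":
    grid = [
        [(1.0, 0.0), (0.0, 1.0)],
        [(-1.0, 0.0), (0.0, -1.0)],
    ]
    arm = [(0.10, 0.10), (0.20, 0.15)]   # two nearby particles, same cell
    leg = [(0.90, 0.90)]                 # a distant particle, different cell
    print(actions_for_particles(grid, arm + leg))
```

Because neighboring particles share a cell, they receive the same action and move together, while a distant part of the body can be driven differently.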

The researchers also developed an algorithm with ‘coarse-to-fine policy learning.’ First, the algorithm is trained using a low-resolution coarse policy – that is, moving large chunks – to explore the action space and identify meaningful action patterns. Then, a higher-resolution, fine policy delves deeper to optimize the robot’s actions and improve its ability to perform complex tasks.
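The coarse-to-fine idea can be sketched in a few lines of Python. This example assumes that the fine stage adds a small per-cell correction on top of an upsampled coarse action grid; that is one plausible reading of the description, not necessarily how the researchers combine the two policies. The function names `upsample` and `refine` are hypothetical, and actions are reduced to single numbers for brevity.

```python
from typing import List

Grid = List[List[float]]


def upsample(coarse: Grid, factor: int) -> Grid:
    """Nearest-neighbour upsampling: each coarse cell becomes a factor x factor block."""
    fine: Grid = []
    for row in coarse:
        wide = [value for value in row for _ in range(factor)]
        fine.extend(list(wide) for _ in range(factor))
    return fine


def refine(coarse: Grid, correction: Grid, factor: int) -> Grid:
    """Add a fine-resolution correction on top of the upsampled coarse action."""
    base = upsample(coarse, factor)
    if len(correction) != len(base) or any(
        len(c_row) != len(b_row) for c_row, b_row in zip(correction, base)
    ):
        raise ValueError("correction must match the upsampled grid shape")
    return [
        [b + c for b, c in zip(b_row, c_row)]
        for b_row, c_row in zip(base, correction)
    ]


if __name__ == "__main__":
    coarse_action = [[1.0]]                      # one big chunk moves together
    fine_correction = [[0.0, 0.5], [-0.5, 0.0]]  # small local adjustments
    print(refine(coarse_action, fine_correction, factor=2))
```

A random change to a single coarse cell shifts a whole block of the fine grid at once, which matches the intuition that coarse exploration produces large, visible effects before the fine stage tunes the details.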


“Coarse-to-fine means that when you take a random action, that random action is likely to make a difference,” said Vincent Sitzmann, a study co-author who’s also from CSAIL. “The change in the outcome is likely very significant because you coarsely control several muscles at the same time.”

Next was to test their approach. They created a simulation environment called DittoGym, which features eight tasks that evaluate a reconfigurable robot’s ability to change shape. For example, having the robot match a letter or symbol and making it grow, dig, kick, catch, and run.

MIT’s slime robot control scheme: Examples

“Our task selection in DittoGym follows both generic reinforcement learning benchmark design principles and the specific needs of reconfigurable robots,” said Suning Huang from the Department of Automation at Tsinghua University, China, a visiting researcher at MIT and study co-author.

“Each task is designed to represent certain properties that we deem important, such as the capability to navigate through long-horizon explorations, the ability to analyze the environment, and interact with external objects,” Huang continued. “We believe they together can give users a comprehensive understanding of the flexibility of reconfigurable robots and the effectiveness of our reinforcement learning scheme.”


The researchers found that, in terms of efficiency, their coarse-to-fine algorithm outperformed the alternatives (e.g., coarse-only or fine-from-scratch policies) consistently across all tasks.

It will be some time before we see shape-changing robots outside the lab, but this work is a step in the right direction. The researchers hope that it will inspire others to develop their own reconfigurable soft robot that, one day, could traverse the human body or be incorporated into a wearable device.

The study was published on the pre-print website arXiv.

Source: MIT