Understanding the distribution of data is one of the most important parts of performing data analysis. Visualizing the distribution helps us understand the patterns, trends, and anomalies that might be hidden in raw numbers. While histograms are often used for this purpose, they can sometimes be too blocky to show subtle details. Kernel Density Estimation (KDE) plots provide a smoother way to visualize continuous data by estimating its probability density function. This allows data scientists and analysts to see important features such as multiple peaks, skewness, and outliers more clearly. Learning to use KDE plots is a valuable skill for getting better insights from data. In this article, we'll go over KDE plots and their implementations.

What are Kernel Density Estimation (KDE) Plots?

Kernel Density Estimation (KDE) is a non-parametric method for estimating the probability density function (PDF) of a continuous random variable. Simply put, KDE produces a smooth curve (the density estimate) that approximates the distribution of the data, rather than using separate bins as a histogram does. Conceptually, we place a "kernel" (a smooth, symmetric function) on each data point and add them up to form a continuous density. Mathematically, if we have data points $x_1, \dots, x_n$, then the KDE at a point $x$ is:

$$\hat{f}_h(x) = \frac{1}{nh} \sum_{i=1}^{n} K\left(\frac{x - x_i}{h}\right)$$

where K is the kernel (usually a bell-shaped function) and h is the bandwidth (a smoothing parameter). Since no fixed form such as "normal" or "exponential" is assumed for the distribution, KDE is called a non-parametric estimator. KDE "smooths a histogram" by turning each data point into a small hill; all these hills together make up the total density (as can be seen from the following diagram).
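To make the formula concrete, here is a minimal NumPy sketch that evaluates the estimator directly, assuming a Gaussian kernel. The function names `gaussian_kernel` and `kde` are hypothetical helpers for illustration, not part of any library.

```python
import numpy as np


def gaussian_kernel(u):
    """Standard normal density: a smooth, symmetric kernel that integrates to 1."""
    return np.exp(-0.5 * u**2) / np.sqrt(2 * np.pi)


def kde(x, data, h):
    """Evaluate f_hat(x) = 1/(n*h) * sum_i K((x - x_i) / h) at every point of x."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    data = np.asarray(data, dtype=float)
    # u has shape (len(x), n): scaled distance from each evaluation point to each sample
    u = (x[:, None] - data[None, :]) / h
    # Sum one kernel "hill" per data point, then normalize by n*h
    return gaussian_kernel(u).sum(axis=1) / (len(data) * h)


rng = np.random.default_rng(0)
samples = rng.normal(loc=0.0, scale=1.0, size=200)
grid = np.linspace(-6, 6, 1201)
density = kde(grid, samples, h=0.4)

# The estimate is a valid density: non-negative and (numerically) integrating to 1
step = grid[1] - grid[0]
print(round(density.sum() * step, 3))
```

The broadcasting step builds every (evaluation point, sample) pair at once. This is simple but uses memory proportional to len(x) × n, so it suits small examples rather than large datasets.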

Different kinds of kernel functions are used depending on the use case. For example, the Gaussian (or normal) kernel is popular because of its smoothness, but others such as Epanechnikov (parabolic), uniform, triangular, biweight, or even triweight can also be used. By default, many libraries use a Gaussian kernel, meaning each data point contributes a bell-shaped bump to the estimate. The Epanechnikov kernel is optimal in the sense that it minimises the asymptotic mean integrated squared error among kernels, but the Gaussian is still often picked simply for convenience.
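The kernels named above differ only in the shape of each bump. The sketch below defines them with their standard textbook formulas; the compactly supported ones are scaled to the interval [-1, 1]. Any of them can then be plugged into the same estimator. The `KERNELS` dictionary and `kde_with_kernel` function are hypothetical names for illustration.

```python
import numpy as np

# Common kernels K(u); each is symmetric and integrates to 1.
KERNELS = {
    "gaussian": lambda u: np.exp(-0.5 * u**2) / np.sqrt(2 * np.pi),
    "epanechnikov": lambda u: np.where(np.abs(u) <= 1, 0.75 * (1 - u**2), 0.0),
    "uniform": lambda u: np.where(np.abs(u) <= 1, 0.5, 0.0),
    "triangular": lambda u: np.where(np.abs(u) <= 1, 1 - np.abs(u), 0.0),
    "biweight": lambda u: np.where(np.abs(u) <= 1, 15 / 16 * (1 - u**2) ** 2, 0.0),
    "triweight": lambda u: np.where(np.abs(u) <= 1, 35 / 32 * (1 - u**2) ** 3, 0.0),
}


def kde_with_kernel(x, data, h, kernel="gaussian"):
    """Evaluate f_hat(x) = 1/(n*h) * sum_i K((x - x_i) / h) with the named kernel."""
    K = KERNELS[kernel]
    x = np.asarray(x, dtype=float)
    data = np.asarray(data, dtype=float)
    u = (x[:, None] - data[None, :]) / h
    return K(u).sum(axis=1) / (len(data) * h)


data = np.array([0.0, 1.0, 2.5])
xs = np.linspace(-4, 7, 2201)
for name in ("gaussian", "epanechnikov", "uniform"):
    d = kde_with_kernel(xs, data, h=0.5, kernel=name)
    print(f"{name:>12}: peak density {d.max():.3f}")
```

With the bandwidth held fixed, the estimates differ mainly in how the edges of each bump behave. The uniform kernel gives a blocky, histogram-like curve, while the Gaussian never drops exactly to zero.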

Density plots are very useful in analysing data because they show the shape of a distribution. They work well for large datasets and can reveal features (such as multiple peaks or long tails) that a histogram might hide. For example, KDE plots can catch bimodal or skewed shapes that tell you about subgroups or outliers. When exploring a new numeric variable, plotting a KDE is often one of the first things people do. In some fields (such as signal processing or econometrics), KDE is also called the Parzen-Rosenblatt window method.

Key Concepts

Here are the key things to keep in mind when learning how a KDE plot works:

- Non-parametric PDF estimation: KDE does not assume a form for the underlying distribution. It builds a smooth estimate directly from the data.

- Kernel functions: A kernel K (e.g., Gaussian) is a symmetric weighting function. Common choices include Gaussian, Epanechnikov, uniform, etc. The choice has only a small effect on the result as long as the bandwidth is adjusted accordingly.

- Bandwidth (smoothing): The parameter h (or, equivalently, bw) scales the kernel. Larger h yields smoother (wider) curves; smaller h yields tighter, more detailed curves. The optimal bandwidth typically scales like $n^{-1/5}$.

- Bias-variance tradeoff: A key consideration is balancing detail versus smoothness. Too small an h leads to a noisy estimate; too large an h can oversmooth important peaks or valleys.
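You can see the bias-variance tradeoff by counting the local maxima (modes) of the estimate as h changes. The sketch below assumes a Gaussian kernel and a synthetic two-cluster sample. The helpers `gaussian_kde_1d` and `count_modes` are hypothetical names written for this example.

```python
import numpy as np


def gaussian_kde_1d(x, data, h):
    """Gaussian KDE with absolute bandwidth h, evaluated at the points x."""
    u = (x[:, None] - data[None, :]) / h
    return np.exp(-0.5 * u**2).sum(axis=1) / (len(data) * h * np.sqrt(2 * np.pi))


def count_modes(density):
    """Count interior local maxima of a curve sampled on a grid."""
    rises_into = density[1:-1] > density[:-2]
    falls_after = density[1:-1] >= density[2:]
    return int(np.sum(rises_into & falls_after))


rng = np.random.default_rng(42)
# Two synthetic clusters: 300 points near -2 and 500 points near 3
data = np.concatenate([rng.normal(-2, 0.5, 300), rng.normal(3, 1.0, 500)])
grid = np.linspace(-10, 12, 4401)

for h in (0.05, 0.4, 3.0):
    modes = count_modes(gaussian_kde_1d(grid, data, h))
    print(f"h = {h:<4}: {modes} mode(s)")
```

A tiny h produces many spurious bumps (high variance). A moderate h recovers the two clusters. A large h merges them into a single hill (high bias). For the Gaussian kernel in one dimension, the number of modes can only stay the same or decrease as h grows.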

Using KDE Plots in Python

Both Seaborn (built on Matplotlib) and pandas make it easy to create KDE plots in Python. Below, I'll show some usage patterns, parameters, and customisation tips.

Seaborn’s kdeplot

First, let's use the seaborn.kdeplot function. It plots univariate (or bivariate) KDE curves for a dataset. Internally, it uses a Gaussian kernel and exposes many other options, such as bw_adjust for scaling the bandwidth. For example, here is how to plot the distribution of the sepal_width variable from the Iris dataset.

Univariate KDE Plot Using Seaborn (Iris Dataset Example)

The following example demonstrates how to create a KDE plot for a single continuous variable.

```python
import seaborn as sns
import matplotlib.pyplot as plt

# Load example dataset
df = sns.load_dataset('iris')

# Plot 1D KDE
sns.kdeplot(data=df, x='sepal_width', fill=True)
plt.title("KDE of Iris Sepal Width")
plt.xlabel("Sepal Width")
plt.ylabel("Density")
plt.show()
```

The resulting plot shows a smooth density curve of the sepal_width values. The fill=True argument shades the area under the curve; with fill=False, only the dark blue line would be visible.

Comparing KDE Plots Across Categories

So far, we have seen simple univariate KDE plots. Now let's look at one of the most powerful uses of Seaborn's kdeplot function: its ability to compare distributions across subgroups using the hue parameter.

Let's say we want to analyse how the distribution of total restaurant bills differs between lunch and dinner. For this, let's use the tips dataset. With it, we can overlay two KDE plots, one for Lunch and one for Dinner, on the same axes for direct comparison.

```python
import seaborn as sns
import matplotlib.pyplot as plt

tips = sns.load_dataset('tips')

# One density curve per meal time, each normalized independently
sns.kdeplot(data=tips, x='total_bill', hue="time", fill=True,
            common_norm=False, alpha=0.5)

plt.title("KDE of Total Bill (Lunch vs Dinner)")
plt.show()
```

The code above overlays two density curves. The fill=True argument shades under each curve to make the difference more visible. The common_norm=False setting ensures that each group's density is scaled independently. Finally, alpha=0.5 adds transparency so the overlapping areas are easy to interpret.

You can also experiment with multiple='layer', 'stack', or 'fill' to change how multiple densities are shown.
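To see what these options change numerically, here is a rough NumPy re-creation of the normalizations. With common_norm=True (Seaborn's default), each group's curve is scaled by its share of the observations, so the areas of all curves sum to 1. With common_norm=False, each curve integrates to 1 on its own. The multiple='fill' option shows each group's share of the stacked density at every x.

This sketch rests on several assumptions and is not Seaborn's implementation. It uses a fixed bandwidth for every group and synthetic "lunch" and "dinner" bills rather than the real tips data, and the helper `gaussian_kde_1d` is hypothetical.

```python
import numpy as np


def gaussian_kde_1d(x, data, h):
    """Gaussian KDE with absolute bandwidth h; integrates to 1 over the real line."""
    u = (x[:, None] - data[None, :]) / h
    return np.exp(-0.5 * u**2).sum(axis=1) / (len(data) * h * np.sqrt(2 * np.pi))


rng = np.random.default_rng(1)
# Hypothetical groups of unequal size (not the real tips dataset)
groups = {
    "Lunch": rng.normal(17, 5, 70),
    "Dinner": rng.normal(21, 8, 170),
}
total = sum(len(v) for v in groups.values())
x = np.linspace(-30, 70, 2001)
step = x[1] - x[0]

# common_norm=False: every group's curve has area 1
independent = {k: gaussian_kde_1d(x, v, h=2.0) for k, v in groups.items()}
# common_norm=True: weight each curve by its group's share of all observations
common = {k: d * len(groups[k]) / total for k, d in independent.items()}

for k in groups:
    print(k, "area (independent):", round(independent[k].sum() * step, 3),
          "area (common):", round(common[k].sum() * step, 3))

# multiple='fill': each group's fraction of the stacked density at every x
stacked = sum(common.values())
fill = {k: np.divide(d, stacked, out=np.zeros_like(d), where=stacked > 0)
        for k, d in common.items()}
```

With groups of very different sizes, common_norm=True makes the smaller group's curve look flatter, which is why the example in the text turns it off.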

Pandas and Matplotlib

If you're working with pandas, you can also use its built-in plotting to get KDE plots. A pandas Series has a plot(kind='density') or plot.density() method. It estimates the density with SciPy's gaussian_kde and draws the curve with Matplotlib.

Code:

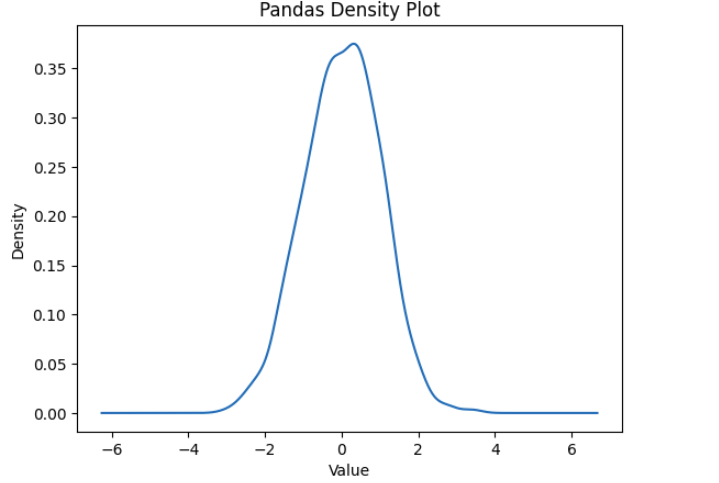

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

data = np.random.randn(1000)  # 1000 random points from a standard normal distribution
s = pd.Series(data)

s.plot(kind='density')  # same as s.plot.density(); requires SciPy
plt.title("Pandas Density Plot")
plt.xlabel("Value")
plt.show()
```

Alternatively, we can compute and plot the KDE manually using SciPy's gaussian_kde class.

```python
import numpy as np
import matplotlib.pyplot as plt
from scipy.stats import gaussian_kde

# Bimodal sample: 300 points around -2 and 500 points around 3
data = np.concatenate([np.random.normal(-2, 0.5, 300),
                       np.random.normal(3, 1.0, 500)])

# bw_method can be a scalar factor (multiplied by the data's standard
# deviation) or one of the strings 'scott' / 'silverman'
kde = gaussian_kde(data, bw_method=0.3)

xs = np.linspace(data.min(), data.max(), 200)
density = kde(xs)

plt.plot(xs, density)
plt.title("Manual KDE via SciPy")
plt.xlabel("Value"); plt.ylabel("Density")
plt.show()
```

The code above creates a bimodal dataset and estimates its density. In practice, using Seaborn or pandas to achieve the same result is much easier.
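SciPy's bw_method strings 'scott' and 'silverman' name rules of thumb that pick the bandwidth from the data and shrink it like $n^{-1/5}$. The sketch below computes two such rules by hand, Scott's rule and Silverman's IQR-based rule of thumb, and checks Scott's rule against gaussian_kde.

The check assumes SciPy's documented behaviour. In one dimension, `bw_method='scott'` sets `factor = n**(-1/5)`, and the kernel's standard deviation is `factor` times the sample standard deviation. SciPy's own 'silverman' option uses the factor `(3n/4)**(-1/5)` rather than the IQR-based rule shown here. The helper names are hypothetical.

```python
import numpy as np
from scipy.stats import gaussian_kde


def scott_bandwidth(data):
    """Scott's rule for 1-D data: h = sigma * n**(-1/5)."""
    data = np.asarray(data, dtype=float)
    return data.std(ddof=1) * len(data) ** (-1 / 5)


def silverman_bandwidth(data):
    """Silverman's rule of thumb: h = 0.9 * min(sigma, IQR / 1.34) * n**(-1/5)."""
    data = np.asarray(data, dtype=float)
    q75, q25 = np.percentile(data, [75, 25])
    spread = min(data.std(ddof=1), (q75 - q25) / 1.34)
    return 0.9 * spread * len(data) ** (-1 / 5)


rng = np.random.default_rng(0)
data = np.concatenate([rng.normal(-2, 0.5, 300), rng.normal(3, 1.0, 500)])

kde = gaussian_kde(data, bw_method="scott")
# For 1-D data, the kernel's variance is stored in the 1x1 covariance matrix
scipy_h = np.sqrt(kde.covariance[0, 0])

print("Scott (manual):", scott_bandwidth(data))
print("Scott (SciPy): ", scipy_h)
print("Silverman rule of thumb:", silverman_bandwidth(data))
```

Both rules assume a roughly normal shape. On clearly multimodal data like this sample they tend to oversmooth, so it is worth trying a smaller scalar bw_method as well.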

Interpreting a KDE (Kernel Density Estimator) Plot

Reading a KDE plot is similar to reading a histogram, but with a smooth curve. The height of the curve at a point x is the estimated probability density there. The area under the curve over a range is the estimated probability of a value falling in that range. Because the curve is continuous, the exact value at any single point matters less than the overall shape:

- Peaks (modes): A high peak indicates a common value or cluster in the data. Multiple peaks suggest multiple modes (e.g., a mixture of sub-populations).

- Spread: The width of the curve shows dispersion. A wider curve means more variability (larger standard deviation), while a narrow, tall curve means the data are tightly clustered.

- Tails: Note how quickly the density tapers off. Heavy tails suggest outliers or extreme values; short tails suggest bounded data.

- Comparing curves: When overlaying groups, look for shifts (one distribution systematically higher or lower) or differences in shape.
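Two of the readings above can be computed rather than eyeballed. The sketch below estimates the probability of a range as the area under the curve, using SciPy's `gaussian_kde.integrate_box_1d`. It then locates the tallest peaks on an evaluation grid. It assumes SciPy is installed and uses a synthetic two-cluster sample; the `top_modes` helper is hypothetical.

```python
import numpy as np
from scipy.stats import gaussian_kde


def top_modes(xs, density, k=2):
    """Return the x-positions of the k tallest interior local maxima, left to right."""
    interior = np.arange(1, len(xs) - 1)
    is_peak = (density[1:-1] > density[:-2]) & (density[1:-1] >= density[2:])
    peaks = interior[is_peak]
    tallest = peaks[np.argsort(density[peaks])[::-1][:k]]
    return np.sort(xs[tallest])


rng = np.random.default_rng(42)
data = np.concatenate([rng.normal(-2, 0.5, 300), rng.normal(3, 1.0, 500)])
kde = gaussian_kde(data)  # default bandwidth: Scott's rule

# Area under the curve over a range = estimated probability of landing in it
p_total = kde.integrate_box_1d(-np.inf, np.inf)
p_right = kde.integrate_box_1d(0.5, np.inf)
print(f"P(all) ~ {p_total:.3f}, P(X > 0.5) ~ {p_right:.3f}")

xs = np.linspace(data.min() - 3, data.max() + 3, 2000)
print("Modes near:", top_modes(xs, kde(xs)))
```

Here the two peaks sit near the cluster centres, and roughly the share of points in the right-hand cluster falls above 0.5. Both numbers still depend on the bandwidth.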

Use Cases and Examples

KDE plots have many useful applications in day-to-day data analysis:

- Exploratory Data Analysis (EDA): When we first look at a dataset, KDE helps us see how the variables are distributed: whether they look normal, skewed, or have more than one peak (multimodal). Checking the distribution of your variables one by one is usually one of the first tasks you should do when you get a new dataset. Because KDE is smoother than a histogram, it is often more helpful for getting a feel for the data during EDA.

- Comparing distributions: KDE works well when we want to compare how different groups behave. For example, plotting the KDE of test scores for girls and boys on the same axis shows whether there is any difference in average or variation. Seaborn makes it very easy to overlay KDEs using different colors. KDE plots are usually less cluttered than side-by-side histograms, and they give a better sense of how the groups differ.

- Smoothing histograms: KDE can be thought of as a smoother version of a histogram. When histograms look too jagged or change a lot with bin size, KDE gives a more stable and cleaner picture. For instance, the Airbnb price example above could be shown as a histogram, but KDE makes it much easier to interpret. KDE creates a more continuous estimate of the data's shape, which is very useful, especially when the dataset is neither very large nor very small.
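The bin-size sensitivity mentioned above is easy to check. The sketch below reads the histogram's density estimate at one point for several bin counts. It then compares those readings with a single Gaussian KDE evaluated at the same point. The right-skewed "prices" sample is synthetic; the Airbnb data is not reproduced here. Keep in mind that the KDE still depends on its bandwidth; it only removes the bin-count and bin-edge choices.

```python
import numpy as np
from scipy.stats import gaussian_kde

rng = np.random.default_rng(7)
prices = rng.lognormal(mean=4.5, sigma=0.5, size=500)  # synthetic, right-skewed
point = 100.0

for bins in (5, 20, 80):
    heights, edges = np.histogram(prices, bins=bins, density=True)
    # Locate the bin containing `point` and read off its height
    idx = np.searchsorted(edges, point, side="right") - 1
    print(f"{bins:>3} bins: density at {point} ~ {heights[idx]:.5f}")

print(f"KDE (Scott): density at {point} ~ {gaussian_kde(prices)(point)[0]:.5f}")
```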

Alternatives to Kernel Density Plots

While KDE plots are very useful for showing smooth estimates of a distribution, they are not always the best tool. Depending on the data size or what exactly you are trying to do, there are other kinds of plots you can try, too. Here are a few common ones:

Histograms

Honestly, this is the most basic way to look at distributions. You just chop the data into bins and count how many values fall into each. It's easy to use, but it can get messy if you use too many or too few bins, and sometimes it hides patterns. KDE kind of helps with that by smoothing out the bumps.

Box Plots (also called box-and-whisker plots)

These are good if you just want to know where most of the data is: you get the median, quartiles, etc. They make it quick to spot outliers. But they don't really show the shape of the data like KDE does. Still useful when you don't need every detail.

Violin Plots

Think of these as a fancy version of box plots that also shows the KDE shape. It's like the best of both: you get summary stats and a sense of the distribution. I use these when comparing groups side by side.

Rug Plots

Rug plots are simple. They just show each data point as a small vertical line on the axis, often together with a KDE to show where the real data points are. But when you have too much data, they can look kind of messy.

Histogram + KDE Combo

Some people like to combine a histogram with a KDE: the histogram shows the counts, and the KDE adds a smooth curve on top. This way, they can see both the raw frequencies and the smoothed pattern together.

Honestly, which one you use just depends on what you need. KDE is great for smooth patterns, but sometimes you don't need all that. A simple box plot or histogram may say enough, especially if you are short on time or just exploring things quickly.

Conclusion

KDE plots offer a powerful and intuitive way to visualize the distribution of continuous data. Unlike regular histograms, they give a smooth, continuous curve by estimating the probability density function with the help of kernels. This makes subtle patterns like skewness, multimodality, or outliers easier to notice. Whether you're doing exploratory data analysis, comparing distributions, or spotting anomalies, KDE plots are really useful. Tools like Seaborn and pandas make it quite easy to create and use them.
