Deep learning models rely on activation functions that provide non-linearity and enable networks to learn complicated patterns. This article discusses the Softplus activation function: what it is and how it can be used in PyTorch. Softplus can be described as a smooth form of the popular ReLU activation that mitigates some of ReLU's drawbacks but introduces drawbacks of its own. We'll cover what Softplus is, its mathematical formula, how it compares with ReLU, its advantages and limitations, and then walk through some PyTorch code that uses it.

What is the Softplus Activation Function?

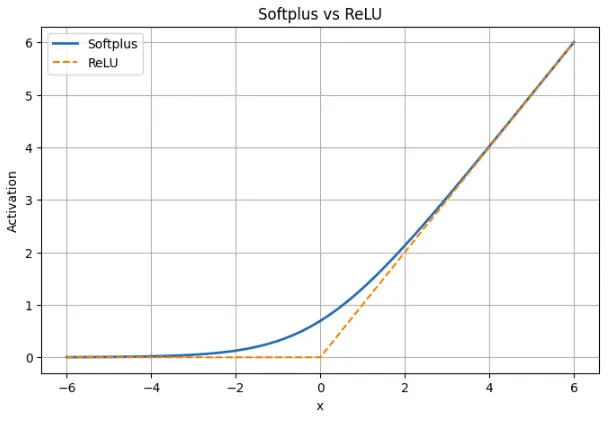

The Softplus activation function is a non-linear function used in neural networks and is best described as a smooth approximation of ReLU. In simpler terms, Softplus behaves like ReLU when the input is very large in either the positive or negative direction, but it has no sharp corner at zero. Instead, it rises smoothly and gives a small positive output for negative inputs rather than a hard zero. Softplus is therefore continuous and differentiable everywhere, in contrast to ReLU, which is continuous but not differentiable at x = 0, where its slope changes abruptly.

Why is Softplus used?

Developers choose Softplus when they want a smoother activation that still provides non-zero gradients where ReLU would be inactive. Its smoothness spares gradient-based optimization from abrupt changes, because the gradient varies gradually instead of stepping. Like ReLU, its output is bounded below, but the lower bound of zero is approached and never reached. In summary, Softplus is the softer version of ReLU: it is ReLU-like when the input is large in magnitude, but smooth and better behaved around zero.

Softplus Mathematical Formula

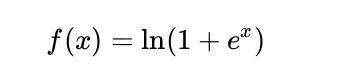

Softplus is mathematically defined as:

$$\text{Softplus}(x) = \ln(1 + e^{x})$$

When $x$ is large and positive, $e^{x}$ is very large, so $\ln(1 + e^{x})$ is very close to $\ln(e^{x})$, which equals $x$. This means that Softplus is almost linear for large inputs, just like ReLU.

When $x$ is large and negative, $e^{x}$ is very small, so $\ln(1 + e^{x})$ is close to $\ln(1)$, which is 0. The values produced by Softplus are close to zero but never exactly zero; the output only reaches zero in the limit as $x$ approaches negative infinity.

Another useful property is that the derivative of Softplus is the sigmoid function. The derivative of $\ln(1 + e^{x})$ is:

$$\frac{d}{dx}\ln(1 + e^{x}) = \frac{e^{x}}{1 + e^{x}} = \frac{1}{1 + e^{-x}}$$

This is exactly the sigmoid of $x$. It means that at any point the slope of Softplus is sigmoid(x), so the function is smooth and its gradient is non-zero everywhere. This makes Softplus useful in gradient-based learning because it has no region where the gradient is exactly zero, although the gradient does become very small for strongly negative inputs.
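The relationship between Softplus and the sigmoid can be checked numerically. The sketch below is a minimal illustration in plain Python; the helper names `softplus`, `sigmoid`, and `numerical_slope` are made up for this example, and the slope is estimated with a central finite difference rather than computed exactly.

```python
import math

def softplus(x: float) -> float:
    # Numerically stable rewrite of ln(1 + e^x): max(x, 0) + ln(1 + e^(-|x|)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))

def numerical_slope(f, x: float, h: float = 1e-5) -> float:
    # Central finite-difference estimate of f'(x).
    return (f(x + h) - f(x - h)) / (2.0 * h)

for x in [-5.0, -1.0, 0.0, 1.0, 5.0]:
    slope = numerical_slope(softplus, x)
    print(f"x={x:+.1f}  softplus={softplus(x):.4f}  "
          f"slope={slope:.4f}  sigmoid={sigmoid(x):.4f}")
```

The last two columns should agree to several decimal places, and the slope stays strictly between 0 and 1 at every point.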

Using Softplus in PyTorch

PyTorch provides Softplus as a built-in activation, so it can be used just as easily as ReLU or any other activation. Two simple examples are given below. The first applies Softplus to a small number of test values, and the second shows how to insert Softplus into a small neural network.

Softplus on Sample Inputs

The snippet below applies nn.Softplus to a small tensor so you can see how it behaves with negative, zero, and positive inputs.

    import torch
    import torch.nn as nn

    # Create the Softplus activation
    softplus = nn.Softplus()  # default beta=1, threshold=20

    # Sample inputs
    x = torch.tensor([-2.0, -1.0, 0.0, 1.0, 2.0])
    y = softplus(x)

    print("Input:", x.tolist())
    print("Softplus output:", y.tolist())

What this shows:

- At x = -2 and x = -1, Softplus returns small positive values rather than 0.

- At x = 0, the output is approximately 0.6931, i.e. ln(2).

- For positive inputs such as 1 or 2, the results are slightly larger than the inputs because Softplus smooths the curve. Softplus approaches x as x increases.

PyTorch's Softplus is computed as (1 / beta) * ln(1 + exp(beta * x)), which reduces to ln(1 + exp(x)) for the default beta = 1. The threshold parameter (default 20) guards against numerical overflow: when beta * x exceeds the threshold, Softplus is already practically linear, so PyTorch simply returns x.
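To make the roles of beta and threshold concrete, here is a small sketch that compares nn.Softplus against the formula written out by hand. The value beta = 2 and the test inputs are arbitrary choices for illustration, and the "naive" computation is included only to show the overflow that the threshold avoids.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

beta = 2.0
x = torch.tensor([-3.0, 0.0, 3.0, 15.0])

# Built-in module with a non-default beta; threshold keeps its default of 20.
module_out = nn.Softplus(beta=beta, threshold=20)(x)

# The same formula written by hand: (1 / beta) * ln(1 + exp(beta * x)).
manual_out = torch.log1p(torch.exp(beta * x)) / beta

print("nn.Softplus:", module_out.tolist())
print("by hand:    ", manual_out.tolist())

# For a very large input, exp() overflows in float32, so the naive formula
# gives inf, while the built-in function falls back to returning x itself.
large = torch.tensor([100.0])
naive = torch.log1p(torch.exp(large))
print("naive:", naive.item(), "| F.softplus:", F.softplus(large).item())
```

For inputs well below the threshold the two computations should match closely; the difference only matters for large beta * x, where the hand-written version breaks down.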

Using Softplus in a Neural Network

Here is a simple PyTorch network that uses Softplus as the activation for its hidden layer.

    import torch
    import torch.nn as nn

    class SimpleNet(nn.Module):
        def __init__(self, input_size, hidden_size, output_size):
            super(SimpleNet, self).__init__()
            self.fc1 = nn.Linear(input_size, hidden_size)
            self.activation = nn.Softplus()
            self.fc2 = nn.Linear(hidden_size, output_size)

        def forward(self, x):
            x = self.fc1(x)
            x = self.activation(x)  # apply Softplus
            x = self.fc2(x)
            return x

# Create the mannequin

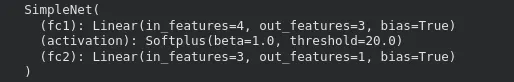

mannequin = SimpleNet(input_size=4, hidden_size=3, output_size=1)

print(mannequin)

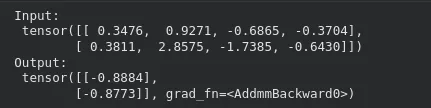

Passing an input through the model works as usual:

    x_input = torch.randn(2, 4)  # batch of two samples
    y_output = model(x_input)

    print("Input:\n", x_input)
    print("Output:\n", y_output)

In this arrangement, the Softplus activation ensures that the values passed from the first layer to the second layer are strictly positive. Swapping Softplus into an existing model usually requires no other structural change. Just keep in mind that Softplus needs more computation than ReLU, so training may be a little slower.

Softplus can also be used in the final layer when the model must produce positive outputs, e.g. scale parameters or positive regression targets.
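As a rough sketch of that last idea, the model below passes its raw output through Softplus so that every prediction is positive. The class name `PositiveRegressor`, the layer sizes, and the random inputs are assumptions made for this illustration and are not part of the example above.

```python
import torch
import torch.nn as nn

class PositiveRegressor(nn.Module):  # hypothetical name, for illustration only
    def __init__(self, input_size: int, hidden_size: int):
        super().__init__()
        self.body = nn.Sequential(
            nn.Linear(input_size, hidden_size),
            nn.ReLU(),
            nn.Linear(hidden_size, 1),
        )
        # Softplus maps the unconstrained raw output to a positive value.
        self.positive = nn.Softplus()

    def forward(self, x):
        return self.positive(self.body(x))

torch.manual_seed(0)
scale_model = PositiveRegressor(input_size=4, hidden_size=8)
scale = scale_model(torch.randn(16, 4))

print("smallest predicted value:", scale.min().item())
```

Whatever the raw linear output is, the value after Softplus stays above zero, which is what a scale or variance-like parameter needs.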

Softplus vs ReLU: Comparison Table

| Aspect | Softplus | ReLU |
|---|---|---|
| Definition | f(x) = ln(1 + e^x) | f(x) = max(0, x) |
| Shape | Smooth transition across all x | Sharp kink at x = 0 |
| Behavior for x < 0 | Small positive output; never reaches zero | Output is exactly zero |
| Example at x = -2 | Softplus ≈ 0.13 | ReLU = 0 |
| Near x = 0 | Smooth and differentiable; value ≈ 0.693 | Not differentiable at 0 |
| Behavior for x > 0 | Almost linear, closely matches ReLU | Linear with slope 1 |
| Example at x = 5 | Softplus ≈ 5.0067 | ReLU = 5 |
| Gradient | Always non-zero; derivative is sigmoid(x) | Zero for x < 0, undefined at 0 |
| Risk of dead neurons | None | Possible for negative inputs |
| Sparsity | Does not produce exact zeros | Produces true zeros |
| Training effect | Stable gradient flow, smoother updates | Simple but can stop learning for some neurons |

Softplus is a smooth analog of ReLU. It matches ReLU for very large positive or negative inputs but removes the corner at zero. This prevents dead neurons because the gradient never drops to exactly zero. The cost is that Softplus does not produce true zeros, so its activations are not as sparse as ReLU's. In practice, Softplus offers smoother training dynamics, but ReLU is still widely used because it is faster and simpler.
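The example values in the comparison can be reproduced with a few lines of PyTorch. This is only a quick sketch using autograd on three hand-picked points (-2, 0, and 5); the variable names are arbitrary.

```python
import torch
import torch.nn.functional as F

points = torch.tensor([-2.0, 0.0, 5.0])

# Softplus values and gradients via autograd.
x_sp = points.clone().requires_grad_(True)
sp = F.softplus(x_sp)
sp.sum().backward()

# ReLU values and gradients via autograd.
x_relu = points.clone().requires_grad_(True)
relu = F.relu(x_relu)
relu.sum().backward()

for i, p in enumerate(points.tolist()):
    print(f"x={p:+.1f}  "
          f"softplus={sp[i].item():.4f} (grad {x_sp.grad[i].item():.4f})  "
          f"relu={relu[i].item():.4f} (grad {x_relu.grad[i].item():.4f})")
```

At x = -2 the ReLU gradient is zero while the Softplus gradient is small but non-zero, and at x = 5 the two outputs differ by less than 0.01.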

Benefits of Using Softplus

Softplus has some practical benefits that make it useful in certain models.

- Smooth and differentiable everywhere

There are no sharp corners in Softplus. It is differentiable for every input. This keeps gradients well behaved and can make optimization a little easier, because the loss changes more gradually.

- Avoids dead neurons

With ReLU, a neuron can stop updating when it consistently receives negative input, because its gradient is then zero. Softplus never outputs exactly zero for negative inputs, so every neuron stays partially active and keeps receiving gradient updates.

- Handles negative inputs more gracefully

Softplus does not discard negative inputs by outputting zero as ReLU does; it produces a small positive value instead. This lets the model retain part of the information carried by negative signals rather than losing all of it.

In short, Softplus keeps gradients flowing, prevents dead neurons, and offers smooth behavior that is useful in architectures or tasks where continuity matters.

Limitations and Trade-offs of Softplus

Softplus also has disadvantages that limit how often it is used.
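The dead-neuron effect described above can be illustrated with a single neuron whose bias is so negative that its pre-activation is always below zero. The setup here is artificial (hand-set weights, a bias of -5, and the helper name `weight_grad_norm` are all assumptions for the demonstration), but it shows the difference in gradient flow.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
inputs = torch.rand(32, 4)  # values in [0, 1)

def weight_grad_norm(activation: nn.Module) -> float:
    # One neuron with weights 0.1 and bias -5, so its pre-activation
    # lies roughly between -5 and -4.6 for every input above.
    neuron = nn.Linear(4, 1)
    with torch.no_grad():
        neuron.weight.fill_(0.1)
        neuron.bias.fill_(-5.0)
    out = activation(neuron(inputs))
    out.sum().backward()
    return neuron.weight.grad.norm().item()

relu_grad = weight_grad_norm(nn.ReLU())
softplus_grad = weight_grad_norm(nn.Softplus())

print("ReLU weight gradient norm:    ", relu_grad)
print("Softplus weight gradient norm:", softplus_grad)
```

With ReLU the weights receive no gradient at all, so the neuron cannot recover; with Softplus the gradient is small but non-zero, so updates can still move the neuron back into an active range.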

- More expensive to compute

Softplus uses exponential and logarithmic operations, which are slower than ReLU's simple max(0, x). This extra overhead can be noticeable on large models, because ReLU is extremely well optimized on most hardware.

- No true sparsity

ReLU produces exact zeros for negative inputs, which can save computation and sometimes helps with regularization. Softplus never outputs an exact zero, so no neuron is ever fully inactive. This removes the risk of dead neurons, but it also removes the efficiency advantages of sparse activations.

- Can slow down the convergence of deep networks

ReLU is the usual choice for training deep models. Its hard cutoff and linear positive region can drive learning effectively. Softplus is smoother and may produce slower updates, particularly in very deep networks where the differences between layers are small.

To summarize, Softplus has good mathematical properties and avoids issues like dead neurons, but these benefits do not always translate into better results in deep networks. It is best used in cases where smoothness or positive outputs are important, rather than as a universal replacement for ReLU.
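The sparsity trade-off is easy to measure. The sketch below feeds the same random pre-activations through both functions and counts exact zeros; the standard-normal inputs and the sample size of 10,000 are arbitrary choices for illustration.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
pre_activations = torch.randn(10_000)  # roughly half are negative

# Fraction of outputs that are exactly zero under each activation.
relu_zero_fraction = (F.relu(pre_activations) == 0).float().mean().item()
softplus_zero_fraction = (F.softplus(pre_activations) == 0).float().mean().item()

print(f"ReLU exact zeros:     {relu_zero_fraction:.1%}")
print(f"Softplus exact zeros: {softplus_zero_fraction:.1%}")
```

ReLU zeroes out about half of these activations, while Softplus leaves every one of them slightly positive, which is the sparsity that is given up in exchange for non-zero gradients.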

Conclusion

Softplus offers neural networks a smooth, soft alternative to ReLU. It preserves gradients, does not kill neurons, and is differentiable for all inputs. It behaves like ReLU for large values, but around zero it is gentler than ReLU because it produces a non-zero output and slope. At the same time, it comes with trade-offs: it is slower to compute, it does not produce exact zeros, and it may not train deep networks as quickly as ReLU. Softplus works best in models where smooth gradients or positive outputs are needed. In most other scenarios, it is a useful alternative to ReLU rather than a default replacement.

Frequently Asked Questions

A. Softplus prevents dead neurons by keeping gradients non-zero for all inputs, offering a smooth alternative to ReLU while still behaving similarly for large positive values.

A. It's a good choice when your model benefits from smooth gradients or must output strictly positive values, like scale parameters or certain regression targets.

A. It's slower to compute than ReLU, doesn't create sparse activations, and can lead to slightly slower convergence in deep networks.
