Say an individual takes their French Bulldog, Bowser, to the canine park. Figuring out Bowser as he performs among the many different canines is straightforward for the dog-owner to do whereas onsite.

But when somebody desires to make use of a generative AI mannequin like GPT-5 to observe their pet whereas they’re at work, the mannequin may fail at this fundamental activity. Imaginative and prescient-language fashions like GPT-5 usually excel at recognizing normal objects, like a canine, however they carry out poorly at finding customized objects, like Bowser the French Bulldog.

To handle this shortcoming, researchers from MIT, the MIT-IBM Watson AI Lab, the Weizmann Institute of Science, and elsewhere have launched a brand new coaching methodology that teaches vision-language fashions to localize customized objects in a scene.

Their methodology makes use of fastidiously ready video-tracking information through which the identical object is tracked throughout a number of frames. They designed the dataset so the mannequin should concentrate on contextual clues to determine the customized object, relatively than counting on information it beforehand memorized.

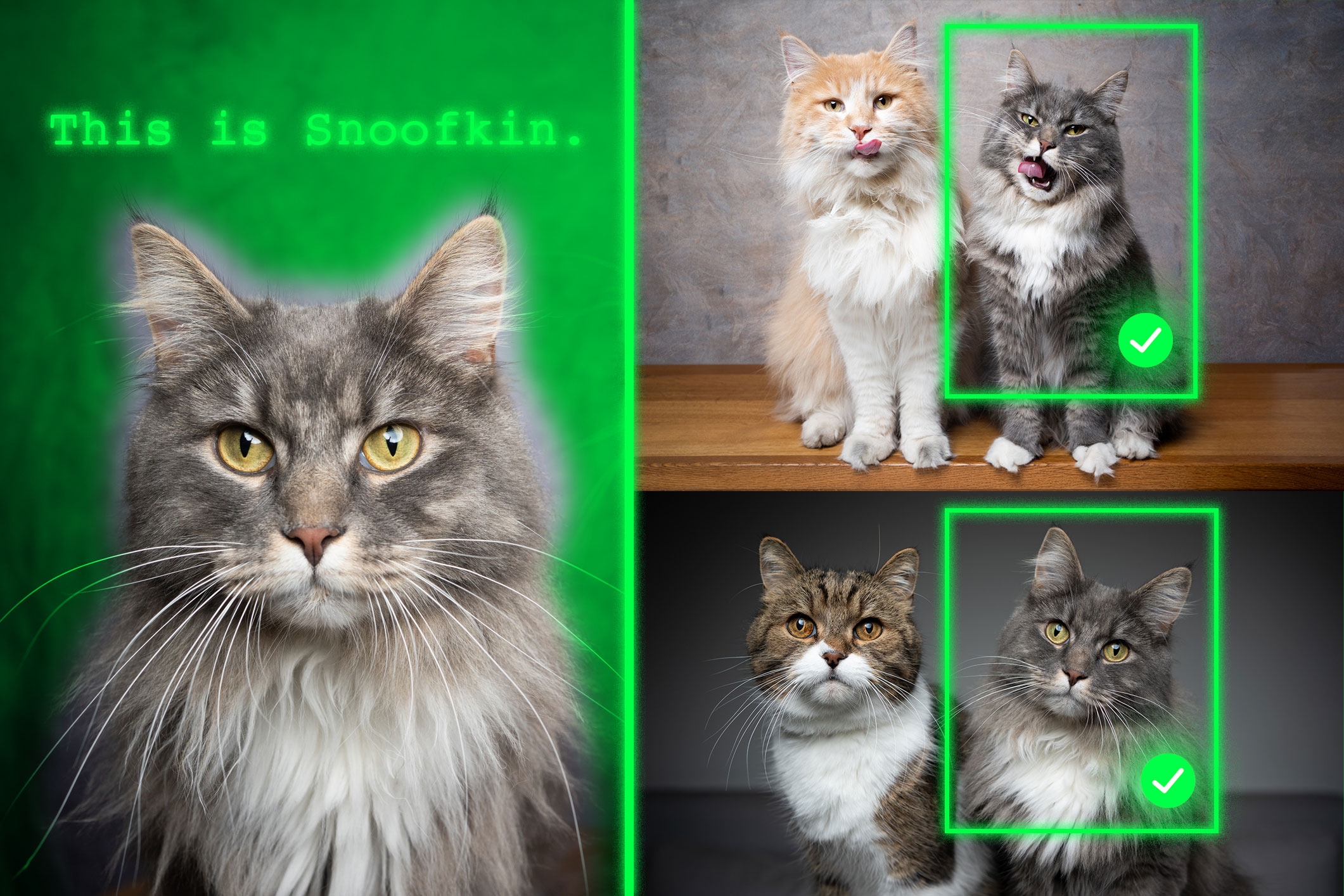

When given a couple of instance pictures exhibiting a personalised object, like somebody’s pet, the retrained mannequin is best capable of determine the situation of that very same pet in a brand new picture.

Fashions retrained with their methodology outperformed state-of-the-art techniques at this activity. Importantly, their method leaves the remainder of the mannequin’s normal talents intact.

This new strategy may assist future AI techniques observe particular objects throughout time, like a toddler’s backpack, or localize objects of curiosity, reminiscent of a species of animal in ecological monitoring. It may additionally support within the improvement of AI-driven assistive applied sciences that assist visually impaired customers discover sure gadgets in a room.

“Finally, we wish these fashions to have the ability to be taught from context, identical to people do. If a mannequin can do that effectively, relatively than retraining it for every new activity, we may simply present a couple of examples and it might infer learn how to carry out the duty from that context. It is a very highly effective potential,” says Jehanzeb Mirza, an MIT postdoc and senior creator of a paper on this system.

Mirza is joined on the paper by co-lead authors Sivan Doveh, a postdoc at Stanford College who was a graduate scholar at Weizmann Institute of Science when this analysis was performed; and Nimrod Shabtay, a researcher at IBM Analysis; James Glass, a senior analysis scientist and the top of the Spoken Language Techniques Group within the MIT Laptop Science and Synthetic Intelligence Laboratory (CSAIL); and others. The work might be introduced on the Worldwide Convention on Laptop Imaginative and prescient.

An sudden shortcoming

Researchers have discovered that giant language fashions (LLMs) can excel at studying from context. In the event that they feed an LLM a couple of examples of a activity, like addition issues, it will probably be taught to reply new addition issues based mostly on the context that has been supplied.

A vision-language mannequin (VLM) is actually an LLM with a visible element linked to it, so the MIT researchers thought it might inherit the LLM’s in-context studying capabilities. However this isn’t the case.

“The analysis neighborhood has not been capable of finding a black-and-white reply to this explicit drawback but. The bottleneck may come up from the truth that some visible info is misplaced within the means of merging the 2 parts collectively, however we simply don’t know,” Mirza says.

The researchers got down to enhance VLMs talents to do in-context localization, which includes discovering a particular object in a brand new picture. They targeted on the info used to retrain present VLMs for a brand new activity, a course of known as fine-tuning.

Typical fine-tuning information are gathered from random sources and depict collections of on a regular basis objects. One picture would possibly comprise vehicles parked on a road, whereas one other features a bouquet of flowers.

“There isn’t any actual coherence in these information, so the mannequin by no means learns to acknowledge the identical object in a number of pictures,” he says.

To repair this drawback, the researchers developed a brand new dataset by curating samples from present video-tracking information. These information are video clips exhibiting the identical object transferring by means of a scene, like a tiger strolling throughout a grassland.

They minimize frames from these movies and structured the dataset so every enter would encompass a number of pictures exhibiting the identical object in several contexts, with instance questions and solutions about its location.

“By utilizing a number of pictures of the identical object in several contexts, we encourage the mannequin to constantly localize that object of curiosity by specializing in the context,” Mirza explains.

Forcing the main focus

However the researchers discovered that VLMs are inclined to cheat. As a substitute of answering based mostly on context clues, they may determine the thing utilizing information gained throughout pretraining.

As an example, for the reason that mannequin already realized that a picture of a tiger and the label “tiger” are correlated, it may determine the tiger crossing the grassland based mostly on this pretrained information, as a substitute of inferring from context.

To unravel this drawback, the researchers used pseudo-names relatively than precise object class names within the dataset. On this case, they modified the title of the tiger to “Charlie.”

“It took us some time to determine learn how to stop the mannequin from dishonest. However we modified the sport for the mannequin. The mannequin doesn’t know that ‘Charlie’ could be a tiger, so it’s pressured to take a look at the context,” he says.

The researchers additionally confronted challenges find one of the best ways to organize the info. If the frames are too shut collectively, the background wouldn’t change sufficient to supply information variety.

Ultimately, finetuning VLMs with this new dataset improved accuracy at customized localization by about 12 p.c on common. After they included the dataset with pseudo-names, the efficiency good points reached 21 p.c.

As mannequin dimension will increase, their method results in larger efficiency good points.

Sooner or later, the researchers need to examine attainable causes VLMs don’t inherit in-context studying capabilities from their base LLMs. As well as, they plan to discover extra mechanisms to enhance the efficiency of a VLM with out the necessity to retrain it with new information.

“This work reframes few-shot customized object localization — adapting on the fly to the identical object throughout new scenes — as an instruction-tuning drawback and makes use of video-tracking sequences to show VLMs to localize based mostly on visible context relatively than class priors. It additionally introduces the primary benchmark for this setting with strong good points throughout open and proprietary VLMs. Given the immense significance of fast, instance-specific grounding — usually with out finetuning — for customers of real-world workflows (reminiscent of robotics, augmented actuality assistants, artistic instruments, and many others.), the sensible, data-centric recipe provided by this work can assist improve the widespread adoption of vision-language basis fashions,” says Saurav Jha, a postdoc on the Mila-Quebec Synthetic Intelligence Institute, who was not concerned with this work.

Extra co-authors are Wei Lin, a analysis affiliate at Johannes Kepler College; Eli Schwartz, a analysis scientist at IBM Analysis; Hilde Kuehne, professor of pc science at Tuebingen AI Middle and an affiliated professor on the MIT-IBM Watson AI Lab; Raja Giryes, an affiliate professor at Tel Aviv College; Rogerio Feris, a principal scientist and supervisor on the MIT-IBM Watson AI Lab; Leonid Karlinsky, a principal analysis scientist at IBM Analysis; Assaf Arbelle, a senior analysis scientist at IBM Analysis; and Shimon Ullman, the Samy and Ruth Cohn Professor of Laptop Science on the Weizmann Institute of Science.

This analysis was funded, partially, by the MIT-IBM Watson AI Lab.