In an era where data drives innovation and decision-making, organizations are increasingly focused not only on accumulating data but also on maintaining its quality and reliability. High-quality data is essential for building trust in analytics, improving the performance of machine learning (ML) models, and supporting strategic business initiatives.

By using AWS Glue Data Quality, you can measure and monitor the quality of your data. It analyzes your data, recommends data quality rules, evaluates data quality, and provides you with a score that quantifies the quality of your data. With this, you can make confident business decisions. With this launch, AWS Glue Data Quality is now integrated with the lakehouse architecture of Amazon SageMaker, Apache Iceberg on general purpose Amazon Simple Storage Service (Amazon S3) buckets, and Amazon S3 Tables. This integration brings together serverless data integration, quality management, and advanced ML capabilities in a unified environment.

This post explores how you can use AWS Glue Data Quality to maintain the data quality of S3 Tables and Apache Iceberg tables on general purpose S3 buckets. We’ll discuss strategies for verifying the quality of published data and how these integrated technologies can be used to implement effective data quality workflows.

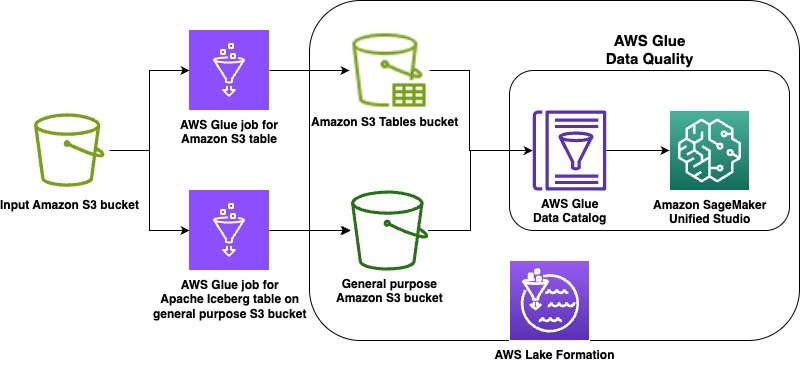

Solution overview

In this launch, we’re supporting the lakehouse architecture of Amazon SageMaker, Apache Iceberg on general purpose S3 buckets, and Amazon S3 Tables. As example use cases, we demonstrate data quality on an Apache Iceberg table stored in a general purpose S3 bucket as well as on Amazon S3 Tables. The steps cover the following:

- Create an Apache Iceberg table on a general purpose Amazon S3 bucket and an Amazon S3 table in a table bucket using two AWS Glue extract, transform, and load (ETL) jobs

- Grant appropriate AWS Lake Formation permissions on each table

- Run data quality recommendations at rest on the Apache Iceberg table on the general purpose S3 bucket

- Run the data quality rules and visualize the results in Amazon SageMaker Unified Studio

- Run data quality recommendations at rest on the S3 table

- Run the data quality rules and visualize the results in SageMaker Unified Studio

The following diagram shows the solution architecture.

Prerequisites

To follow the instructions, you must have the following prerequisites:

Create S3 tables and Apache Iceberg on general purpose S3 bucket

First, complete the following steps to upload data and scripts:

- Upload the attached AWS Glue job scripts to your designated script bucket in S3

- To download the New York City Taxi – Yellow Trip Data dataset for January 2025 (Parquet file), navigate to NYC TLC Trip Record Data, expand 2025, and choose Yellow Taxi Trip Records under the January section. A file called yellow_tripdata_2025-01.parquet is downloaded to your computer.

- On the Amazon S3 console, open an input bucket of your choice and create a folder called nyc_yellow_trip_data. The stack will create a GlueJobRole with permissions to this bucket.

- Upload the yellow_tripdata_2025-01.parquet file to the folder.

- Download the CloudFormation stack file. Navigate to the CloudFormation console. Choose Create stack. Choose Upload a template file and select the CloudFormation template you downloaded. Choose Next.

- Enter a unique name for Stack name.

- Configure the stack parameters. Default values are provided in the following table:

| Parameter | Default value | Description |
| --- | --- | --- |
| ScriptBucketName | N/A – user-supplied | Name of the referenced Amazon S3 general purpose bucket containing the AWS Glue job scripts |
| DatabaseName | iceberg_dq_demo | Name of the AWS Glue database to be created for the Apache Iceberg table on the general purpose Amazon S3 bucket |
| GlueIcebergJobName | create_iceberg_table_on_s3 | Name of the created AWS Glue job that creates the Apache Iceberg table on the general purpose Amazon S3 bucket |
| GlueS3TableJobName | create_s3_table_on_s3_bucket | Name of the created AWS Glue job that creates the Amazon S3 table |
| S3TableBucketName | dataquality-demo-bucket | Name of the Amazon S3 table bucket to be created |
| S3TableNamespaceName | s3_table_dq_demo | Name of the Amazon S3 table bucket namespace to be created |
| S3TableTableName | ny_taxi | Name of the Amazon S3 table to be created by the AWS Glue job |
| IcebergTableName | ny_taxi | Name of the Apache Iceberg table on general purpose Amazon S3 to be created by the AWS Glue job |
| IcebergScriptPath | scripts/create_iceberg_table_on_s3.py | The referenced Amazon S3 path to the AWS Glue script file for the Apache Iceberg table creation job. Verify that the file name matches the corresponding GlueIcebergJobName |
| S3TableScriptPath | scripts/create_s3_table_on_s3_bucket.py | The referenced Amazon S3 path to the AWS Glue script file for the Amazon S3 table creation job. Verify that the file name matches the corresponding GlueS3TableJobName |
| InputS3Bucket | N/A – user-supplied bucket | Name of the referenced Amazon S3 bucket to which the NY Taxi data was uploaded |
| InputS3Path | nyc_yellow_trip_data | The referenced Amazon S3 path to which the NY Taxi data was uploaded |
| OutputBucketName | N/A – user-supplied | Name of the created Amazon S3 general purpose bucket for the AWS Glue job for Apache Iceberg table data |
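If you prefer to create the stack programmatically rather than through the console, the parameter table can be expressed as a small helper. This is a minimal sketch, not part of the original walkthrough: the helper names `build_stack_parameters` and `create_stack` are hypothetical, the defaults are copied from the table, and the `CAPABILITY_NAMED_IAM` capability is an assumption based on the stack creating IAM roles.

```python
# Defaults copied from the parameter table; the three user-supplied
# bucket names have no default and must be provided by the caller.
DEFAULTS = {
    "DatabaseName": "iceberg_dq_demo",
    "GlueIcebergJobName": "create_iceberg_table_on_s3",
    "GlueS3TableJobName": "create_s3_table_on_s3_bucket",
    "S3TableBucketName": "dataquality-demo-bucket",
    "S3TableNamespaceName": "s3_table_dq_demo",
    "S3TableTableName": "ny_taxi",
    "IcebergTableName": "ny_taxi",
    "IcebergScriptPath": "scripts/create_iceberg_table_on_s3.py",
    "S3TableScriptPath": "scripts/create_s3_table_on_s3_bucket.py",
    "InputS3Path": "nyc_yellow_trip_data",
}
REQUIRED = ("ScriptBucketName", "InputS3Bucket", "OutputBucketName")


def build_stack_parameters(user_values):
    """Merge user-supplied values over the defaults into the
    ParameterKey/ParameterValue list that CloudFormation expects."""
    missing = [key for key in REQUIRED if not user_values.get(key)]
    if missing:
        raise ValueError(f"Missing required parameters: {', '.join(missing)}")
    merged = {**DEFAULTS, **user_values}
    return [
        {"ParameterKey": key, "ParameterValue": value}
        for key, value in sorted(merged.items())
    ]


def create_stack(stack_name, template_body, parameters, region):
    """Create the stack. boto3 is imported lazily so the helper above
    can be used without AWS credentials. The capability is an assumption."""
    import boto3

    cfn = boto3.client("cloudformation", region_name=region)
    return cfn.create_stack(
        StackName=stack_name,
        TemplateBody=template_body,
        Parameters=parameters,
        Capabilities=["CAPABILITY_NAMED_IAM"],
    )
```

Keeping the parameter assembly separate from the API call makes it easy to review the values before anything is created in your account.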

Full the next steps to configure AWS Identification and Entry Administration (IAM) and Lake Formation permissions:

- In the event you haven’t beforehand labored with S3 Tables and analytics companies, navigate to Amazon S3.

- Select Desk buckets.

- Select Allow integration to allow analytics service integrations together with your S3 desk buckets.

- Navigate to the Sources tab to your AWS CloudFormation stack. Notice the IAM function with the logical ID

GlueJobRoleand the database identify with the logical IDGlueDatabase. Moreover, notice the identify of the S3 desk bucket with the logical IDS3TableBucketin addition to the namespace identify with the logical IDS3TableBucketNamespace. The S3 desk bucket identify is the portion of the Amazon Useful resource Title (ARN) which follows:arn:aws:s3tables:<area>:<accountID>:bucket/{S3 Desk bucket Title}. The namespace identify is the portion of the namespace ARN which follows:arn:aws:s3tables:<area>:<accountID>:bucket/{S3 Desk bucket Title}|{namespace identify}. - Navigate to the Lake Formation console with a Lake Formation information lake administrator.

- Navigate to the Databases tab and choose your

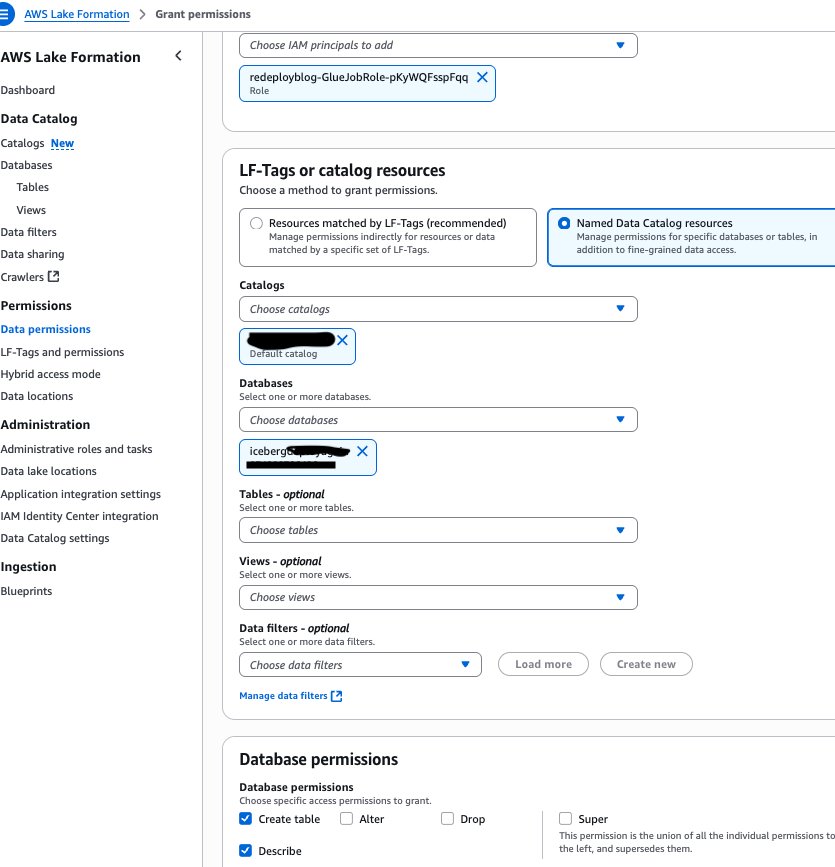

GlueDatabase. Notice the chosen default catalog ought to match your AWS account ID. - Choose the Actions dropdown menu and beneath Permissions, select Grant.

- Grant your

GlueJobRolefrom step 4 the mandatory permissions. Below Database permissions, choose Create desk and Describe, as proven within the following screenshot.

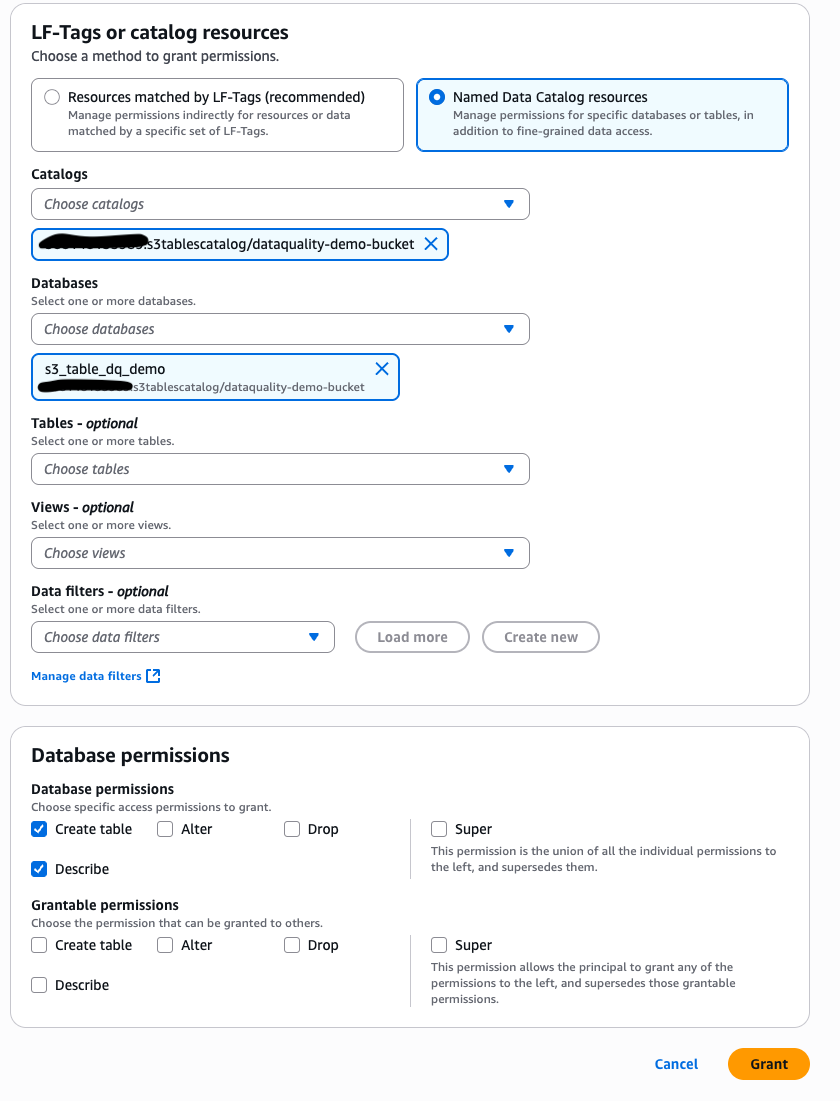

Navigate again to the Databases tab in Lake Formation and choose the catalog that matches with the worth of S3TableBucket you famous in step 4 within the format: <AWS account ID>:s3tablescatalog/<S3 Desk Bucket identify>

- Choose your namespace identify. From the Actions dropdown menu, beneath Permissions, select Grant.

- Grant your GlueJobRole from step 4 the mandatory permissions Below Database permissions, choose Create desk and Describe, as proven within the following screenshot.
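The two database-level grants above can also be sketched with the AWS SDK for Python (boto3). This is an illustrative sketch under stated assumptions: the helper names are hypothetical, the account ID and role ARN in the usage note are placeholders, and the catalog ID format for the S3 table bucket follows the `<AWS account ID>:s3tablescatalog/<S3 Table Bucket name>` pattern given in the steps.

```python
def s3_tables_catalog_id(account_id, table_bucket_name):
    """Catalog ID for an S3 table bucket, in the format used in the steps."""
    return f"{account_id}:s3tablescatalog/{table_bucket_name}"


def build_database_grant(principal_arn, catalog_id, database_name):
    """Request for Lake Formation grant_permissions that gives the
    principal Create table and Describe on one database or namespace."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {"Database": {"CatalogId": catalog_id, "Name": database_name}},
        "Permissions": ["CREATE_TABLE", "DESCRIBE"],
    }


def apply_grant(request, region):
    """Send the grant. boto3 is imported lazily so the builders above
    can be exercised without AWS credentials."""
    import boto3

    lakeformation = boto3.client("lakeformation", region_name=region)
    return lakeformation.grant_permissions(**request)
```

For the Apache Iceberg database, the catalog ID is your AWS account ID; for the S3 table namespace, pass the value returned by `s3_tables_catalog_id`, for example `build_database_grant(role_arn, s3_tables_catalog_id("111122223333", "dataquality-demo-bucket"), "s3_table_dq_demo")`.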

To run the roles created within the CloudFormation stack to create the pattern tables and configure Lake Formation permissions for the DataQualityRole, full the next steps:

- Within the Sources tab of your CloudFormation stack, notice the AWS Glue job names for the logical useful resource IDs:

GlueS3TableJobandGlueIcebergJob. - Navigate to the AWS Glue console and choose ETL jobs. Choose your

GlueIcebergJobfrom step 11 and select Run job. Choose yourGlueS3TableJoband select Run job. - To confirm the profitable creation of your Apache Iceberg desk on basic objective S3 bucket within the database, navigate to Lake Formation together with your Lake Formation information lake administrator permissions. Below Databases, choose your

GlueDatabase. The chosen default catalog ought to match your AWS account ID. - On the dropdown menu, select View after which Tables. You need to see a brand new tab with the desk identify you specified for

IcebergTableName. You’ve gotten verified the desk creation. - Choose this desk and grant your DataQualityRole (

<stack_name>-DataQualityRole-<xxxxxx>) the mandatory Lake Formation permissions by selecting the Grant hyperlink within the Actions tab. Select Choose, Describe from Desk permissions for the brand new Apache Iceberg desk. - To confirm the S3 desk within the S3 desk bucket, navigate to Databases within the Lake Formation console together with your Lake Formation information lake administrator permissions. Ensure the chosen catalog is your S3 desk bucket catalog:

<AWS account ID>:s3tablescatalog/<S3 Desk Bucket identify> - Choose your S3 desk namespace and select the dropdown menu View.

- Select Tables and it’s best to see a brand new tab with the desk identify you specified for

S3TableTableName. You’ve gotten verified the desk creation. - Select the hyperlink for the desk and beneath Actions, select Grant. Grant your

DataQualityRolethe mandatory Lake Formation permissions. Select Choose, Describe from Desk permissions for the S3 desk. - Within the Lake Formation console together with your Lake Formation information lake administrator permissions, on the Administration tab, select Knowledge lake areas .

- Select Register location. Enter your

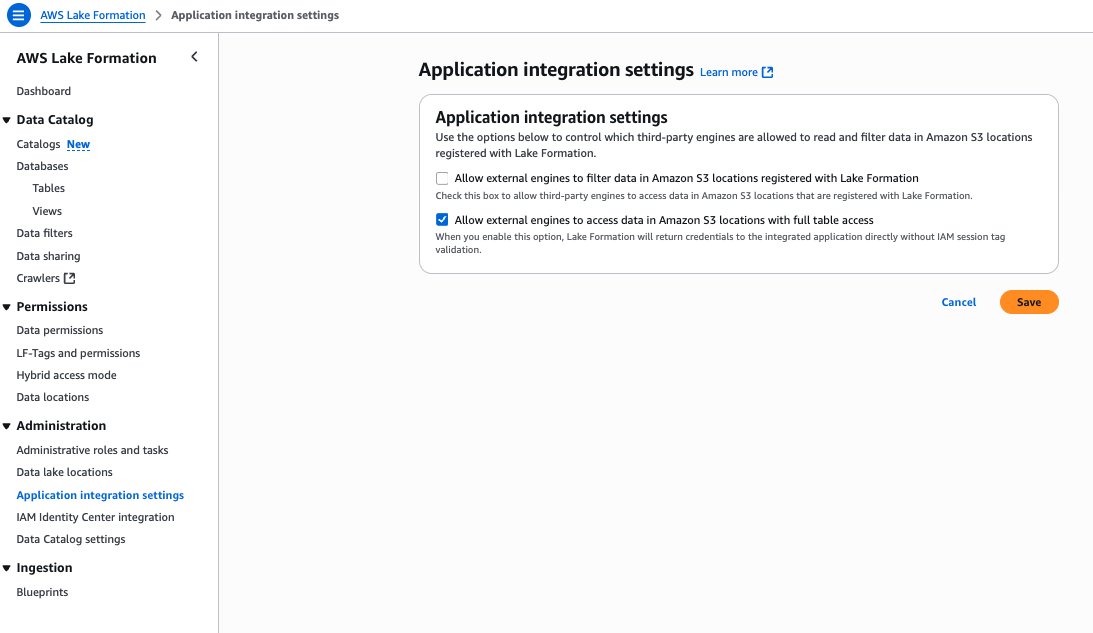

OutputBucketNamebecause the Amazon S3 path. Enter theLakeFormationRolefrom the stack assets because the IAM function. Below Permission mode, select Lake Formation. - On the Lake Formation console beneath Software integration settings, choose Enable exterior engines to entry information in Amazon S3 areas with full desk entry, as proven within the following screenshot.
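The table-level grants and the location registration from the steps above can be approximated in Python as follows. This is a sketch under assumptions, not the console flow itself: the helper names are hypothetical, the ARNs in any usage are placeholders, and the bucket ARN format `arn:aws:s3:::<bucket>` assumes the standard AWS partition.

```python
def build_table_grant(principal_arn, catalog_id, database_name, table_name):
    """Request for Lake Formation grant_permissions that gives the
    principal Select and Describe on a single table."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "Table": {
                "CatalogId": catalog_id,
                "DatabaseName": database_name,
                "Name": table_name,
            }
        },
        "Permissions": ["SELECT", "DESCRIBE"],
    }


def build_register_location(bucket_name, role_arn):
    """Request for Lake Formation register_resource that registers the
    output bucket with a customer-managed role (the LakeFormationRole)."""
    return {
        "ResourceArn": f"arn:aws:s3:::{bucket_name}",
        "RoleArn": role_arn,
        "UseServiceLinkedRole": False,
    }


def apply_requests(table_grants, register_request, region):
    """Send the requests. boto3 is imported lazily so the builders
    above can be checked without AWS credentials."""
    import boto3

    lakeformation = boto3.client("lakeformation", region_name=region)
    lakeformation.register_resource(**register_request)
    for grant in table_grants:
        lakeformation.grant_permissions(**grant)
```

You would build one table grant for the Apache Iceberg table (catalog ID equal to your account ID) and one for the S3 table (catalog ID in the `s3tablescatalog` format), both for the DataQualityRole.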

Generate suggestions for Apache Iceberg desk on basic objective S3 bucket managed by Lake Formation

On this part, we present how one can generate information high quality guidelines utilizing the information high quality rule suggestions characteristic of AWS Glue Knowledge High quality to your Apache Iceberg desk on a basic objective S3 bucket. Observe these steps:

- Navigate to the AWS Glue console. Below Knowledge Catalog, select Databases. Select the

GlueDatabase. - Below Tables, choose your

IcebergTableName. On the Knowledge high quality tab, select Run historical past. - Below Advice runs, select Advocate guidelines.

- Use the

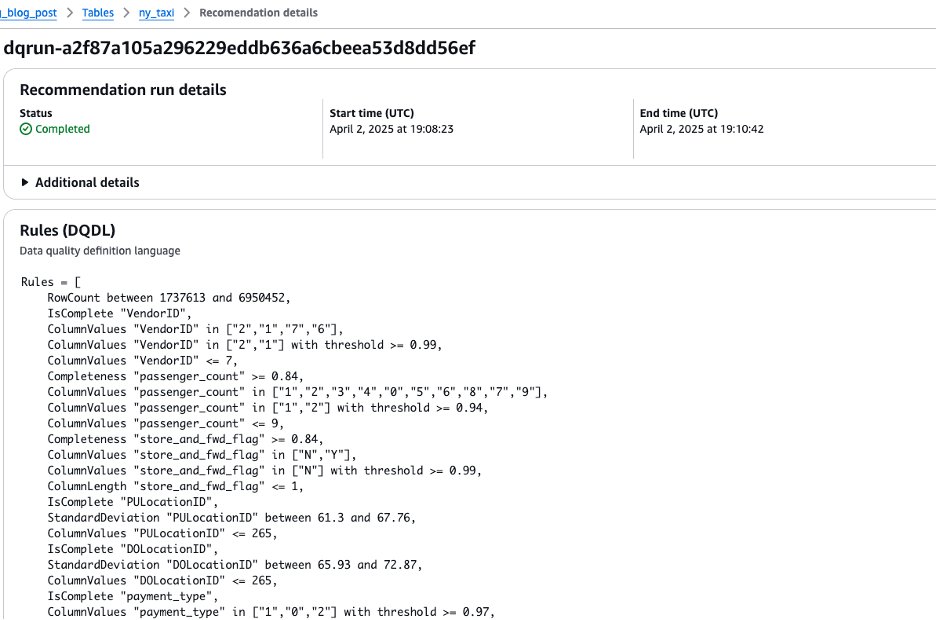

DataQualityRole(<stack_name>-DataQualityRole-<xxxxxx>) to generate information high quality rule suggestions, leaving the opposite settings as default. The outcomes are proven within the following screenshot.

Run information high quality guidelines for Apache Iceberg desk on basic objective S3 bucket managed by Lake Formation

On this part, we present how one can create an information high quality ruleset with the advisable guidelines. After creating the ruleset, we run the information high quality guidelines. Observe these steps:

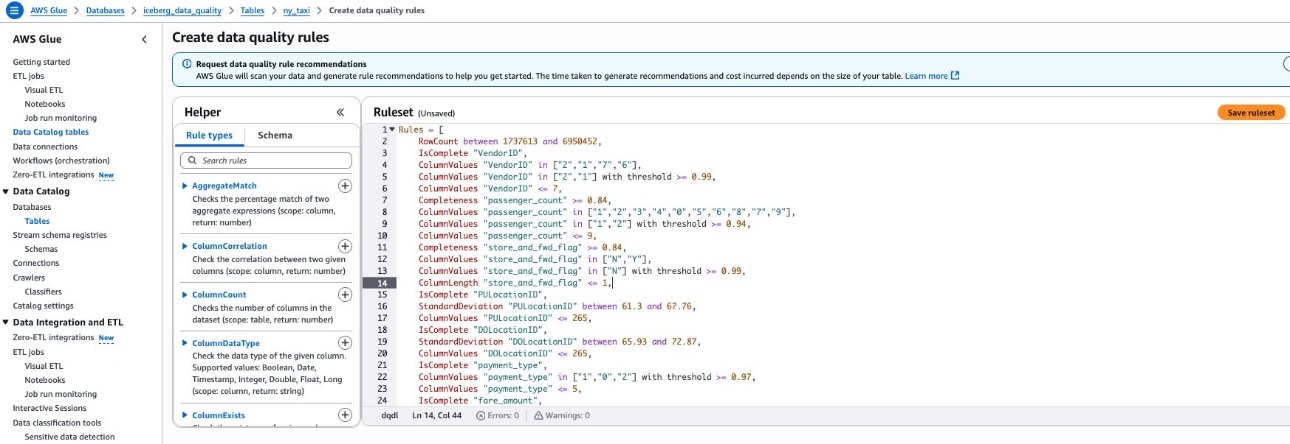

- Copy the ensuing guidelines out of your suggestion run by deciding on the dq-run ID and selecting Copy.

- Navigate again to the desk beneath the Knowledge high quality tab and select Create information high quality guidelines. Paste the ruleset from step 1 right here. Select Save ruleset, as proven within the following screenshot.

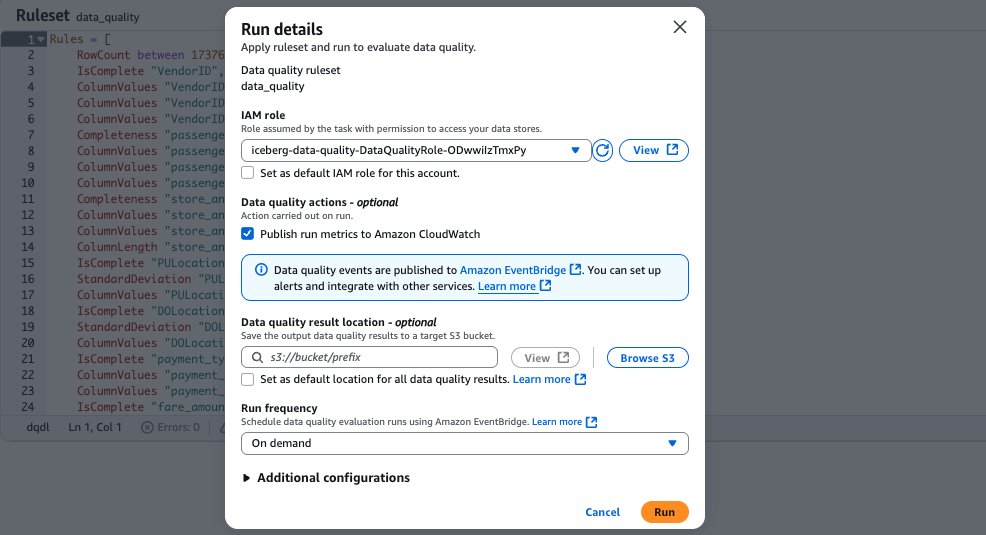

- After saving your ruleset, navigate again to the Knowledge High quality tab to your Apache Iceberg desk on the final objective S3 bucket. Choose the ruleset you created. To run the information high quality analysis run on the ruleset utilizing your information high quality function, select Run, as proven within the following screenshot.

Generate recommendations for the S3 table on the S3 table bucket

In this section, we show how to use the AWS Command Line Interface (AWS CLI) to generate recommendations for your S3 table on the S3 table bucket. This also creates a data quality ruleset for the S3 table. Follow these steps:

- Fill in your S3 table namespace name, S3 table table name, Catalog ID, and Data Quality role ARN in the following JSON file and save it locally:

- Enter the following AWS CLI command, replacing the local file name and region with your own information:

- Run the following AWS CLI command to confirm the recommendation run succeeds:
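The same three steps can also be sketched with the AWS SDK for Python (boto3) instead of the AWS CLI. Treat this as an illustrative sketch: the helper names and the ruleset name passed by the caller are hypothetical, and the polling interval is an arbitrary choice. The request fields mirror the values the steps ask you to fill in (namespace name, table name, catalog ID, and role ARN).

```python
import time

# Statuses after which a data quality run no longer changes state.
TERMINAL_STATUSES = {"SUCCEEDED", "FAILED", "STOPPED", "TIMEOUT"}


def build_recommendation_request(namespace, table, catalog_id, role_arn, ruleset_name):
    """Request for Glue start_data_quality_rule_recommendation_run.
    CreatedRulesetName asks Glue to save the recommended rules as a ruleset."""
    return {
        "DataSource": {
            "GlueTable": {
                "DatabaseName": namespace,
                "TableName": table,
                "CatalogId": catalog_id,
            }
        },
        "Role": role_arn,
        "CreatedRulesetName": ruleset_name,
    }


def wait_for_run(get_status, poll_seconds=30, max_polls=60, sleep=time.sleep):
    """Poll get_status() until it returns a terminal status."""
    for _ in range(max_polls):
        status = get_status()
        if status in TERMINAL_STATUSES:
            return status
        sleep(poll_seconds)
    raise TimeoutError("Run did not reach a terminal status in time")


def run_recommendation(request, region):
    """Start the recommendation run and wait for it to finish.
    boto3 is imported lazily so the helpers above work offline."""
    import boto3

    glue = boto3.client("glue", region_name=region)
    run_id = glue.start_data_quality_rule_recommendation_run(**request)["RunId"]
    status = wait_for_run(
        lambda: glue.get_data_quality_rule_recommendation_run(RunId=run_id)["Status"]
    )
    return run_id, status
```

A run that ends in `SUCCEEDED` corresponds to the confirmation in the last step; any other terminal status is worth inspecting before you evaluate the ruleset.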

Run data quality rules for the S3 table on the S3 table bucket

In this section, we show how to use the AWS CLI to evaluate the data quality ruleset that we just created for the S3 table on the S3 table bucket. Follow these steps:

- Replace the S3 table namespace name, S3 table table name, Catalog ID, and Data Quality role ARN with your own information in the following JSON file and save it locally:

- Run the following AWS CLI command, replacing the local file name and region with your information:

- Run the following AWS CLI command, replacing the region and data quality run ID with your information:
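As an alternative to the AWS CLI, the evaluation run can be sketched with boto3. The helper names here are hypothetical and the polling interval is an arbitrary choice; the request carries the same values the steps ask you to supply, plus the name of the ruleset to evaluate.

```python
def build_evaluation_request(namespace, table, catalog_id, role_arn, ruleset_names):
    """Request for Glue start_data_quality_ruleset_evaluation_run."""
    return {
        "DataSource": {
            "GlueTable": {
                "DatabaseName": namespace,
                "TableName": table,
                "CatalogId": catalog_id,
            }
        },
        "Role": role_arn,
        "RulesetNames": list(ruleset_names),
    }


def failed_rules(result):
    """Return the rule results that did not pass from one
    get_data_quality_result response."""
    return [r for r in result.get("RuleResults", []) if r.get("Result") != "PASS"]


def run_evaluation(request, region, poll_seconds=30):
    """Start the evaluation, wait for a terminal status, and fetch the results.
    boto3 is imported lazily so the helpers above work offline."""
    import time

    import boto3

    glue = boto3.client("glue", region_name=region)
    run_id = glue.start_data_quality_ruleset_evaluation_run(**request)["RunId"]
    while True:
        run = glue.get_data_quality_ruleset_evaluation_run(RunId=run_id)
        if run["Status"] in ("SUCCEEDED", "FAILED", "STOPPED", "TIMEOUT"):
            break
        time.sleep(poll_seconds)
    # Each result ID points to the per-rule outcomes and the overall score.
    return [
        glue.get_data_quality_result(ResultId=result_id)
        for result_id in run.get("ResultIds", [])
    ]
```

Passing each returned result to `failed_rules` gives a quick list of the rules that need attention before you look at the results in SageMaker Unified Studio.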

View results in SageMaker Unified Studio

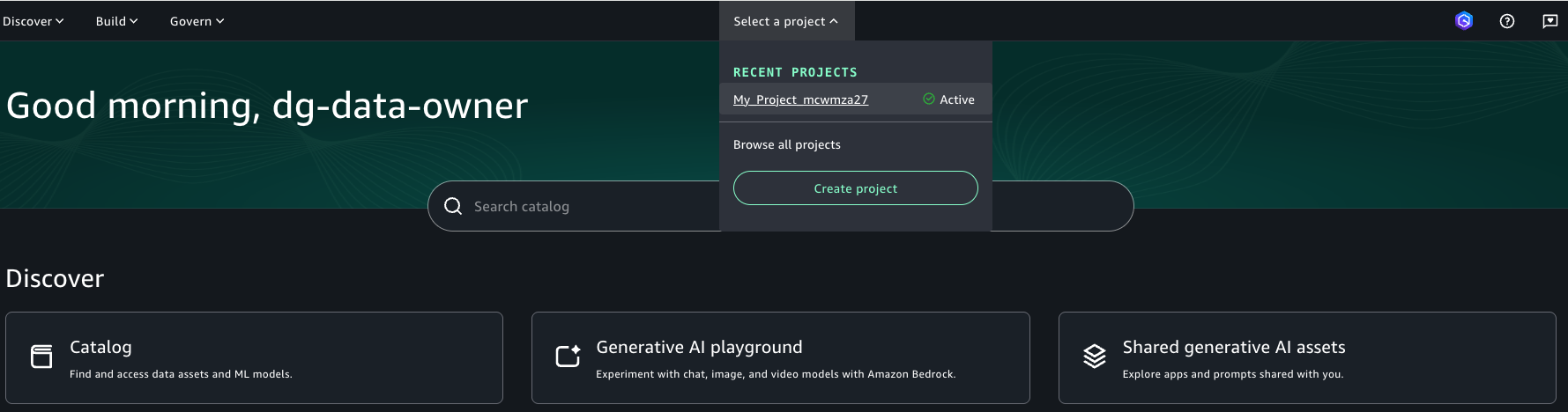

Complete the following steps to view results from your data quality evaluation runs in SageMaker Unified Studio:

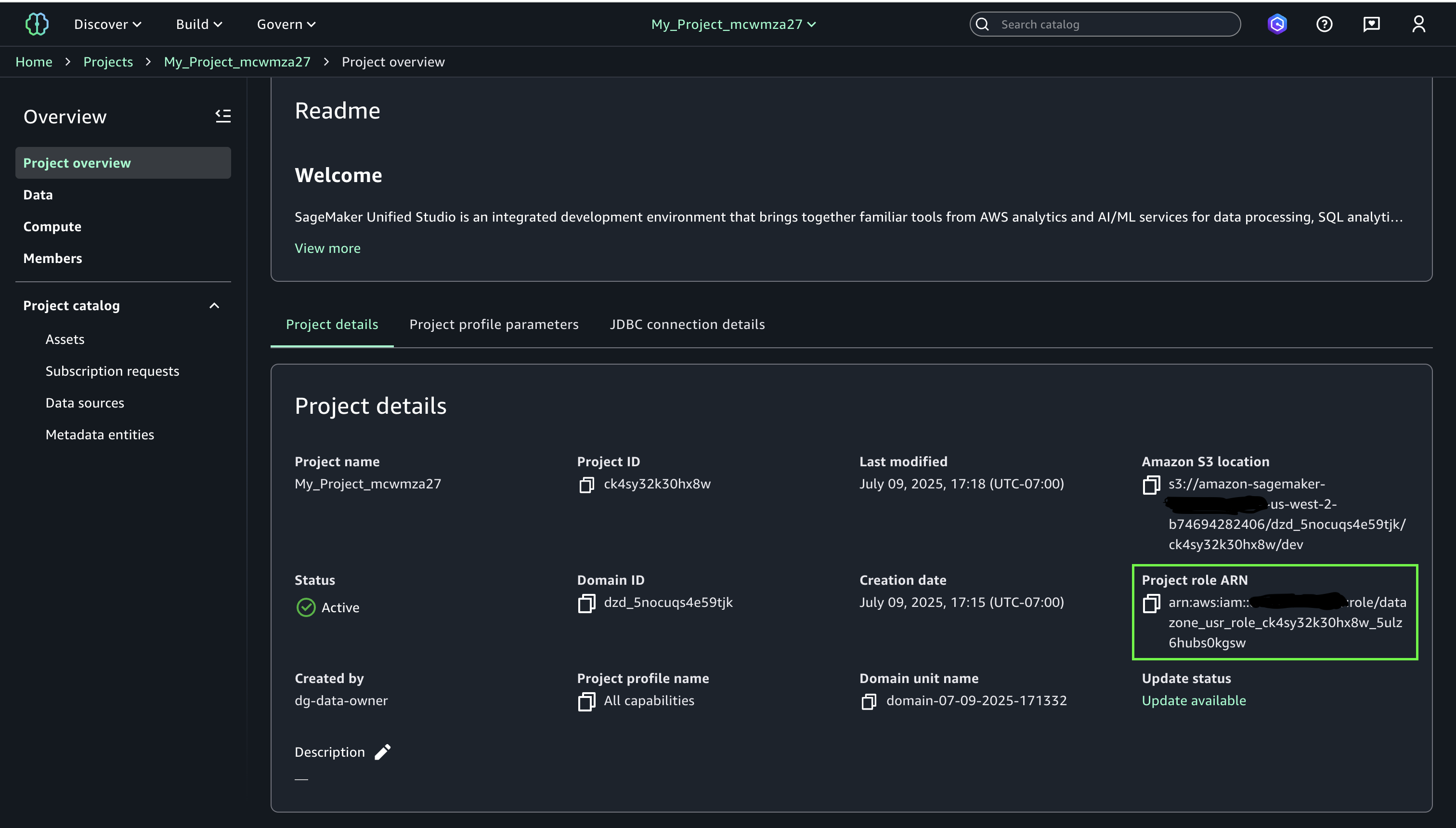

- Log in to the SageMaker Unified Studio portal using your single sign-on (SSO).

- Navigate to your project and note the project role ARN.

- Navigate to the Lake Formation console with your Lake Formation data lake administrator permissions. Select the Apache Iceberg table that you created on the general purpose S3 bucket and choose Grant from the Actions dropdown menu. Grant the following Lake Formation permissions to the SageMaker Unified Studio project role you noted:

  - Describe for Table permissions and Grantable permissions

- Next, select your S3 table from the S3 table bucket catalog in Lake Formation and choose Grant from the Actions dropdown menu. Grant the following Lake Formation permissions to the same SageMaker Unified Studio project role:

  - Describe for Table permissions and Grantable permissions

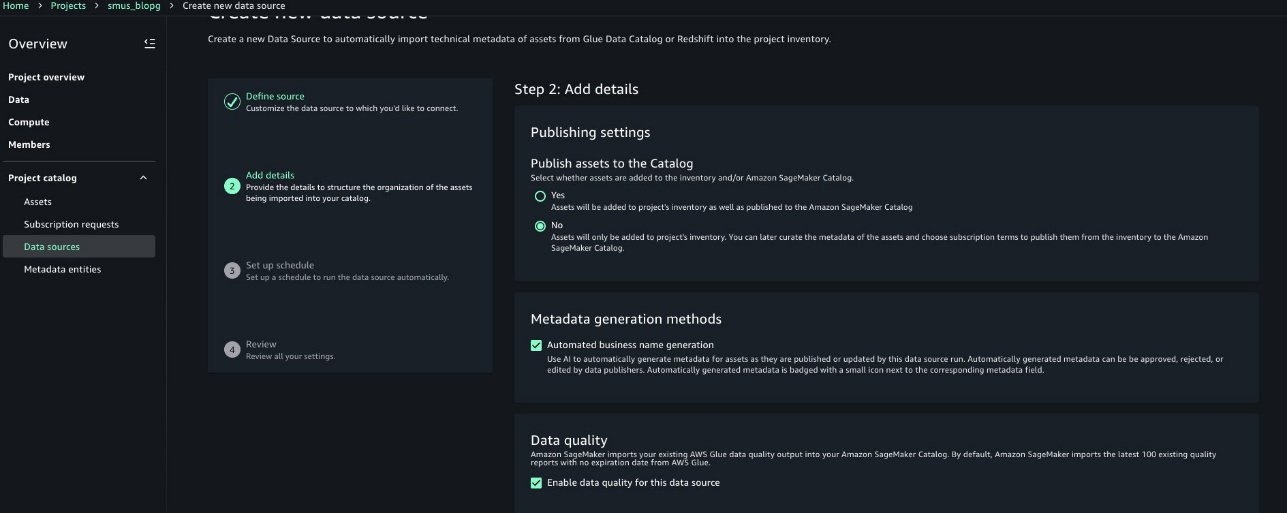

- Follow the steps at Create an Amazon SageMaker Unified Studio data source for AWS Glue in the project catalog to configure a data source for your GlueDatabase and for your S3 tables namespace.

  - Choose a name and optionally enter a description for your data source details.

  - Choose AWS Glue (Lakehouse) for your Data source type. Leave connection and data lineage as the default values.

  - Choose Use the AwsDataCatalog for the Apache Iceberg table on general purpose S3 bucket AWS Glue database.

  - Choose the Database name corresponding to the GlueDatabase. Choose Next.

  - Under Data quality, select Enable data quality for this data source. Leave the rest of the defaults.

- Configure the next data source with a name for your S3 table namespace. Optionally, enter a description for your data source details.

  - Choose AWS Glue (Lakehouse) for your Data source type. Leave connection and data lineage as the default values.

  - Choose to enter the catalog name: s3tablescatalog/<S3TableBucketName>

  - Choose the Database name corresponding to the S3 table namespace. Choose Next.

  - Select Enable data quality for this data source. Leave the rest of the defaults.

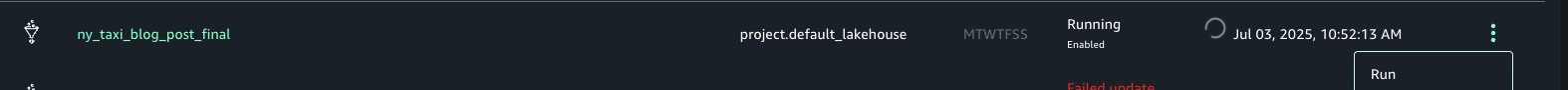

- Run each dataset.

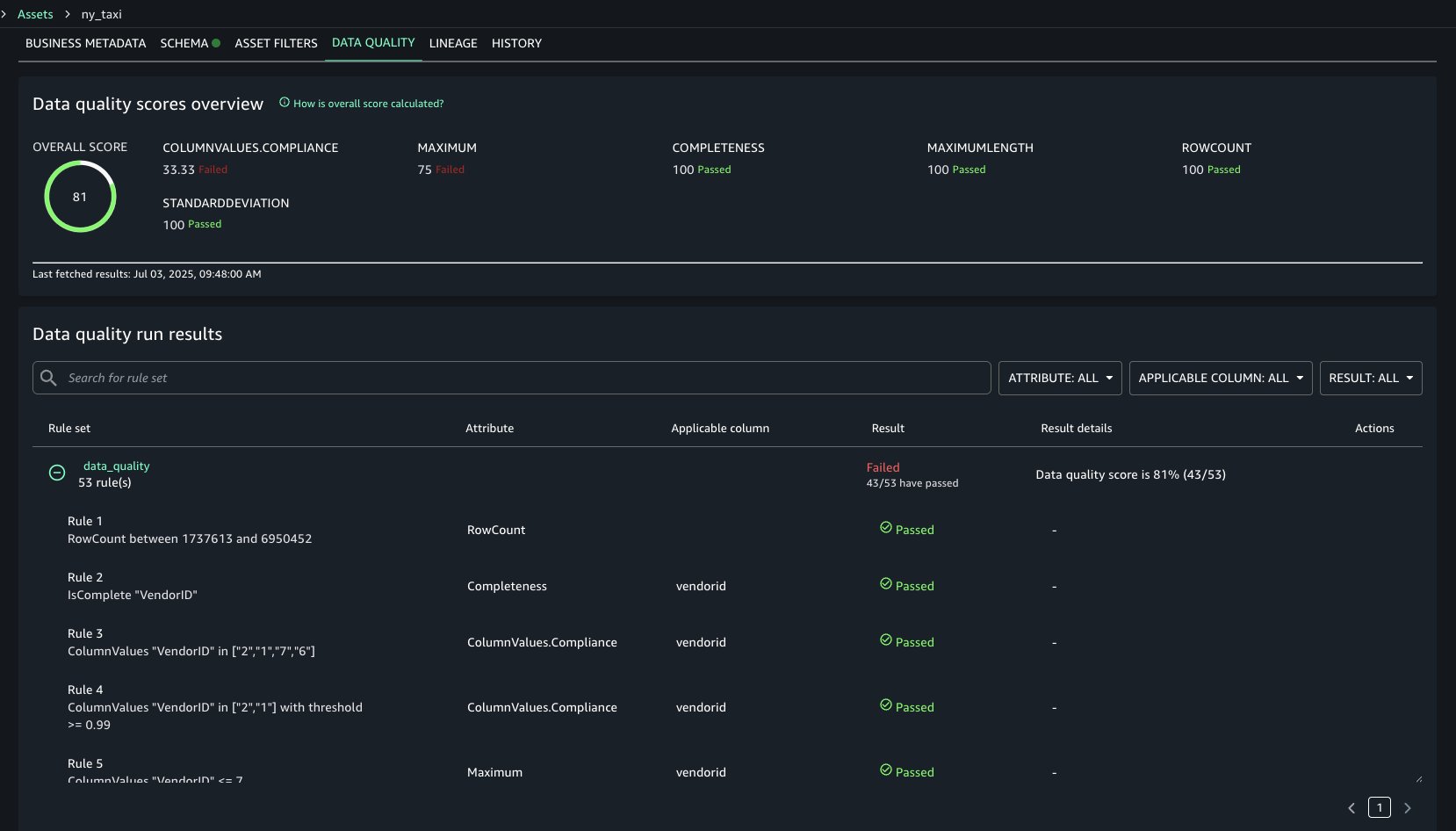

- Navigate to your project’s Assets and select the related asset that you created for the Apache Iceberg table on the general purpose S3 bucket. Navigate to the Data Quality tab to view your data quality results. You should be able to see the data quality results for the S3 table asset similarly.

The data quality results in the following screenshot show each rule evaluated in the selected data quality evaluation run and its result. The data quality score is the percentage of rules that passed, and the overview shows how certain rule types fared across the evaluation. For example, Completeness rule types all passed, but ColumnValues rule types passed only three out of nine times.
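To make the score concrete, the following sketch computes the percentage of passing rules and a per-rule-type breakdown from a list of rule results. The sample data is hypothetical: it mirrors the ColumnValues outcome described above (three of nine passed) and assumes three Completeness rules for illustration. Taking the first word of each rule description as its rule type is also an assumption about how the rules are written.

```python
from collections import defaultdict


def quality_score(rule_results):
    """Percentage of rules whose result is PASS."""
    if not rule_results:
        return 0.0
    passed = sum(1 for rule in rule_results if rule["Result"] == "PASS")
    return 100.0 * passed / len(rule_results)


def passed_by_rule_type(rule_results):
    """Map each rule type to (passed, total), using the first word of
    the rule description as the rule type."""
    counts = defaultdict(lambda: [0, 0])
    for rule in rule_results:
        rule_type = rule["Description"].split()[0]
        counts[rule_type][1] += 1
        if rule["Result"] == "PASS":
            counts[rule_type][0] += 1
    return {rule_type: tuple(pair) for rule_type, pair in counts.items()}


# Hypothetical results: 3 Completeness rules pass, 3 of 9 ColumnValues rules pass.
sample_results = (
    [{"Description": 'Completeness "col" > 0.9', "Result": "PASS"}] * 3
    + [{"Description": 'ColumnValues "col" >= 0', "Result": "PASS"}] * 3
    + [{"Description": 'ColumnValues "col" <= 100', "Result": "FAIL"}] * 6
)

print(quality_score(sample_results))
print(passed_by_rule_type(sample_results))
```

With this sample, six of twelve rules pass, so failing ColumnValues rules pull the overall score down even though every Completeness rule passes.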

Cleanup

To avoid incurring future charges, clean up the resources you created during this walkthrough:

- Navigate to the blog post output bucket and delete its contents.

- Unregister the data lake location for your output bucket in Lake Formation.

- Revoke the Lake Formation permissions for your SageMaker project role, your data quality role, and your AWS Glue job role.

- Delete the input data file and the job scripts from your bucket.

- Delete the S3 table.

- Delete the CloudFormation stack.

- [Optional] Delete your SageMaker Unified Studio domain and the associated CloudFormation stacks it created on your behalf.

Conclusion

In this post, we demonstrated how you can now generate data quality recommendations for your lakehouse architecture using Apache Iceberg tables on general purpose Amazon S3 buckets and Amazon S3 Tables. Then we showed how to integrate and view these data quality results in Amazon SageMaker Unified Studio. Try this out for your own use case and share your feedback and questions in the comments.

About the Authors

Brody Pearman is a Senior Cloud Support Engineer at Amazon Web Services (AWS). He’s passionate about helping customers use AWS Glue ETL to transform and create their data lakes on AWS while maintaining high data quality. In his free time, he enjoys watching soccer with his friends and walking his dog.


Shiv Narayanan is a Technical Product Manager for AWS Glue’s data management capabilities like data quality, sensitive data detection, and streaming capabilities. Shiv has over 20 years of data management experience in consulting, business development, and product management.


Shriya Vanvari is a Software Developer Engineer in AWS Glue. She is passionate about learning how to build efficient and scalable systems to provide a better experience for customers. Outside of work, she enjoys reading and chasing sunsets.


Narayani Ambashta is an Analytics Specialist Solutions Architect at AWS, focusing on the automotive and manufacturing sector, where she guides strategic customers in developing modern data and AI strategies. With over 15 years of cross-industry experience, she specializes in big data architecture, real-time analytics, and AI/ML technologies, helping organizations implement modern data architectures. Her expertise spans lakehouse architecture, generative AI, and IoT platforms, enabling customers to drive digital transformation initiatives. When not architecting modern solutions, she enjoys staying active through sports and yoga.
