Last year, we launched foundation model support in Databricks Model Serving to enable enterprises to build secure and custom GenAI apps on a unified data and AI platform. Since then, thousands of organizations have used Model Serving to deploy GenAI apps customized to their unique datasets.

Today, we’re excited to announce new updates that make it easier to experiment, customize, and deploy GenAI apps. These updates include access to new large language models (LLMs), easier discovery, simpler customization options, and improved monitoring. Together, these improvements help you develop and scale GenAI apps more quickly and at a lower cost.

“Databricks Model Serving is accelerating our AI-driven projects by making it easy to securely access and manage multiple SaaS and open models, including those hosted on or outside Databricks. Its centralized approach simplifies security and cost management, allowing our data teams to focus more on innovation and less on administrative overhead.” – Greg Rokita, VP, Technology at Edmunds.com

Access New Open and Proprietary Models Through a Unified Interface

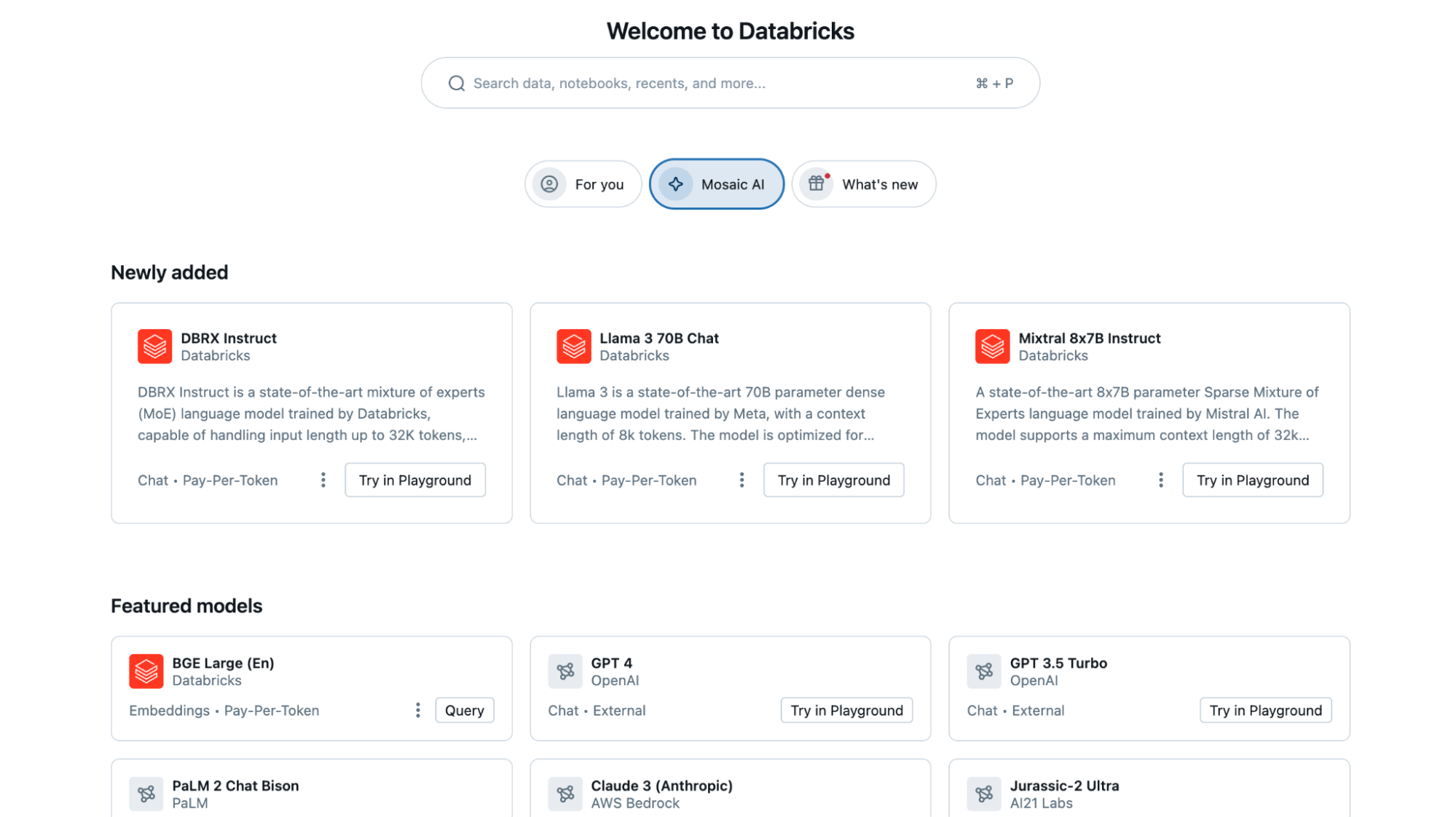

We’re continually adding new open-source and proprietary models to Model Serving, giving you access to a broader range of options via a unified interface.

- New Open Source Models: Recent additions, such as DBRX and Llama-3, set a new benchmark for open language models, delivering capabilities that rival the most advanced closed model offerings. These models are instantly accessible on Databricks via Foundation Model APIs with optimized GPU inference, keeping your data secure within Databricks’ security perimeter.

- New External Models Support: The External Models feature now supports the latest proprietary state-of-the-art models, including Gemini Pro and Claude 3. External models allow you to securely manage third-party model provider credentials and provide rate limiting and permission support.

All models can be accessed via a unified OpenAI-compatible API and SQL interface, making it easy to compare, experiment with, and select the best model for your needs.

```python
from openai import OpenAI

client = OpenAI(
    api_key='DATABRICKS_TOKEN',  # placeholder for your Databricks access token
    base_url='https://<YOUR WORKSPACE ID>.cloud.databricks.com/serving-endpoints'
)

chat_completion = client.chat.completions.create(
    messages=[
        {
            "role": "user",
            "content": "Tell me about Large Language Models"
        }
    ],
    # Specify the model, either external or hosted on Databricks. For instance,
    # replace 'claude-3-sonnet' with 'databricks-dbrx-instruct'
    # to use a Databricks-hosted model.
    model='claude-3-sonnet'
)

print(chat_completion.choices[0].message.content)
```

“At Experian, we’re developing Gen AI models with the lowest rates of hallucination while preserving core functionality. Utilizing the Mixtral 8x7b model on Databricks has facilitated rapid prototyping, revealing its superior performance and quick response times.” – James Lin, Head of AI/ML Innovation at Experian.
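
Because every model sits behind the same request shape, comparing candidates can be as simple as looping over endpoint names. The sketch below is illustrative only: `compare_models` and `fake_send` are hypothetical helpers, and `fake_send` stands in for a real chat-completion call so the example runs without a workspace or credentials.

```python
from typing import Callable, Dict, List


def compare_models(
    send: Callable[[str, List[dict]], str],
    models: List[str],
    prompt: str,
) -> Dict[str, str]:
    """Send the same prompt to each model and collect replies keyed by model name."""
    messages = [{"role": "user", "content": prompt}]
    return {model: send(model, messages) for model in models}


def fake_send(model: str, messages: List[dict]) -> str:
    # Hypothetical stand-in for a real call such as
    # client.chat.completions.create(model=model, messages=messages).
    return f"[{model}] reply to: {messages[0]['content']}"


replies = compare_models(
    fake_send,
    ["claude-3-sonnet", "databricks-dbrx-instruct"],
    "Tell me about Large Language Models",
)
for name, text in replies.items():
    print(name, "->", text)
```

In practice you would pass a function that wraps your real client instead of `fake_send`; the comparison loop itself would not need to change.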

Discover Models and Endpoints Through the New Discovery Page and Search Experience

As we continue to expand the list of models on Databricks, many of you have shared that discovering them has become more difficult. We’re excited to introduce new capabilities to simplify model discovery:

- Personalized Homepage: The new homepage personalizes your Databricks experience based on your common actions and workloads. The ‘Mosaic AI’ tab on the Databricks homepage showcases state-of-the-art models for easy discovery. To enable this Preview feature, go to your account profile and navigate to Settings > Developer > Databricks Homepage.

- Universal Search: The search bar now supports models and endpoints, providing a faster way to find existing models and endpoints, reducing discovery time, and facilitating model reuse.

Build Compound AI Systems with Chain Apps and Function Calling

Most GenAI applications require combining LLMs or integrating them with external systems. With Databricks Model Serving, you can deploy custom orchestration logic using LangChain or arbitrary Python code. This enables you to manage and deploy an end-to-end application entirely on Databricks. We’re introducing updates to make compound systems even easier to build on the platform.

- Vector Search (now GA): Databricks Vector Search seamlessly integrates with Model Serving, providing accurate and contextually relevant responses. Now generally available, it’s ready for large-scale production deployments.

- Function Calling (Preview): Currently in private preview, function calling allows LLMs to generate structured responses more reliably. This capability lets you use an LLM as an agent that can call functions by outputting JSON objects and mapping arguments. Common function calling examples include calling external services like DBSQL, translating natural language into API calls, and extracting structured data from text. Join the preview.

- Guardrails (Preview): In private preview, guardrails provide request and response filtering for harmful or sensitive content. Join the preview.

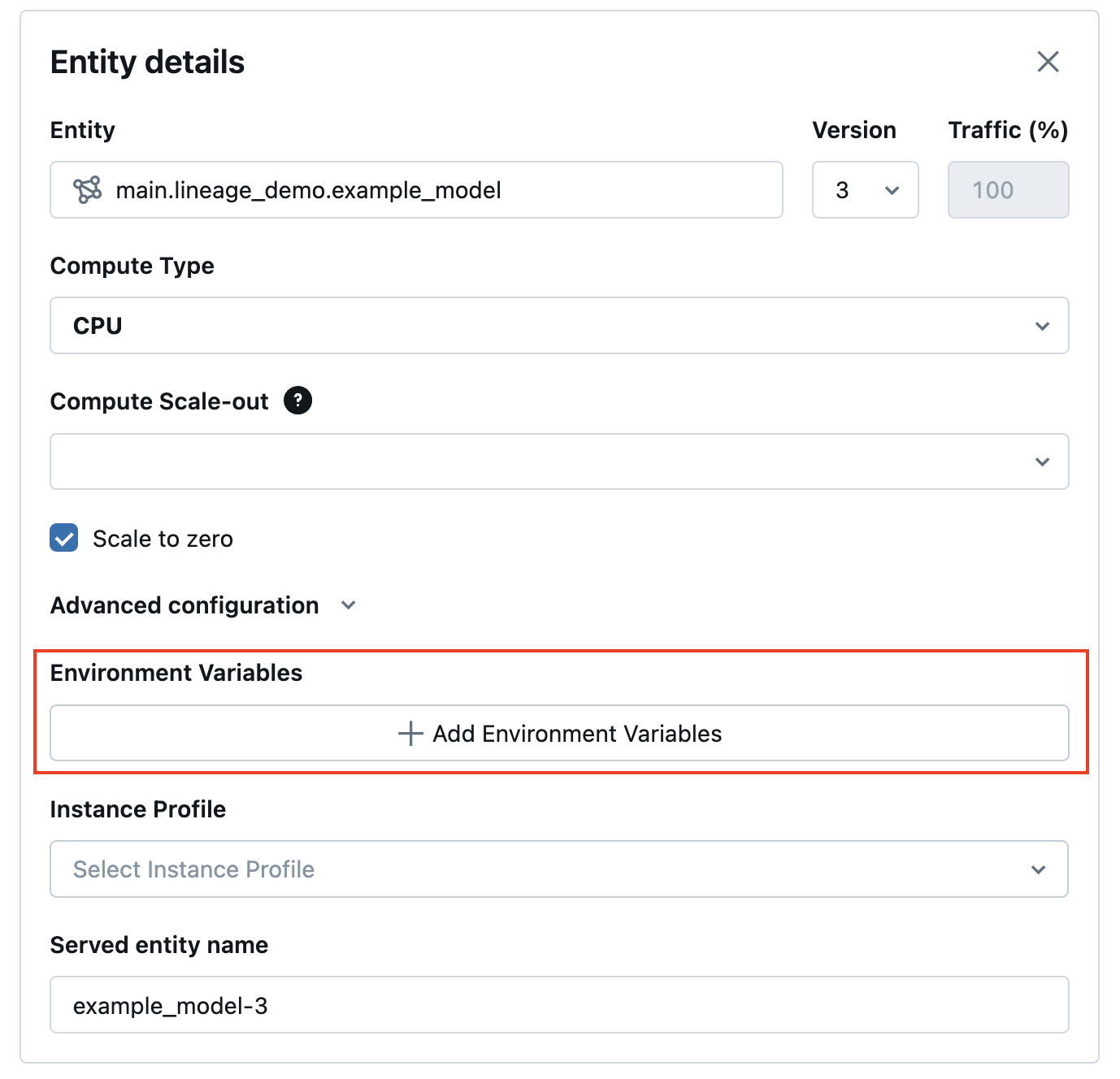

- Secrets UI: The new Secrets UI streamlines the addition of environment variables and secrets to endpoints, facilitating seamless communication with external systems (an API is also available).
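
To make the function calling idea concrete, here is a minimal sketch of the application side: the model emits a JSON object naming a function and its arguments, and your code maps that onto a real Python function. Everything here is an assumption for illustration: `get_weather`, the `TOOLS` registry, and the `{"name": ..., "arguments": ...}` shape are hypothetical and are not the preview feature’s actual response format.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str, unit: str = "celsius") -> str:
    # Hypothetical tool; a real one might query DBSQL or an external API.
    return f"Weather lookup for {city} in {unit}"


# Registry of functions the model is allowed to call, keyed by name.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch_tool_call(raw: str) -> Any:
    """Parse a JSON tool call produced by an LLM and invoke the matching function."""
    call = json.loads(raw)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown function: {name}")
    arguments = call.get("arguments", {})
    # Some APIs encode arguments as a JSON string rather than an object; accept both.
    if isinstance(arguments, str):
        arguments = json.loads(arguments)
    return TOOLS[name](**arguments)


# Assumed example of what a model might output for "What's the weather in Paris?"
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
result = dispatch_tool_call(model_output)
print(result)
```

Restricting dispatch to an explicit registry keeps the model from invoking anything you have not deliberately exposed.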

More updates are coming soon, including streaming support for LangChain and PyFunc models and playground integration to further simplify building production-grade compound AI apps on Databricks.

“By bringing model serving and monitoring together, we can ensure deployed models are always up-to-date and delivering accurate results. This streamlined approach allows us to focus on maximizing the business impact of AI without worrying about availability and operational concerns.” – Don Scott, VP Product Development at Hitachi Solutions

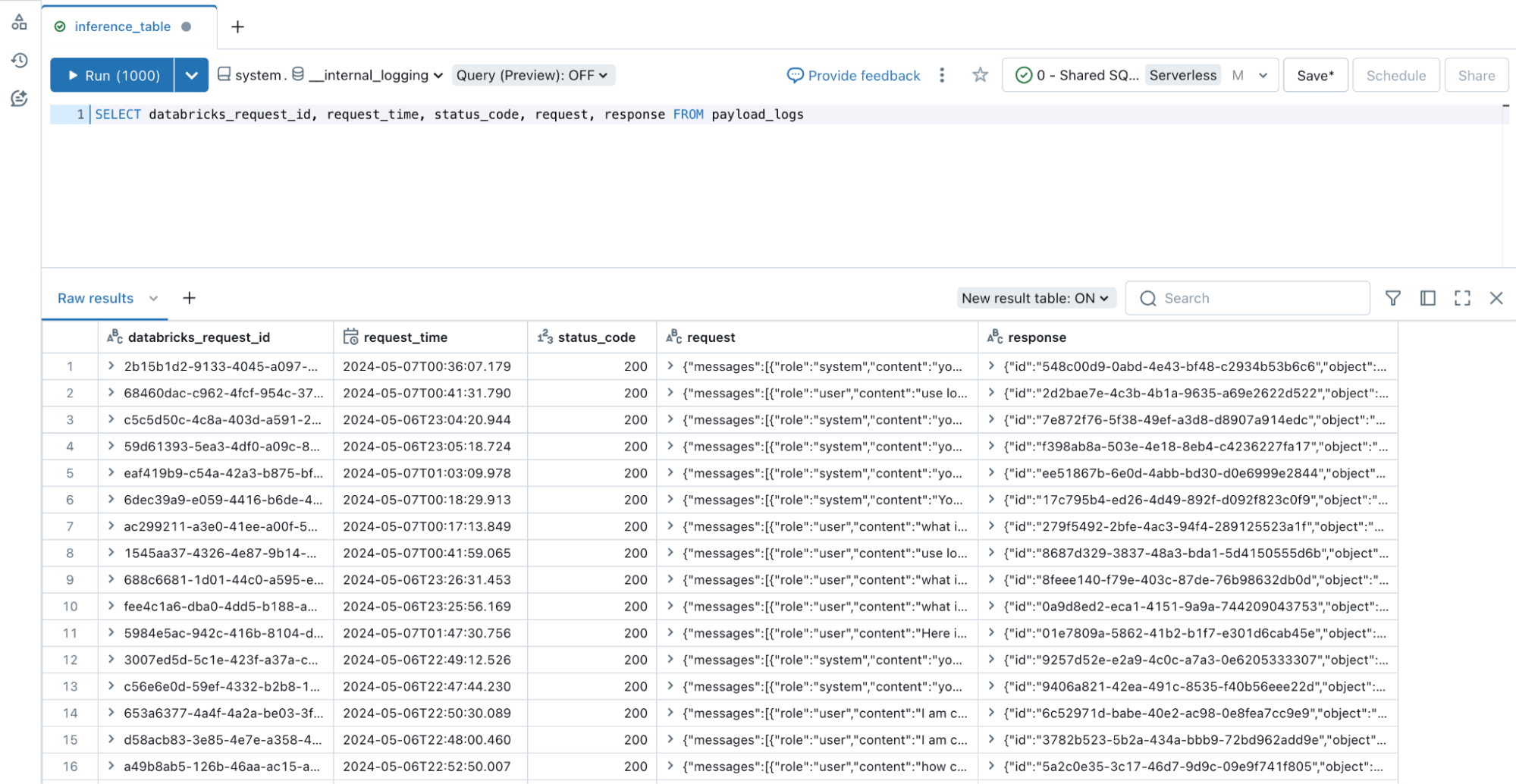

Monitor All Types of Endpoints with Inference Tables

Monitoring LLMs and other AI models is just as important as deploying them. We’re excited to announce that Inference Tables now support all endpoint types, including GPU-deployed and externally hosted models. Inference Tables continuously capture inputs and predictions from Databricks Model Serving endpoints and log them into a Unity Catalog Delta Table. You can then use existing data tools to evaluate, monitor, and fine-tune your AI models.

To join the preview, go to your Account > Previews > Enable Inference Tables For External Models And Foundation Models.
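
Once requests and responses land in a table, monitoring becomes an ordinary data problem. The sketch below uses plain Python over a few hand-written rows to show the kind of summary you might compute. The column names (`status_code`, `execution_time_ms`, `request`, `response`) and the sample rows are assumptions made for illustration, so check your own table’s schema before relying on them; in a workspace you would run an equivalent query against the Delta table itself.

```python
import json
from statistics import mean
from typing import Dict, List

# Hypothetical rows shaped like logged endpoint traffic (not real data).
rows: List[Dict] = [
    {"status_code": 200, "execution_time_ms": 400,
     "request": '{"messages": [{"role": "user", "content": "Hi"}]}',
     "response": '{"choices": [{"message": {"content": "Hello!"}}]}'},
    {"status_code": 200, "execution_time_ms": 600,
     "request": '{"messages": [{"role": "user", "content": "Define LLM"}]}',
     "response": '{"choices": [{"message": {"content": "A large language model."}}]}'},
    {"status_code": 429, "execution_time_ms": 50,
     "request": '{"messages": [{"role": "user", "content": "Hi again"}]}',
     "response": '{"error": "rate limited"}'},
]


def summarize(rows: List[Dict]) -> Dict[str, float]:
    """Compute request count, error rate, and mean latency of successful calls."""
    ok = [r for r in rows if r["status_code"] == 200]
    return {
        "requests": len(rows),
        "error_rate": (1 - len(ok) / len(rows)) if rows else 0.0,
        "avg_latency_ms": mean(r["execution_time_ms"] for r in ok) if ok else 0.0,
    }


def last_user_prompt(row: Dict) -> str:
    """Extract the final user message from a logged chat request."""
    messages = json.loads(row["request"])["messages"]
    return messages[-1]["content"]


summary = summarize(rows)
print(summary)
print([last_user_prompt(r) for r in rows])
```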

Get Started Today!

Visit the Databricks AI Playground to try Foundation Models directly from your workspace. For more information, please refer to the following resources: