Have you ever had the experience of rereading a sentence multiple times only to realize you still don't understand it? As taught to scores of incoming college freshmen, when you realize you're spinning your wheels, it's time to change your approach.

This process, becoming aware of something not working and then changing what you're doing, is the essence of metacognition, or thinking about thinking.

It's your brain monitoring its own thinking, recognizing a problem, and controlling or adjusting your approach. In fact, metacognition is fundamental to human intelligence and, until recently, has been understudied in artificial intelligence systems.

My colleagues Charles Courchaine, Hefei Qiu, and Joshua Iacoboni and I are working to change that. We've developed a mathematical framework designed to allow generative AI systems, specifically large language models like ChatGPT or Claude, to monitor and regulate their own internal "cognitive" processes. In some sense, you can think of it as giving generative AI an inner monologue: a way to assess its own confidence, detect confusion, and decide when to think harder about a problem.

Why Machines Need Self-Awareness

Today's generative AI systems are remarkably capable but fundamentally unaware. They generate responses without genuinely knowing how confident or confused they are about a response, whether it contains conflicting information, or whether a problem deserves extra attention. This limitation becomes critical because generative AI's inability to recognize its own uncertainty can have serious consequences, particularly in high-stakes applications such as medical diagnosis, financial advice, and autonomous vehicle decision-making.

For example, consider a medical generative AI system analyzing symptoms. It might confidently suggest a diagnosis without any mechanism to recognize situations where it would be more appropriate to pause and reflect, such as "These symptoms contradict each other" or "This is unusual; I should think more carefully."

Developing such a capability would require metacognition, which involves both the ability to monitor one's own reasoning through self-awareness and to control the response through self-regulation.

Inspired by neurobiology, our framework aims to give generative AI a semblance of these capabilities by using what we call a metacognitive state vector, which is essentially a quantified measure of the generative AI's internal "cognitive" state across five dimensions.

Five Dimensions of Machine Self-Awareness

One way to think about these five dimensions is to imagine giving a generative AI system five different sensors for its own thinking.

We quantify each of these concepts within an overall mathematical framework to create the metacognitive state vector and use it to control ensembles of large language models. In essence, the metacognitive state vector converts a large language model's qualitative self-assessments into quantitative signals that it can use to control its responses.

For example, when a large language model's confidence in a response drops below a certain threshold, or the conflicts in the response exceed some acceptable level, it might shift from fast, intuitive processing to slow, deliberative reasoning. This is analogous to what psychologists call System 1 and System 2 thinking in humans.
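The article does not publish the framework's equations, so what follows is only a minimal Python sketch of the idea in the two paragraphs above, built on stated assumptions. The article names confidence and conflict as signals. The other three dimension names (`novelty`, `importance`, `emotional_charge`) are hypothetical placeholders. The label-to-score mapping and both thresholds are also illustrative choices, not values from the authors' framework.

```python
from dataclasses import dataclass, fields

# Hypothetical mapping from a model's verbal self-assessment to a score in [0, 1].
LEVELS = {"low": 0.2, "medium": 0.5, "high": 0.8}


@dataclass
class MetacognitiveState:
    """Five-dimensional state vector; every component is a score in [0, 1].

    'confidence' and 'conflict' follow the article's examples; the other three
    names are illustrative placeholders, not the framework's definitions.
    """
    confidence: float
    conflict: float
    novelty: float
    importance: float
    emotional_charge: float

    @classmethod
    def from_self_assessment(cls, labels: dict[str, str]) -> "MetacognitiveState":
        # Turn qualitative labels ("low" / "medium" / "high") into numeric signals,
        # one per dataclass field.
        return cls(**{f.name: LEVELS[labels[f.name]] for f in fields(cls)})


def choose_mode(state: MetacognitiveState,
                confidence_threshold: float = 0.5,
                conflict_threshold: float = 0.5) -> str:
    """Stay in fast "system1" mode unless confidence is too low or conflict too high."""
    if state.confidence < confidence_threshold or state.conflict > conflict_threshold:
        return "system2"  # slow, deliberative reasoning
    return "system1"      # fast, intuitive processing


routine = MetacognitiveState.from_self_assessment(
    {"confidence": "high", "conflict": "low", "novelty": "low",
     "importance": "medium", "emotional_charge": "low"})
print(choose_mode(routine))  # system1
```

A routine question with high self-reported confidence and little conflict stays on the fast path. Changing `"conflict"` to `"high"` pushes that component to 0.8, which exceeds the 0.5 threshold and switches the sketch into `"system2"`. A fuller version would presumably weigh all five components rather than two.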

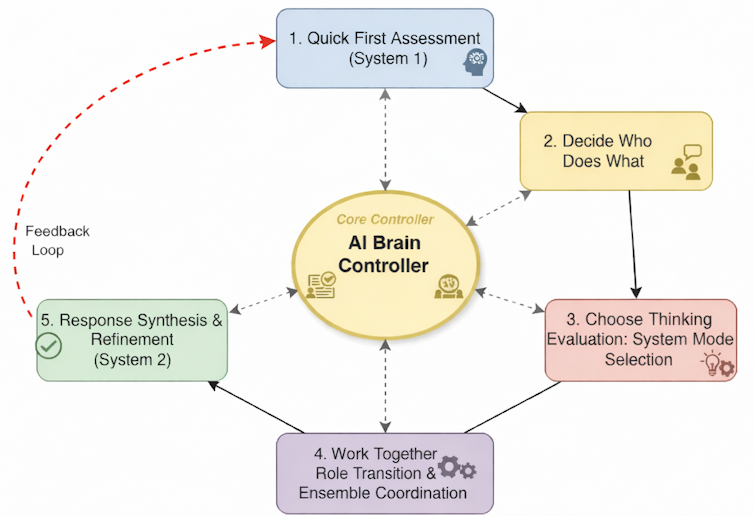

This conceptual diagram shows the basic idea for giving a set of large language models an awareness of the state of their processing. Ricky J. Sethi

Conducting an Orchestra

Imagine a large language model ensemble as an orchestra where each musician, an individual large language model, comes in at certain times based on cues from the conductor. The metacognitive state vector acts as the conductor's awareness, constantly monitoring whether the orchestra is in harmony, whether someone is out of tune, or whether a particularly difficult passage requires extra attention.

When performing a familiar, well-rehearsed piece, like a simple folk melody, the orchestra easily plays in quick, efficient unison with minimal coordination. This is the System 1 mode. Each musician knows their part, the harmonies are straightforward, and the ensemble operates almost automatically.

But when the orchestra encounters a complex jazz composition with conflicting time signatures, dissonant harmonies, or sections requiring improvisation, the musicians need greater coordination. The conductor directs the musicians to shift roles: some become section leaders, others provide rhythmic anchoring, and soloists emerge for particular passages.

This is the kind of system we hope to create in a computational context by implementing our framework to orchestrate ensembles of large language models. The metacognitive state vector informs a control system that acts as the conductor, telling the ensemble when to switch to System 2 mode. The control system can then tell each large language model to assume a different role, such as critic or expert, and coordinate their complex interactions based on the metacognitive assessment of the situation.
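The article does not describe how roles are assigned or how the models exchange outputs. The Python sketch below shows one possible "conductor" loop and rests entirely on assumptions. Each model is stood in for by a plain function from prompt to reply. The mode string is assumed to come from a check like the threshold rule described earlier. The draft, critique and revise sequence, along with the `"responder"` and `"reviser"` role names, is a hypothetical choice. Only the expert and critic roles come from the text.

```python
from typing import Callable

# Stand-in type: any function mapping a prompt string to a reply string.
# Real LLM API clients are deliberately left out of this sketch.
Model = Callable[[str], str]


def orchestrate(prompt: str, models: dict[str, Model],
                mode: str) -> list[tuple[str, str, str]]:
    """Run the ensemble and return a transcript of (role, model name, output) steps."""
    if not models:
        raise ValueError("need at least one model")
    names = list(models)  # dicts keep insertion order, so the first model leads
    lead = names[0]

    if mode == "system1":
        # Fast path: a single model answers directly, no coordination needed.
        return [("responder", lead, models[lead](prompt))]

    # Slow path: the lead drafts as the "expert" ...
    draft = models[lead](f"Answer carefully: {prompt}")
    transcript = [("expert", lead, draft)]
    # ... every other model critiques that draft ...
    for name in names[1:]:
        critique = models[name](f"Critique this answer to '{prompt}': {draft}")
        transcript.append(("critic", name, critique))
    # ... and the lead revises using all of the critiques.
    critiques = " | ".join(out for role, _, out in transcript if role == "critic")
    revised = models[lead](f"Revise '{draft}' using these critiques: {critiques}")
    transcript.append(("reviser", lead, revised))
    return transcript


fake = {"a": lambda p: "A", "b": lambda p: "B", "c": lambda p: "C"}
for role, name, output in orchestrate("Is this rash serious?", fake, "system2"):
    print(role, name, output)
```

With three stub models, the System 2 path produces four steps: an expert draft from `a`, critiques from `b` and `c`, then a revision by `a`. The System 1 path makes a single call. The transcript is returned rather than just the final answer so that the role each model played stays inspectable.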

Impact and Transparency

The implications extend far beyond making generative AI slightly smarter. In health care, a metacognitive generative AI system could recognize when symptoms don't match typical patterns and escalate the problem to human experts rather than risk a misdiagnosis. In education, it could adapt its teaching strategies when it detects student confusion. In content moderation, it could identify nuanced situations that require human judgment rather than applying rigid rules.

Perhaps most importantly, our framework makes generative AI decision-making more transparent. Instead of a black box that simply produces answers, we get systems that can explain their confidence levels, identify their uncertainties, and show why they chose particular reasoning strategies.

This interpretability and explainability are crucial for building trust in AI systems, especially in regulated industries and safety-critical applications.

The Road Ahead

Our framework doesn't give machines consciousness or true self-awareness in the human sense. Instead, we hope to provide a computational architecture for allocating resources and improving responses that also serves as a first step toward more sophisticated approaches to full artificial metacognition.

The next phase of our work involves validating the framework through extensive testing, measuring how metacognitive monitoring improves performance across diverse tasks, and extending the framework to start reasoning about reasoning, or metareasoning. We're particularly interested in scenarios where recognizing uncertainty is crucial, such as medical diagnosis, legal reasoning, and generating scientific hypotheses.

Our ultimate vision is generative AI systems that don't just process information but understand their own cognitive limitations and strengths. This means systems that know when to be confident and when to be cautious, when to think fast and when to slow down, and when they're qualified to answer and when they should defer to others.

This article is republished from The Conversation under a Creative Commons license.