MongoDB has grown from a basic JSON key-value store to one of the most popular NoSQL database solutions in use today. It's widely supported and provides flexible JSON document storage at scale. It also provides native querying and analytics capabilities. These attributes have led to MongoDB being widely adopted, especially alongside JavaScript web applications.

As capable as it is, there are still instances where MongoDB alone can't satisfy all of the requirements for an application, so getting a copy of the data into another platform via a change data capture (CDC) solution is required. This can be used to create data lakes, populate data warehouses or serve specific use cases like offloading analytics and text search.

In this post, we'll walk through how CDC works on MongoDB and how it can be implemented, and then delve into the reasons why you might want to implement CDC with MongoDB.

Bifurcation vs Polling vs Change Data Capture

Change data capture is a mechanism that can be used to move data from one data repository to another. There are other options:

- You can bifurcate incoming data, splitting it into multiple streams that can be sent to multiple data stores. Generally, this means your applications would submit new data to a queue. This isn't a great option because it limits the APIs your application can use to submit data to those that resemble a queue. Applications tend to need the support of higher-level APIs for things like ACID transactions, so we generally want to allow our application to talk directly to a database. The application could submit data via a microservice or application server that talks directly to the database, but this only moves the problem: those services would still need to talk directly to the database.

- You could periodically poll your front-end database and push data into your analytical platform. While this sounds simple, the details get tricky, particularly if you have to support updates to your data (and deletes, which leave no row behind for a poll to find). It turns out this is hard to do in practice. And you have now introduced another process that has to run, be monitored, scale and so on.
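To make the polling problem concrete, here's a minimal in-memory sketch in Python. It assumes that every writer stamps a hypothetical `updatedAt` field on each document and that the poller keeps a high-water mark. Updates are picked up, but a deleted document simply stops appearing in query results, so the replica never learns about the delete.

```python
from datetime import datetime, timedelta


def poll_changes(source, since):
    """Return documents whose (hypothetical) updatedAt is after `since`, plus the new mark."""
    changed = sorted(
        (doc for doc in source.values() if doc["updatedAt"] > since),
        key=lambda doc: doc["updatedAt"],
    )
    new_mark = changed[-1]["updatedAt"] if changed else since
    return changed, new_mark


def sync(source, replica, since):
    """Copy changed documents into the replica; returns the next high-water mark."""
    changed, mark = poll_changes(source, since)
    for doc in changed:
        replica[doc["_id"]] = dict(doc)
    return mark


# Dicts keyed by _id stand in for the MongoDB collection and the analytics copy.
t0 = datetime(2024, 1, 1)
source = {
    1: {"_id": 1, "name": "a", "updatedAt": t0},
    2: {"_id": 2, "name": "b", "updatedAt": t0},
}
replica = {}
mark = sync(source, replica, datetime.min)  # initial load copies both documents

source[1] = {"_id": 1, "name": "a2", "updatedAt": t0 + timedelta(minutes=1)}  # update
del source[2]  # delete: nothing left for the next poll to see
mark = sync(source, replica, mark)

print(replica)  # document 2 is still in the replica
```

A real poller would also have to cope with clock skew between writers, and with documents written later that share a timestamp with the last mark, which the strict `>` comparison here would skip.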

Using CDC avoids these problems. The application can still leverage the database features (perhaps via a service), and you don't have to set up a polling infrastructure. But there is another key difference: CDC gives you the freshest version of the data. CDC enables true real-time analytics on your application data, assuming the platform you send the data to can consume the events in real time.

Options For Change Data Capture on MongoDB

Apache Kafka

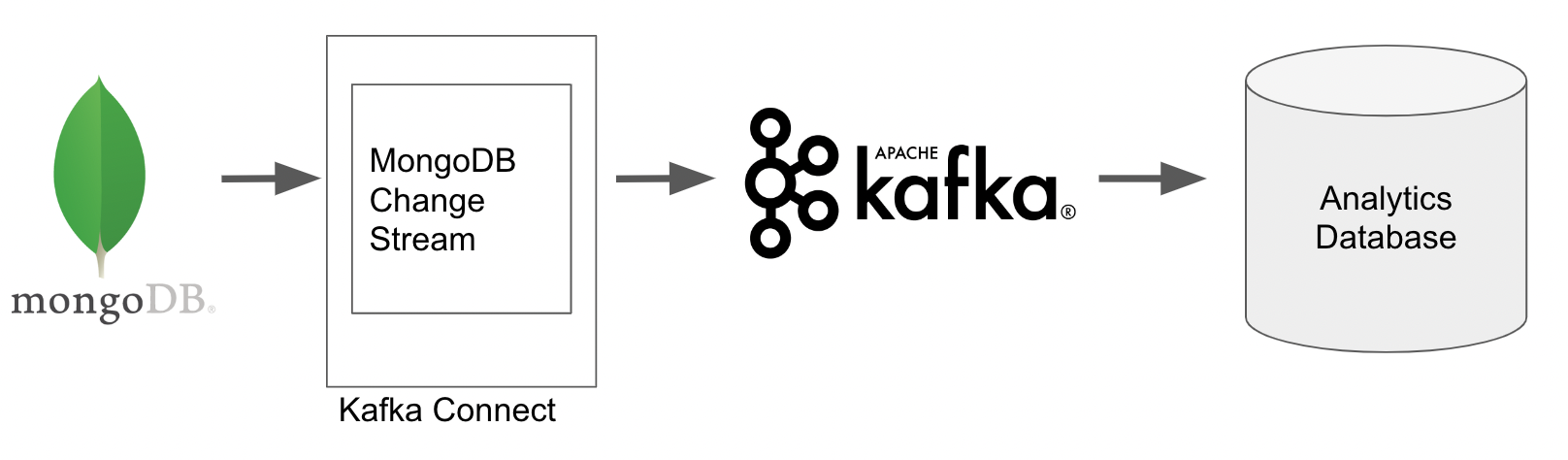

The native CDC architecture for capturing change events in MongoDB uses Apache Kafka. MongoDB provides Kafka source and sink connectors: the source connector writes change events to a Kafka topic, and the sink connector outputs those changes to another system such as a database or data lake.

The out-of-the-box connectors make it fairly simple to set up the CDC solution; however, they do require a Kafka cluster. If Kafka isn't already part of your architecture, it can add another layer of complexity and cost.
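As a rough illustration, a source connector is usually registered by POSTing its configuration to the Kafka Connect REST API. The sketch below assumes a Kafka Connect worker at `localhost:8083` and hypothetical `shop`/`orders` names. The property names come from the MongoDB Kafka connector, but check them against the connector version you deploy. The connector relies on change streams, which require MongoDB to run as a replica set or sharded cluster.

```python
import json
import urllib.request


def source_connector_payload(name, uri, database, collection, prefix="mongo"):
    """Build a Kafka Connect request body for the MongoDB source connector."""
    # Only forward data-changing events; this is a change stream $match stage.
    match_stage = {"$match": {"operationType": {"$in": ["insert", "update", "replace", "delete"]}}}
    config = {
        "connector.class": "com.mongodb.kafka.connect.MongoSourceConnector",
        "connection.uri": uri,
        "database": database,
        "collection": collection,
        "topic.prefix": prefix,
        # Attach the current full document to update events, not just the delta.
        "change.stream.full.document": "updateLookup",
        "pipeline": json.dumps([match_stage]),  # passed to the connector as a JSON string
    }
    return {"name": name, "config": config}


def register(payload, connect_url="http://localhost:8083"):
    """POST the connector to a (hypothetical) Kafka Connect worker and return its reply."""
    request = urllib.request.Request(
        f"{connect_url}/connectors",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


payload = source_connector_payload(
    "mongo-orders-source", "mongodb://localhost:27017/?replicaSet=rs0", "shop", "orders"
)
# register(payload)  # needs a running Kafka Connect worker
expected_topic = f"{payload['config']['topic.prefix']}.shop.orders"
```

By default the connector publishes to a topic named `<prefix>.<database>.<collection>` (here `mongo.shop.orders`), and a sink connector can then read that topic and write to the target system.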

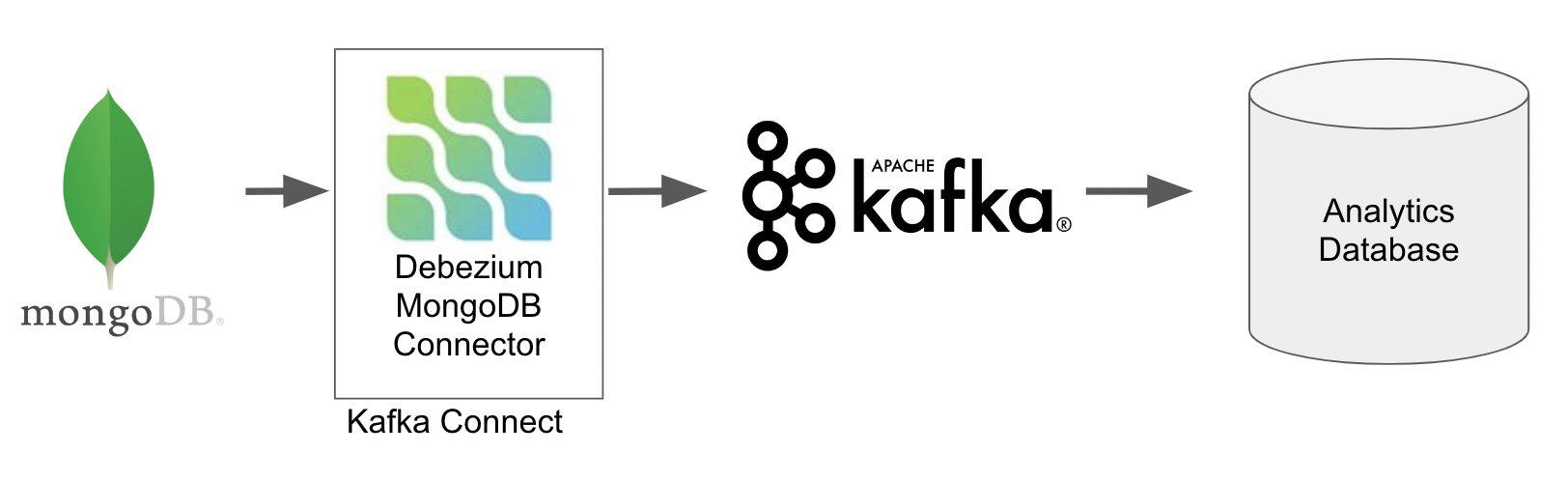

Debezium

It is also possible to capture MongoDB change events using Debezium. If you are already familiar with Debezium, this can be straightforward. Note that Debezium connectors typically run on Kafka Connect, so this route usually involves Kafka as well.
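For comparison, here is a hedged sketch of a Debezium MongoDB connector configuration, plus a helper that decodes its events. It assumes Debezium 2.x property names; older 1.x releases used `mongodb.hosts` and `mongodb.name` instead. The `shop.orders` names are hypothetical. Debezium's MongoDB events carry the document in the `after` field as an extended-JSON string, so that string has to be parsed a second time.

```python
import json


def debezium_mongodb_config(name, connection_string, include, prefix="mongo"):
    """Kafka Connect request body for Debezium's MongoDB connector (2.x-style names)."""
    return {
        "name": name,
        "config": {
            "connector.class": "io.debezium.connector.mongodb.MongoDbConnector",
            "mongodb.connection.string": connection_string,
            "topic.prefix": prefix,  # topics become <prefix>.<db>.<collection>
            "collection.include.list": ",".join(include),  # "db.collection" patterns
        },
    }


def decode_event(value):
    """Return (op, document) from a Debezium MongoDB event value.

    op is "c" (create), "u" (update), "d" (delete) or "r" (snapshot read).
    document is None when the event carries no "after" state, e.g. deletes.
    """
    payload = value.get("payload", value)  # handle values with or without a schema envelope
    after = payload.get("after")
    return payload["op"], (json.loads(after) if after else None)


config = debezium_mongodb_config(
    "mongo-cdc", "mongodb://localhost:27017/?replicaSet=rs0", ["shop.orders"]
)
```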

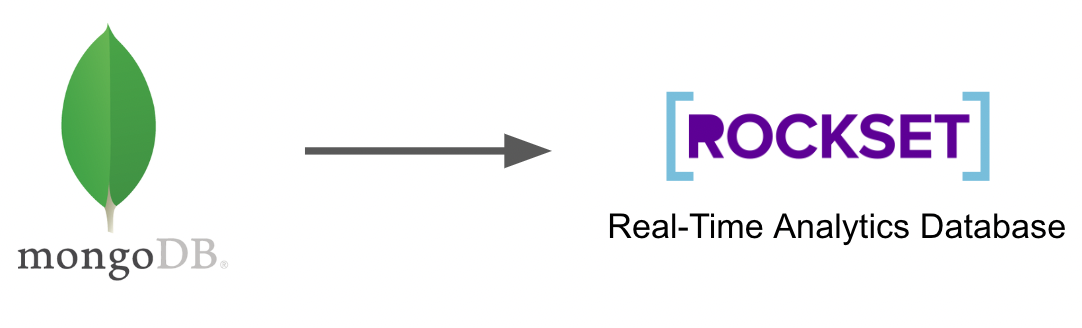

MongoDB Change Streams and Rockset

If your goal is to run real-time analytics or text search, then Rockset's out-of-the-box connector, which leverages MongoDB change streams, is a good choice. Change streams are MongoDB's built-in API for subscribing to the insert, update, replace and delete events on a collection. The Rockset solution requires neither Kafka nor Debezium. Rockset captures change events directly from MongoDB, writes them to its analytics database, and automatically indexes the data for fast analytics and search.
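To show what consuming change streams involves, here is a Python sketch using the `pymongo` driver. `collection.watch()` yields one event per change, and each event's `_id` is a resume token. The `target` dict stands in for whatever system you replicate into; it and the `on_checkpoint` callback are hypothetical, and this is not a description of how Rockset's connector is built.

```python
def apply_change(change, target):
    """Apply one MongoDB change stream event to a dict keyed by document _id."""
    op = change["operationType"]
    if op not in ("insert", "update", "replace", "delete"):
        return  # drop, rename, invalidate, ... are ignored in this sketch
    key = change["documentKey"]["_id"]
    doc = change.get("fullDocument")
    if op == "delete" or doc is None:
        # With full_document="updateLookup", fullDocument is null if the
        # document was deleted before the lookup ran, so treat it as a delete.
        target.pop(key, None)
    else:
        target[key] = doc


def tail(collection, target, resume_token=None, on_checkpoint=lambda token: None):
    """Follow a pymongo Collection's change stream, replicating into `target`.

    Blocks while the stream is open; change streams need a replica set or sharded cluster.
    """
    with collection.watch(full_document="updateLookup", resume_after=resume_token) as stream:
        for change in stream:
            apply_change(change, target)
            # The event _id is a resume token; persisting it lets a restarted
            # consumer pass it back as resume_token and carry on.
            on_checkpoint(change["_id"])
```

Resuming from the last stored token lets a consumer restart without missing events, provided the token is still within the oplog window.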

Your choice between Kafka, Debezium or a fully integrated solution like Rockset will depend on your use case, so let's look at some use cases for CDC on MongoDB.

Use Cases for CDC on MongoDB

Offloading Analytics

One of the main use cases for CDC on MongoDB is to offload analytical queries. MongoDB has native analytical capabilities that let you build complex transformation and aggregation pipelines to be executed on the documents. However, because of their rich functionality, these pipelines are cumbersome to write, and they use a proprietary query language specific to MongoDB. This means analysts who are used to SQL may face a steep learning curve with this new language.

Documents in MongoDB can also have complex structures. Data is stored as JSON documents that can contain nested objects and arrays, all of which add further intricacies when building analytical queries on the data, such as accessing nested properties and exploding arrays to analyze individual elements.
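To see what those intricacies look like, here is a sketch of an aggregation pipeline over hypothetical `orders` documents with a nested `customer` object and an `items` array. It is paired with a pure-Python emulation of what `$unwind` (exploding an array) and `$group` do. The field names are assumptions for illustration, and the SQL in the comment is only an approximate equivalent.

```python
# Hypothetical documents: {"customer": {"country": "UK"}, "items": [{"sku": "A", "qty": 2}, ...]}
# Roughly equivalent SQL (exact UNNEST syntax varies by dialect):
#   SELECT customer.country, SUM(item.qty) AS units
#   FROM orders CROSS JOIN UNNEST(items) AS item GROUP BY customer.country
PIPELINE = [
    {"$unwind": "$items"},  # one output document per array element
    {"$group": {"_id": "$customer.country", "units": {"$sum": "$items.qty"}}},
    {"$sort": {"units": -1}},
]
# With pymongo this would run as: list(db.orders.aggregate(PIPELINE))


def unwind(docs, field):
    """Yield one copy of each document per element of docs[field].

    As with $unwind's default behaviour, documents whose array is missing,
    null or empty produce no output.
    """
    for doc in docs:
        for element in doc.get(field) or []:
            yield {**doc, field: element}


def units_by_country(docs):
    """Pure-Python equivalent of PIPELINE's $unwind and $group stages."""
    totals = {}
    for row in unwind(docs, "items"):
        country = row["customer"]["country"]
        totals[country] = totals.get(country, 0) + row["items"]["qty"]
    return totals


orders = [
    {"customer": {"country": "UK"}, "items": [{"sku": "A", "qty": 2}, {"sku": "B", "qty": 1}]},
    {"customer": {"country": "DE"}, "items": [{"sku": "A", "qty": 5}]},
    {"customer": {"country": "UK"}, "items": []},
]
print(units_by_country(orders))  # {'UK': 3, 'DE': 5}
```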

Finally, running large analytical queries on a production front-end instance can hurt the user experience, especially if the analytics runs frequently. It could significantly slow down reads and writes, which developers usually want to avoid, particularly since MongoDB is often chosen specifically for its fast write and read operations. The alternative would be ever-larger MongoDB machines and clusters, which increases cost.

To overcome these challenges, it's common to send data to an analytical platform via CDC so that queries can be run using familiar languages such as SQL without affecting the performance of the front-end system. Kafka or Debezium can be used to extract the changes and then write them to a suitable analytics platform, whether that is a data lake, a data warehouse or a real-time analytics database.

Rockset takes this a step further. It not only consumes CDC events directly from MongoDB but also natively supports SQL queries (including JOINs) on the documents, and it provides functions for manipulating complex data structures and arrays, all within SQL. This enables real-time analytics because it eliminates the need to transform and manipulate the documents before querying them.

Search Options on MongoDB

Another compelling use case for CDC on MongoDB is to facilitate text search. Again, MongoDB has implemented features such as text indexes that support this natively. Text indexes allow certain properties to be indexed specifically for search, which means documents can be retrieved based on proximity matching and not just exact matches. You can also include multiple properties in the index, such as a product name and a description, so that both are used to determine whether a document matches a particular search term.
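In `pymongo`, creating and querying such an index looks roughly like the sketch below. The `name`/`description` fields, the weights and the index name are hypothetical. The `$text` operator and the `textScore` metadata are MongoDB's own query features. A collection can have at most one text index, so all searchable fields go into the same index.

```python
# Compound text index over two hypothetical fields; "text" is the index type
# (pymongo also exposes it as the constant pymongo.TEXT).
TEXT_INDEX = [("name", "text"), ("description", "text")]


def ensure_text_index(collection):
    """Create the text index on a pymongo Collection, weighting name matches higher."""
    return collection.create_index(
        TEXT_INDEX,
        weights={"name": 10, "description": 1},
        name="product_text",
    )


def search(collection, terms, limit=10):
    """Return the best-matching documents for a $text search, highest score first."""
    cursor = (
        collection.find(
            {"$text": {"$search": terms}},
            {"score": {"$meta": "textScore"}},  # expose the relevance score
        )
        .sort([("score", {"$meta": "textScore"})])
        .limit(limit)
    )
    return list(cursor)
```

Every insert or update to this collection now also updates the text index, which is the write overhead discussed below.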

While this is powerful, there may still be cases where offloading search to a dedicated database is preferable. Again, performance will be the main reason, especially if fast writes are important. Adding a text index to a collection in MongoDB naturally adds overhead to every insertion because of the indexing work.

If your use case calls for a richer set of search capabilities, such as fuzzy matching, then you may want to implement a CDC pipeline to copy the required text data from MongoDB into Elasticsearch. However, Rockset is still an option if you're happy with proximity matching, want to offload search queries, and want to retain all of the real-time analytics benefits discussed previously. Rockset's search capability is also SQL-based, which again could reduce the burden of writing search queries, since both Elasticsearch and MongoDB use bespoke query languages.
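A CDC pipeline into Elasticsearch mostly comes down to translating change events into index and delete operations. The sketch below turns MongoDB change stream events into a request body for Elasticsearch's Bulk API (newline-delimited action and source lines). It copies only the hypothetical `name` and `description` fields into a hypothetical `products` index, and assumes the events were requested with `full_document="updateLookup"` so that updates carry the whole document.

```python
import json

SEARCH_FIELDS = ("name", "description")  # hypothetical text fields to make searchable
UPSERT_OPS = ("insert", "update", "replace")

# Example fuzzy query to run against the index afterwards (tolerates typos like "ketle").
FUZZY_QUERY = {"query": {"match": {"description": {"query": "ketle", "fuzziness": "AUTO"}}}}


def to_bulk_lines(change, index="products"):
    """Translate one MongoDB change stream event into Bulk API NDJSON lines."""
    op = change["operationType"]
    if op not in UPSERT_OPS and op != "delete":
        return []  # drop, rename, invalidate, ... are ignored in this sketch
    key = str(change["documentKey"]["_id"])  # Elasticsearch document ids are strings
    doc = change.get("fullDocument")
    if op == "delete" or doc is None:
        # Deleted upstream (or gone before the update lookup ran): remove it.
        return [json.dumps({"delete": {"_index": index, "_id": key}})]
    source = {field: doc[field] for field in SEARCH_FIELDS if field in doc}
    return [json.dumps({"index": {"_index": index, "_id": key}}), json.dumps(source)]


def bulk_body(changes, index="products"):
    """Join the lines for a batch of events; the Bulk API expects a trailing newline."""
    lines = [line for change in changes for line in to_bulk_lines(change, index)]
    return "".join(line + "\n" for line in lines)
```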

Conclusion

MongoDB is a scalable and powerful NoSQL database that provides a lot of functionality out of the box, including fast reads (get by primary key) and writes, JSON document manipulation, aggregation pipelines and text search. Even so, a CDC solution may still enable greater capabilities and/or reduce costs, depending on your specific use case. Most notably, you might want to implement CDC on MongoDB to reduce the burden on production instances by offloading load-intensive tasks, such as real-time analytics, to another platform.

MongoDB provides Kafka connectors out of the box, and Debezium offers a MongoDB connector, to help with CDC implementations. However, depending on your current architecture, this may mean running new infrastructure on top of maintaining a separate database for storing the data.

Rockset removes the need for Kafka and Debezium with its built-in connector, based on MongoDB change streams, which reduces data ingestion latency and allows real-time analytics. With automatic indexing and the ability to query structured or semi-structured data natively with SQL, you can write powerful queries on data without the overhead of ETL pipelines, meaning queries can be executed on CDC data within one to two seconds of it being produced.

Lewis Gavin has been a data engineer for 5 years and has also been blogging about skills within the Data community for 4 years on a personal blog and Medium. During his computer science degree, he worked for the Airbus Helicopter team in Munich enhancing simulator software for military helicopters. He then went on to work for Capgemini, where he helped the UK government move into the world of Big Data. He is currently using this experience to help transform the data landscape at easyfundraising.org.uk, an online charity cashback site, where he is helping to shape their data warehousing and reporting capability from the ground up.