Apache Spark Join, launched in Spark 3.4, enhances the Spark ecosystem by providing a client-server structure that separates the Spark runtime from the consumer utility. Spark Join permits extra versatile and environment friendly interactions with Spark clusters, notably in eventualities the place direct entry to cluster assets is restricted or impractical.

A key use case for Spark Join on Amazon EMR is to have the ability to join instantly out of your native growth environments to Amazon EMR clusters. By utilizing this decoupled method, you may write and take a look at Spark code in your laptop computer whereas utilizing Amazon EMR clusters for execution. This functionality reduces growth time and simplifies knowledge processing with Spark on Amazon EMR.

On this publish, we exhibit the best way to implement Apache Spark Join on Amazon EMR on Amazon Elastic Compute Cloud (Amazon EC2) to construct decoupled knowledge processing purposes. We present the best way to arrange and configure Spark Join securely, so you may develop and take a look at Spark purposes regionally whereas executing them on distant Amazon EMR clusters.

Answer structure

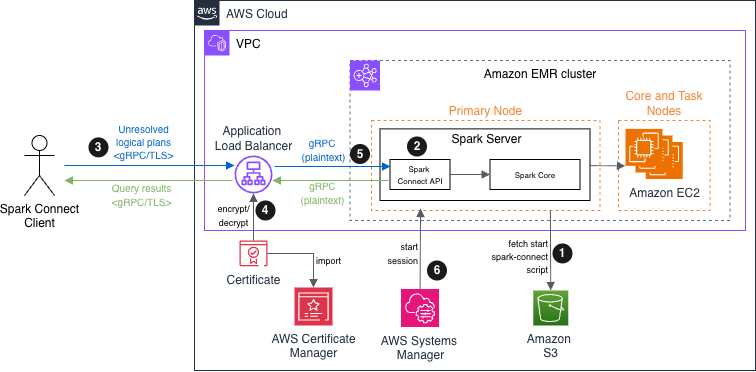

The structure facilities on an Amazon EMR cluster with two node sorts. The major node hosts each the Spark Join API endpoint and Spark Core parts, serving because the gateway for consumer connections. The core node supplies further compute capability for distributed processing. Though this answer demonstrates the structure with two nodes for simplicity, it scales to assist a number of core and activity nodes based mostly on workload necessities.

In Apache Spark Join model 4.x, TLS/SSL community encryption is just not inherently supported. We present you the best way to implement safe communications by deploying an Amazon EMR cluster with Spark Join on Amazon EC2 utilizing an Software Load Balancer (ALB) with TLS termination because the safe interface. This method permits encrypted knowledge transmission between Spark Join purchasers and Amazon Digital Non-public Cloud (Amazon VPC) assets.

The operational circulate is as follows:

- Bootstrap script – Throughout Amazon EMR initialization, the first node fetches and executes the

start-spark-connect.shfile from Amazon Easy Storage Service (Amazon S3). This script begins the Spark Join server. - Server availability – When the bootstrap course of is full, the Spark Server enters a ready state, prepared to simply accept incoming connections. The Spark Join API endpoint turns into out there on the configured port (usually 15002), listening for gRPC connection from distant purchasers.

- Consumer interplay – Spark Join purchasers can set up safe connections to an Software Load Balancer. These purchasers translate DataFrame operations into unresolved logical question plans, encode these plans utilizing protocol buffers, and ship them to the Spark Join API utilizing gRPC.

- Encryption in transit – The Software Load Balancer receives incoming gRPC or HTTPS visitors, performs TLS termination (decrypting the visitors), and forwards the requests to the first node. The certificates is saved in AWS Certificates Supervisor (ACM).

- Request processing – The Spark Join API receives the unresolved logical plans, interprets them into Spark’s built-in logical plan operators, passes them to Spark Core for optimization and execution, and streams outcomes again to the consumer as Apache Arrow-encoded row batches.

- (Non-compulsory) Operational entry – Directors can securely connect with each major and core nodes by means of Session Supervisor, a functionality of AWS Methods Supervisor, enabling troubleshooting and upkeep with out exposing SSH ports or managing key pairs.

The next diagram depicts the structure of this publish’s demonstration for submitting Spark unresolved logical plans to EMR clusters utilizing Spark Join.

Apache Spark Join on Amazon EMR answer structure diagram

Stipulations

To proceed with this publish, guarantee you’ve the next:

Implementation steps

On this recipe, by means of AWS CLI instructions, you’ll:

- Put together the bootstrap script, a bash script beginning Spark Join on Amazon EMR.

- Arrange the permissions for Amazon EMR to provision assets and carry out service-level actions with different AWS providers.

- Create the Amazon EMR cluster with these related roles and permissions and finally connect the ready script as a bootstrap motion.

- Deploy the Software Load Balancer and certificates with ACM safe knowledge in transit over the web.

- Modify the first node’s safety group to permit Spark Join purchasers to attach.

- Join with a take a look at utility connecting the consumer to Spark Join server.

Put together the bootstrap script

To organize the bootstrap script, observe these steps:

- Create an Amazon S3 bucket to host the bootstrap bash script:

- Open your most popular textual content editor, add the next instructions in a brand new file with a reputation such

start-spark-connect.sh. If the script runs on the first node, it begins Spark Join server. If it runs on a activity or core node, it does nothing: - Add the script into the bucket created in step 1:

Arrange the permissions

Earlier than creating the cluster, you will need to create the service position, and occasion profile. A service position is an IAM position that Amazon EMR assumes to provision assets and carry out service-level actions with different AWS providers. An EC2 occasion profile for Amazon EMR assigns a job to each EC2 occasion in a cluster. The occasion profile should specify a job that may entry the assets to your bootstrap motion.

- Create the IAM position:

- Connect the required managed insurance policies to the service position to permit Amazon EMR to handle the underlying providers Amazon EC2 and Amazon S3 in your behalf and optionally grant an occasion to work together with Methods Supervisor:

- Create an Amazon EMR occasion position to grant permissions to EC2 cases to work together with Amazon S3 or different AWS providers:

- To permit the first occasion to learn from Amazon S3, connect the

AmazonS3ReadOnlyAccesscoverage to the Amazon EMR occasion position. For manufacturing environments, this entry coverage ought to be reviewed and changed with a customized coverage following the precept of least privilege, granting solely the particular permissions wanted to your use case: - Attaching AmazonSSMManagedInstanceCore coverage permits the cases to make use of core Methods Supervisor options, equivalent to Session Supervisor, and Amazon CloudWatch:

- To cross the

EMR_EC2_SparkClusterInstanceProfileIAM position info to the EC2 cases after they begin, create the Amazon EMR EC2 occasion profile: - Connect the position

EMR_EC2_SparkClusterNodesRolecreated in step 3 to the newly occasion profile:

Create the Amazon EMR cluster

To create the Amazon EMR cluster, observe these steps:

- Set the surroundings variables, the place your EMR cluster and load-balancer should be deployed:

- Create the EMR cluster with the most recent Amazon EMR launch. Change the placeholder worth along with your precise S3 bucket identify the place the bootstrap motion script is saved:

To change major node’s safety group to permit Methods Supervisor to start out a session.

- Get the first node’s safety group identifier. File the identifier since you’ll want it for subsequent configuration steps wherein

primary-node-security-group-idis talked about: - Discover the EC2 occasion join prefix record ID to your Area. You should use the

EC2_INSTANCE_CONNECTfilter with the describe-managed-prefix-lists command. Utilizing a managed prefix record supplies a dynamic safety configuration to authorize Methods Supervisor EC2 cases to attach the first and core nodes by SSH: - Modify the first node safety group inbound guidelines to permit SSH entry (port 22) to the EMR cluster’s major node from assets which might be a part of the required Occasion Join service contained within the prefix record:

Optionally, you may repeat the previous steps 1–3 for the core (and duties) cluster’s nodes to permit Amazon EC2 Occasion Hook up with entry the EC2 occasion by means of SSH.

Deploy the Software Load Balancer and certificates

To deploy the Software Load Balancer and certificates, observe these steps:

- Create a load balancer’s safety group:

- Add rule to simply accept TCP visitors from a trusted IP on port 443. We advocate that you just use the native growth machine’s IP tackle. You may verify your present public IP tackle right here: https://checkip.amazonaws.com:

- Create a brand new goal group with gRPC protocol, which targets the Spark Join server occasion and the port the server is listening to:

- Create the Software Load Balancer:

- Get the load balancer DNS identify:

- Retrieve the Amazon EMR major node ID:

- (Non-compulsory) To encrypt and decrypt the visitors, the load balancer wants a certificates. You may skip this step if you have already got a trusted certificates in ACM. In any other case, create a self-signed certificates:

- Add to ACM:

- Create the load balancer listener:

- After the listener has been provisioned, register the first node to the goal group:

Modify the first node’s safety group to permit Spark Join purchasers to attach

To connect with Spark Join, amend solely the first safety group. Add an inbound rule to the first’s node safety group to simply accept Spark Join TCP connection on port 15002 out of your chosen trusted IP tackle:

Join with a take a look at utility

This instance demonstrates {that a} consumer operating a more moderen Spark model (4.0.1) can efficiently connect with an older Spark model on the Amazon EMR cluster (3.5.5), showcasing Spark Join’s model compatibility function. This model mixture is for demonstration solely. Working older variations may pose safety dangers in manufacturing environments.

To check the client-to-server connection, we offer the next take a look at Python utility. We advocate that you just create and activate a Python digital surroundings (venv) earlier than putting in the packages. This helps isolate the dependencies for this particular undertaking and prevents conflicts with different Python initiatives. To put in packages, run the next command:

In your built-in growth surroundings (IDE), copy and paste the next code, change the placeholder, and invoke it. The code creates a Spark DataFrame containing two rows and it exhibits its knowledge:

The next exhibits the appliance output:

Clear up

If you now not want the cluster, launch the next assets to cease incurring fees:

- Delete the Software Load Balancer listener, goal group, and the load balancer.

- Delete the ACM certificates.

- Delete the load balancer and Amazon EMR node safety teams.

- Terminate the EMR cluster.

- Empty the Amazon S3 bucket and delete it.

- Take away

AmazonEMR-ServiceRole-SparkConnectDemoandEMR_EC2_SparkClusterNodesRoleroles andEMR_EC2_SparkClusterInstanceProfileoccasion profile.

Issues

Safety concerns with Spark Join:

- Non-public subnet deployment – Hold EMR clusters in non-public subnets with no direct web entry, utilizing NAT gateways for outbound connectivity solely.

- Entry logging and monitoring – Allow VPC Circulate Logs, AWS CloudTrail, and bastion host entry logs for audit trails and safety monitoring.

- Safety group restrictions – Configure safety teams to permit Spark Join port (15002) entry solely from bastion host or particular IP ranges.

Conclusion

On this publish, we confirmed how one can undertake fashionable growth workflows and debug Spark purposes from native IDEs or notebooks, so you may step by means of code execution. With Spark Join’s client-server structure, the Spark cluster can run on a unique model than the consumer purposes, so operations groups can carry out infrastructure upgrades and patches independently.

Because the cluster operators acquire expertise, they’ll customise the bootstrap actions and add steps to course of knowledge. Think about exploring Amazon Managed Workflows for Apache Airflow (MWAA) for orchestrating your knowledge pipeline.