Image processing has had a resurgence with releases like Nano Banana and Qwen Image, stretching the boundary of what was previously possible. We’re no longer stuck with extra fingers or broken text. These models can produce life-like images and illustrations that mimic the work of a designer. Meta’s latest release, SAM3D, is here to make its own contribution to this ecosystem. With an ingenious approach to 3D object and body modelling, it presents itself as a welcome addition to any designer’s arsenal.

This article breaks down what SAM3D is and how to access it, then walks through a hands-on test so that you can gauge its capabilities.

What is SAM3D?

SAM3D, or Segment Anything Model 3D, is a next-generation system for spatial segmentation in full 3D scenes. It works on point clouds, depth maps, and reconstructed volumes, and takes text or other prompts instead of fixed class labels. This is object detection and extraction that operates directly in three-dimensional space with AI-driven understanding. Existing 3D models can segment broad classes like Human or Chair, but they struggle to isolate far more specific concepts like the tall lamp next to the sofa.

SAM3D overcomes these limits by using promptable concept segmentation in 3D space. It can find and extract any object you describe within a scanned scene, whether you prompt with a short phrase, a point, or a reference shape, without depending on a fixed list of categories.
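To make the idea of a point prompt on a point cloud concrete, here is a toy sketch in plain Python. It is not how SAM3D works internally: SAM3D is a learned model, whereas this example uses a simple geometric heuristic (region growing from the point nearest to the prompt). The function names `nearest_index` and `grow_region`, the sample cloud, and the `radius` value are all made up for illustration.

```python
from collections import deque
from math import dist

Point = tuple[float, float, float]


def nearest_index(cloud: list[Point], prompt: Point) -> int:
    """Return the index of the cloud point closest to the prompt location."""
    return min(range(len(cloud)), key=lambda i: dist(cloud[i], prompt))


def grow_region(cloud: list[Point], seed_index: int, radius: float) -> set[int]:
    """Collect every point reachable from the seed through hops of at most `radius`."""
    selected = {seed_index}
    queue = deque([seed_index])
    while queue:
        current = queue.popleft()
        for i, candidate in enumerate(cloud):
            if i not in selected and dist(cloud[current], candidate) <= radius:
                selected.add(i)
                queue.append(i)
    return selected


# Hypothetical scene: three points of a "lamp" near x=0, two of a "sofa" near x=5.
cloud = [
    (0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.2, 0.1, 0.0),
    (5.0, 0.0, 0.0), (5.1, 0.0, 0.0),
]

# A point prompt placed close to the lamp selects only the lamp's points.
seed = nearest_index(cloud, (0.0, 0.0, 0.1))
mask = grow_region(cloud, seed, radius=0.3)
print(sorted(mask))
```

The output is a set of point indices, which plays the same role as a 2D segmentation mask: it marks which parts of the scene belong to the prompted object. A heuristic like this only separates objects that are physically apart, which is exactly the gap that a prompt-driven learned model is meant to close.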

How to Access SAM3D?

Here are some of the ways in which you can get access to the SAM3D model:

- Web-based playground/demo: There’s a web interface, the “Segment Anything Playground”, where you can upload an image or video, provide a text prompt (or exemplar), and experiment with SAM 3D’s segmentation and tracking functionality.

- Model weights + code on GitHub: The official repository by Meta Research (facebookresearch/sam-3d-body) includes code for inference and fine-tuning, plus links to download trained model checkpoints.

- Hugging Face model hub: The model is available on Hugging Face (huggingface/SAM3D) with a description, instructions on how to load the model, and example usage for images/videos.

You can find other ways of accessing the model on the official release page of SAM3D.
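For the Hugging Face route, a download step could look roughly like the sketch below. It uses `snapshot_download` from the `huggingface_hub` package, which must be installed separately. The repository id is copied as written in this article and is treated as a placeholder: confirm the exact id on the official release page before running it. The function name `download_checkpoint` and the local directory are made up for illustration, and the model may require accepting a licence or logging in first.

```python
from pathlib import Path

# Placeholder: repository id as written in this article; verify before use.
REPO_ID = "huggingface/SAM3D"
# Hypothetical local folder for the downloaded files.
LOCAL_DIR = Path("checkpoints") / "sam3d"


def download_checkpoint(repo_id: str = REPO_ID, local_dir: Path = LOCAL_DIR) -> str:
    """Download all files of a Hub repository and return the local folder path."""
    # Imported here so the module still loads when huggingface_hub is not installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=str(local_dir))


if __name__ == "__main__":
    print(f"Files saved to: {download_checkpoint()}")
```

This only fetches the files. Loading the model and running inference depends on the code in the official GitHub repository, so follow its README for those steps.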

Practical Implementation of SAM3D

Let’s get our hands dirty. To see how well SAM3D performs, I’ll be putting it to the test across two tasks:

- Create 3D Scenes

- Create 3D Bodies

The images used for demonstration are the sample images provided by Meta on their playground.

Create 3D Scenes

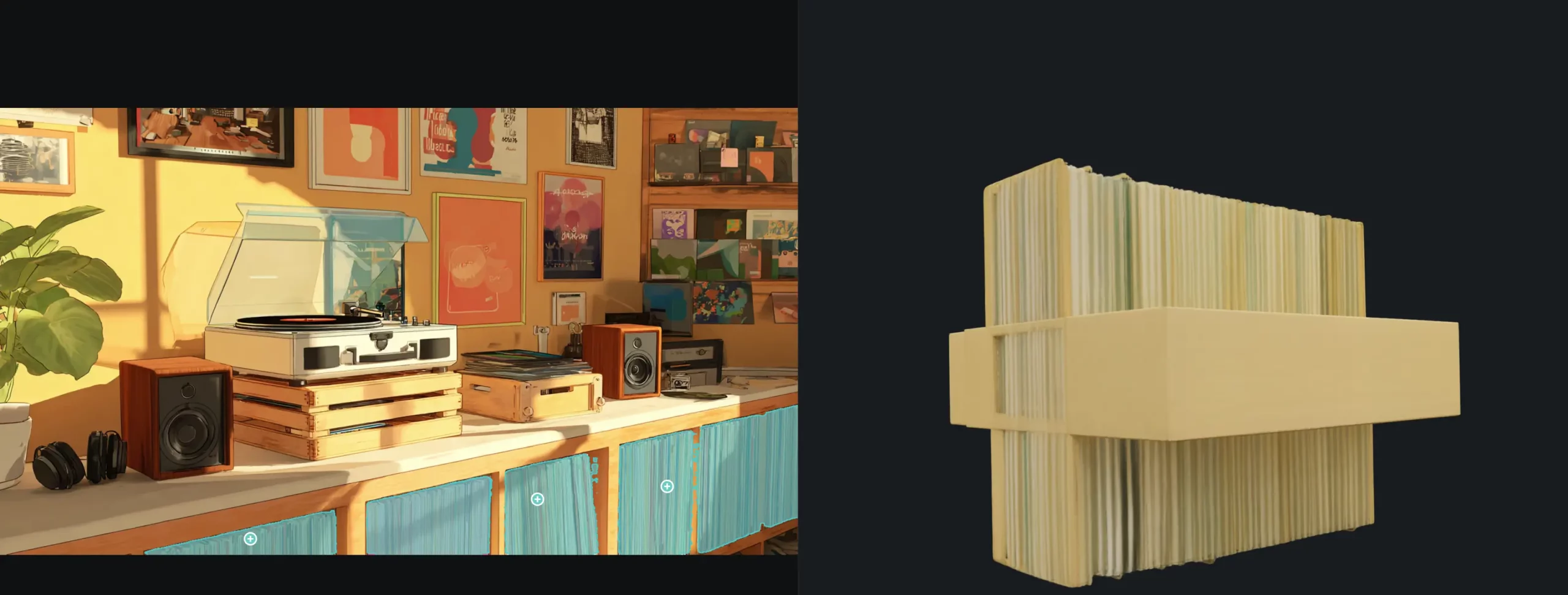

This tool enables 3D modelling of an object from an image. Just click on an object and it creates an outline around it, which you can further refine. For this test we’d be using the following image:

Response:

I got the following response after selecting the coffee machine:

The model recognised that it was a coffee machine and was able to model it as one. If you look closely at the visualization, there are parts of the coffee machine that weren’t even present in the image, but the model generated them on its own, based on its understanding of a coffee machine.

Create 3D Bodies

For 3D body recognition, I’d be testing how well the model maps a human in a given image. For demonstration, I’d be using the following image:

Response:

It correctly identified the one person in the image and created an interactable 3D model of his body. It was close to the actual body shape, which was interesting. For images that are of high quality and don’t contain multiple subjects, this tool would prove useful.

Verdict

The model does its job. But I can’t help but feel limited using it, especially compared to SAM3, which is far more customizable. Also, the 3D modelling isn’t perfect, especially in the case of object detection.

Here are some of the glaring issues that I noticed while using the tool:

- Limited to simple images: The 3D body model performed well when I used the sample images provided by Meta as input, but it struggled and performed poorly when I gave it images that weren’t as high quality or as well suited to the tool.

- No manual selection: The 3D body tool recognises the human bodies by itself and doesn’t allow any manual demarcation. This makes it hard to use the tool when the outline of the body isn’t correct or to our liking.

- Crashes and timeouts: When the input image is complicated and contains more than one subject (like in the first point), the model takes a lot of time to identify the bodies and also consumes a lot of hardware resources, to the point that the webpage would sometimes crash outright due to a lack of resources.

Conclusion

SAM3D raises the bar for working with 3D scenes by making advanced spatial segmentation far easier to use. What it brings to point clouds and volumes is a major step forward, while its ability to segment across multiple views opens fresh possibilities. Paired with SAM3, it becomes a strong choice for anyone who wants AI-powered scene understanding in both 2D and 3D. The model is still evolving, and its capabilities will keep expanding as the research matures.

Frequently Asked Questions

A. It segments objects in full 3D using text or prompt cues instead of fixed class labels.

A. Yes. It can extract detailed concepts like a single lamp or a specific item based on prompts.

A. Through the web playground, GitHub code and weights, or the Hugging Face model hub.
