This post was co-authored by Mike Araujo, Principal Engineer at Medidata Solutions.

The life sciences industry is transitioning from fragmented, standalone tools toward integrated, platform-based solutions. Medidata, a Dassault Systèmes company, is building a next-generation data platform that addresses the complex challenges of modern clinical research. In this post, we show you how Medidata created a unified, scalable, real-time data platform that serves thousands of clinical trials worldwide with AWS services, Apache Iceberg, and a modern lakehouse architecture.

Challenges with legacy architecture

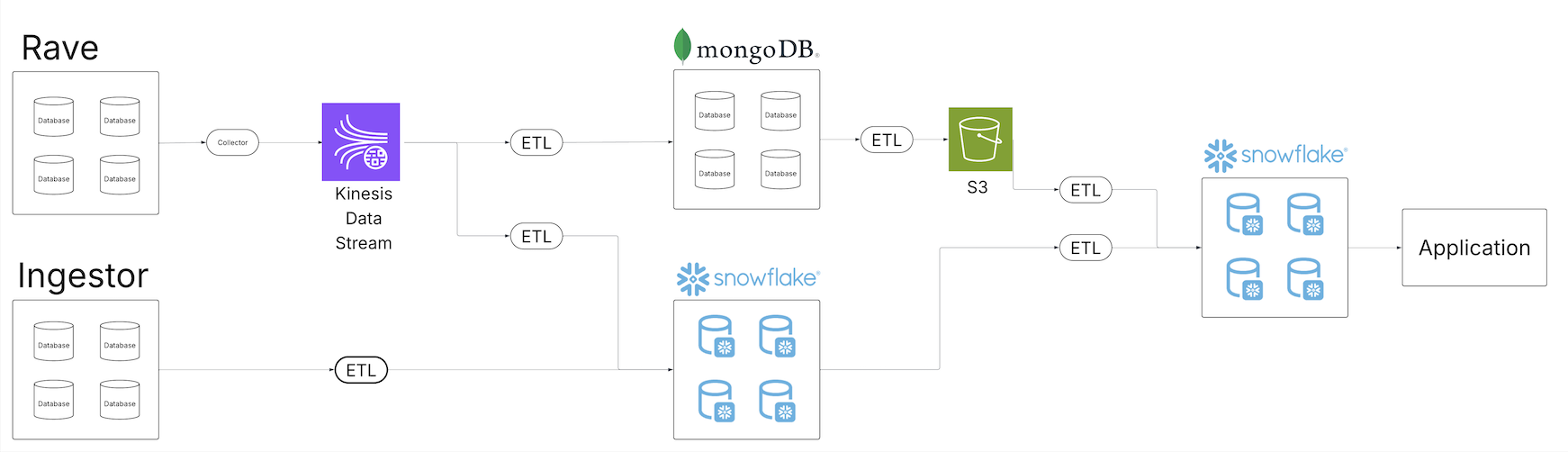

As the Medidata clinical data repository expanded, the team recognized that the legacy data solution fell short in providing quality data products to customers across its growing portfolio of data offerings. Several data tenets began to erode. The following diagram shows Medidata’s legacy extract, transform, and load (ETL) architecture.

Built upon a series of scheduled batch jobs, the legacy system proved ill-equipped to provide a unified view of the data across the entire ecosystem. Batch jobs ran at different intervals, often requiring a scheduling buffer large enough to make sure upstream jobs completed within the expected window. As the data volume expanded, the jobs and their schedules continued to inflate, introducing a latency window between ingestion and processing for dependent consumers. Different consumers operating from various underlying data services further magnified the problem, because pipelines had to be continuously built across a variety of data delivery stacks.
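To make the latency window concrete, the following is a minimal sketch of how delay can accumulate across a chain of scheduled batch jobs. It is a simplified model, not a description of Medidata's actual schedules: the `BatchStage` type, the stage names, and every number are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchStage:
    """One scheduled batch job in a chain (all values are illustrative)."""
    name: str
    interval_min: int  # how often the job is scheduled to run
    runtime_min: int   # how long one run typically takes
    buffer_min: int    # slack added so the upstream job can finish first


def worst_case_latency_min(stages: list[BatchStage]) -> int:
    """Upper bound, in minutes, from a record arriving to leaving the last stage.

    Simplifying assumption: at each stage a record can just miss a run and
    wait a full interval, then wait out the scheduling buffer, then wait for
    the run itself to finish.
    """
    return sum(s.interval_min + s.buffer_min + s.runtime_min for s in stages)


# Hypothetical three-stage chain; these numbers are not Medidata's.
chain = [
    BatchStage("ingest", interval_min=60, runtime_min=20, buffer_min=15),
    BatchStage("transform", interval_min=240, runtime_min=45, buffer_min=30),
    BatchStage("publish", interval_min=1440, runtime_min=60, buffer_min=60),
]

print(worst_case_latency_min(chain))  # 1970
```

Under these assumed values, the worst case is 1,970 minutes, or close to 33 hours, and most of it comes from waiting on schedules and buffers rather than from processing. Each additional stage or wider buffer adds directly to the total.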

The expanding portfolio of pipelines began to overwhelm existing maintenance operations. With more operations, the opportunity for failure expanded and recovery efforts became more complicated. Existing observability systems were inundated with operational data, and identifying the root cause of data quality issues became a multi-day endeavor. Increases in the data volume required scaling considerations across the entire data estate.

Additionally, the proliferation of data pipelines and copies of the data in different technologies and storage systems necessitated expanding access controls with enhanced security features to make sure only the correct users had access to the subset of data to which they were permitted. Making sure access control changes were correctly propagated across all systems added a further layer of complexity for consumers and producers.

Solution overview

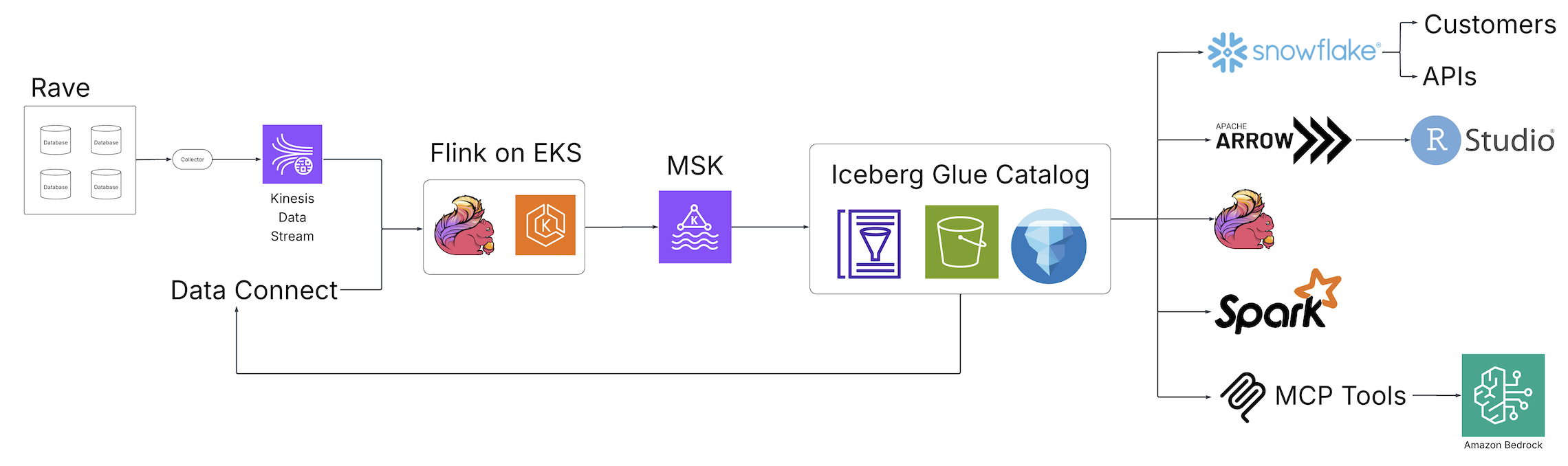

With the advent of Clinical Data Studio (Medidata’s unified data management and analytics solution for clinical trials) and Data Connect (Medidata’s data solution for acquiring, transforming, and exchanging electronic health record (EHR) data across healthcare organizations), Medidata introduced a new world of data discovery, analysis, and integration to the life sciences industry, powered by open source technologies and hosted on AWS. The following diagram illustrates the solution architecture.

Fragmented batch ETL jobs were replaced by real-time streaming pipelines built on Apache Flink, an open source, distributed engine for stateful processing, and running on Amazon Elastic Kubernetes Service (Amazon EKS), a fully managed Kubernetes service. The Flink jobs write to Apache Kafka running in Amazon Managed Streaming for Apache Kafka (Amazon MSK), a streaming data service that manages Kafka infrastructure and operations, before the data lands in Iceberg tables backed by the AWS Glue Data Catalog, a centralized metadata repository for data assets. From this collection of Iceberg tables, a central, single source of data is now accessible to a variety of consumers without additional downstream processing, alleviating the need for custom pipelines to satisfy the requirements of downstream consumers. Through these fundamental architectural changes, the team at Medidata solved the issues presented by the legacy solution.

Data availability and consistency

With the introduction of the Flink jobs and Iceberg tables, the team was able to deliver a consistent view of their data across the Medidata data experience. Pipeline latency was reduced from days to minutes, helping Medidata customers realize a 99% performance gain from the data ingestion to the data analytics layers. Due to Iceberg’s interoperability, Medidata users saw the same view of the data regardless of where they viewed it, minimizing the need for consumer-driven custom pipelines because Iceberg could plug into existing consumers.

Maintenance and durability

Iceberg’s interoperability provided a single copy of the data to satisfy their use cases, so the Medidata team could focus its observation and maintenance efforts on a set of operations five times smaller than previously required. Observability was enhanced by tapping into the various metadata components and metrics exposed by Iceberg and the Data Catalog. Quality management transformed from cross-system traces and queries to a single analysis of unified pipelines, with the added benefit of point-in-time data queries thanks to the Iceberg snapshot feature. Data volume increases are handled with out-of-the-box scaling supported by the entire infrastructure stack and by AWS Glue Iceberg optimization features that include compaction, snapshot retention, and orphan file deletion. These features provide a set-and-forget experience for solving a number of common Iceberg frustrations, such as the small file problem, orphan file retention, and query performance.
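The idea behind point-in-time queries can be sketched with a toy model of a snapshot log. This is not the Iceberg API or its metadata format: `ToyTable`, `Snapshot`, the file names, and the integer timestamps are all hypothetical. The sketch only shows the principle that each commit produces an immutable snapshot, and that a query "as of" a time resolves to the latest snapshot committed at or before that time.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    committed_at: int           # commit time, for example epoch milliseconds
    data_files: frozenset[str]  # files that make up the table at this snapshot


@dataclass
class ToyTable:
    """Toy stand-in for a table's snapshot log; not the Iceberg API."""
    snapshots: list[Snapshot] = field(default_factory=list)

    def commit(self, committed_at, added, removed=frozenset()):
        """Append a new immutable snapshot derived from the current one."""
        if self.snapshots and committed_at <= self.snapshots[-1].committed_at:
            raise ValueError("commits must have increasing timestamps")
        current = self.snapshots[-1].data_files if self.snapshots else frozenset()
        files = (current - frozenset(removed)) | frozenset(added)
        snap = Snapshot(len(self.snapshots) + 1, committed_at, files)
        self.snapshots.append(snap)
        return snap

    def as_of(self, timestamp):
        """Return the latest snapshot committed at or before `timestamp`."""
        times = [s.committed_at for s in self.snapshots]
        idx = bisect_right(times, timestamp)
        if idx == 0:
            raise LookupError("no snapshot exists at or before that time")
        return self.snapshots[idx - 1]


table = ToyTable()
table.commit(100, {"a.parquet"})
table.commit(200, {"b.parquet"})
# A compaction-style commit: two small files are rewritten into one.
table.commit(300, {"ab-compacted.parquet"}, removed={"a.parquet", "b.parquet"})

print(sorted(table.as_of(250).data_files))  # ['a.parquet', 'b.parquet']
print(sorted(table.as_of(300).data_files))  # ['ab-compacted.parquet']
```

The third commit also hints at why compaction and snapshot retention interact: rewriting small files creates a new snapshot, while older snapshots still reference the original files until they are expired. Expiring a snapshot frees its files for cleanup, but also removes the ability to query the table as of that point.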

Security

With Iceberg at the center of its solution architecture, the Medidata team no longer had to spend time building custom access control layers with enhanced security features at each data integration point. Iceberg on AWS centralizes the authorization layer using familiar systems such as AWS Identity and Access Management (IAM), providing a single and durable control for data access. The data also stays entirely within the Medidata virtual private cloud (VPC), further reducing the opportunity for unintended disclosures.
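As an illustration of what centralized authorization through IAM might look like, the following sketch builds a read-only policy document for a single table registered in the Data Catalog. It does not reflect Medidata's actual policies. The helper `read_only_table_policy` and every account ID, database, table, bucket, and prefix name are hypothetical, and the exact set of actions a given query engine needs may be broader than the ones shown.

```python
import json


def read_only_table_policy(region, account_id, database, table, bucket, prefix):
    """Build an IAM policy document granting read access to one table.

    Illustrative only: a real consumer may need more actions (for example,
    listing the bucket), depending on the engine that reads the table.
    """
    glue_arn = f"arn:aws:glue:{region}:{account_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Metadata lookups against the AWS Glue Data Catalog.
                "Sid": "ReadTableMetadata",
                "Effect": "Allow",
                "Action": ["glue:GetDatabase", "glue:GetTable"],
                "Resource": [
                    f"{glue_arn}:catalog",
                    f"{glue_arn}:database/{database}",
                    f"{glue_arn}:table/{database}/{table}",
                ],
            },
            {
                # Reads of the table's data and metadata files in Amazon S3.
                "Sid": "ReadTableFiles",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{bucket}/{prefix}/*"],
            },
        ],
    }


# All identifiers below are placeholders.
policy = read_only_table_policy(
    region="us-east-1",
    account_id="111122223333",
    database="clinical",
    table="study_events",
    bucket="example-lakehouse-bucket",
    prefix="warehouse/clinical/study_events",
)
print(json.dumps(policy, indent=2))
```

Because every consumer reads the same table through the same catalog, a change to a policy like this one takes effect in one place instead of being propagated across several copies of the data.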

Conclusion

In this post, we demonstrated how a legacy universe of consumer-driven custom ETL pipelines can be replaced with a scalable, high-performance streaming lakehouse. By putting Iceberg on AWS at the center of data operations, you can have a single source of data for your consumers.

To learn more about Iceberg on AWS, refer to Optimizing Iceberg tables and Using Apache Iceberg on AWS.