Today, we’re thrilled to announce that Mosaic AI Model Training’s support for fine-tuning GenAI models is now available in Public Preview. At Databricks, we believe that connecting the intelligence in general-purpose LLMs to your enterprise data (data intelligence) is the key to building high-quality GenAI systems. Fine-tuning can specialize models for specific tasks, business contexts, or domain knowledge, and can be combined with RAG for more accurate applications. This forms a critical pillar of our Data Intelligence Platform strategy, which enables you to adapt GenAI to your unique needs by incorporating your enterprise data.

Model Training

Our customers have trained over 200,000 custom AI models in the last year, and we’ve distilled the lessons into Mosaic AI Model Training, a fully managed service. Fine-tune or pretrain a wide range of models, including Llama 3, Mistral, DBRX, and more, with your enterprise data. The resulting model is then registered to Unity Catalog, providing full ownership and control over the model and its weights. You can then deploy your model with Mosaic AI Model Serving in just one click.

We’ve designed Mosaic AI Model Training to be:

- Simple: Select your base model and training dataset, and start training immediately. We handle the GPU and efficient-training complexities so you can focus on the modeling.

- Fast: Powered by a proprietary training stack that’s up to 2x faster than open source, iterate quickly to build your models. From fine-tuning on a few thousand examples to continued pretraining on billions of tokens, our training stack scales with you.

- Integrated: Easily ingest, transform, and preprocess your data on the Databricks platform, and pull it directly into training.

- Tunable: Quickly tune the key hyperparameters, specifically learning rate and training duration, to build the highest quality model.

- Sovereign: You have full ownership of the model and its weights. You control the permissions and access lineage, tracking the training dataset as well as downstream consumers.
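As a rough illustration of the "Tunable" point, a sweep over the two hyperparameters named above (learning rate and training duration) can be laid out as a grid before any runs are launched. This is a minimal sketch and not the Mosaic AI Model Training API: `RunConfig`, `build_sweep`, the model name, and duration strings such as `"1ep"` are hypothetical placeholders chosen for the example.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RunConfig:
    """One candidate fine-tuning run (hypothetical structure, for illustration)."""
    base_model: str
    learning_rate: float
    training_duration: str  # assumed format, e.g. "3ep" for three epochs


def build_sweep(base_model, learning_rates, durations):
    """Return every (learning rate, duration) combination as a RunConfig."""
    return [
        RunConfig(base_model, lr, dur)
        for lr, dur in product(learning_rates, durations)
    ]


sweep = build_sweep(
    base_model="example-base-model",  # placeholder, not a real model ID
    learning_rates=[1e-6, 5e-6, 1e-5],
    durations=["1ep", "3ep"],
)
for cfg in sweep:
    print(cfg)
```

Each `RunConfig` would correspond to one training run, and comparing the runs on a held-out evaluation set is what picks the winner.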

“At Experian, we’re innovating in the area of fine-tuning for open source LLMs. The Mosaic AI Model Training reduced the average training time of our models significantly, which allowed us to accelerate our GenAI development cycle to multiple iterations per day. The end result is a model that behaves in a fashion that we define, outperforms commercial models for our use cases, and costs us significantly less to operate.” James Lin, Head of AI/ML Innovation, Experian

Benefits

Mosaic AI Model Training lets you adapt open source models to perform well on specialized enterprise tasks and achieve higher quality. Benefits include:

- Higher quality: Improve model quality on specific tasks and capabilities, whether that be summarization, chatbot behavior, tool use, multilingual conversation, or more.

- Lower latency at lower costs: Large, general intelligence models can be expensive and slow in production. Many of our customers find that fine-tuning small models (<13B parameters) can dramatically reduce latency and cost while maintaining quality.

- Consistent, structured formatting or style: Generate outputs that follow a specific format or style, like entity extraction or creating JSON schemas in a compound AI system.

- Lightweight, manageable system prompts: Incorporate business logic or user feedback into the model itself. It can be hard to fold end-user feedback into a complex prompt, and small prompt changes can cause regressions on other questions.

- Expand the knowledge base: With Continued Pretraining, extend a model’s knowledge base, whether that be particular topics, internal documents, languages, or recent events past the model’s original knowledge cut-off. Stay tuned for future blogs on the benefits of continued pretraining!

“With Databricks, we could automate tedious manual tasks by using LLMs to process one million+ files daily for extracting transaction and entity data from property records. We exceeded our accuracy goals by fine-tuning Meta Llama3 8b and using Mosaic AI Model Serving. We scaled this operation massively without the need to manage a large and expensive GPU fleet.” Prabhu Narsina, VP Data and AI, First American

RAG and Fine-Tuning

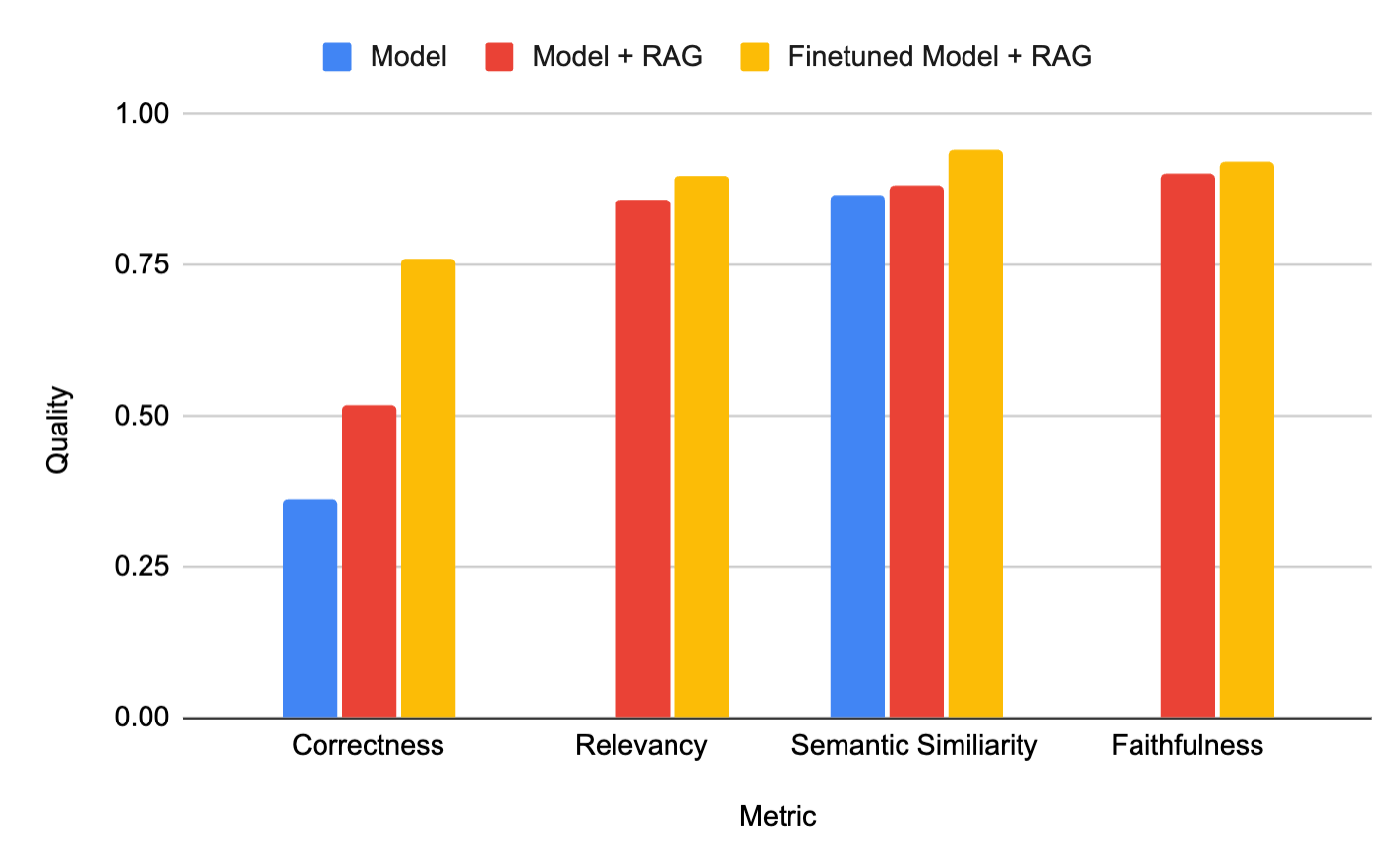

We often hear from customers: should I use RAG or fine-tune models in order to incorporate my enterprise data? With Retrieval Augmented Fine-tuning (RAFT), you can combine both! For example, our customer Celebal Tech built a high-quality domain-specific RAG system by fine-tuning their generation model to improve summarization quality from retrieved context, reducing hallucinations and improving quality (see Figure 1).

Figure 1: Combining a fine-tuned model with RAG (yellow) produced the highest quality system for customer Celebal Tech. Adapted from their blog.
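To make the idea of fine-tuning a generation model on retrieved context more concrete, the sketch below formats one training record in which the prompt contains the retrieved passages plus the question, and the response is the grounded answer. It is only an illustration under stated assumptions: the prompt template, the `prompt`/`response` field names, and the helper names are hypothetical, and this is not a description of Celebal Tech’s pipeline.

```python
import json


def build_raft_example(question, retrieved_chunks, answer):
    """Format one training record: retrieved context plus question -> answer."""
    context = "\n\n".join(
        f"[Document {i}]\n{chunk}"
        for i, chunk in enumerate(retrieved_chunks, start=1)
    )
    prompt = (
        "Answer the question using only the context below.\n\n"
        f"{context}\n\nQuestion: {question}"
    )
    return {"prompt": prompt, "response": answer}


def to_jsonl(records):
    """Serialize records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(r) for r in records)


example = build_raft_example(
    question="What is the notice period?",
    # The second chunk is deliberately irrelevant, so the model also
    # sees context it should ignore.
    retrieved_chunks=[
        "The notice period is 30 days.",
        "Invoices are due in 45 days.",
    ],
    answer="The notice period is 30 days.",
)
print(to_jsonl([example]))
```

Training on records shaped like the ones served at inference time is what lets the tuned model summarize retrieved context more faithfully than a prompt-only setup.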

“We felt we hit a ceiling with RAG: we had to write a lot of prompts and instructions, it was a hassle. We moved on to fine-tuning + RAG and Mosaic AI Model Training made it so easy! It not only adopted the model for Data Linguistics and Domain, but it also reduced hallucinations and increased speed in RAG systems. After combining our Databricks fine-tuned model with our RAG system, we got a better application and accuracy with the usage of less tokens.” Anurag Sharma, AVP Data Science, Celebal Technologies

Evaluation

Evaluation methods are critical to helping you iterate on model quality and base model choices during fine-tuning experiments. From visual inspection checks to LLM-as-a-Judge, we’ve designed Mosaic AI Model Training to connect seamlessly to the other evaluation systems within Databricks:

- Prompts: Add up to 10 prompts to monitor during training. We’ll periodically log the model’s outputs to the MLflow dashboard, so you can manually check the model’s progress during training.

- Playground: Deploy the fine-tuned model and interact with the playground for manual prompt testing and comparisons.

- LLM-as-a-Judge: With MLflow Evaluation, use another LLM to judge your fine-tuned model on an array of existing or custom metrics.

- Notebooks: After deploying the fine-tuned model, build notebooks or custom scripts to run custom evaluation code on the endpoint.
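The notebook-style option can be as small as a scoring loop over a held-out set. The sketch below is one possible shape, assuming a hypothetical `generate` callable that wraps a request to the deployed endpoint. A canned stand-in is used here so the example runs offline, and exact match is just one simple metric among many.

```python
def exact_match_rate(generate, eval_set):
    """Fraction of examples where the model output matches the expected answer.

    `generate` is any callable mapping a prompt string to an output string;
    in practice it would wrap a request to the deployed endpoint.
    """
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    hits = sum(
        generate(item["prompt"]).strip().lower() == item["expected"].strip().lower()
        for item in eval_set
    )
    return hits / len(eval_set)


def fake_endpoint(prompt):
    """Stand-in for a deployed model so the sketch runs without a network call."""
    canned = {"Capital of France?": "Paris", "2 + 2 = ?": "5"}
    return canned.get(prompt, "")


eval_set = [
    {"prompt": "Capital of France?", "expected": "paris"},
    {"prompt": "2 + 2 = ?", "expected": "4"},
]
score = exact_match_rate(fake_endpoint, eval_set)
print(f"exact match: {score:.2f}")
```

Running the same loop against the base model and the fine-tuned model gives a like-for-like comparison on the tasks you care about.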

Get Started

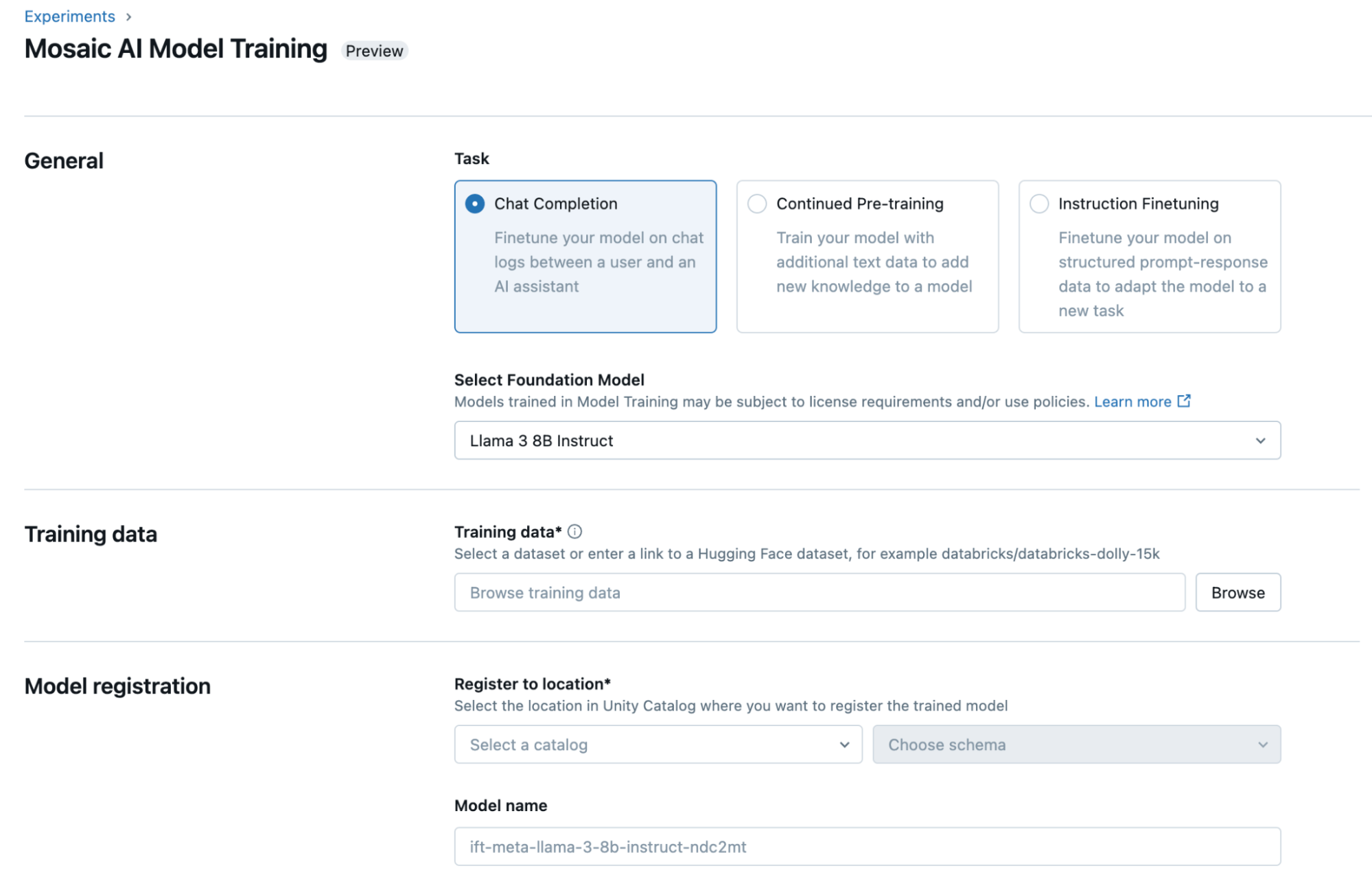

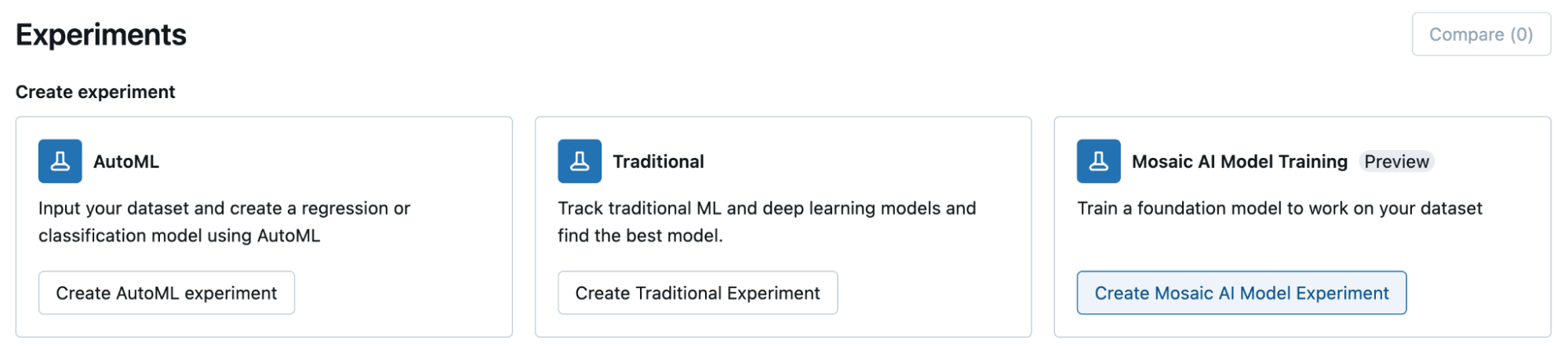

You can fine-tune your model via the Databricks UI or programmatically in Python. To get started, select the location of your training dataset in Unity Catalog or a public Hugging Face dataset, the model you want to customize, and the location to register your model for 1-click deployment.
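The three selections described above can be gathered and sanity-checked before a run is submitted. The sketch below is a hypothetical container, not the Databricks SDK: the class name, field names, and example values are all assumptions, and the only check it encodes is Unity Catalog’s three-level `catalog.schema.object` naming for the registration target.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTuneRequest:
    """The three selections for a fine-tuning run (hypothetical container)."""
    train_data_path: str  # Unity Catalog location or public Hugging Face dataset
    base_model: str       # the model to customize
    register_to: str      # where the tuned model is registered

    def __post_init__(self):
        if not self.train_data_path or not self.base_model:
            raise ValueError("train_data_path and base_model are required")
        # Unity Catalog uses a three-level namespace: catalog.schema.object.
        parts = self.register_to.split(".")
        if len(parts) != 3 or not all(parts):
            raise ValueError(
                "register_to should look like catalog.schema.model_name"
            )


request = FineTuneRequest(
    train_data_path="main.finetune.support_chats",  # placeholder value
    base_model="example-base-model",                # placeholder value
    register_to="main.finetune.support_assistant",  # placeholder value
)
print(request)
```

Validating the inputs up front keeps a malformed name from surfacing only after a run has been queued; the actual submission call is documented in the product documentation linked below.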

- Watch our Data and AI Summit presentation on Mosaic AI Model Training

- Read our documentation (AWS, Azure) and visit our pricing page

- Try our dbdemo to quickly see how to get high-quality models with Mosaic AI Model Training

- Take our tutorial