The hidden cost killing your AI app roadmap

Across leading tech organizations building AI-native apps, the primary constraint has shifted from model capability to the underlying data architecture, and specifically to data pipelines. Agents need real-time, stateful context, and teams need low marginal cost for rapid experimentation. Together, these requirements have exposed the critical flaw in traditional architectures that keep operational and analytical data separate.

Operational workloads typically run on cloud transactional databases (e.g., managed Postgres/MySQL engines), while analytics, ML pipelines, and feature engineering live in the lakehouse. Synchronization between these layers relies on a complex mesh of CDC pipelines, ETL/ELT jobs, and reverse ETL frameworks. This results in systemic inefficiencies:

- Data staleness: AI systems operate on lagging snapshots rather than real-time state

- Architectural fragmentation: Governance, lineage, and access control are duplicated across systems

- Operational overhead: Engineering effort shifts from product development to pipeline orchestration and failure management
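For illustration, here is a minimal sketch of the kind of hand-rolled reverse ETL job described above: a watermark-based batch that upserts changed rows from a gold table into the operational store. The `customer_scores` table, its columns, and the run schedule are hypothetical, and two in-memory SQLite databases stand in for the lakehouse and a managed Postgres engine; this is not a Databricks API. Whatever the app reads between runs is only as fresh as the last batch, which is the staleness problem in miniature.

```python
import sqlite3

# Hypothetical gold-layer table. Two in-memory SQLite databases stand in for
# the lakehouse (source) and the operational Postgres store (target).
lakehouse = sqlite3.connect(":memory:")
operational = sqlite3.connect(":memory:")
for db in (lakehouse, operational):
    db.execute(
        "CREATE TABLE customer_scores ("
        " customer_id INTEGER PRIMARY KEY,"
        " score REAL NOT NULL,"
        " updated_at INTEGER NOT NULL)"
    )


def reverse_etl_sync(last_watermark: int) -> int:
    """Copy rows changed since last_watermark into the operational store.

    Returns the new watermark: the highest updated_at copied so far.
    """
    rows = lakehouse.execute(
        "SELECT customer_id, score, updated_at FROM customer_scores"
        " WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    with operational:  # apply the whole batch in one transaction
        # The same upsert syntax is also valid in Postgres.
        operational.executemany(
            "INSERT INTO customer_scores (customer_id, score, updated_at)"
            " VALUES (?, ?, ?)"
            " ON CONFLICT(customer_id) DO UPDATE SET"
            " score = excluded.score, updated_at = excluded.updated_at",
            rows,
        )
    return max((r[2] for r in rows), default=last_watermark)


def app_read(customer_id: int) -> float:
    """What the app or agent sees: only the operational copy."""
    return operational.execute(
        "SELECT score FROM customer_scores WHERE customer_id = ?", (customer_id,)
    ).fetchone()[0]


# t=1: the analytics pipeline writes a score, then the scheduled job runs.
with lakehouse:
    lakehouse.execute("INSERT INTO customer_scores VALUES (42, 0.10, 1)")
watermark = reverse_etl_sync(0)

# t=2: the score changes in the lakehouse, but the next job has not run yet.
with lakehouse:
    lakehouse.execute(
        "UPDATE customer_scores SET score = 0.90, updated_at = 2 WHERE customer_id = 42"
    )
stale = app_read(42)  # the app still sees the old score

watermark = reverse_etl_sync(watermark)
fresh = app_read(42)  # the new score appears only after the next run
print(stale, fresh, watermark)
```

Every such job also needs its own scheduling, retries, and monitoring, which is where the operational overhead in the list comes from.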

We call this the builder’s tax: a structural inefficiency arising from decoupled operational and analytical stacks. For the people inside tech companies who build the platforms, SaaS products and developer tools everyone else runs on, this tax is especially damaging. Every new AI feature spawns another database, another pipeline, another quarter of delay.

Architectural shift: co-locating apps and data

To break this pattern, leading tech companies are redesigning the architecture itself rather than adopting yet another specialized tool. We see them running apps and AI directly on the same governed foundation as their analytics.

That foundation is Lakebase: a fully managed serverless Postgres engine natively integrated into the Databricks Platform.

- Apps read and write directly against lakehouse-managed data

- Governance is centralized through Unity Catalog across all workloads

- Operational data stays reliable with automated snapshots and built-in failure recovery

This establishes an Interoperable Application Foundation: a single, governed layer where apps, AI, and analytics share the same operational store.

Real-world scenario

Amey Banarse presented “From Transactions to Agents: PostgreSQL in Modern AI Applications” at PostgresConf 2026 [Slides]. Amey gives a live walkthrough of a healthtech claims app built entirely on Lakebase + the AI DevKit, showing how lakehouse intelligence, operational insights and a continuous learning loop run on a single Databricks foundation.

Organizations typically enter this architecture through three primary entry points:

- Elimination of reverse ETL pipelines: Analytical datasets (gold-layer tables) are synchronized directly into Lakebase through native integration. This removes the dependency on external tools and reduces pipeline fragility.

- AI-native apps and internal tools: Run Databricks Apps + Lakebase as a single, serverless stack with model serving, feature store and analytics. There is no additional infrastructure to provision.

- Agentic memory and state: Lakebase with pgvector for semantic search becomes the operational memory layer for agents built with Agent Bricks, alongside the data they reason over.
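To show what “semantic search as agent memory” means mechanically, here is a minimal in-process sketch. It ranks stored memories by cosine distance, the quantity pgvector’s `<=>` operator returns, and the equivalent SQL appears as a comment. The `agent_memory` table, the `AgentMemory` class, and the toy 3-dimensional embeddings are all hypothetical; a real agent would get embeddings from a model and let Postgres do the ranking. This does not show how Agent Bricks itself wires up memory.

```python
import math
from dataclasses import dataclass, field

# With pgvector, the equivalent retrieval would look roughly like:
#   SELECT content FROM agent_memory          -- hypothetical table
#   ORDER BY embedding <=> %(query)s          -- <=> is cosine distance
#   LIMIT %(k)s;


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity (assumes non-zero vectors of equal length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


@dataclass
class AgentMemory:
    """Hypothetical in-process stand-in for an agent_memory table."""

    rows: list[tuple[str, list[float]]] = field(default_factory=list)

    def remember(self, content: str, embedding: list[float]) -> None:
        self.rows.append((content, embedding))

    def recall(self, query: list[float], k: int = 2) -> list[str]:
        # Smallest distance first, like ORDER BY embedding <=> query.
        ranked = sorted(self.rows, key=lambda row: cosine_distance(row[1], query))
        return [content for content, _ in ranked[:k]]


# Toy embeddings chosen by hand for illustration only.
memory = AgentMemory()
memory.remember("Invoice 17 was disputed over a duplicate charge", [0.9, 0.1, 0.0])
memory.remember("User prefers answers as bullet points", [0.0, 0.2, 0.9])
memory.remember("Invoice 17 was reissued without the duplicate charge", [0.8, 0.3, 0.1])

top = memory.recall([1.0, 0.2, 0.0], k=2)
print(top)
```

Keeping this memory in the same Postgres engine as the rest of the app’s state is the point of the bullet: retrieval and transactional writes happen against one governed store rather than a separate vector database.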

What tech companies are actually seeing

The results below come from tech companies that have moved to a Lakebase architecture:

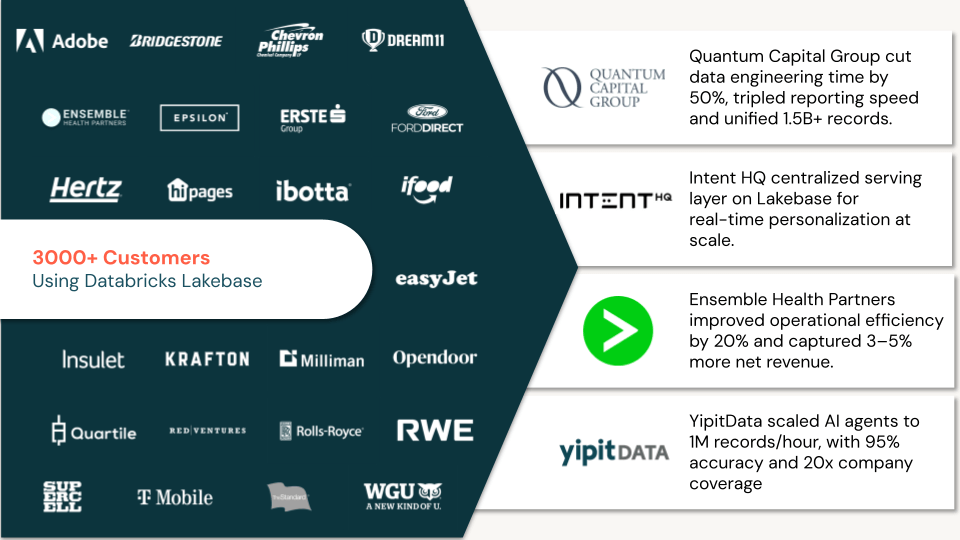

YipitData scaled their AI agent pipeline to process 1M records per hour, achieving 92–95% tagging accuracy and 20x company coverage. By using Lakebase as the relational system of record within Unity Catalog, their agents operate on durable, governed state: no fragile external stores, no sync lag.

Quantum Capital Group was managing 1.5B+ records across six fragmented data sources. After consolidating on Lakebase, they eliminated 100+ redundant tables, cut data engineering time by 50%, and tripled reporting speed. Teams now work from a single trusted dataset instead of fighting version sprawl.

Ensemble Health Partners unified 15+ fragmented SQL Server systems. With Lakebase as the transactional layer, they deployed AI-driven revenue-cycle workflows that improved operational efficiency by 20% and helped customers capture 3–5% more net revenue year over year.

Replit, whose platform serves millions of developers building and deploying software, uses Lakebase + the Databricks AI DevKit to help customers launch production code-generation AI in 3 weeks with 10x developer velocity, eliminating the gap between operational and analytical systems from day one.

IntentHQ, a customer intelligence platform, centralized its serving layer on Lakebase to power real-time personalization at scale. This gives its AI models a low-latency operational store that stays in sync with its lakehouse data without custom pipelines.

The architecture pattern behind the results

Despite differing use cases, from AI agents and personalization engines to healthcare workflows and developer platforms, these organizations are not succeeding through isolated optimizations. They are converging on a fundamentally different architectural model.

At its core, this architectural model eliminates the traditional separation between transactional systems, analytical platforms, and AI pipelines, replacing it with a shared, interoperable data foundation.

This pattern consistently comprises three tightly integrated layers:

Lakehouse intelligence layer

A governed, scalable foundation where batch and streaming data, feature engineering, and AI/ML workloads operate. This layer provides the system of insight, enabling large-scale processing, model training, and analytics on unified data.

Operational data layer

A low-latency transactional interface (Lakebase) that serves as the system of execution for applications and agents. This layer enables real-time reads and writes, state management, and application logic directly on governed data, without replication or synchronization overhead.

Continuous learning loop

A closed feedback system where application interactions, agent outputs, and user signals are captured and reintegrated into model pipelines. This establishes a system of continuous improvement, allowing AI capabilities to evolve based on real-world usage.
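The capture and reintegration steps of such a loop can be sketched in a few lines. In this minimal example, the app logs each agent output to an operational table, attaches user feedback later, and exports only rated interactions as JSONL rows that a training or evaluation pipeline could consume. The `agent_interactions` table, the +1/-1 feedback scale, and the JSONL format are hypothetical choices, and an in-memory SQLite database stands in for Lakebase; this is not a Databricks API.

```python
import json
import sqlite3

# Hypothetical schema; in-memory SQLite stands in for the operational store.
db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE agent_interactions ("
    " id INTEGER PRIMARY KEY,"
    " prompt TEXT NOT NULL,"
    " response TEXT NOT NULL,"
    " feedback INTEGER)"  # +1 thumbs up, -1 thumbs down, NULL = no signal yet
)


def log_interaction(prompt: str, response: str) -> int:
    """Capture: the app records each agent output as it happens."""
    with db:
        cur = db.execute(
            "INSERT INTO agent_interactions (prompt, response) VALUES (?, ?)",
            (prompt, response),
        )
    return cur.lastrowid


def record_feedback(interaction_id: int, score: int) -> None:
    """Capture: attach a user signal to an earlier interaction."""
    with db:
        db.execute(
            "UPDATE agent_interactions SET feedback = ? WHERE id = ?",
            (score, interaction_id),
        )


def export_training_examples() -> list[str]:
    """Reintegrate: turn rated interactions into JSONL rows for a model pipeline."""
    rows = db.execute(
        "SELECT prompt, response, feedback FROM agent_interactions"
        " WHERE feedback IS NOT NULL ORDER BY id"
    ).fetchall()
    return [
        json.dumps({"prompt": p, "response": r, "label": "good" if f > 0 else "bad"})
        for p, r, f in rows
    ]


a = log_interaction("Summarize ticket 881", "Customer reports a login loop on iOS.")
b = log_interaction("Draft a reply to ticket 881", "Have you tried turning it off?")
log_interaction("Tag ticket 882", "billing")  # no feedback yet, so not exported
record_feedback(a, +1)
record_feedback(b, -1)

examples = export_training_examples()
print("\n".join(examples))
```

When the operational and analytical layers share a foundation, the export step becomes a governed read of the same table rather than another pipeline that copies it elsewhere.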

When these three layers share a foundation, AI systems move from isolated workloads to continuously improving production systems.

Eliminating the builder’s tax

The builder’s tax isn’t inevitable. It’s a consequence of building AI on top of infrastructure designed for a different era, when databases were monolithic, apps were stateless, and intelligence was a separate project.

Lakebase changes the math. Apps run where the data lives. Agents have the context they need. And the engineering time your team spent on pipelines goes back to shipping.

Watch Amey Banarse’s PostgresConf 2026 session, “From Transactions to Agents: PostgreSQL in Modern AI Applications” [Slides], to see a full AI-native app built on Lakebase in action.

Databricks Lakebase is serverless Postgres built for agents and apps. Learn more at databricks.com/product/lakebase.