(Gorodenkoff/Shutterstock)

In the first two parts of this series, we looked at how AI’s growth is now constrained by energy: not chips, not models, but the ability to feed electricity to massive compute clusters. We explored how companies are turning to fusion startups, nuclear deals, and even building their own energy supply just to stay ahead. AI can’t keep scaling unless the energy supply does too.

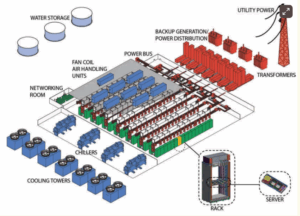

Even if you secure the power, that’s only the beginning. It still has to land somewhere, and that somewhere is the data center. Most older data centers weren’t built for this. Their cooling systems can’t keep up, and the layout, the grid connection, and the way heat moves through the building all have to adapt to the demands of the AI era. In Part 3, we look at what’s changing (or what should change) inside these sites: immersion tanks, smarter coordination with the grid, and the quiet redesign now needed to keep AI moving forward.

Why Traditional Data Centers Are Starting to Break

The surge in AI workloads is physically overwhelming the buildings meant to support it. Traditional data centers were designed for general-purpose computing, with power densities of around 7 to 8 kilowatts per rack, perhaps 15 at the high end. AI clusters running on next-generation chips such as NVIDIA’s GB200 are blowing past those numbers. Racks now routinely draw 30 kilowatts or more, and some configurations are climbing toward 100 kilowatts.
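To put those densities in perspective, the short Python sketch below compares how many racks a fixed power budget can feed at legacy and AI densities. The 1 MW hall budget is a hypothetical figure chosen for round numbers; the per-rack values come from the ranges above. What it illustrates is concentration: a 100 kW rack draws as much power as 12.5 racks at 8 kW, so the same power, and the same heat, lands in a far smaller footprint.

```python
# Per-rack densities in kW, taken from the ranges quoted in the text.
LEGACY_RACK_KW = 8.0
AI_RACK_KW = {"typical AI rack": 30.0, "high-end AI rack": 100.0}


def legacy_rack_equivalents(ai_rack_kw: float, legacy_rack_kw: float = LEGACY_RACK_KW) -> float:
    """How many legacy racks' worth of power a single AI rack draws."""
    return ai_rack_kw / legacy_rack_kw


def racks_supported(hall_budget_kw: float, rack_kw: float) -> int:
    """Whole racks a hall can feed from a fixed IT power budget."""
    return int(hall_budget_kw // rack_kw)


if __name__ == "__main__":
    hall_kw = 1_000.0  # hypothetical 1 MW IT power budget for one data hall
    print(f"legacy ({LEGACY_RACK_KW:.0f} kW): {racks_supported(hall_kw, LEGACY_RACK_KW)} racks")
    for name, kw in AI_RACK_KW.items():
        print(f"{name} ({kw:.0f} kW): {racks_supported(hall_kw, kw)} racks, "
              f"{legacy_rack_equivalents(kw):.1f}x the draw of a legacy rack")
```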

According to McKinsey, the rapid increase in power density has created a mismatch between infrastructure capabilities and AI compute requirements. Grid connections that were once more than adequate are now strained, and cooling systems, especially traditional air-based setups, can’t remove heat fast enough to keep up with the thermal load.

In many cases, the physical layout of the building itself becomes a problem, whether it’s floor weight limits or the spacing between racks. Even basic power conversion and distribution systems inside legacy data centers often aren’t rated for the voltages and current levels that AI racks require.

As Alex Stoewer, CEO of Greenlight Data Centers, told BigDATAwire, “Given this level of density is new, very few existing data centers had the power distribution or liquid cooling in place when these chips hit the market. New development or material retrofits were required for anyone who wanted to run these new chips.”

That’s where the infrastructure gap really opened up. Many legacy facilities simply couldn’t make the leap in time. Even when grid power is available, delays in interconnection approvals and permitting can slow retrofits to a crawl. Goldman Sachs now describes this transition as a shift toward “hyper-dense computational environments,” where even airflow and rack layout must be redesigned from the ground up.

The Cooling Problem Is Bigger Than You Think

If you walk into a data center built just a few years ago and try to run today’s AI workloads at full intensity, cooling is often the first thing to give. It doesn’t fail all at once. It breaks down in small ways that compound: airflow gets tight, power usage spikes, and reliability slips until the whole system is under strain.

Traditional air systems were never built for this kind of heat. Once rack power climbs above 30 or 40 kilowatts, the energy needed just to move and chill that air becomes its own problem. McKinsey puts the ceiling for air-cooled systems at around 50 kilowatts per rack, yet today’s AI clusters are already going far beyond that, with some hitting 80 or even 100 kilowatts. That level of heat upsets the balance of the entire facility.
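A rough sensible-heat calculation shows why air runs out of headroom. The airflow needed to carry away a rack’s heat is V_dot = P / (rho * c_p * dT), so it grows linearly with rack power. The sketch below assumes typical room-temperature air properties and a 12 K inlet-to-outlet temperature rise; both are illustrative assumptions rather than design values, and the 50 kW threshold is the McKinsey figure cited above.

```python
AIR_DENSITY = 1.2               # kg/m^3, roughly room-temperature air (assumption)
AIR_CP = 1005.0                 # J/(kg*K), specific heat of air (assumption)
M3S_TO_CFM = 2118.88            # cubic feet per minute in one m^3/s
AIR_COOLING_CEILING_KW = 50.0   # approximate air-cooling ceiling attributed to McKinsey


def required_airflow_m3s(rack_kw: float, delta_t_k: float = 12.0) -> float:
    """Volumetric airflow needed to carry away rack_kw of heat.

    Sensible-heat balance: P = rho * V_dot * cp * dT, so V_dot = P / (rho * cp * dT).
    delta_t_k is the assumed inlet-to-outlet air temperature rise.
    """
    return (rack_kw * 1_000.0) / (AIR_DENSITY * AIR_CP * delta_t_k)


def within_air_ceiling(rack_kw: float) -> bool:
    """True if the rack density is at or below the assumed air-cooling ceiling."""
    return rack_kw <= AIR_COOLING_CEILING_KW


if __name__ == "__main__":
    for kw in (8, 30, 50, 100):
        flow = required_airflow_m3s(kw)
        status = "air may suffice" if within_air_ceiling(kw) else "beyond the air ceiling"
        print(f"{kw:>3} kW rack: {flow:5.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM), {status}")
```

Under these assumptions a 100 kW rack needs roughly 14,600 CFM, 12.5 times what an 8 kW rack needs, which is why fan power and chilled-air capacity become problems of their own.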

This is why more operators are turning to immersion and liquid cooling. These systems pull heat directly from the source, using fluid instead of air. Some setups submerge servers entirely in nonconductive liquid; others run coolant directly to the chips. Both offer better thermal performance and far greater efficiency at scale. In some cases, operators are even reusing that heat to warm nearby buildings or feed industrial processes.
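The same heat balance, applied to a liquid, shows why moving to fluid helps so much. Water stores roughly 3,500 times more heat per unit volume than air, so the volume of coolant that has to move shrinks dramatically. This sketch assumes approximate room-temperature properties for water and air, a hypothetical 100 kW rack, and a 10 K coolant temperature rise. Dielectric immersion fluids have a lower volumetric heat capacity than water, so their numbers would land in between.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density: float  # kg/m^3
    cp: float       # J/(kg*K)


# Approximate textbook properties near room temperature (assumptions).
AIR = Coolant("air", 1.2, 1005.0)
WATER = Coolant("water", 997.0, 4180.0)


def volumetric_flow_m3s(heat_kw: float, coolant: Coolant, delta_t_k: float) -> float:
    """Flow needed for the coolant to absorb heat_kw while warming by delta_t_k."""
    return heat_kw * 1_000.0 / (coolant.density * coolant.cp * delta_t_k)


if __name__ == "__main__":
    rack_kw, dt = 100.0, 10.0  # hypothetical 100 kW rack, 10 K coolant temperature rise
    air = volumetric_flow_m3s(rack_kw, AIR, dt)
    water = volumetric_flow_m3s(rack_kw, WATER, dt)
    print(f"air:   {air:.2f} m^3/s")
    print(f"water: {water * 1000 * 60:.0f} L/min")
    print(f"air needs ~{air / water:,.0f}x the volume flow of water")
```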

Still, this shift isn’t as simple as one might think. Liquid cooling demands new hardware, plumbing, and ongoing support, so it requires space and careful planning. But as densities rise, staying with air isn’t just inefficient; it sets a hard limit on how far data centers can scale. As operators realize there’s no way to air-tune their way out of 100-kilowatt racks, other solutions have had to emerge, and they have.

The Case for Immersion Cooling

For a long time, immersion cooling felt like overengineering: interesting in theory, but not something most operators seriously considered. That has changed. The closer facilities get to the thermal ceiling of air and basic liquid systems, the more immersion starts to look like the only real option left.

Instead of trying to force more air through hotter racks, immersion takes a different route. Servers go directly into nonconductive liquid, which pulls the heat off passively. Some systems even use fluids that boil and recondense inside a closed tank, carrying heat out with almost no moving parts. It’s quieter, denser, and often more stable under full load.
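In a two-phase tank, the heat goes into boiling the fluid rather than warming it, so the vapor generation rate is simply the heat load divided by the fluid’s latent heat of vaporization, and the condenser has to return vapor at the same rate. The sketch below uses a placeholder latent heat of 100 kJ/kg, a hypothetical round number rather than the property of any particular fluid, and treats the tank as a simple steady-state balance.

```python
# Placeholder latent heat of vaporization (hypothetical); real dielectric fluids differ.
HYPOTHETICAL_LATENT_HEAT_KJ_PER_KG = 100.0


def boil_off_rate_kg_s(heat_kw: float,
                       latent_heat_kj_per_kg: float = HYPOTHETICAL_LATENT_HEAT_KJ_PER_KG) -> float:
    """Fluid vaporized per second. kW is kJ/s, so (kJ/s) / (kJ/kg) = kg/s."""
    return heat_kw / latent_heat_kj_per_kg


def uncondensed_vapor_kg(heat_kw: float, condenser_kw: float, seconds: float,
                         latent_heat_kj_per_kg: float = HYPOTHETICAL_LATENT_HEAT_KJ_PER_KG) -> float:
    """Vapor left uncondensed after `seconds` of steady operation.

    The condenser can return at most condenser_kw worth of vapor; any heat load
    beyond that keeps boiling off fluid that is not recondensed.
    """
    shortfall_kw = max(0.0, heat_kw - condenser_kw)
    return shortfall_kw * seconds / latent_heat_kj_per_kg


if __name__ == "__main__":
    tank_kw = 100.0  # hypothetical IT load in one tank
    print(f"boil-off: {boil_off_rate_kg_s(tank_kw):.2f} kg/s")
    for condenser_kw in (120.0, 80.0):
        left = uncondensed_vapor_kg(tank_kw, condenser_kw, seconds=60)
        print(f"condenser {condenser_kw:.0f} kW -> {left:.1f} kg uncondensed after 60 s")
```

If the condenser falls behind the heat load, uncondensed vapor accumulates, which in a sealed tank would show up as rising pressure; matching condenser capacity to the IT load is the core sizing question this sketch exposes.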

While the benefits are clear, deploying immersion still takes planning. The tanks require physical space, and the fluids come with upfront costs. Still, compared with redesigning an entire air-cooled facility or throttling workloads to stay within limits, immersion is starting to look like the more straightforward path. For many operators, it’s no longer an experiment. It should be the next step.

From Compute Hubs to Energy Nodes

If immersion cooling solves the heat, what about the timing? When can you actually pull that much power from the grid? That’s where the next bottleneck is forming, and it’s forcing a shift in how hyperscalers operate.

Google has already signed formal demand-response agreements with regional utilities such as the Tennessee Valley Authority (TVA). These agreements go beyond reducing total consumption; they shape when and where that power gets used. AI workloads, especially training jobs, have built-in flexibility.

With the right software stack, these jobs can migrate across facilities or delay execution by hours. That delay becomes a tool: a way to avoid grid congestion, absorb excess renewables, or maintain uptime when the grid is tight.
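Deferring a flexible job can be framed as a small search problem: given an hourly forecast of some grid signal (price, carbon intensity, or a congestion score) and a deadline, pick the start hour whose run window has the lowest total. The sketch below is a hypothetical illustration of that idea, not the actual mechanism in Google’s or TVA’s agreements, and the forecast values are made up.

```python
from typing import Sequence


def best_start_hour(signal: Sequence[float], duration_h: int, deadline_h: int) -> int:
    """Return the start hour that minimizes the summed signal over the job's run.

    signal[i] is a per-hour cost proxy for hour i. The job runs for duration_h
    consecutive hours and must finish by deadline_h (hours are 0-indexed, so the
    last allowed start is deadline_h - duration_h). Ties go to the earliest hour.
    """
    if duration_h <= 0 or duration_h > deadline_h or deadline_h > len(signal):
        raise ValueError("job does not fit inside the forecast window")
    starts = range(deadline_h - duration_h + 1)
    # min() returns the first start with the lowest windowed sum.
    return min(starts, key=lambda s: sum(signal[s:s + duration_h]))


if __name__ == "__main__":
    # Hypothetical 12-hour congestion forecast (higher means a more stressed grid).
    forecast = [9, 9, 8, 7, 3, 2, 2, 3, 6, 8, 9, 9]
    start = best_start_hour(forecast, duration_h=3, deadline_h=12)
    print(f"start the 3-hour job at hour {start}")
```

With this forecast the job moves from hour 0 to hour 4, into the low-stress trough. A production scheduler would also weigh checkpointing cost, job priority, and contractual event windows, which this sketch ignores.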

It’s not just Google. Microsoft has been testing energy-matching models across its data centers, including scheduling jobs to align with clean energy availability. The Rocky Mountain Institute projects that aligning data centers with grid dynamics could unlock gigawatts of otherwise stranded capacity.
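Spreading flexible jobs across facilities according to clean-energy availability can be sketched as a greedy placement: take the largest jobs first and put each one where the most forecast clean headroom remains, deferring anything that fits nowhere. Site names, headroom figures, and job sizes below are all hypothetical, and this is not a description of Microsoft’s system. Greedy placement is only a heuristic; assigning jobs to sites is a bin-packing problem, so it won’t always find the best assignment.

```python
from dataclasses import dataclass, field


@dataclass
class Site:
    name: str
    clean_headroom_mw: float  # hypothetical forecast clean supply not yet committed
    jobs: list[str] = field(default_factory=list)


def place_jobs(jobs: dict[str, float], sites: list[Site]) -> list[str]:
    """Assign jobs (name -> MW) largest-first to the site with the most clean headroom.

    Mutates each Site's headroom and job list; returns the jobs to defer.
    """
    deferred: list[str] = []
    for job, mw in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        site = max(sites, key=lambda s: s.clean_headroom_mw)
        if site.clean_headroom_mw >= mw:
            site.clean_headroom_mw -= mw
            site.jobs.append(job)
        else:
            deferred.append(job)  # no site has enough clean headroom right now
    return deferred


if __name__ == "__main__":
    sites = [Site("site-a", 40.0), Site("site-b", 25.0)]
    jobs = {"train-a": 30.0, "train-b": 20.0, "train-c": 15.0, "finetune": 10.0, "eval": 5.0}
    deferred = place_jobs(jobs, sites)
    for s in sites:
        print(s.name, s.jobs, f"{s.clean_headroom_mw:.0f} MW left")
    print("deferred:", deferred)
```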

Make no mistake: these aren’t sustainability gestures. They’re survival strategies. Grid queues are growing, permitting timelines are slipping, and interconnect caps are becoming real limits on AI infrastructure. The facilities that thrive won’t just be well-cooled; they’ll be grid-smart, contract-flexible, and built to respond. As data centers evolve from compute hubs into energy nodes, it’s not just about how much power you need. It’s about how well you can dance with the system delivering it.

Designing for AI Means Rethinking Everything

You can’t design around AI the way data centers used to handle general-purpose compute. The loads are heavier, the heat is higher, and the pace is relentless. You start with racks that pull more power than entire server rooms did a decade ago, and everything around them has to adapt.

New builds now work from the inside out. Engineers start with workload profiles, then shape airflow, cooling paths, cable runs, and even structural supports around what those clusters will actually demand. In some cases, different types of jobs get their own electrical zones, which means separate cooling loops, shorter cabling, and dedicated switchgear: multiple systems, all running under the same roof.

Power delivery is changing, too. In a conversation with BigDATAwire, David Beach, Market Segment Manager at Anderson Power, explained, “Equipment is taking advantage of much higher voltages and simultaneously increasing current to achieve the rack densities that are necessary. This is also necessitating the development of components and infrastructure to properly carry that power.”
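The arithmetic behind that shift is straightforward: for a fixed power draw, current falls in proportion to voltage, while resistive losses and conductor heating grow with the square of current. The sketch below compares a hypothetical 100 kW rack fed at a few common voltages, assuming a balanced three-phase load with unity power factor for the AC cases. Real designs vary, and these voltages are examples, not anything Anderson Power specified.

```python
import math


def three_phase_current_a(power_kw: float, line_voltage_v: float, power_factor: float = 1.0) -> float:
    """Line current of a balanced three-phase load: I = P / (sqrt(3) * V_LL * PF)."""
    return power_kw * 1_000.0 / (math.sqrt(3) * line_voltage_v * power_factor)


def dc_current_a(power_kw: float, bus_voltage_v: float) -> float:
    """Current drawn from a DC bus: I = P / V."""
    return power_kw * 1_000.0 / bus_voltage_v


if __name__ == "__main__":
    rack_kw = 100.0  # hypothetical high-density AI rack
    for v in (208.0, 415.0):
        print(f"{v:.0f} V three-phase AC: {three_phase_current_a(rack_kw, v):7.1f} A per line")
    print(f"48 V DC busbar:        {dc_current_a(rack_kw, 48.0):7.1f} A")
```

Roughly 280 A per line at 208 V drops to about 140 A at 415 V, while delivering the same 100 kW at 48 V DC means more than 2,000 A, which is why connectors, busbars, and cabling are being re-engineered alongside the racks.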

This shift isn’t just about staying efficient. It’s about staying viable. Data centers that aren’t built with heat reuse, room for expansion, and flexible electrical design won’t hold up for long. The demands aren’t slowing down, and the infrastructure has to meet them head-on.

What This Infrastructure Shift Means Going Forward

We know that hardware alone doesn’t move the needle anymore. The real advantage comes from bringing it online quickly, without getting bogged down by power, permits, and other obstacles. That’s where the cracks are beginning to show.

Site selection has become a high-stakes filter. A cheap piece of land isn’t enough. What you need is utility capacity, local support, and room to grow without months of negotiation. Funded projects are hitting walls, even ones with exceptional resources.

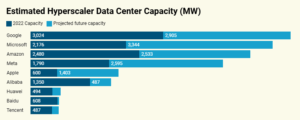

Those pulling ahead started early. Microsoft is already working on multi-campus builds that can handle gigawatt loads. Google is pairing facility growth with flexible energy contracts and nearby renewables. Amazon is redesigning its electrical systems and working with zoning authorities before permits even go live.

The pressure is now constant, and any delay ripples through everything. If you miss a window, you lose training cycles. The pace of model development doesn’t wait for infrastructure to catch up. What was once back-end planning is now front-line strategy, and data center developers are the ones defining what happens next. As we move forward, AI performance won’t just be measured in FLOPs or latency. It may come down to who could build when it really mattered.
