Google has recently released its most intelligent model, one that can create, reason, and understand across multiple modalities. Google Gemini 3 Pro is not just an incremental update; it is a significant step forward in AI capability. With state-of-the-art reasoning, multimodal understanding, and agentic capabilities, this model is set to change the way developers build intelligent applications. And with the new Gemini 3 Pro API, developers can now create smarter, more dynamic systems than ever before.

If you're building complex AI workflows, working with multimodal data, or developing agentic systems that can handle multi-step tasks on their own, this guide will teach you everything you need to know about using Gemini 3 Pro through its API.

What Makes Gemini 3 Pro Special?

Before we get lost in the technical details, let's look at why developers are so excited about this model. Google Gemini 3 Pro, which has been in development for some time, now sits at the very top of the LMArena leaderboard with an Elo rating of 1501. It was not designed merely for maximum performance, either: the whole package is oriented toward a great developer experience.

The main features are:

- Advanced reasoning: The model can now solve intricate, multi-step problems with sophisticated thinking.

- Massive context window: A 1 million token input context lets you feed in entire codebases, full-length books, or long video content.

- Multimodal mastery: Text, images, video, PDFs, and code can all be processed together seamlessly.

- Agentic workflows: Run multi-step tasks in which the model orchestrates, checks, and adjusts its own actions autonomously.

- Dynamic thinking: Depending on the situation, the model either works through the problem step by step or simply gives the answer.

You can learn more about the Gemini 3 Pro model and its features in the following article: Gemini 3 Pro

Getting Started: Your First Gemini 3 Pro API Call

Step 1: Get Your API Key

Go to Google AI Studio and sign in with your Google account. Click the profile icon in the top-right corner, then choose the "Get API key" option. If it's your first time, select "Create API key in new project"; otherwise, import an existing one. Copy the API key right away, because you will not be able to see it again.

Step 2: Install the SDK

Choose your preferred language and install the official SDK using the following commands:

Python:

```shell
pip install google-genai
```

Node.js/JavaScript:

```shell
npm install @google/genai
```

Step 3: Set Your Environment Variable

Store your API key securely in an environment variable:

```shell
export GEMINI_API_KEY="your-api-key-here"
```

Gemini 3 Pro API Pricing

The Gemini 3 Pro API uses a pay-as-you-go model: your costs are calculated mainly from the number of tokens consumed by both your input (prompts) and the model's output (responses).

The key factor that determines the pricing tier is the length of your prompt. Longer prompts, which let the model process more information at once (such as large documents or long conversation histories), are billed at a higher rate.

The following rates apply to the gemini-3-pro-preview model via the Gemini API and are measured per one million tokens (1M).

| Feature | Free Tier | Paid Tier (per 1M tokens in USD) |
|---|---|---|
| Input price | Free (limited daily usage) | $2.00 for prompts ≤ 200k tokens; $4.00 for prompts > 200k tokens |
| Output price (including thinking tokens) | Free (limited daily usage) | $12.00 for prompts ≤ 200k tokens; $18.00 for prompts > 200k tokens |
| Context caching price | Not available | $0.20–$0.40 per 1M tokens (depends on prompt size); $4.50 per 1M tokens per hour (storage price) |
| Grounding with Google Search | Not available | 1,500 RPD (free); coming soon: $14 per 1,000 search queries |
| Grounding with Google Maps | Not available | Not available |
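To make the tiers concrete, here is a rough cost estimator built from the paid-tier rates in the table. It is a sketch that rests on two assumptions. First, the 200k-token threshold is judged on the prompt size of a single request. Second, that one threshold selects both the input rate and the output rate. The estimator ignores free-tier quotas, caching, and grounding charges, and `estimate_cost` is a hypothetical helper name.

```python
# Paid-tier rates for gemini-3-pro-preview, USD per 1M tokens, from the table above.
# Assumption: the 200k threshold is measured on prompt (input) size and selects
# the tier for BOTH the input and the output rate.
PRICE_TIER_THRESHOLD = 200_000
INPUT_RATE = {"standard": 2.00, "long": 4.00}
OUTPUT_RATE = {"standard": 12.00, "long": 18.00}


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request; output_tokens should include thinking tokens."""
    tier = "standard" if input_tokens <= PRICE_TIER_THRESHOLD else "long"
    input_cost = input_tokens / 1_000_000 * INPUT_RATE[tier]
    output_cost = output_tokens / 1_000_000 * OUTPUT_RATE[tier]
    return round(input_cost + output_cost, 6)


print(estimate_cost(100_000, 10_000))  # 0.32 (0.20 input + 0.12 output)
print(estimate_cost(300_000, 20_000))  # 1.56 (1.20 input + 0.36 output)
```

Note that thinking tokens are billed as output, so a "high" thinking level can raise the output side of the estimate even when the visible answer is short.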

Understanding Gemini 3 Pro's New Parameters

The API offers several new parameters. The most notable is the thinking level parameter, which gives you fine-grained control over how the model reasons.

The Thinking Level Parameter

This new parameter is very likely the most significant one. You no longer have to wonder how much the model will "think"; you control it explicitly:

- thinking_level: "low": For basic tasks such as classification, Q&A, or chatting. Latency is minimal and costs are lower, which makes it ideal for high-throughput applications.

- thinking_level: "high" (default): For complex reasoning tasks. The model takes longer, but the output contains more carefully reasoned arguments. Use it for problem-solving and analysis.

Tip: Don't use thinking_level together with the older thinking_budget parameter. They are not compatible, and combining them results in a 400 error.
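Here is a minimal sketch of choosing the thinking level from Python. It assumes a google-genai release recent enough to accept `thinking_level` inside `thinking_config`. The SDK accepts plain dicts in place of its config types. `thinking_config`, `check_thinking_config`, and `ask` are hypothetical helper names.

```python
VALID_THINKING_LEVELS = ("low", "high")


def thinking_config(level: str) -> dict:
    """Build a generate_content config dict that sets Gemini 3's thinking level."""
    if level not in VALID_THINKING_LEVELS:
        raise ValueError(f"thinking_level must be one of {VALID_THINKING_LEVELS}")
    return {"thinking_config": {"thinking_level": level}}


def check_thinking_config(config: dict) -> None:
    """Reject configs that set both thinking knobs (the API answers those with a 400)."""
    thinking = config.get("thinking_config", {})
    if "thinking_level" in thinking and "thinking_budget" in thinking:
        raise ValueError("set thinking_level or thinking_budget, not both")


def ask(prompt: str, level: str = "low") -> str:
    """Send a single prompt to Gemini 3 Pro at the requested thinking level."""
    # Imported lazily so the pure helpers above work even without the SDK installed.
    from google import genai

    client = genai.Client()  # reads GEMINI_API_KEY from the environment
    response = client.models.generate_content(
        model="gemini-3-pro-preview",
        contents=prompt,
        config=thinking_config(level),
    )
    return response.text
```

For example, `ask("Classify this review as positive or negative: 'Loved it!'", level="low")` keeps latency and cost down, while `level="high"` suits multi-step analysis. You can run `check_thinking_config` on hand-built configs to catch the thinking_level/thinking_budget conflict before the API rejects the request.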

Media Resolution Control

When analyzing images, PDF documents, or videos, you can now control how much processing (and how many tokens) visual input consumes:

- Images: media_resolution_high for the best quality (1120 tokens/image).

- PDFs: media_resolution_medium for document understanding (560 tokens).

- Videos: media_resolution_low for action recognition (70 tokens/frame) and media_resolution_high for text-heavy content (280 tokens/frame).

This puts the trade-off between quality and token usage in your hands.
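The per-item figures above make it easy to budget tokens before you send media. The sketch below multiplies them out and maps a resolution name to a request-wide config dict. It makes three assumptions: the 560-token PDF figure is per page, video is sampled at 1 frame per second, and the SDK's `media_resolution` config field accepts the upper-case enum names. `estimate_media_tokens` and `media_config` are hypothetical helpers.

```python
# Token figures quoted in the list above. The PDF figure is assumed to be per page.
MEDIA_TOKENS = {
    ("image", "media_resolution_high"): 1120,  # per image
    ("pdf", "media_resolution_medium"): 560,   # per page (assumption)
    ("video", "media_resolution_low"): 70,     # per frame
    ("video", "media_resolution_high"): 280,   # per frame
}


def estimate_media_tokens(kind: str, resolution: str, count: int) -> int:
    """Estimate input tokens for `count` images, PDF pages, or video frames."""
    try:
        per_item = MEDIA_TOKENS[(kind, resolution)]
    except KeyError:
        raise ValueError(f"no token figure quoted for {kind!r} at {resolution!r}") from None
    return per_item * count


def media_config(resolution: str) -> dict:
    """Map e.g. 'media_resolution_high' to a request-wide GenerateContentConfig dict."""
    # Assumption: the SDK's MediaResolution values are the upper-case names.
    return {"media_resolution": resolution.upper()}


# A 60-second clip, assuming 1 sampled frame per second:
print(estimate_media_tokens("video", "media_resolution_low", 60))   # 4200
print(estimate_media_tokens("video", "media_resolution_high", 60))  # 16800
print(media_config("media_resolution_low"))  # {'media_resolution': 'MEDIA_RESOLUTION_LOW'}
```

The 4x gap between the two video settings shows why low resolution is the sensible default for action recognition, with high resolution reserved for clips where on-screen text matters.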

Temperature Settings

Here is something you may find intriguing: you can simply keep the temperature at its default of 1.0. Earlier models often benefited from temperature tuning, but Gemini 3's reasoning is optimized around this default setting. Lowering the temperature can cause unexpected looping or degrade performance on more complex tasks.

Build With Me: Hands-On Examples of the Gemini 3 Pro API

Demo 1: Building a Smart Code Analyzer

Let's build something around a real-world use case. We'll create a system that analyzes code, identifies issues, and suggests improvements using Gemini 3 Pro's advanced reasoning.

Python Implementation

```python
import os

from google import genai
from google.genai import types

# Initialize the Gemini API client. The key is read from the GEMINI_API_KEY
# environment variable set in Step 3; you could also pass the key string directly.
client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])


def analyze_code(code_snippet: str) -> str:
    """
    Analyzes code for issues and suggests improvements using Gemini 3 Pro.

    Args:
        code_snippet: The code to analyze

    Returns:
        Analysis and improvement suggestions from the model
    """
    prompt = f"""Analyze this code for issues, inefficiencies, and potential improvements.
Provide:
1. Issues found (bugs, logic errors, security concerns)
2. Performance optimizations
3. Code quality improvements
4. Best practices violations
5. Refactored version with explanations

Code to analyze:
{code_snippet}

Be direct and concise in your analysis."""

    response = client.models.generate_content(
        model="gemini-3-pro-preview",
        contents=prompt,
        # Code review needs heavy reasoning, so request the "high" thinking level
        # explicitly ("high" is also the default for Gemini 3 Pro).
        config=types.GenerateContentConfig(
            thinking_config=types.ThinkingConfig(thinking_level="high")
        ),
    )
    return response.text


# Example usage: a deliberately imperfect snippet for the model to review
sample_code = """
def calculate_total(items):
    total = 0
    for i in range(len(items)):
        total = total + items[i]['price'] * items[i]['quantity']
    return total

def get_user_data(user_id):
    import requests
    response = requests.get(f"http://api.example.com/user/{user_id}")
    data = response.json()
    return data
"""

print("=" * 60)
print("GEMINI 3 PRO - SMART CODE ANALYZER")
print("=" * 60)
print("\nAnalyzing code...\n")

# Run the analysis
analysis = analyze_code(sample_code)
print(analysis)
print("\n" + "=" * 60)
```

Output:

What's Happening Here?

- Code review involves heavy reasoning, so we use thinking_level "high" (the default for Gemini 3 Pro).

- The prompt is brief and to the point. Gemini 3 responds more effectively to direct prompts than to elaborate prompt engineering.

- The model reviews the code with its full reasoning capacity and returns a revised version with substantial fixes and insightful analysis.

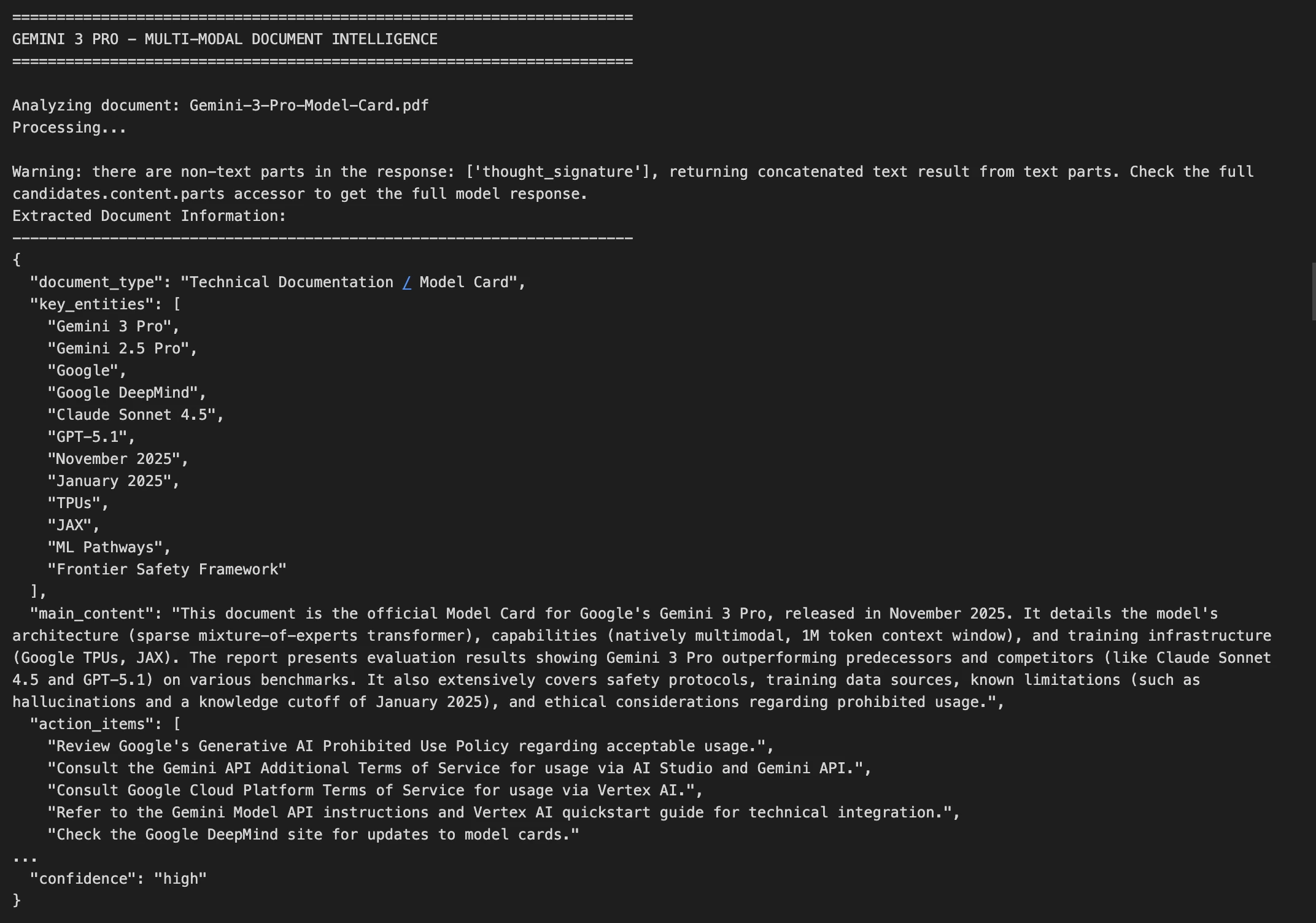

Demo 2: Multimodal Document Intelligence

Now let's take on a more complex use case: analyzing an image of a document and extracting structured information.

Python Implementation

```python
import json
import os

from google import genai
from google.genai import types

# Initialize the Gemini API client (key read from GEMINI_API_KEY, see Step 3)
client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])


def analyze_document_image(image_path: str) -> dict:
    """
    Analyzes a document image and extracts key information.
    Handles images, PDFs, and other document formats.

    Args:
        image_path: Path to the document image file

    Returns:
        Dictionary containing the extracted document information
    """
    # Read the raw file bytes; the SDK base64-encodes them for transport
    with open(image_path, "rb") as img_file:
        file_bytes = img_file.read()

    # Determine the MIME type based on the file extension
    mime_type = "image/jpeg"  # Default
    lowered = image_path.lower()
    if lowered.endswith(".png"):
        mime_type = "image/png"
    elif lowered.endswith(".pdf"):
        mime_type = "application/pdf"
    elif lowered.endswith(".gif"):
        mime_type = "image/gif"
    elif lowered.endswith(".webp"):
        mime_type = "image/webp"

    prompt = """Extract and structure all information from this document.
Return the data as JSON with these fields:
- document_type: What type of document is this?
- key_entities: List of important names, dates, amounts, etc.
- main_content: Brief summary of the document's purpose
- action_items: Any tasks or deadlines mentioned
- confidence: How confident you are in the extraction (high/medium/low)
Return ONLY valid JSON, no markdown formatting."""

    # Call the Gemini API with multimodal content (text prompt + document)
    response = client.models.generate_content(
        model="gemini-3-pro-preview",
        contents=[
            prompt,
            types.Part.from_bytes(data=file_bytes, mime_type=mime_type),
        ],
        config=types.GenerateContentConfig(
            # High media resolution for the best OCR on text-heavy documents
            media_resolution=types.MediaResolution.MEDIA_RESOLUTION_HIGH,
            # Ask the API for a JSON response rather than free-form text
            response_mime_type="application/json",
        ),
    )

    # Parse the JSON response, falling back to the raw text on failure
    try:
        return json.loads(response.text)
    except json.JSONDecodeError:
        return {"error": "Failed to parse response", "raw": response.text}


# Example usage with a sample document
print("=" * 70)
print("GEMINI 3 PRO - MULTI-MODAL DOCUMENT INTELLIGENCE")
print("=" * 70)

document_path = "Gemini-3-Pro-Model-Card.pdf"  # Change this to your actual document path

try:
    print(f"\nAnalyzing document: {document_path}")
    print("Processing...\n")
    document_info = analyze_document_image(document_path)
    print("Extracted Document Information:")
    print("-" * 70)
    print(json.dumps(document_info, indent=2))
except FileNotFoundError:
    print(f"Error: Document file '{document_path}' not found.")
    print("Please provide a valid path to a document image (JPG, PNG, PDF, etc.)")
    print("\nExample:")
    print('  document_info = analyze_document_image("invoice.pdf")')
    print('  document_info = analyze_document_image("contract.png")')
    print('  document_info = analyze_document_image("receipt.jpg")')
except Exception as e:
    print(f"Error processing document: {e}")

print("\n" + "=" * 70)
```

Key Techniques Here:

- Image processing: The document is sent inline with the request, base64-encoded.

- Maximum quality option: For text documents we apply media_resolution_high to get the best OCR.

- Structured result: We ask for JSON output, which is easy to connect to other systems.

- Error handling: JSON parsing errors are caught so they don't crash the program; the raw response is returned instead.

Advanced Features: Beyond Basic Prompting

Thought Signatures: Maintaining Reasoning Context

Gemini 3 Pro introduces a new feature called thought signatures: encrypted representations of the model's internal reasoning. These signatures preserve reasoning context across API calls when you use function calling or multi-turn conversations.

If you use the official Python or Node.js SDKs, thought signatures are handled automatically and invisibly. If you make raw API calls, however, you must send each thought signature back exactly as you received it.
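For the raw-API case, the rule boils down to "never rebuild the model's turn." The sketch below keeps a REST-style `contents` history and appends the model's content unchanged, so any `thoughtSignature` on its parts travels back with the next request. It assumes the REST API's camelCase JSON shape (`candidates`, `content`, `parts`, `functionCall`, `functionResponse`, `thoughtSignature`). It uses a simulated response instead of a live call, and the helper names are hypothetical.

```python
import copy


def append_model_turn(history: list, response_json: dict) -> list:
    """Append the model's reply to a raw REST conversation history, unchanged.

    Parts may carry a `thoughtSignature`; rebuilding the parts (e.g. keeping only
    `text` or `functionCall`) would silently drop it, so the content is copied whole.
    """
    model_content = response_json["candidates"][0]["content"]
    history.append(copy.deepcopy(model_content))
    return history


def append_function_result(history: list, name: str, result: dict) -> list:
    """Append the result of a function the model asked us to call."""
    history.append({
        "role": "user",
        "parts": [{"functionResponse": {"name": name, "response": result}}],
    })
    return history


# Simulated REST response in which the model requests a function call.
fake_response = {
    "candidates": [{
        "content": {
            "role": "model",
            "parts": [{
                "functionCall": {"name": "get_weather", "args": {"city": "Paris"}},
                "thoughtSignature": "<opaque-signature>",
            }],
        }
    }]
}

history = [{"role": "user", "parts": [{"text": "What's the weather in Paris?"}]}]
append_model_turn(history, fake_response)
append_function_result(history, "get_weather", {"temp_c": 18})
# `history` is now the `contents` array for the next generateContent request.
print(history[1]["parts"][0]["thoughtSignature"])  # <opaque-signature>
```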

Context Caching for Cost Optimization

Planning to send similar requests multiple times? Take advantage of context caching, which caches a repeated prompt prefix of at least 2,048 tokens and saves money on subsequent requests. This is ideal whenever you're processing a set of documents and can reuse the same system prompt across them.
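Two things are worth sketching here: whether caching pays off, and what the calls look like. The cost helper uses the ≤200k-token rates from the pricing table and ignores any one-time cache-creation charge. The second function assumes the google-genai SDK's `caches.create` and `cached_content` interface. Both function names are hypothetical, and the one-hour TTL is an arbitrary choice.

```python
# Paid-tier rates for prompts <= 200k tokens (USD per 1M tokens), from the pricing table.
UNCACHED_INPUT_RATE = 2.00
CACHED_INPUT_RATE = 0.20
CACHE_STORAGE_RATE_PER_HOUR = 4.50


def prefix_cost(prefix_tokens: int, requests: int, hours: float, *, cached: bool) -> float:
    """USD cost of sending the same prompt prefix `requests` times.

    Ignores any one-time cache-creation charge and the non-shared part of each prompt.
    """
    millions = prefix_tokens / 1_000_000
    if not cached:
        return round(millions * UNCACHED_INPUT_RATE * requests, 6)
    usage = millions * CACHED_INPUT_RATE * requests
    storage = millions * CACHE_STORAGE_RATE_PER_HOUR * hours
    return round(usage + storage, 6)


def ask_with_cache(system_prompt: str, document: str, questions: list) -> list:
    """Cache a shared system prompt and document once, then ask several questions."""
    from google import genai  # lazy import: prefix_cost above needs no SDK
    from google.genai import types

    client = genai.Client()  # reads GEMINI_API_KEY from the environment
    cache = client.caches.create(
        model="gemini-3-pro-preview",
        config=types.CreateCachedContentConfig(
            system_instruction=system_prompt,
            contents=[document],
            ttl="3600s",  # keep the cache alive for one hour
        ),
    )
    try:
        answers = []
        for question in questions:
            response = client.models.generate_content(
                model="gemini-3-pro-preview",
                contents=question,
                config=types.GenerateContentConfig(cached_content=cache.name),
            )
            answers.append(response.text)
        return answers
    finally:
        client.caches.delete(name=cache.name)  # stop paying for storage


# 100k-token shared prefix, 50 requests within one hour:
print(prefix_cost(100_000, 50, 1, cached=False))  # 10.0
print(prefix_cost(100_000, 50, 1, cached=True))   # 1.45
```

Deleting the cache in a `finally` block keeps storage charges from accruing if one of the questions fails.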

Batch API: Process at Scale

For workloads that aren't time-sensitive, the Batch API can cut your costs by 50%. It is ideal when you have many documents to process or a large analysis to run overnight.
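Here is a minimal sketch of submitting a batch with the google-genai SDK. It assumes the SDK's `batches.create` interface with inline requests, which suits small batches; large jobs are usually submitted as an uploaded file instead. `build_inline_requests` and `run_batch` are hypothetical helpers, and the display name and polling interval are arbitrary.

```python
import time


def build_inline_requests(documents: list, instruction: str) -> list:
    """Turn each document into one inline batch request (a generate_content body)."""
    return [
        {"contents": [{"role": "user", "parts": [{"text": f"{instruction}\n\n{doc}"}]}]}
        for doc in documents
    ]


FINISHED_STATES = {
    "JOB_STATE_SUCCEEDED",
    "JOB_STATE_FAILED",
    "JOB_STATE_CANCELLED",
    "JOB_STATE_EXPIRED",
}


def run_batch(documents: list, instruction: str, poll_seconds: int = 60) -> list:
    """Submit a batch job, wait for it to finish, and return one answer per request."""
    from google import genai  # lazy import: build_inline_requests needs no SDK

    client = genai.Client()  # reads GEMINI_API_KEY from the environment
    job = client.batches.create(
        model="gemini-3-pro-preview",
        src=build_inline_requests(documents, instruction),
        config={"display_name": "overnight-document-analysis"},
    )
    while job.state.name not in FINISHED_STATES:
        time.sleep(poll_seconds)  # batch jobs can take hours; poll sparingly
        job = client.batches.get(name=job.name)
    if job.state.name != "JOB_STATE_SUCCEEDED":
        raise RuntimeError(f"batch job ended in state {job.state.name}")
    # A request that failed inside the batch has no `response`; keep a None placeholder.
    return [r.response.text if r.response else None for r in job.dest.inlined_responses]
```

For example, `run_batch(["<document 1 text>", "<document 2 text>"], "Summarize this document in three bullet points.")`. Polling once a minute is deliberately lazy. Batch work is not time-sensitive by definition, so checking more often brings no benefit.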

Conclusion

Google Gemini 3 Pro marks an inflection point in what's possible with AI APIs. The combination of advanced reasoning, massive context windows, and affordable pricing means you can now build systems that were previously impractical.

Start small: build a chatbot, analyze some documents, or automate a routine task. Then, as you get comfortable with the API, explore more complex scenarios such as autonomous agents, code generation, and multimodal analysis.

Frequently Asked Questions

Q. What makes Gemini 3 Pro stand out?
A. Its advanced reasoning, multimodal support, and massive context window enable smarter, more capable applications.

Q. How do I control how much the model "thinks"?
A. Set thinking_level to low for fast, simple tasks or to high for complex analysis.

Q. How can I reduce API costs?
A. Use context caching to reuse prompts, or the Batch API to run non-urgent workloads more cheaply.
