Data warehouses have long been prized for their structure and rigor, and yet many assume a lakehouse sacrifices that discipline. Here we dispel two related myths: that Databricks abandons relational modeling and that it doesn’t support keys or constraints. You’ll see that core principles like keys, constraints, and schema enforcement remain first-class citizens in Databricks SQL. Watch the full DAIS 2025 session here →

Modern data warehouses have evolved, and the Databricks Lakehouse is an excellent example of this evolution. Over the past four years, thousands of organizations have migrated their legacy data warehouses to the Databricks Lakehouse, gaining access to a unified platform that seamlessly combines data warehousing, streaming analytics, and AI capabilities. However, some features and capabilities of Classic Data Warehouses are not mainstays of Data Lakes. This blog dispels lingering data modeling myths and provides additional best practices for operationalizing your modern cloud Lakehouse.

This comprehensive guide addresses the most prevalent myths surrounding Databricks’ data warehousing functionality while showcasing the powerful new capabilities announced at Data + AI Summit 2025. Whether you’re a data architect evaluating platform options or a data engineer implementing lakehouse solutions, this post will provide you with a definitive understanding of Databricks’ enterprise-grade data modeling capabilities.

- Myth #1: “Databricks doesn’t support relational modeling.”

- Myth #2: “You can’t use primary and foreign keys.”

- Myth #3: “Column-level data quality constraints are impossible.”

- Myth #4: “You can’t do semantic modeling without proprietary BI tools.”

- Myth #5: “You shouldn’t build dimensional models in Databricks.”

- Myth #6: “You need a separate engine for BI performance.”

- Myth #7: “Medallion architecture is required.”

- BONUS Myth #8: “Databricks doesn’t support multi-statement transactions.”

The evolution from data warehouse to lakehouse

Before diving into the myths, it’s crucial to understand what sets the lakehouse architecture apart from traditional data warehousing approaches. The lakehouse combines the reliability and performance of data warehouses with the flexibility and scale of data lakes, creating a unified platform that eliminates the traditional trade-offs between structured and unstructured data processing.

Databricks SQL features:

- Unified data storage on low-cost cloud object storage with open formats

- ACID transaction guarantees through Delta Lake

- Advanced query optimization with the Photon engine

- Comprehensive governance through Unity Catalog

- Native support for both SQL and machine learning workloads

This architecture addresses fundamental limitations of traditional approaches while maintaining compatibility with existing tools and practices.

Myth #1: “Databricks doesn’t support relational modeling”

Fact: Relational principles are fundamental to the Lakehouse

Perhaps the most pervasive myth is that Databricks abandons relational modeling principles. This couldn’t be further from the truth. The term “lakehouse” explicitly emphasizes the “house” component – structured, reliable data management that builds upon decades of proven relational database theory.

Delta Lake, the storage layer underlying every Databricks table, provides full support for:

- ACID transactions that ensure data consistency

- Schema enforcement and evolution, maintaining data integrity

- SQL-compliant operations, including complex joins and analytical functions

- Referential integrity concepts through primary and foreign key definitions (these constraints are informational: they can aid query performance but are not enforced)

Modern features like Unity Catalog Metric Views, now in Public Preview, rely entirely on well-structured relational models to function effectively. These semantic layers require proper dimension and fact tables to deliver consistent business metrics across the organization.

Most importantly, AI and machine learning workloads, which are often associated with “schema-on-read” approaches, perform best with clean, structured, tabular data that follows relational principles. The Lakehouse doesn’t abandon structure; it makes structure more flexible and scalable.

Myth #2: “You can’t use primary and foreign keys”

Fact: Databricks has robust constraint support with optimization benefits

Databricks has supported primary and foreign key constraints since Databricks Runtime 11.3 LTS, with full General Availability as of Runtime 15.2. These constraints serve several important purposes:

- Informational constraints that document data relationships, with enforceable referential integrity constraints on the roadmap. Organizations planning their lakehouse migrations should design their data models with proper key relationships now to take advantage of these capabilities as they become available.

- Query optimization hints: For organizations that manage referential integrity in their ETL pipelines, the `RELY` keyword provides a powerful optimization hint. When you declare `FOREIGN KEY … RELY`, you’re telling the Databricks optimizer that it can safely assume referential integrity, enabling aggressive query optimizations that can dramatically improve join performance.

- Tool compatibility with BI platforms like Tableau and Power BI that automatically detect and utilize these relationships
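As a rough illustration of the points above, the following sketch declares informational keys on two Unity Catalog tables and marks them `RELY`. The catalog, schema, table, and constraint names (`main.sales.dim_customer`, `fk_orders_customer`, and so on) are hypothetical, and the runtime versions that honor `RELY` on each constraint type should be checked against the current documentation.

```sql
-- Hypothetical star-schema tables; every name here is illustrative.
CREATE TABLE main.sales.dim_customer (
  customer_key  BIGINT NOT NULL,
  customer_name STRING,
  -- Informational only: duplicate keys are not rejected, so the loading
  -- pipeline must guarantee uniqueness before RELY is declared.
  CONSTRAINT pk_dim_customer PRIMARY KEY (customer_key) RELY
);

CREATE TABLE main.sales.fact_orders (
  order_id     BIGINT NOT NULL,
  customer_key BIGINT NOT NULL,
  order_date   DATE,
  amount       DECIMAL(18, 2),
  CONSTRAINT pk_fact_orders PRIMARY KEY (order_id),
  CONSTRAINT fk_orders_customer FOREIGN KEY (customer_key)
    REFERENCES main.sales.dim_customer (customer_key) RELY
);

-- Pipeline-side check: any rows returned are orphaned foreign keys.
SELECT f.order_id, f.customer_key
FROM main.sales.fact_orders AS f
LEFT ANTI JOIN main.sales.dim_customer AS d
  ON f.customer_key = d.customer_key;
```

Because the keys are not enforced, a check like the final anti-join is one way an ETL pipeline could confirm that the integrity promised by `RELY` actually holds.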

Myth #3: “Column-level data quality constraints are impossible”

Fact: Databricks provides comprehensive data quality enforcement

Data quality is paramount in enterprise data platforms, and Databricks offers multiple layers of constraint enforcement that go beyond what traditional data warehouses provide.

The most common are simple Native SQL Constraints, including:

- CHECK constraints for custom business rule validation

- NOT NULL constraints for required field validation
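Unlike primary and foreign keys, these two constraint types are enforced on write. A minimal sketch, assuming a hypothetical `main.sales.payments` Delta table and an illustrative constraint name:

```sql
-- Hypothetical table; names are illustrative.
CREATE TABLE main.sales.payments (
  payment_id BIGINT NOT NULL,   -- NOT NULL declared at creation time
  amount     DECIMAL(18, 2),
  currency   STRING
);

-- Business rule: payment amounts must be positive.
ALTER TABLE main.sales.payments
  ADD CONSTRAINT amount_is_positive CHECK (amount > 0);

-- Tighten an existing column after the fact.
ALTER TABLE main.sales.payments
  ALTER COLUMN currency SET NOT NULL;

-- Expected to be rejected: the row violates amount_is_positive.
INSERT INTO main.sales.payments VALUES (1, -5.00, 'USD');
```

Adding either constraint to a table that already holds data validates the existing rows first, so it is usually easiest to declare them before the first load.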

Additionally, Databricks offers Advanced Data Quality Features that go beyond basic constraints to provide enterprise-grade data quality monitoring.

Lakehouse Monitoring delivers automated data quality tracking with:

- Statistical profiling and drift detection

- Custom metric definitions and alerting

- Integration with Unity Catalog for governance

- Real-time data quality dashboards

Databricks Labs DQX Library offers:

- Custom data quality rules for Delta tables

- DataFrame-level validations during processing

- Extensible framework for complex quality checks

These tools combined provide data quality capabilities that surpass traditional data warehouse constraint systems, offering both preventive and detective controls across your entire data pipeline.

Myth #4: “You can’t do semantic modeling without proprietary BI tools”

Fact: Unity Catalog Metric Views revolutionize semantic layer management

One of the most significant announcements at Data + AI Summit 2025 was the Public Preview of Unity Catalog Metric Views – a game-changing approach to semantic modeling that breaks free from vendor lock-in.

Unity Catalog Metric Views allow you to centralize Business Logic:

- Define metrics once at the catalog level

- Access from anywhere – dashboards, notebooks, SQL, AI tools

- Maintain consistency across all consumption points

- Version and govern like any other data asset

Unlike proprietary BI semantic layers, Unity Catalog Metrics are Open and Accessible:

- SQL-addressable – query them like any table or view

- Tool-agnostic – work with any BI platform or analytical application

- AI-ready – accessible to LLMs and AI agents through natural language

This approach represents a fundamental shift from BI-tool-specific semantic layers to a unified, governed, and open semantic foundation that powers analytics across your entire organization.
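To make “define once, query anywhere” more concrete, here is a tentative sketch of a metric view and a query against it. It follows the preview syntax as best understood at the time of writing; because the feature is in Public Preview, the `version` value and YAML keys may differ in your workspace. The view, source table, and column names are hypothetical.

```sql
-- Hypothetical metric view over an illustrative fact table.
CREATE VIEW main.sales.orders_metrics
WITH METRICS
LANGUAGE YAML
AS $$
version: 0.1
source: main.sales.fact_orders
dimensions:
  - name: order_month
    expr: DATE_TRUNC('MONTH', order_date)
measures:
  - name: total_revenue
    expr: SUM(amount)
  - name: order_count
    expr: COUNT(1)
$$;

-- Measures are read through MEASURE(); the aggregation logic lives in the view.
SELECT
  order_month,
  MEASURE(total_revenue) AS total_revenue,
  MEASURE(order_count)   AS order_count
FROM main.sales.orders_metrics
GROUP BY order_month
ORDER BY order_month;
```

The design point is that the definition of `total_revenue` is stored once in the catalog, so a dashboard, a notebook, and an AI agent issuing this query would all get the same number.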

Myth #5: “You shouldn’t build dimensional models in Databricks”

Fact: Dimensional modeling principles thrive in the Lakehouse

Far from discouraging dimensional modeling, Databricks actively embraces and optimizes for these proven analytical patterns. Star and snowflake schemas translate exceptionally well to Delta tables, often offering superior performance characteristics compared to traditional data warehouses. These accepted Dimensional Modeling patterns offer:

- Business understandability – familiar patterns for analysts and business users

- Query performance – optimized for analytical workloads and BI tools

- Slowly changing dimensions – easy to implement with Delta Lake’s time travel features

- Scalable aggregations – materialized views and incremental processing
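The time travel feature mentioned above can be sketched as follows, assuming a hypothetical `main.sales.dim_customer` Delta table; the version number and timestamp are placeholders, not values from a real table.

```sql
-- List the table's commit history (versions, timestamps, operations).
DESCRIBE HISTORY main.sales.dim_customer;

-- Read the dimension as it existed at a given table version (placeholder value).
SELECT * FROM main.sales.dim_customer VERSION AS OF 3;

-- Read the dimension as it existed at a point in time (placeholder value).
SELECT * FROM main.sales.dim_customer TIMESTAMP AS OF '2025-06-01';
```

How far back these queries can reach depends on the table’s retention settings, so history that must be kept indefinitely is usually also modeled explicitly in the dimension.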

Additionally, the Databricks Lakehouse provides unique benefits for dimensional modeling, including Flexible Schema Evolution and Time Travel Integration. To get the best experience with dimensional modeling on Databricks, follow these best practices:

- Use Unity Catalog’s three-level namespace (catalog.schema.table) to organize your dimensional models

- Implement proper primary and foreign key constraints for documentation and optimization

- Leverage identity columns for surrogate key generation

- Apply liquid clustering on frequently joined columns

- Use materialized views for pre-aggregated fact tables
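Several of these practices can be combined in a few statements. The sketch below is illustrative only: the `main.sales` namespace and all table, column, and view names are hypothetical, and materialized views assume a workspace where they are available.

```sql
-- Dimension with an identity column as the surrogate key.
CREATE TABLE main.sales.dim_product (
  product_key  BIGINT GENERATED ALWAYS AS IDENTITY,  -- surrogate key
  product_code STRING NOT NULL,                      -- business key from the source system
  product_name STRING
);

-- Fact table with liquid clustering on the columns most often joined or filtered.
CREATE TABLE main.sales.fact_sales (
  product_key BIGINT NOT NULL,
  sale_date   DATE NOT NULL,
  quantity    INT,
  amount      DECIMAL(18, 2)
)
CLUSTER BY (product_key, sale_date);

-- Pre-aggregated view for BI queries.
CREATE MATERIALIZED VIEW main.sales.mv_daily_product_sales AS
SELECT
  sale_date,
  product_key,
  SUM(quantity) AS total_quantity,
  SUM(amount)   AS total_amount
FROM main.sales.fact_sales
GROUP BY sale_date, product_key;
```

Key constraints are left out here to keep the sketch short; in practice they would be declared on `product_key` as well.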

Myth #6: “You need a separate engine for BI performance”

Fact: The Lakehouse delivers world-class BI performance natively

The misconception that lakehouse architectures can’t match traditional data warehouse performance for BI workloads is increasingly outdated. Databricks has invested heavily in query performance optimization, delivering results that consistently exceed traditional MPP data warehouses.

The cornerstone of Databricks’ performance optimizations is the Photon Engine, which is specifically designed for OLAP workloads and analytical queries:

- Vectorized execution for complex analytical operations

- Advanced predicate pushdown minimizing data movement

- Intelligent data pruning leveraging dimensional model structures

- Columnar processing optimized for aggregations and joins

Additionally, Databricks SQL provides a fully managed, serverless warehouse experience that scales automatically for high-concurrency BI workloads and integrates seamlessly with popular BI tools. Our Serverless Warehouses combine best-in-class TCO and performance to deliver optimal response times for your analytical queries. Often overlooked these days are Delta Lake’s foundational benefits: file optimizations, advanced statistics collection, and data clustering on the open and efficient Parquet file format. Organizations migrating from traditional data warehouses to Databricks consistently report the following performance benefits:

- Up to 10-50x faster query performance for complex analytical workloads

- High concurrency scaling without performance degradation

- Up to 90% cost reduction compared to traditional MPP data warehouses

- Zero maintenance overhead with serverless compute

Data + AI Summit 2025 brought even more exciting announcements and optimizations, including enhanced predictive optimization and automatic liquid clustering.
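For readers who want to see what these optimizations look like in SQL, here is a hedged sketch against a hypothetical `main.sales.fact_sales` table. Availability of predictive optimization and automatic clustering depends on your workspace and runtime, so treat these statements as a starting point to verify rather than a recipe.

```sql
-- Let Databricks schedule maintenance for tables in this (hypothetical) schema.
ALTER SCHEMA main.sales ENABLE PREDICTIVE OPTIMIZATION;

-- Let Databricks choose clustering keys from observed query patterns.
ALTER TABLE main.sales.fact_sales CLUSTER BY AUTO;

-- Manual equivalents of the foundational Delta Lake optimizations:
-- compact files and collect column statistics for the optimizer.
OPTIMIZE main.sales.fact_sales;
ANALYZE TABLE main.sales.fact_sales COMPUTE STATISTICS FOR ALL COLUMNS;
```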

Myth #7: “Medallion architecture is required”

Fact: Medallion is a guideline, not a rigid requirement

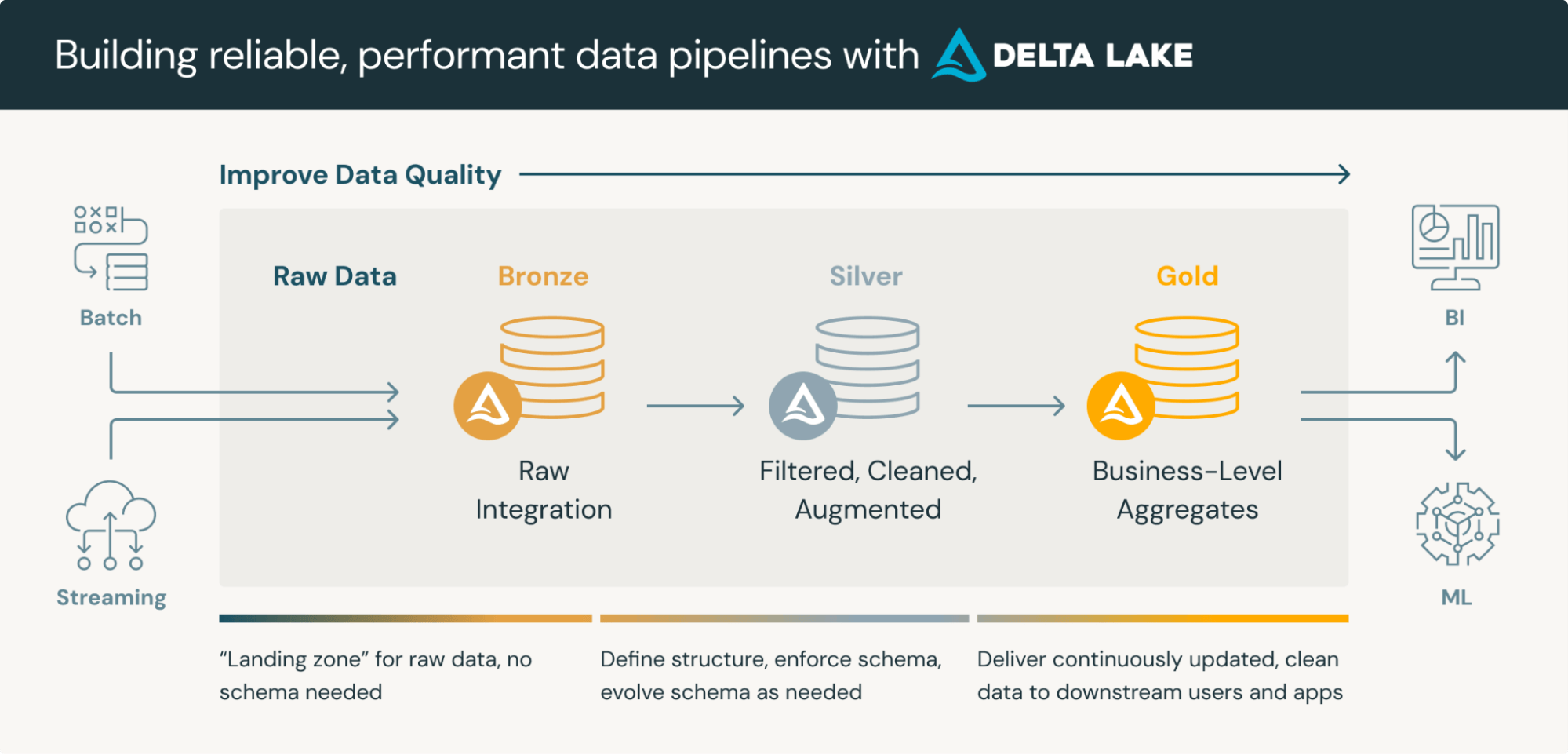

So, what is a medallion architecture? A medallion architecture is a data design pattern used to logically organize data in a lakehouse, with the goal of incrementally and progressively improving the structure and quality of data as it flows through each layer of the architecture (from Bronze ⇒ Silver ⇒ Gold layer tables). While the medallion architecture, also referred to as a “multi-hop” architecture, provides an excellent framework for organizing data in a lakehouse, it’s essential to understand that it is a reference architecture, not a mandatory structure. The key to modeling on Databricks is to maintain flexibility while modeling real-world complexity, which may mean adding or even removing layers of the medallion architecture as needed.
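As a small illustration of the Bronze ⇒ Silver ⇒ Gold flow, the sketch below refines a hypothetical raw table in two hops using full-refresh `CREATE OR REPLACE TABLE … AS SELECT` statements. All schema, table, and column names are assumptions, and production pipelines would more likely process data incrementally.

```sql
-- Silver: deduplicated, typed, and filtered rows from a hypothetical raw Bronze table.
CREATE OR REPLACE TABLE main.silver.orders AS
SELECT DISTINCT
  CAST(order_id AS BIGINT)       AS order_id,
  CAST(order_ts AS TIMESTAMP)    AS order_ts,
  CAST(amount AS DECIMAL(18, 2)) AS amount
FROM main.bronze.orders_raw
WHERE order_id IS NOT NULL;

-- Gold: business-level aggregate ready for BI consumption.
CREATE OR REPLACE TABLE main.gold.daily_revenue AS
SELECT
  CAST(order_ts AS DATE) AS order_date,
  SUM(amount)            AS revenue
FROM main.silver.orders
GROUP BY CAST(order_ts AS DATE);
```

Nothing in the platform requires exactly these three layers; a team could skip Silver for a simple feed or add further layers where a domain calls for them.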

Many successful Databricks implementations even combine modeling approaches. Databricks supports a myriad of Hybrid Modeling Approaches that accommodate Data Vault, star schemas, snowflake schemas, or Domain-Specific Layers to handle industry-specific data models (e.g., healthcare, financial services, retail).

The key is to use medallion architecture as a starting point and adapt it to your specific organizational needs while maintaining the core principles of progressive data refinement and quality improvement. Many organizational factors influence your Lakehouse Architecture, and the implementation should come after careful consideration of:

- Company size and complexity – larger organizations often need more layers

- Regulatory requirements – compliance needs may dictate additional controls

- Usage patterns – real-time vs. batch analytics affect layer design

- Team structure – data engineering vs. analytics team boundaries

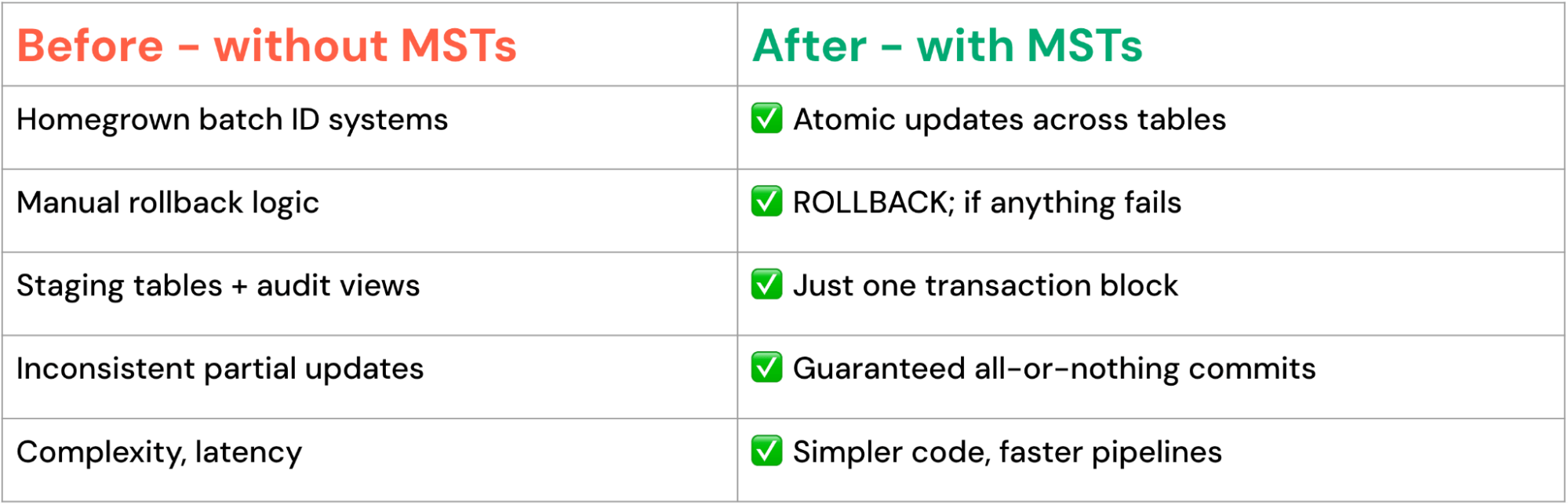

BONUS Myth #8: “Databricks doesn’t support multi-statement transactions”

Fact: Advanced transaction capabilities are now available

One of the capability gaps between traditional data warehouses and lakehouse platforms has been multi-table, multi-statement transaction support. This changed with the announcement of Multi-Statement Transactions (MSTs) at Data + AI Summit 2025. With the addition of MSTs, now in Private Preview, Databricks provides:

- Multi-format transactions across Delta Lake and Apache Iceberg™ tables

- Multi-table atomicity that ensures all-or-nothing semantics

- Multi-statement consistency with full rollback capabilities

- Cross-catalog transactions spanning different data sources

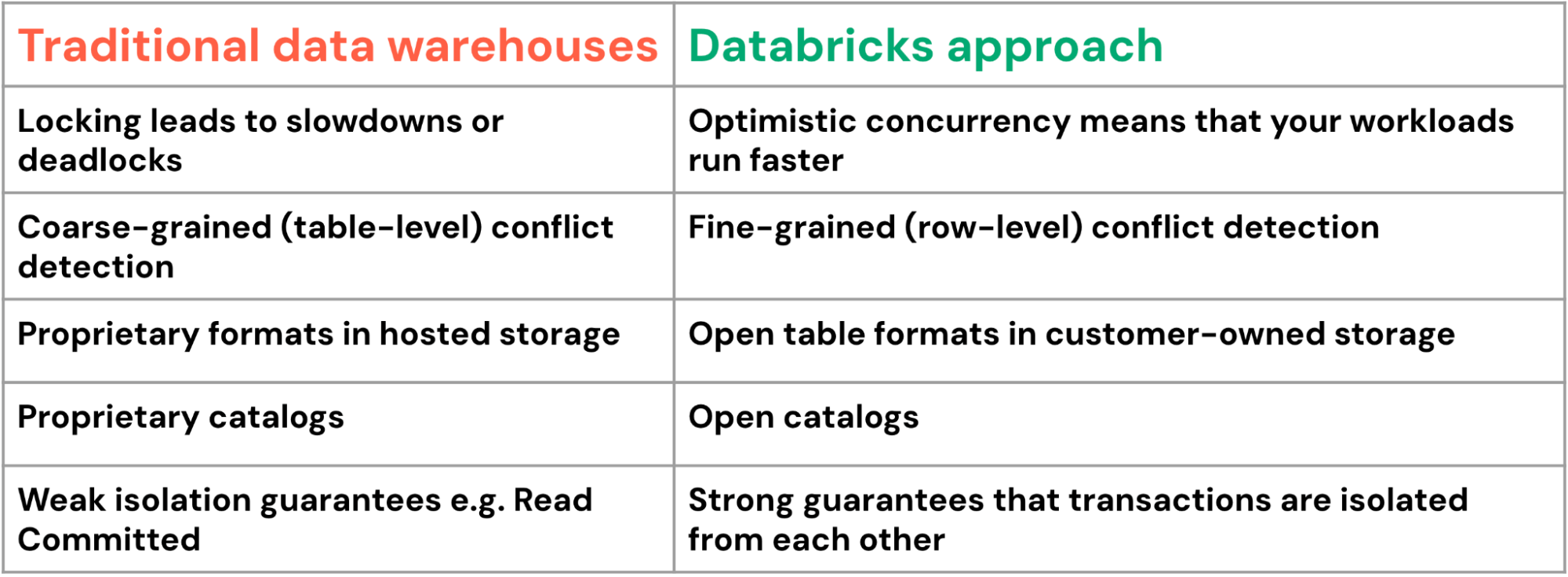

Databricks’ approach offers significant advantages compared to its traditional data warehouse counterparts.

Multi-statement transactions are compelling for complex business processes like supply chain management, where updates to hundreds of related tables must maintain perfect consistency. They enable powerful patterns:

- Consistent multi-table updates

- Complex data pipeline orchestration

Conclusion: Embracing the modern data warehouse

Technological advancements and real-world implementations have thoroughly debunked the myths surrounding Databricks’ data warehousing capabilities. The platform not only supports traditional data warehousing concepts but also enhances them with modern capabilities that address the limitations of legacy systems.

For organizations evaluating or implementing Databricks for data warehousing:

- Start with proven patterns: Implement dimensional models and relational principles that your team understands

- Leverage modern optimizations: Use Liquid Clustering, Predictive Optimization, and Unity Catalog Metrics for superior performance

- Design for scalability: Build data models that can grow with your organization and adapt to changing requirements

- Embrace governance: Implement comprehensive access controls and lineage tracking from day one

- Plan for AI integration: Design your data warehouse to support future AI and machine learning initiatives

The Databricks Lakehouse represents the next evolution of data warehousing – combining the reliability and performance of traditional approaches with the flexibility and scale required for modern analytics and AI. The myths that once questioned its capabilities have been replaced by proven results and continuous innovation.

As we move forward into an increasingly AI-driven future, organizations that embrace the Lakehouse architecture will find themselves better positioned to extract value from their data, respond to changing business requirements, and deliver innovative analytics solutions that drive competitive advantage.

The question isn’t whether the Lakehouse can replace traditional data warehouses; it’s how quickly you can begin realizing its benefits for enterprise data management.

The Lakehouse architecture combines openness, flexibility, and full transactional reliability, a combination that legacy data warehouses struggle to achieve. From medallion to domain-specific models, and from single-table updates to multi-statement transactions, Databricks provides a foundation that grows with your business.

Ready to transform your data warehouse? The best data warehouse is a lakehouse! To learn more about Databricks SQL, take a product tour. Visit databricks.com/sql to explore Databricks SQL and see how organizations worldwide are revolutionizing their data platforms.