Over time, organizations have invested in building purpose-built cloud-based data warehouses that are siloed from one another. One of the major challenges these organizations face today is enabling cross-organization discovery of and access to data across these siloed data warehouses, which are built using different technology stacks. The data mesh pattern addresses these issues. It is founded on four principles: domain-oriented decentralized data ownership and architecture, treating data as a product, providing self-serve data infrastructure as a platform, and implementing federated governance. The data mesh pattern helps organizations map their organizational structure onto data domains and makes it possible to share data across the organization and beyond to improve their business models.

In 2019, Volkswagen AG and Amazon Web Services (AWS) began their collaboration to co-develop the Digital Production Platform (DPP), with the goal of improving production and logistics efficiency by 30% while reducing production costs by the same margin. The DPP was developed to streamline access to data from shop floor devices and manufacturing systems by handling integrations and providing a range of standardized interfaces. However, as applications and use cases evolved on the platform, a significant challenge emerged: sharing data across applications stored in isolated data warehouses (in Amazon Redshift, in isolated AWS accounts designated for specific use cases) without consolidating the data into a central data warehouse. Another challenge was discovering all the available data stored across multiple data warehouses and facilitating a workflow to request access to data across business domains within each plant. The common method was largely manual, relying on emails and tickets. The manual approach not only increased overhead but also varied from one use case to another in terms of data governance.

In this post, we introduce Amazon DataZone and explore how Volkswagen used Amazon DataZone to build their data mesh, address the challenges encountered, and break down data silos. A key aspect of the solution was enabling data providers to automatically publish their data products to Amazon DataZone, which serves as a central data mesh for enhanced data discoverability. Additionally, we provide code to guide you through the deployment and implementation process.

Introduction to Amazon DataZone

Amazon DataZone is a data management service that makes it faster and easier to catalog, discover, share, and govern data stored across AWS, on-premises, and third-party sources. Key features of Amazon DataZone include the business data catalog, with which users can search for published data, request access, and start working on data in days instead of weeks. In addition, the service facilitates collaboration across teams and helps them manage and monitor data assets across different organizational units. The service also includes the Amazon DataZone portal, which offers a personalized analytics experience for data assets through a web-based application or API. Finally, Amazon DataZone offers governed data sharing, which makes sure the right data is accessed by the right user for the right purpose with a governed workflow.

Solution overview

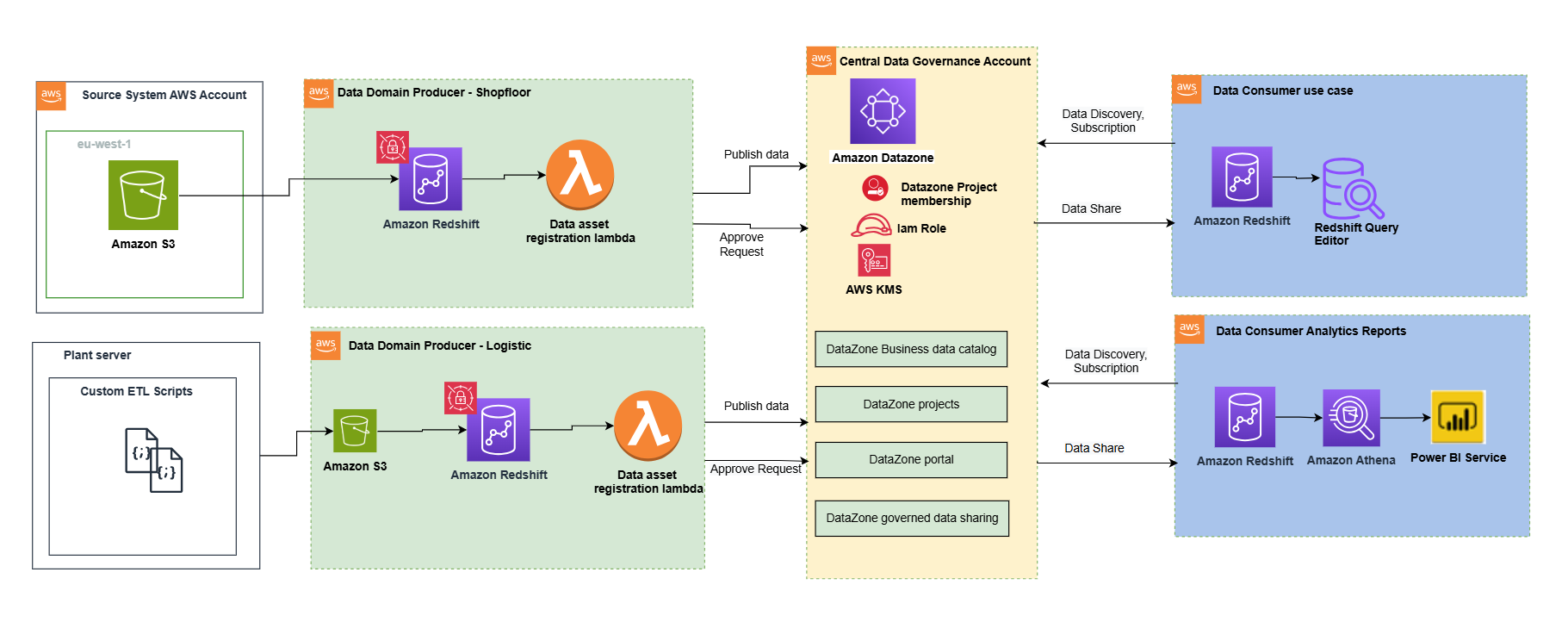

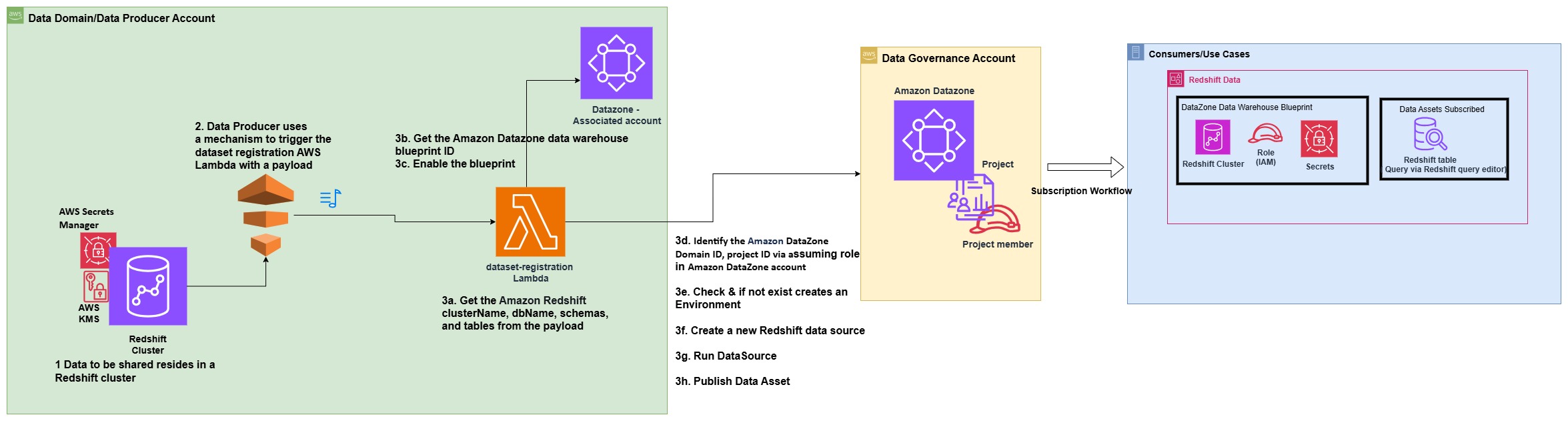

The following architecture diagram represents a high-level design built on top of the data mesh pattern. It separates source systems, data domain producers (data publishers), data domain subscribers (data consumers), and central governance to highlight the key aspects. This data mesh architecture is specifically tailored for cross-AWS account usage. The objective of this approach is to create a foundation for building data governance at scale, supporting the goals of data producers and consumers with strong and consistent governance.

This architecture allows for the integration of multiple data warehouses into a centralized governance account that stores all the metadata from each environment.

A data domain producer uses Amazon Redshift as their analytical data warehouse to store, process, and manage structured and semi-structured data. The data domain producers load data into their respective Amazon Redshift clusters through extract, transform, and load (ETL) pipelines they manage, own, and operate. The producers maintain control over their data through Amazon Redshift security features, including column-level access controls and dynamic data masking, supporting data governance at the source. A data domain producer uses Amazon Redshift ETL and Amazon Redshift Spectrum to process and transform raw data into consumable data products. The data products can be Amazon Redshift tables, views, or materialized views.

Data domain producers expose datasets to the rest of the organization by registering them with Amazon DataZone, which acts as a central data catalog. They can choose which data assets to share, for how long, and how consumers can interact with them. They're also responsible for maintaining the data and making sure it's accurate and current.

The data assets from the producers are then published to Amazon DataZone in the central governance account using a data source run. This process populates the technical metadata into the business data catalog for each data asset. Business users (data analysts) can add business metadata to provide business context, tags, and data classification for the datasets. This approach provides the features producers need to create catalog entries from all their data warehouses built on Redshift clusters. In addition, the central data governance account is used to share datasets securely between producers and consumers. It's important to note that sharing is done through metadata linking alone. No data (except logs) exists in the governance account. The data isn't copied to the central account; only a reference to the data is used, so data ownership remains with the producer.

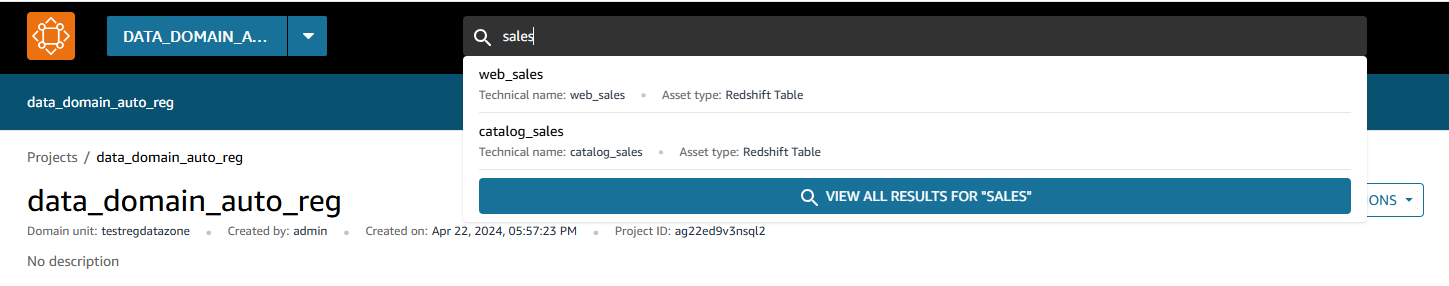

Amazon DataZone provides a streamlined way to search for data. The Amazon DataZone data portal provides a personalized view for users to discover and search data assets. An Amazon DataZone user (consumer) with permissions to access the data portal can search for assets and submit subscription requests for data assets using a web-based application. An approver can then approve or reject the subscription request.

When a data domain consumer has access to an asset in the catalog, they can consume it (query and analyze) using the Amazon Redshift query editor. Each consumer runs their own workload based on their use case. In this way, the team can choose the right tools for the job to perform analytics and machine learning activities in its AWS consumer environment.

Publishing and registering data assets to Amazon DataZone

To publish a data asset from the producer account, each asset must be registered in Amazon DataZone for consumer subscription. For more information, refer to Create and run an Amazon DataZone data source for Amazon Redshift. In the absence of an automated registration process, the required tasks must be completed manually for each data asset.

With the automated registration workflow, the manual steps can be automated for any Amazon Redshift data asset (Redshift table or view) that needs to be published in an Amazon DataZone domain, or when there's a schema change in an already published data asset.

The following architecture diagram represents how data assets from Amazon Redshift data warehouses are automatically published to the data mesh created with Amazon DataZone.

The process consists of the following steps:

- In the producer account (Account B), the data to be shared resides in a Redshift cluster.

- The producer account (Account B) uses a mechanism to trigger the dataset registration AWS Lambda function with a specific payload containing the names of the database, schema, table, or view that has a change in metadata.

- The Lambda function performs the following steps to automatically register and publish the dataset in Amazon DataZone:

  - Get the Amazon Redshift clusterName, dbName, schemas, and tables from the JSON payload, which is used as the event to trigger the Lambda function.

  - Get the Amazon DataZone data warehouse blueprint ID.

  - Enable the blueprint in the data producer account.

  - Identify the Amazon DataZone domain ID and project ID for the producer by assuming an IAM role in the Amazon DataZone account (Account A).

  - Check whether an environment already exists in the project. If not, create an environment.

  - Create a new Redshift data source by providing the correct Redshift database information in the newly created environment.

  - Initiate a data source run request in the data source to make the Redshift tables or views available in Amazon DataZone.

  - Publish the tables or views in the Amazon DataZone catalog.
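The ordering of these steps can be sketched in plain Python. This is not the Lambda function from the repository and it does not call the Amazon DataZone API: `FakeCatalog`, its methods, and the event layout (`databases`, `schemaName`) are hypothetical stand-ins, used only to show the control flow of reusing an existing environment, creating one data source per database, and starting a run for each.

```python
from dataclasses import dataclass, field


@dataclass
class FakeCatalog:
    """Hypothetical in-memory stand-in for the governance account (Account A).

    It only records what was asked of it; it is not the Amazon DataZone API.
    """
    environments: set = field(default_factory=set)
    data_sources: list = field(default_factory=list)
    runs: list = field(default_factory=list)

    def ensure_environment(self, name: str) -> bool:
        """Create the environment if it is missing; return True if it was created."""
        if name in self.environments:
            return False
        self.environments.add(name)
        return True

    def create_data_source(self, environment: str, cluster: str,
                           database: str, schemas: list) -> str:
        """Record a data source for one database and return its name."""
        source_name = f"{cluster}-{database}"
        self.data_sources.append((environment, source_name, tuple(schemas)))
        return source_name

    def start_run(self, source_name: str) -> None:
        """Record a data source run request."""
        self.runs.append(source_name)


def register_datasets(event: dict, catalog: FakeCatalog, environment: str) -> list:
    """Register one data source per database listed in the event (assumed layout)."""
    cluster = event["clusterName"]
    catalog.ensure_environment(environment)  # no-op when it already exists
    started = []
    for database in event["databases"]:
        schemas = [schema["schemaName"] for schema in database["schemas"]]
        source = catalog.create_data_source(
            environment, cluster, database["dbName"], schemas
        )
        catalog.start_run(source)  # the run is what surfaces tables and views
        started.append(source)
    return started
```

Keeping the environment check idempotent means the same event can be replayed after a schema change without creating a second environment.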

Prerequisites

The following prerequisites are required before starting:

- Two AWS accounts to implement the solution described in this post. However, you can also use Amazon DataZone to publish data within a single account or across multiple accounts.

  - Amazon DataZone account (Account A) – This is the central data governance account, which will have the Amazon DataZone domain and project.

  - Data domain producer account (Account B) – This account acts as the data domain producer. It has been added as an associated account to Account A.

Prerequisites in data domain producer account (Account B)

As part of this post, we want to publish assets from, and subscribe to assets in, a Redshift cluster that already exists. Complete the following prerequisite steps to set up Account B:

- Set up the Redshift cluster, including database, schema, tables, and views (optional). The node type must be from the RA3 family. For more information, see Amazon Redshift provisioned clusters.

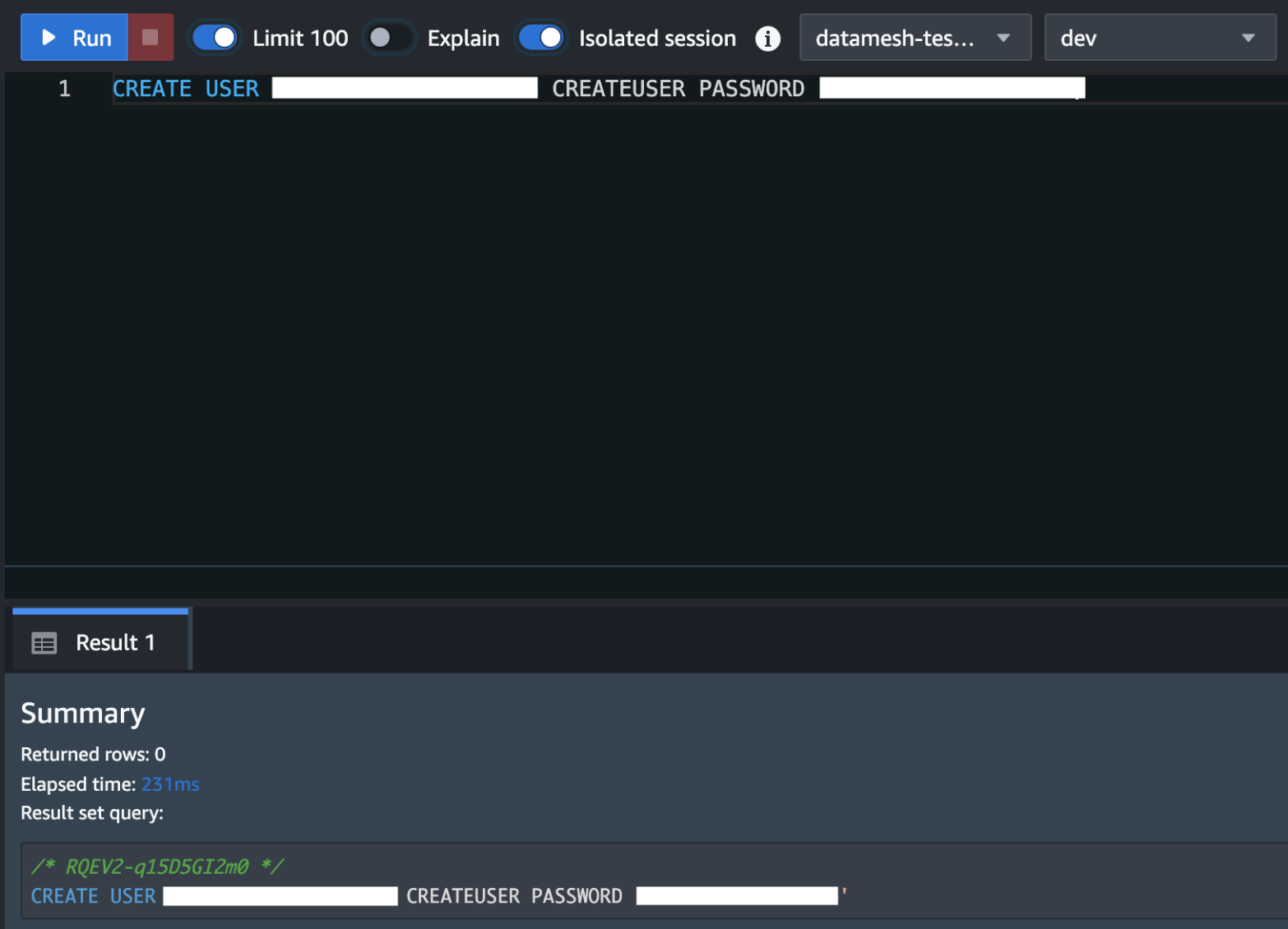

- Create a superuser in Amazon Redshift for Amazon DataZone. For the Redshift cluster, the database user you provide in AWS Secrets Manager must have superuser permissions. For reference, see the note section in the quickstart guide with sample Amazon Redshift data.

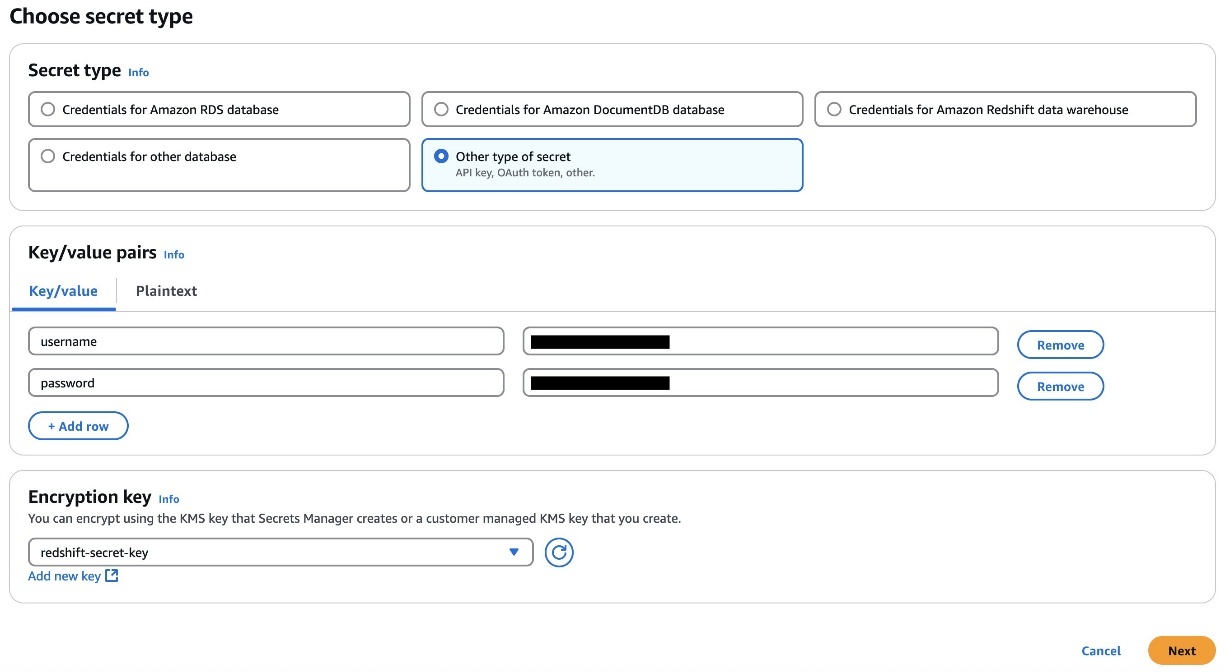

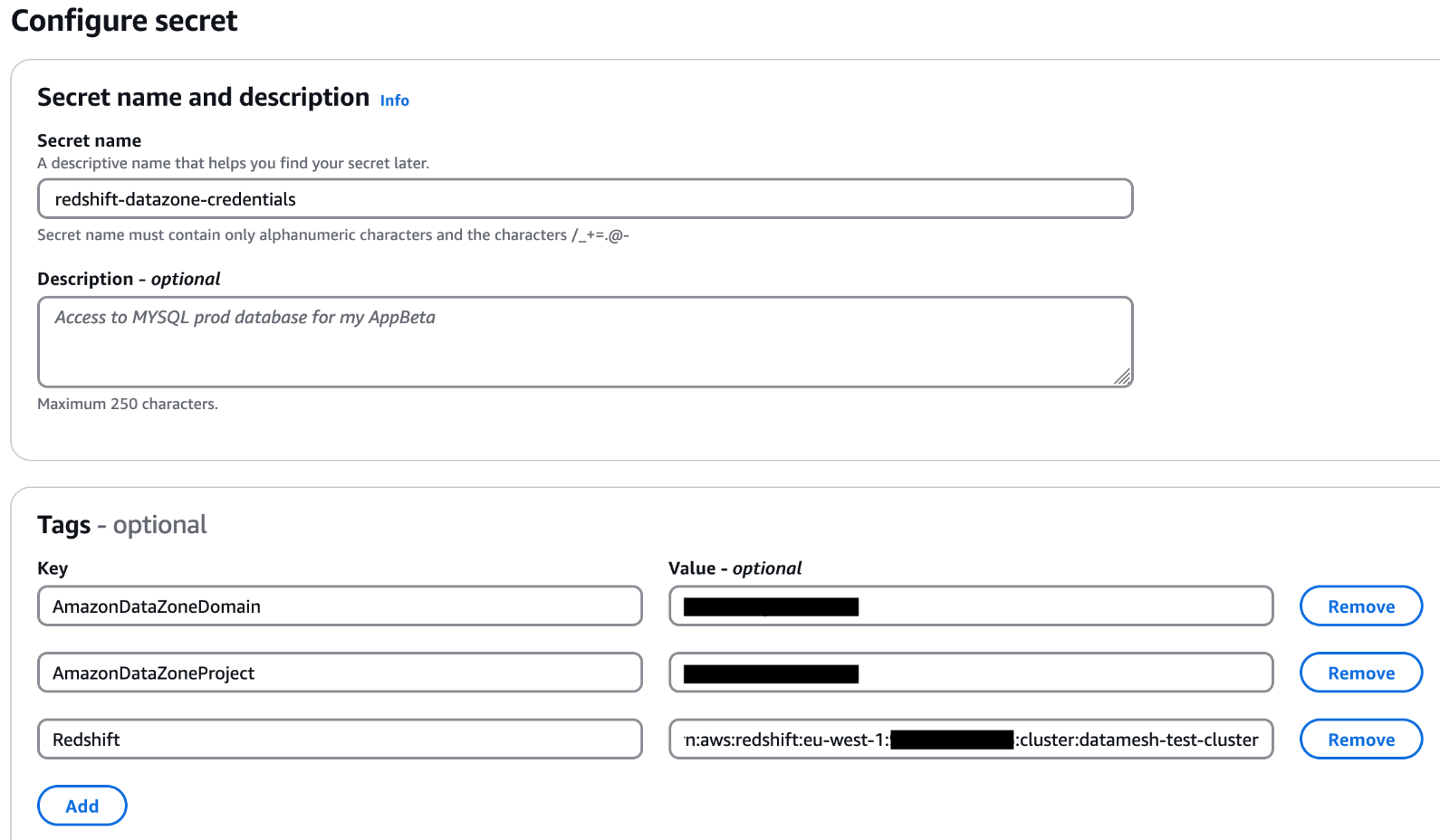

- Retailer the consumer’s credentials in Secrets and techniques Supervisor. Choose the credential sort, enter the credential values, and select the AWS Key Administration Service (AWS KMS) key with which to encrypt the key.

- Add the tags to the Secret Supervisor secret to permit Amazon DataZone to seek out this secret and restrict the entry to a specific Amazon DataZone area and Amazon DataZone undertaking. The Redshift cluster Amazon Useful resource Title (ARN) have to be added as a tag so it may be utilized by Amazon Redshift as a legitimate credential. For reference please see the notice part on this QuickStart information with pattern Amazon Redshift information

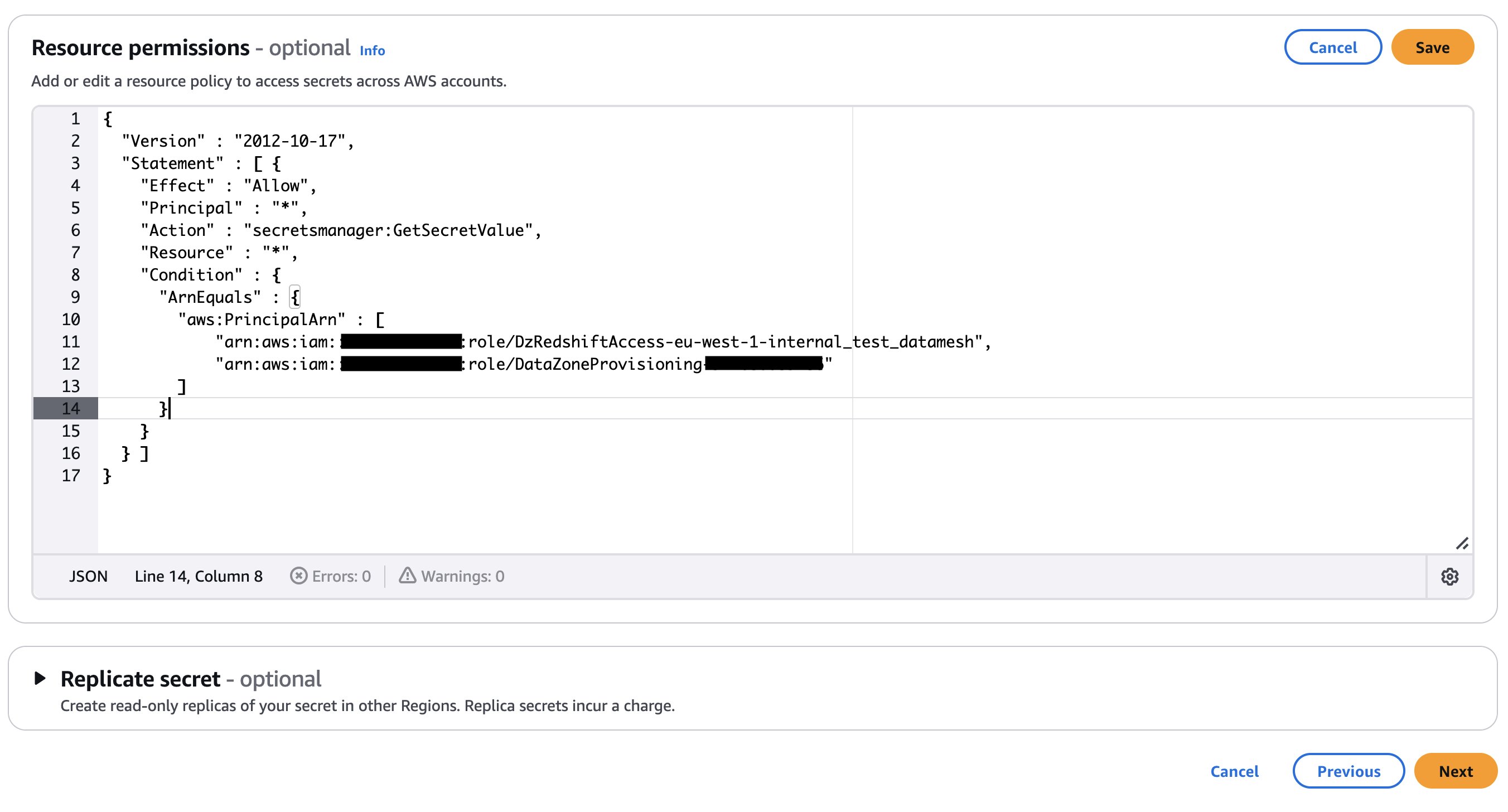

- Add an Amazon DataZone provisioning IAM position and Amazon Redshift handle entry IAM position within the secret’s useful resource coverage. The AWS Identification and Entry Administration (IAM) roles are created as a part of the AWS Cloud Improvement Package (AWS CDK) deployment (mentioned later on this submit). The next code exhibits an instance of the Secrets and techniques Supervisor secret’s useful resource coverage. Retailer the key ARN in an AWS Programs Supervisor parameter.

- If your secret is encrypted with a custom KMS key, append the key policy with the following statement and add a tag to the key: `AmazonDatazoneEnvironment = All`. You can skip this step if you're using an AWS managed KMS key.

- Put a mechanism in place to generate the following payload to trigger the dataset registration Lambda function. The payload must contain the relevant Redshift database, schema, and table or view that you want to publish in the Amazon DataZone domain. The following example code assumes you have three databases in your Redshift cluster and, within these databases, different schemas, tables, and views. Adjust the payload based on your use case.
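As a minimal sketch of such a mechanism, the following Python function assembles and checks a payload before it is sent to the Lambda function. The key names `clusterName` and `dbName` follow the terms used in this post; the nesting (`databases`, `schemas`, `schemaName`, `tables`) and every example name are assumptions for illustration and may differ from the schema the repository's Lambda function expects.

```python
import json


def build_payload(cluster_name: str, databases: dict) -> str:
    """Build a registration payload as a JSON string.

    `databases` maps database name -> {schema name -> list of tables or views}.
    The key layout is an assumption for illustration, not the repository's schema.
    """
    if not cluster_name:
        raise ValueError("clusterName must not be empty")
    entries = []
    for db_name, schemas in databases.items():
        if not schemas:
            raise ValueError(f"database {db_name!r} lists no schemas")
        entries.append({
            "dbName": db_name,
            "schemas": [
                # Sort table names so the payload is stable across runs.
                {"schemaName": schema, "tables": sorted(tables)}
                for schema, tables in schemas.items()
            ],
        })
    return json.dumps({"clusterName": cluster_name, "databases": entries})


# Hypothetical cluster, database, schema, and table names throughout.
payload = build_payload(
    "example-cluster",
    {
        "db_one": {"public": ["orders", "customers"]},
        "db_two": {"staging": ["events_view"]},
        "db_three": {"reporting": ["daily_summary"], "public": ["plants"]},
    },
)
```

Validating the payload on the producer side catches an empty cluster name or a database without schemas before the Lambda function is invoked.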

Prerequisites in Amazon DataZone account (Account A)

Complete the following steps to set up your Amazon DataZone account (Account A):

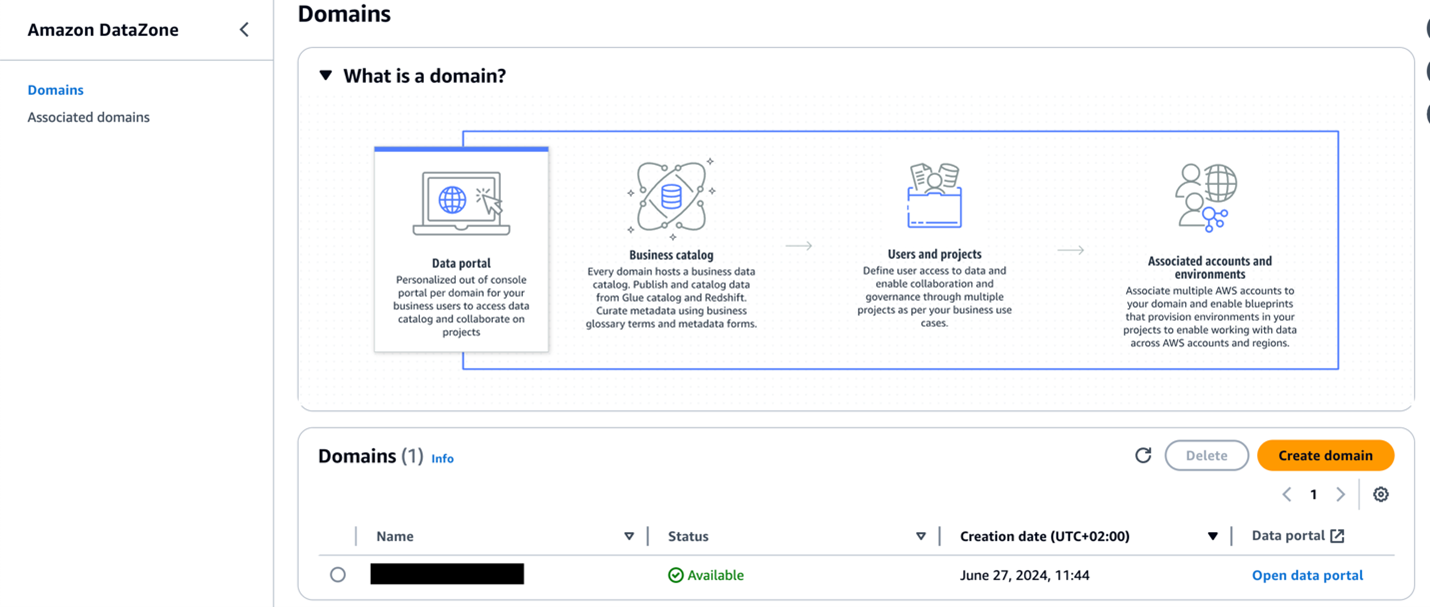

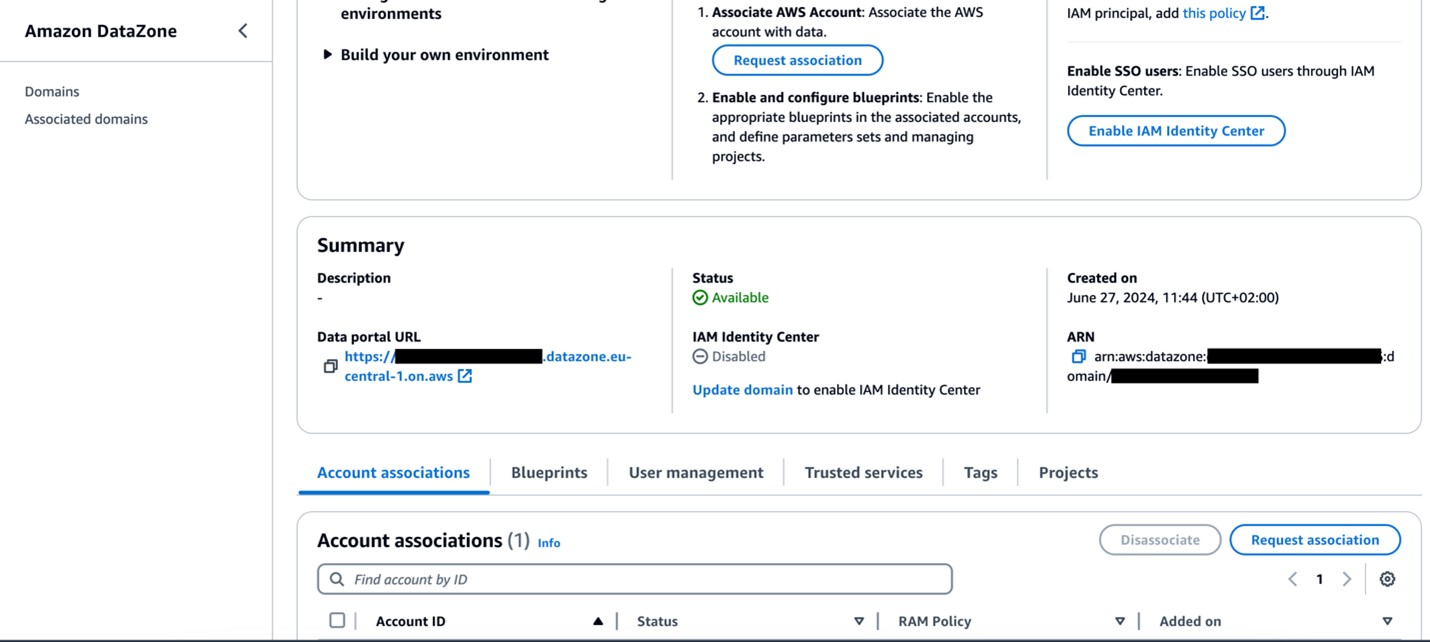

- Sign in to Account A and make sure you have already deployed an Amazon DataZone domain and a project within that domain. Refer to Create Amazon DataZone domains for instructions to create a domain.

- If your Amazon DataZone domain is encrypted with a KMS key, add the data domain account (Account B) to the KMS key policy with the following actions:

- Create an IAM role that is assumable by Account B, and make sure the role has the following policy attached and is a member (as contributor) of your Amazon DataZone project. For this post, we call the role `dz-assumable-env-dataset-registration-role`. Adding this role allows the registration Lambda function to run successfully.

- In the following policy, provide the AWS Region and account ID corresponding to where your Amazon DataZone domain is created, and the KMS key ARN used to encrypt the domain:

- Add Account B to the trust relationship of this role with the following trust relationship:

- Add the role as a member of the Amazon DataZone project in which you want to register your data sources. For more information, see Add members to a project.
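The trust relationship for the assumable role can be generated rather than typed by hand. The following is a minimal sketch using the standard IAM trust policy layout. The account ID is a placeholder, and trusting the account root principal of Account B is an assumption; the policy used in the original solution may name a narrower principal.

```python
import json


def build_trust_policy(producer_account_id: str) -> dict:
    """Return an IAM trust policy that lets principals in Account B assume the role."""
    if not (producer_account_id.isdigit() and len(producer_account_id) == 12):
        raise ValueError("AWS account IDs are 12 digits")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Assumption: trust the whole producer account (Account B).
                "Principal": {"AWS": f"arn:aws:iam::{producer_account_id}:root"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


# Placeholder account ID for Account B.
print(json.dumps(build_trust_policy("111122223333"), indent=2))
```

Trusting the account root delegates the decision to IAM policies in Account B. To tighten this, the principal could instead be the ARN of the Lambda function's execution role once that role exists.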

Additional tools

The following tools are needed to deploy the solution using the AWS CDK:

Deploy the solution

After you complete the prerequisites, use the AWS CDK stack provided in the GitHub repo to deploy the solution for automatic registration of data assets into the Amazon DataZone domain. Complete the following steps:

- Clone the repository from GitHub to your preferred integrated development environment (IDE) using the following commands:

- At the base of the repository folder, run the following commands to build and deploy resources to AWS:

- Sign in to Account B (the data domain producer account) using the AWS CLI with your profile name.

- Make sure you have configured the Region in your credentials configuration file.

- Bootstrap the AWS CDK environment with the following commands at the base of the repository folder. Provide the profile name of your deployment account (Account B). Bootstrapping is a one-time activity and isn't needed if your AWS account is already bootstrapped.

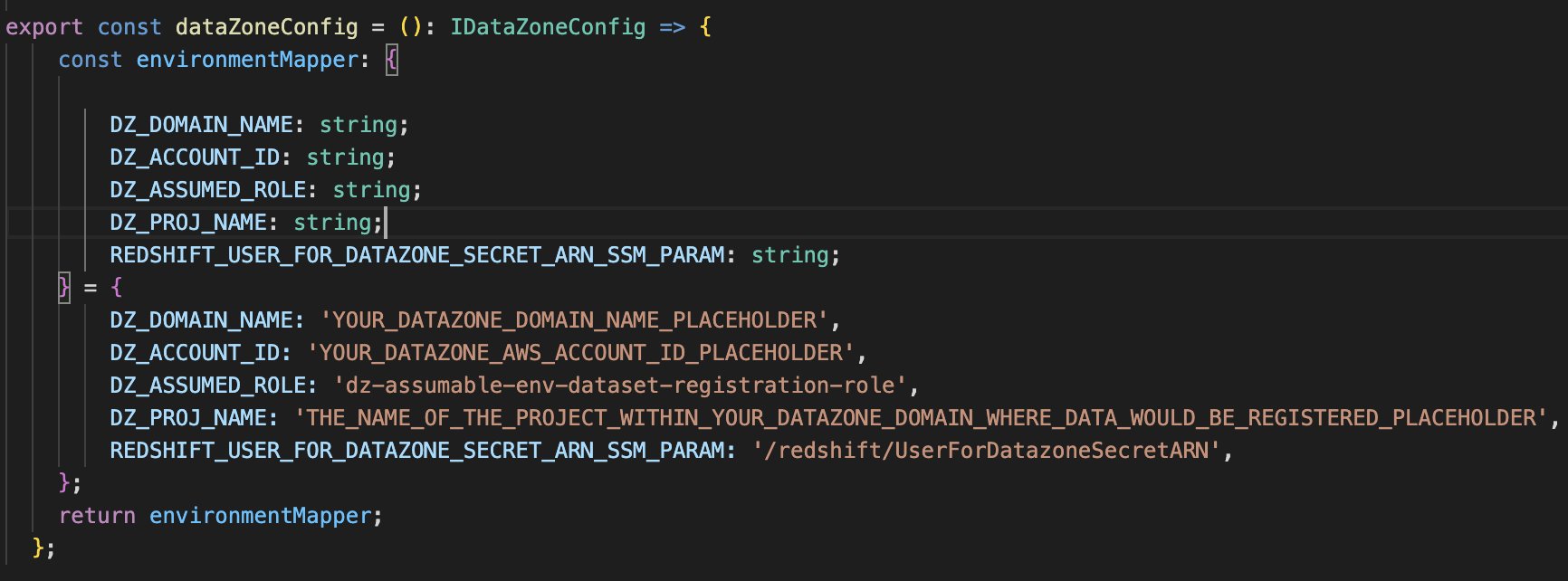

- Replace the placeholder parameters (marked with the suffix `_PLACEHOLDER`) in the file `config/DataZoneConfig.ts`:

  - The Amazon DataZone domain and project name of your Amazon DataZone instance. Make sure all names are in lowercase.

  - The AWS account ID of the Amazon DataZone account (Account A).

  - The assumable IAM role from the prerequisites.

  - The AWS Systems Manager parameter name containing the Secrets Manager secret ARN of the Amazon Redshift credentials.

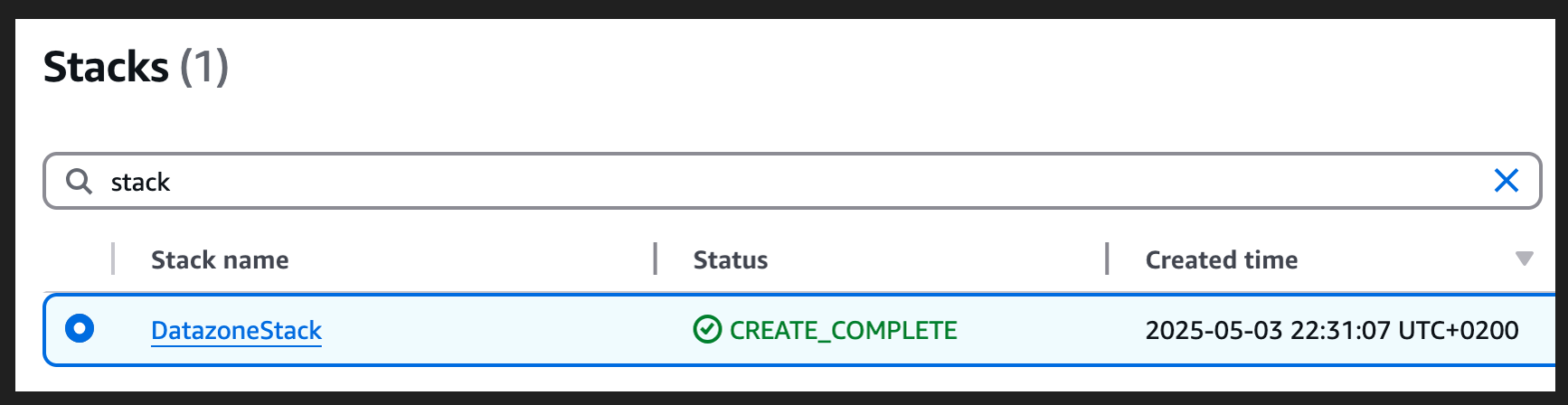

- Use the following command in the base folder to deploy the AWS CDK solution. During deployment, enter `y` if you want to deploy the changes for some stacks when you see the prompt `Do you wish to deploy these changes (y/n)?`

- After the deployment is complete, sign in to Account B and open the AWS CloudFormation console to verify that the infrastructure was deployed.

Test automatic data registration to Amazon DataZone

Complete the following steps to test the solution:

- Sign in to Account B (producer account).

- On the Lambda console, open the `datazone-redshift-dataset-registration` function.

- Under TEST EVENTS, choose Create new test event.

- For Event name, enter `Redshift`, and for Event JSON, enter the following JSON structure (change the cluster, schema, database, and table names according to your environment):

- Choose Save.

- Choose Invoke.

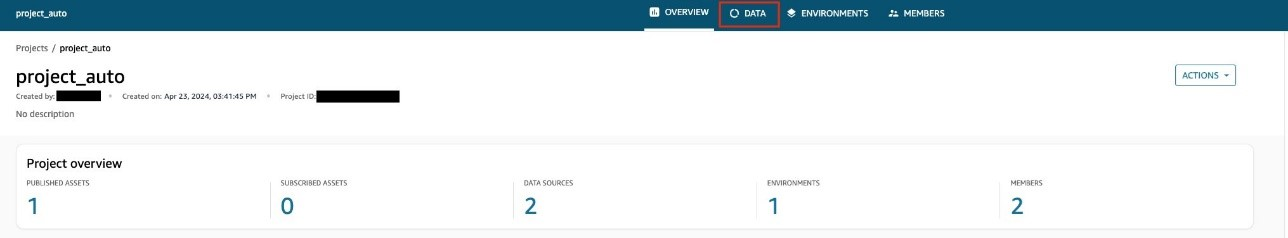

- Open the Amazon DataZone console in Account A where you deployed the resources.

- Choose Domains in the navigation pane, then open your domain.

- On the domain details page, locate the Amazon DataZone data portal URL in the Summary section. Choose the link to the data portal.

For more details about accessing Amazon DataZone, refer to How can I access Amazon DataZone?

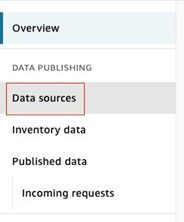

- In the data portal, open your project and choose the Data tab.

- In the navigation pane, choose Data sources and find the newly created data source for Amazon Redshift.

- Verify that the data source has been successfully published.

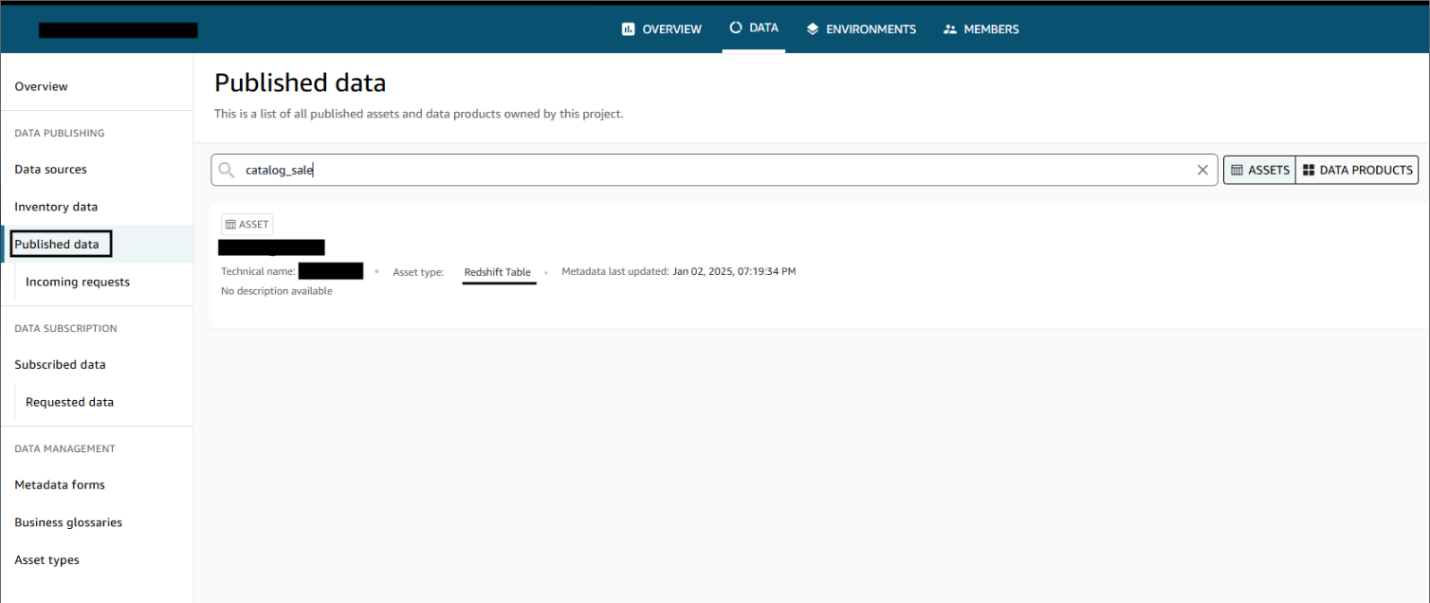

After the data sources are published, users can discover the published data and submit a subscription request. The data producer can approve or reject requests. Upon approval, users can consume the data by querying it in the Amazon Redshift query editor. The following screenshot illustrates data discovery in the Amazon DataZone data portal.

Clean up

Complete the following steps to clean up the resources deployed through the AWS CDK:

- Sign in to Account B, go to the Amazon DataZone domain portal, and check that there is no subscription for your published data asset. If there is a subscription, either ask the subscriber to unsubscribe or revoke the subscription request.

- Delete the published data assets that were created in the Amazon DataZone project by the dataset registration Lambda function.

- Delete the remaining resources using the following command in the base folder:

Conclusion

Amazon DataZone offers seamless integration with AWS services, providing a powerful solution for organizations like Volkswagen to break down their data silos and implement effective data mesh architectures through the straightforward implementation highlighted in this post. By using Amazon DataZone, Volkswagen addressed its immediate data sharing hurdles and laid the groundwork for a more agile, data-driven future in automotive manufacturing. The automated data publishing from various warehouses, coupled with standardized governance workflows, has significantly reduced the manual overhead that once slowed down Volkswagen's data engineering teams. Now, instead of navigating a labyrinth of emails and tickets, Volkswagen's data engineers and data scientists can quickly discover and access the data they need, all while maintaining their security and compliance standards.

By using Amazon DataZone, organizations can bring their isolated data together in ways that make it simpler for teams to collaborate while maintaining security and compliance at scale. This approach not only addresses current data governance challenges but also creates a highly scalable foundation for future data-driven innovations. For guidance on establishing your organization's data mesh with Amazon DataZone, contact your AWS team today.