An Introduction to Time Series Forecasting with Generative AI

Time series forecasting has been a cornerstone of enterprise resource planning for decades. Predictions about future demand guide critical decisions such as the number of units to stock, labor to hire, capital investments into production and fulfillment infrastructure, and the pricing of goods and services. Accurate demand forecasts are essential for these and many other business decisions.

However, forecasts are rarely if ever perfect. In the mid-2010s, many organizations dealing with computational limitations and limited access to advanced forecasting capabilities reported forecast accuracies of only 50-60%. But with the wider adoption of the cloud, the introduction of far more accessible technologies and the improved accessibility of external data sources such as weather and event data, organizations are beginning to see improvements.

As we enter the era of generative AI, a new class of models referred to as time series transformers appears capable of helping organizations deliver even more improvement. Similar to large language models (such as those behind ChatGPT) that excel at predicting the next word in a sentence, time series transformers predict the next value in a numerical sequence. With exposure to large volumes of time series data, these models become experts at picking up on subtle patterns of relationships between the values in these series, with demonstrated success across a variety of domains.

In this blog, we will provide a high-level introduction to this class of forecasting models, intended to help managers, analysts and data scientists develop a basic understanding of how they work. We will then provide access to a series of notebooks built around publicly available datasets demonstrating how organizations housing their data in Databricks may easily tap into several of the most popular of these models for their forecasting needs. We hope that this helps organizations tap into the potential of generative AI for driving better forecast accuracies.

Understanding Time Series Transformers

Generative AI models are a form of deep neural network, a complex machine learning model within which a large number of inputs are combined in a variety of ways to arrive at a predicted value. The mechanics of how the model learns to combine inputs to arrive at an accurate prediction are referred to as a model’s architecture.

The breakthrough in deep neural networks that has given rise to generative AI has been the design of a specialized model architecture called a transformer. While the exact details of how transformers differ from other deep neural network architectures are quite complex, the simple matter is that the transformer is very good at picking up on the complex relationships between values in long sequences.

To train a time series transformer, an appropriately architected deep neural network is exposed to a large volume of time series data. After it has had the opportunity to train on millions if not billions of time series values, it learns the complex patterns of relationships found in these datasets. When it’s then exposed to a previously unseen time series, it can use this foundational knowledge to identify where similar patterns of relationships within the time series exist and predict new values in the sequence.

This process of learning relationships from large volumes of data is referred to as pre-training. Because the knowledge gained by the model during pre-training is highly generalizable, pre-trained models, referred to as foundation models, can be employed against previously unseen time series without additional training. That said, additional training on an organization’s proprietary data, a process referred to as fine-tuning, may in some instances help the organization achieve even better forecast accuracy. Either way, once the model is deemed to be in a satisfactory state, the organization simply needs to present it with a time series and ask, what comes next?
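The "what comes next?" question is usually asked repeatedly: each predicted value is appended to the history and the model is asked again until the desired horizon is reached. The following is a minimal Python sketch of that loop. The `predict_next` function is a hypothetical stand-in (a simple seasonal-naive rule with an assumed season length of 7), not a transformer; in practice a pre-trained foundation model would take its place.

```python
def predict_next(context, season_length=7):
    """Hypothetical stand-in for a model: repeat the value from one season ago."""
    if len(context) >= season_length:
        return context[-season_length]
    return context[-1]


def forecast(context, horizon, predict=predict_next):
    """Ask 'what comes next?' repeatedly, feeding each answer back into the history."""
    history = list(context)  # copy so the caller's data is not modified
    predictions = []
    for _ in range(horizon):
        next_value = predict(history)
        predictions.append(next_value)
        history.append(next_value)  # the prediction becomes part of the context
    return predictions


# One week of illustrative daily values.
history = [1, 2, 3, 4, 5, 6, 7]
predictions = forecast(history, horizon=3)
print(predictions)
```

Swapping in a different `predict` callable is the only change needed to try another forecasting rule inside the same loop.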

Addressing Common Time Series Challenges

While this high-level understanding of a time series transformer may make sense, most forecast practitioners will likely have three immediate questions. First, while two time series may follow a similar pattern, they may operate at completely different scales; how does a transformer overcome that problem? Second, within most time series there are daily, weekly and annual patterns of seasonality that need to be considered; how do models know to look for these patterns? Third, many time series are influenced by external factors; how can this data be incorporated into the forecast generation process?

The first of these challenges is addressed by mathematically standardizing all time series data using a set of techniques referred to as scaling. The mechanics of this are internal to each model’s architecture, but essentially incoming time series values are converted to a standard scale that allows the model to recognize patterns in the data based on its foundational knowledge. Predictions are made on that standard scale and then returned to the scale of the original data.
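As a rough illustration of scaling, the Python sketch below uses mean absolute scaling, which is only one possible technique; the scaling actually used inside any given model may differ. The function names and the placeholder prediction of 1.1 are assumptions made for the example. It shows two series with the same shape but very different magnitudes becoming identical once scaled, and a prediction made on the common scale being returned to each series' original scale.

```python
from statistics import fmean


def mean_abs_scale(values):
    """Divide a series by the mean of its absolute values; return (scaled, scale)."""
    scale = fmean(abs(v) for v in values)
    if scale == 0:
        scale = 1.0  # avoid dividing by zero for an all-zero series
    return [v / scale for v in values], scale


def unscale(values, scale):
    """Return scaled values to the original scale of the data."""
    return [v * scale for v in values]


small = [10.0, 12.0, 8.0, 10.0]          # e.g. units sold at a small store
large = [1000.0, 1200.0, 800.0, 1000.0]  # same pattern at a much larger store

small_scaled, small_scale = mean_abs_scale(small)
large_scaled, large_scale = mean_abs_scale(large)

# A placeholder prediction on the common scale (a real model would produce this).
scaled_prediction = [1.1]

small_prediction = unscale(scaled_prediction, small_scale)
large_prediction = unscale(scaled_prediction, large_scale)
print(small_scaled, large_scaled)
print(small_prediction, large_prediction)
```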

Regarding the seasonal patterns, at the heart of the transformer architecture is a process called self-attention. While this process is quite complex, fundamentally this mechanism allows the model to learn the degree to which specific prior values influence a given future value.
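The core arithmetic of attention can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any particular model is implemented: real transformers learn their query, key and value representations during training and use many attention heads, whereas here the position features (`encode`), the weekly cycle (`SEASON`) and the `sharpness` factor are hand-crafted and hypothetical. The sketch scores each prior step against the step being predicted, turns the scores into weights with a softmax, and forms a weighted average of prior values.

```python
import math

SEASON = 7  # assumed weekly cycle, for illustration only


def encode(t):
    """Hand-crafted feature describing where step t falls in the weekly cycle."""
    angle = 2 * math.pi * t / SEASON
    return (math.sin(angle), math.cos(angle))


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values, sharpness=5.0):
    """Scaled dot-product attention for a single query over prior steps."""
    d = len(query)
    scores = [
        sharpness * sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
        for key in keys
    ]
    weights = softmax(scores)
    output = sum(w * v for w, v in zip(weights, values))
    return weights, output


# Two weeks of illustrative daily values with a spike on the first day of each week.
values = [20, 11, 10, 12, 11, 10, 9, 21, 10, 11, 12, 10, 11, 9]
keys = [encode(t) for t in range(len(values))]
query = encode(len(values))  # the step we want to predict (step 14)

weights, estimate = attend(query, keys, values)

# Indices of the two prior steps that received the most attention.
ranked = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)
top_two = sorted(ranked[:2])
print(top_two, round(estimate, 2))
```

Because step 14 falls on the same day of the week as steps 0 and 7, those two prior values receive the largest weights in this toy setup.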

While that sounds like the solution for seasonality, it’s important to understand that models differ in their ability to pick up on low-level patterns of seasonality based on how they divide time series inputs. Through a process called tokenization, values in a time series are divided into units called tokens. A token may be a single time series value or it may be a short sequence of values (often referred to as a patch).

The size of the token determines the lowest level of granularity at which seasonal patterns can be detected. (Tokenization also defines logic for dealing with missing values.) When exploring a particular model, it’s important to read the sometimes technical information around tokenization to understand whether the model is appropriate for your data.
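To make the difference between single-value tokens and patches concrete, here is a small Python sketch. The `to_patches` function and its front-padding with `None` are assumptions chosen for illustration; actual models define their own patch sizes and their own handling of short or missing inputs.

```python
def to_patches(values, patch_size, pad_value=None):
    """Split a series into consecutive, non-overlapping patches of equal size.

    If the series length is not a multiple of patch_size, the front of the
    series is padded so that the most recent values fill the last patch.
    """
    remainder = len(values) % patch_size
    padding = [pad_value] * ((patch_size - remainder) % patch_size)
    padded = padding + list(values)
    return [padded[i:i + patch_size] for i in range(0, len(padded), patch_size)]


series = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

point_tokens = to_patches(series, patch_size=1)  # one value per token
patch_tokens = to_patches(series, patch_size=4)  # four values per token

print(len(point_tokens), len(patch_tokens))
print(patch_tokens)
```

With a patch size of 4, the model sees three tokens instead of ten, which shortens the sequence it must process but means patterns shorter than a patch are no longer separated across tokens.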

Finally, regarding external variables, time series transformers employ a variety of approaches. In some, models are trained on both time series data and related external variables. In others, models are architected to understand that a single time series may be composed of multiple, parallel, related sequences. Regardless of the precise technique employed, some limited support for external variables can be found with these models.

A Brief Look at Four Popular Time Series Transformers

With a high-level understanding of time series transformers under our belt, let’s take a moment to look at four popular foundation time series transformer models:

Chronos

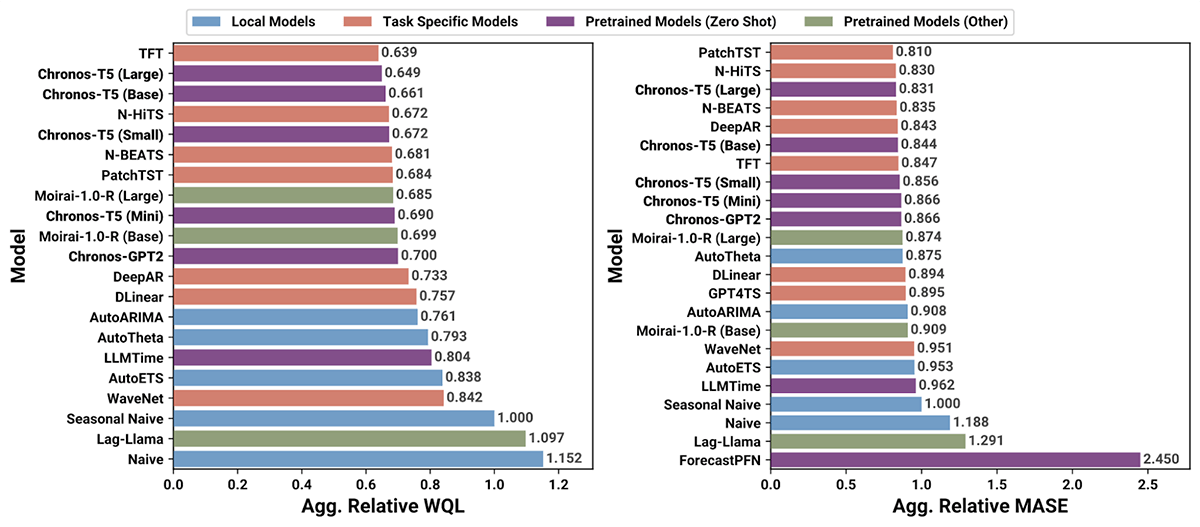

Chronos is a family of open-source, pretrained time series forecasting models from Amazon. These models take a relatively naive approach to forecasting by interpreting a time series as just a specialized language with its own patterns of relationships between tokens. Despite this relatively simplistic approach, which includes support for missing values but not external variables, the Chronos family of models has demonstrated some impressive results as a general-purpose forecasting solution (Figure 1).

Figure 1. Evaluation metrics for Chronos and various other forecasting models applied to 27 benchmarking data sets (from https://github.com/amazon-science/chronos-forecasting)
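One way to picture the idea of treating a time series as a language is to map scaled numeric values onto a fixed vocabulary of bins, so that each value becomes a discrete token ID much like a word. The Python sketch below illustrates that idea only; it is not the Chronos implementation, and the bin range, bin count and function names are hypothetical choices for the example.

```python
from bisect import bisect_right


def make_edges(low, high, num_bins):
    """Interior boundaries of num_bins equal-width bins covering [low, high]."""
    width = (high - low) / num_bins
    return [low + i * width for i in range(1, num_bins)]


def quantize(scaled_values, edges):
    """Map each value to the ID of the bin it falls in (out-of-range values are clipped)."""
    return [bisect_right(edges, v) for v in scaled_values]


def dequantize(token_ids, low, high, num_bins):
    """Map token IDs back to numbers using each bin's midpoint."""
    width = (high - low) / num_bins
    return [low + (t + 0.5) * width for t in token_ids]


LOW, HIGH, NUM_BINS = 0.0, 2.0, 4  # tiny vocabulary, for illustration only
edges = make_edges(LOW, HIGH, NUM_BINS)

scaled_series = [0.2, 0.9, 1.0, 1.7]
tokens = quantize(scaled_series, edges)
recovered = dequantize(tokens, LOW, HIGH, NUM_BINS)

print(tokens)     # token IDs the model would treat like words
print(recovered)  # approximate values recovered from the token IDs
```

The gap between `scaled_series` and `recovered` is the precision lost to binning; a larger vocabulary narrows it at the cost of more tokens for the model to learn.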

TimesFM

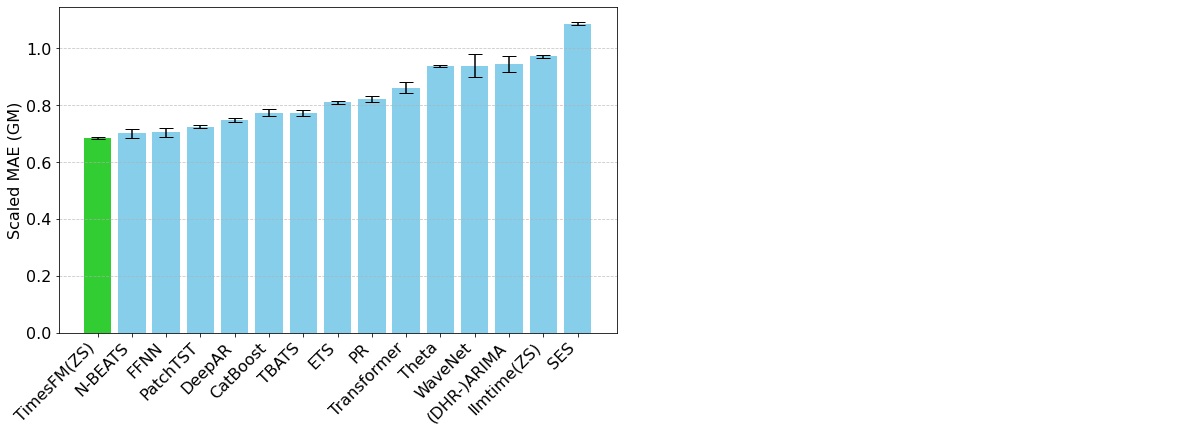

TimesFM is an open-source foundation model developed by Google Research, pre-trained on over 100 billion real-world time series points. Unlike Chronos, TimesFM includes some time series-specific mechanisms in its architecture that enable the user to exert fine-grained control over how inputs and outputs are organized. This has an impact on how seasonal patterns are detected but also on the computation times associated with the model. TimesFM has proven itself to be a very powerful and flexible time series forecasting tool (Figure 2).

Figure 2. Evaluation metrics for TimesFM and various other models against the Monash Forecasting Archive dataset (from https://research.google/blog/a-decoder-only-foundation-model-for-time-series-forecasting/)

Moirai

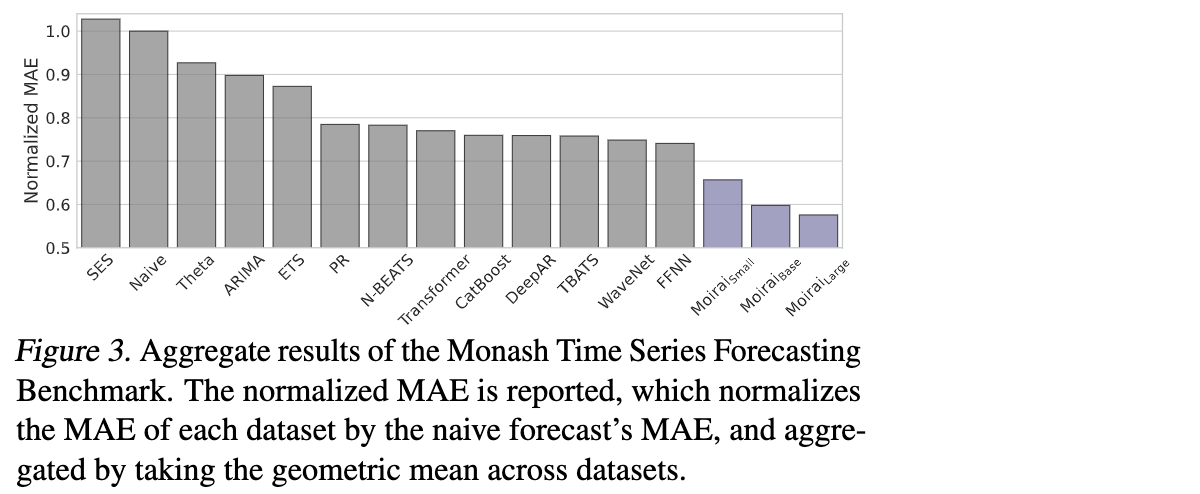

Moirai, developed by Salesforce AI Research, is another open-source foundation model for time series forecasting. Trained on “27 billion observations spanning 9 distinct domains”, Moirai is presented as a universal forecaster capable of supporting both missing values and external variables. Variable patch sizes allow organizations to tune the model to the seasonal patterns in their datasets and, when applied properly, the model has been demonstrated to perform quite well against other models (Figure 3).

Figure 3. Evaluation metrics for Moirai and various other models against the Monash Time Series Forecasting Benchmark (from https://blog.salesforceairesearch.com/moirai/)

TimeGPT

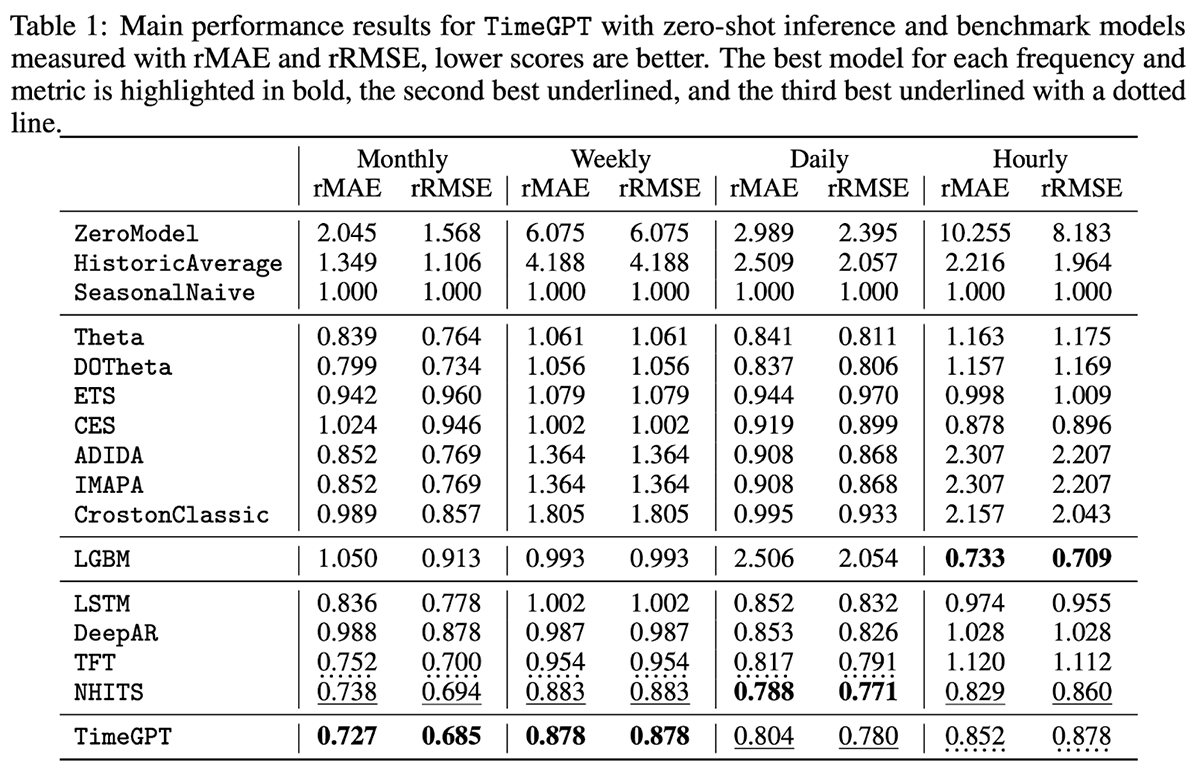

TimeGPT is a proprietary model with support for external (exogenous) variables but not missing values. Focused on ease of use, TimeGPT is hosted through a public API that allows organizations to generate forecasts with as little as a single line of code. In benchmarking the model against 300,000 unique series at different levels of temporal granularity, the model was shown to produce some impressive results with very little forecasting latency (Figure 4).

Figure 4. Evaluation metrics for TimeGPT and various other models against 300,000 unique series (from https://arxiv.org/pdf/2310.03589)

Getting Started with Transformer Forecasting on Databricks

With so many model options and more still on the way, the key question for most organizations is, how do they get started in evaluating these models using their own proprietary data? As with any other forecasting approach, organizations using time series forecasting models must present their historical data to the model to create predictions, and those predictions must be carefully evaluated and eventually deployed to downstream systems to make them actionable.

Because of Databricks’ scalability and efficient use of cloud resources, many organizations have long used it as the basis for their forecasting work, producing tens of millions of forecasts on a daily and even higher frequency to run their business operations. The introduction of a new class of forecasting models doesn’t change the nature of this work; it simply provides these organizations more options for doing it within this environment.

That’s not to say that there aren’t some new wrinkles that come with these models. Built on a deep neural network architecture, many of these models perform best when run on a GPU, and some, such as TimeGPT, may require API calls to an external infrastructure as part of the forecast generation process. But fundamentally, the pattern of housing an organization’s historical time series data, presenting that data to a model and capturing the output to a queryable table remains unchanged.

To help organizations understand how they may use these models within a Databricks environment, we’ve assembled a series of notebooks demonstrating how forecasts can be generated with each of the four models described above. Practitioners may freely download these notebooks and employ them within their Databricks environment to gain familiarity with their use. The code presented may then be adapted to other, similar models, providing organizations using Databricks as the basis for their forecasting efforts additional options for using generative AI in their resource planning processes.

Get started with Databricks for forecasting modeling today with this series of notebooks.