In this post, you'll learn how to use Terraform to automate and streamline your Apache Flink application lifecycle management on Amazon Managed Service for Apache Flink. We'll walk you through the complete lifecycle, including deployment, updates, scaling, and troubleshooting common issues.

Managing Apache Flink applications through their entire lifecycle, from initial deployment to scaling or updating, can be complex and error-prone when done manually. Teams often struggle with inconsistent deployments across environments, difficulty tracking configuration changes over time, and complex rollback procedures when issues arise.

Infrastructure as Code (IaC) addresses these challenges by treating infrastructure configuration as code that can be versioned, tested, and automated. While there are different IaC tools available, including AWS CloudFormation and AWS Cloud Development Kit (AWS CDK), we focus on HashiCorp Terraform to automate the complete lifecycle management of Apache Flink applications on Amazon Managed Service for Apache Flink.

Managed Service for Apache Flink allows you to run Apache Flink jobs at scale without worrying about managing clusters and provisioning resources. You can focus on developing your Apache Flink application using your Integrated Development Environment (IDE) of choice, and on building and packaging the application using standard build and CI/CD tools. Once your application is packaged and uploaded to Amazon S3, you can deploy and run it with a serverless experience.

While you can control your Managed Service for Apache Flink applications directly using the AWS Console, CLI, or SDKs, Terraform provides key advantages such as version control of your application configuration, consistency across environments, and seamless CI/CD integration. This post builds upon our two-part blog series “Deep dive into the Amazon Managed Service for Apache Flink application lifecycle – Part 1” and “Part 2”, which discusses the general lifecycle concepts of Apache Flink applications.

We use the sample code published in the GitHub repository to demonstrate the lifecycle management. Note that this is not a production-ready solution.

Setting up your Terraform environment

Before you can manage your Apache Flink applications with Terraform, you need to set up your execution environment. In this section, we cover how to configure Terraform state management and credential handling. The Terraform AWS provider supports Managed Service for Apache Flink through the aws_kinesisanalyticsv2_application resource (using the legacy name “Kinesis Analytics V2”).

Terraform state management

Terraform uses a state file to track the resources it manages. Storing the state file in Amazon S3 is a best practice for teams working collaboratively because it provides a centralised, durable, and secure location for tracking infrastructure changes. However, since multiple engineers or CI/CD pipelines may run Terraform concurrently, state locking is essential to prevent race conditions where concurrent executions could corrupt the state. An S3 backend is commonly used for both state storage and locking, ensuring that only one Terraform process can modify the state at a time, thus maintaining infrastructure consistency and avoiding deployment conflicts.
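As a minimal sketch of the S3 state setup described above, the backend settings can be supplied when initialising the working directory. This assumes the Terraform configuration declares an empty backend "s3" block (partial configuration) and a Terraform release whose S3 backend supports the use_lockfile setting. The function name, bucket, key, and region are placeholders, not values from the sample repository.

```shell
# Initialise Terraform against an S3 backend with state locking enabled.
# Assumption: the configuration contains `terraform { backend "s3" {} }`.
# Arguments (all hypothetical): bucket name, state object key, AWS region.
init_s3_backend() {
  local bucket="$1" key="$2" region="$3"

  terraform init \
    -backend-config="bucket=${bucket}" \
    -backend-config="key=${key}" \
    -backend-config="region=${region}" \
    -backend-config="use_lockfile=true"
}

# Usage (placeholder values):
#   init_s3_backend my-terraform-state flink/terraform.tfstate eu-west-1
```

Passing the settings on the command line keeps environment-specific values out of the versioned configuration, which is one way to reuse the same code across environments.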

Passing credentials

To run Terraform inside a Docker container while ensuring that it has access to the required AWS credentials and infrastructure code, we follow a structured approach. This process involves exporting AWS credentials, mounting the required directories, and executing Terraform commands inside a Docker container. Let's break this down step by step. Before running Terraform, we need to make sure that our Docker container has access to the required AWS credentials. Since we are using temporary credentials, we generate them using the AWS CLI with the following command:

aws configure export-credentials --profile $AWS_PROFILE --format env-no-export > .env.docker

This command does the following:

- It exports AWS credentials from a specific AWS profile ($AWS_PROFILE).
- The credentials are saved in .env.docker in a format suitable for Docker.
- The --format env-no-export option outputs the credentials as non-exported shell variables.

This file (.env.docker) will later be used to pass credentials into the Docker container.

Running Terraform in Docker

Running Terraform inside a Docker container provides a consistent, portable, and isolated environment for managing infrastructure without requiring Terraform to be installed directly on the local machine. This approach ensures that Terraform runs in a controlled environment, reducing dependency conflicts and improving security. To execute Terraform inside a Docker container, we use a docker run command that mounts the required directories and passes AWS credentials, allowing Terraform to apply infrastructure changes seamlessly.

The Terraform configuration files are stored in a local terraform folder, which is mounted into the container using the -v flag. This allows the containerised Terraform instance to access and modify infrastructure code as if it were running locally.

To run Terraform in Docker, the next command is executed:
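Assembled from the flags explained in this section, the invocation looks roughly like the following sketch. It is wrapped in a shell function whose name, run_terraform_in_docker, is chosen purely for illustration. The sample repository's actual command may mount additional directories.

```shell
# Run build.sh (and therefore Terraform) inside the msf-terraform container.
# Only the flags described in this section are used; treat anything beyond
# them as something to check against the sample repository.
run_terraform_in_docker() {
  docker run \
    --env-file .env.docker \
    --rm -it \
    -v ./terraform:/home/flink-project/terraform \
    -v ./build.sh:/home/flink-project/build.sh \
    msf-terraform \
    bash build.sh apply
}

# Usage: run_terraform_in_docker
```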

Breaking down this command step-by-step:

- --env-file .env.docker provides the AWS credentials required for Terraform to authenticate.
- --rm -it runs the container interactively and removes it after execution to prevent clutter.
- -v ./terraform:/home/flink-project/terraform mounts the Terraform directory into the container, making the configuration files accessible.
- -v ./build.sh:/home/flink-project/build.sh mounts the build.sh script, which contains the logic to build the JAR file for Flink and execute Terraform commands.
- msf-terraform is the Docker image used, which has Terraform pre-installed.
- bash build.sh apply runs the build.sh script inside the container, passing apply as an argument to trigger the Terraform apply process.

Inside the container, build.sh typically includes commands such as terraform init to initialise the Terraform working directory and terraform apply to apply infrastructure changes. Because the Terraform execution happens entirely within the container, there is no need to install Terraform locally, and the process remains consistent across different systems. This method is particularly useful for teams working in collaborative environments, as it standardises Terraform execution and allows for reproducibility across development, staging, and production environments.

Managing application lifecycle with Terraform

In this section, we walk through each phase of the Apache Flink application lifecycle and show how you can implement these operations using Terraform. While these operations are usually fully automated as part of a CI/CD pipeline, you will execute the individual steps manually from the command line for demonstration purposes. There are many ways to run Terraform depending on your organisation's tooling and infrastructure setup, but for this demonstration, we run Terraform in a container alongside the application build to simplify dependency management. In real-world scenarios, you would typically have separate CI/CD stages for building your application and deploying with Terraform, with distinct configurations for each environment. Since every organisation has different CI/CD tooling and approaches, we keep these implementation details out of scope and focus on the core Terraform operations.

For a comprehensive deep dive into Apache Flink application lifecycle operations, refer to our earlier two-part blog series.

Create and start a new application

To get started, you want to create your Apache Flink application running on Managed Service for Apache Flink. To do so, execute the docker run command described earlier.

This command completes the following operations by executing the bash script build.sh:

- Building the Java ARchive (JAR) file from your Apache Flink application
- Uploading the JAR file to S3
- Setting the config variables for your Apache Flink application in terraform/config.tfvars.json
- Creating and deploying the Apache Flink application to Managed Service for Apache Flink using terraform apply
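To make these steps concrete, here is a hypothetical sketch of what a build.sh-style wrapper could look like. It is not the script from the sample repository: the function name, the JAR path, and the bucket argument are assumptions, and the sketch presumes that terraform/config.tfvars.json already contains the desired values.

```shell
# Hypothetical build-and-deploy wrapper (not the repository's build.sh).
# Argument: name of the S3 bucket that stores the application JAR (assumed).
build_and_apply() {
  local bucket="$1"
  local jar="flink/target/flink-app.jar"   # assumed Maven output path

  # 1. Build the JAR from the Flink application sources.
  mvn -f flink/pom.xml clean package || return 1

  # 2. Upload the artifact so that Terraform can reference it.
  aws s3 cp "$jar" "s3://${bucket}/flink-app.jar" || return 1

  # 3. Initialise the working directory, then create or update the application.
  terraform -chdir=terraform init || return 1
  terraform -chdir=terraform apply -auto-approve -var-file=config.tfvars.json
}

# Usage (placeholder bucket): build_and_apply my-flink-artifacts
```

Each step returns early on failure so that Terraform never runs against a missing or stale artifact.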

Terraform fully covers this operation. After Terraform completes with the message Apply complete!, you can check the running Apache Flink application using the AWS CLI or in the Managed Service for Apache Flink console. Terraform expects the Apache Flink artifact, that is, the JAR file, to be packaged and copied to S3. This operation is usually part of the CI/CD pipeline and executed before invoking terraform apply. Here, the operation is specified in the build.sh script.
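One way to check the application from the command line is the describe-application call of the AWS CLI. The following sketch wraps it in a helper; the function name app_status is illustrative, and the application name is passed as an argument.

```shell
# Print the current status of a Managed Service for Apache Flink application
# (for example RUNNING or READY).
app_status() {
  local app_name="$1"

  aws kinesisanalyticsv2 describe-application \
    --application-name "$app_name" \
    --query 'ApplicationDetail.ApplicationStatus' \
    --output text
}

# Usage: app_status flink-terraform-lifecycle
```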

Deploy code change to an application

You've successfully created and started the Flink application. However, you realise that you have to make a change to the Flink application code. Let's make a change to the application code in flink/ and see how to build and deploy it. After making the required changes, you simply run the same Docker command again, which builds the JAR file, uploads it to S3, and deploys the Apache Flink application using Terraform.

This phase of the lifecycle is fully supported by Terraform as long as both application versions are state compatible, meaning that the operators of the upgraded Apache Flink application are able to restore their state from the snapshot that is taken from the old application version before Managed Service for Apache Flink stops the application and deploys the change. For example, removing a stateful operator without enabling the allowNonRestoredState flag, or changing an operator's UID, could prevent the new application from restoring from the snapshot. For more information on state compatibility, refer to Upgrading Applications and Flink Versions. For an example of state incompatibility, and strategies for handling it, refer to Introducing the new Amazon Kinesis source connector for Apache Flink.

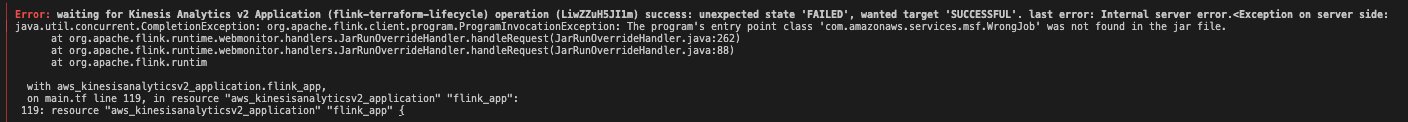

When deploying a code change goes wrong – A problem prevents the application code from being deployed

You also need to be careful with deploying code changes that contain bugs preventing the Apache Flink job from starting. For more information, refer to failure mode (a) – a problem prevents the application code from being deployed, under When starting or updating the application goes wrong. For instance, this can be simulated by mistakenly setting the mainClass in flink/pom.xml to com.amazonaws.services.msf.WrongJob. As before, you build the JAR, upload it, and run terraform apply by running the Docker command from above. However, Terraform now fails to apply the changes and throws an error message because the Apache Flink application fails to update. Finally, the application status moves to READY.

To remedy the issue, you have to change the value of mainClass back to the original one and deploy the changes to Managed Service for Apache Flink. The Apache Flink application remains in READY status and does not start automatically, as this was its state before applying the fix. Note that Terraform does not try to start the application when you deploy a change. You will have to manually start the Flink application using the AWS CLI or through the Managed Service for Apache Flink console.
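Starting the application manually with the AWS CLI could look like the sketch below. The helper name start_app is illustrative, and restoring from the latest snapshot is an assumption about what you want here; other restore types exist.

```shell
# Start a stopped (READY) application, restoring from its latest snapshot.
start_app() {
  local app_name="$1"

  aws kinesisanalyticsv2 start-application \
    --application-name "$app_name" \
    --run-configuration '{"ApplicationRestoreConfiguration":{"ApplicationRestoreType":"RESTORE_FROM_LATEST_SNAPSHOT"}}'
}

# Usage: start_app flink-terraform-lifecycle
```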

As detailed in Part 2 of the companion blog, there is a second failure scenario where the application starts successfully, but the job becomes stuck in a continuous fail-and-restart loop. A code change can also cause this failure mode. We cover this second failure scenario when we discuss deploying configuration changes.

Manual rollback application code to previous application code

As part of the lifecycle management of your Apache Flink application, you may need to explicitly roll back to a previous working application version. This is particularly useful when a newly deployed application version with application code changes exhibits unexpected behaviour. Currently, Terraform does not support explicit rollbacks of your Apache Flink application running in Managed Service for Apache Flink. You will have to resort to the RollbackApplication API, through the AWS CLI or the Managed Service for Apache Flink console, to revert the application to the previous working version.
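With the AWS CLI, a rollback needs the current application version ID, which can be read from describe-application. The following is a minimal sketch under that assumption; the helper name rollback_app is illustrative.

```shell
# Roll the application back to its previous working version.
rollback_app() {
  local app_name="$1" version_id

  # Look up the version the application is currently on.
  version_id="$(aws kinesisanalyticsv2 describe-application \
    --application-name "$app_name" \
    --query 'ApplicationDetail.ApplicationVersionId' \
    --output text)" || return 1

  aws kinesisanalyticsv2 rollback-application \
    --application-name "$app_name" \
    --current-application-version-id "$version_id"
}

# Usage: rollback_app flink-terraform-lifecycle
```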

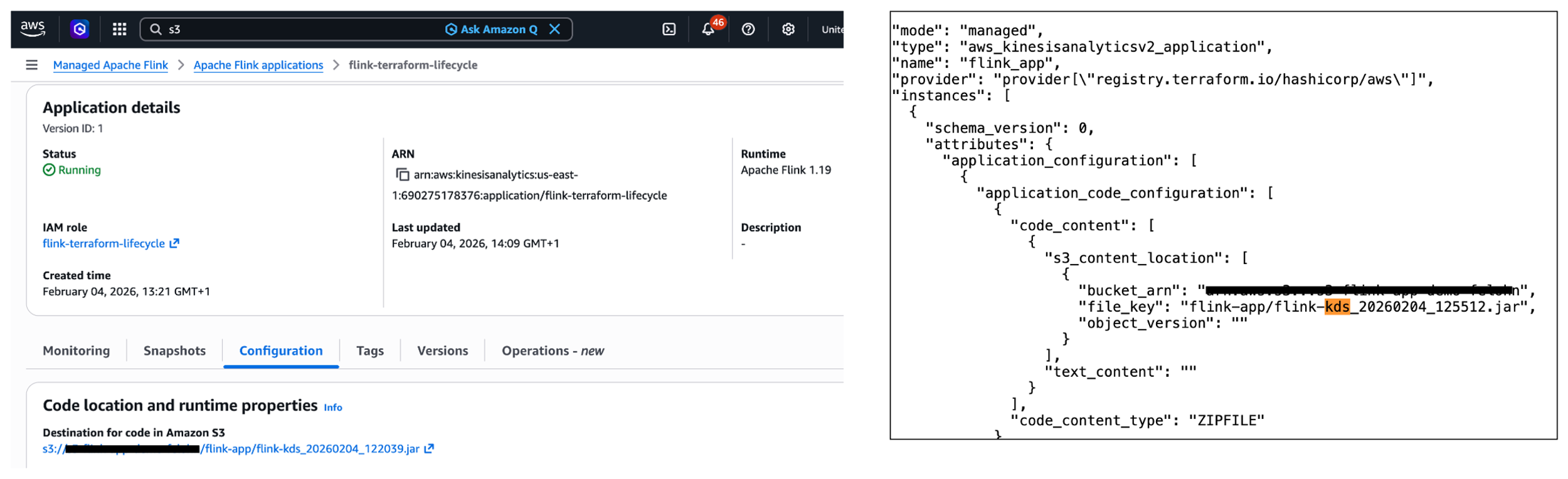

When you perform the explicit rollback, Terraform will initially not be aware of the changes. More specifically, the S3 path to the JAR file in the Managed Service for Apache Flink service (see the left part of the accompanying image) differs from the S3 path recorded in the terraform.tfstate file stored in Amazon S3 (see the right part of the image). Fortunately, as part of creating a plan in both the terraform plan and terraform apply commands, Terraform always performs a refresh, which reads the current settings from all managed remote objects and updates the Terraform state to match.

In summary, while you cannot perform a manual rollback using Terraform, Terraform automatically refreshes the state when you deploy a change using terraform apply.

Deploy config change to application

You've already made changes to the application code of your Apache Flink application. What about making changes to the configuration of the application, for example, changing runtime parameters? Imagine you want to change the application logging level of your running Apache Flink application. To change the logging level from ERROR to INFO, you have to change the value of flink_app_monitoring_metrics_level in terraform/config.tfvars.json to INFO. To deploy the config changes, you need to run the docker run command again, as done in the previous sections. This scenario works as expected and is fully covered by Terraform.

What happens when the Apache Flink application deploys successfully but fails and restarts during execution? For more information, refer to failure mode (b) – the application is started, the job is stuck in a fail-and-restart loop, under When starting or updating the application goes wrong. Note that this failure mode can happen when making code changes as well.

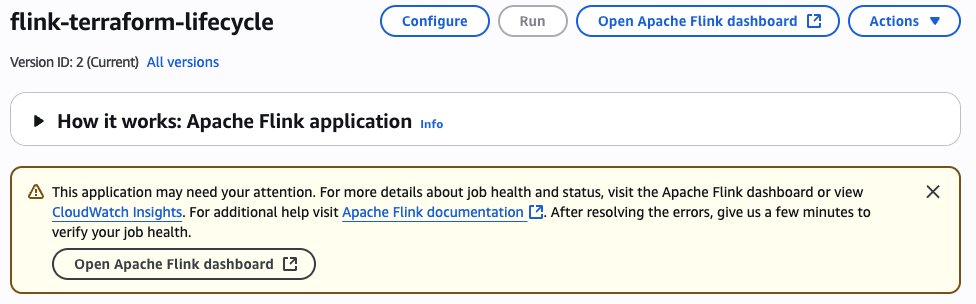

When deploying config change goes wrong – The application is started, the job is stuck in a fail-and-restart loop

In the following example, we apply a wrong configuration change that prevents the Kinesis connector from initialising correctly, eventually putting the job in a fail-and-restart loop. To simulate this failure scenario, you modify the Kinesis stream configuration by changing the stream name to a non-existent one. This change is made in the terraform/config.tfvars.json file, specifically by altering the stream.name value under flink_app_environment_variables. When you deploy with this invalid configuration, the initial deployment appears successful and shows an Apply complete! message. The Flink application status also shows as RUNNING. However, the actual behaviour reveals problems. If you check the Flink Dashboard, you will see that the application is continuously failing and restarting. You will also see a warning message in the AWS Console saying that the application requires attention.

As detailed in the section Monitoring Apache Flink application operations in the companion blog (part 2), you can monitor the FullRestarts metric to detect the fail-and-restart loop.
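As a rough sketch, the metric can also be queried from the command line through CloudWatch. This assumes the metric is published as fullRestarts in the AWS/KinesisAnalytics namespace with an Application dimension, so verify the exact casing in your account. The helper name and the time window arguments are illustrative.

```shell
# Fetch the restart counter for a time window (ISO 8601 timestamps).
# A value that keeps increasing indicates a fail-and-restart loop.
full_restarts() {
  local app_name="$1" start_time="$2" end_time="$3"

  aws cloudwatch get-metric-statistics \
    --namespace AWS/KinesisAnalytics \
    --metric-name fullRestarts \
    --dimensions "Name=Application,Value=${app_name}" \
    --start-time "$start_time" \
    --end-time "$end_time" \
    --period 60 \
    --statistics Maximum
}

# Usage (placeholder timestamps):
#   full_restarts flink-terraform-lifecycle 2025-01-01T10:00:00Z 2025-01-01T11:00:00Z
```

In practice, a CloudWatch alarm on this metric is usually more useful than ad hoc queries, but the query is handy while reproducing the failure.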

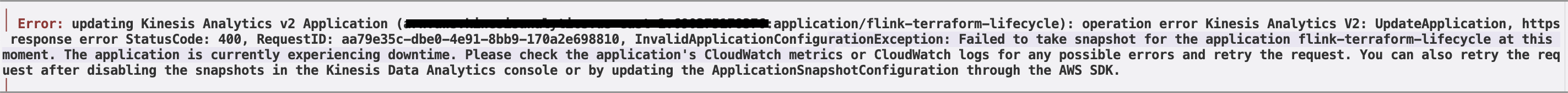

Reverting the changes made to the environment variable and deploying the changes will result in Terraform showing the following error message: Failed to take snapshot for the application flink-terraform-lifecycle at this moment. The application is currently experiencing downtime.

You have to force-stop the application without a snapshot and then restart it from a snapshot to get your Flink application back to a properly functioning state. You should continuously monitor the application state of your Apache Flink application to detect any issues.
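The recovery steps can be sketched with the AWS CLI as follows. The helper name, the polling interval, and the retry limit are arbitrary choices for illustration, and the sketch assumes that the latest available snapshot is the one you want to restore from.

```shell
# Force-stop a failing application (no snapshot is taken), wait until it is
# READY, then start it again from the latest existing snapshot.
force_stop_and_restart() {
  local app_name="$1" attempts=0 status

  aws kinesisanalyticsv2 stop-application \
    --application-name "$app_name" --force || return 1

  # Poll the status; give up after 60 attempts (about 10 minutes).
  while :; do
    status="$(aws kinesisanalyticsv2 describe-application \
      --application-name "$app_name" \
      --query 'ApplicationDetail.ApplicationStatus' --output text)"
    [ "$status" = "READY" ] && break
    attempts=$((attempts + 1))
    [ "$attempts" -ge 60 ] && return 1
    sleep 10
  done

  aws kinesisanalyticsv2 start-application \
    --application-name "$app_name" \
    --run-configuration '{"ApplicationRestoreConfiguration":{"ApplicationRestoreType":"RESTORE_FROM_LATEST_SNAPSHOT"}}'
}

# Usage: force_stop_and_restart flink-terraform-lifecycle
```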

Other common operations

Manually scaling the application

Another common operation in the lifecycle of your Apache Flink application is scaling the application up or down by adjusting the parallelism. This operation changes the number of Kinesis Processing Units (KPUs) allocated to your application. Let's look at two different scaling scenarios and how they are handled by Terraform.

In the first scenario, you want to change the parallelism of your running Apache Flink application within the default parallelism quota. To do this, you need to modify the value of flink_app_parallelism in the terraform/config.tfvars.json file. After updating the parallelism value, you deploy the changes by running the Docker command as done in the previous sections.

This scenario works as expected and is fully covered by Terraform. The application is updated with the new parallelism setting, and Managed Service for Apache Flink adjusts the allocated KPUs accordingly. Note that there is a default quota of 64 KPUs for a single Managed Service for Apache Flink application, which must be raised proactively through a quota increase request if you need to scale your application beyond 64 KPUs. For more information, refer to Managed Service for Apache Flink quota.

Less common change deployments which require special handling

In this section, we analyze some less common change deployment scenarios which require special handling.

Deploy code change that removes an operator

Removing an operator from your Apache Flink application requires special consideration, particularly regarding state management. When you remove an operator, the state from that operator still exists in the latest snapshot, but there is no longer a corresponding operator to restore it. Let's take a closer look at this scenario and understand how to handle it properly. First, you need to make sure that the parameter AllowNonRestoredState is set to true. This parameter specifies whether the runtime is allowed to skip state that cannot be mapped to the new program when restoring from a snapshot. Allowing non-restored state is required to successfully update an Apache Flink application after you have dropped an operator. To enable AllowNonRestoredState, you need to set the configuration value for flink_app_allow_non_restored_state to true in terraform/config.tfvars.json. Then, you can go ahead and remove an operator. For example, you can have the sourceStream write directly to the sink connector in flink/src/main/java/com/amazonaws/services/msf/StreamingJob.java. Change code line 146 from windowedStream.sinkTo(sink).uid("kinesis-sink") to sourceStream.sinkTo(sink).uid("kinesis-sink"). Make sure that you have commented out the entire windowedStream code block (lines 103 to 140).

This change removes the windowed computation and connects the source stream directly to the sink, effectively removing the stateful operation. After removing the operator from your Flink application code, you deploy the changes using the Docker command as before. However, the deployment fails with the following error message: Could not execute application. As a result, the Apache Flink application moves to the READY state. To recover from this situation, you need to restart the Apache Flink application using the latest snapshot for the application to successfully start and move to RUNNING status. Importantly, you need to make sure that AllowNonRestoredState is enabled. Otherwise, the application will fail to start because it cannot restore the state for the removed operator.

Deploy change that breaks state compatibility with system rollback enabled

During the lifecycle management of your Apache Flink application, you might encounter scenarios where code changes break state compatibility. This typically happens when you modify stateful operators in ways that prevent them from restoring their state from previous snapshots.

A common example of breaking state compatibility is changing the UID of a stateful operator (such as an aggregation or windowing operator) in your application code. To safeguard against such breaking changes, you can enable the automatic system rollback feature in Managed Service for Apache Flink, as described previously in the subsection Rollback under Lifecycle of an application in Managed Service for Apache Flink. This feature is disabled by default and can be enabled using the AWS Management Console or by invoking the UpdateApplication API operation. Currently, there is no way to enable system rollback in Terraform.
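Enabling the feature through the UpdateApplication operation could look like the sketch below when using the AWS CLI. It assumes the ApplicationSystemRollbackConfigurationUpdate structure with a RollbackEnabledUpdate flag, so check the current API reference before relying on it. The helper name is illustrative.

```shell
# Turn on automatic system rollback for an application.
enable_system_rollback() {
  local app_name="$1" version_id

  # UpdateApplication requires the current application version ID.
  version_id="$(aws kinesisanalyticsv2 describe-application \
    --application-name "$app_name" \
    --query 'ApplicationDetail.ApplicationVersionId' \
    --output text)" || return 1

  aws kinesisanalyticsv2 update-application \
    --application-name "$app_name" \
    --current-application-version-id "$version_id" \
    --application-configuration-update '{"ApplicationSystemRollbackConfigurationUpdate":{"RollbackEnabledUpdate":true}}'
}

# Usage: enable_system_rollback flink-terraform-lifecycle
```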

Next, let's demonstrate this by breaking the state compatibility of your Apache Flink application. Change the UID of a stateful operator, for example the string windowed-avg-price in line 140 of flink/src/main/java/com/amazonaws/services/msf/StreamingJob.java, to windowed-avg-price-v2, and deploy the changes as before. You will encounter the following error:

Error: waiting for Kinesis Analytics v2 Application (flink-terraform-lifecycle) operation (*) success: unexpected state 'FAILED', wanted target 'SUCCESSFUL'. last error: org.apache.flink.runtime.rest.handler.RestHandlerException: Could not execute application.

At this point, Managed Service for Apache Flink automatically rolls back the application to the previous snapshot with the previous JAR file, maintaining your application's availability, because you have enabled the system-rollback capability. Terraform will initially not be aware of the performed rollback. Fortunately, as we have already seen in the subsection Manual rollback application code to previous application code, Terraform automatically refreshes the state when we change the UID back to the previous value and deploy the changes.

In-place upgrade of Apache Flink runtime version

Managed Service for Apache Flink supports in-place upgrades to new Flink runtime versions. See the documentation for more details. Updating the application dependencies and making any required code changes is the responsibility of the user. Once you have updated the code artifact, the service is able to upgrade the runtime of your running application in place, without data loss. Let's examine how Terraform handles Flink version upgrades.

To upgrade your Apache Flink application from version 1.19.1 to 1.20, you need to:

- Update the Flink dependencies in your flink/pom.xml to version 1.20.0 (flink.version to 1.20.1 and flink.connector.version to 5.0.0-1.20 in <properties>)
- Update the flink_app_runtime_environment to FLINK-1_20 in terraform/config.tfvars.json
- Build and deploy the changes using the familiar docker run command

Terraform successfully performs an in-place upgrade of your Flink application. You will receive the following message: Apply complete! Resources: 0 added, 1 changed, 0 destroyed.

Operations currently not supported by Terraform

Let's take a closer look at operations that are currently not supported by Terraform.

Starting or stopping the application without any configuration change

Terraform provides the start_application parameter, indicating whether to start or stop the application. You can set this parameter to false using flink_app_start in config.tfvars.json to stop your running Apache Flink application. However, this only works if the current configuration value is set to true. In other words, Terraform only responds to a change in the parameter value, not to the absolute value itself. After Terraform applies this change, your Apache Flink application stops and its application status moves to READY. Similarly, restarting the application requires changing the flink_app_start value back to true, but this only takes effect if the current configuration value is false. Terraform then restarts your application, moving it back to the RUNNING state.

In summary, you cannot start or stop your Apache Flink application without making a configuration change in Terraform. You have to use the AWS CLI, AWS SDK, or AWS Console to start or stop your application.

Restarting application from an older snapshot or no snapshot without any configuration change

Similar to the previous section, Terraform requires an actual configuration change of application_restore_type to trigger a restart with different snapshot settings. Simply reapplying the same configuration values won't initiate a restart from a different snapshot or from no snapshot. You have to use the AWS CLI, AWS SDK, or AWS Console to restart your application from an older snapshot.
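With the AWS CLI, restarting from a specific snapshot could be sketched as follows. The helper names are illustrative, the snapshot name is a placeholder you would take from the snapshot listing, and the application is assumed to be stopped (READY) before it is started again.

```shell
# List the snapshots that exist for an application.
list_snapshots() {
  local app_name="$1"

  aws kinesisanalyticsv2 list-application-snapshots \
    --application-name "$app_name"
}

# Start a stopped application from a named snapshot.
# To start without any snapshot, the restore type SKIP_RESTORE_FROM_SNAPSHOT
# can be used instead (and SnapshotName omitted).
restart_from_snapshot() {
  local app_name="$1" snapshot_name="$2"

  aws kinesisanalyticsv2 start-application \
    --application-name "$app_name" \
    --run-configuration "{\"ApplicationRestoreConfiguration\":{\"ApplicationRestoreType\":\"RESTORE_FROM_CUSTOM_SNAPSHOT\",\"SnapshotName\":\"${snapshot_name}\"}}"
}

# Usage (placeholder snapshot name):
#   list_snapshots flink-terraform-lifecycle
#   restart_from_snapshot flink-terraform-lifecycle my-snapshot
```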

Performing rollback triggered manually or by system-rollback feature

Terraform supports neither performing a manual rollback nor the automatic system rollback. In addition, Terraform is not aware when such a rollback takes place, so the state information, for example the S3 path, will be outdated. However, Terraform automatically performs refresh actions that read the settings from all managed remote objects and update the Terraform state to match. Consequently, you can have Terraform refresh its state by successfully running a terraform apply command.
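If you only want to reconcile the state after a rollback, without deploying anything, Terraform's refresh-only mode is one option. The sketch below assumes the directory layout used in this post (a terraform folder containing config.tfvars.json) and would typically be run inside the same container as the other Terraform commands. The helper name is illustrative.

```shell
# Update the Terraform state to match the real application settings
# without proposing any changes to the infrastructure.
refresh_state() {
  terraform -chdir=terraform apply -refresh-only -var-file=config.tfvars.json
}

# Usage: refresh_state
```

Terraform shows the detected drift and asks for confirmation before writing the refreshed state.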

Conclusion

In this post, we demonstrated how to use Terraform to automate the lifecycle management of your Apache Flink applications on Managed Service for Apache Flink. We walked through fundamental operations including creating, updating, and scaling applications, explored how Terraform handles various failure scenarios, and examined advanced scenarios such as removing operators and performing in-place runtime upgrades. We also identified operations that are currently not supported by Terraform.

For more information, see Run a Managed Service for Apache Flink application and our two-part blog on Deep dive into the Amazon Managed Service for Apache Flink application lifecycle.