Take into consideration revisiting objects you’ve saved to Pocket, Notion or your bookmarks. Most individuals don’t have the time to re-read all of this stuff after they’ve saved them to those varied apps, until they’ve a necessity. We’re wonderful at accumulating tons of data. Nonetheless, we’re simply not superb at making any of these locations work together with one another or add a cumulative layer that connects them collectively.

In April of 2026, Andrej Karpathy (former AI Director of Tesla and co-founder of OpenAI) instructed an answer to this concern: use a big language mannequin (LLM) to construct your wiki in real-time.

This concept grew to become viral and was in the end adopted with a GitHub gist describing how to do that utilizing an LLM. This information will present all the help (with instance code) for constructing your individual residing, evolving private wiki.

The RAG Drawback: Rediscovering Data from Scratch, Each Single Time

Numerous fashionable AI data instruments use Retrieval-Augmented Technology (RAG). In easy phrases, you add paperwork, ask a query, and the system retrieves related textual content to assist the AI generate a solution. Instruments like NotebookLM, ChatGPT (file uploads), and most enterprise AI methods observe this method.

On the floor, this is smart. However as Andrej Karpathy factors out, there’s a key flaw: the mannequin doesn’t accumulate data. Every question begins from scratch.

If a query requires synthesizing 5 paperwork, RAG pulls and combines them for that one response. Ask the identical query once more tomorrow, and it repeats your complete course of. It additionally struggles to attach data throughout time, like linking an article from March with one from October.

Briefly, RAG produces solutions, however it doesn’t construct lasting data.

Wiki Resolution: Compile Data As soon as, Question Eternally

Karpathy’s method will change the best way we take a look at fashions. Somewhat than processing uncooked paperwork after our queries, we’ll now course of these paperwork on the time of ingestion. The outcomes of this processing will likely be a everlasting, structured wiki-like product (which can will let you retailer and retrieve paperwork with a excessive diploma of management over their location).

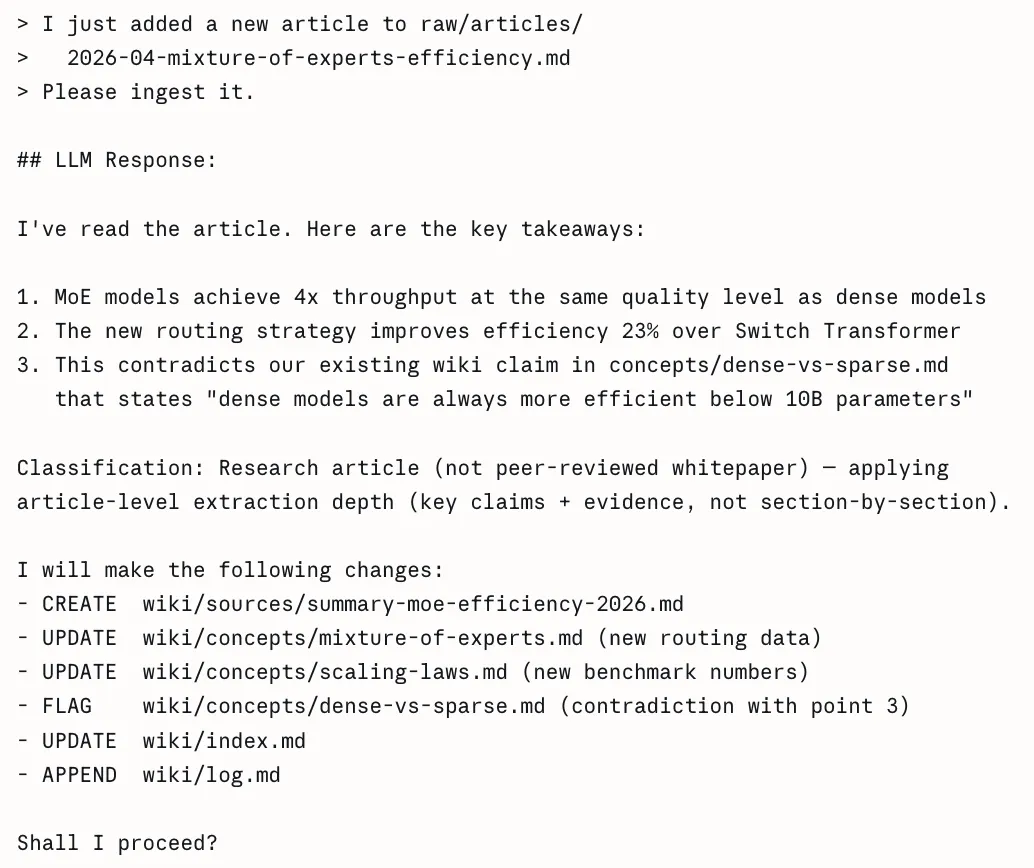

While you add a brand new doc supply to the LLM, the LLM doesn’t merely create an index of that supply for later retrieval. As an alternative, the LLM reads, understands, and integrates that supply into the data base, updating all related current pages (the place vital). It notes down any contradictions between the brand new and current claims or data, creating any vital new idea pages, and reinforcing the advanced relationships throughout your complete wiki.

Based on Karpathy, “With LLMs, data is created and maintained repeatedly and constantly quite than with the ability to use particular person queries to create data.” Right here is a straightforward comparability that illustrates this distinction additional.

How It Really Works: A Step-by-Step Information

Let’s assessment how a person would develop certainly one of these wikis.

Step 1: Get hold of your sources

It’s essential to accumulate every thing – articles that you’ve got saved, books loved, notes you’ve created, transcripts from discussions, and even your very personal historic conversations. All these supplies are your uncooked supplies, simply as ore should bear refining earlier than use.

Among the finest practices from this group is to not deal with all paperwork in the identical trend. For instance, a 50-page analysis white paper requires extraction on a section-by-section foundation whereas a tweet or social media thread solely requires a major perception and corresponding context. Likewise, a gathering transcript requires extraction of choices that had been made, motion objects which can be to be carried out and key quotations. By first classifying the kind of doc will assist extract the best sort of data to the right amount of element.

Step 3: The AI writes wiki pages (Question)

You’ll feed your supply supplies into your AI’s LLM by way of a structured question. It would permit the LLM to provide a number of wiki pages that conform to the established template of getting: a frontmatter block (YAML), a TLDR sentence, the physique of the content material, and the counterarguments/knowledge gaps.

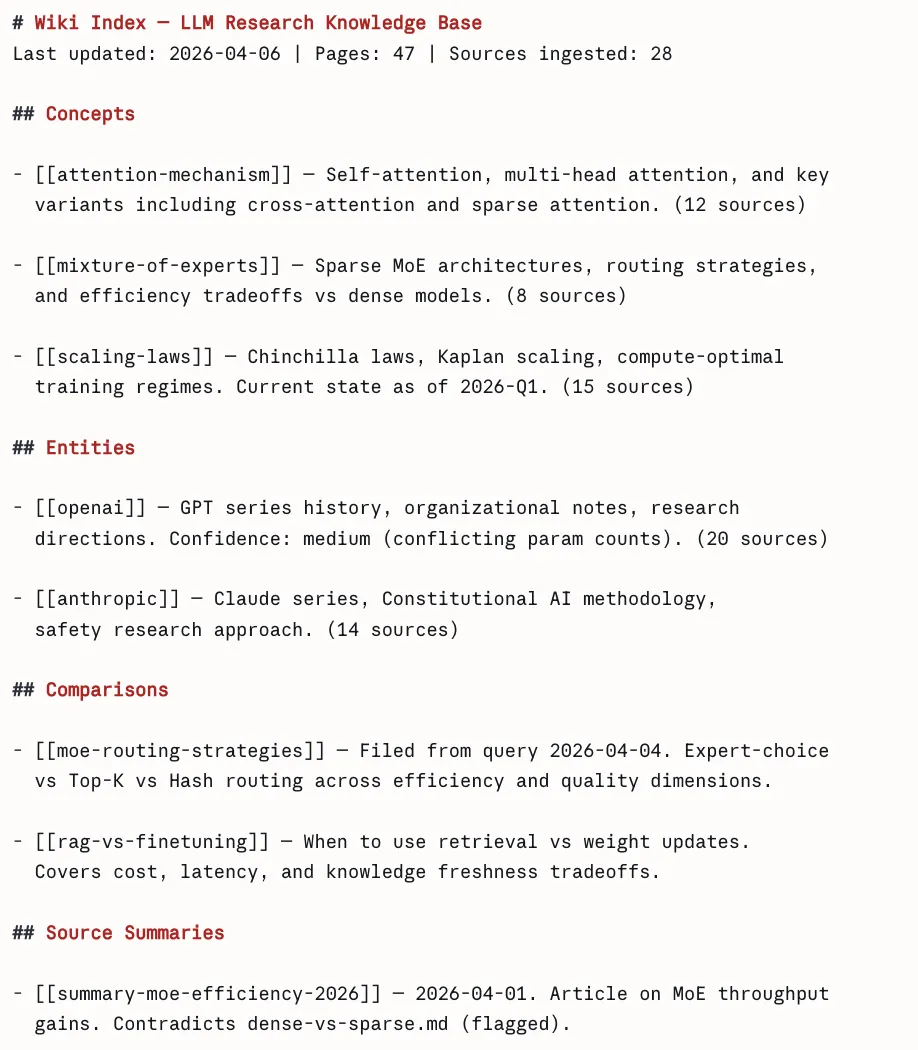

Step 4: Create an index

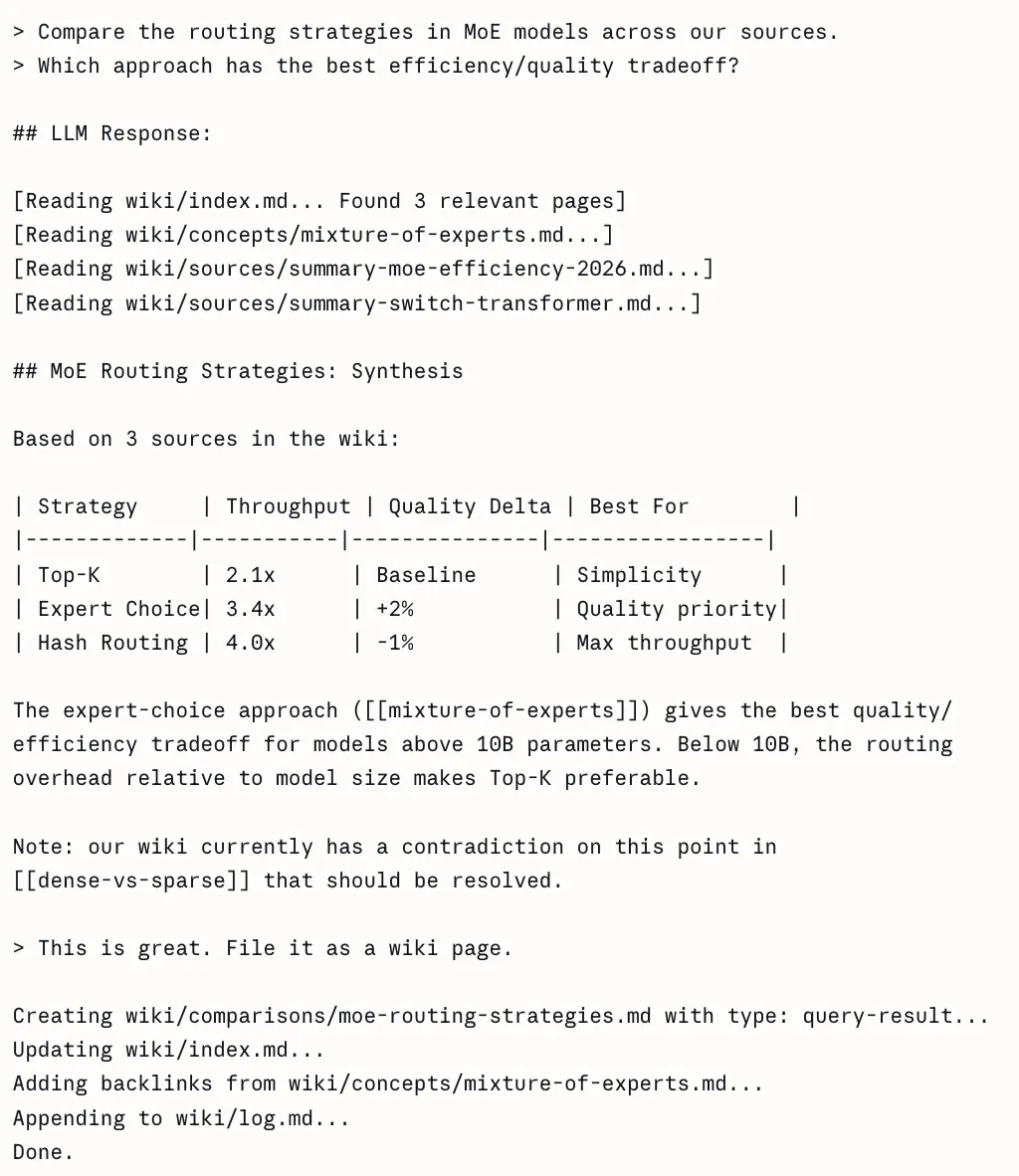

A central index.md will function a desk of contents, and hyperlink straight to every web page of the wiki. That is how an AI agent can effectively traverse your complete data base; it begins on the index, reads by the tldr’s, then drills down into solely these pages which can be related to its particular query.

Step 5: Document your questions

This is likely one of the most under-appreciated options of the system. While you ask the LLM a well-formed query and obtain a response that gives invaluable perception. For instance, a comparability between two frameworks, or a proof of how two ideas are associated, you save that response as a brand new wiki web page tagged with the label query-result. As time goes on, your finest considering has been collected quite than misplaced in chat logs.

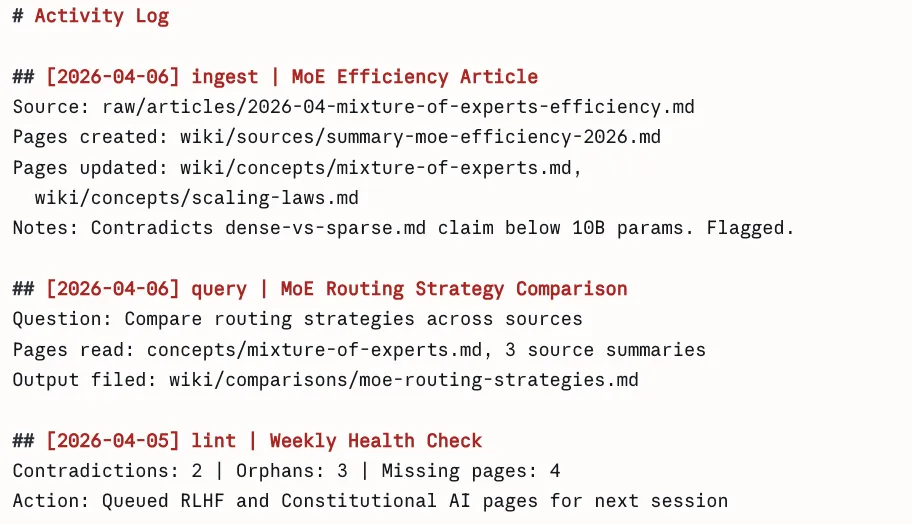

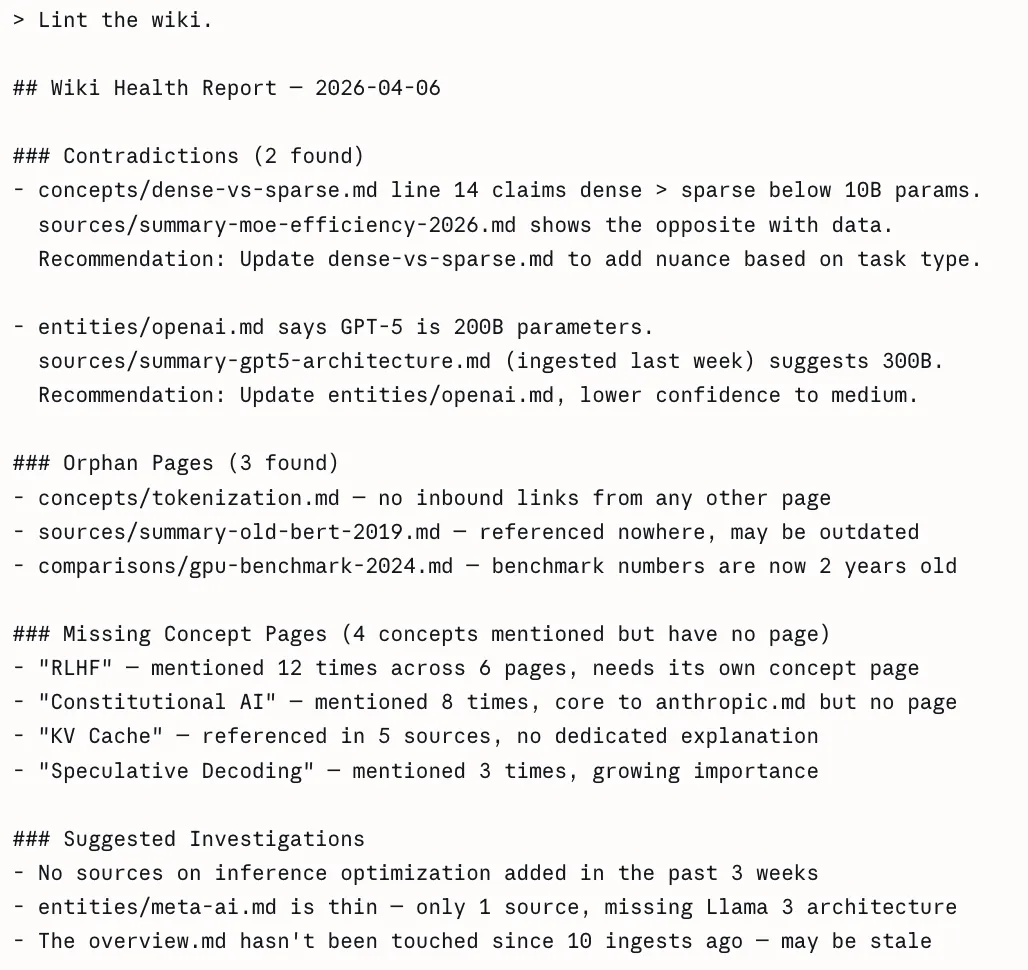

Step 6: Conduct lint passes

At acceptable intervals, you ask the LLM to audit your complete wiki for contradictions or inconsistencies between pages, and to point these statements which have been rendered out of date by a more moderen supply. Moreover, the LLM will present enter on figuring out orphan pages (i.e., pages that don’t have any hyperlinks pointing to them), and for offering an inventory of ideas which can be referenced inside the current content material however aren’t but represented by their very own respective pages.

Karpathy talks about numerous particular instruments in his Gist. Under you will discover what every device does and the way they match into his general workflow.

1. Obsidian – Your Wiki IDE

Obsidian is a free markdown data administration utility which makes use of a neighborhood listing as a vault. For Karpathy, that is the viewing interface used for the wiki as a result of it has three distinct options that matter for his system:

- The Graph View offers a graph of all wiki pages represented as nodes, and as well as, each wiki hyperlink ( [[wiki-links]] ) will likely be represented as edges connecting all nodes collectively. Hub pages will likely be linked to many nodes, and so will likely be represented as bigger than common nodes. Orphan pages will likely be represented as remoted nodes. This enables for rapid visible illustration of the density of data and gaps inside an individual’s data. No different doc view or file browser can present this illustration visually.

- The Dataview Plugin permits customers to show their wiki right into a database that may be queried. All pages will need to have yaml frontmatter, so the mixing specification is glad and subsequently permits the person to run SQL-like queries towards all pages within the wiki.

# In any Obsidian observe, Dataview renders this dynamically:

# Checklist all idea pages ordered by variety of sources

TABLE size(sources) AS "Supply Depend", confidence, up to date

FROM "wiki/ideas"

SORT size(sources) DESC

# Discover low-confidence pages that want assessment

TABLE title, sources

FROM "wiki"

WHERE confidence = "low"

SORT file.mtime ASC

# Discover pages not up to date within the final 2 weeks

LIST

FROM "wiki"

WHERE up to date < date(at present) - dur(14 days)

SORT up to date ASC- The Net Clipper browser extension (accessible for Chrome, Firefox, Safari, Edge, Arc, and Courageous) converts net articles to wash Markdown with YAML frontmatter in a single click on, saving on to your uncooked folder. You obtain all photos to your laptop by urgent the hotkey Ctrl+Shift+D after you end clipping as a result of the LLM requires entry to the photographs.

2. Qmd: Search at Scale

The LLM can use the index.md file to entry the wiki content material with out issues at small scale. The index turns into unreadable in a single context window when you’ve greater than 100 pages as a result of it reaches extreme measurement.

The native search engine qmd permits Markdown file searches by three search strategies which Tobi Lutke (CEO of Shopify) developed. The system operates fully in your gadget as a result of it makes use of node-llama-cpp with GGUF fashions which require no API connections and shield your knowledge from leaving your laptop.

# Set up qmd globally

npm set up -g @tobilu/qmd

# Register your wiki as a searchable assortment

qmd assortment add ./wiki --name my-research

# Primary key phrase search (BM25)

qmd search "combination of consultants routing effectivity"

# Semantic search, finds associated ideas even with completely different phrases

qmd vsearch "how do sparse fashions deal with load balancing"

# Hybrid search with LLM re-ranking, highest high quality outcomes

qmd question "what are the tradeoffs between top-k and expert-choice routing"

# JSON output for piping into agent workflows

qmd question "scaling legal guidelines" --json | jq '.outcomes[].title'

# Expose qmd as an MCP server so Claude Code can use it as a local device

qmd mcpThe MCP server mode permits Claude Code to make use of qmd straight as a built-in device which leads to smoother workflow integration all through your knowledge ingestion and question processing duties.

3. Git: Model Management for Data

As a result of your complete wiki is a folder of plain Markdown recordsdata, you get model historical past branching and collaboration totally free with Git. That is fairly highly effective:

# Initialize the repo whenever you begin

cd my-research && git init

# Commit after each ingest session

git add .

git commit -m "ingest: MoE effectivity article — flags dense-vs-sparse contradiction"

# See precisely what modified in any ingest

git diff HEAD~1

# Roll again a nasty compilation go

git revert abc1234

# See how your data advanced over time

git log --oneline wiki/ideas/mixture-of-experts.md

# Share with a crew by way of GitHub (the wiki turns into collaborative)

git distant add origin https://github.com/yourname/research-wiki

git push -u origin principalGetting Began: Your First LLM Wiki in Three Steps

Should you’re enthusiastic about this idea there may be a straightforward strategy to start:

- Choose one space of curiosity you might be at the moment exploring and provides the AI 5-10 of your finest sources. Don’t try to put every thing you’ve carried out digitally into one place on the primary day however as a substitute learn the way the system works and learn how to apply it to a small scale.

- Create the fundamental framework quickly. Create a wiki/listing on your wiki and have an index.md file there. Write down what your frontmatter is (title, sort, supply, created, up to date, tags), and be constant in naming your recordsdata e.g., concept-name.md or firstname-lastname.md. If this isn’t carried out will probably be troublesome to rectify later.

- Spend quite a lot of time creating your preliminary immediate. That is essentially the most essential step. Create guidelines for Classifying, writing TLDRs, writing the frontmatter in addition to guides for when to create Pages and when to edit the pages. Be certain that to maintain updating the immediate as you employ it.

Use Claude with Claude Code, or any AI with file entry, to construct and preserve the wiki. Begin at your index file when querying. Let the agent navigate.

The Sensible Challenges (And Find out how to Deal with Them)

Let’s be life like, Setting up an LLM powered wiki isn’t any simple job because it comes with a number of obstacles as effectively:

- Constructing an LLM-powered wiki is troublesome: It entails a number of challenges throughout setup, construction, and long-term upkeep.

- Immediate engineering is the primary problem: You want clear directions for structuring pages, deciding when to create vs replace them, and resolving conflicting data, which requires iteration and refinement.

- Scalability is a hidden issue: Easy setups break down past just a few hundred pages, so that you want tagging, folders, and search methods deliberate upfront.

- Consistency over time issues: With out common upkeep, your wiki will accumulate outdated data, contradictions, and orphaned pages.

- Agent proficiency is a key talent: Successfully guiding AI by prompts and construction takes apply, and those that spend money on studying this get considerably higher outcomes.

Conclusion

A very powerful recommendation for constructing your first LLM wiki is similar recommendation Karpathy offers in his gist: don’t overthink the setup. The schema template from this information will be simply copied after which you’ll be able to create the listing construction by executing the bash instructions.

The system achieves its magical impact by a number of architectural enhancements which develop from the primary day onwards. The wiki turns into extra invaluable with every new supply materials you embrace. The info belongs to you. The recordsdata exist in codecs which can be utilized by any system. You should use any AI you need to question it. The LLM takes care of all upkeep duties as a substitute of you needing to deal with them which creates a distinct expertise from different productiveness instruments.

Your data, lastly, working as laborious for you as you labored to amass it.

Incessantly Requested Questions

A. It doesn’t accumulate data; each question begins from scratch with out constructing on previous insights.

A. It processes data throughout ingestion, making a persistent, structured system that evolves over time.

A. It ensures the system extracts the best degree of element for every doc sort, bettering accuracy and usefulness.

Login to proceed studying and revel in expert-curated content material.