Imagine asking your AI model, “What’s the weather in Tokyo right now?” and, instead of hallucinating an answer, it calls your actual Python function, fetches live data, and responds correctly. That is what the tool-calling capabilities in Google’s Gemma 4 make possible. It is an exciting addition to open-weight AI: function calling is structured, reliable, and built directly into the model.

Coupled with Ollama for local inference, it lets you build AI agents that do not depend on a cloud-hosted model. Best of all, these agents can reach real-world APIs and services from your own machine, without any subscription. In this guide, we cover the concept and the implementation architecture, as well as three tasks that you can experiment with right away.


Conversational language models have limited knowledge, fixed at the time they were trained. Hence, they can offer only an approximate answer when you ask for current market prices or current weather conditions. This gap is addressed by exposing functions (tools) to the model through an API, so that such questions can be answered by calling a live service.

With tool calling enabled, the model can:

- Recognize when it needs to retrieve outside information

- Identify the right function based on the provided API

- Compose correctly formatted function calls (with arguments)

It then waits until that function has been executed and its output returned, and composes a grounded answer based on the output it receives.

To clarify: the model never executes the function calls it generates. It only determines which functions to call and how to structure their argument lists. Your own code executes the functions that the model requested through the API. In this scenario, the model is the brain, while the functions being called are the hands.
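
To make that division of labour concrete, here is a minimal sketch of what happens on your side once the model has proposed a call. No model is contacted: the add function, the REGISTRY dictionary, and the hand-written proposed_call are hypothetical stand-ins, and the call is only shaped like the tool_calls entries used later in this guide.

```python
# Hypothetical tool call, written by hand to mimic what a model might propose.
proposed_call = {"function": {"name": "add", "arguments": {"a": 2, "b": 3}}}


def add(a: float, b: float) -> float:
    """A trivial local function standing in for a real tool."""
    return a + b


# Maps tool names (what the model sees) to Python callables (what you run).
REGISTRY = {"add": add}


def execute(call: dict):
    """Look up the requested function and run it with the proposed arguments."""
    fn = REGISTRY[call["function"]["name"]]
    return fn(**call["function"]["arguments"])


print(execute(proposed_call))  # 5
```

The model contributes only the name and the arguments; the lookup and the actual execution stay in your code.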

Before you start writing code, it helps to understand how everything fits together. Here is the loop that every tool call in Gemma 4 follows:

- Define functions in Python to perform the actual tasks (e.g., retrieve weather data from an external source, query a database, convert money from one currency to another).

- Create a JSON schema for each of the functions you have created. The schema should contain the name of the function and its parameters (including their types).

- When the user sends a message, you send both the tool schemas you have created and the user’s message to the Ollama API.

- The Ollama API returns data in a tool_calls block rather than plain text.

- You execute the function using the arguments sent to you by the Ollama API.

- You return the result to the Ollama API as a "role": "tool" message.

- The model receives the result, and the Ollama API returns the answer to you in natural language.

This two-pass pattern is the foundation for every function-calling AI agent, including the examples shown below.
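
The two passes can be traced offline with a small sketch. Everything here is an assumption made for illustration: fake_chat is a hand-written stand-in for the Ollama API (it requests a tool on the first pass and answers on the second), and get_temp returns a canned value instead of live data.

```python
def fake_chat(messages: list, tools: list) -> dict:
    """Stand-in for the chat API: pass 1 requests a tool, pass 2 answers."""
    if messages[-1]["role"] == "tool":
        return {"role": "assistant", "content": f"It is {messages[-1]['content']}."}
    return {
        "role": "assistant",
        "content": "",
        "tool_calls": [{"function": {"name": "get_temp", "arguments": {"city": "Tokyo"}}}],
    }


def get_temp(city: str) -> str:
    """Hypothetical tool returning a canned value for illustration."""
    return f"21 degrees in {city}"


def two_pass(question: str):
    tools = [{"type": "function", "function": {"name": "get_temp"}}]
    messages = [{"role": "user", "content": question}]

    reply = fake_chat(messages, tools)      # pass 1: the model proposes a call
    messages.append(reply)
    if "tool_calls" not in reply:           # no tool needed, answer directly
        return reply["content"], [m["role"] for m in messages]

    for call in reply["tool_calls"]:        # your code executes the call
        name = call["function"]["name"]
        output = get_temp(**call["function"]["arguments"])
        messages.append({"role": "tool", "content": output, "name": name})

    final = fake_chat(messages, tools)      # pass 2: the model answers in prose
    return final["content"], [m["role"] for m in messages]


answer, roles = two_pass("What's the weather in Tokyo?")
print(answer)  # It is 21 degrees in Tokyo.
print(roles)   # ['user', 'assistant', 'tool']
```

The list of roles shows the conversation growing from the user message, to the assistant's tool request, to the tool result that the second pass is grounded on.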

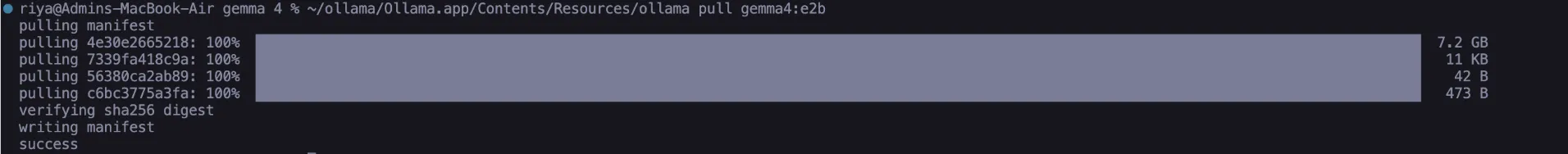

To run these tasks, you need two components: Ollama must be installed locally on your machine, and you need to download the Gemma 4 Edge 2B model. There are no dependencies beyond the standard Python installation, so you do not need to install any pip packages at all.

1. To install Ollama on macOS or Linux:

    # Install Ollama (macOS/Linux)
    curl -fsSL https://ollama.com/install.sh | sh

2. To download the model (which is roughly 2.5 GB):

    # Download the Gemma 4 Edge model (E2B)
    ollama pull gemma4:e2b

After downloading the model, run the ollama list command to confirm that it appears in the list of models. You can now connect to the running API at http://localhost:11434 and send requests to it using the following helper function:

    import json, urllib.request, urllib.parse

    def call_ollama(payload: dict) -> dict:
        # POST the chat payload to the local Ollama server and return the parsed JSON reply.
        data = json.dumps(payload).encode("utf-8")
        req = urllib.request.Request(
            "http://localhost:11434/api/chat",
            data=data,
            headers={"Content-Type": "application/json"},
        )
        with urllib.request.urlopen(req) as resp:
            return json.loads(resp.read().decode("utf-8"))

No third-party libraries are needed, so the agent runs on its own and every step stays fully transparent.
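
Before sending chat requests, it can be useful to confirm from Python that the server is reachable and the model has been pulled. The sketch below assumes Ollama's GET /api/tags endpoint, which lists local models under a "models" key with a "name" field per entry; the helper names model_names and is_model_available are made up for this example.

```python
import json
import urllib.request


def model_names(tags_response: dict) -> list:
    """Extract model names from a parsed /api/tags response."""
    return [m.get("name", "") for m in tags_response.get("models", [])]


def is_model_available(model: str, base_url: str = "http://localhost:11434") -> bool:
    """Return True if the local server is reachable and lists the model."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
            return model in model_names(json.loads(resp.read().decode("utf-8")))
    except OSError:
        # urllib's URLError is a subclass of OSError: the server is not reachable.
        return False
```

Calling is_model_available("gemma4:e2b") before the first request gives a clear yes or no instead of an HTTP error in the middle of a task.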


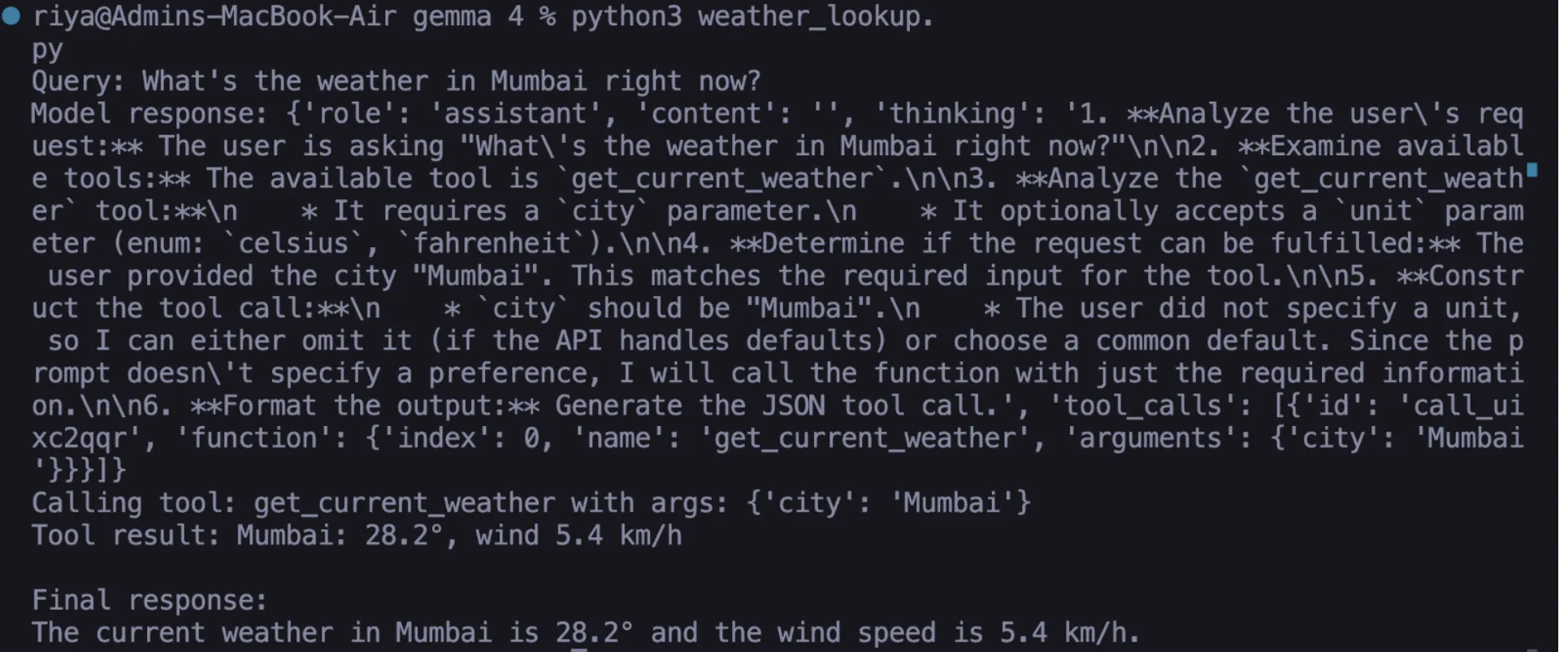

Hands-on Task 01: Live Weather Lookup

The first tool uses Open-Meteo, a free weather API that needs no key, to pull live data for any location based on its latitude/longitude coordinates. To use this API, you carry out the following steps:

1. Write your function in Python

    def get_current_weather(city: str, unit: str = "celsius") -> str:
        # Resolve the city name to coordinates with the Open-Meteo geocoding API.
        geo_url = f"https://geocoding-api.open-meteo.com/v1/search?name={urllib.parse.quote(city)}&count=1"
        with urllib.request.urlopen(geo_url) as r:
            geo = json.loads(r.read())
        if not geo.get("results"):
            return f"City {city} not found."
        loc = geo["results"][0]
        lat, lon = loc["latitude"], loc["longitude"]
        # Fetch the current temperature and wind speed for those coordinates.
        url = (f"https://api.open-meteo.com/v1/forecast"
               f"?latitude={lat}&longitude={lon}"
               f"&current=temperature_2m,wind_speed_10m"
               f"&temperature_unit={unit}")
        with urllib.request.urlopen(url) as r:
            data = json.loads(r.read())
        c = data["current"]
        return f"{city}: {c['temperature_2m']}°, wind {c['wind_speed_10m']} km/h"

2. Define your JSON schema

The schema tells Gemma 4 exactly what the function does and which arguments it expects when it is called.

    weather_tool = {
        "type": "function",
        "function": {
            "name": "get_current_weather",
            "description": "Get live temperature and wind speed for a city.",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {"type": "string", "description": "City name, e.g. Mumbai"},
                    "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]}
                },
                "required": ["city"]
            }
        }
    }

3. Send a query that triggers the tool call (and handle the response)

    messages = [{"role": "user", "content": "What's the weather in Mumbai right now?"}]
    response = call_ollama({"model": "gemma4:e2b", "messages": messages, "tools": [weather_tool], "stream": False})
    msg = response["message"]

    if "tool_calls" in msg:
        tc = msg["tool_calls"][0]
        fn = tc["function"]["name"]
        args = tc["function"]["arguments"]
        result = get_current_weather(**args)  # executed locally

        # Second pass: send the tool result back so the model can answer in prose.
        messages.append(msg)
        messages.append({"role": "tool", "content": result, "name": fn})
        final = call_ollama({"model": "gemma4:e2b", "messages": messages, "tools": [weather_tool], "stream": False})
        print(final["message"]["content"])

Output

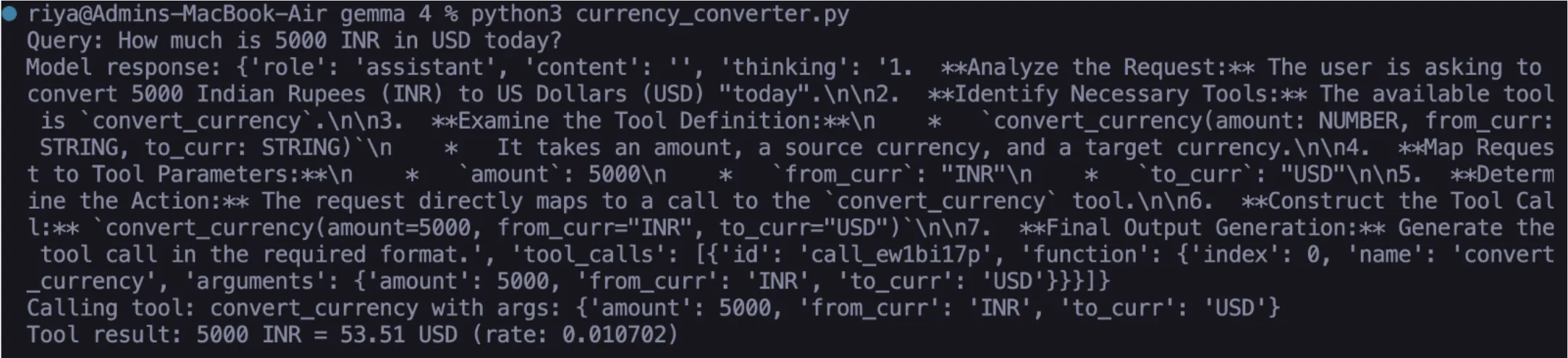
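
Task 01 passes the model's arguments straight into the function. As an optional safeguard, you could check them against the schema first. The validator below is a deliberately small sketch, not a full JSON Schema implementation: validate_args is a hypothetical helper that only checks required names, unknown names, and enum values, and the schema is repeated here so the block runs on its own.

```python
weather_tool = {
    "type": "function",
    "function": {
        "name": "get_current_weather",
        "description": "Get live temperature and wind speed for a city.",
        "parameters": {
            "type": "object",
            "properties": {
                "city": {"type": "string", "description": "City name, e.g. Mumbai"},
                "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
            },
            "required": ["city"],
        },
    },
}


def validate_args(tool: dict, args: dict) -> list:
    """Return a list of problems; an empty list means the arguments look usable."""
    params = tool["function"]["parameters"]
    props = params.get("properties", {})
    problems = []
    for name in params.get("required", []):
        if name not in args:
            problems.append(f"missing required argument: {name}")
    for name, value in args.items():
        if name not in props:
            problems.append(f"unexpected argument: {name}")
        elif "enum" in props[name] and value not in props[name]["enum"]:
            problems.append(f"invalid value for {name}: {value!r}")
    return problems


print(validate_args(weather_tool, {"city": "Mumbai"}))   # []
print(validate_args(weather_tool, {"unit": "kelvin"}))
# ['missing required argument: city', "invalid value for unit: 'kelvin'"]
```

If the list is not empty, one option is to send the problems back as the tool result so the model can correct its call.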

Hands-on Task 02: Live Currency Converter

A conventional LLM fails at this task: it hallucinates currency values and cannot provide accurate, up-to-date conversions. With the help of ExchangeRate-API, the converter can fetch the latest foreign exchange rates and convert accurately between two currencies.

Once you complete Steps 1-3 below, you will have a fully functioning converter driven by Gemma 4:

1. Write your Python function

    def convert_currency(amount: float, from_curr: str, to_curr: str) -> str:
        # Fetch the latest rates for the source currency.
        url = f"https://open.er-api.com/v6/latest/{from_curr.upper()}"
        with urllib.request.urlopen(url) as r:
            data = json.loads(r.read())
        # An unknown source currency yields a response without "rates".
        rate = data.get("rates", {}).get(to_curr.upper())
        if not rate:
            return f"Currency {to_curr} not found."
        converted = round(amount * rate, 2)
        return f"{amount} {from_curr.upper()} = {converted} {to_curr.upper()} (rate: {rate})"

2. Define your JSON schema

    currency_tool = {
        "type": "function",
        "function": {
            "name": "convert_currency",
            "description": "Convert an amount between two currencies at live rates.",
            "parameters": {
                "type": "object",
                "properties": {
                    "amount": {"type": "number", "description": "Amount to convert"},
                    "from_curr": {"type": "string", "description": "Source currency, e.g. USD"},
                    "to_curr": {"type": "string", "description": "Target currency, e.g. EUR"}
                },
                "required": ["amount", "from_curr", "to_curr"]
            }
        }
    }

3. Test your solution using a natural language query

    response = call_ollama({
        "model": "gemma4:e2b",
        "messages": [{"role": "user", "content": "How much is 5000 INR in USD today?"}],
        "tools": [currency_tool],
        "stream": False
    })

Gemma 4 processes the natural language query and produces a properly structured function call with amount = 5000, from_curr = "INR", and to_curr = "USD". You then execute that call and feed the result back to the model using the same steps described in Task 01.

Output

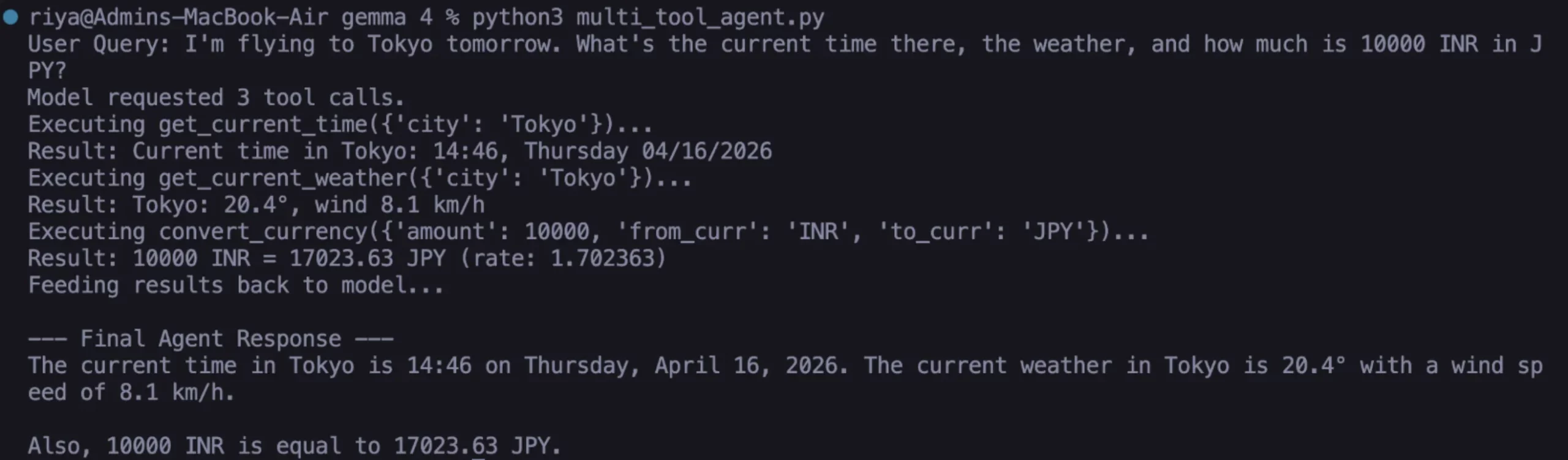
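
Tools that depend on the network can fail, for example when the rate service is unreachable. One possible way to keep the agent responsive is to turn any failure into a text result that the model can explain to the user. The safe_execute helper and the flaky function below are hypothetical names used only for this sketch.

```python
def safe_execute(registry: dict, name: str, args: dict) -> str:
    """Run a tool by name and always return a string, even on failure."""
    fn = registry.get(name)
    if fn is None:
        return f"Error: unknown tool '{name}'."
    try:
        return str(fn(**args))
    except Exception as exc:
        # Broad on purpose: any failure becomes text the model can report.
        return f"Error while running {name}: {exc}"


def flaky(amount: float) -> str:
    """Hypothetical tool that simulates an outage."""
    raise ValueError("rate service unavailable")


registry = {"convert": flaky, "double": lambda amount: amount * 2}

print(safe_execute(registry, "double", {"amount": 21}))    # 42
print(safe_execute(registry, "convert", {"amount": 10}))
# Error while running convert: rate service unavailable
print(safe_execute(registry, "missing", {}))               # Error: unknown tool 'missing'.
```

The returned string can be sent back as the "role": "tool" content, exactly like a successful result.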

Hands-on Task 03: Multi-Tool Agent

Gemma 4 excels at this task. You can supply the model with several tools at once and submit a compound query. The model coordinates all the required calls in a single pass; manual chaining is unnecessary.

1. Add the timezone tool

    def get_current_time(city: str) -> str:
        # Note: the zone is built as Asia/<city>, so this only works for cities
        # that are themselves zone names in the Asia region (e.g. Tokyo, Kolkata).
        url = f"https://timeapi.io/api/Time/current/zone?timeZone=Asia/{urllib.parse.quote(city)}"
        with urllib.request.urlopen(url) as r:
            data = json.loads(r.read())
        return f"Current time in {city}: {data['time']}, {data['dayOfWeek']} {data['date']}"

    time_tool = {
        "type": "function",
        "function": {
            "name": "get_current_time",
            "description": "Get the current local time in a city.",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {"type": "string", "description": "City name for timezone, e.g. Tokyo"}
                },
                "required": ["city"]
            }
        }
    }

2. Build the multi-tool agent loop

    # Maps the tool names the model sees to the Python functions that implement them.
    TOOL_FUNCTIONS = {
        "get_current_weather": get_current_weather,
        "convert_currency": convert_currency,
        "get_current_time": get_current_time,
    }

    def run_agent(user_query: str):
        all_tools = [weather_tool, currency_tool, time_tool]
        messages = [{"role": "user", "content": user_query}]

        response = call_ollama({"model": "gemma4:e2b", "messages": messages, "tools": all_tools, "stream": False})
        msg = response["message"]
        messages.append(msg)

        if "tool_calls" in msg:
            # Execute every requested call and append one tool message per result.
            for tc in msg["tool_calls"]:
                fn = tc["function"]["name"]
                args = tc["function"]["arguments"]
                result = TOOL_FUNCTIONS[fn](**args)
                messages.append({"role": "tool", "content": result, "name": fn})

            final = call_ollama({"model": "gemma4:e2b", "messages": messages, "tools": all_tools, "stream": False})
            return final["message"]["content"]

        # No tool was needed: return the model's direct answer.
        return msg.get("content", "")

3. Execute a compound/multi-intent query

    print(run_agent(
        "I am flying to Tokyo tomorrow. What is the current time there, "
        "the weather, and how much is 10000 INR in JPY?"
    ))

Here, we combined three distinct functions, backed by three separate APIs, and queried them in real time through natural language using one common pattern. The Gemma 4 model itself runs entirely on your machine through Ollama, with no cloud-hosted model involved; only the tool functions reach out to external APIs.
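
The agent above makes exactly two requests. If a model were to ask for further tools after seeing the first results, a bounded loop is one way to handle it. This is a sketch under assumptions, not part of the tasks above: run_agent_loop, scripted_chat, and the echo tool are hypothetical, and chat stands for any function that takes the messages and tool schemas and returns an assistant message.

```python
def run_agent_loop(user_query, chat, tools, registry, max_rounds=5):
    """Keep executing requested tools until the model answers in plain text."""
    messages = [{"role": "user", "content": user_query}]
    for _ in range(max_rounds):
        msg = chat(messages, tools)
        messages.append(msg)
        calls = msg.get("tool_calls") or []
        if not calls:
            return msg.get("content", "")   # plain answer: the loop is done
        for tc in calls:
            name = tc["function"]["name"]
            result = registry[name](**tc["function"]["arguments"])
            messages.append({"role": "tool", "content": str(result), "name": name})
    return "Stopped: too many tool rounds."


def scripted_chat(replies):
    """Build a fake chat function that returns canned replies in order."""
    pending = iter(replies)

    def chat(messages, tools):
        return next(pending)

    return chat


def tool_request(text):
    """Hypothetical assistant message that asks for the echo tool."""
    return {"role": "assistant", "content": "",
            "tool_calls": [{"function": {"name": "echo", "arguments": {"text": text}}}]}


demo_chat = scripted_chat([
    tool_request("first"),
    tool_request("second"),
    {"role": "assistant", "content": "Done after two tool rounds."},
])
print(run_agent_loop("demo", demo_chat, tools=[], registry={"echo": lambda text: text}))
# Done after two tool rounds.
```

To try it against a real model, chat could be a thin wrapper that builds the payload and returns the "message" field of the call_ollama response. The max_rounds cap keeps a confused model from looping forever.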

What Makes Gemma 4 Different for Agentic AI?

Other open-weight models can call tools, but they do not always do so reliably, and that is what sets Gemma 4 apart. The model consistently produces valid JSON arguments, handles optional parameters correctly, and decides when to answer from its own knowledge rather than call a tool. As you keep using it, keep the following in mind:

- Schema quality is critically important. If your description field is vague, the model will have a hard time determining the arguments for your tool. Be specific about units, formats, and examples.

- Gemma 4 honors the required array: it respects the distinction between required and optional parameters.

- Once the tool returns a result, that result becomes context for the final pass through the "role": "tool" messages you send. The richer the result from the tool, the richer the response will be.

- A common mistake is to return the tool result as "role": "user" instead of "role": "tool". The model will then not attribute it correctly and may try to request the call again.
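
The last two points can be captured in a small helper that always produces a correctly labelled tool message. The name tool_message is made up for this sketch; the "name" key follows the message format used in the tasks above, and non-string results are serialized to JSON so that structured output reaches the model intact.

```python
import json


def tool_message(name: str, result) -> dict:
    """Wrap a tool result in a message with the "tool" role."""
    content = result if isinstance(result, str) else json.dumps(result)
    return {"role": "tool", "name": name, "content": content}


print(tool_message("get_current_weather", "Mumbai: 31.2°, wind 14.0 km/h"))
print(tool_message("convert_currency", {"amount": 5000, "to": "USD"}))
```

Routing every result through one helper makes it hard to send a tool result with the "user" role by accident.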


Conclusion

You have built a real AI agent that uses Gemma 4’s function-calling feature, and it runs entirely locally. The agent uses the same architectural components found in production systems. Possible next steps include:

- adding a file system tool that allows reading and writing local files on demand;

- using a SQL database as a backend for natural language data queries;

- creating a memory tool that writes session summaries to disk, giving the agent the ability to recall past conversations.
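
As a starting point for the first of these ideas, here is a hedged sketch of a read-only file tool. The function name read_text_file, its parameters, and the schema are all hypothetical; the function refuses paths outside a base directory and truncates long files so the result fits in the model's context.

```python
from pathlib import Path


def read_text_file(path: str, base_dir: str = ".", max_chars: int = 2000) -> str:
    """Read a text file, but only from inside base_dir."""
    base = Path(base_dir).resolve()
    target = (base / path).resolve()
    try:
        target.relative_to(base)          # raises ValueError if outside base
    except ValueError:
        return "Error: path is outside the allowed directory."
    if not target.is_file():
        return f"Error: {path} not found."
    return target.read_text(encoding="utf-8", errors="replace")[:max_chars]


# Schema in the same shape as the other tools in this guide.
file_tool = {
    "type": "function",
    "function": {
        "name": "read_text_file",
        "description": "Read a UTF-8 text file from the agent's working directory.",
        "parameters": {
            "type": "object",
            "properties": {
                "path": {"type": "string", "description": "Relative file path, e.g. notes/todo.txt"}
            },
            "required": ["path"],
        },
    },
}
```

Only path is exposed in the schema, so the model cannot change base_dir or max_chars; those stay under the control of your code.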

The open-weight AI agent ecosystem is evolving quickly. Gemma 4’s native support for structured function calling gives you substantial autonomous functionality without relying on a cloud-hosted model. Start small, build a working system, and the building blocks for your next projects will be ready for you to chain together.
