
Telcos are exploring NVIDIA’s AI grid, but edge GPU deployment currently lacks a strong latency or value case; only physical AI use cases justify it, with a gradual rollout expected as networks move toward 6G.

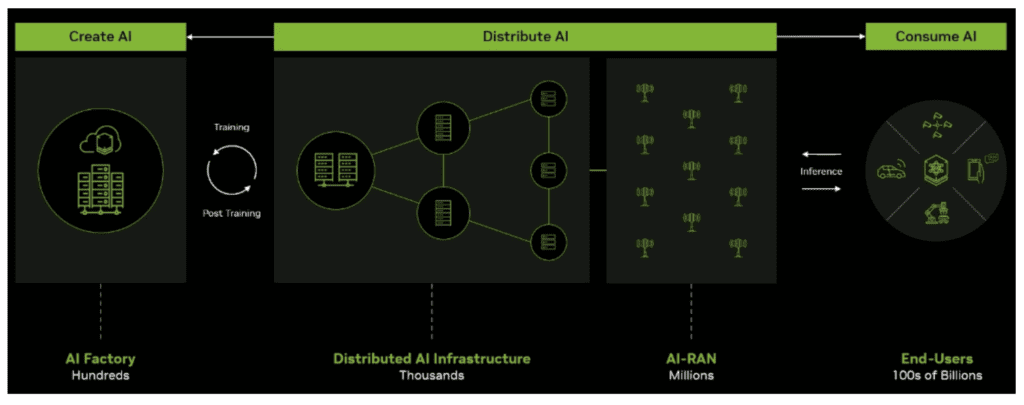

The diffusion of AI in telco networks continues with NVIDIA’s announcement of an AI grid concept, alongside recent telco announcements about the adoption of AI infrastructure, including from T-Mobile US, Comcast, SoftBank, and others. For mission-critical applications, token generation will likely need to take place at the far edge of the network to take advantage of low latency, particularly for physical AI applications such as robotics, connected cars, and other near-real-time use cases.

T-Mobile has argued that physical AI starts with intelligent networks, and NVIDIA has said that telcos are in an ideal position to become a key component of this new AI grid concept. Naturally, the company that will benefit the most from its realization is NVIDIA, which would provide both hardware and software. The graphic below is NVIDIA’s interpretation of the AI grid, which is purportedly already being built by telcos.

The case for lower latency is weak

A strong, if not the strongest, argument for deploying GPUs at the near/far edge of the network is latency, which matters for applications that require near ‘real-time’ actuation and control. The example shared at GTC showed a chatbot agent with a 2,000 ms round trip versus the same agent with 400 ms latency, which does indeed make a significant difference to the user experience.

But network latency does not usually contribute much to the all-important ‘time-to-first-token’ (TTFT) metric. In a typical example, network ‘round-trip time’ (RTT) latency may be as high as 100 ms, but other latency components apply regardless of where the inference server sits, including DNS resolution and tunnel establishment. For a medium prompt size of 1,000 tokens, a typical prefill may take 160 ms, while the decoding phase may take several seconds to complete.
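The latency budget described above can be sketched as simple arithmetic. The 100 ms RTT and 160 ms prefill come from the text; the 3,000 ms decode time (standing in for "several seconds") and the 10 ms edge RTT are illustrative assumptions, and the DNS and tunnel overheads are left out because they apply wherever the server sits.

```python
# Figures from the text.
NETWORK_RTT_MS = 100.0   # network round-trip time to a central inference server
PREFILL_MS = 160.0       # prefill for a ~1,000-token prompt

# Assumptions for illustration only (not from the text).
ASSUMED_DECODE_MS = 3000.0   # stands in for "several seconds" of decoding
ASSUMED_EDGE_RTT_MS = 10.0   # hypothetical RTT to an edge inference server


def ttft_ms(rtt_ms: float, prefill_ms: float = PREFILL_MS) -> float:
    """Simplified time-to-first-token: network RTT plus prefill."""
    return rtt_ms + prefill_ms


def total_response_ms(rtt_ms: float, decode_ms: float = ASSUMED_DECODE_MS) -> float:
    """Simplified full-response time: TTFT plus decode."""
    return ttft_ms(rtt_ms) + decode_ms


central_total = total_response_ms(NETWORK_RTT_MS)
edge_total = total_response_ms(ASSUMED_EDGE_RTT_MS)
saving_pct = 100.0 * (central_total - edge_total) / central_total

print(f"Central: {central_total:.0f} ms, edge: {edge_total:.0f} ms, "
      f"saving: {saving_pct:.1f}%")
```

Under these assumptions, moving inference to the edge trims only a few percent off the full response time, because decoding dominates. A different assumed decode time changes the percentage but not the overall shape of the result.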

Moving the inference server closer to the end user is therefore unlikely to make any material difference to the user experience. It is simply not financially viable, today or in the next two to three years, to deploy servers throughout a country to reduce this latency further. Deploying servers at the cell site is more complicated still, because the addressable subscriber base and geographical area served by a single site would restrict the business case even further, except for very-high-value and highly critical use cases.

The discussion flips entirely if the use case becomes physical AI, with the latency budget coming down to around 10 ms. In these cases, cloud inference becomes untenable and inference needs to move to the edge of the network: a 100 ms latency for an autonomous vehicle moving at 100 km/h would mean the vehicle is blind for 2.8 meters. The same could be said for video surveillance, drone delivery robots, and a host of other applications.
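The "blind distance" figure above is just speed multiplied by latency. A minimal sketch of that conversion follows; the function name is hypothetical and chosen for illustration.

```python
def blind_distance_m(speed_kmh: float, latency_ms: float) -> float:
    """Distance travelled (in meters) while waiting latency_ms for a response."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * latency_ms / 1000.0


# The example from the text: 100 km/h with 100 ms latency.
print(f"{blind_distance_m(100, 100):.1f} m")

# The same vehicle with the ~10 ms budget cited for physical AI.
print(f"{blind_distance_m(100, 10):.2f} m")
```

At the roughly 10 ms budget cited for physical AI, the same vehicle covers about a tenth of that distance, which is why the latency argument changes for this class of use case.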

But the question is: what is the cost of a distributed AI grid, say, for T-Mobile in the US?

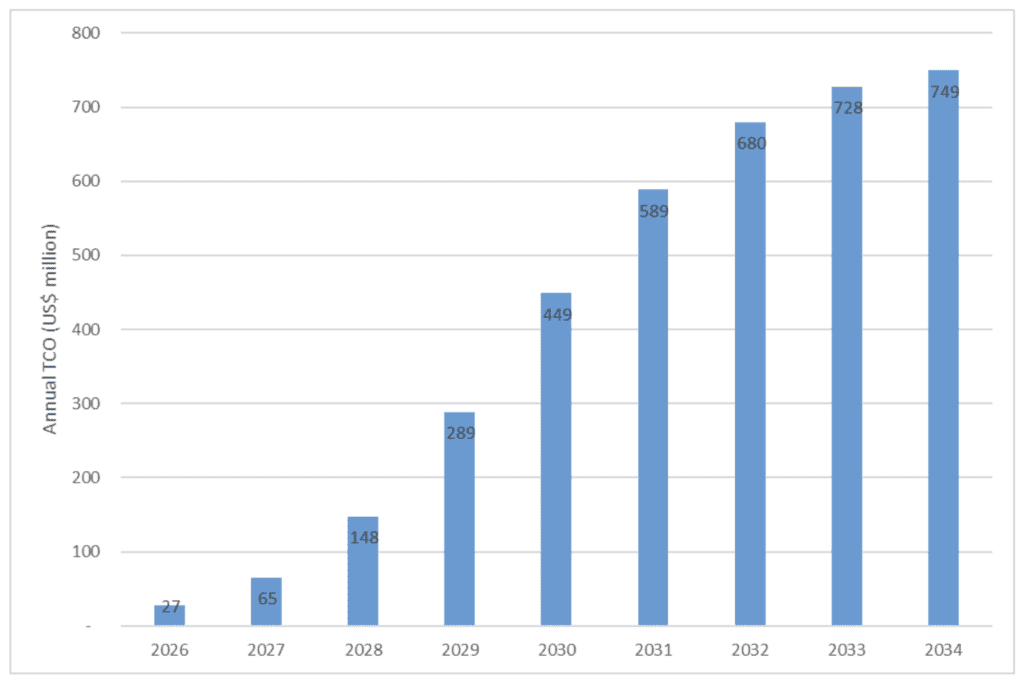

Quantifying TCO for GPU-enabled RAN

T-Mobile US said at GTC that kinetic tokens will be a huge opportunity for telcos globally and that, to take advantage of their real estate, they will need AI-RAN systems and GPUs in their networks. If we assume that T-Mobile US operates around 13,000 rooftop cell sites in the US, that it starts retrofitting them with AI-RAN servers (in this case an NVIDIA ARC-1 server, costing $60,000, to power three cells), and that it completes this deployment with 100% rooftop GPU coverage in 2035, then the total cumulative cost will be $3.7 billion, including deployment, cooling, and other ancillary costs.

Chart 1, below, illustrates the annual TCO for this example deployment.

Spread across nine years, the investment to deploy an AI grid becomes more manageable, assuming revenue scales accordingly. Moreover, the $3.7 billion estimate is hardly a blip in the NVIDIA universe, but telcos and their investors will need a strong business case to spend this amount, especially when it is on the order of a new-generation radio network deployment.
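The headline figures above can be broken down with some back-of-the-envelope arithmetic. The site count, server price, total cost, and nine-year horizon are taken from the text; one server per rooftop site and a flat annual spend are simplifying assumptions made here, so the actual yearly profile may look quite different.

```python
# Figures from the text.
ROOFTOP_SITES = 13_000
SERVER_COST_USD = 60_000
TOTAL_TCO_USD = 3_700_000_000
ROLLOUT_YEARS = 9

# Assumption (not from the text): one server per rooftop site,
# since each server is described as powering three cells.
ASSUMED_SERVERS_PER_SITE = 1

hardware_capex = ROOFTOP_SITES * ASSUMED_SERVERS_PER_SITE * SERVER_COST_USD
other_costs = TOTAL_TCO_USD - hardware_capex          # deployment, cooling, ancillary
hardware_share = hardware_capex / TOTAL_TCO_USD
tco_per_site = TOTAL_TCO_USD / ROOFTOP_SITES
avg_annual_spend = TOTAL_TCO_USD / ROLLOUT_YEARS      # flat-spend simplification

print(f"Server hardware:      ${hardware_capex / 1e6:,.0f}M "
      f"({hardware_share:.0%} of total)")
print(f"Other costs:          ${other_costs / 1e9:,.2f}B")
print(f"TCO per rooftop site: ${tco_per_site:,.0f}")
print(f"Average annual spend: ${avg_annual_spend / 1e6:,.0f}M")
```

Under the one-server-per-site assumption, the servers themselves account for roughly a fifth of the total, with the remainder attributable to deployment, cooling, and other ancillary costs.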

Today, there is no justification for deploying GPUs at the cell site, which means that AI inference will likely start out centrally deployed and then gradually fan out to cell sites. Indeed, AI inference servers will probably be deployed first in core network locations, of which there are usually fewer than 10 across a country, and then gradually spread out as the need for lower latency arises.

Many use cases, including video surveillance, autonomous driving, last-mile delivery robotics, smart glasses, and AR/VR applications, represent scenarios where edge inference is not optional but architecturally necessary. Initial deployments of the AI grid will likely future-proof the telco network, enabling incremental upgrades as adoption of these use cases widens. Telcos that understand this earliest will set the foundation for 6G and solidify their position in the AI super cycle.