Boosting algorithms are among the best-performing algorithms in machine learning. They are known for strong predictive power and accuracy. All of these boosting methods are based on a common idea: each model learns from the errors of the models before it. Every new model aims to correct the earlier errors. In this way, a group of weak learners is turned into a strong team.

This article compares five popular boosting methods: Gradient Boosting, AdaBoost, XGBoost, CatBoost, and LightGBM. It describes how each technique works and highlights the main differences between them, along with their strengths and weaknesses. It also covers when to use each method, and it includes performance benchmarks and code samples.

Introduction to Boosting

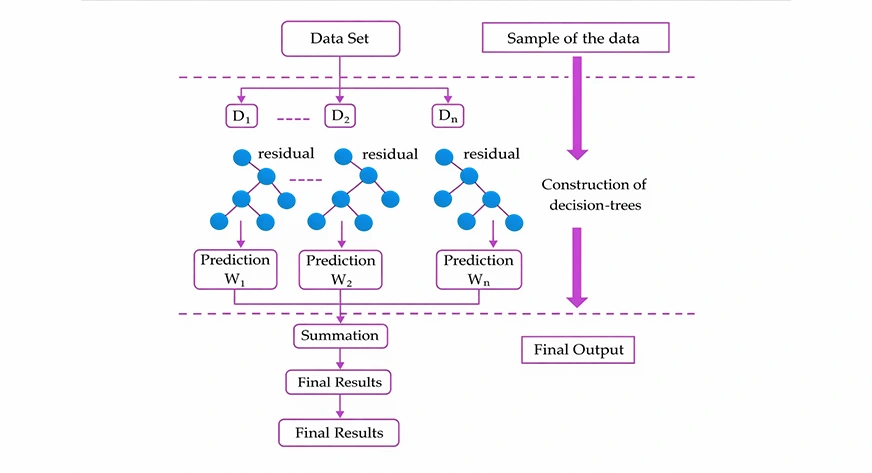

Boosting is an ensemble learning technique. It combines multiple weak learners, commonly shallow decision trees, into a strong model. The models are trained sequentially, and each new model focuses on the errors made by the previous one.

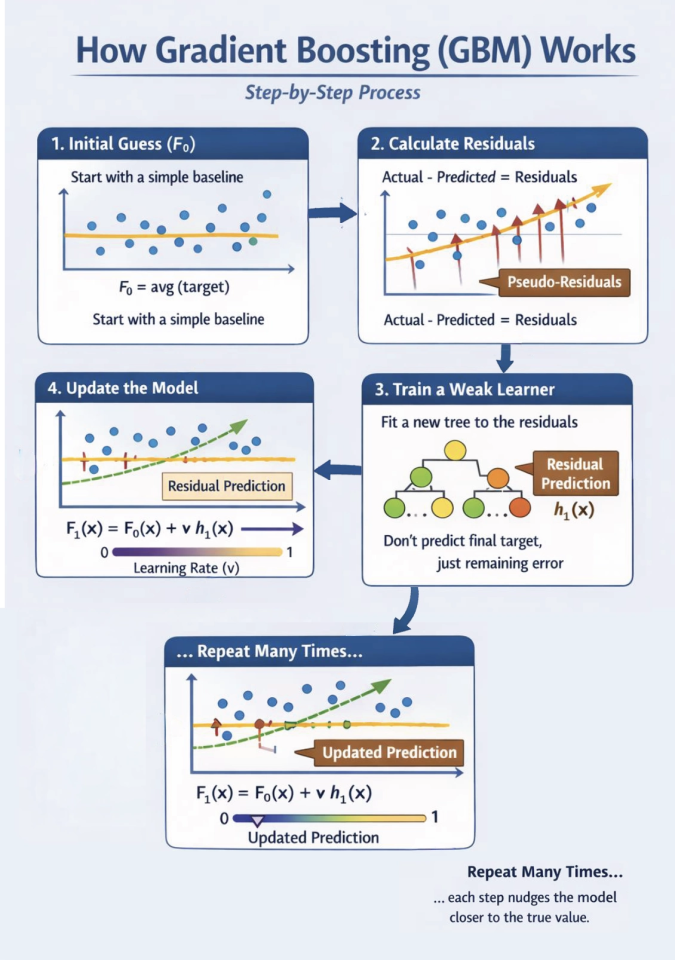

It starts with a base model; in regression, this can simply predict the average of the target. Residuals are then computed as the difference between the actual and predicted values. A new weak learner is trained to predict these residuals, which helps correct the previous errors. The process repeats until the error is small enough or a stopping condition is reached.

Different boosting methods apply this idea in different ways. Some reweight data points; others minimize a loss function through gradient descent. These differences affect performance and flexibility. In every case, the final prediction is a weighted combination of all the weak learners.

AdaBoost (Adaptive Boosting)

AdaBoost is one of the first boosting algorithms. It was developed in the mid-1990s. It builds models step by step, and each successive model concentrates on the errors made by the previous ones. The key idea is adaptive reweighting of data points.

How It Works (The Core Logic)

AdaBoost works sequentially. It doesn’t train all its models at once; it builds them one after another.

- Start Equal: Give every data point the same weight.

- Train a Weak Learner: Use a simple model (usually a Decision Stump, a tree with just one split).

- Find Errors: See which data points the model got wrong.

- Calculate Significance (alpha): Assign a score to the learner based on its weighted error. More accurate learners get a louder “voice” in the final decision.

- Reweight (the size of each update depends on alpha):

  - Increase weights for the “wrong” points. They become more important.

  - Decrease weights for the “correct” points. They become less important.

- Repeat: The next learner focuses heavily on the points previously missed.

- Final Vote: Combine all learners. Their weighted votes determine the final prediction.
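The steps above can be sketched in plain Python. This is a minimal, illustrative version rather than any library's implementation: it assumes binary labels encoded as +1/-1, uses decision stumps as the weak learners, and applies the standard discrete AdaBoost formulas $\alpha = \tfrac{1}{2}\ln\frac{1-\varepsilon}{\varepsilon}$ for a learner's significance (where $\varepsilon$ is its weighted error) and $w_i \leftarrow w_i\, e^{-\alpha y_i h(x_i)}$ for the reweighting. The function names (`fit_stump`, `adaboost`, `adaboost_predict`) are hypothetical.

```python
import math


def fit_stump(X, y, w):
    """Return (weighted_error, feature, threshold, polarity) for the best stump."""
    best = None
    for f in range(len(X[0])):
        for thr in sorted({row[f] for row in X}):
            for polarity in (1, -1):
                # The stump predicts `polarity` when x[f] <= thr, else -polarity.
                err = sum(wi for row, yi, wi in zip(X, y, w)
                          if (polarity if row[f] <= thr else -polarity) != yi)
                if best is None or err < best[0]:
                    best = (err, f, thr, polarity)
    return best


def stump_predict(stump, row):
    _, f, thr, polarity = stump
    return polarity if row[f] <= thr else -polarity


def adaboost(X, y, n_rounds=10):
    n = len(X)
    w = [1.0 / n] * n                              # start equal
    learners = []
    for _ in range(n_rounds):
        stump = fit_stump(X, y, w)                 # train a weak learner
        err = max(stump[0], 1e-10)                 # guard against log(0) for a perfect stump
        if err >= 0.5:                             # no better than chance: stop
            break
        alpha = 0.5 * math.log((1 - err) / err)    # significance ("voice") of this learner
        learners.append((alpha, stump))
        # Reweight: exp(+alpha) for misclassified points, exp(-alpha) for correct ones.
        w = [wi * math.exp(-alpha * yi * stump_predict(stump, row))
             for row, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]               # renormalize so the weights sum to 1
    return learners


def adaboost_predict(learners, row):
    # Final vote: sign of the alpha-weighted sum of stump outputs.
    score = sum(alpha * stump_predict(stump, row) for alpha, stump in learners)
    return 1 if score >= 0 else -1


X = [[1], [2], [3], [4], [5], [6]]
y = [1, 1, -1, -1, 1, 1]          # no single stump can separate this pattern
model = adaboost(X, y, n_rounds=3)
print([adaboost_predict(model, row) for row in X])   # [1, 1, -1, -1, 1, 1]
```

On this toy dataset a single stump misclassifies two points, but after three rounds the weighted vote fits all six. For real work, a library implementation such as scikit-learn's `AdaBoostClassifier` is the practical choice.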

Strengths & Weaknesses

| Strengths | Weaknesses |
|---|---|
| Simple: Easy to set up and understand. | Sensitive to Noise: Outliers get huge weights, which can hurt the model. |
| Resistant to Overfitting: Often resilient on clean, simple data. | Sequential: Training is slow because learners cannot be built in parallel. |
| Versatile: Works for both classification and regression. | Outdated: Modern tools like XGBoost often outperform it on complex data. |

Gradient Boosting (GBM): The “Error Corrector”

Gradient Boosting is a powerful ensemble method. It builds models one after another, and each new model tries to fix the errors of the previous ones. Instead of reweighting points like AdaBoost, it focuses on residuals (the leftover errors).

How It Works (The Core Logic)

GBM uses a technique called gradient descent to minimize a loss function: each new tree takes a step in the direction that reduces the loss.

- Initial Guess ($F_0$): Start with a simple baseline. Usually, this is just the average of the target values.

- Calculate Residuals: Find the difference between the actual value and the current prediction. These “pseudo-residuals” are the negative gradient of the loss function; for squared-error loss they reduce to the ordinary residuals.

- Train a Weak Learner: Fit a new decision tree ($h_m$) specifically to predict these residuals. It is not trying to predict the final target, just the remaining error.

- Update the Model: Add the new tree’s prediction to the previous ensemble, scaled by a learning rate ($\nu$) to reduce overfitting: $F_m(x) = F_{m-1}(x) + \nu\, h_m(x)$.

- Repeat: Do this many times. Each step nudges the model closer to the true values.
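The loop above can be sketched in a few lines of plain Python. This is an illustrative version under stated assumptions, not scikit-learn's implementation: it assumes squared-error loss (so the pseudo-residuals are ordinary residuals), depth-1 regression trees as the weak learners $h_m$, and a fixed learning rate `lr` playing the role of $\nu$. All function names are hypothetical.

```python
def fit_regression_stump(X, r):
    """Least-squares depth-1 tree: pick the split that best fits the residuals r."""
    best = None
    for f in range(len(X[0])):
        values = sorted({row[f] for row in X})
        for thr in values[:-1]:  # splitting at the largest value would leave one side empty
            left = [ri for row, ri in zip(X, r) if row[f] <= thr]
            right = [ri for row, ri in zip(X, r) if row[f] > thr]
            lv, rv = sum(left) / len(left), sum(right) / len(right)
            sse = (sum((ri - lv) ** 2 for ri in left)
                   + sum((ri - rv) ** 2 for ri in right))
            if best is None or sse < best[0]:
                best = (sse, f, thr, lv, rv)
    if best is None:  # every feature is constant: nothing to split on
        return lambda row: 0.0
    _, f, thr, lv, rv = best
    return lambda row: lv if row[f] <= thr else rv


def gradient_boost(X, y, n_trees=50, lr=0.1):
    f0 = sum(y) / len(y)                  # F_0: the mean of the targets
    pred = [f0] * len(y)
    trees = []
    for _ in range(n_trees):
        # For squared error, the negative gradient is the plain residual y - F.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        h = fit_regression_stump(X, residuals)   # h_m is fit to the residuals only
        trees.append(h)
        # F_m(x) = F_{m-1}(x) + lr * h_m(x)
        pred = [pi + lr * h(row) for pi, row in zip(pred, X)]
    return f0, lr, trees


def gb_predict(model, row):
    f0, lr, trees = model
    return f0 + sum(lr * h(row) for h in trees)


X = [[1], [2], [3], [4]]
y = [1.0, 1.0, 5.0, 5.0]
model = gradient_boost(X, y, n_trees=50, lr=0.1)
print([round(gb_predict(model, row), 2) for row in X])   # [1.01, 1.01, 4.99, 4.99]
```

With `lr=0.1`, each round removes only 10% of the remaining residual here, which is why many small steps are needed; a smaller learning rate generally calls for more trees.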

Strengths & Weaknesses

| Strengths | Weaknesses |
|---|---|
| Highly Flexible: Works with any differentiable loss function (MSE, Log-Loss, etc.). | Slow Training: Trees are built one after another, which makes it hard to parallelize. |
| Superior Accuracy: Often beats other models on structured/tabular data. | Data Prep Required: You must convert categorical data to numbers first. |
| Feature Importance: It is easy to see which variables are driving predictions. | Tuning Sensitive: Requires careful tuning of the learning rate and the number of trees. |

XGBoost: The “Extreme” Evolution

XGBoost stands for eXtreme Gradient Boosting. It is a faster, more accurate, and more robust version of Gradient Boosting (GBM). It became famous as the engine behind many winning Kaggle solutions.

Key Improvements (Why It’s “Extreme”)

Unlike standard GBM, XGBoost combines smart math and engineering tricks to improve performance.

- Regularization: It adds $L1$ and $L2$ penalties on the leaf values, plus a penalty for every extra leaf. This discourages overly complex trees and helps prevent the model from “overfitting”, or memorizing the data.

- Second-Order Optimization: It uses both first-order gradients and second-order gradients (Hessians). This gives a closer approximation of the loss, so the best leaf values and split gains can be computed directly from sums of gradients and Hessians.

- Smart Tree Pruning: It grows each tree to the maximum depth first. Then it prunes, from the bottom up, branches whose gain does not exceed a threshold (gamma). This “look-ahead” approach keeps a split that looks weak on its own if a split below it turns out to be valuable, while still removing useless branches.

- Parallel Processing: Trees are still built one after another, but within each tree XGBoost searches for the best split across features in parallel. This makes it very fast.

- Missing Value Handling: You don’t need to fill in missing data. At each split, XGBoost learns a default direction for missing values (“NaNs”) by trying both branches and keeping the one with the higher gain.
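To make the regularization and second-order ideas concrete, here is a minimal sketch of the split-gain calculation described in the XGBoost paper. For a candidate split, $G_L, H_L$ and $G_R, H_R$ are the sums of gradients and Hessians on each side, $\lambda$ is the L2 penalty and $\gamma$ the per-leaf penalty: $\text{Gain} = \tfrac{1}{2}\left[\frac{G_L^2}{H_L+\lambda} + \frac{G_R^2}{H_R+\lambda} - \frac{(G_L+G_R)^2}{H_L+H_R+\lambda}\right] - \gamma$, and the optimal leaf value is $w^* = -G/(H+\lambda)$. The L1 term is applied here as soft-thresholding of $G$, an assumption modeled on how XGBoost treats its alpha parameter. The helper names are hypothetical and are not XGBoost's API.

```python
def soft_threshold(g, alpha):
    """L1 shrinkage of a gradient sum (alpha = L1 strength, an assumed treatment)."""
    if g > alpha:
        return g - alpha
    if g < -alpha:
        return g + alpha
    return 0.0


def score(G, H, lam, alpha):
    """Structure score of one leaf: T_alpha(G)^2 / (H + lambda)."""
    t = soft_threshold(G, alpha)
    return t * t / (H + lam)


def leaf_weight(G, H, lam=1.0, alpha=0.0):
    """Optimal leaf value: -T_alpha(G) / (H + lambda)."""
    return -soft_threshold(G, alpha) / (H + lam)


def split_gain(GL, HL, GR, HR, lam=1.0, alpha=0.0, gamma=0.0):
    """Second-order gain of a split, minus the per-leaf penalty gamma."""
    return 0.5 * (score(GL, HL, lam, alpha) + score(GR, HR, lam, alpha)
                  - score(GL + GR, HL + HR, lam, alpha)) - gamma


def best_default_direction(GL, HL, GR, HR, Gm, Hm, **reg):
    """Send the missing-value rows (sums Gm, Hm) left, then right; keep the better gain."""
    left = split_gain(GL + Gm, HL + Hm, GR, HR, **reg)
    right = split_gain(GL, HL, GR + Gm, HR + Hm, **reg)
    return ("left", left) if left >= right else ("right", right)


# Squared error: g_i = prediction - y_i and h_i = 1.
# Current prediction 3.0; left child targets [1, 1], right child targets [5, 5].
print(split_gain(GL=4.0, HL=2.0, GR=-4.0, HR=2.0))   # about 5.333 (16/3)
print(leaf_weight(4.0, 2.0))                         # about -1.333, shrunk from the unregularized -2.0
# One extra row whose feature is missing, with target 5 (g = -2, h = 1):
print(best_default_direction(4.0, 2.0, -4.0, 2.0, Gm=-2.0, Hm=1.0))   # ('right', about 6.833)
```

Note how $\lambda$ pulls the leaf value toward zero and how a large enough $\gamma$ makes the gain negative, which is exactly the condition under which the pruning step removes a split.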

Strengths & Weaknesses

| Strengths | Weaknesses |
|---|---|
| Top Performance: Often the most accurate model for tabular data. | Limited Categorical Support: Categories usually need manual label or one-hot encoding (newer versions add opt-in native support via enable_categorical). |
| Blazing Fast: Optimized in C++ with GPU and CPU parallelization. | Memory Hungry: Can use a lot of RAM on huge datasets. |
| Robust: Built-in tools handle missing data and help prevent overfitting. | Complex Tuning: It has many hyperparameters (like eta, gamma, and lambda). |

LightGBM: The “High-Speed” Alternative

LightGBM is a gradient boosting framework released by Microsoft. It is designed for high speed and low memory usage, and it is the go-to choice for huge datasets with millions of rows.

Key Improvements (How It Saves Time)

LightGBM is “light” because it uses clever tricks to avoid examining every value of every data point.

- Histogram-Based Splitting: Traditional pre-sorted algorithms sort every feature value to find a split. LightGBM groups values into “bins” (like a bar chart) and only evaluates split points at the bin boundaries. This is much faster and uses less RAM.

- Leaf-wise Growth: Many models (including XGBoost by default) grow trees level-wise, filling out an entire horizontal row before moving deeper. LightGBM grows leaf-wise: it finds the single leaf whose split reduces the loss the most and splits it next. This produces deeper, asymmetric trees that often reach a lower loss with the same number of leaves.

- GOSS (Gradient-based One-Side Sampling): It assumes data points with small gradients are already “learned.” It keeps all rows with large gradients but only a random sample of the “easy” rows, which it upweights to compensate. This focuses training on the hardest parts of the dataset.

- EFB (Exclusive Feature Bundling): In sparse data (lots of zeros), many features are rarely nonzero at the same time. LightGBM bundles such mutually exclusive features into a single feature, which reduces the number of features the model has to process.

- Native Categorical Support: You don’t need to one-hot encode. You can tell LightGBM which columns are categorical, and it will find an efficient way to group their values at each split.
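Two of these ideas, histogram-based splitting and GOSS, fit in a short sketch. It is a simplification rather than LightGBM's actual code: the quantile-style binning, the default of four bins, the second-order gain formula used to score bin boundaries, and the sampling fractions `a = b = 0.2` are illustrative assumptions, and all function names are hypothetical. The $(1-a)/b$ upweighting of the sampled small-gradient rows follows the GOSS description in the LightGBM paper; in the library, the two fractions correspond to the `top_rate` and `other_rate` parameters.

```python
import random
from bisect import bisect_right


def make_bin_edges(values, max_bins=4):
    """Quantile-style bin edges: a simplified stand-in for LightGBM's binning."""
    s = sorted(values)
    edges = []
    for k in range(1, max_bins):
        e = s[k * len(s) // max_bins]
        if not edges or e > edges[-1]:    # skip duplicate edges
            edges.append(e)
    return edges


def to_bins(values, edges):
    """Map each raw value to a small integer bin id (0 .. len(edges))."""
    return [bisect_right(edges, v) for v in values]


def best_histogram_split(bins, grads, hess, weights, n_bins, lam=1.0):
    """Accumulate per-bin gradient sums once, then scan only the bin boundaries."""
    G, H = [0.0] * n_bins, [0.0] * n_bins
    for b, g, h, w in zip(bins, grads, hess, weights):
        G[b] += w * g
        H[b] += w * h
    G_tot, H_tot = sum(G), sum(H)
    best_bin, best_gain = None, 0.0
    GL = HL = 0.0
    for b in range(n_bins - 1):           # candidate split: bins <= b go left
        GL += G[b]
        HL += H[b]
        GR, HR = G_tot - GL, H_tot - HL
        gain = 0.5 * (GL ** 2 / (HL + lam) + GR ** 2 / (HR + lam)
                      - G_tot ** 2 / (H_tot + lam))
        if gain > best_gain:
            best_bin, best_gain = b, gain
    return best_bin, best_gain


def goss_weights(grads, a=0.2, b=0.2, seed=0):
    """GOSS: keep the top a-fraction by |gradient|, randomly sample a b-fraction
    of the rest, and upweight those sampled rows by (1 - a) / b."""
    n = len(grads)
    order = sorted(range(n), key=lambda i: abs(grads[i]), reverse=True)
    n_top, n_rand = int(a * n), int(b * n)
    rest = random.Random(seed).sample(order[n_top:], n_rand)
    weights = [0.0] * n                   # 0.0 means the row is skipped this iteration
    for i in order[:n_top]:
        weights[i] = 1.0
    for i in rest:
        weights[i] = (1 - a) / b
    return weights


x = [1, 2, 3, 4, 5, 6, 7, 8]
grads = [5.0] * 4 + [-5.0] * 4           # g = prediction - target; prediction 5, targets 0 or 10
hess = [1.0] * 8
edges = make_bin_edges(x)                # [3, 5, 7]
bins = to_bins(x, edges)                 # [0, 0, 1, 1, 2, 2, 3, 3]
print(best_histogram_split(bins, grads, hess, [1.0] * 8, len(edges) + 1))   # (1, 80.0)
```

The split search touches four bin totals instead of eight sorted values; on real data with millions of rows and a few hundred bins, that gap is where the speed and memory savings come from. Passing `goss_weights(grads)` instead of the all-ones weights trains on the GOSS subsample.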

Strengths & Weaknesses

| Strengths | Weaknesses |
|---|---|
| Fastest Training: Often 10x–15x faster than the original GBM on large data. | Overfitting Risk: Leaf-wise growth can overfit small datasets very quickly. |
| Low Memory: Histogram binning compresses data, saving large amounts of RAM. | Sensitive to Hyperparameters: You must carefully tune num_leaves and max_depth. |
| Highly Scalable: Built for big data and distributed/GPU computing. | Complex Trees: The resulting trees are often lopsided and harder to visualize. |

CatBoost: The “Categorical” Specialist

CatBoost, developed by Yandex, is short for Categorical Boosting. It is designed to handle datasets with many categorical features (like city names or user IDs) natively and accurately, without heavy data preparation.

Key Improvements (Why It’s Distinctive)

CatBoost changes both the structure of the trees and the way it processes data, in order to reduce overfitting and target leakage.

- Symmetric (Oblivious) Trees: Unlike most other models, CatBoost builds balanced trees in which every node at the same depth uses the exact same split condition.

  Benefit: This structure acts as a form of regularization that helps prevent overfitting. It also makes inference (making predictions) extremely fast.

- Ordered Boosting: Most models compute category statistics on the entire dataset, which causes “target leakage” (the model “cheating” by seeing the answer early). CatBoost uses random permutations instead: each data point is encoded using only the information from points that come before it in a random order.

- Native Categorical Handling: You don’t need to manually convert text categories to numbers.

  - Low-cardinality features (few distinct values): It uses one-hot encoding.

  - High-cardinality features: It uses target statistics computed in the ordered way described above, which avoids the leakage.

- Minimal Tuning: CatBoost is known for its excellent “out-of-the-box” defaults. You often get great results without touching the hyperparameters.
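Ordered target statistics and oblivious trees can both be sketched briefly. This illustrates the ideas only: the smoothing formula (running target sum plus `a * prior`, divided by running count plus `a`), the single permutation, and the bit-based leaf indexing are simplifying assumptions, not CatBoost's exact internals, and the function names are hypothetical.

```python
import random


def ordered_target_stats(categories, targets, prior, a=1.0, seed=0):
    """Encode each row from the rows that precede it in one random permutation,
    so a row's own target never contributes to its own encoding."""
    order = list(range(len(categories)))
    random.Random(seed).shuffle(order)
    sums, counts = {}, {}
    encoded = [0.0] * len(categories)
    for i in order:
        c = categories[i]
        encoded[i] = (sums.get(c, 0.0) + a * prior) / (counts.get(c, 0) + a)
        # Update the running statistics only after row i has been encoded.
        sums[c] = sums.get(c, 0.0) + targets[i]
        counts[c] = counts.get(c, 0) + 1
    return encoded


def oblivious_leaf_index(x, splits):
    """splits[d] = (feature, threshold) shared by every node at depth d.
    Each level contributes one bit, so a depth-d tree has 2**d leaves."""
    idx = 0
    for feature, threshold in splits:
        idx = (idx << 1) | (1 if x[feature] > threshold else 0)
    return idx


def oblivious_predict(x, splits, leaf_values):
    return leaf_values[oblivious_leaf_index(x, splits)]


cities = ["paris", "rome", "paris", "paris", "rome", "oslo"]
clicked = [1, 0, 1, 0, 1, 1]
print(ordered_target_stats(cities, clicked, prior=sum(clicked) / len(clicked)))

splits = [(0, 0.5), (1, 10.0)]            # depth 2: one shared split per level
print(oblivious_predict([1.0, 20.0], splits, [0.1, 0.2, 0.3, 0.4]))   # 0.4
```

Because every level applies the same test, prediction is just a handful of comparisons that build an array index, which is why symmetric trees make inference so cheap.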

Strengths & Weaknesses

| Strengths | Weaknesses |
|---|---|
| Best for Categories: Handles high-cardinality features better than most other models. | Slower Training: Its extra processing and symmetric-tree constraints make it slower to train than LightGBM. |
| Robust: Very hard to overfit, thanks to symmetric trees and ordered boosting. | Memory Usage: It needs a lot of RAM to store categorical statistics and data permutations. |
| Lightning-Fast Inference: Predictions are reported to be 30–60x faster than with other boosting models. | Smaller Ecosystem: Fewer community tutorials than XGBoost. |

The Boosting Evolution: A Side-by-Side Comparison

Choosing the right boosting algorithm depends on your data size, feature types, and hardware. Below is a simplified breakdown of how they compare.

Key Comparison Table

| Feature | AdaBoost | GBM | XGBoost | LightGBM | CatBoost |
|---|---|---|---|---|---|
| Main Strategy | Reweights data | Fits residuals | Regularized, second-order gradients | Histograms & GOSS | Ordered boosting |
| Tree Growth | Level-wise | Level-wise | Level-wise (default) | Leaf-wise | Symmetric |
| Speed | Low | Moderate | High | Very High | Moderate (High on GPU) |
| Cat. Features | Manual Prep | Manual Prep | Manual Prep | Built-in (Limited) | Native (Excellent) |
| Overfitting | Resilient | Sensitive | Regularized | High Risk (Small Data) | Very Low Risk |

Evolutionary Highlights

- AdaBoost (1995): The pioneer. It focused on hard-to-classify points. It is simple but slow on big data, and it lacks the explicit gradient-based loss optimization of later methods.

- GBM (1999): The foundation. It uses calculus (gradients) to minimize a loss. It is flexible but can be slow because it evaluates every candidate split exactly.

- XGBoost (2014): The game changer. It added regularization ($L1/L2$) to curb overfitting and parallelized split finding to make training much faster.

- LightGBM (2017): The speed king. It groups values into histograms so it doesn’t have to look at every value, and it grows trees leaf-wise, splitting the most error-reducing leaf first.

- CatBoost (2017): The category master. It uses symmetric trees (every split at the same level is identical), which makes it very stable and fast at making predictions.

When to Use Which Method

The following table summarizes when to use each method.

| Model | Best Use Case | Pick It If | Avoid It If |
|---|---|---|---|
| AdaBoost | Simple problems or small, clean datasets | You need a quick baseline or high interpretability with simple decision stumps | Your data is noisy or contains strong outliers |
| Gradient Boosting (GBM) | Learning, or medium-scale scikit-learn projects | You want custom loss functions without external libraries | You need high performance or scalability on large datasets |
| XGBoost | General-purpose, production-grade modeling | Your data is mostly numeric and you want a reliable, well-supported model | Training time is critical on very large datasets |
| LightGBM | Large-scale, speed- and memory-sensitive tasks | You are working with millions of rows and need fast experimentation | Your dataset is small and prone to overfitting |
| CatBoost | Datasets dominated by categorical features | You have high-cardinality categories and want minimal preprocessing | You need maximum CPU training speed |

Pro Tip: Many competition-winning solutions don’t pick just one. They build an ensemble that averages the predictions of XGBoost, LightGBM, and CatBoost to get the best of all worlds.
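A minimal sketch of that averaging step, assuming you already have per-row predictions (for example, predicted probabilities) from each model on the same rows. The prediction lists below are made-up placeholders, not real model outputs, and `blend` is a hypothetical helper. The optional weights let you favor a stronger model; in practice they would be tuned on a validation set.

```python
def blend(predictions, weights=None):
    """Weighted average of per-row predictions from several models."""
    weights = weights or [1.0] * len(predictions)
    total = sum(weights)
    return [sum(w * p[i] for w, p in zip(weights, predictions)) / total
            for i in range(len(predictions[0]))]


# Hypothetical probability outputs from three already-trained models.
xgb_pred = [0.2, 0.8, 0.6]
lgbm_pred = [0.4, 0.6, 0.5]
cat_pred = [0.3, 0.7, 0.7]
print(blend([xgb_pred, lgbm_pred, cat_pred]))                      # approximately [0.3, 0.7, 0.6]
print(blend([xgb_pred, lgbm_pred, cat_pred], weights=[2, 1, 1]))   # leans toward the first model
```

Simple averaging works best when the models make different kinds of errors, which is one reason mixing three differently designed boosting libraries tends to help.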

Conclusion

Boosting algorithms transform weak learners into strong predictive models by learning from past errors. AdaBoost introduced this idea and remains useful for simple, clean datasets, but it struggles with noise and scale. Gradient Boosting formalized boosting as loss minimization and serves as the conceptual foundation for modern methods. XGBoost improved this approach with regularization, parallel processing, and strong robustness, making it a reliable all-round choice.

LightGBM optimized speed and memory efficiency, excelling on very large datasets. CatBoost tackled categorical feature handling, requiring minimal preprocessing while offering strong resistance to overfitting. No single method is best for every problem; the optimal choice depends on data size, feature types, and hardware. In many real-world and competition settings, combining multiple boosting models delivers the best performance.
