Retrieval-based brokers are on the coronary heart of many mission-critical enterprise use instances. Enterprise clients anticipate them to carry out reasoning duties that require following particular consumer directions and working successfully throughout heterogeneous information sources. Nevertheless, as a rule, conventional retrieval augmented era (RAG) fails to translate fine-grained consumer intent and information supply specs into exact search queries. Most present options successfully ignore this drawback, using off-the-shelf search instruments. Others drastically underestimate the problem, relying solely on customized fashions for embedding and reranking, that are essentially restricted of their expressiveness. On this weblog, we current the Instructed Retriever – a novel retrieval structure that addresses the constraints of RAG, and reimagines seek for the agentic period. We then illustrate how this structure allows extra succesful retrieval-based brokers, together with methods like Agent Bricks: Information Assistant, which should motive over complicated enterprise knowledge and keep strict adherence to consumer directions.

As an illustration, contemplate an instance at Determine 1, the place a consumer asks about battery life expectancy in a fictitious FooBrand product. As well as, system specs embrace directions about recency, varieties of doc to contemplate, and response size. To correctly observe the system specs, the consumer request has to first be translated into structured search queries that include the suitable column filters along with key phrases. Then, a concise response grounded within the question outcomes, needs to be generated primarily based on the consumer directions. Such complicated and deliberate instruction-following shouldn’t be achievable by a easy retrieval pipeline that focuses on consumer question alone.

![Figure 1. Example of the instructed retrieval workflow for query [What is the battery life expectancy for FooBrand products]. User instructions are translated into (a) two structured retrieval queries, retrieving both recent reviews, as well as an official product description (b) a short response, grounded in search results.](https://www.databricks.com/sites/default/files/inline-images/image7_24.png)

Conventional RAG pipelines depend on single-step retrieval utilizing consumer question alone and don’t incorporate any extra system specs equivalent to particular directions, examples or information supply schemas. Nevertheless, as we present in Determine 1, these specs are key to profitable instruction following in agentic search methods. To deal with these limitations, and to efficiently full duties such because the one described in Determine 1, our Instructed Retriever structure allows the movement of system specs into every of the system elements.

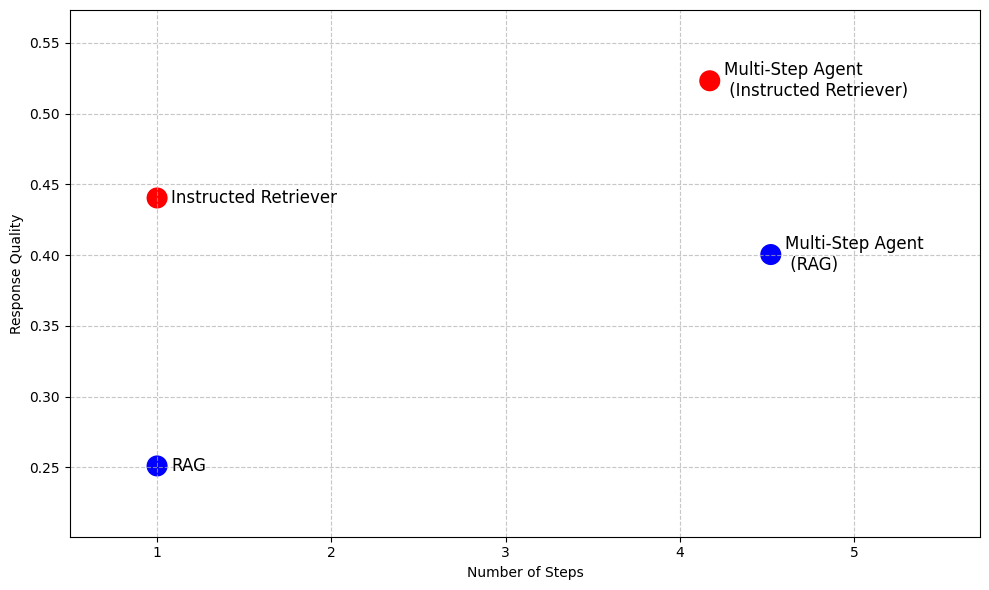

Even past RAG, in additional superior agentic search methods that permit iterative search execution, instruction following and underlying information supply schema comprehension are key capabilities that can’t be unlocked by merely executing RAG as a software for a number of steps, as Desk 1 illustrates. Thus, Instructed Retriever structure offers a highly-performant different to RAG, when low latency and small mannequin footprint are required, whereas enabling more practical search brokers for situations like deep analysis.

Retrieval Augmented Technology (RAG) | Instructed Retriever | Multi-step Agent (RAG) | Multi-step Agent (Instructed Retriever) | |

Variety of search steps | Single | Single | A number of | A number of |

Means to observe directions | ✖️ | ✅ | ✖️ | ✅ |

Information supply comprehension | ✖️ | ✅ | ✖️ | ✅ |

Low latency | ✅ | ✅ | ✖️ | ✖️ |

Small mannequin footprint | ✅ | ✅ | ✖️ | ✖️ |

Reasoning about outputs | ✖️ | ✖️ | ✅ | ✅ |

Desk 1. A abstract of capabilities of conventional RAG, Instructed Retriever, and a multi-step search agent applied utilizing both of the approaches as a software

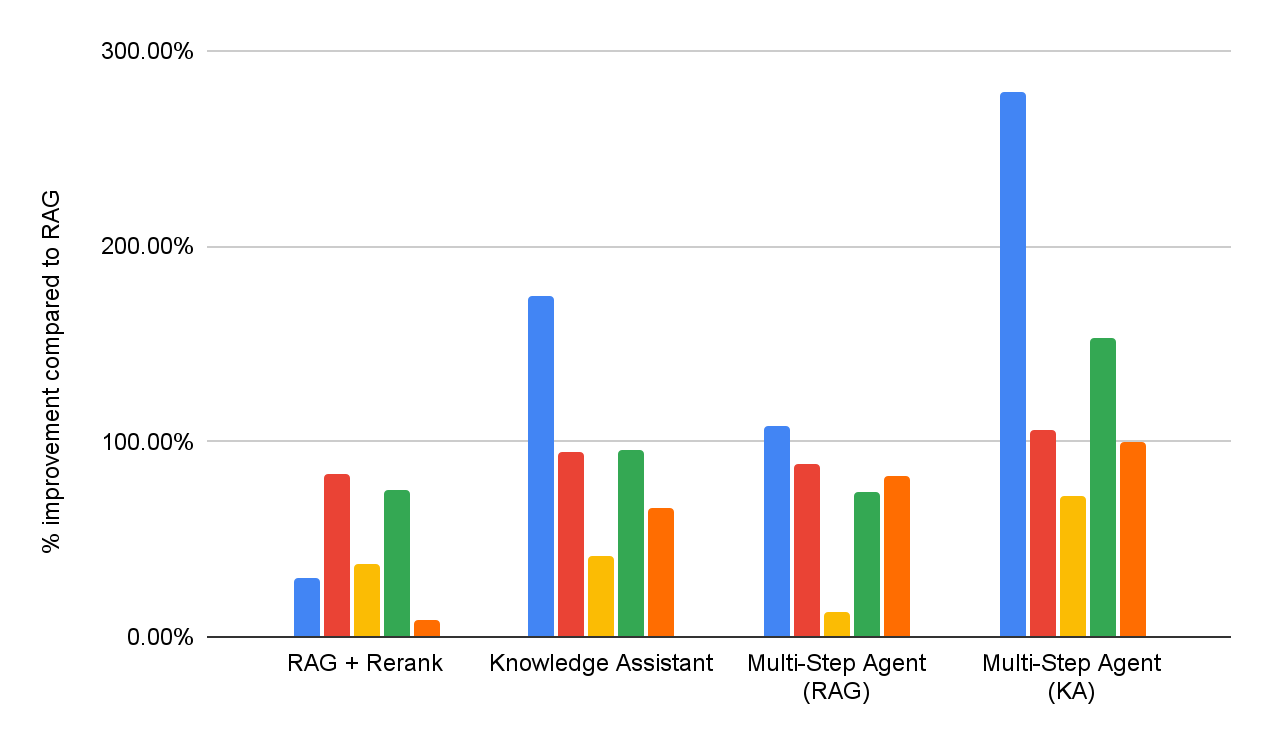

To show the benefits of the Instructed Retriever, Determine 2 previews its efficiency in comparison with RAG-based baselines on a collection of enterprise query answering datasets1. On these complicated benchmarks, Instructed Retriever will increase efficiency by greater than 70% in comparison with conventional RAG. Instructed Retriever even outperforms a RAG-based multi-step agent by 10%. Incorporating it as a software in a multi-step agent brings extra good points, whereas decreasing the variety of execution steps, in comparison with RAG.

In the remainder of the weblog submit, we talk about the design and the implementation of this novel Instructed Retriever structure. We show that the instructed retriever results in a exact and strong instruction following on the question era stage, which ends up in vital retrieval recall enhancements. Moreover, we present that these question era capabilities may be unlocked even in small fashions via offline reinforcement studying. Lastly, we additional break down the end-to-end efficiency of the instructed retriever, each in single-step and multi-step agentic setups. We present that it constantly allows vital enhancements in response high quality in comparison with conventional RAG architectures.

Instructed Retriever Structure

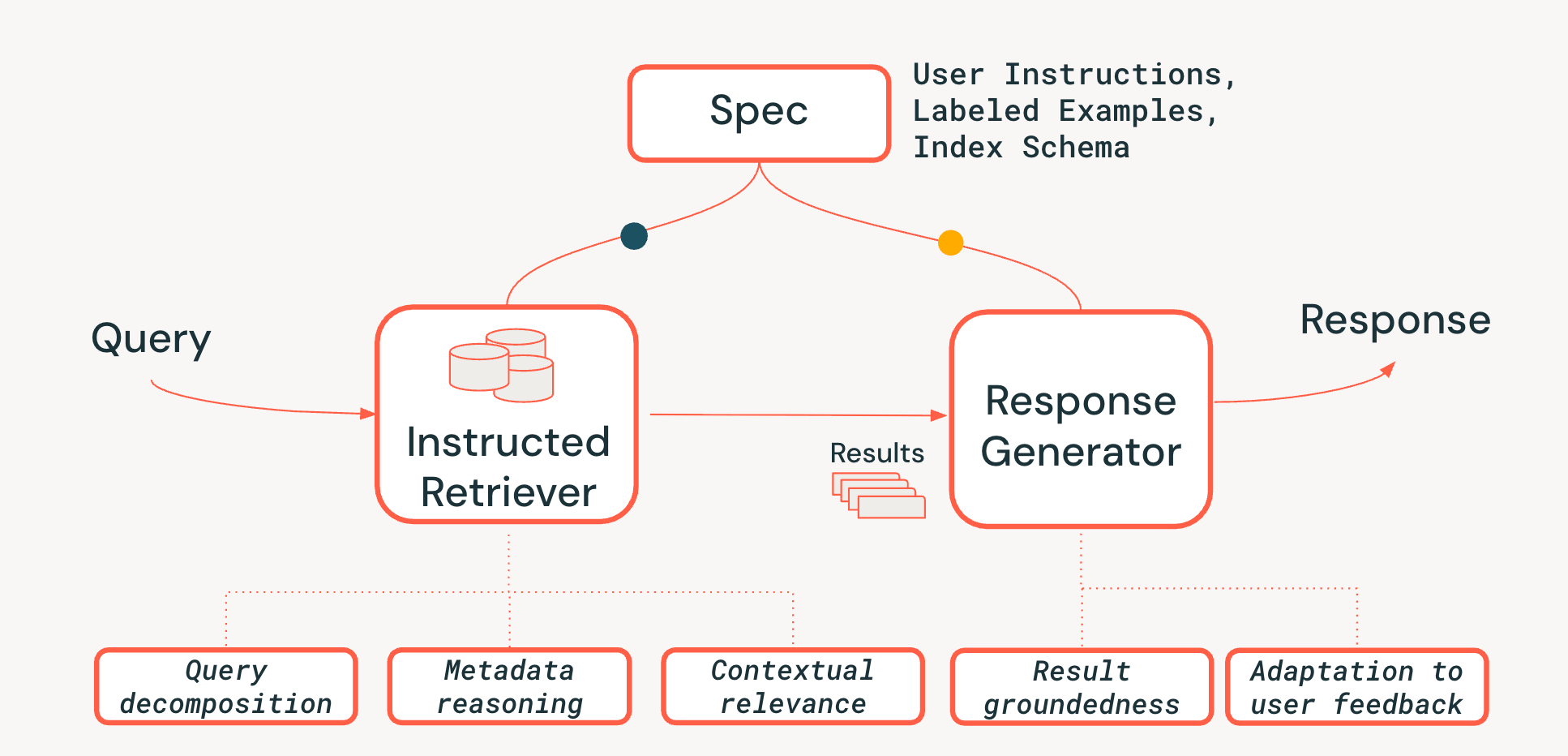

To deal with the challenges of system-level reasoning in agentic retrieval methods, we suggest a novel Instructed Retriever structure, proven in Determine 3. The Instructed Retriever can both be known as in a static workflow or uncovered as a software to an agent. The important thing innovation is that this new structure offers a streamlined option to not simply deal with the consumer’s speedy question, but in addition to propagate the entirety of the system specs to each retrieval and era system elements. This can be a elementary shift from conventional RAG pipelines, the place system specs would possibly (at finest) affect the preliminary question however are then misplaced, forcing the retriever and the response generator to function with out the very important context of those specs.

System specs are thus a set of guiding rules and directions that the agent should observe to faithfully fulfill the consumer request, which can embrace:

- Person Directions: Common preferences or constraints, like “concentrate on opinions from the previous few years” or “Don’t present any FooBrand merchandise within the outcomes“.

- Labeled Examples: Concrete samples of related / non-relevant <question, doc> pairs that assist outline what a high-quality, instruction-following retrieval appears to be like like for a selected activity.

- Index Descriptions: A schema that tells the agent what metadata is truly obtainable to retrieve from (e.g. product_brand, doc_timestamp, within the instance in Determine 1).2

To unlock the persistence of specs all through the whole pipeline, we add three vital capabilities to the retrieval course of:

- Question Decomposition: The flexibility to interrupt down a fancy, multi-part request (“Discover me a FooBrand product, however solely from final yr, and never a ‘lite’ mannequin“) right into a full search plan, containing a number of key phrase searches and filter directions.

- Contextual Relevance: Shifting past easy textual content similarity to true relevance understanding within the context of question and system directions. This implies the re-ranker, for instance, can use the directions to spice up paperwork that match the consumer intent (e.g., “recency“), even when the key phrases are a weaker match.

- Metadata Reasoning: One of many key differentiators of our Instructed Retriever structure is the power to translate pure language directions (“from final yr“) into exact, executable search filters (“doc_timestamp > TO_TIMESTAMP(‘2024-11-01’)”).

We additionally be sure that the response era stage is concordant with the retrieved outcomes, system specs, and any earlier consumer historical past or suggestions (as described in additional element in this weblog).

Instruction adherence in search brokers is difficult as a result of consumer info wants may be complicated, imprecise, and even conflicting, usually accrued via many rounds of pure language suggestions. The retriever should even be schema-aware — capable of translate consumer language into structured filters, fields, and metadata that truly exist within the index. Lastly, the elements should work collectively seamlessly to fulfill these complicated, typically multi-layered constraints with out dropping or misinterpreting any of them. Such coordination requires holistic system-level reasoning. As our experiments within the subsequent two sections show, Instructed Retriever structure is a serious advance towards unlocking this functionality in search workflows and brokers.

Evaluating Instruction-Following in Question Technology

Most present retrieval benchmarks overlook how fashions interpret and execute natural-language specs, notably these involving structured constraints primarily based on index schema. Due to this fact, to guage the capabilities of our Instructed Retriever structure, we prolong the StaRK (Semi-Structured Retrieval Benchmark) dataset and design a brand new instruction-following retrieval benchmark, StaRK-Instruct, utilizing its e-commerce subset, STaRK-Amazon.

For our dataset, we concentrate on three frequent varieties of consumer directions that require the mannequin to motive past plain textual content similarity:

- Inclusion directions – choosing paperwork that should include a sure attribute (e.g., “discover a jacket from FooBrand that’s finest rated for chilly climate”).

- Exclusion directions – filtering out gadgets that shouldn’t seem within the outcomes (e.g., “advocate a fuel-efficient SUV, however I’ve had unfavourable experiences with FooBrand, so keep away from something they make”).

- Recency boosting – preferring newer gadgets when time-related metadata is on the market (e.g., “Which FooBrand laptops have aged effectively? Prioritize opinions from the final 2–3 years—older opinions matter much less on account of OS adjustments”).

To construct StaRK-Instruct, whereas with the ability to reuse the present relevance judgments from StaRK-Amazon, we observe prior work on instruction following in info retrieval, and synthesize the present queries into extra particular ones by together with extra constraints that slim the present relevance definitions. The related doc units are then programmatically filtered to make sure alignment with the rewritten queries. By way of this course of, we synthesize 81 StaRK-Amazon queries (19.5 related paperwork per question) into 198 queries in StaRK-Instruct (11.7 related paperwork per question, throughout the three instruction sorts).

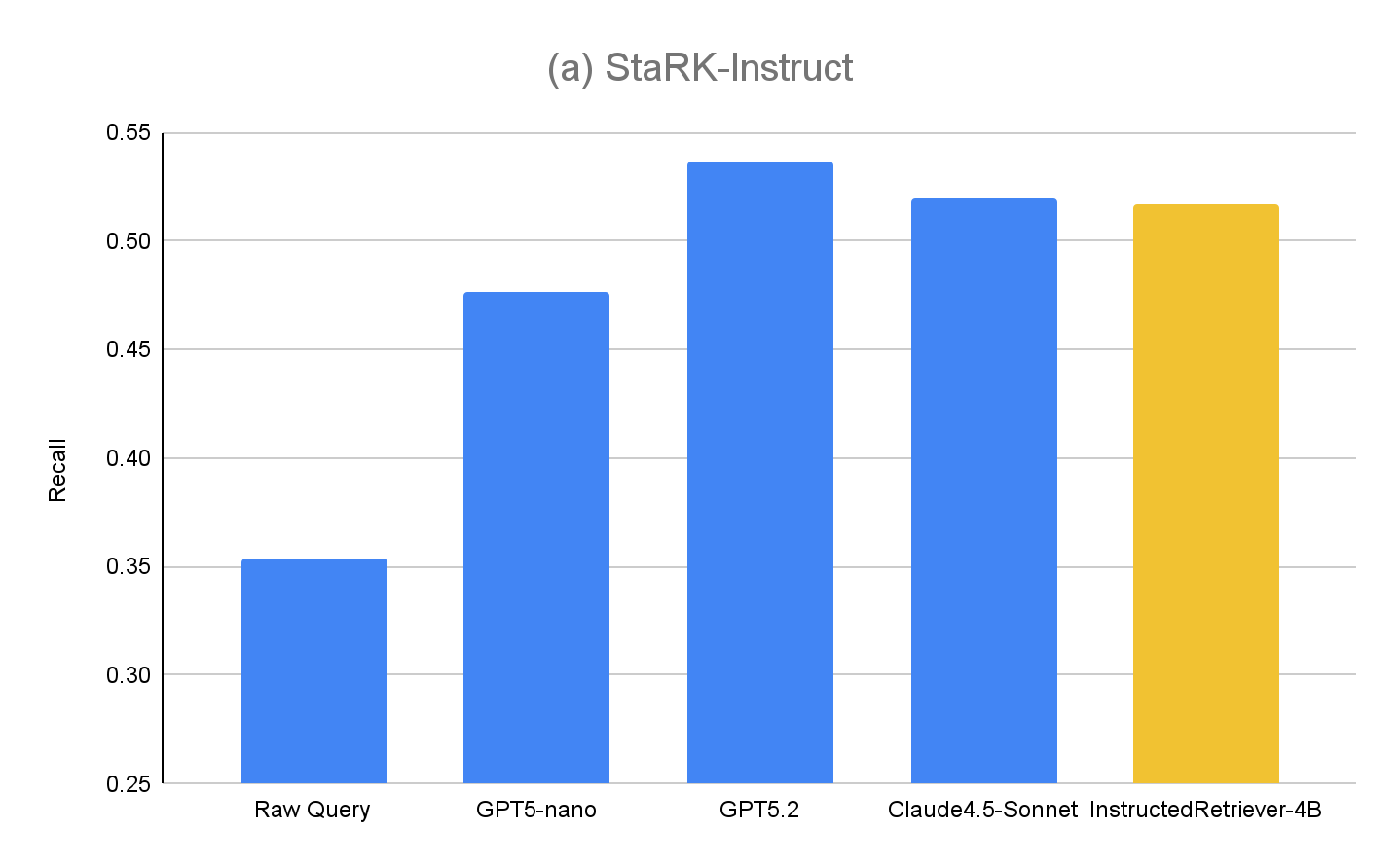

To judge the question era capabilities of Instructed Retriever utilizing StaRK-Instruct, we consider the next strategies (in a single step retrieval setup)

- Uncooked Question – as a baseline, we use the unique consumer question for retrieval, with none extra question era levels. That is akin to a conventional RAG method.

- GPT5-nano, GPT5.2, Claude4.5-Sonnet – we use every of the respective fashions to generate retrieval question, utilizing each authentic consumer queries, system specs together with consumer directions, and index schema.

- InstructedRetriever-4B – Whereas frontier fashions like GPT5.2 and Claude4.5-Sonnet are extremely efficient, they could even be too costly for duties like question and filter era, particularly for large-scale deployments. Due to this fact, we apply the Take a look at-time Adaptive Optimization (TAO) mechanism, which leverages test-time compute and offline reinforcement studying (RL) to show a mannequin to do a activity higher primarily based on previous enter examples. Particularly, we use the “synthetized” question subset from StaRK-Amazon, and generate extra instruction-following queries utilizing these synthetized queries. We immediately use recall because the reward sign to fine-tune a small 4B parameter mannequin, by sampling candidate software calls and reinforcing these reaching larger recall scores.

The outcomes for StaRK-Instruct are proven at Determine 4(a). Instructed question era achieves 35–50% larger recall on the StaRK-Instruct benchmark in comparison with the Uncooked Question baseline. The good points are constant throughout mannequin sizes, confirming that efficient instruction parsing and structured question formulation can ship measurable enhancements even below tight computational budgets. Bigger fashions usually exhibit additional good points, suggesting scalability of the method with mannequin capability. Nevertheless, our fine-tuned InstructedRetriever-4B mannequin nearly equals the efficiency of a lot bigger frontier fashions, and outperforms the GPT5-nano mannequin, demonstrating that alignment can considerably improve the effectiveness of instruction-following in agentic retrieval methods, even with smaller fashions.

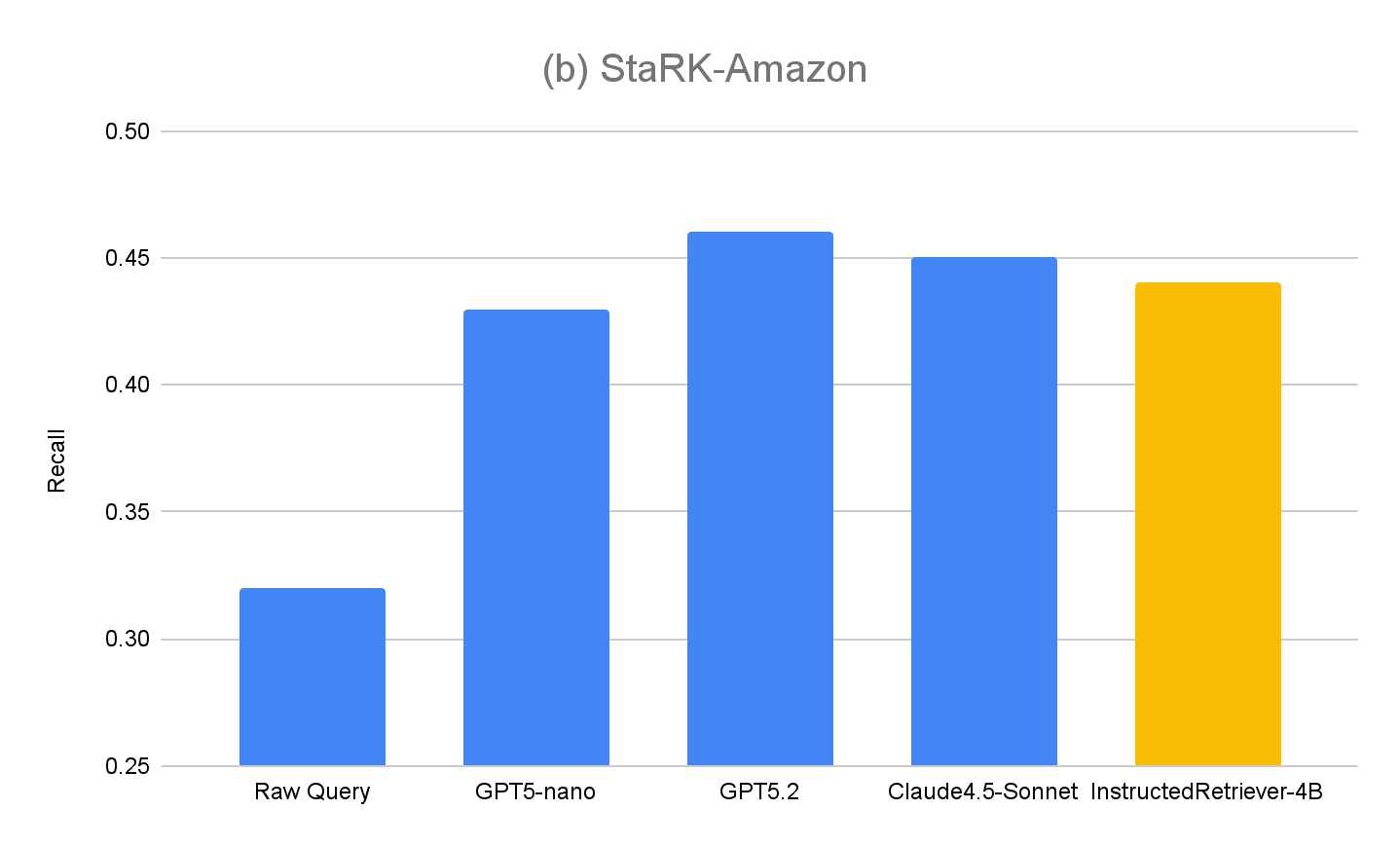

To additional consider the generalization of our method, we additionally measure efficiency on the authentic analysis set, StaRK-Amazon, the place queries should not have express metadata-related directions. As proven in Determine 4(b), all of the instructed question era strategies exceed Uncooked Question recall on StaRK-Amazon by round 10%, confirming that instruction-following is helpful in unconstrained question era situations as effectively. We additionally see no degradation in InstructedRetriever-4B efficiency in comparison with non-finetuned fashions, confirming that specialization to structured question era doesn’t harm its common question era capabilities.

Deploying Instructed Retriever in Agent Bricks

Within the earlier part, we demonstrated the numerous good points in retrieval high quality which can be achievable utilizing instruction-following question era. On this part, we additional discover the usefulness of an instructed retriever as part of a production-grade agentic retrieval system. Particularly, Instructed Retriever is deployed in Agent Bricks Information Assistant, a QA chatbot with which you’ll ask questions and obtain dependable solutions primarily based on the supplied domain-specialized information.

We contemplate two DIY RAG options as baselines:

- RAG We feed the highest retrieved outcomes from our extremely performant vector search right into a frontier giant language mannequin for era.

- RAG + Rerank We observe the retrieval stage by a reranking stage, which was proven to spice up retrieval accuracy by a mean of 15 proportion factors in earlier exams. The reranked outcomes are fed right into a frontier giant language mannequin for era.

To evaluate the effectiveness of each DIY RAG options, and Information Assistant, we conduct reply high quality analysis throughout the identical enterprise query answering benchmark suite as reported at Determine 1. Moreover, we implement two muti-step brokers which have entry to both RAG or Information Assistant as a search software, respectively. Detailed efficiency for every dataset is reported in Determine 5 (as a % enchancment in comparison with the RAG baseline).

General, we are able to see that every one methods constantly outperform the straightforward RAG baseline throughout all datasets, reflecting its incapability to interpret and constantly implement multi-part specs. Including a re-ranking stage improves outcomes, demonstrating some profit from post-hoc relevance modeling. Information Assistant, applied utilizing the Instructed Retriever structure, brings additional enhancements, indicating the significance of persisting the system specs – constraints, exclusions, temporal preferences, and metadata filters – via each stage of retrieval and era.

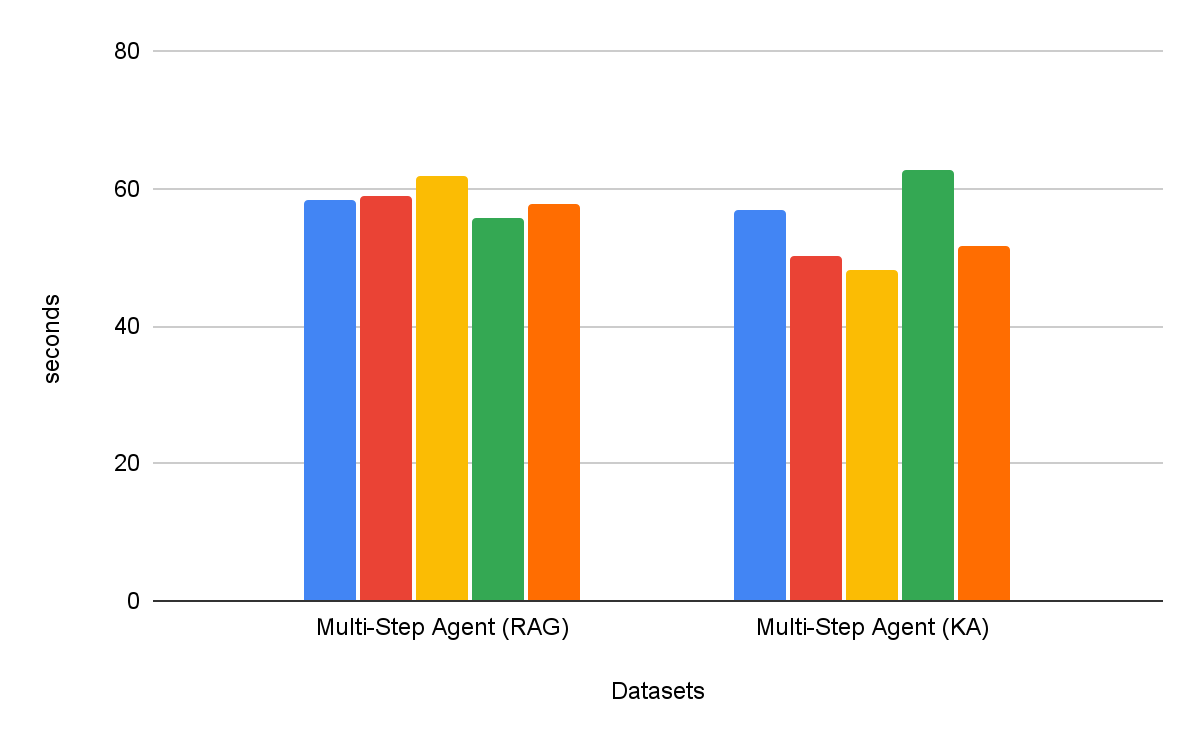

Multi-step search brokers are constantly more practical than single-step retrieval workflows. Moreover, the selection of software issues – Information Assistant as a software outperforms RAG as a software by over 30%, with constant enchancment throughout all datasets. Curiously, it doesn’t simply enhance high quality, but in addition achieves decrease time to activity completion in most datasets, with common discount of 8% (Determine 6).

Conclusion

Constructing dependable enterprise brokers requires complete instruction-following and system-level reasoning when retrieving from heterogeneous information sources. To this finish, on this weblog we current the Instructed Retriever structure, with the core innovation of propagating full system specs — from directions to examples and index schema — via each stage of the search pipeline.

We additionally offered a brand new StaRK-Instruct dataset, which evaluates retrieval agent’s potential to deal with real-world directions like inclusion, exclusion, and recency. On this benchmark, the Instructed Retriever structure delivered substantial 35-50% good points in retrieval recall, empirically demonstrating the advantages of a system-wide instruction-awareness for question era. We additionally present {that a} small, environment friendly mannequin may be optimized to match the instruction-following efficiency of bigger proprietary fashions, making Instructed Retriever an economical agentic structure appropriate for real-world enterprise deployments.

When built-in with an Agent Bricks Information Assistant, Instructed Retriever structure interprets immediately into higher-quality, extra correct responses for the tip consumer. On our complete high-difficulty benchmark suite, it offers good points of upward of 70% in comparison with a simplistic RAG resolution, and upward of 15% high quality achieve in comparison with extra refined DIY options that incorporate reranking. Moreover, when built-in as a software for a multi-step search agent, Instructed Retriever cannot solely enhance efficiency by over 30%, but in addition lower time to activity completion by 8%, in comparison with RAG as a software.

Instructed Retriever, together with many beforehand printed improvements like immediate optimization, ALHF, TAO, RLVR, is now obtainable within the Agent Bricks product. The core precept of Agent Bricks is to assist enterprises develop brokers that precisely motive on their proprietary knowledge, constantly study from suggestions, and obtain state-of-the-art high quality and cost-efficiency on domain-specific duties. We encourage clients to attempt the Information Assistant and different Agent Bricks merchandise for constructing steerable and efficient brokers for their very own enterprise use instances.

Authors: Cindy Wang, Andrew Drozdov, Michael Bendersky, Wen Solar, Owen Oertell, Jonathan Chang, Jonathan Frankle, Xing Chen, Matei Zaharia, Elise Gonzales, Xiangrui Meng

1 Our suite incorporates a mixture of 5 proprietary and educational benchmarks that check for the next capabilities: instruction-following, domain-specific search, report era, checklist era, and search over PDFs with complicated layouts. Every benchmark is related to a customized high quality choose, primarily based on the response kind.

2 Index descriptions may be included within the user-specified instruction, or routinely constructed through strategies for schema linking which can be usually employed in methods for text-to-SQL, e.g. worth retrieval.