Socure is among the main suppliers of digital id verification and fraud options. Its predictive analytics platform applies synthetic intelligence (AI) and machine studying (ML) methods to course of each on-line and offline intelligence, together with government-issued paperwork, contact info (electronic mail, telephone, tackle), private identifiers (DOB, SSN), and machine or community knowledge (IP, velocity) to confirm identities precisely and in actual time.

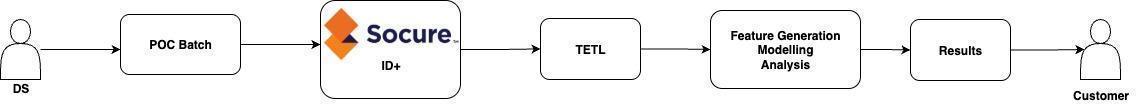

Socure ID+ is an id verification platform that makes use of a number of Socure choices corresponding to KYC, SIGMA, eCBSV. Cellphone Danger and extra. It has two environments centered on proof of idea (POC) and dwell clients. The Information Science (DS) atmosphere is designed for the POC or proof of worth (POV) stage. On this atmosphere, clients present datasets through SFTP, that are processed by Socure’s knowledge scientists by means of an inside endpoint. The info undergoes ML-based scoring and different intelligence calculations relying on the chosen modules and processed outcomes are saved in Amazon Easy Storage Service (Amazon S3) in delta open desk format . Within the Manufacturing (Prod) atmosphere, clients can confirm identities both in actual time by means of dwell endpoints or through a batch processing interface.

Socure’s knowledge science atmosphere features a streaming pipeline referred to as Transaction ETL (TETL), constructed on OSS Apache Spark working on Amazon EKS. TETL ingests and processes knowledge volumes starting from small to giant datasets whereas sustaining high-throughput efficiency.

The first function of this pipeline is to offer knowledge scientists a versatile atmosphere to run POC workloads for purchasers.

Information scientists…

- set off ingestion of POC datasets, starting from small batches to large-scale volumes.

- eat the processed outputs written by the pipeline for evaluation and mannequin growth.

- share the outcomes with Socure’s clients.

The next diagram exhibits the Transaction ETL (TETL) structure.

This pipeline instantly helps buyer POCs, making certain that the suitable knowledge is accessible for experimentation, validation, and demonstration. As such, it’s a vital hyperlink between uncooked knowledge and customer-facing outcomes, making its reliability and efficiency important for delivering worth. On this submit, we present how Socure was capable of obtain 50% value discount by migrating the TETL streaming pipeline from self-managed spark to Amazon EMR serverless.

Motivation

As knowledge volumes have scaled by 10x, a number of challenges like latency and knowledge reliability have emerged that instantly impression the client expertise:

- Efficiency points as a result of inefficient autoscaling main to extend in latency as much as 5x

- Excessive operational value of sustaining an OSS Spark atmosphere on EKS

Moreover, we have now recognized different necessary points:

- Useful resource constraints as a result of occasion provisioning limits, forcing using smaller nodes. This results in frequent spark executor out of reminiscence (OOM) failures underneath heavy hundreds, rising job latency and delaying knowledge availability.

- Efficiency bottlenecks with Delta Lake, the place giant batch operations corresponding to OPTIMIZE compete for assets and decelerate streaming workloads.

Throughout this migration, we additionally took the chance to transition to AWS Graviton, enabling further value efficiencies as defined on this submit.

With these two major drivers we started exploring different structure utilizing Amazon EMR. We already dd in depth benchmarking on a number of id verification associated batch workloads on completely different EMR platforms and got here to the conclusion that Amazon EMR Serverless (EMR-S) presents a path to scale back operational value, enhance reliability, and higher deal with large-scale batch and streaming workloads; tackling each customer-facing points and platform-level inefficiencies.

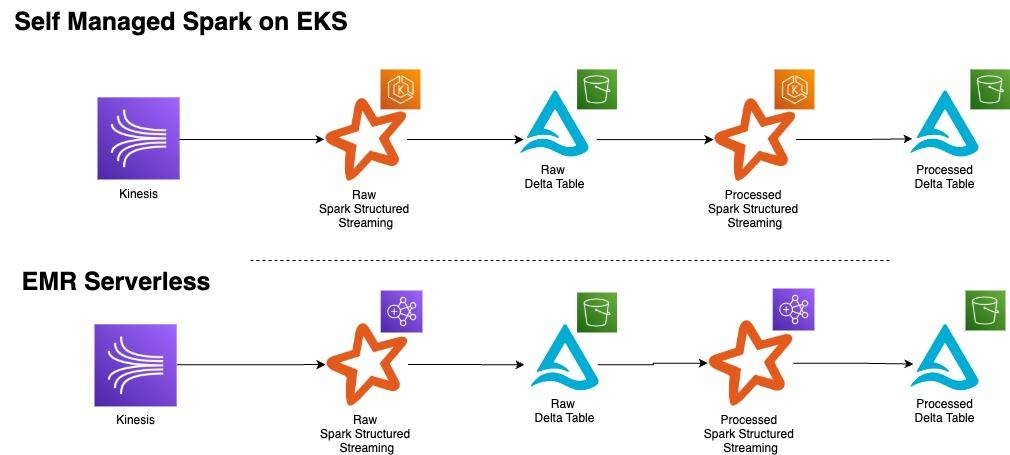

The brand new pipeline structure

The info processing pipeline follows a two-stage structure the place streaming knowledge from Amazon Kinesis Information Stream first flows into the uncooked layer, which parses incoming knowledge into giant JSON blobs, applies encryption, and shops the ends in append-only Delta Tables. The processed layer consumes knowledge from these uncooked Delta tables, performs decryption, transforms the info right into a flattened and vast construction with correct area parsing, applies particular person encryption to personally identifiable info (PII) fields, and writes the refined knowledge to separate append-only Delta Tables for downstream consumption.

The next diagram exhibits the TETL earlier than/after structure we carried out, transitioning from OSS Spark on EKS to Spark on EMR Serverless.

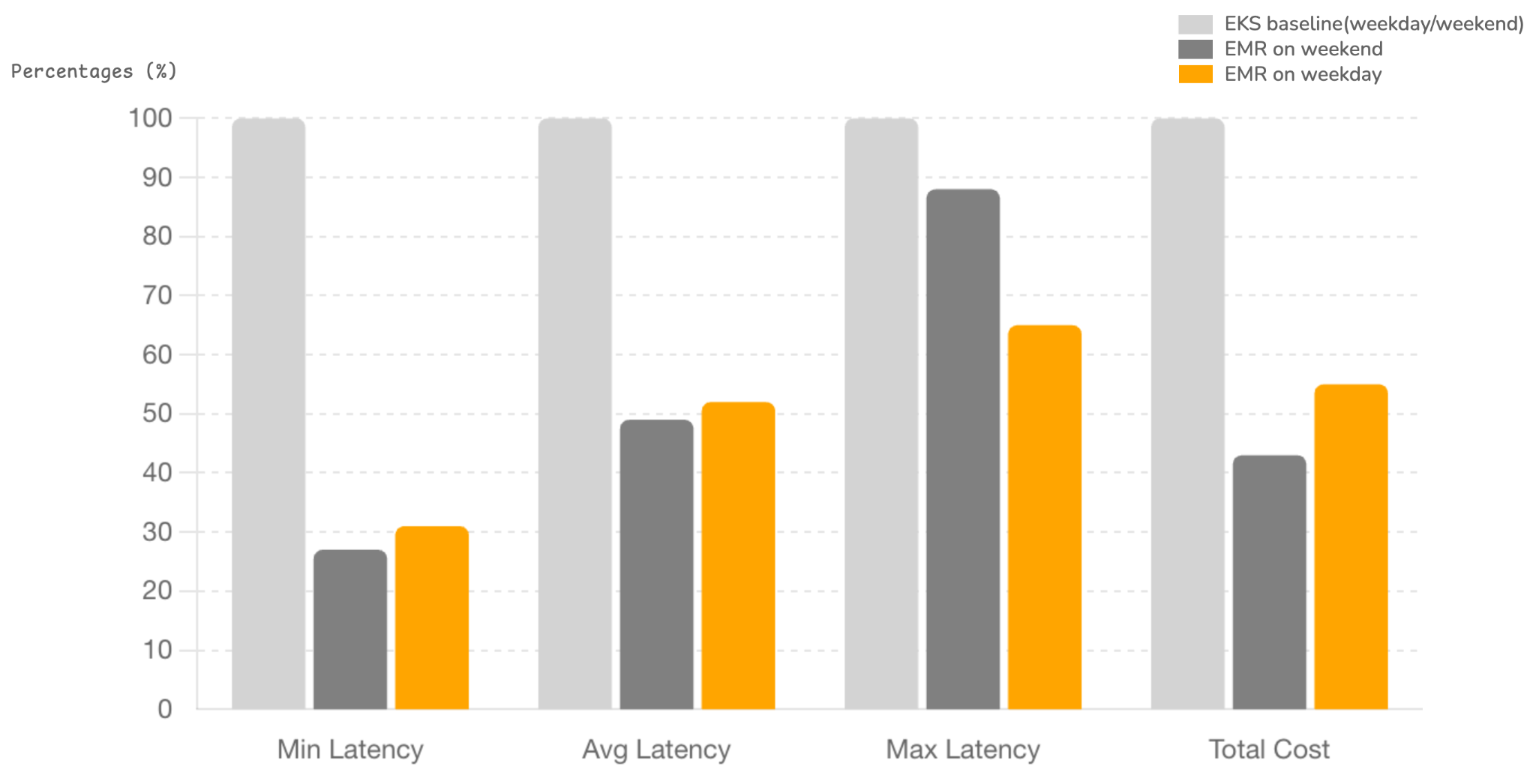

Benchmarking

We benchmarked end-to-end pipeline efficiency throughout OSS Spark on EKS and EMR Serverless. The analysis centered on latency and price underneath comparable useful resource configurations.

Useful resource Configuration

EKS (OSS Spark):

- Min 30 executors

- Max 90 executors

- 14 GB reminiscence / 2 cores per executor

EMR Serverless:

- Min 10 executors

- Max 30 executors

- 27 GB reminiscence / 4 cores per executor

- Successfully ~60 executors when normalized for 2x reminiscence and cores, designed to mitigate the OOM points described earlier.

Observations

- Autoscaling Effectivity: EMR Serverless scaled down successfully to twenty staff on common over the weekend (low site visitors day), leading to decrease prices as much as 12% in comparison with weekday.

- Executor Sizing: Bigger executors on EMR Serverless prevented OOM failures and improved stability underneath load.

Definitions

- Price: It’s the service value for each uncooked & processed jobs from the AWS Price Explorer.

- Latency: Finish-to-end latency measures the time from Socure ID+ occasion technology till knowledge arrives within the processed delta desk, calculated as Inserted Date minus Occasion Date.

Outcomes

The values within the following desk characterize share enhancements noticed when working on EMR in comparison with EKS.

| Low Site visitors (Weekend) | Common Site visitors (Weekday) | |

| Data Rely | ~1M | ~5M |

| Min Latency (finest case) | 73.3% | 69.2% |

| Avg Latency (consultant workload) | 51.0% | 47.9% |

Max Latency (worst case) | 12.3% | 34.7% |

| Whole Price | 57.1% | 45.2% |

Notice: Even with a conservative 40% value discount utilized to the EKS atmosphere to account for Graviton, EMR-S stays roughly 15% cheaper.

The benchmarking outcomes clearly reveal that EMR Serverless outperforms OSS Spark on EKS for our end-to-end pipeline workloads. By shifting to EMR Serverless, we achieved:

- Improved efficiency: Common latency lowered by greater than 50%, with constantly decrease min and max latencies.

- Price effectivity: General pipeline execution prices dropped by greater than half.

- Scalability: Autoscaling optimized useful resource utilization, additional reducing value throughout off-peak intervals.

- Operational overhead: EMR-S totally managed and serverless nature eliminates the necessity to preserve EKS and OSS Spark.

Conclusion

On this submit, we confirmed how Socure transitioning to EMR Serverless not solely resolved vital points round value, reliability, and latency, but additionally supplied a extra scalable and sustainable structure for serving buyer POCs successfully, enabling us to ship outcomes to clients sooner and strengthen our place for potential customized contracts.