For organizations running Apache Spark workloads, version upgrades have long represented a significant operational challenge. What should be a routine maintenance task often evolves into an engineering project spanning several months, consuming valuable resources that could drive innovation instead of managing technical debt. Engineering teams must typically manually analyze API deprecations, resolve behavioral changes in the engine, address shifting dependency requirements, and re-validate both functionality and data quality, all while keeping production workloads running smoothly. This complexity delays access to performance improvements, new features, and critical security updates.

At re:Invent 2025, we announced the AI-powered upgrade agent for Apache Spark on Amazon EMR. Working directly within your IDE, this agent handles the heavy lifting of version upgrades (analyzing code, applying fixes, and validating results) while you maintain control over every change. What once took months can now be completed in hours.

In this post, you’ll learn how to:

- Assess your existing Amazon EMR Spark applications

- Use the Spark upgrade agent directly from the Kiro IDE

- Upgrade a sample e-commerce order analytics Spark application project (build configs, source code, tests, data quality validation)

- Review code changes and then roll them out through your CI/CD pipeline

Spark upgrade agent architecture

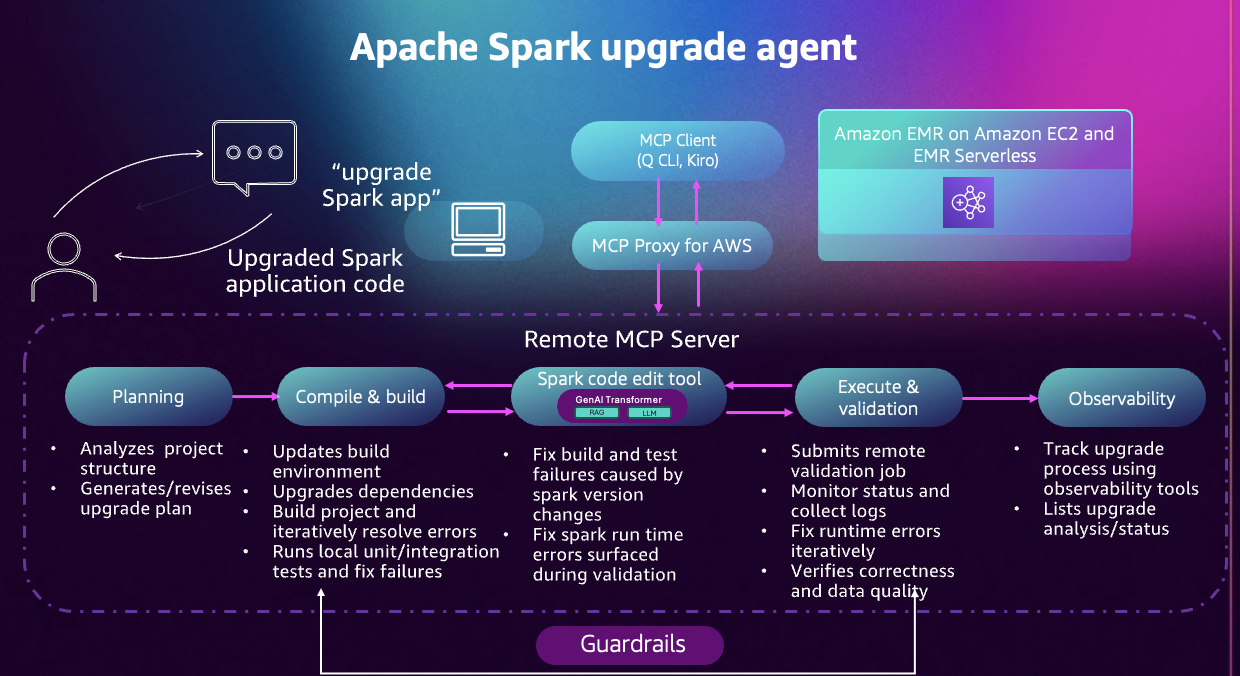

The Apache Spark upgrade agent for Amazon EMR is a conversational AI capability designed to accelerate Spark version upgrades for EMR applications. Through an MCP-compatible client, such as the Amazon Q Developer CLI, the Kiro IDE, or any custom agent built with frameworks like Strands, you can interact with a Model Context Protocol (MCP) server using natural language.

Figure 1: A diagram of the Apache Spark upgrade agent workflow.

Operating as a fully managed, cloud-hosted MCP server, the agent removes the need to maintain any local infrastructure. All tool calls and AWS resource interactions are governed by your AWS Identity and Access Management (IAM) permissions, ensuring the agent operates only within the access you authorize. Your application code stays on your machine, and only the minimal information required to diagnose and fix upgrade issues is transmitted. Every tool invocation is recorded in AWS CloudTrail, providing full auditability throughout the process.

Built on years of experience helping EMR customers upgrade their Spark applications, the upgrade agent automates the end-to-end modernization workflow, reducing manual effort and eliminating much of the trial-and-error typically involved in major version upgrades. The agent guides you through six phases:

- Planning: The agent analyzes your project structure, identifies compatibility issues, and generates a detailed upgrade plan. You review and customize this plan before execution begins.

- Environment setup: The agent configures build tools, updates language versions, and manages dependencies. For Python projects, it creates virtual environments with compatible package versions.

- Code transformation: The agent updates build files, replaces deprecated APIs, fixes type incompatibilities, and modernizes code patterns. Changes are explained and shown before being applied.

- Local validation: The agent compiles your project and runs your test suite. When tests fail, it analyzes errors, applies fixes, and retries. This continues until all tests pass.

- EMR validation: The agent packages your application, deploys it to EMR, monitors execution, and analyzes logs. Runtime issues are fixed iteratively.

- Data quality checks: The agent can run your application on both source and target Spark versions, compare outputs, and report differences in schemas, values, or statistics.

Throughout the process, the agent explains its reasoning and collaborates with you on decisions.
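The fix-and-retry pattern described for the local validation phase can be pictured with a small sketch. This is not the agent's implementation; `validate_with_retries`, `run_tests`, `apply_fix`, and the `max_attempts` cap are all hypothetical names introduced here for illustration. The cap in particular is an assumption, since the description above only says the loop continues until all tests pass.

```python
from typing import Callable, List


def validate_with_retries(
    run_tests: Callable[[], List[str]],
    apply_fix: Callable[[str], None],
    max_attempts: int = 5,
) -> bool:
    """Run the tests up to max_attempts times, fixing failures in between.

    run_tests returns the names of failing tests (empty list means success).
    apply_fix is called once per failing test before the next attempt.
    """
    for _ in range(max_attempts):
        failures = run_tests()
        if not failures:
            return True  # every test passed on this attempt
        for failure in failures:
            apply_fix(failure)
    # All attempts were used without seeing a fully passing run.
    return False
```

Bounding the number of attempts is one way to keep such a loop from running indefinitely when a failure cannot be fixed automatically; at that point a human review is the natural next step.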

Getting started

(Optional) Assessing your accounts for EMR Spark upgrades

Before beginning a Spark upgrade, it’s helpful to understand the current state of your environment. Many customers run Spark applications across multiple Amazon EMR clusters and versions, making it challenging to know which workloads should be prioritized for modernization. If you have already identified the Spark applications that you want to upgrade or already have a dashboard, you can skip this assessment step and move to the next section to get started with the Spark upgrade agent.

Building an assessment dashboard

To simplify this discovery process, we provide a lightweight Python-based assessment tool that scans your EMR environment and generates an interactive dashboard summarizing your Spark application footprint. The tool reviews EMR steps, extracts application metadata, and computes EMR lifecycle timelines to help you:

- Understand your Spark applications and how their executions are distributed across different EMR versions.

- Review the days remaining until each EMR version reaches end of support (EOS) for all Spark applications.

- Evaluate which applications should be prioritized for migration to a newer EMR version.
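To make the prioritization idea concrete, here is a minimal sketch of how days-to-EOS and usage could be combined into a ranking. It is not the assessment tool's code. The `EOS_DATES` values are placeholder dates invented for the example (check the Amazon EMR standard support documentation for real ones), and `AppUsage`, `days_until_eos`, and `prioritize` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date

# Placeholder end-of-support dates, for illustration only.
EOS_DATES = {
    "emr-6.1.0": date(2026, 1, 1),
    "emr-7.1.0": date(2027, 6, 1),
}


@dataclass
class AppUsage:
    name: str
    emr_release: str
    runs: int  # number of step executions observed for this application


def days_until_eos(release: str, today: date) -> int:
    """Days remaining until the given release reaches end of support."""
    return (EOS_DATES[release] - today).days


def prioritize(apps, today: date):
    """Order apps so the closest to EOS come first; more runs breaks ties."""
    return sorted(
        apps,
        key=lambda a: (days_until_eos(a.emr_release, today), -a.runs),
    )
```

Sorting by remaining days first and usage second is only one possible policy; a team might instead weight business criticality or job cost.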

Key insights from the assessment

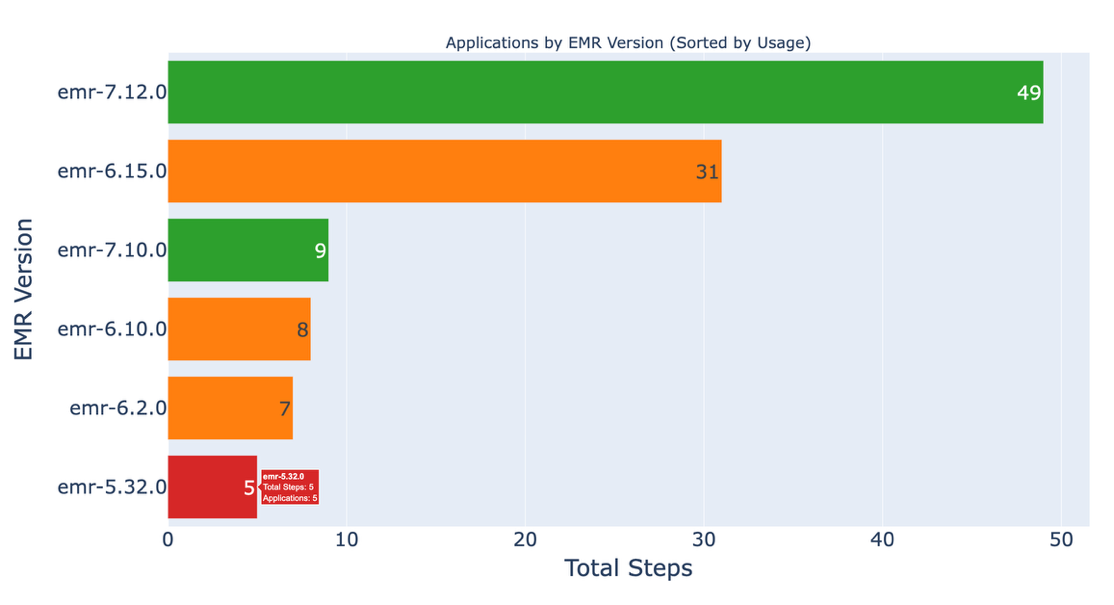

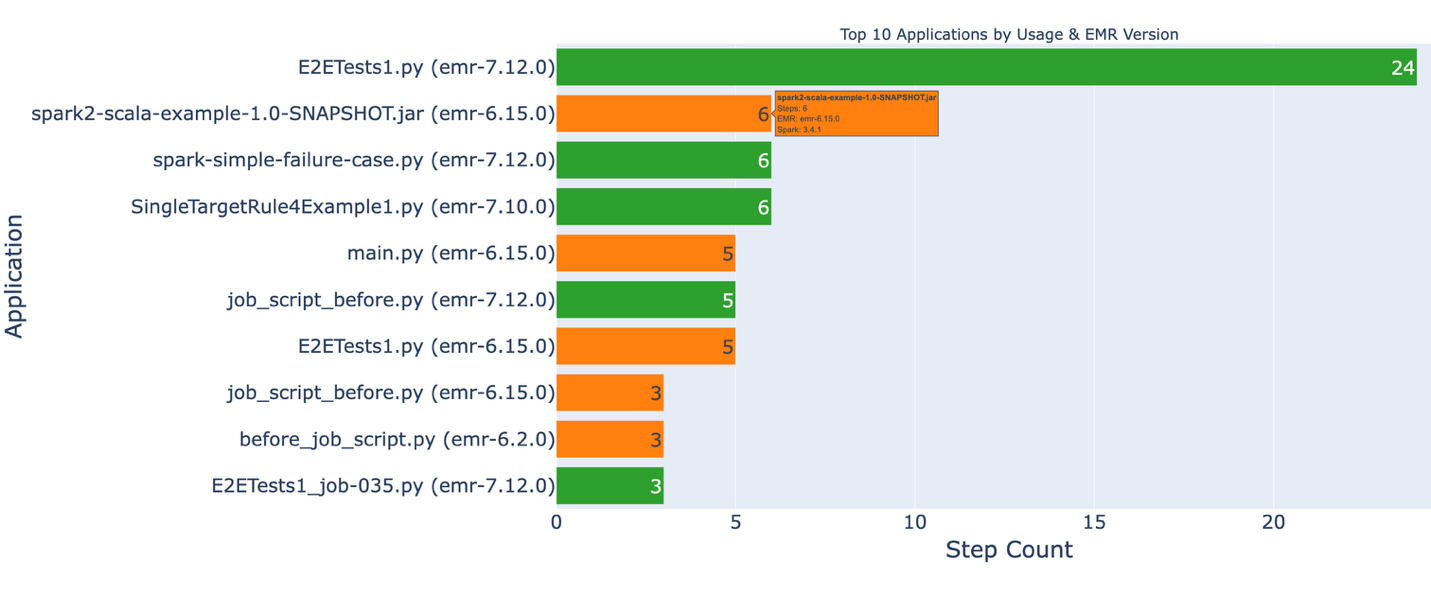

Figure 2: A graph of EMR versions per application.

This dashboard shows how many Spark applications are running on legacy EMR versions, helping you identify which workloads to migrate first.

Figure 3: A graph of application usage and current versions.

This dashboard identifies your most frequently used applications and their current EMR versions. Applications marked in purple indicate high-impact workloads that should be prioritized for migration.

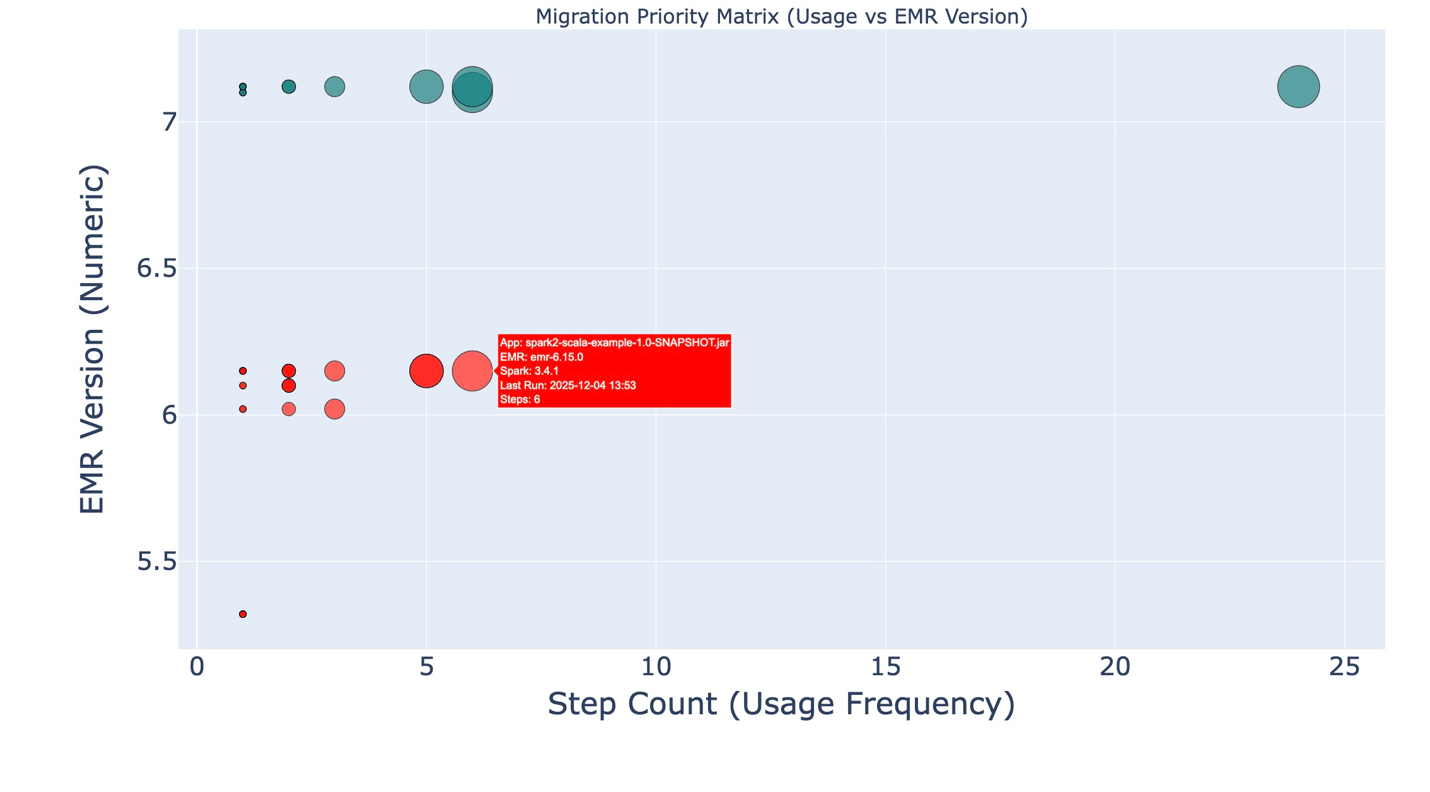

Figure 4: A usage and EMR version graph.

This dashboard highlights high-usage applications running on older EMR versions. Larger bubbles represent more frequently used applications, and the Y-axis shows the EMR version. Together, these dimensions make it easy to spot which applications should be prioritized for upgrade.

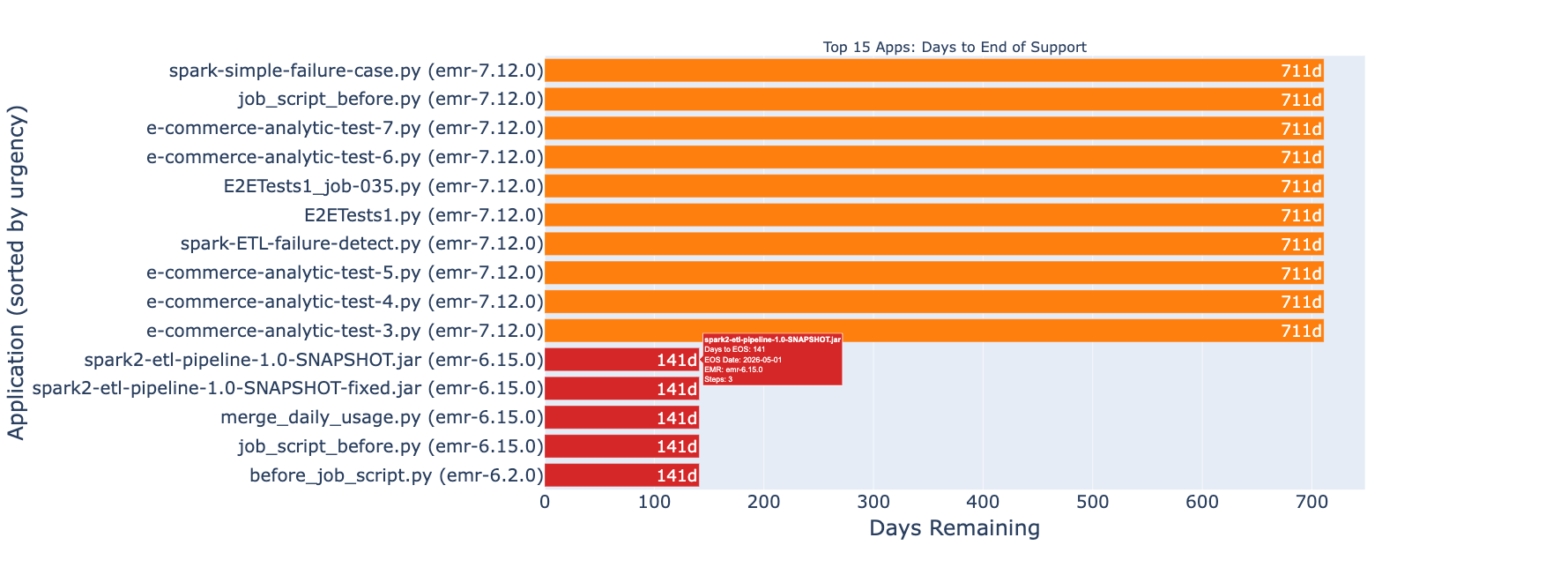

Figure 5: A graph highlighting applications nearing end of support.

The dashboard identifies applications approaching EMR end of support, helping you prioritize migrations before updates and technical support are discontinued. For more information about support timelines, see Amazon EMR standard support.

Once you have identified the applications that need to be upgraded, you can use any IDE such as VS Code, Kiro IDE, or another environment that supports installing an MCP server to begin the upgrade.

Getting started with the Spark upgrade agent using Kiro IDE

Prerequisites

System requirements

IAM permissions

Your AWS IAM profile must include permissions to invoke the MCP server and access your Spark workload resources. The CloudFormation template provided in the setup documentation creates an IAM role with these permissions, along with supporting resources such as the Amazon S3 staging bucket where the upgrade artifacts will be uploaded. You can also customize the template to control which resources are created or skip resources you prefer to manage manually.

- Deploy the template in the same Region where you run your workloads.

- Open the CloudFormation Outputs tab and copy the one-line instruction ExportCommand, then execute it in your local environment.

- Configure your AWS CLI profile:

Set up Kiro IDE and connect to the Spark upgrade agent

Kiro IDE provides a visual development environment with built-in AI assistance for interacting with the Apache Spark upgrade agent.

Installation and configuration:

- Install Kiro IDE

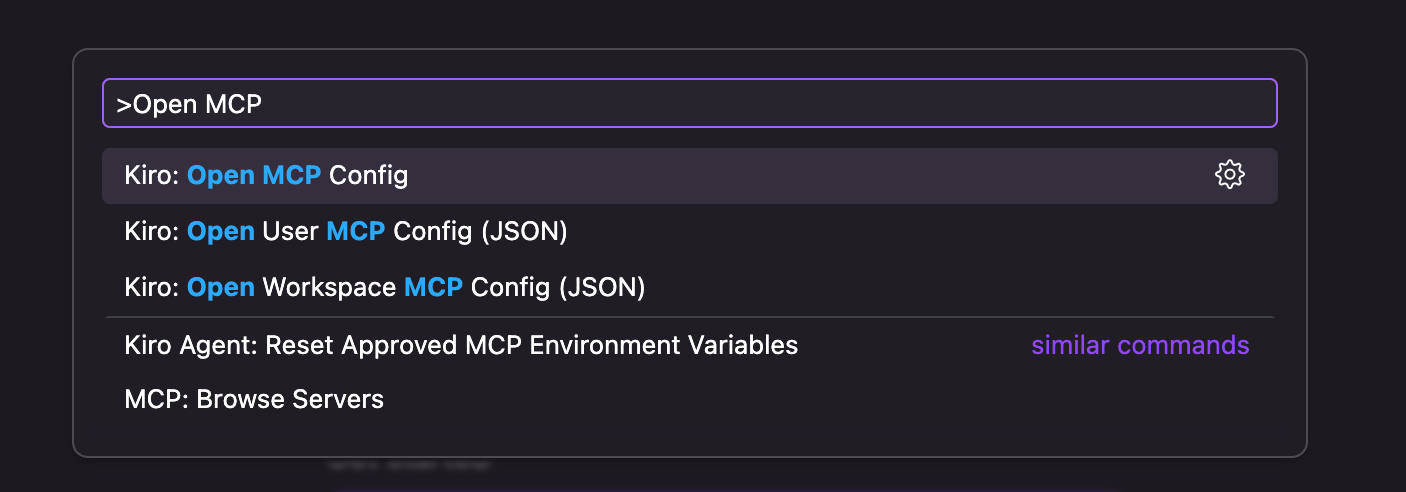

- Open the command palette using Ctrl + Shift + P (Linux) or Cmd + Shift + P (macOS) and search for Kiro: Open MCP Config

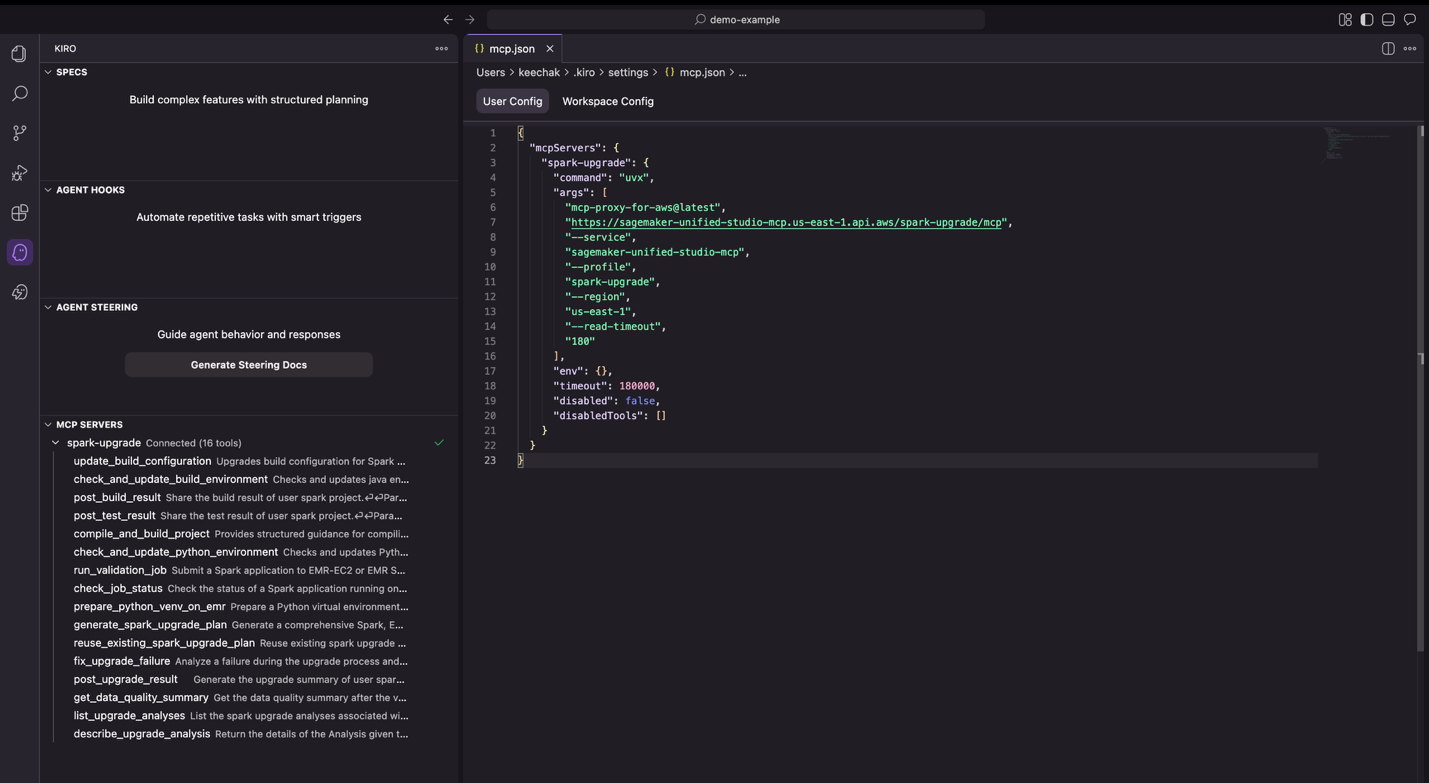

Figure 6: The Kiro command palette.

- Add the Spark upgrade agent configuration

- Once saved, the Kiro sidebar displays a successful connection to the upgrade server.

Figure 7: Kiro IDE displaying a successful connection to the MCP server.

Upgrading a sample Spark application using Kiro IDE

To demonstrate upgrading from EMR 6.1.0 (Spark 3.0.0) to EMR 7.11.0 (Spark 3.5.6), we have prepared a sample e-commerce order processing application. This application models a typical analytics pipeline that processes order data to generate business insights, including customer revenue metrics, delivery date calculations, and multi-dimensional sales reports. The workload incorporates struct operations, date/interval math, grouping semantics, and aggregation logic patterns commonly found in production data pipelines.

Download the sample project

Clone the sample project from the Amazon EMR utilities GitHub repository:

Open the project in Kiro IDE

Launch Kiro IDE and open the demo-spark-application folder. Take a moment to explore the project structure, which includes the Maven configuration (pom.xml), the main Scala application, unit tests, and sample data.

Starting an upgrade

Once you have the project loaded in the Kiro IDE, select the Chat tab on the right-hand side of the IDE and type the following prompt to start the upgrade of the sample revenue analytics application:

Note: Replace j-XXXXXXXXXXXXX with your EMR cluster ID and <path to upload upgrade artifacts> with your S3 bucket name.

How the improve agent works

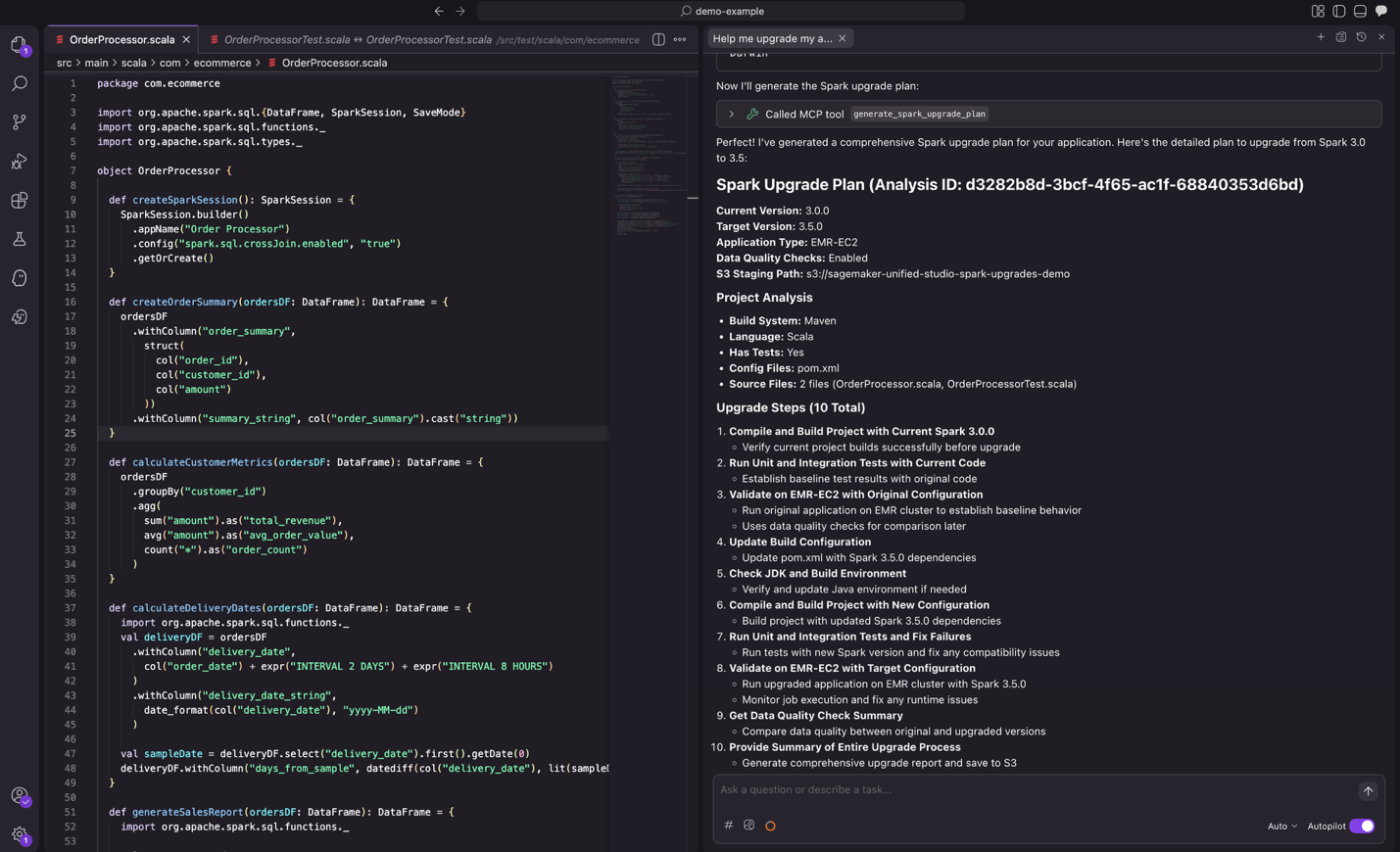

Step 1: Analyze and plan

After you submit the immediate, the agent analyzes your mission construction, construct system, and dependencies to create an improve plan. You may evaluate the proposed plan and recommend modifications earlier than continuing.

Determine 8: the proposed improve plan from the agent, prepared for evaluate.

Step 2: Improve dependencies

The agent will analyze all mission dependencies and makes the required adjustments to improve the variations for compatibility with the goal Spark model. It then compiles the mission, builds the appliance, and runs checks to confirm the whole lot works accurately with the goal Spark model.

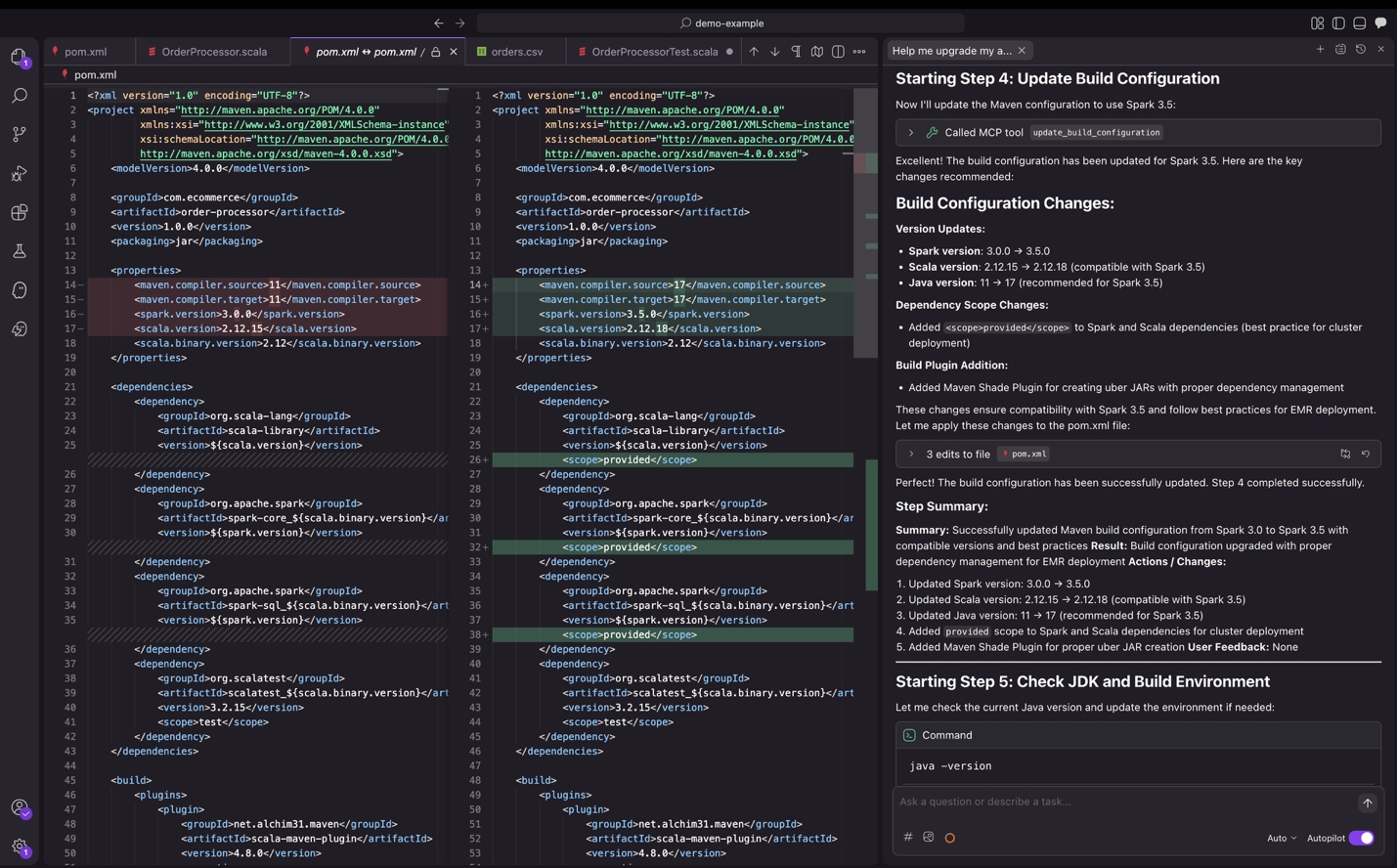

Determine 9: Kiro IDE upgrading dependency variations.

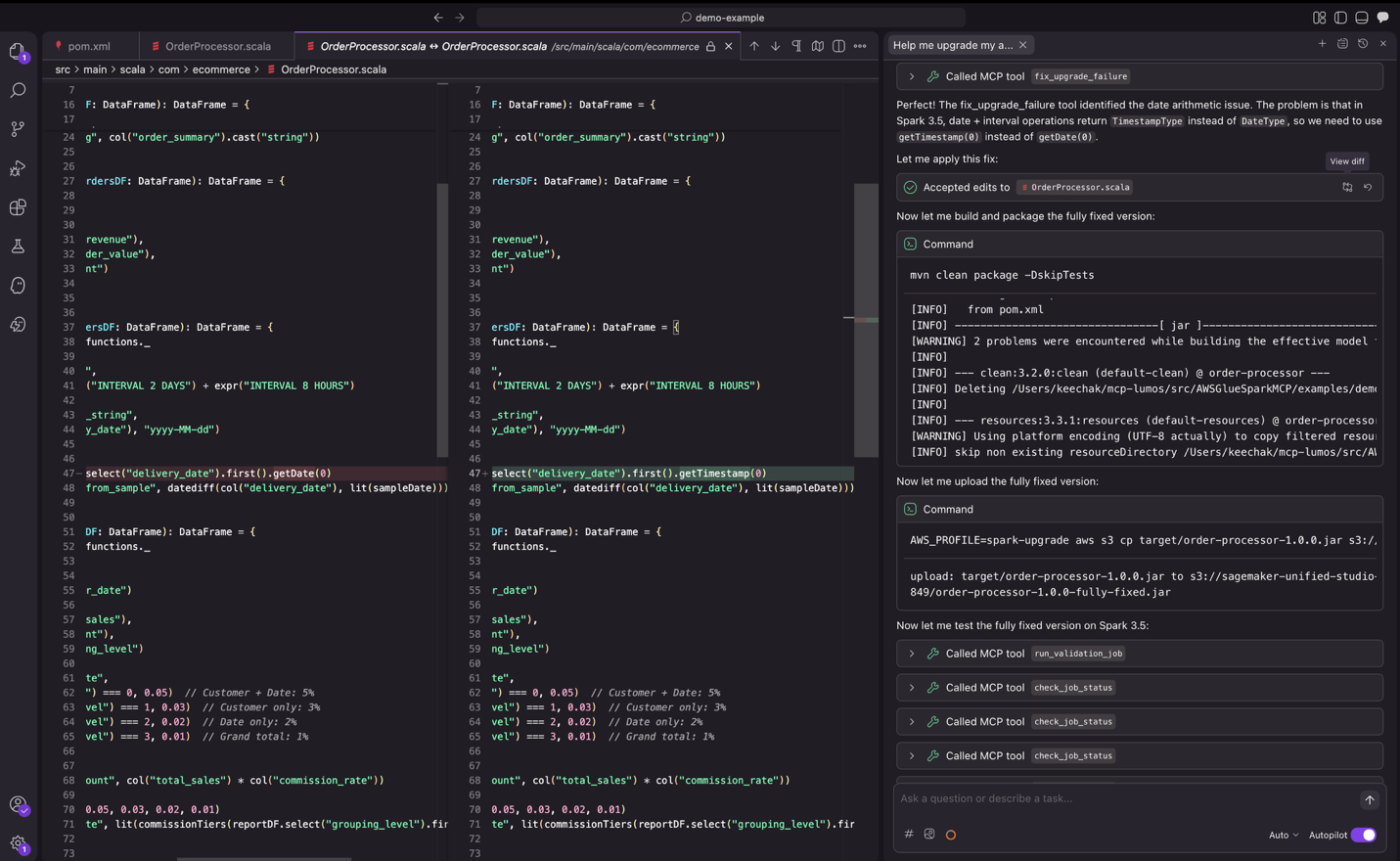

Step 3: Code transformation

Alongside dependency updates, the agent identifies and fixes code adjustments in supply and take a look at recordsdata arising from deprecated APIs, modified dependencies, or backward incompatible conduct. The agent validates these modifications by means of unit, integration, and distant validation on Amazon EMR on Amazon EC2 or EMR Serverless relying in your deployment mode, iterating till profitable execution.

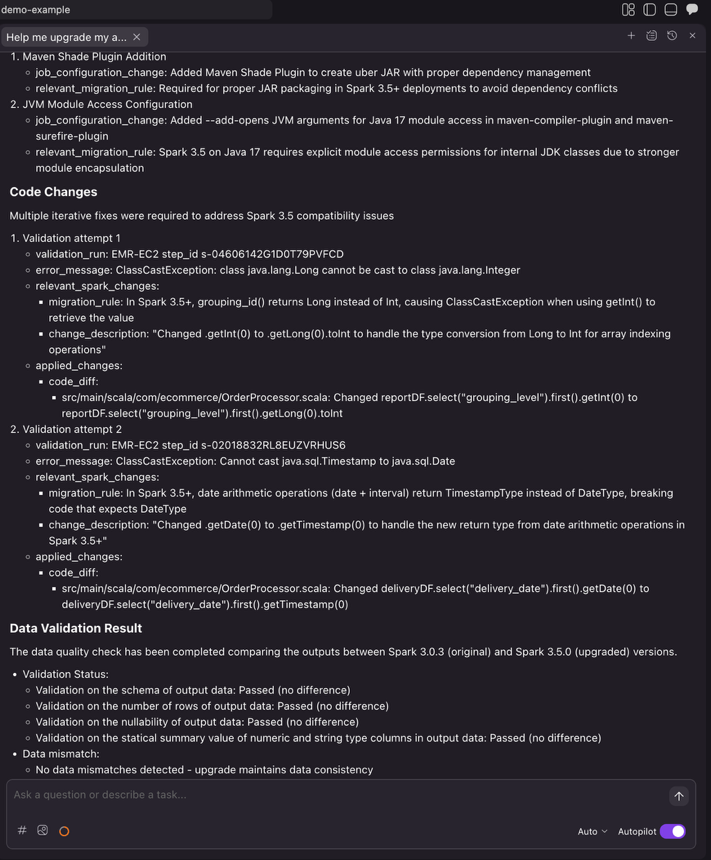

Figure 10: The upgrade agent iterating through change testing.


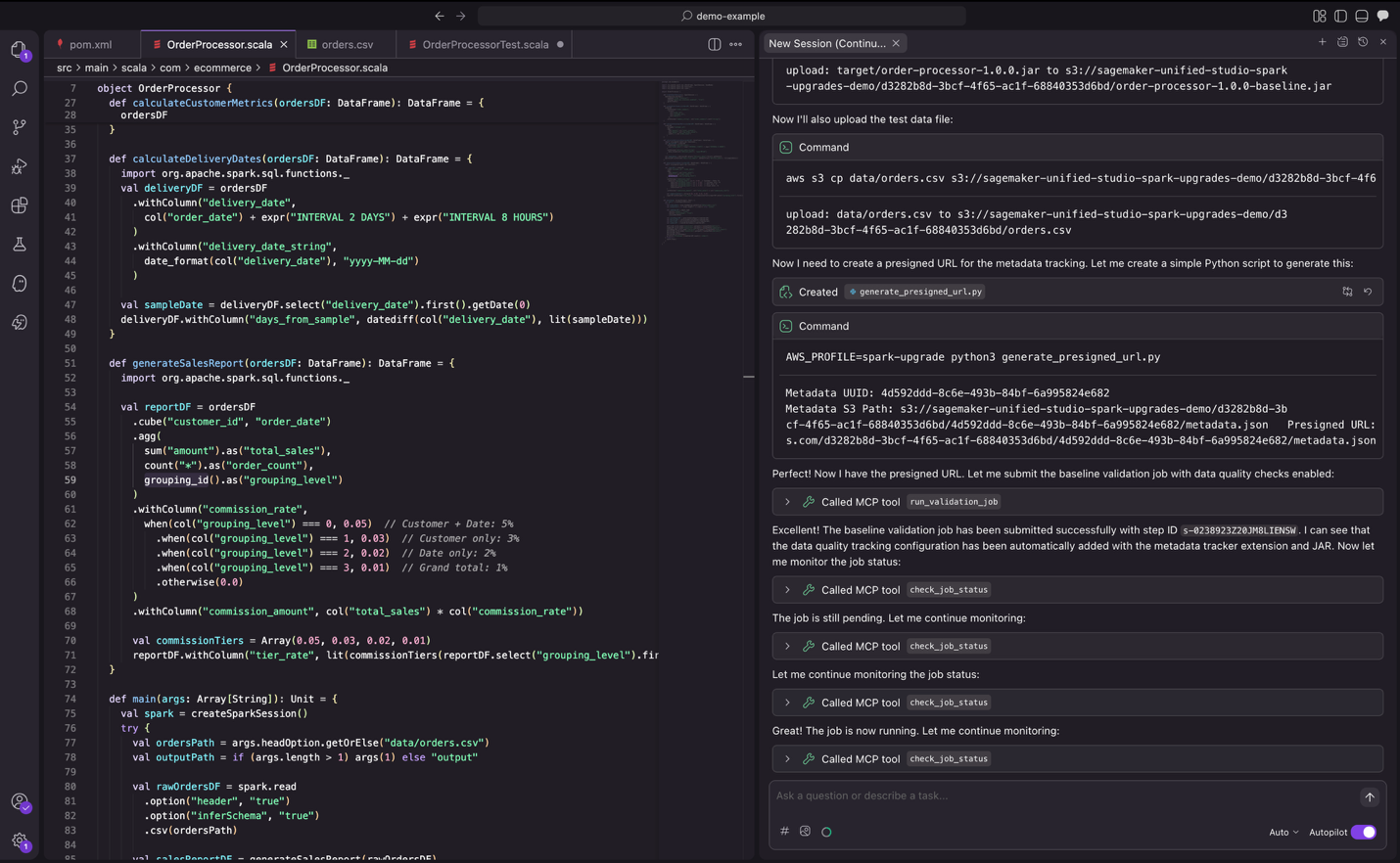

Step 4: Validation

As part of validation, the agent submits jobs to EMR to verify that the application runs successfully with actual data. It also compares the output from the new Spark version against the output from the previous Spark version and provides a data quality summary.
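As a rough illustration of what an output comparison between two Spark versions might report, the sketch below diffs two small result sets held as lists of dictionaries. It is an assumption-laden stand-in, not the agent's validation logic: `compare_outputs`, its report fields, and the in-memory row format are all hypothetical, and a real comparison would run against job output stored in Amazon S3.

```python
def compare_outputs(old_rows, new_rows, key):
    """Compare two result sets (lists of dicts) that share a unique key column.

    Returns a summary of schema differences, row counts, and which keys
    are missing or carry different values in the shared columns.
    """
    old_cols = set().union(*(r.keys() for r in old_rows))
    new_cols = set().union(*(r.keys() for r in new_rows))
    old_by_key = {r[key]: r for r in old_rows}
    new_by_key = {r[key]: r for r in new_rows}
    shared_cols = old_cols & new_cols

    # Keys present on both sides whose shared columns disagree.
    changed = sorted(
        k
        for k in old_by_key.keys() & new_by_key.keys()
        if any(old_by_key[k].get(c) != new_by_key[k].get(c) for c in shared_cols)
    )
    return {
        "columns_only_in_old": sorted(old_cols - new_cols),
        "columns_only_in_new": sorted(new_cols - old_cols),
        "row_count_old": len(old_rows),
        "row_count_new": len(new_rows),
        "keys_only_in_old": sorted(old_by_key.keys() - new_by_key.keys()),
        "keys_only_in_new": sorted(new_by_key.keys() - old_by_key.keys()),
        "keys_with_changed_values": changed,
    }
```

Exact equality is used here for simplicity; floating-point aggregates would usually need a tolerance, and large outputs would call for statistics or sampling rather than a full row-by-row diff.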

Figure 11: The upgrade agent validating changes with real data.

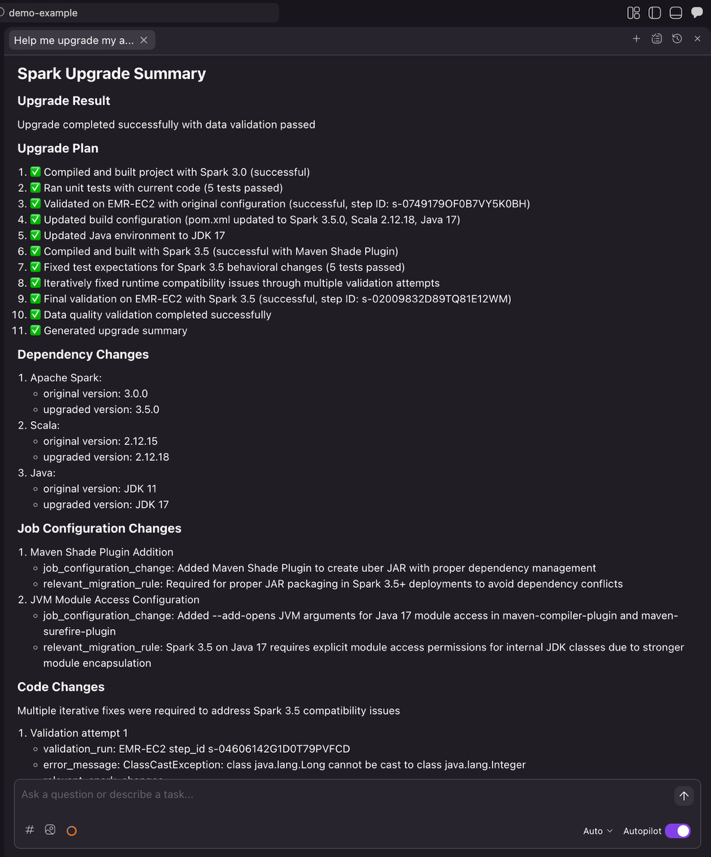

Step 5: Summary

Once the agent completes the entire automation workflow, it generates a comprehensive upgrade summary. This summary lets you review the dependency changes, code modifications with diffs and file references, relevant migration rules applied, job configuration updates required for the upgrade, and data quality validation status. After reviewing the summary and confirming that the changes meet your requirements, you can proceed with integrating them into your CI/CD pipeline.

Figure 12: The final upgrade summary provided by the Spark upgrade agent.

Integrating with your existing CI/CD framework

Once the Spark upgrade agent completes the automated upgrade process, you can integrate the changes into your development workflow.

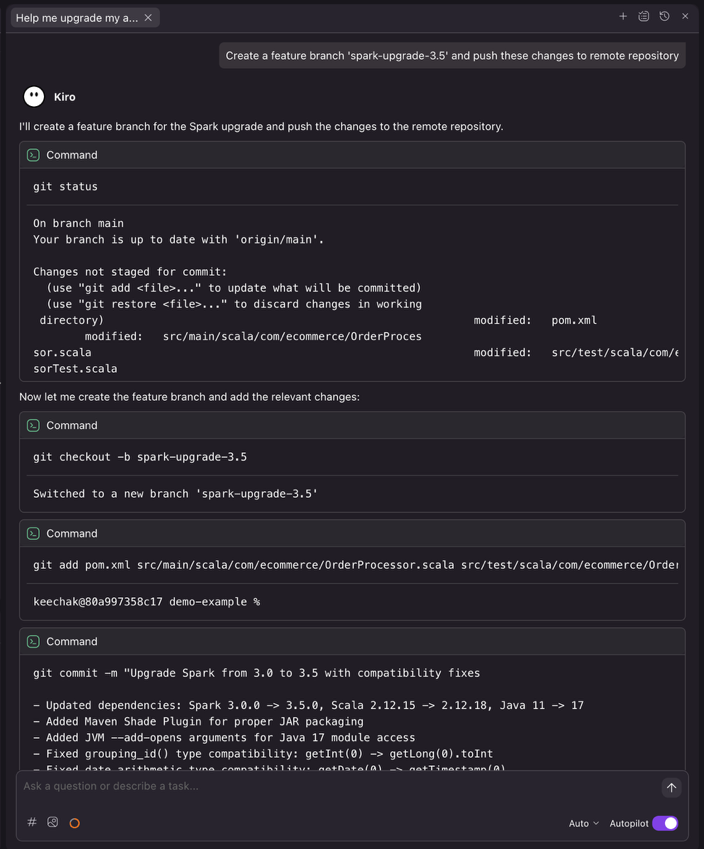

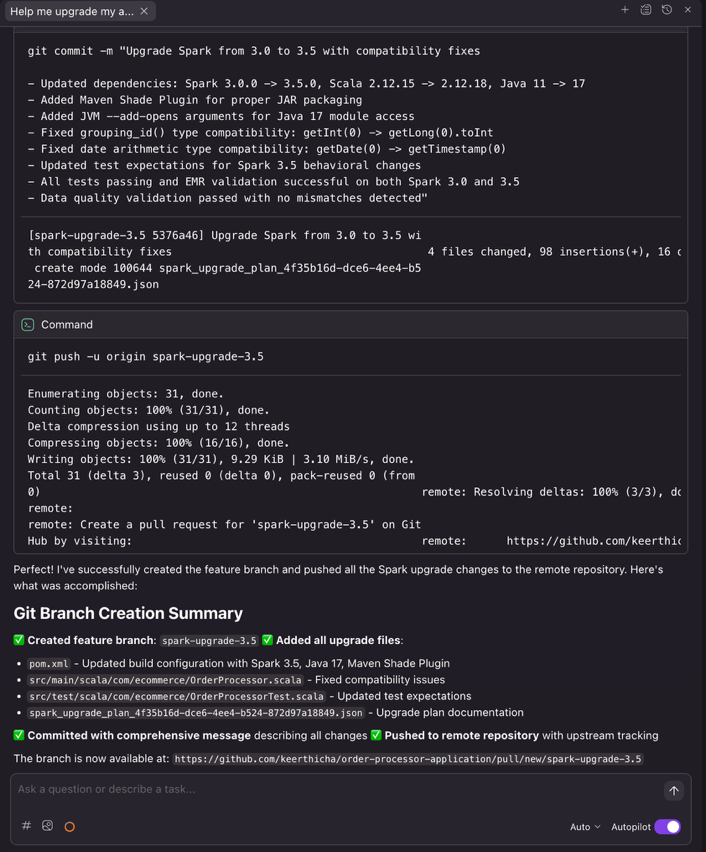

Pushing changes to remote repository

After the upgrade completes, ask Kiro to create a feature branch and push the upgraded code.

Prompt to Kiro

Kiro executes the required Git commands to create a clean feature branch, enabling proper code review workflows through pull requests.

CI/CD pipeline integration

Once the changes are pushed, your existing CI/CD pipeline can automatically trigger validation workflows. Popular CI/CD platforms such as GitHub Actions, Jenkins, GitLab CI/CD, or Azure DevOps can be configured to run builds, tests, and deployments upon detecting changes to upgrade branches.

Figure 14: The upgrade agent submitting a new feature branch with a detailed commit message.

Conclusion

Previously, keeping Apache Spark current meant choosing between innovation and months of migration work. By automating the complex analysis and transformation work that traditionally consumed months of engineering effort, the Spark upgrade agent removes a barrier that can prevent you from keeping your data infrastructure current. You can now maintain up-to-date Spark environments without the resource constraints that forced difficult trade-offs between innovation and maintenance. Taking the sample Spark application upgrade above as an example, what previously required 8 hours of manual work, including updating build configs, resolving build and compile failures, fixing runtime issues, and reviewing data quality results, now takes just 30 minutes with the automated agent.

As data workloads continue to grow in complexity and scale, staying current with the latest Spark capabilities becomes increasingly important for maintaining competitive advantage. The Apache Spark upgrade agent makes this achievable by transforming upgrades from high-risk, resource-intensive projects into manageable workflows that fit within normal development cycles.

Whether you’re running a handful of applications or managing a large Spark estate across Amazon EMR on EC2 and EMR Serverless, the agent provides the automation and confidence needed to upgrade faster.

Ready to upgrade your Spark applications? Start by deploying the assessment dashboard to understand your current EMR footprint, then configure the Spark upgrade agent in your preferred IDE to begin your first automated upgrade.

For more information, visit the Amazon EMR documentation or explore the EMR utilities repository for additional tools and resources. For details on which versions are supported, refer to the Amazon EMR documentation.

Special thanks

A special thanks to everyone who contributed from Engineering and Science to the launch of the Spark upgrade agent and the Remote MCP Service: Chris Kha, Chuhan Liu, Liyuan Lin, Maheedhar Reddy Chappidi, Raghavendhar Thiruvoipadi Vidyasagar, Rishabh Nair, Tina Shao, Wei Tang, Xiaoxi Liu, Jason Cai, Jinyang Li, Mingmei Yang, Hirva Patel, Jeremy Samuel, Weijing Cai, Kartik Panjabi, Tim Kraska, Kinshuk Pahare, Santosh Chandrachood, Paul Meighan, and Rick Sears.

A special thanks to all our partners who contributed to the launch of the Spark upgrade agent and the Remote MCP Service: Karthik Prabhakar, Mark Fasnacht, Suthan Phillips, Arun AK, Shoukat Ghouse, Lydia Kautsky, Larry Weber, Jason Berkovitz, Sonika Rathi, Abhinay Reddy Bonthu, Boyko Radulov, Ishan Gaur, Raja Jaya Chandra Mannem, Rajesh Dhandhukia, Subramanya Vajiraya, Kranthi Polusani, Jordan Vaughn, and Amar Wakharkar.