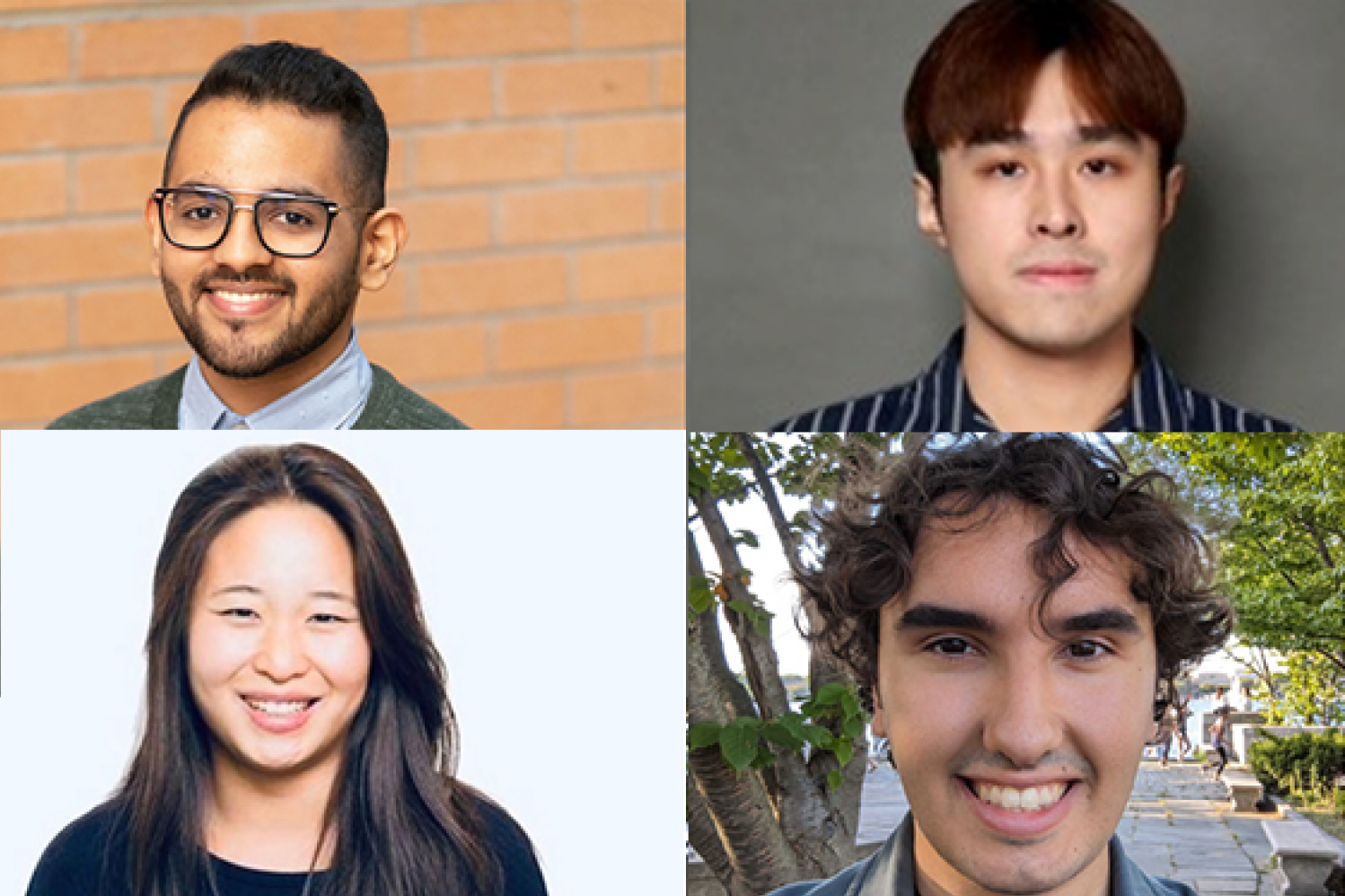

For natural language to be an effective form of communication, the parties involved need to be able to understand words and their context, assume that the content is largely shared in good faith and is trustworthy, reason about the information being shared, and then apply it to real-world scenarios. MIT PhD students interning with the MIT-IBM Watson AI Lab (Athul Paul Jacob SM ’22, Maohao Shen SM ’23, Victor Butoi, and Andi Peng SM ’23) are working to attack each step of this process that’s baked into natural language models, so that AI systems can be more dependable and accurate for users.

To achieve this, Jacob’s research strikes at the heart of existing natural language models to improve the output, using game theory. His interests, he says, are two-fold: “One is understanding how humans behave, using the lens of multi-agent systems and language understanding, and the second thing is, ‘How do you use that as an insight to build better AI systems?’” His work stems from the board game “Diplomacy,” where his research team developed a system that could learn and predict human behaviors and negotiate strategically to achieve a desired, optimal outcome.

“This was a game where you need to build trust; you need to communicate using language. You need to also play against six other players at the same time, which were very different from all the kinds of task domains people were tackling in the past,” says Jacob, referring to other games like poker and Go that researchers put to neural networks. “In doing so, there were a lot of research challenges. One was, ‘How do you model humans? How do you know when humans tend to act irrationally?’” Jacob and his research mentors, including Associate Professor Jacob Andreas and Assistant Professor Gabriele Farina of the MIT Department of Electrical Engineering and Computer Science (EECS) and the MIT-IBM Watson AI Lab’s Yikang Shen, recast the problem of language generation as a two-player game.

Using “generator” and “discriminator” models, Jacob’s team developed a natural language system to produce answers to questions and then observe the answers and determine if they are correct. If they are, the AI system receives a point; if not, no point is awarded. Language models notoriously tend to hallucinate, making them less trustworthy; this no-regret learning algorithm collaboratively takes a natural language model and encourages the system’s answers to be more truthful and reliable, while keeping the solutions close to the pre-trained language model’s priors. Jacob says that using this technique in conjunction with a smaller language model could likely make it competitive with the performance of a model many times larger.

Once a language model generates a result, researchers ideally want its confidence in its generation to align with its accuracy, but this frequently isn’t the case. Hallucinations can occur with the model reporting high confidence when it should be low. Maohao Shen and his group, with mentors Gregory Wornell, Sumitomo Professor of Engineering in EECS, and lab researchers with IBM Research Subhro Das, Prasanna Sattigeri, and Soumya Ghosh, are looking to fix this through uncertainty quantification (UQ). “Our project aims to calibrate language models when they are poorly calibrated,” says Shen. Specifically, they’re looking at the classification problem. For this, Shen allows a language model to generate free text, which is then converted into a multiple-choice classification task. For instance, they might ask the model to solve a math problem and then ask it if the answer it generated is correct as “yes, no, or maybe.” This helps to determine if the model is over- or under-confident.

Automating this, the team developed a technique that helps tune the confidence output by a pre-trained language model. The researchers trained an auxiliary model using the ground-truth information so that their system can correct the language model. “If your model is over-confident in its prediction, we are able to detect it and make it less confident, and vice versa,” explains Shen. The team evaluated their technique on multiple popular benchmark datasets to show how well it generalizes to unseen tasks to realign the accuracy and confidence of language model predictions. “After training, you can just plug in and apply this technique to new tasks without any other supervision,” says Shen. “The only thing you need is the data for that new task.”
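To make "aligning confidence with accuracy" concrete, the following is a minimal sketch of expected calibration error (ECE), a standard way to measure how far a model's stated confidence is from its actual accuracy. It is not the team's method; the function name, the equal-width binning, and the sample numbers are assumptions chosen for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |accuracy - mean confidence| over equal-width bins.

    confidences: floats in [0, 1], the model's stated confidence per answer.
    correct: booleans, whether each answer was actually right.
    (Hypothetical helper for illustration; not from the article.)
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    total = len(confidences)
    if total == 0:
        return 0.0

    # Group predictions by confidence bin; a confidence of 1.0 goes in the last bin.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        # An over-confident bin has mean_conf > accuracy; under-confident is the reverse.
        ece += (len(members) / total) * abs(accuracy - mean_conf)
    return ece


# Illustrative data: the model claims 90% confidence but is right half the time.
overconfident = expected_calibration_error([0.9, 0.9, 0.9, 0.9],
                                           [True, True, False, False])
print(round(overconfident, 3))
```

A value near zero means stated confidence tracks accuracy; a calibration technique like the one described above would aim to reduce this gap on new tasks.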

Victor Butoi also works to enhance model capability, but his lab team (which includes John Guttag, the Dugald C. Jackson Professor of Computer Science and Electrical Engineering in EECS; lab researchers Leonid Karlinsky and Rogerio Feris of IBM Research; and lab affiliates Hilde Kühne of the University of Bonn and Wei Lin of Graz University of Technology) is instead creating techniques to allow vision-language models to reason about what they’re seeing, and is designing prompts to unlock new learning abilities and understand key phrases.

Compositional reasoning is just another aspect of the decision-making process that we ask machine-learning models to perform in order for them to be helpful in real-world situations, explains Butoi. “You need to be able to think about problems compositionally and solve subtasks,” says Butoi, “like, if you’re saying the chair is to the left of the person, you need to recognize both the chair and the person. You need to understand directions.” And then once the model understands “left,” the research team wants the model to be able to answer other questions involving “left.”

Surprisingly, vision-language models do not reason well about composition, Butoi explains, but they can be helped to, using a model that can “lead the witness,” if you will. The team developed a model that was tweaked using a technique called low-rank adaptation of large language models (LoRA) and trained on an annotated dataset called Visual Genome, which has objects in an image and arrows denoting relationships, like directions. In this case, the trained LoRA model would be guided to say something about “left” relationships, and this caption output would then be used to provide context and prompt the vision-language model, making it a “significantly easier task,” says Butoi.
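For readers unfamiliar with LoRA, the general idea (as a sketch of the technique itself, not of the team's specific setup) is to freeze a pre-trained weight matrix and train only a small low-rank correction to it. The symbols below are the conventional ones and are not taken from the article:

$$
h = W_0 x + B A x, \qquad W_0 \in \mathbb{R}^{d \times k},\; B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

Here $W_0$ stays fixed while only $A$ and $B$ are trained, so the number of trainable parameters drops from $d \cdot k$ to $r \cdot (d + k)$.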

In the world of robotics, AI systems also engage with their surroundings using computer vision and language. The settings may range from warehouses to the home. Andi Peng and mentors Julie Shah, MIT’s H.N. Slater Professor in Aeronautics and Astronautics, and Chuang Gan, of the lab and the University of Massachusetts at Amherst, are focusing on assisting people with physical constraints, using virtual worlds. For this, Peng’s group is developing two embodied AI models, a “human” that needs support and a helper agent, in a simulated environment called ThreeDWorld. Focusing on human/robot interactions, the team leverages semantic priors captured by large language models to help the helper AI infer, using natural language, what the “human” agent might not be able to do and the motivation behind the “human’s” actions. The team is looking to strengthen the helper’s sequential decision-making, bidirectional communication, ability to understand the physical scene, and sense of how best to contribute.

“A lot of people think that AI programs should be autonomous, but I think that an important part of the process is that we build robots and systems for humans, and we want to convey human knowledge,” says Peng. “We don’t want a system to do something in a weird way; we want them to do it in a human way that we can understand.”