Organizations building petabyte-scale data lakes face growing challenges as their data grows. Batch updates and compliance deletes create a proliferation of positional delete files, slowing downstream data pipelines and driving up storage costs. Tracking data changes for audit trails and incremental processing requires custom, engine-specific implementations that add complexity and maintenance burden. As data volumes scale, these challenges compound, leaving data teams juggling custom solutions and rising operational costs just to maintain data freshness and compliance.

Apache Iceberg V3 addresses these challenges with two new capabilities: deletion vectors and row lineage. AWS now delivers these capabilities across Apache Spark on Amazon EMR 7.12, AWS Glue, Amazon SageMaker notebooks, Amazon S3 Tables, and the AWS Glue Data Catalog, giving you a complete, integrated V3 experience without custom implementation. This means faster writes, lower storage costs, comprehensive audit trails, and efficient incremental processing, all working seamlessly across your entire data lake architecture.

In this post, we walk you through the new capabilities in Iceberg V3, explain how deletion vectors and row lineage address these challenges, explore real-world use cases across industries, and provide practical guidance on implementing Iceberg V3 features across AWS analytics, catalog, and storage services.

What’s new in Iceberg V3

Iceberg V3 introduces new capabilities and data types. Two key capabilities that address the challenges discussed earlier are deletion vectors and row lineage.

Deletion vectors replace positional delete files with an efficient binary format stored in Puffin files. Instead of creating a separate delete file for each delete operation, Iceberg V3 consolidates the delete references for a data file into a single deletion vector, so there is one vector per data file rather than one delete reference per deleted row. During query execution, engines efficiently filter out deleted rows using these compact vectors, maintaining query performance while removing the need to merge multiple delete files.

This avoids write amplification from random batch updates and GDPR compliance deletes, significantly reducing the overhead of maintaining fresh data. High-frequency update workloads can see immediate improvements in write performance and reduced storage costs from fewer small delete files. Additionally, having fewer small delete files reduces table maintenance costs for compaction operations.
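To make the difference concrete, the following Python sketch models the two bookkeeping styles in memory. It is an illustration under stated assumptions, not Iceberg's implementation: the class names and the file name are hypothetical, and a plain Python set stands in for the compact binary vector that Iceberg stores in Puffin files.

```python
from collections import defaultdict


class PositionalDeleteLog:
    """V2-style bookkeeping: every delete operation adds a new delete file."""

    def __init__(self):
        self.delete_files = []  # one entry per delete operation

    def delete(self, data_file, positions):
        self.delete_files.append((data_file, set(positions)))

    def deleted_positions(self, data_file):
        # Readers must merge every delete file that references the data file.
        merged = set()
        for name, positions in self.delete_files:
            if name == data_file:
                merged |= positions
        return merged


class DeletionVectors:
    """V3-style bookkeeping: at most one deletion vector per data file."""

    def __init__(self):
        self.vectors = defaultdict(set)  # data file -> deleted row positions

    def delete(self, data_file, positions):
        # A new delete is folded into the existing vector for that file.
        self.vectors[data_file] |= set(positions)

    def deleted_positions(self, data_file):
        return self.vectors.get(data_file, set())


def live_rows(rows, deleted):
    """Keep only the rows whose position is not marked as deleted."""
    return [row for pos, row in enumerate(rows) if pos not in deleted]


rows = ["a", "b", "c", "d", "e"]
v2, v3 = PositionalDeleteLog(), DeletionVectors()
for positions in ([1], [3], [4]):  # three separate delete operations
    v2.delete("data-0001.parquet", positions)
    v3.delete("data-0001.parquet", positions)

print(len(v2.delete_files), len(v3.vectors))  # 3 1
print(live_rows(rows, v3.deleted_positions("data-0001.parquet")))  # ['a', 'c']
```

Both models hide the same rows, but the V2-style log grows with every delete operation while the V3-style structure keeps a single entry for the data file.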

Row lineage enables precise change tracking at the row level with full auditability. Row lineage adds metadata fields to each data file that track when rows were created and last modified. The _row_id field uniquely identifies each row, and the _last_updated_sequence_number field records the sequence number of the commit that last modified the row. These fields enable efficient change tracking queries without scanning entire tables, and they’re maintained automatically as part of the Iceberg specification without requiring custom code.

Before row lineage, change tracking in Iceberg provided only the net changes between snapshots, making it difficult to track individual record modifications. Row lineage metadata fields can now be queried to return all incremental changes, giving you full fidelity for auditing data modifications and regulatory compliance. For data transformations, your downstream systems can process changes incrementally, speeding up data pipelines and reducing compute costs for change data capture (CDC) workflows. Row lineage is engine agnostic, interoperable, and built into the Iceberg V3 specification, alleviating the need for custom, engine-specific change tracking implementations.
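The following toy model shows how the two lineage fields behave across an insert, a delete, and an update. It is a sketch only: the LineageTable class, its methods, and the sample records are hypothetical, and the attributes row_id and last_updated_seq merely stand in for _row_id and _last_updated_sequence_number.

```python
from dataclasses import dataclass


@dataclass
class Row:
    row_id: int            # stands in for _row_id
    last_updated_seq: int  # stands in for _last_updated_sequence_number
    customer_id: int
    status: str


class LineageTable:
    """Toy table that maintains lineage fields on every commit."""

    def __init__(self):
        self.rows = {}
        self.next_row_id = 0
        self.sequence_number = 0

    def insert(self, records):
        self.sequence_number += 1
        for customer_id, status in records:
            self.rows[customer_id] = Row(
                self.next_row_id, self.sequence_number, customer_id, status
            )
            self.next_row_id += 1

    def update(self, customer_id, status):
        self.sequence_number += 1
        row = self.rows[customer_id]
        row.status = status  # the row keeps its row_id
        row.last_updated_seq = self.sequence_number

    def delete(self, customer_id):
        self.sequence_number += 1
        del self.rows[customer_id]

    def changed_since(self, sequence_number):
        """Return the rows modified after the given sequence number."""
        return [
            r for r in self.rows.values() if r.last_updated_seq > sequence_number
        ]


table = LineageTable()
table.insert([(i, "active") for i in range(1, 6)])  # sequence number 1
table.delete(5)                                      # sequence number 2
table.update(2, "inactive")                          # sequence number 3

changed = table.changed_since(1)
print([(r.customer_id, r.row_id, r.last_updated_seq) for r in changed])  # [(2, 1, 3)]
```

A downstream job that remembers the last sequence number it processed only needs the rows returned by changed_since, which is the incremental pattern the row lineage fields are meant to support.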

Real-world use cases

The new Iceberg V3 capabilities address critical challenges across multiple industries:

- Marketing and advertising services organizations – You can now efficiently handle GDPR right-to-be-forgotten requests and regulatory compliance deletes without the write amplification that previously degraded pipeline performance. Row lineage provides complete audit trails for data modifications, meeting strict regulatory requirements for data governance.

- Ecommerce platforms processing millions of product updates and inventory changes daily – You can maintain data freshness while reducing storage costs. Deletion vectors enable faster upsert operations, helping teams meet aggressive SLA requirements during peak shopping periods.

- Healthcare and life sciences companies – You can track patient data modifications with precision for compliance purposes while efficiently processing large-scale genomic datasets. Row lineage provides the detailed change history required for clinical trial audits and regulatory submissions.

- Media and entertainment providers managing large catalogs of user viewing data – You can efficiently process incremental changes for recommendation engines. Row lineage enables downstream analytics systems to process only changed records, reducing compute costs in incremental processing scenarios.

Get started with Iceberg V3

To take advantage of deletion vectors for optimized writes and row lineage for built-in change tracking in Iceberg V3, set the table property format-version = 3 during table creation. Alternatively, setting this property on an existing Iceberg V2 table atomically upgrades the table without data rewrites. Before creating or upgrading V3 tables, make sure the Iceberg engines in your solution are V3-compatible. Refer to Apache Iceberg V3 on AWS for more details.

Create a new V3 table with Apache Spark on Amazon EMR 7.12

The following code creates a new table named customer_data. Setting the table property format-version = 3 creates a V3 table. If the format-version table property isn’t explicitly set, a V2 table is created, because V2 is currently the Iceberg default table version. Setting write.delete.mode, write.update.mode, and write.merge.mode to merge-on-read configures Spark to write deletion vectors for delete, update, or merge statements performed on the table.
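A minimal PySpark-style sketch of such a statement follows. The table properties come from the description above; the catalog and namespace (glue_catalog.demo_db), the column names, and the helper function are assumptions made for illustration.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

# Column names and types are illustrative assumptions.
CREATE_TABLE_SQL = f"""
CREATE TABLE IF NOT EXISTS {TABLE} (
    customer_id BIGINT,
    name        STRING,
    email       STRING
)
USING iceberg
TBLPROPERTIES (
    'format-version'    = '3',
    'write.delete.mode' = 'merge-on-read',
    'write.update.mode' = 'merge-on-read',
    'write.merge.mode'  = 'merge-on-read'
)
"""


def create_customer_table(spark):
    """Run the statement on a SparkSession that has an Iceberg catalog configured."""
    spark.sql(CREATE_TABLE_SQL)


print(CREATE_TABLE_SQL)
```

Keeping the SQL in a constant makes it easy to review the table properties before passing an existing SparkSession to create_customer_table.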

Run the following code to insert records into the customer_data table:
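A sketch of such an insert, assuming the hypothetical glue_catalog.demo_db namespace and an illustrative three-column layout (customer_id, name, email). The five sample rows are invented so that customer_id values 1 through 5 exist for the later delete and update steps.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

# Invented sample rows: (customer_id, name, email).
SAMPLE_ROWS = [
    (1, "Alice", "alice@example.com"),
    (2, "Bob", "bob@example.com"),
    (3, "Carol", "carol@example.com"),
    (4, "Dave", "dave@example.com"),
    (5, "Erin", "erin@example.com"),
]


def insert_sql(table, rows):
    """Build a single INSERT statement for the sample rows."""
    values = ",\n    ".join(
        f"({customer_id}, '{name}', '{email}')" for customer_id, name, email in rows
    )
    return f"INSERT INTO {table} VALUES\n    {values}"


INSERT_SQL = insert_sql(TABLE, SAMPLE_ROWS)
print(INSERT_SQL)  # pass the string to spark.sql(...) on an existing SparkSession
```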

Delete a record where customer_id = 5 to generate a delete file:
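A sketch of the delete, again assuming the hypothetical glue_catalog.demo_db namespace. The predicate comes from the sentence above; the helper function is illustrative.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

DELETE_SQL = f"DELETE FROM {TABLE} WHERE customer_id = 5"


def delete_customer(spark):
    """Run the delete on a SparkSession that has an Iceberg catalog configured."""
    spark.sql(DELETE_SQL)


print(DELETE_SQL)
```

With write.delete.mode set to merge-on-read on a V3 table, a statement like this should mark the row as deleted instead of rewriting the data file.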

Updating a record with the following update statement also generates a delete file:
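A sketch of such an update. The target row (customer_id = 2) matches the row lineage result described later in this post; the email column and its new value are assumptions, as is the glue_catalog.demo_db namespace.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

# The column being changed and its new value are illustrative assumptions.
UPDATE_SQL = (
    f"UPDATE {TABLE} "
    "SET email = 'bob.new@example.com' "
    "WHERE customer_id = 2"
)


def update_customer(spark):
    """Run the update on a SparkSession that has an Iceberg catalog configured."""
    spark.sql(UPDATE_SQL)


print(UPDATE_SQL)
```

In merge-on-read mode, an update is written as a delete of the old row version plus an insert of the new one, which is why it also produces a delete file.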

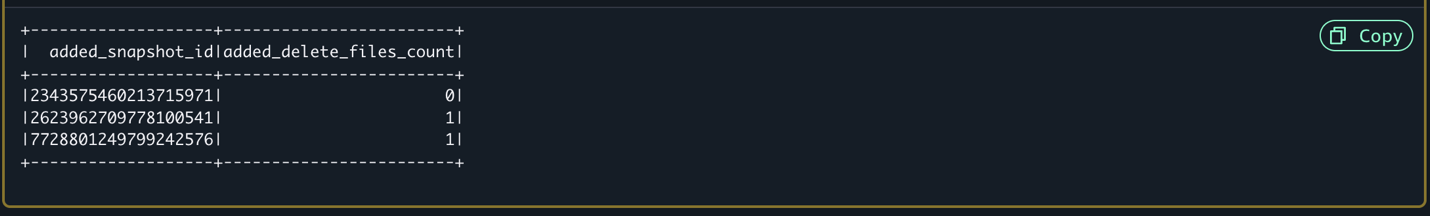

The last part of this example queries the manifests metadata table to verify that delete files were produced:
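A sketch of such a verification query, assuming the hypothetical glue_catalog.demo_db namespace. The manifests metadata table and the added_delete_files_count column are referenced in the text; the other selected columns are a choice made for this example, and the exact rows returned depend on how the engine groups manifests.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

# Iceberg exposes metadata tables by appending their name to the table identifier.
MANIFESTS_SQL = (
    "SELECT added_snapshot_id, added_data_files_count, added_delete_files_count "
    f"FROM {TABLE}.manifests"
)


def show_manifests(spark):
    """Display manifest-level file counts for the table."""
    spark.sql(MANIFESTS_SQL).show(truncate=False)


print(MANIFESTS_SQL)
```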

This query returns three records, as shown in the screenshot. The added_delete_files_count for the first snapshot, which inserts records, should be 0. The next two snapshots, for the corresponding delete and update statements, should each have an added_delete_files_count value of 1.

Query row lineage for change tracking

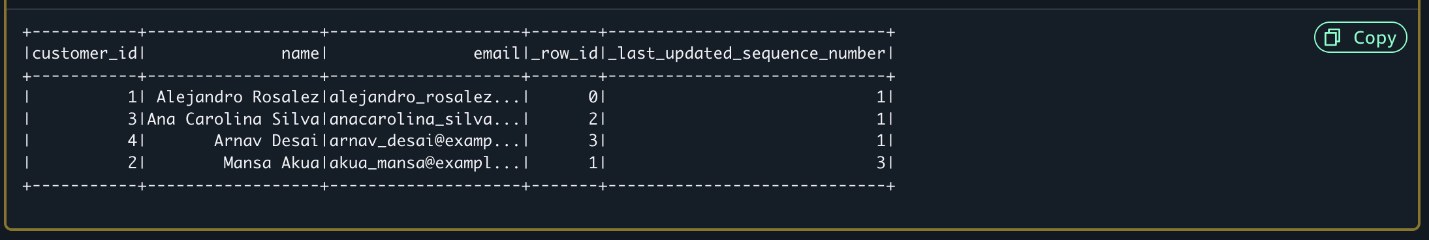

Row lineage is automatically enabled on V3 tables. The following example includes row lineage metadata fields and an example of how you can query table changes after a row lineage sequence number:
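A sketch of such a query. The metadata fields _row_id and _last_updated_sequence_number are the ones named in this post; the business columns, the glue_catalog.demo_db namespace, and the changes_after_sql helper are assumptions.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"


def changes_after_sql(sequence_number):
    """Build a query for rows modified after the given sequence number."""
    if not isinstance(sequence_number, int) or sequence_number < 0:
        raise ValueError("sequence_number must be a non-negative integer")
    return (
        "SELECT customer_id, name, email, _row_id, _last_updated_sequence_number "
        f"FROM {TABLE} "
        f"WHERE _last_updated_sequence_number > {sequence_number} "
        "ORDER BY customer_id"
    )


# Passing 0 selects every live row along with its lineage fields.
print(changes_after_sql(0))
```

A pipeline can store the highest _last_updated_sequence_number it has processed and pass that value on the next run to read only newer changes.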

Running this query after the previous insert, update, and delete statements returns four records, as shown in the screenshot. The deleted record is no longer present. The _last_updated_sequence_number is 3 for the update to customer_id = 2.

Upgrade an existing V2 table

You can upgrade your existing V2 tables to V3 with the following command:
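A sketch of the upgrade, which sets the format-version table property described earlier. The table identifier is hypothetical.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

UPGRADE_SQL = f"ALTER TABLE {TABLE} SET TBLPROPERTIES ('format-version' = '3')"


def upgrade_to_v3(spark):
    """Run the upgrade on a SparkSession that has an Iceberg catalog configured."""
    spark.sql(UPGRADE_SQL)


print(UPGRADE_SQL)
```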

When you upgrade a table from V2 to V3, several important operations occur atomically:

- A new metadata snapshot is created atomically, resulting in no data loss.

- Existing Parquet data files are reused without modification.

- Row-lineage fields (_row_id and _last_updated_sequence_number) are added to the table metadata.

- The next compaction operation will remove old V2 positional delete files. If new deletion vector files are generated before compaction runs, they’ll merge in the existing V2 positional deletes.

- New modifications will automatically use V3’s deletion vector files.

- The upgrade doesn’t perform a historical backfill of row-lineage change tracking records.

The upgrade process is synchronous and completes in seconds for most tables. If the upgrade fails, an error message is returned immediately, and the table remains in its V2 state.

Getting the most from Iceberg V3

In this section, we share the key things we’ve learned from customers already using these features.

Know your workload pattern

Deletion vectors work best when you’re doing a lot of writes, such as high-frequency updates, batch deletes, or CDC workloads making random non-append-only updates. If you’re writing more than you’re reading, deletion vectors will deliver immediate performance gains. To unlock these benefits, set your table to merge-on-read mode for delete, update, and merge operations.
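For an existing table, the three write modes can be set in one statement. The sketch below uses the property names given earlier in this post; the table identifier and the set_properties_sql helper are hypothetical.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

MERGE_ON_READ_PROPERTIES = {
    "write.delete.mode": "merge-on-read",
    "write.update.mode": "merge-on-read",
    "write.merge.mode": "merge-on-read",
}


def set_properties_sql(table, properties):
    """Build an ALTER TABLE statement that sets the given table properties."""
    assignments = ", ".join(
        f"'{key}' = '{value}'" for key, value in properties.items()
    )
    return f"ALTER TABLE {table} SET TBLPROPERTIES ({assignments})"


MERGE_ON_READ_SQL = set_properties_sql(TABLE, MERGE_ON_READ_PROPERTIES)
print(MERGE_ON_READ_SQL)  # pass the string to spark.sql(...) on an existing SparkSession
```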

Let AWS handle compaction

Enable automatic compaction through the Data Catalog or use S3 Tables (on by default). You get hands-free optimization without building custom maintenance jobs. Deletion vectors produce fewer delete files than positional deletes in Iceberg V2. Given a similar pattern and volume of modified records, V3 compaction should be quicker and cost less than V2.

Understand the importance of row lineage when using the V2 changelog

With the Spark changelog procedure in Iceberg V2, if a row gets inserted and then deleted between snapshots, it disappears from your change feed and you never see it. Iceberg V3 row lineage captures both operations because _last_updated_sequence_number updates on each modification. This full fidelity is critical for audit trails and regulatory compliance where you need to prove what happened to every record. Performance-wise, the V2 changelog requires scanning and merging delete files to compute changes, and that’s compute you’re paying for on every read. V3 row lineage stores metadata fields directly on each row, so filtering by _last_updated_sequence_number is a simple metadata scan.

Test before you upgrade

Iceberg V3 upgrades are atomic and fast, but test in dev first. Make sure all your query engines support Iceberg V3 before upgrading shared tables, because mixing V2 and V3 engines causes headaches. After upgrading, keep a few V2 snapshots around temporarily for time-travel queries while you validate performance.
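One way to run such a validation is to list the table’s snapshots and then read the table as of a snapshot taken before the upgrade. The sketch below assumes the hypothetical glue_catalog.demo_db namespace; the snapshot ID is a placeholder that you would copy from the snapshots metadata table.

```python
# Hypothetical identifier: replace with your own catalog, namespace, and table.
TABLE = "glue_catalog.demo_db.customer_data"

# The snapshots metadata table lists each commit with its ID and operation.
LIST_SNAPSHOTS_SQL = (
    "SELECT committed_at, snapshot_id, operation "
    f"FROM {TABLE}.snapshots ORDER BY committed_at"
)


def time_travel_sql(snapshot_id):
    """Build a query that reads the table as of a specific snapshot."""
    return f"SELECT * FROM {TABLE} VERSION AS OF {int(snapshot_id)}"


print(LIST_SNAPSHOTS_SQL)
print(time_travel_sql(1234567890123456789))  # placeholder snapshot ID
```

Comparing the time-travel result with the current table contents gives a quick check that the upgrade left existing data intact.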

Conclusion

Iceberg V3 support across AWS analytics, catalog, and storage services marks a significant advancement in data lake capabilities. By combining deletion vectors’ write optimization with row lineage’s comprehensive change tracking, you can build more efficient, auditable, and cost-effective data lakes at scale. The seamless interoperability across AWS services makes sure your data lake architecture remains flexible and future-proof.

To learn more about AWS support for Iceberg V3, refer to Using Apache Iceberg on AWS.

To learn more about building modern data lakes with Iceberg on AWS, refer to Analytics on AWS.