In this article, you'll learn how to turn free-form large language model (LLM) text into reliable, schema-validated Python objects with Pydantic.

Topics we'll cover include:

- Designing robust Pydantic models (including custom validators and nested schemas).

- Parsing "messy" LLM outputs safely and surfacing precise validation errors.

- Integrating validation with OpenAI, LangChain, and LlamaIndex, plus retry strategies.

Let’s break it down.

The Complete Guide to Using Pydantic for Validating LLM Outputs

Image by Editor

Introduction

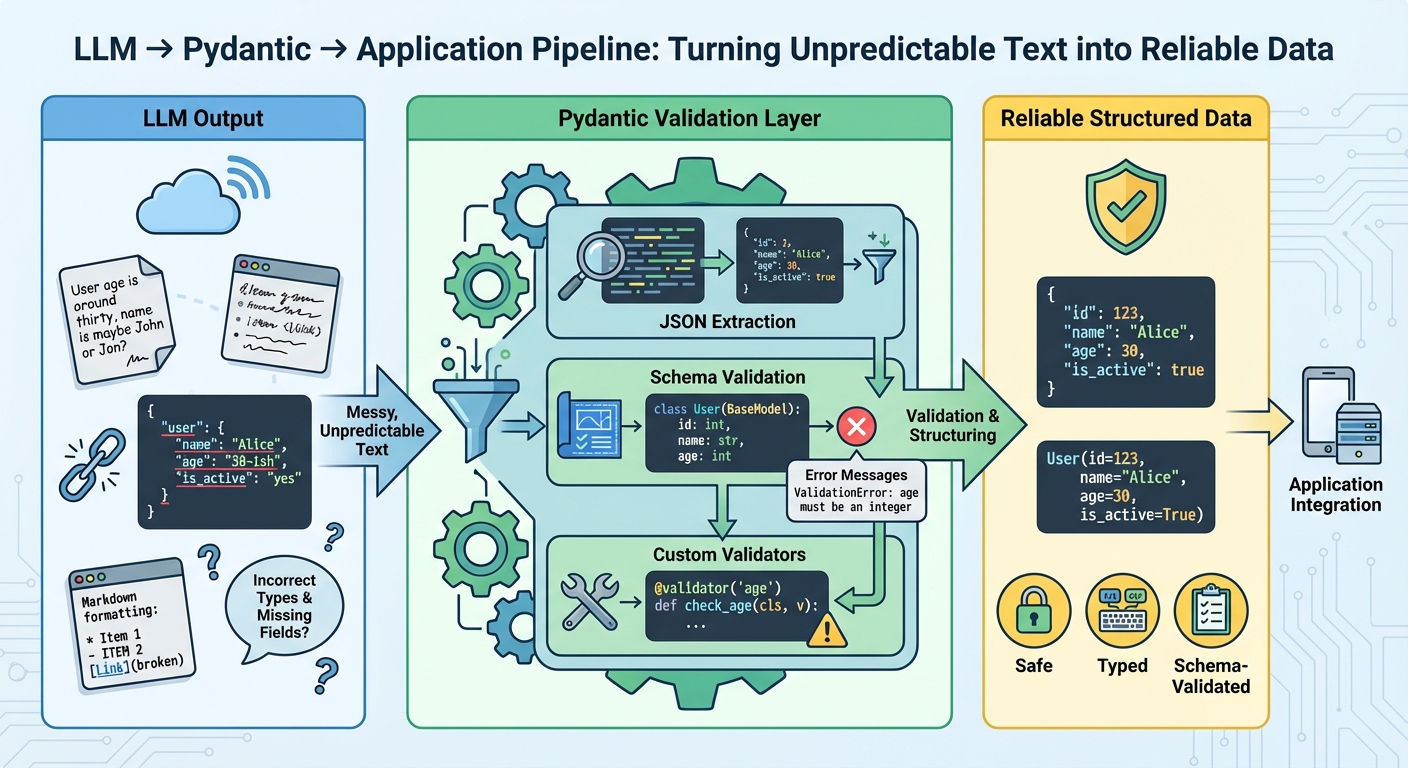

Large language models generate text, not structured data. Even when you prompt them to return structured data, they're still producing text that merely looks like valid JSON. The output may have incorrect field names, missing required fields, wrong data types, or extra text wrapped around the actual data. Without validation, these inconsistencies cause runtime errors that are difficult to debug.

Pydantic helps you validate data at runtime using Python type hints. It checks that LLM outputs match your expected schema, converts types automatically where possible, and provides clear error messages when validation fails. This gives you a reliable contract between the LLM's output and your application's requirements.

This article shows you how to use Pydantic to validate LLM outputs. You'll learn how to define validation schemas, handle malformed responses, work with nested data, integrate with LLM APIs, implement retry logic with validation feedback, and more.

🔗 You can find the code on GitHub. Before you go ahead, install Pydantic version 2.x with the optional email dependencies:

    pip install "pydantic[email]"

Getting Began

Let's start with a simple example by building a tool that extracts contact information from text. The LLM reads unstructured text and returns structured data that we validate with Pydantic:

    from pydantic import BaseModel, EmailStr, field_validator
    from typing import Optional

    class ContactInfo(BaseModel):
        name: str
        email: EmailStr
        phone: Optional[str] = None
        company: Optional[str] = None

        @field_validator('phone')
        @classmethod
        def validate_phone(cls, v):
            if v is None:
                return v
            # Keep digits only, then enforce a minimum length
            cleaned = ''.join(filter(str.isdigit, v))
            if len(cleaned) < 10:
                raise ValueError('Phone number must have at least 10 digits')
            return cleaned

All Pydantic models inherit from BaseModel, which provides automatic validation. Type hints like name: str help Pydantic validate types at runtime. The EmailStr type validates email format without needing a custom regex. Fields marked with Optional[str] = None can be missing or null. The @field_validator decorator lets you add custom validation logic, like cleaning phone numbers and checking their length.

Here's how to use the model to validate sample LLM output:

    import json

    llm_response = '''
    {
        "name": "Sarah Johnson",
        "email": "sarah.johnson@techcorp.com",
        "phone": "(555) 123-4567",
        "company": "TechCorp Industries"
    }
    '''

    data = json.loads(llm_response)
    contact = ContactInfo(**data)

    print(contact.name)
    print(contact.email)
    print(contact.model_dump())

When you create a ContactInfo instance, Pydantic validates everything automatically. If validation fails, you get a clear error message telling you exactly what went wrong.
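To make that error reporting concrete, here is a minimal sketch of how the failures could be inspected programmatically. It assumes Pydantic 2.x. The Contact model is a trimmed-down stand-in for ContactInfo (EmailStr is left out so the snippet runs without the optional email dependency), and validation_problems is a hypothetical helper name, not part of Pydantic.

```python
from typing import Optional

from pydantic import BaseModel, ValidationError, field_validator


class Contact(BaseModel):
    """Trimmed-down stand-in for ContactInfo (assumption: no EmailStr)."""
    name: str
    phone: Optional[str] = None

    @field_validator("phone")
    @classmethod
    def validate_phone(cls, v):
        if v is None:
            return v
        cleaned = "".join(filter(str.isdigit, v))
        if len(cleaned) < 10:
            raise ValueError("Phone number must have at least 10 digits")
        return cleaned


def validation_problems(data: dict) -> list[dict]:
    """Return one entry per validation failure, or an empty list if data is valid."""
    try:
        Contact(**data)
    except ValidationError as e:
        return [
            {
                "field": ".".join(str(part) for part in err["loc"]),
                "type": err["type"],
                "msg": err["msg"],
            }
            for err in e.errors()
        ]
    return []


# "name" is missing and "phone" has too few digits, so two problems are reported.
problems = validation_problems({"phone": "555-1234"})
for problem in problems:
    print(problem)
```

Each entry from ValidationError.errors() carries the field location (loc), a machine-readable type, and a human-readable msg, which is convenient for logging or for feeding back into a retry prompt.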

Parsing and Validating LLM Outputs

LLMs don't always return perfect JSON. Sometimes they add markdown formatting, explanatory text, or mess up the structure. Here's how to handle these cases:

    from pydantic import BaseModel, ValidationError, field_validator
    import json
    import re

    class ProductReview(BaseModel):
        product_name: str
        rating: int
        review_text: str
        would_recommend: bool

        @field_validator('rating')
        @classmethod
        def validate_rating(cls, v):
            if not 1 <= v <= 5:
                raise ValueError('Rating must be an integer between 1 and 5')
            return v

    def extract_json_from_llm_response(response: str) -> dict:
        """Extract JSON from LLM response that might contain extra text."""
        # Greedy match from the first "{" to the last "}" in the response
        json_match = re.search(r'\{.*\}', response, re.DOTALL)
        if json_match:
            return json.loads(json_match.group())
        raise ValueError("No JSON found in response")

    def parse_review(llm_output: str) -> ProductReview:
        """Safely parse and validate LLM output."""
        try:
            data = extract_json_from_llm_response(llm_output)
            review = ProductReview(**data)
            return review
        except json.JSONDecodeError as e:
            print(f"JSON parsing error: {e}")
            raise
        except ValidationError as e:
            print(f"Validation error: {e}")
            raise
        except Exception as e:
            print(f"Unexpected error: {e}")
            raise

This approach uses a regex to find JSON inside the response text, handling cases where the LLM adds explanatory text before or after the data. We catch different exception types separately:

- JSONDecodeError for malformed JSON,

- ValidationError for data that doesn't match the schema, and

- General exceptions for unexpected issues.

The extract_json_from_llm_response function handles text cleanup while parse_review handles validation, keeping the two concerns separated. In production, you'd want to log these errors or retry the LLM call with an improved prompt.

This example shows an LLM response with extra text that our parser handles correctly:

    messy_response = '''
    Here's the review in JSON format:

    {
        "product_name": "Wireless Headphones X100",
        "rating": 4,
        "review_text": "Great sound quality, comfortable for long use.",
        "would_recommend": true
    }

    Hope this helps!
    '''

    review = parse_review(messy_response)
    print(f"Product: {review.product_name}")
    print(f"Rating: {review.rating}/5")

The parser extracts the JSON block from the surrounding text and validates it against the ProductReview schema.
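One caveat with a greedy brace-to-brace pattern: if the surrounding text itself contains braces, the match can span more than the JSON object, and json.loads will fail. A possible alternative, sketched here with only the standard library, is to let json.JSONDecoder.raw_decode work out where the first valid object ends. The function name extract_first_json_object is hypothetical and not part of the original code.

```python
import json


def extract_first_json_object(response: str) -> dict:
    """Return the first parseable JSON object embedded in free-form text."""
    decoder = json.JSONDecoder()
    start = response.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value starting at `start` and
            # ignores whatever text follows it.
            obj, _end = decoder.raw_decode(response, start)
            return obj
        except json.JSONDecodeError:
            # This "{" did not open valid JSON; try the next candidate.
            start = response.find("{", start + 1)
    raise ValueError("No JSON object found in response")


noisy = (
    "Here you go:\n"
    '{"rating": 4, "tags": {"a": 1}}\n'
    "Note: {not json}. Hope this helps!"
)
print(extract_first_json_object(noisy))
```

Because parsing starts at each opening brace in turn, stray braces before or after the payload do not prevent extraction. The trade-off is that only the first valid object is returned.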

Working with Nested Models

Real-world data is rarely flat. Here's how to handle nested structures like a product with multiple reviews and specifications:

    from pydantic import BaseModel, Field, field_validator
    from typing import List

    class Specification(BaseModel):
        key: str
        value: str

    class Review(BaseModel):
        reviewer_name: str
        rating: int = Field(..., ge=1, le=5)
        comment: str
        verified_purchase: bool = False

    class Product(BaseModel):
        id: str
        name: str
        price: float = Field(..., gt=0)
        category: str
        specifications: List[Specification]
        reviews: List[Review]
        average_rating: float = Field(..., ge=1, le=5)

        @field_validator('average_rating')
        @classmethod
        def check_average_matches_reviews(cls, v, info):
            # info.data holds the fields validated before this one
            reviews = info.data.get('reviews', [])
            if reviews:
                calculated_avg = sum(r.rating for r in reviews) / len(reviews)
                if abs(calculated_avg - v) > 0.1:
                    raise ValueError(
                        f'Average rating {v} does not match calculated average {calculated_avg:.2f}'
                    )
            return v

The Product model contains lists of Specification and Review objects, and each nested model is validated independently. Using Field(..., ge=1, le=5) adds constraints directly in the field definition, where ge means "greater than or equal", le means "less than or equal", and gt means "greater than".

The check_average_matches_reviews validator accesses other fields using info.data, which lets you validate relationships between fields. This works because reviews is declared before average_rating: info.data only contains fields that have already been validated. When you pass nested dictionaries to Product(**data), Pydantic automatically creates the nested Specification and Review objects.

This structure ensures data integrity at every level. If a single review is malformed, you'll know exactly which one and why.
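To illustrate how a malformed nested item is pinpointed, here is a small sketch assuming Pydantic 2.x. The Review and Product classes are trimmed-down stand-ins for the models above, and bad_payload is made-up sample data.

```python
from typing import List

from pydantic import BaseModel, Field, ValidationError


class Review(BaseModel):
    reviewer_name: str
    rating: int = Field(..., ge=1, le=5)


class Product(BaseModel):
    name: str
    reviews: List[Review]


# The second review (index 1) has an out-of-range rating.
bad_payload = {
    "name": "Smart Coffee Maker",
    "reviews": [
        {"reviewer_name": "Alex M.", "rating": 5},
        {"reviewer_name": "Jordan P.", "rating": 9},
    ],
}

try:
    Product(**bad_payload)
    error_locations = []
except ValidationError as e:
    # Each loc is a path made of field names and list indexes.
    error_locations = [err["loc"] for err in e.errors()]

print(error_locations)
```

The reported location is the path ("reviews", 1, "rating"), that is, the rating field of the second review, so the offending item can be logged or quoted back to the LLM.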

This example shows how nested validation works with a complete product structure:

    llm_response = {
        "id": "PROD-2024-001",
        "name": "Smart Coffee Maker",
        "price": 129.99,
        "category": "Kitchen Appliances",
        "specifications": [
            {"key": "Capacity", "value": "12 cups"},
            {"key": "Power", "value": "1000W"},
            {"key": "Color", "value": "Stainless Steel"}
        ],
        "reviews": [
            {
                "reviewer_name": "Alex M.",
                "rating": 5,
                "comment": "Makes excellent coffee every time!",
                "verified_purchase": True
            },
            {
                "reviewer_name": "Jordan P.",
                "rating": 4,
                "comment": "Good but a bit noisy",
                "verified_purchase": True
            }
        ],
        "average_rating": 4.5
    }

    product = Product(**llm_response)
    print(f"{product.name}: ${product.price}")
    print(f"Average Rating: {product.average_rating}")
    print(f"Number of reviews: {len(product.reviews)}")

Pydantic validates the entire nested structure in one call, checking that specifications and reviews are properly formed and that the average rating matches the individual review scores.
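A field-level validator that reads sibling fields depends on the order in which those fields are declared. One alternative, sketched below under the assumption of Pydantic 2.x, is a model-level validator with mode="after", which runs once every field has been validated. RatedProduct and its reduced field set are hypothetical and chosen only to keep the example short.

```python
from typing import List

from pydantic import BaseModel, Field, ValidationError, model_validator


class Review(BaseModel):
    rating: int = Field(..., ge=1, le=5)


class RatedProduct(BaseModel):
    # average_rating is declared first on purpose: field order no longer matters.
    average_rating: float = Field(..., ge=1, le=5)
    reviews: List[Review]

    @model_validator(mode="after")
    def check_average_matches_reviews(self):
        if self.reviews:
            calculated = sum(r.rating for r in self.reviews) / len(self.reviews)
            if abs(calculated - self.average_rating) > 0.1:
                raise ValueError(
                    f"Average rating {self.average_rating} does not match "
                    f"calculated average {calculated:.2f}"
                )
        return self


consistent = RatedProduct(average_rating=4.5, reviews=[{"rating": 5}, {"rating": 4}])

try:
    RatedProduct(average_rating=3.0, reviews=[{"rating": 5}, {"rating": 4}])
    mismatch_rejected = False
except ValidationError:
    mismatch_rejected = True

print(consistent.average_rating, mismatch_rejected)
```

Whether to prefer this over a field validator is a design choice: the model-level check sees the whole object, while the field-level check attaches the error to a specific field.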

Using Pydantic with LLM APIs and Frameworks

So far, we've learned that we need a reliable way to convert free-form text into structured, validated data. Now let's see how to use Pydantic validation with OpenAI's API, as well as with frameworks like LangChain and LlamaIndex. Be sure to install the required SDKs.

Using Pydantic with OpenAI API

Here's how to extract structured data from unstructured text using OpenAI's API with Pydantic validation:

    from openai import OpenAI
    from pydantic import BaseModel
    from typing import List
    import json
    import os

    client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

    class BookSummary(BaseModel):
        title: str
        author: str
        genre: str
        key_themes: List[str]
        main_characters: List[str]
        brief_summary: str
        recommended_for: List[str]

    def extract_book_info(text: str) -> BookSummary:
        """Extract structured book information from unstructured text."""

        # Doubled braces are literal braces inside an f-string
        prompt = f"""
        Extract book information from the following text and return it as JSON.

        Required format:
        {{
            "title": "book title",
            "author": "author name",
            "genre": "genre",
            "key_themes": ["theme1", "theme2"],
            "main_characters": ["character1", "character2"],
            "brief_summary": "summary in 2-3 sentences",
            "recommended_for": ["audience1", "audience2"]
        }}

        Text: {text}

        Return ONLY the JSON, no additional text.
        """

        response = client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[
                {"role": "system", "content": "You are a helpful assistant that extracts structured data."},
                {"role": "user", "content": prompt}
            ],
            temperature=0
        )

        llm_output = response.choices[0].message.content

        data = json.loads(llm_output)
        return BookSummary(**data)

The prompt includes the exact JSON structure we expect, guiding the LLM to return data matching our Pydantic model. Setting temperature=0 makes the LLM more deterministic and less creative, which is what we want for structured data extraction. The system message primes the model to be a data extractor rather than a conversational assistant. Even with careful prompting, we still validate with Pydantic because you should never trust LLM output without verification.

This example extracts structured information from a book description:

    book_text = """
    'The Midnight Library' by Matt Haig is a contemporary fiction novel that explores
    themes of regret, mental health, and the infinite possibilities of life. The story
    follows Nora Seed, a woman who finds herself in a library between life and death,
    where each book represents a different life she could have lived. Through her journey,
    she encounters various versions of herself and must decide what truly makes a life
    worth living. The book resonates with readers dealing with depression, anxiety, or
    life transitions.
    """

    try:
        book_info = extract_book_info(book_text)
        print(f"Title: {book_info.title}")
        print(f"Author: {book_info.author}")
        print(f"Themes: {', '.join(book_info.key_themes)}")
    except Exception as e:
        print(f"Error extracting book info: {e}")

The function sends the unstructured text to the LLM with clear formatting instructions, then validates the response against the BookSummary schema.
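A hand-written format block can drift out of sync with the model. One way to avoid that, sketched here under the assumption of Pydantic 2.x, is to generate the schema text from the model with model_json_schema() and to parse the raw reply with model_validate_json(). The reduced BookSummary fields, the build_extraction_prompt helper, and the canned fake_llm_output string are illustrative stand-ins, and no API call is made.

```python
import json
from typing import List

from pydantic import BaseModel


class BookSummary(BaseModel):
    """Reduced version of the article's model (assumption: fewer fields)."""
    title: str
    author: str
    key_themes: List[str]


def build_extraction_prompt(text: str) -> str:
    """Embed the model's JSON Schema in the prompt instead of hand-writing it."""
    schema = json.dumps(BookSummary.model_json_schema(), indent=2)
    return (
        "Extract book information from the following text and return JSON "
        "that conforms to this JSON Schema:\n"
        f"{schema}\n\n"
        f"Text: {text}\n\n"
        "Return ONLY the JSON, no additional text."
    )


prompt = build_extraction_prompt("'The Midnight Library' by Matt Haig ...")

# Stand-in for response.choices[0].message.content from a real API call.
fake_llm_output = (
    '{"title": "The Midnight Library", "author": "Matt Haig", '
    '"key_themes": ["regret", "mental health"]}'
)

# Parses the JSON string and validates it against the schema in one step.
book = BookSummary.model_validate_json(fake_llm_output)
print(book.title, book.key_themes)
```

Adding or renaming a field on the model then updates the prompt automatically, at the cost of a more verbose prompt than the hand-written example.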

Using LangChain with Pydantic

LangChain provides built-in support for structured output extraction with Pydantic models. There are two main approaches that handle the complexity of prompt engineering and parsing for you.

The first method uses PydanticOutputParser, which works with any LLM by using prompt engineering to guide the model's output format. The parser automatically generates detailed format instructions from your Pydantic model:

    from langchain_openai import ChatOpenAI
    # These classes live in langchain_core; older code imported them from
    # langchain.output_parsers and langchain.prompts.
    from langchain_core.output_parsers import PydanticOutputParser
    from langchain_core.prompts import PromptTemplate
    from pydantic import BaseModel, Field
    from typing import List, Optional

    class Restaurant(BaseModel):
        """Information about a restaurant."""
        name: str = Field(description="The name of the restaurant")
        cuisine: str = Field(description="Type of cuisine served")
        price_range: str = Field(description="Price range: $, $$, $$$, or $$$$")
        rating: Optional[float] = Field(default=None, description="Rating out of 5.0")
        specialties: List[str] = Field(description="Signature dishes or specialties")

    def extract_restaurant_with_parser(text: str) -> Restaurant:
        """Extract restaurant info using LangChain's PydanticOutputParser."""

        parser = PydanticOutputParser(pydantic_object=Restaurant)

        prompt = PromptTemplate(
            template="Extract restaurant information from the following text.\n{format_instructions}\n{text}\n",
            input_variables=["text"],
            partial_variables={"format_instructions": parser.get_format_instructions()}
        )

        llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

        # Pipe the prompt into the model, then the model output into the parser
        chain = prompt | llm | parser

        result = chain.invoke({"text": text})
        return result

The generated format instructions include field descriptions and type information taken from the Pydantic model. This approach works with any LLM that can follow instructions and doesn't require function calling support. The chain syntax makes it easy to compose complex workflows.

The second method is to use the native function calling capabilities of modern LLMs through the with_structured_output() method:

    def extract_restaurant_structured(text: str) -> Restaurant:
        """Extract restaurant info using with_structured_output."""

        llm = ChatOpenAI(model="gpt-4o-mini", temperature=0)

        structured_llm = llm.with_structured_output(Restaurant)

        prompt = PromptTemplate.from_template(
            "Extract restaurant information from the following text:\n\n{text}"
        )

        chain = prompt | structured_llm
        result = chain.invoke({"text": text})
        return result

This method produces cleaner, more concise code and uses the model's native function calling capabilities for more reliable extraction. You don't need to manually create parsers or format instructions, and it's generally more accurate than prompt-based approaches.

Here's an example of how to use these functions:

    restaurant_text = """
    Mama's Italian Kitchen is a cozy family-owned restaurant serving authentic
    Italian cuisine. Rated 4.5 stars, it's known for its homemade pasta and
    wood-fired pizzas. Prices are moderate ($$), and their signature dishes
    include lasagna bolognese and tiramisu.
    """

    try:
        restaurant_info = extract_restaurant_structured(restaurant_text)
        print(f"Restaurant: {restaurant_info.name}")
        print(f"Cuisine: {restaurant_info.cuisine}")
        print(f"Specialties: {', '.join(restaurant_info.specialties)}")
    except Exception as e:
        print(f"Error: {e}")

Using LlamaIndex with Pydantic

LlamaIndex provides several approaches for structured extraction, with particularly strong integration for document-based workflows. It's especially useful when you need to extract structured data from large document collections or build RAG systems.

The most straightforward approach in LlamaIndex is using LLMTextCompletionProgram, which requires minimal boilerplate code:

    from llama_index.core.program import LLMTextCompletionProgram
    from pydantic import BaseModel, Field
    from typing import List, Optional

    class Product(BaseModel):
        """Information about a product."""
        name: str = Field(description="Product name")
        brand: str = Field(description="Brand or manufacturer")
        category: str = Field(description="Product category")
        price: float = Field(description="Price in USD")
        features: List[str] = Field(description="Key features")
        rating: Optional[float] = Field(default=None, description="Customer rating out of 5")

    def extract_product_simple(text: str) -> Product:
        """Extract product info using LlamaIndex's simple approach."""

        prompt_template_str = """
        Extract product information from the following text and structure it properly:

        {text}
        """

        program = LLMTextCompletionProgram.from_defaults(
            output_cls=Product,
            prompt_template_str=prompt_template_str,
            verbose=False
        )

        result = program(text=text)
        return result

The output_cls parameter automatically handles Pydantic validation. This works with any LLM through prompt engineering and is good for quick prototyping and simple extraction tasks.

For models that support function calling, you can use FunctionCallingProgram. And when you need explicit control over parsing behavior, you can use the PydanticOutputParser approach:

    from llama_index.core.program import LLMTextCompletionProgram
    from llama_index.core.output_parsers import PydanticOutputParser
    from llama_index.llms.openai import OpenAI

    def extract_product_with_parser(text: str) -> Product:
        """Extract product info using explicit parser."""

        prompt_template_str = """
        Extract product information from the following text:

        {text}

        {format_instructions}
        """

        llm = OpenAI(model="gpt-4o-mini", temperature=0)

        program = LLMTextCompletionProgram.from_defaults(
            output_parser=PydanticOutputParser(output_cls=Product),
            prompt_template_str=prompt_template_str,
            llm=llm,
            verbose=False
        )

        result = program(text=text)
        return result

Here's how you'd extract product information in practice:

    product_text = """
    The Sony WH-1000XM5 wireless headphones feature industry-leading noise cancellation,
    exceptional sound quality, and up to 30 hours of battery life. Priced at $399.99,
    these premium headphones include Adaptive Sound Control, multipoint connection, and
    speak-to-chat technology. Customers rate them 4.7 out of 5 stars.
    """

    try:
        product_info = extract_product_with_parser(product_text)
        print(f"Product: {product_info.name}")
        print(f"Brand: {product_info.brand}")
        print(f"Price: ${product_info.price}")
        print(f"Features: {', '.join(product_info.features)}")
    except Exception as e:
        print(f"Error: {e}")

Use explicit parsing when you need custom parsing logic, are working with models that don't support function calling, or are debugging extraction issues.

Retrying LLM Calls with Better Prompts

When the LLM returns invalid data, you can retry with an improved prompt that includes the error message from the failed validation attempt:

    from pydantic import BaseModel, ValidationError
    from typing import Optional
    import json

    class EventExtraction(BaseModel):
        event_name: str
        date: str
        location: str
        attendees: int
        event_type: str

    def extract_with_retry(llm_call_function, max_retries: int = 3) -> Optional[EventExtraction]:
        """Try to extract valid data, retrying with error feedback if validation fails."""

        last_error = None

        for attempt in range(max_retries):
            try:
                # Pass the previous error (None on the first attempt) to the caller's function
                response = llm_call_function(last_error)
                data = json.loads(response)
                return EventExtraction(**data)

            except ValidationError as e:
                last_error = str(e)
                print(f"Attempt {attempt + 1} failed: {last_error}")

                if attempt == max_retries - 1:
                    print("Max retries reached, giving up")
                    return None

            except json.JSONDecodeError:
                print(f"Attempt {attempt + 1}: Invalid JSON")
                last_error = "The response was not valid JSON. Please return only valid JSON."

                if attempt == max_retries - 1:
                    return None

        return None

Each retry includes the previous error message, helping the LLM understand what went wrong. After max_retries, the function returns None instead of crashing, allowing the calling code to handle the failure gracefully. Printing each attempt's error makes it easy to debug why extraction is failing.

In a real application, your llm_call_function would construct a new prompt including the Pydantic error message, like "Previous attempt failed with error: {error}. Please fix and try again."
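As a rough sketch of what such a function could look like, the snippet below wraps any prompt-sending callable so that it accepts the previous error and appends it to the prompt. It uses only the standard library. make_llm_call, send_prompt, and fake_send are hypothetical names, and fake_send stands in for a real API call.

```python
from typing import Callable, Optional


def make_llm_call(
    base_prompt: str, send_prompt: Callable[[str], str]
) -> Callable[[Optional[str]], str]:
    """Build a callback that takes the previous error and returns the LLM's reply."""

    def llm_call(previous_error: Optional[str] = None) -> str:
        prompt = base_prompt
        if previous_error is not None:
            # Feed the validation error back so the model can correct itself.
            prompt += (
                f"\n\nPrevious attempt failed with error: {previous_error}\n"
                "Please fix and try again. Return ONLY valid JSON."
            )
        return send_prompt(prompt)

    return llm_call


# Stand-in for a real API call: records each prompt and returns a canned reply.
sent_prompts = []


def fake_send(prompt: str) -> str:
    sent_prompts.append(prompt)
    return '{"event_name": "Tech Conference 2024"}'


llm_call = make_llm_call("Extract the event details as JSON.", fake_send)
llm_call(None)                          # first attempt: base prompt only
llm_call("attendees: Field required")   # retry: error appended to the prompt
print(sent_prompts[1])
```

The returned llm_call takes a single optional error argument, which is the shape a retry loop like the one above expects from its callback.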

This example shows the retry pattern with a mock LLM function that progressively improves:

    def mock_llm_call(previous_error: Optional[str] = None) -> str:
        """Simulate an LLM that improves based on error feedback."""

        if previous_error is None:
            # First attempt: required fields are missing
            return '{"event_name": "Tech Conference 2024", "date": "2024-06-15", "location": "San Francisco"}'
        elif "field required" in previous_error.lower():
            # Second attempt: all fields present, but attendees has the wrong type
            return '{"event_name": "Tech Conference 2024", "date": "2024-06-15", "location": "San Francisco", "attendees": "about 500", "event_type": "Conference"}'
        else:
            # Third attempt: everything is valid
            return '{"event_name": "Tech Conference 2024", "date": "2024-06-15", "location": "San Francisco", "attendees": 500, "event_type": "Conference"}'

    result = extract_with_retry(mock_llm_call)

    if result:
        print(f"\nSuccess! Extracted event: {result.event_name}")
        print(f"Expected attendees: {result.attendees}")
    else:
        print("Failed to extract valid data")

The first attempt omits the required attendees and event_type fields, the second attempt includes them but gives attendees the wrong type, and the third attempt gets everything correct. Note that the mock branches on the "Field required" message rather than on the field name, because the type error from the second attempt also mentions attendees. The retry mechanism handles these progressive improvements.
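The "wrong type" case is worth a closer look, because Pydantic's default (lax) mode converts values where it safely can. The sketch below assumes Pydantic 2.x, and Attendance is a hypothetical one-field model. It contrasts a numeric string that is coerced, a free-text string that is rejected, and the same numeric string under strict validation.

```python
from pydantic import BaseModel, ValidationError


class Attendance(BaseModel):
    attendees: int


# Lax mode (the default): a numeric string is converted to an int.
coerced = Attendance(attendees="500")

# Free text cannot be parsed as an integer, so validation fails.
try:
    Attendance(attendees="about 500")
    error_type = None
except ValidationError as e:
    error_type = e.errors()[0]["type"]

# Strict mode disables coercion, so even "500" is rejected.
try:
    Attendance.model_validate({"attendees": "500"}, strict=True)
    strict_rejected = False
except ValidationError:
    strict_rejected = True

print(coerced.attendees, error_type, strict_rejected)
```

Lax mode is usually convenient for LLM output, since models often quote numbers. Strict mode is an option when silent conversion would hide a prompt problem.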

Conclusion

Pydantic helps you turn unreliable LLM outputs into validated, type-safe data structures. By combining clear schemas with robust error handling, you can build AI-powered applications that are both powerful and reliable.

Here are the key takeaways:

- Define clear schemas that match your needs

- Validate everything and handle errors gracefully with retries and fallbacks

- Use type hints and validators to enforce data integrity

- Include schemas in your prompts to guide the LLM

Start with simple models and add validation as you find edge cases in your LLM outputs. Happy exploring!