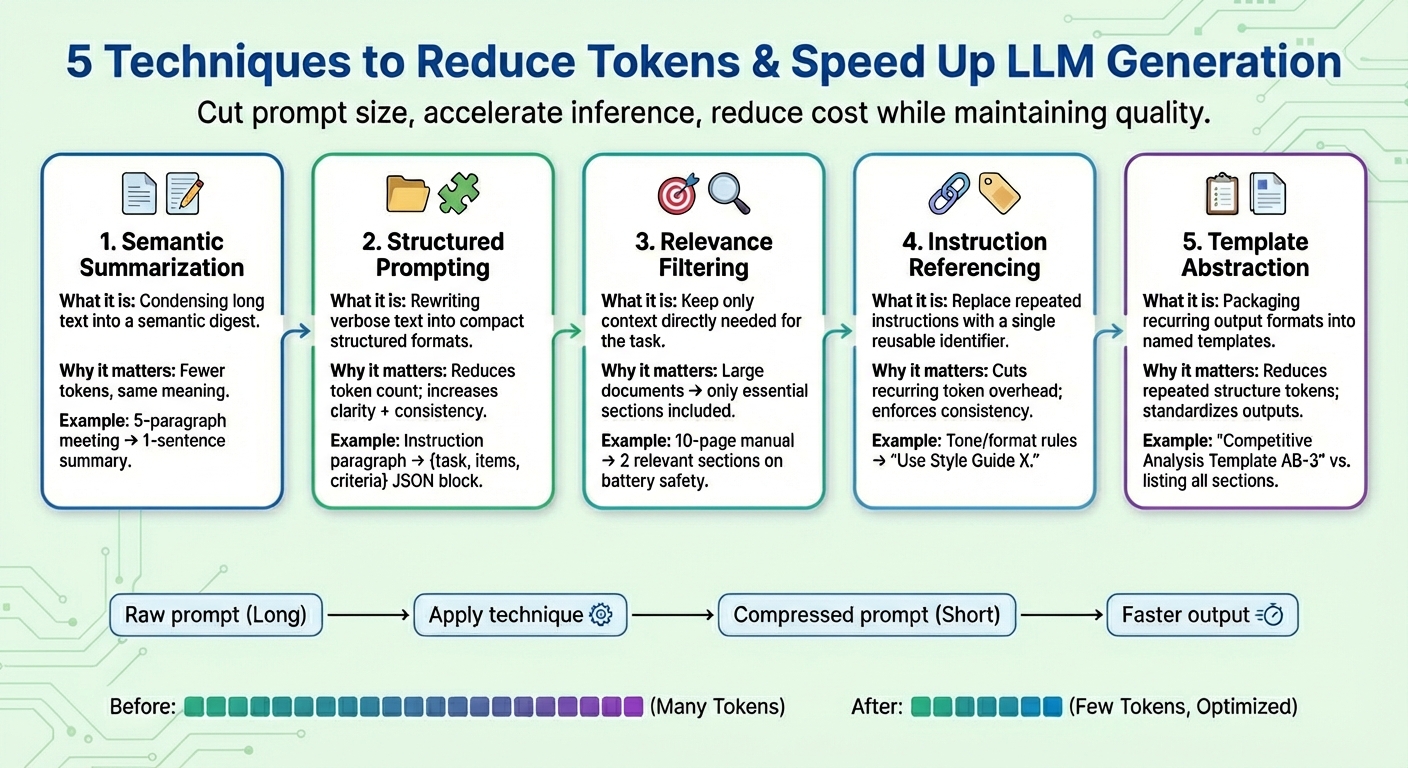

In this article, you’ll learn five practical prompt compression techniques that reduce token counts and speed up large language model (LLM) generation without sacrificing task quality.

Topics we will cover include:

- What semantic summarization is and when to use it

- How structured prompting, relevance filtering, and instruction referencing cut token counts

- Where template abstraction fits and how to apply it consistently

Let’s explore these techniques.

Prompt Compression for LLM Generation Optimization and Cost Reduction

Image by Editor

Introduction

Large language models (LLMs) are primarily trained to generate text responses to user queries or prompts. Under the hood, this involves not only generating language by predicting each next token in the output sequence, but also a deep understanding of the linguistic patterns in the user’s input text.

Prompt compression techniques are a research topic that has recently gained attention across the LLM landscape, driven by the need to alleviate slow, time-consuming inference caused by larger user prompts and context windows. These techniques are designed to decrease token usage, accelerate token generation, and reduce overall computation costs while preserving the quality of the task outcome as much as possible.

This article presents and describes five commonly used prompt compression techniques to speed up LLM generation in challenging scenarios.

1. Semantic Summarization

Semantic summarization is a technique that condenses long or repetitive content into a more succinct version while retaining its essential semantics. Rather than feeding the entire conversation or set of documents to the model on every turn, only a digest containing the essentials is passed. The result: the model has fewer input tokens to “read,” which speeds up next-token generation and reduces cost without losing key information.

Suppose a long prompt context consists of meeting minutes, like “In yesterday’s meeting, Iván reviewed the quarterly numbers…”, running to five paragraphs. After semantic summarization, the shortened context might look like “Summary: Iván reviewed quarterly numbers, highlighted a sales dip in Q4, and proposed cost-saving measures.”
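The idea of passing a digest instead of the full text can be sketched in a few lines of Python. Note the swap: a real semantic summarizer would normally be a summarization model, whereas this sketch uses a crude frequency-based extractive scorer as a stand-in so that it runs without any external service. The function names, the stopword list, and the sample minutes are all hypothetical.

```python
import re
from collections import Counter

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {"the", "a", "an", "and", "of", "in", "to", "was", "is",
             "that", "it", "for", "on", "with", "as", "at", "by", "be"}

def content_words(text):
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"\w+", text.lower()) if w not in STOPWORDS]

def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def extractive_summary(text, max_sentences=2):
    """Keep the highest-scoring sentences, in their original order."""
    sentences = split_sentences(text)
    freq = Counter(content_words(text))

    def score(sentence):
        # Average document-level frequency of the sentence's content words.
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:max_sentences])
    return " ".join(sentences[i] for i in keep)

# Made-up meeting minutes, for illustration only.
minutes = (
    "Iván reviewed the quarterly numbers in detail. "
    "The quarterly numbers showed a sales dip in Q4. "
    "Lunch was served at noon. "
    "Iván proposed cost-saving measures to offset the sales dip."
)

summary = extractive_summary(minutes, max_sentences=2)
prompt = f"Summary: {summary}\n\nQuestion: What did Iván propose?"
print(prompt)
```

An extractive scorer like this can easily drop a sentence that matters, so in practice the summary quality should be checked against the task before relying on it.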

2. Structured (JSON) Prompting

This technique focuses on expressing long, free-flowing pieces of text in compact, semi-structured formats like JSON (i.e., key-value pairs) or a list of bullet points. The target formats used for structured prompting typically require fewer tokens. They also help the model interpret user instructions more reliably, which improves consistency and reduces ambiguity while shortening prompts along the way.

Structured prompting algorithms may transform raw prompts with instructions like “Please provide a detailed comparison between Product X and Product Y, focusing on price, product features, and customer ratings” into a structured form like: {task: “compare”, items: [“Product X”, “Product Y”], criteria: [“price”, “features”, “ratings”]}
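As a minimal sketch, the transformation above can be reproduced with Python’s standard `json` module. The variable names are illustrative, and the mapping from the verbose request to the dictionary is written by hand here; an automated system would need its own extraction step.

```python
import json

verbose_prompt = (
    "Please provide a detailed comparison between Product X and Product Y, "
    "focusing on price, product features, and customer ratings."
)

# Hand-written structured equivalent of the request above.
request = {
    "task": "compare",
    "items": ["Product X", "Product Y"],
    "criteria": ["price", "features", "ratings"],
}

# Compact separators drop the spaces json.dumps adds after ',' and ':' by default.
structured_prompt = json.dumps(request, separators=(",", ":"))

print(structured_prompt)
print(len(verbose_prompt), "characters ->", len(structured_prompt), "characters")
```

Character count is only a rough proxy. Quotes and brackets also consume tokens, so the actual saving depends on the model’s tokenizer and is worth measuring rather than assuming.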

3. Relevance Filtering

Relevance filtering applies the principle of “focusing on what really matters”: it measures the relevance of each part of the text and includes in the final prompt only the pieces of context that are truly relevant to the task at hand. Rather than dumping in entire pieces of information, such as whole documents that are part of the context, only the small subsets most related to the target request are kept. This is another way to drastically reduce prompt size and help the model stay focused and predict more accurately (remember, LLM token generation is, in essence, a next-word prediction task repeated many times).

Take, for example, an entire 10-page product manual for a cellphone added as an attachment (prompt context). After applying relevance filtering, only a couple of short relevant sections about “battery life” and “charging process” are retained, because the user asked about safety implications when charging the device.

4. Instruction Referencing

Many prompts repeat the same kinds of instructions over and over, e.g., “adopt this tone,” “reply in this format,” or “use concise sentences,” to name a few. Instruction referencing creates a reference for each common instruction (consisting of a set of tokens), registers each one only once, and reuses it as a single short identifier. Whenever future prompts mention a registered “common request,” that identifier is used. Besides shortening prompts, this strategy also helps maintain consistent task behavior over time.

A combined set of instructions like “Write in a friendly tone. Avoid jargon. Keep sentences succinct. Provide examples.” can be simplified to “Use Style Guide X.” and then reused whenever the equivalent instructions are specified again.
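A minimal sketch of the registry idea follows. It assumes the definitions are sent to the model once, for example in a system message, and that later prompts only mention the identifier. The registry contents and both helper functions are hypothetical.

```python
# Hypothetical registry: each identifier maps to the full instructions it replaces.
STYLE_GUIDES = {
    "Style Guide X": (
        "Write in a friendly tone. Avoid jargon. "
        "Keep sentences succinct. Provide examples."
    ),
}

def build_system_prompt(registry):
    """Spell out every registered instruction once, up front."""
    lines = ["Registered instructions:"]
    lines += [f"{name}: {text}" for name, text in registry.items()]
    return "\n".join(lines)

def build_user_prompt(task, guide_name, registry):
    """Reference a registered instruction by name instead of repeating it."""
    if guide_name not in registry:
        raise KeyError(f"Unknown instruction reference: {guide_name}")
    return f"{task} Use {guide_name}."

system_prompt = build_system_prompt(STYLE_GUIDES)
user_prompt = build_user_prompt("Summarize the report.", "Style Guide X", STYLE_GUIDES)

print(system_prompt)
print(user_prompt)
```

The model can only honor a reference whose definition it has seen, so the saving comes from not repeating the full instructions in every prompt. How much is saved per request depends on how the conversation history or any caching is handled.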

5. Template Abstraction

Some patterns or instructions often appear across prompts, for instance report structures, evaluation formats, or step-by-step procedures. Template abstraction applies a similar principle to instruction referencing, but it focuses on what shape and format the generated outputs should have, encapsulating these common patterns under a template name. The prompt then references the template, and the LLM does the job of filling in the rest of the information. Not only does this keep prompts clearer, it also dramatically reduces the number of repeated tokens.

After template abstraction, a prompt may be turned into something like “Produce a Competitive Analysis using Template AB-3.” where AB-3 is a list of requested content sections for the analysis, each one clearly defined. Something like:

Produce a competitive analysis with 4 sections:

- Market Overview (2–3 paragraphs summarizing industry trends)

- Competitor Breakdown (table comparing at least 5 competitors)

- Strengths and Weaknesses (bullet points)

- Strategic Recommendations (3 actionable steps)
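One way to picture the relationship between the short prompt and the template it names is a small lookup table. This is a sketch under assumptions: the `TEMPLATES` registry and both helper functions are hypothetical, and the template definition is presumed to have been made available to the model beforehand.

```python
# Hypothetical template registry; "AB-3" and its contents mirror the example above.
TEMPLATES = {
    "AB-3": {
        "deliverable": "competitive analysis",
        "sections": [
            ("Market Overview", "2-3 paragraphs summarizing industry trends"),
            ("Competitor Breakdown", "table comparing at least 5 competitors"),
            ("Strengths and Weaknesses", "bullet points"),
            ("Strategic Recommendations", "3 actionable steps"),
        ],
    }
}

def short_prompt(template_id):
    """The compressed prompt: names the template instead of spelling it out."""
    deliverable = TEMPLATES[template_id]["deliverable"]
    return f"Produce a {deliverable} using Template {template_id}."

def expand_template(template_id):
    """The full instructions the template name stands for."""
    template = TEMPLATES[template_id]
    sections = template["sections"]
    lines = [f"Produce a {template['deliverable']} with {len(sections)} sections:"]
    lines += [f"- {name} ({detail})" for name, detail in sections]
    return "\n".join(lines)

compressed = short_prompt("AB-3")
expanded = expand_template("AB-3")

print(compressed)
print(expanded)
```

Keeping the definition in one place also means a change to the template propagates to every prompt that references it.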

Wrapping Up

This article presented and described five commonly used ways to speed up LLM generation in challenging scenarios by compressing user prompts, often focusing on the context part of the prompt, which is more often than not the root cause of the “overloaded prompts” that slow LLMs down.