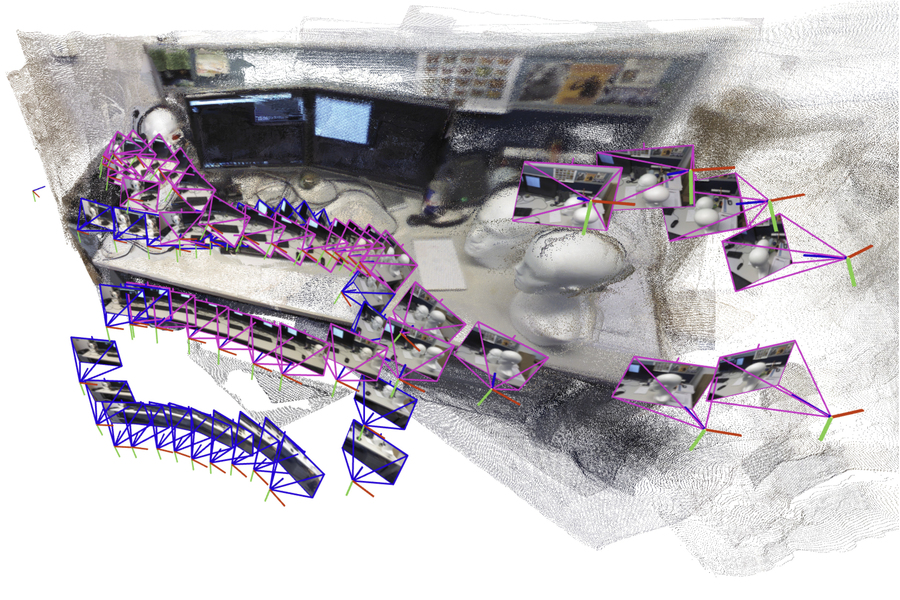

The artificial intelligence-driven system incrementally creates and aligns smaller submaps of the scene, which it stitches together to reconstruct a full 3D map, such as of an office cubicle, while estimating the robot’s position in real time. Image courtesy of the researchers.

By Adam Zewe

A robot searching for workers trapped in a partially collapsed mine shaft must rapidly generate a map of the scene and identify its location within that scene as it navigates the treacherous terrain.

Researchers have recently started building powerful machine-learning models to perform this complex task using only images from the robot’s onboard cameras, but even the best models can only process a few images at a time. In a real-world disaster where every second counts, a search-and-rescue robot would need to quickly traverse large areas and process thousands of images to complete its mission.

To overcome this problem, MIT researchers drew on ideas from both recent artificial intelligence vision models and classical computer vision to develop a new system that can process an arbitrary number of images. Their system accurately generates 3D maps of complicated scenes like a crowded office corridor in a matter of seconds.

The AI-driven system incrementally creates and aligns smaller submaps of the scene, which it stitches together to reconstruct a full 3D map while estimating the robot’s position in real time.

Unlike many other approaches, their technique doesn’t require calibrated cameras or an expert to tune a complex system implementation. The simpler nature of their approach, coupled with the speed and quality of the 3D reconstructions, would make it easier to scale up for real-world applications.

Beyond helping search-and-rescue robots navigate, this method could be used to make extended reality applications for wearable devices like VR headsets or enable industrial robots to quickly find and move goods inside a warehouse.

“For robots to accomplish increasingly complex tasks, they need much more complex map representations of the world around them. But at the same time, we don’t want to make it harder to implement these maps in practice. We’ve shown that it’s possible to generate an accurate 3D reconstruction in a matter of seconds with a tool that works out of the box,” says Dominic Maggio, an MIT graduate student and lead author of a paper on this method.

Maggio is joined on the paper by postdoc Hyungtae Lim and senior author Luca Carlone, associate professor in MIT’s Department of Aeronautics and Astronautics (AeroAstro), principal investigator in the Laboratory for Information and Decision Systems (LIDS), and director of the MIT SPARK Laboratory. The research will be presented at the Conference on Neural Information Processing Systems.

Mapping out a solution

For years, researchers have been grappling with an essential element of robotic navigation called simultaneous localization and mapping (SLAM). In SLAM, a robot recreates a map of its environment while orienting itself within the space.

Traditional optimization methods for this task tend to fail in challenging scenes, or they require the robot’s onboard cameras to be calibrated beforehand. To avoid these pitfalls, researchers train machine-learning models to learn this task from data.

While they’re simpler to implement, even the best models can only process about 60 camera images at a time, making them infeasible for applications where a robot needs to move quickly through a varied environment while processing thousands of images.

To solve this problem, the MIT researchers designed a system that generates smaller submaps of the scene instead of the entire map. Their method “glues” these submaps together into one overall 3D reconstruction. The model is still only processing a few images at a time, but the system can recreate larger scenes much faster by stitching smaller submaps together.
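As a rough illustration of the submap idea, the sketch below splits a long image sequence into windows small enough for a model that handles a limited number of images at once. It is not the researchers’ implementation: the function name `split_into_submaps`, the default window of 60 frames (borrowed from the limit quoted above), and the notion that neighboring submaps share a few overlapping frames are all assumptions made for the example.

```python
def split_into_submaps(num_frames, window=60, overlap=1):
    """Group frame indices 0..num_frames-1 into consecutive submaps.

    Hypothetical helper: `window` is the most frames the model is assumed
    to handle at once, and `overlap` is the assumed number of frames that
    neighboring submaps share so they can later be aligned.
    """
    if window <= overlap:
        raise ValueError("window must be larger than overlap")
    step = window - overlap
    submaps = []
    start = 0
    while start < num_frames:
        end = min(start + window, num_frames)
        submaps.append(list(range(start, end)))
        if end == num_frames:
            break  # the last frame is covered; stop here
        start += step
    return submaps


# Example: 150 frames, 60 per submap, 10 shared between neighbors.
example_submaps = split_into_submaps(150, window=60, overlap=10)
```

Each submap can then be reconstructed on its own, and the shared frames give the alignment step common points to work with.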

“This seemed like a very simple solution, but when I first tried it, I was surprised that it didn’t work that well,” Maggio says.

Searching for an explanation, he dug into computer vision research papers from the 1980s and 1990s. Through this analysis, Maggio realized that errors in the way the machine-learning models process images made aligning submaps a more complex problem.

Traditional methods align submaps by applying rotations and translations until they line up. But these new models can introduce some ambiguity into the submaps, which makes them harder to align. For instance, a 3D submap of one side of a room might have walls that are slightly bent or stretched. Simply rotating and translating these deformed submaps to align them doesn’t work.
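A small numerical example can make this failure concrete. The sketch below works in 2D for brevity and uses made-up room corners; it fits the best rotation and translation between two point sets in the least-squares sense, first for a copy that was only moved and then for a copy that was also stretched by 10 percent along one axis. All names and values here are illustrative assumptions, not taken from the researchers’ system.

```python
import math


def fit_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping src onto dst."""
    n = len(src)
    sx = sum(x for x, _ in src) / n
    sy = sum(y for _, y in src) / n
    dx = sum(u for u, _ in dst) / n
    dy = sum(v for _, v in dst) / n
    dot = cross = 0.0
    for (x, y), (u, v) in zip(src, dst):
        x, y, u, v = x - sx, y - sy, u - dx, v - dy
        dot += x * u + y * v
        cross += x * v - y * u
    theta = math.atan2(cross, dot)  # optimal rotation angle in 2D
    c, s = math.cos(theta), math.sin(theta)
    t = (dx - (c * sx - s * sy), dy - (s * sx + c * sy))
    return theta, t


def transform_rigid_2d(theta, t, pts):
    """Rotate pts by theta, then translate by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in pts]


def rms_error(a, b):
    """Root-mean-square distance between corresponding points."""
    total = sum((x - u) ** 2 + (y - v) ** 2 for (x, y), (u, v) in zip(a, b))
    return math.sqrt(total / len(a))


# Hypothetical room corners, in meters.
room = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
angle, shift = math.radians(30), (1.0, 2.0)

# A copy that was only rotated and translated.
moved = transform_rigid_2d(angle, shift, room)
# A copy that was first stretched 10% along x, then moved the same way.
stretched = transform_rigid_2d(angle, shift, [(1.1 * x, y) for x, y in room])

theta, t = fit_rigid_2d(room, moved)
err_rigid = rms_error(transform_rigid_2d(theta, t, room), moved)

theta, t = fit_rigid_2d(room, stretched)
err_stretched = rms_error(transform_rigid_2d(theta, t, room), stretched)
```

In this toy case `err_rigid` is zero up to rounding, while `err_stretched` stays clearly above zero: no choice of rotation and translation can absorb the stretch.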

“We need to make sure all the submaps are deformed in a consistent way so we can align them well with each other,” Carlone explains.

A more flexible approach

Borrowing ideas from classical computer vision, the researchers developed a more flexible, mathematical technique that can represent all the deformations in these submaps. By applying mathematical transformations to each submap, this more flexible method can align them in a way that addresses the ambiguity.
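The article does not say which family of transformations the researchers use, so the sketch below should be read only as an illustration of the general idea: a transformation with more degrees of freedom than rotation plus translation can absorb a stretch. It assumes a plain affine map, works in 2D for brevity, and uses made-up room corners; the function names are hypothetical.

```python
import math


def fit_affine_2d(src, dst):
    """Least-squares affine map (2x2 matrix A and offset t) from src to dst."""
    n = len(src)
    sx = sum(x for x, _ in src) / n
    sy = sum(y for _, y in src) / n
    dx = sum(u for u, _ in dst) / n
    dy = sum(v for _, v in dst) / n
    a = b = d = 0.0          # entries of sum(x x^T) over centered src
    p = q = r = s = 0.0      # entries of sum(y x^T), centered dst vs. src
    for (x, y), (u, v) in zip(src, dst):
        x, y, u, v = x - sx, y - sy, u - dx, v - dy
        a += x * x
        b += x * y
        d += y * y
        p += u * x
        q += u * y
        r += v * x
        s += v * y
    det = a * d - b * b
    if abs(det) < 1e-12:
        raise ValueError("source points are (nearly) collinear")
    # A = sum(y x^T) * inverse(sum(x x^T)), written out for the 2x2 case.
    a00 = (p * d - q * b) / det
    a01 = (q * a - p * b) / det
    a10 = (r * d - s * b) / det
    a11 = (s * a - r * b) / det
    t = (dx - a00 * sx - a01 * sy, dy - a10 * sx - a11 * sy)
    return (a00, a01, a10, a11), t


def apply_affine_2d(A, t, pts):
    """Apply the affine map to every point in pts."""
    a00, a01, a10, a11 = A
    return [(a00 * x + a01 * y + t[0], a10 * x + a11 * y + t[1]) for x, y in pts]


def rms_error(a, b):
    """Root-mean-square distance between corresponding points."""
    total = sum((x - u) ** 2 + (y - v) ** 2 for (x, y), (u, v) in zip(a, b))
    return math.sqrt(total / len(a))


# Hypothetical room corners (meters) and a copy stretched 10% along x,
# then rotated by 30 degrees and shifted by (1, 2).
room = [(0.0, 0.0), (4.0, 0.0), (4.0, 3.0), (0.0, 3.0)]
c, s = math.cos(math.radians(30)), math.sin(math.radians(30))
stretched = [(c * 1.1 * x - s * y + 1.0, s * 1.1 * x + c * y + 2.0)
             for x, y in room]

A, t = fit_affine_2d(room, stretched)
err_affine = rms_error(apply_affine_2d(A, t, room), stretched)
```

Here `err_affine` is zero up to rounding, because the extra degrees of freedom capture the stretch that a pure rotation and translation cannot. Real submaps are 3D and noisy, so an actual system would need a 3D transformation and a fit that is robust to that noise.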

Based on input images, the system outputs a 3D reconstruction of the scene and estimates of the camera locations, which the robot would use to localize itself in the space.

“Once Dominic had the intuition to bridge these two worlds, learning-based approaches and traditional optimization methods, the implementation was fairly straightforward,” Carlone says. “Coming up with something this effective and simple has potential for a lot of applications.”

Their system performed faster with less reconstruction error than other methods, without requiring special cameras or additional tools to process data. The researchers generated close-to-real-time 3D reconstructions of complex scenes like the inside of the MIT Chapel using only short videos captured on a cell phone.

The average error in these 3D reconstructions was less than 5 centimeters.

In the future, the researchers want to make their method more reliable for especially complicated scenes and work toward implementing it on real robots in challenging settings.

“Knowing about traditional geometry pays off. If you understand deeply what is going on in the model, you can get much better results and make things much more scalable,” Carlone says.

This work is supported, in part, by the U.S. National Science Foundation, U.S. Office of Naval Research, and the National Research Foundation of Korea. Carlone, currently on sabbatical as an Amazon Scholar, completed this work before he joined Amazon.

MIT News