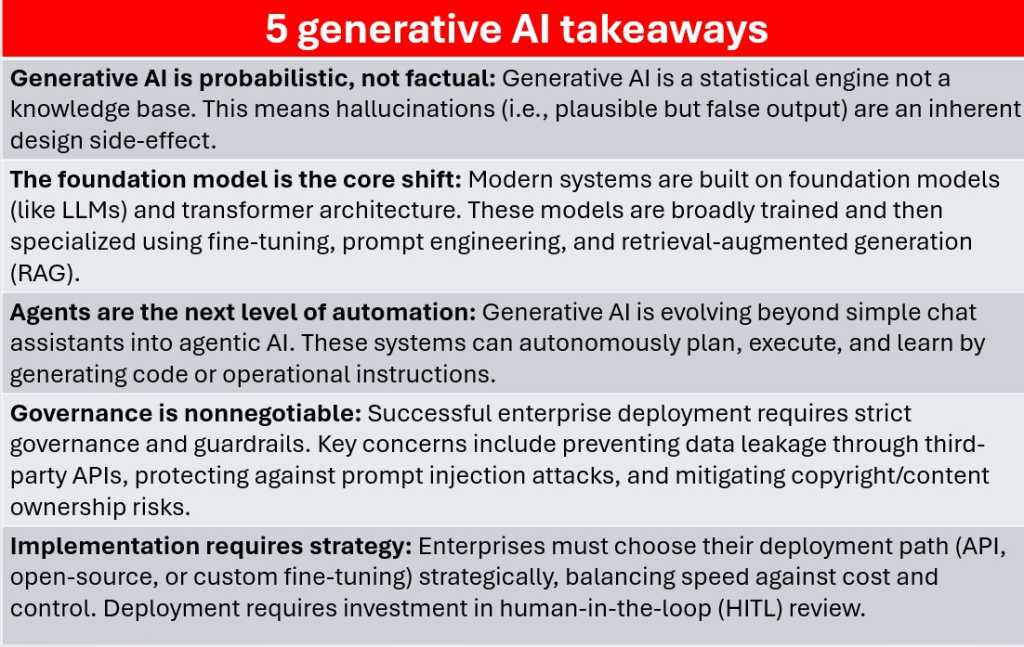

Every generative AI system, no matter how advanced, is built around prediction. Remember, a model doesn't truly know facts: it looks at a sequence of tokens, then calculates, based on analysis of its underlying training data, which token is most likely to come next. That is what makes the output fluent and human-like, but if its prediction is wrong, the result is perceived as a hallucination.
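To make the prediction step concrete, here is a minimal sketch in Python. It uses a toy bigram counter, which is far simpler than a real generative model, and the corpus and function names (`train_bigram`, `predict_next`) are invented for illustration. The mechanism is the same in spirit, though: pick the statistically most likely next token, whether or not it is true.

```python
from collections import Counter, defaultdict

def train_bigram(corpus: str) -> dict[str, Counter]:
    """Count which token follows which in the (toy) training text."""
    tokens = corpus.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1
    return follows

def predict_next(follows: dict[str, Counter], prompt: str):
    """Return the most frequent follower of the prompt's last token
    and its estimated probability, or None if the token was never seen."""
    last = prompt.split()[-1]
    counts = follows.get(last)
    if not counts:
        return None
    best, n = counts.most_common(1)[0]
    return best, n / sum(counts.values())

# Hypothetical training text: "is" is followed by "paris" more often than anything else.
corpus = (
    "the capital of france is paris . paris is lovely . "
    "the capital of italy is rome . the capital of france is paris ."
)
model = train_bigram(corpus)

# The toy model only sees that the prompt ends in "is", so it answers
# with the most common follower, even though the prompt asks about Italy.
print(predict_next(model, "the capital of italy is"))
```

The answer is fluent and statistically reasonable, and it is wrong. Real models condition on far more context than one token, but the failure has the same shape: likelihood stands in for truth.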


Because the model doesn't distinguish between something that's known to be true and something likely to follow on from the input text it's been given, hallucinations are a direct side effect of the statistical process that powers generative AI. And don't forget that we're often pushing AI models to come up with answers to questions that we, who also have access to that data, can't answer ourselves.

In text models, hallucinations might mean inventing quotes, fabricating references, or misrepresenting a technical process. In code or data analysis, they can produce syntactically correct but logically wrong results. Even RAG pipelines, which provide real data context to models, only reduce hallucination; they don't eliminate it. Enterprises using generative AI need review layers, validation pipelines, and human oversight to prevent these failures from spreading into production systems.
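As a rough illustration of what one small validation step might look like, the sketch below flags quoted passages in a model's answer that do not appear in the source documents supplied to it. The function name `find_unsupported_quotes` and the sample strings are hypothetical, and the check is deliberately narrow: it catches only fabricated verbatim quotes, not paraphrased or logical errors, so it would complement human review rather than replace it.

```python
import re

def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so matching ignores formatting."""
    return " ".join(text.lower().split())

def find_unsupported_quotes(answer: str, sources: list[str]) -> list[str]:
    """Return every double-quoted passage in `answer` that is not found
    verbatim (after normalization) in any of the `sources`."""
    quotes = re.findall(r'"([^"]+)"', answer)
    normalized_sources = [_normalize(s) for s in sources]
    return [
        q for q in quotes
        if not any(_normalize(q) in s for s in normalized_sources)
    ]

# Hypothetical retrieved context and model answer.
sources = ["The service retries failed requests up to three times."]
answer = (
    'The docs say it "retries failed requests up to three times" '
    'and "guarantees exactly-once delivery".'
)

flagged = find_unsupported_quotes(answer, sources)
print(flagged)  # quotes that should be routed to a human reviewer
```

A pipeline could hold back any answer with a non-empty `flagged` list until a person has checked it.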