In hospital intensive care units (ICUs), continuous patient monitoring is critical. Medical devices generate vast amounts of real-time data on vital signs such as heart rate, blood pressure, and oxygen saturation. The key challenge is detecting patient deterioration early by tracking vital sign trends. Healthcare teams must process thousands of data points per patient every day to identify concerning patterns, a task that is crucial for timely intervention and potentially life-saving care.

AWS Lambda event source mapping can help in this scenario by automatically polling data streams and triggering functions in real time, without additional infrastructure to manage. Medical teams can use AWS Lambda to process sensor data in real time and store aggregated results in Apache Iceberg tables (an open table format designed for large analytic datasets) in Amazon Simple Storage Service (Amazon S3) buckets. This gives them both immediate alerting and long-term analytical insights, improving their ability to provide timely and effective care.

In this post, we demonstrate how to build a serverless architecture that processes real-time ICU patient monitoring data. The architecture uses Lambda event source mapping for immediate alert generation and data aggregation, followed by persistent storage in Amazon S3 with an Iceberg catalog for comprehensive healthcare analytics. The solution shows how to handle high-frequency vital sign data and implement critical threshold monitoring. It also shows how to create a scalable analytics platform that can grow with your healthcare organization's needs and help manage sensor alert fatigue in the ICU.

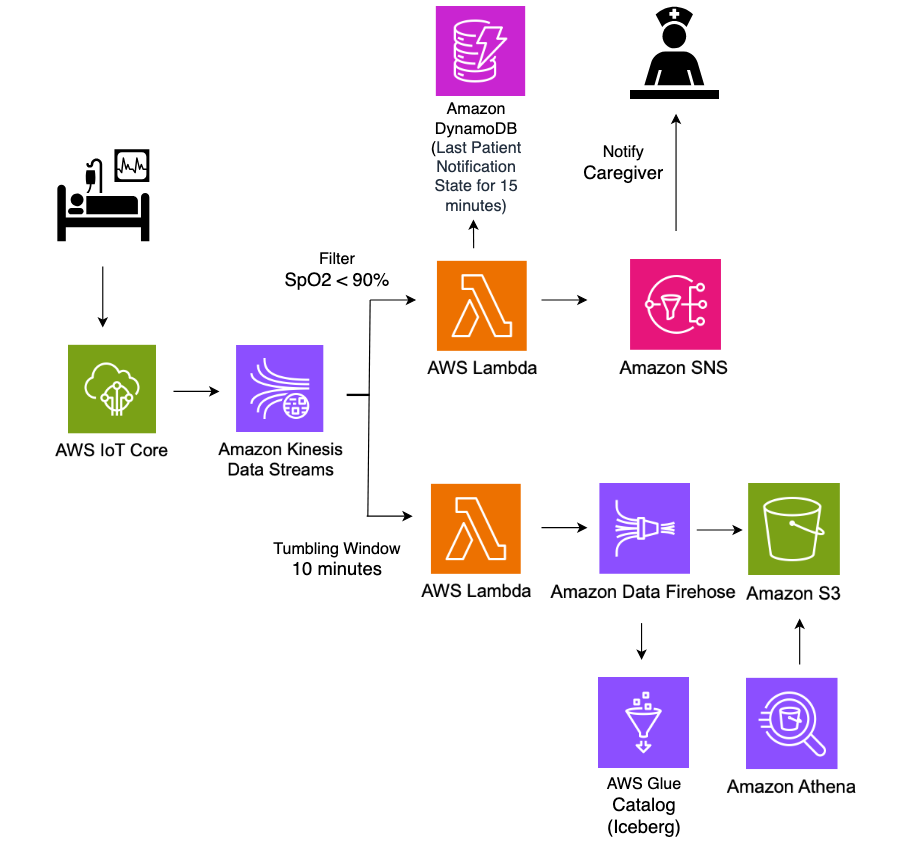

Architecture

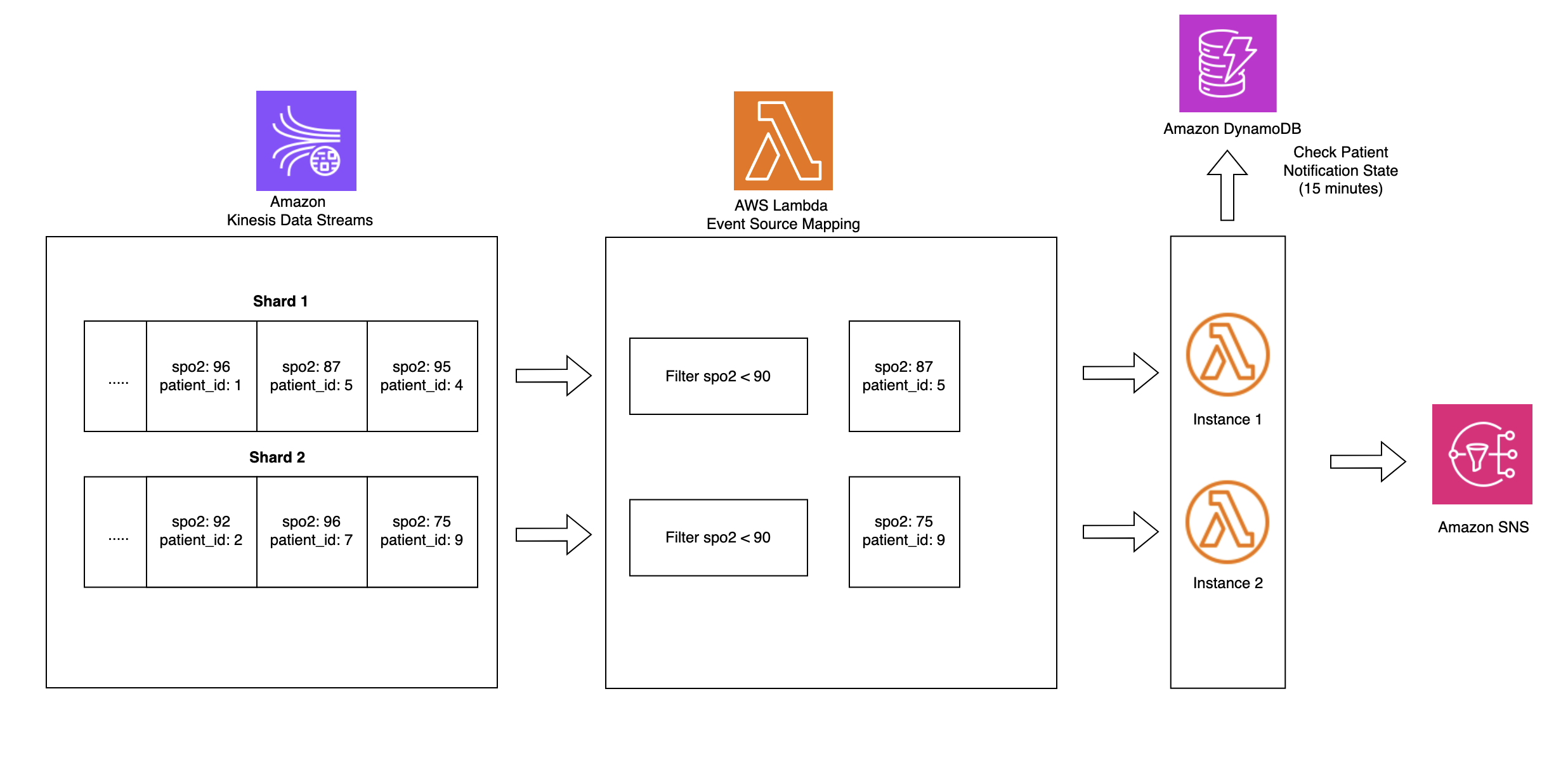

The next structure diagram illustrates a real-time ICU affected person analytics system.

In this architecture, real-time patient monitoring data from hospital ICU sensors is ingested into AWS IoT Core, which then streams the data into Amazon Kinesis Data Streams. Two Lambda functions consume this streaming data concurrently for different purposes, and both use the Lambda event source mapping integration with Kinesis Data Streams.

The first Lambda function uses the filtering feature of event source mapping to detect critical health events where SpO2 (blood oxygen saturation) levels fall below 90%. It immediately sends notifications to caregivers through Amazon Simple Notification Service (Amazon SNS) for a rapid response.

The second Lambda function uses the tumbling window feature of event source mapping to aggregate sensor data over 10-minute intervals. The aggregated data is then delivered through Amazon Data Firehose to S3 buckets in Apache Iceberg format for historical analysis and reporting.

The entire pipeline is serverless. It provides scalable, real-time processing of critical healthcare data while supporting both immediate alerting and long-term data storage for analytics.

Storing data in Amazon S3 in the Apache Iceberg table format lets healthcare organizations efficiently store and query large volumes of time-series patient data. This approach supports complex analytical queries across historical patient data while maintaining high performance and cost efficiency.

Prerequisites

To implement the solution presented in this post, you should have the following:

- An active AWS account

- IAM permissions to deploy CloudFormation templates and provision AWS resources

- Python installed on your machine to run the ICU patient sensor data simulator code

Deploy a real-time ICU patient analytics pipeline using CloudFormation

You use AWS CloudFormation templates to create the resources for a real-time data analytics pipeline.

- To get started, sign in to the AWS Management Console as an account user and select the appropriate Region.

- Download and launch the CloudFormation template in the Region where you want to host the Lambda functions.

- Choose Next.

- On the Specify stack details page, enter a Stack name (for example, IoTHealthMonitoring).

- For Parameters, enter the following:

  - IoTTopic: Enter the MQTT topic for your IoT devices (for example, icu/sensors).

  - EmailAddress: Enter an email address for receiving notifications.

- Wait for the stack creation to complete. This process might take 5-10 minutes.

- After the CloudFormation stack completes, it has created the following resources:

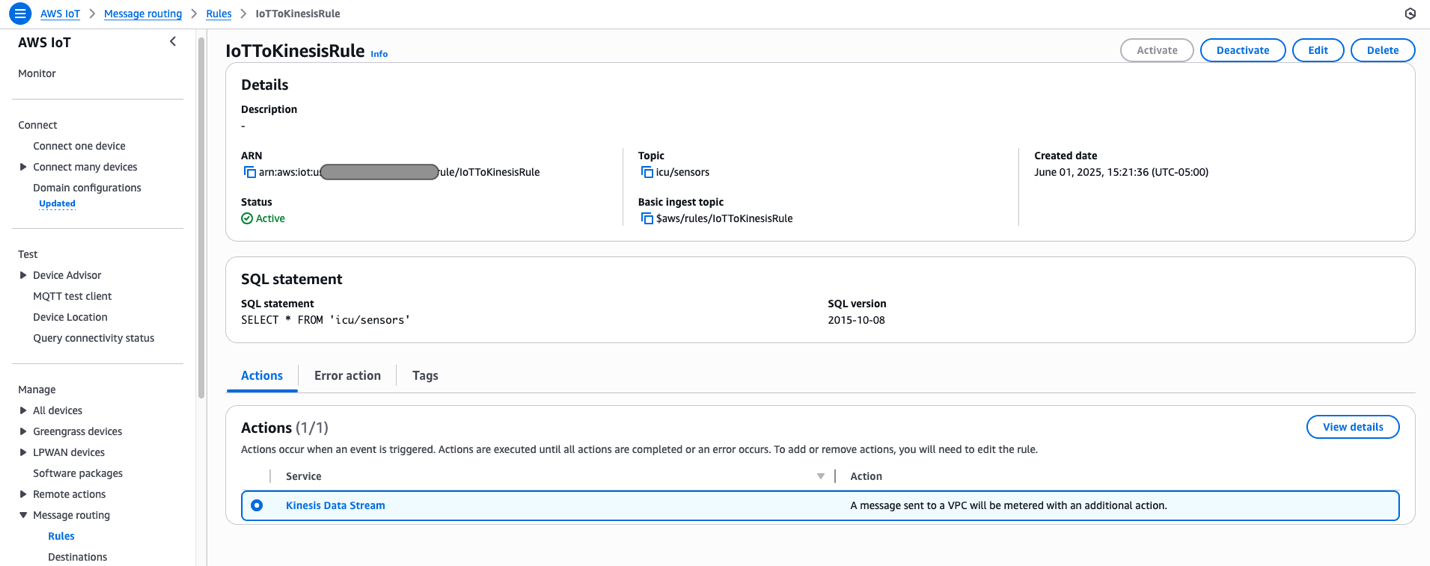

- An AWS IoT Core rule that captures data from the specified IoTTopic topic and routes it to the Kinesis data stream.

- A Kinesis data stream for ingesting IoT sensor data.

- Two Lambda functions:

  - FilterSensorData: Monitors critical health metrics and sends alerts.

  - AggregateSensorData: Aggregates sensor data in 10-minute windows.

- An Amazon DynamoDB table (NotificationTimestamps) that stores notification timestamps for rate limiting alerts.

- An Amazon SNS topic and subscription that send email notifications for critical patient conditions.

- An Amazon Data Firehose stream that delivers processed data to Amazon S3 in Iceberg format.

- Amazon S3 buckets to store sensor data.

- Amazon Athena and AWS Glue resources for the database and an Iceberg table for querying aggregated data.

- AWS Identity and Access Management (IAM) roles and policies that grant the required permissions for AWS IoT rules, Lambda functions, and Data Firehose streams.

- Amazon CloudWatch log groups that record Data Firehose activity and Lambda function logs.
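If you prefer to script the deployment, you can approximate the console steps with the AWS SDK for Python (Boto3). The following minimal sketch is not part of the published solution. It assumes you saved the downloaded template locally as `icu-monitoring-template.yaml` (a hypothetical file name) and that `IoTTopic` and `EmailAddress` are the only parameters you need to set. The stack creates IAM roles, so the call must acknowledge IAM capabilities.

```python
"""Scripted alternative to the console deployment steps (sketch)."""
from pathlib import Path

# Hypothetical local file name for the downloaded CloudFormation template.
TEMPLATE_PATH = Path("icu-monitoring-template.yaml")


def build_create_stack_args(stack_name: str, template_body: str,
                            iot_topic: str, email: str) -> dict:
    """Return keyword arguments for CloudFormation's create_stack call."""
    return {
        "StackName": stack_name,
        "TemplateBody": template_body,
        "Parameters": [
            {"ParameterKey": "IoTTopic", "ParameterValue": iot_topic},
            {"ParameterKey": "EmailAddress", "ParameterValue": email},
        ],
        # The stack creates IAM roles, so the IAM capabilities must be acknowledged.
        "Capabilities": ["CAPABILITY_IAM", "CAPABILITY_NAMED_IAM"],
    }


def deploy(stack_name: str, iot_topic: str, email: str, cfn=None) -> None:
    """Create the stack and block until CloudFormation reports CREATE_COMPLETE."""
    if cfn is None:
        import boto3  # imported lazily so the argument builder works without the AWS SDK
        cfn = boto3.client("cloudformation")
    args = build_create_stack_args(stack_name, TEMPLATE_PATH.read_text(), iot_topic, email)
    cfn.create_stack(**args)
    cfn.get_waiter("stack_create_complete").wait(StackName=stack_name)
```

For example: `deploy("IoTHealthMonitoring", "icu/sensors", "you@example.com")`. `TemplateBody` is limited to 51,200 bytes. For a larger template, upload it to S3 and pass `TemplateURL` instead.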

Solution walkthrough

Now that you've deployed the solution, let's walk through how it works. First, simulate patient vital signs data and send it to AWS IoT Core by running the following Python code on your local machine. To run this code successfully, make sure you have the required IAM permissions to publish messages to the IoT topic in the AWS account where the solution is deployed.

The following is the format of a sample ICU sensor message produced by the simulator.
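The following minimal sketch shows a simulator of this kind. The field names (`PatientID`, `Timestamp`, `HeartRate`, `SystolicBP`, `DiastolicBP`, `SpO2`) and the patient ID format are assumptions for illustration and might not match the simulator used with the deployed solution, so adjust them to your message schema. The sketch looks up the account's `iot:Data-ATS` endpoint and then publishes one reading per patient through the AWS IoT data plane. This requires `iot:DescribeEndpoint` and `iot:Publish` permissions.

```python
"""Minimal ICU vital-sign simulator (sketch; field names are hypothetical)."""
import json
import random
import time
from datetime import datetime, timezone
from typing import Optional

TOPIC = "icu/sensors"                  # the example IoTTopic value from stack creation
PATIENT_IDS = [f"patient-{i:03d}" for i in range(1, 11)]  # 10 hypothetical patients
PUBLISH_INTERVAL_SECONDS = 30


def build_message(patient_id: str, spo2: Optional[float] = None) -> dict:
    """Build one reading with randomized vital signs in a normal range."""
    return {
        "PatientID": patient_id,
        "Timestamp": datetime.now(timezone.utc).isoformat(),
        "HeartRate": random.randint(60, 100),
        "SystolicBP": random.randint(100, 140),
        "DiastolicBP": random.randint(60, 90),
        "SpO2": spo2 if spo2 is not None else round(random.uniform(92.0, 100.0), 1),
    }


def run_simulator(iterations: int = 1, spo2_override: Optional[float] = None) -> None:
    """Publish one reading per patient, pausing PUBLISH_INTERVAL_SECONDS between rounds."""
    import boto3  # imported lazily; only needed when actually publishing

    # Look up the account-specific AWS IoT data endpoint before creating the data-plane client.
    endpoint = boto3.client("iot").describe_endpoint(endpointType="iot:Data-ATS")["endpointAddress"]
    iot_data = boto3.client("iot-data", endpoint_url=f"https://{endpoint}")
    for n in range(iterations):
        for patient_id in PATIENT_IDS:
            payload = json.dumps(build_message(patient_id, spo2_override))
            iot_data.publish(topic=TOPIC, qos=1, payload=payload)
        if n < iterations - 1:
            time.sleep(PUBLISH_INTERVAL_SECONDS)
```

Calling `run_simulator(iterations=20)` publishes roughly 10 minutes of readings for 10 patients. Passing `spo2_override=85.0` produces readings below the 90% alert threshold.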

Data is published to the icu/sensors IoT topic every 30 seconds for 10 different patients, creating a continuous stream of ICU patient monitoring data. Messages published to AWS IoT Core are passed to Kinesis Data Streams by the following message routing rule, which the solution deploys.
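The CloudFormation template creates the routing rule for you. To illustrate what such a rule contains, the following sketch builds an AWS IoT `topicRulePayload` that selects every message on the topic and forwards it to a Kinesis data stream. The stream name, role ARN, and the use of `${PatientID}` as the partition key are assumptions. They are not necessarily what the template deploys.

```python
"""Sketch of an AWS IoT rule payload that routes the sensor topic to Kinesis Data Streams."""


def build_topic_rule_payload(topic: str, stream_name: str, role_arn: str) -> dict:
    """Return a topicRulePayload for the AWS IoT create_topic_rule API."""
    return {
        # Select every field of every message published on the topic.
        "sql": f"SELECT * FROM '{topic}'",
        "awsIotSqlVersion": "2016-03-23",
        "ruleDisabled": False,
        "actions": [
            {
                "kinesis": {
                    "streamName": stream_name,
                    "roleArn": role_arn,  # role that allows AWS IoT to call kinesis:PutRecord
                    # Hypothetical choice: partition by patient so each patient's readings share a shard.
                    "partitionKey": "${PatientID}",
                }
            }
        ],
    }
```

You could pass the result to `boto3.client("iot").create_topic_rule(ruleName="IcuSensorsToKinesis", topicRulePayload=...)`. Partitioning by patient keeps each patient's readings ordered within a single shard. This matters because Lambda processes each shard, and maintains each tumbling window's state, independently.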

Two Lambda functions consume data from Kinesis Data Streams concurrently, and both use the Lambda event source mapping integration with Kinesis Data Streams.

Event source mapping

Lambda event source mapping automatically triggers Lambda functions in response to data changes from supported event sources. These include Amazon DynamoDB Streams, Amazon Kinesis Data Streams, Amazon Simple Queue Service (Amazon SQS), Amazon MQ, and Amazon Managed Streaming for Apache Kafka.

In this serverless integration, Lambda polls these sources for new records and processes them in configurable batches. For Kinesis, a batch can contain from 1 to 10,000 records. When new data is detected, Lambda invokes the function synchronously and scales automatically based on the workload.

The service provides at-least-once delivery and robust error handling through retry policies, plus dead-letter queues or on-failure destinations for failed events. You can fine-tune an event source mapping with parameters such as the batching window, maximum record age, and retry attempts, which makes it adaptable to different use cases. This feature is especially valuable in event-driven architectures: customers can focus on business logic while AWS manages the complexities of event polling, scaling, and reliability.
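The tuning parameters described above map directly onto arguments of the Lambda `create_event_source_mapping` API. The following sketch uses illustrative values, not the ones in the deployed template. The on-failure destination ARN is a hypothetical SQS queue or SNS topic that receives metadata about batches that still fail after retries.

```python
"""Sketch: tuning knobs on a Kinesis event source mapping (illustrative values)."""


def build_kinesis_mapping_args(function_name: str, stream_arn: str,
                               on_failure_arn: str) -> dict:
    """Keyword arguments for the Lambda create_event_source_mapping API."""
    return {
        "FunctionName": function_name,
        "EventSourceArn": stream_arn,
        "StartingPosition": "LATEST",          # only read records written after the mapping exists
        "BatchSize": 100,                      # Kinesis allows 1-10,000 records per batch
        "MaximumBatchingWindowInSeconds": 5,   # wait up to 5 seconds to fill a batch
        "MaximumRecordAgeInSeconds": 3600,     # discard records older than one hour
        "MaximumRetryAttempts": 3,             # stop retrying a failing batch after 3 attempts
        "BisectBatchOnFunctionError": True,    # split a failing batch to isolate bad records
        # Send metadata about batches that still fail to an SQS queue or SNS topic.
        "DestinationConfig": {"OnFailure": {"Destination": on_failure_arn}},
    }
```

Usage: `boto3.client("lambda").create_event_source_mapping(**build_kinesis_mapping_args(...))`. Bisecting plus a bounded retry count keeps one malformed record from blocking a shard indefinitely.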

This solution uses two event source mapping features, tumbling windows and filtering, to process and analyze data.

Tumbling windows

Tumbling windows in Lambda event processing aggregate data in fixed, non-overlapping time intervals, where each event belongs to exactly one window. This makes them ideal for time-based analytics and periodic reporting. Combined with event source mapping, this approach allows efficient batch processing of events within defined time periods (for example, 10-minute windows). It enables calculations such as average vital signs or cumulative fluid intake and output while optimizing function invocations and resource usage.
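To make "fixed, non-overlapping" concrete, the following stand-alone illustration maps each timestamp to exactly one 10-minute window and averages the values per window. It is only an illustration of the concept. Lambda itself assigns Kinesis records to windows based on when they were written to the stream, not on a timestamp inside the message.

```python
"""Illustration of tumbling-window assignment and per-window averaging."""
from __future__ import annotations

from collections import defaultdict

WINDOW_SECONDS = 600  # 10-minute windows


def window_start(epoch_seconds: int, width: int = WINDOW_SECONDS) -> int:
    """Map a timestamp to the start of the single window [start, start + width) containing it."""
    return epoch_seconds - (epoch_seconds % width)


def average_by_window(readings: list[tuple[int, float]],
                      width: int = WINDOW_SECONDS) -> dict[int, float]:
    """Average (timestamp, value) readings within each non-overlapping window."""
    sums: dict[int, float] = defaultdict(float)
    counts: dict[int, int] = defaultdict(int)
    for ts, value in readings:
        start = window_start(ts, width)
        sums[start] += value
        counts[start] += 1
    return {start: sums[start] / counts[start] for start in sums}
```

For example, `average_by_window([(0, 90.0), (300, 94.0), (600, 98.0)])` returns `{0: 92.0, 600: 98.0}`. The reading at second 600 starts a new window rather than overlapping the first.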

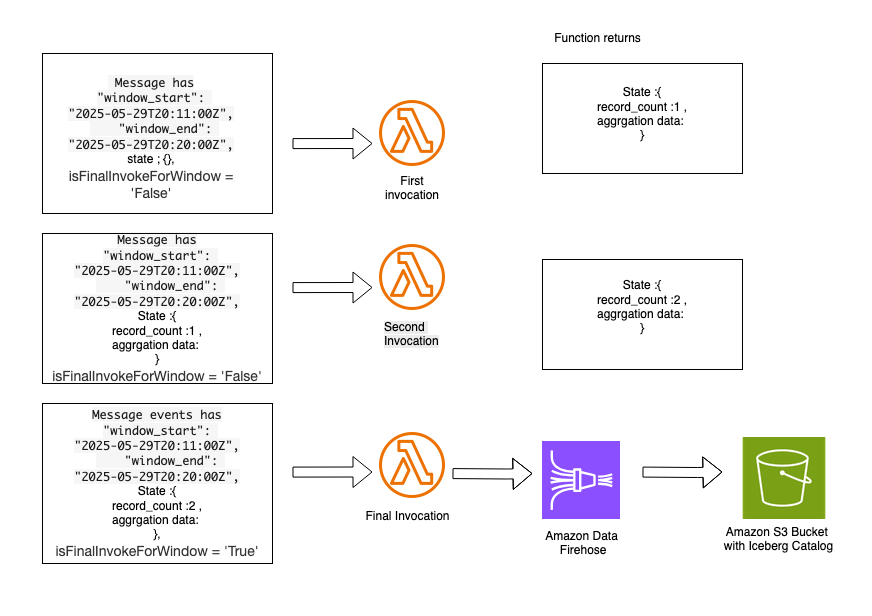

When you configure an event source mapping between Kinesis Data Streams and a Lambda function, use the Tumbling Window Duration setting, which appears in the trigger configuration in the Lambda console. The solution you deployed with the CloudFormation template includes the AggregateSensorData Lambda function, which uses a 10-minute tumbling window. Depending on the volume of messages flowing through the Kinesis stream, the AggregateSensorData function might be invoked sequentially multiple times for each 10-minute window. Each event supplied to the function includes the following attributes.

- Window start and end: The beginning and ending timestamps for the current tumbling window.

- State: An object containing the state returned by the previous invocation in the current window. It is empty at the start of each window and can contain up to 1 MB of data.

- isFinalInvokeForWindow: Indicates whether this is the last invocation for the tumbling window. This occurs only once per window period.

- isWindowTerminatedEarly: Indicates that the window ended early, which happens only if the state exceeds the maximum allowed size of 1 MB.
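When you create the mapping through the API instead of the console, the Tumbling Window Duration setting corresponds to `TumblingWindowInSeconds`. Lambda caps tumbling windows at 900 seconds (15 minutes), so a 10-minute window fits. The following sketch builds the arguments for the aggregation function's mapping. Only the 600-second value comes from this post; the other values are placeholders.

```python
"""Sketch: the API counterpart of the console's tumbling window duration setting."""

MAX_TUMBLING_WINDOW_SECONDS = 900  # Lambda caps tumbling windows at 15 minutes


def build_tumbling_window_args(function_name: str, stream_arn: str,
                               window_seconds: int = 600) -> dict:
    """Keyword arguments for create_event_source_mapping with a tumbling window."""
    if not 1 <= window_seconds <= MAX_TUMBLING_WINDOW_SECONDS:
        raise ValueError("TumblingWindowInSeconds must be between 1 and 900")
    return {
        "FunctionName": function_name,
        "EventSourceArn": stream_arn,
        "StartingPosition": "LATEST",
        "TumblingWindowInSeconds": window_seconds,  # 600 seconds = the post's 10-minute window
    }
```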

Within a tumbling window, Lambda invokes the function in a sequence that follows this pattern:

AggregateSensorData Lambda code snippet:
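The following sketch shows a handler that follows this invocation pattern. It is not the deployed function. It assumes the hypothetical `PatientID`, `SpO2`, and `HeartRate` message fields, a hypothetical `FIREHOSE_STREAM_NAME` environment variable, and a Firehose stream configured to write each JSON row to the Iceberg table. Because Lambda keeps window state per shard, each shard's final invocation flushes the patients routed to that shard.

```python
"""Sketch of a tumbling-window aggregation handler (hypothetical field names)."""
import base64
import json
import os

# Hypothetical environment variable naming the Firehose stream that writes to the Iceberg table.
FIREHOSE_STREAM = os.environ.get("FIREHOSE_STREAM_NAME", "icu-aggregates")
FIREHOSE_BATCH_LIMIT = 500  # PutRecordBatch accepts at most 500 records per call


def accumulate(state: dict, reading: dict) -> None:
    """Fold one reading into compact per-patient running totals (state is capped at 1 MB)."""
    entry = state.setdefault(reading["PatientID"],
                             {"count": 0, "spo2_sum": 0.0, "hr_sum": 0.0, "spo2_min": reading["SpO2"]})
    entry["count"] += 1
    entry["spo2_sum"] += reading["SpO2"]
    entry["hr_sum"] += reading["HeartRate"]
    entry["spo2_min"] = min(entry["spo2_min"], reading["SpO2"])


def summarize(state: dict, window: dict) -> list:
    """Turn running totals into one output row per patient for the finished window."""
    return [
        {
            "PatientID": patient_id,
            "WindowStart": window["start"],
            "WindowEnd": window["end"],
            "ReadingCount": e["count"],
            "AvgSpO2": round(e["spo2_sum"] / e["count"], 2),
            "MinSpO2": e["spo2_min"],
            "AvgHeartRate": round(e["hr_sum"] / e["count"], 2),
        }
        for patient_id, e in state.items()
    ]


def lambda_handler(event, context, firehose=None):
    state = event.get("state") or {}  # empty on the first invocation of each window
    for record in event.get("Records", []):
        accumulate(state, json.loads(base64.b64decode(record["kinesis"]["data"])))

    window_done = event.get("isFinalInvokeForWindow") or event.get("isWindowTerminatedEarly")
    if not window_done:
        return {"state": state}  # hand the running totals to the next invocation

    rows = summarize(state, event["window"])
    if rows and firehose is None:
        import boto3  # imported lazily so the aggregation logic can be tested offline
        firehose = boto3.client("firehose")
    for i in range(0, len(rows), FIREHOSE_BATCH_LIMIT):
        batch = [{"Data": (json.dumps(r) + "\n").encode()} for r in rows[i:i + FIREHOSE_BATCH_LIMIT]]
        resp = firehose.put_record_batch(DeliveryStreamName=FIREHOSE_STREAM, Records=batch)
        if resp.get("FailedPutCount", 0):
            # Failing the invocation lets Lambda retry; rows that did succeed may be written twice.
            raise RuntimeError(f"{resp['FailedPutCount']} aggregate rows were rejected by Firehose")
    return {"state": {}}
```

Keeping only running sums, counts, and minimums in the state, rather than raw readings, keeps it well below the 1 MB limit that would otherwise end the window early.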

- The primary invocation incorporates an empty state object within the occasion. The operate returns a state object containing customized attributes which can be particular to the customized logic within the aggregation.

- The second invocation incorporates the state object offered by the primary Lambda invocation. This operate returns an up to date state object with new aggregated values. Subsequent invocations observe this identical sequence. Following is a pattern of the aggregated state, which might be provided to subsequent Lambda invocations throughout the identical 10-minute tumbling window.

- The ultimate invocation within the tumbling window has the

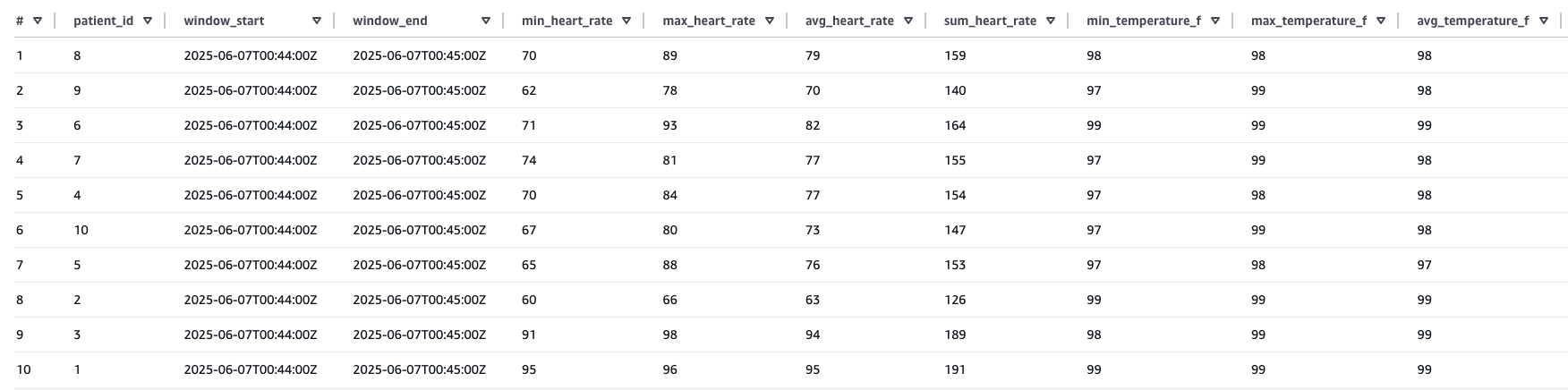

isFinalInvokeForWindowflag set to the true. This incorporates the state returned by the newest Lambda invocation. This invocation is answerable for passing aggregated state messages to the Knowledge Firehose stream, which delivers information to the Amazon S3 bucket utilizing Iceberg information format. - After the aggregated information is distributed to Amazon S3, you may question the info utilizing Athena.
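As one way to run such a query programmatically, the following sketch uses the Athena API to find windows where a patient's average SpO2 fell below a threshold. The database, table, column, and results-bucket names are all hypothetical. The columns assume the Iceberg table stores the fields written by an aggregation function like the one sketched earlier, lowercased by AWS Glue.

```python
"""Sketch: query the aggregated Iceberg table with Athena (hypothetical names)."""
import time

DATABASE = "icu_analytics"                                     # hypothetical Glue database
TABLE = "patient_vitals_10min"                                 # hypothetical Iceberg table
OUTPUT_LOCATION = "s3://amzn-s3-demo-bucket/athena-results/"   # hypothetical results location


def build_low_spo2_query(threshold: float = 92.0, limit: int = 20) -> str:
    """Windows where a patient's average SpO2 fell below the threshold, newest first."""
    return (
        "SELECT patientid, windowstart, avgspo2, minspo2, avgheartrate "
        f"FROM {TABLE} WHERE avgspo2 < {float(threshold)} "
        f"ORDER BY windowstart DESC LIMIT {int(limit)}"
    )


def run_query(sql: str, athena=None) -> list:
    """Start the query, poll until it finishes, and return data rows as lists of strings."""
    if athena is None:
        import boto3  # imported lazily so the query builder works without the AWS SDK
        athena = boto3.client("athena")
    qid = athena.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": DATABASE},
        ResultConfiguration={"OutputLocation": OUTPUT_LOCATION},
    )["QueryExecutionId"]
    while True:
        state = athena.get_query_execution(QueryExecutionId=qid)["QueryExecution"]["Status"]["State"]
        if state in ("SUCCEEDED", "FAILED", "CANCELLED"):
            break
        time.sleep(1)
    if state != "SUCCEEDED":
        raise RuntimeError(f"Athena query {qid} ended in state {state}")
    rows = athena.get_query_results(QueryExecutionId=qid)["ResultSet"]["Rows"]
    # The first row holds column headers; get_query_results returns at most 1,000 rows per call.
    return [[col.get("VarCharValue") for col in r["Data"]] for r in rows[1:]]
```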

Sample result of the preceding Athena query:

Event source mapping with filtering

Lambda event source mapping with filtering optimizes data processing from sources such as Amazon Kinesis by applying JSON pattern filters before the function is invoked. The ICU patient monitoring solution demonstrates this: its event source mapping passes through only Kinesis Data Streams records whose SpO2 reading is below 90%. Instead of processing all incoming data, the function receives only critical readings, which significantly reduces cost and processing overhead. The solution uses DynamoDB for state management, tracking low-SpO2 events with a schema that combines PatientID and timestamp-based keys within defined monitoring windows.
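The following sketch expresses a filter like this as `FilterCriteria` for the event source mapping. For Kinesis sources, Lambda decodes the record payload and matches the pattern under the `data` key. Records that don't match are skipped rather than sent to the function. `SpO2` is a hypothetical field name. The `approx_matches` helper is a local approximation for testing only and is not Lambda's matcher.

```python
"""Sketch: event filter so the alerting function only sees SpO2 readings below 90%."""
import json

# Numeric comparison on the decoded Kinesis payload; "SpO2" is a hypothetical field name.
LOW_SPO2_PATTERN = {"data": {"SpO2": [{"numeric": ["<", 90]}]}}

# Shape expected by create_event_source_mapping / update_event_source_mapping.
FILTER_CRITERIA = {"Filters": [{"Pattern": json.dumps(LOW_SPO2_PATTERN)}]}


def approx_matches(reading: dict, threshold: float = 90) -> bool:
    """Local approximation of the numeric filter, for tests only (not Lambda's matcher)."""
    value = reading.get("SpO2")
    return isinstance(value, (int, float)) and value < threshold
```

Pass `FilterCriteria=FILTER_CRITERIA` when you create or update the mapping. The pattern only matches if the payload is valid JSON with a numeric `SpO2` field.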

This state-aware implementation balances clinical urgency with operational efficiency. It sends an immediate Amazon SNS notification when a critical condition is first detected, then applies a 15-minute alert suppression window to prevent alert fatigue among healthcare providers. By maintaining state across Lambda invocations, the system supports a rapid response to potentially life-threatening situations while minimizing repeat notifications for the same patient condition. Together, Lambda event filtering, DynamoDB state management, and reliable alert delivery through Amazon SNS form a scalable monitoring solution that balances technical efficiency with clinical effectiveness.

FilterSensorData Lambda code snippet:
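The following sketch shows an alerting handler with a per-patient 15-minute suppression window. It is a simplified alternative rather than the deployed function. It assumes the table's partition key is `PatientID` with no sort key, which might not match the deployed NotificationTimestamps schema. It also assumes the hypothetical `NOTIFICATION_TABLE` and `ALERT_TOPIC_ARN` environment variables and the `PatientID` and `SpO2` message fields. A conditional write claims the alert slot atomically, so concurrent invocations can't both notify for the same patient.

```python
"""Sketch of an alerting handler with a 15-minute per-patient suppression window."""
import base64
import json
import os
import time

TABLE_NAME = os.environ.get("NOTIFICATION_TABLE", "NotificationTimestamps")  # hypothetical env var
TOPIC_ARN = os.environ.get("ALERT_TOPIC_ARN", "")                           # hypothetical env var
SUPPRESSION_SECONDS = 15 * 60


def claim_alert_slot(ddb, patient_id: str, now: int) -> bool:
    """Atomically record a notification unless one was sent in the last 15 minutes."""
    try:
        ddb.put_item(
            TableName=TABLE_NAME,
            Item={"PatientID": {"S": patient_id}, "LastNotified": {"N": str(now)}},
            ConditionExpression="attribute_not_exists(PatientID) OR LastNotified < :cutoff",
            ExpressionAttributeValues={":cutoff": {"N": str(now - SUPPRESSION_SECONDS)}},
        )
        return True
    except Exception as exc:  # botocore's ClientError carries the error code in exc.response
        code = getattr(exc, "response", {}).get("Error", {}).get("Code")
        if code == "ConditionalCheckFailedException":
            return False  # this patient was alerted within the suppression window
        raise


def lambda_handler(event, context, ddb=None, sns=None):
    if ddb is None or sns is None:
        import boto3  # imported lazily so the logic can be tested with stand-in clients
        ddb = ddb or boto3.client("dynamodb")
        sns = sns or boto3.client("sns")
    sent = 0
    for record in event.get("Records", []):  # already filtered to SpO2 < 90 by the mapping
        reading = json.loads(base64.b64decode(record["kinesis"]["data"]))
        if claim_alert_slot(ddb, reading["PatientID"], int(time.time())):
            sns.publish(
                TopicArn=TOPIC_ARN,
                Subject="ICU alert: low SpO2",
                Message=f"Patient {reading['PatientID']} SpO2 is {reading['SpO2']}%.",
            )
            sent += 1
    return {"alertsSent": sent}
```

One trade-off: the slot is claimed before publishing, so if the SNS call fails, that patient stays suppressed for the rest of the window. Publishing first avoids this but risks duplicate alerts on retry.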

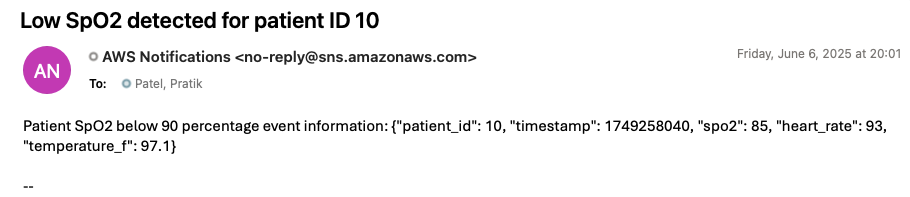

To generate an alert notification by the deployed resolution, replace the previous simulator code by setting the SpO2 worth to lower than 90 and run it once more. Inside 1 minute, it is best to obtain an alert notification on the e mail tackle you offered throughout stack creation. The next picture is an instance of an alert notification generated by the deployed resolution.

Clean up

To avoid ongoing charges after completing this tutorial, delete the CloudFormation stack that you deployed earlier in this post. This removes most of the AWS resources created for this solution. You might need to manually delete the objects in the solution's Amazon S3 buckets first, because CloudFormation doesn't delete non-empty buckets during stack deletion.
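The following sketch scripts these cleanup steps. It finds the stack's S3 buckets through `describe_stack_resources`, deletes every object version (which also removes delete markers in versioned buckets), and then deletes the stack. The stack name is the example from the deployment steps. Review the bucket list before running this, because the deletion is irreversible.

```python
"""Sketch: empty the stack's S3 buckets, then delete the stack."""


def stack_bucket_names(cfn, stack_name: str) -> list:
    """Physical names of the S3 buckets that belong to the stack."""
    resources = cfn.describe_stack_resources(StackName=stack_name)["StackResources"]
    return [r["PhysicalResourceId"] for r in resources if r["ResourceType"] == "AWS::S3::Bucket"]


def clean_up(stack_name: str, s3_resource=None, cfn=None) -> None:
    if s3_resource is None or cfn is None:
        import boto3  # imported lazily so the logic can be tested with stand-in clients
        s3_resource = s3_resource or boto3.resource("s3")
        cfn = cfn or boto3.client("cloudformation")
    for name in stack_bucket_names(cfn, stack_name):
        # Deleting all object versions empties both versioned and unversioned buckets.
        s3_resource.Bucket(name).object_versions.delete()
    cfn.delete_stack(StackName=stack_name)
    cfn.get_waiter("stack_delete_complete").wait(StackName=stack_name)
```

Usage: `clean_up("IoTHealthMonitoring")`.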

Conclusion

As demonstrated in this post, you can build a serverless real-time analytics pipeline for healthcare monitoring by using AWS IoT Core, Apache Iceberg tables in Amazon S3, and the Amazon Kinesis Data Streams integration with AWS Lambda event source mapping. This architectural approach reduces the custom code needed for stream polling, filtering, and windowing. At the same time, it enables rapid critical-care alerts and data aggregation for analysis with Lambda.

The solution is particularly valuable for healthcare organizations looking to modernize their patient monitoring systems with real-time capabilities. You can extend the architecture to handle other medical devices and sensor data streams, which makes it adaptable to different healthcare monitoring scenarios. This post presents one implementation approach. Organizations adopting this solution should make sure the architecture and code meet their specific application performance, security, privacy, and regulatory compliance needs.

If this post helps you or inspires you to solve a problem, we would love to hear about it!