As Databricks Engineers, we now have the privilege of engaged on difficult issues with nice colleagues. On this Engineering weblog publish, we are going to stroll you thru how we constructed a excessive efficiency fee limiting system at Databricks. If you’re a Databricks person, you don’t want to grasp this weblog to have the ability to use the platform to its fullest. However should you’re keen on taking a peek below the hood, learn on to listen to about a few of the cool stuff we’ve been engaged on!

Background

Charge limiting is about controlling useful resource utilization to supply isolation and overload safety between tenants in a multitenant system. Within the context of Databricks, this may very well be offering isolation between accounts, workspaces, customers, and many others., and is most frequently seen externally as a per time unit restrict, such because the variety of jobs launched per second, variety of API requests per second, and many others. However there may be inner usages of fee limits, reminiscent of managing capability between shoppers of a service. Charge restrict enforcement performs an essential function in making Databricks dependable, nevertheless it’s essential to notice that this enforcement incurs overhead that must be minimized.

The Downside

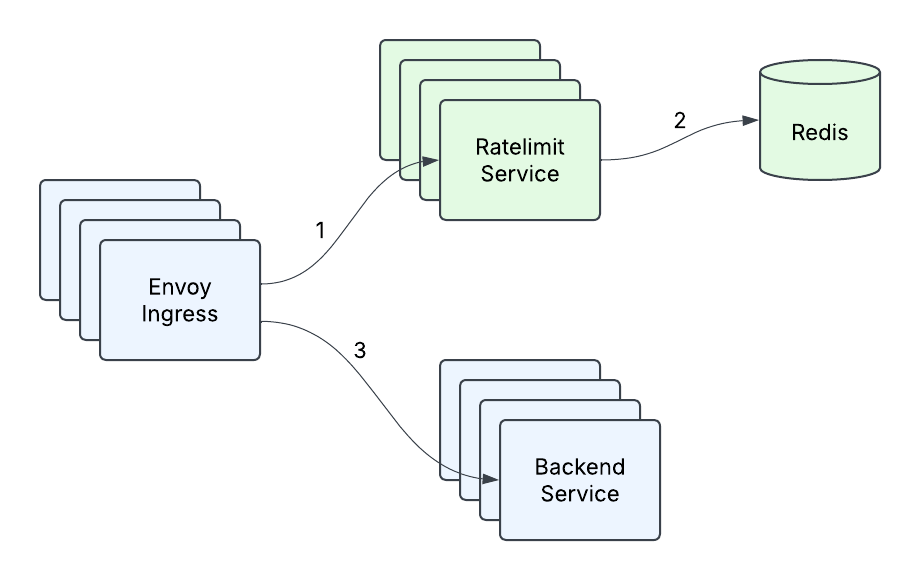

Again in early 2023, the present Ratelimit infrastructure at Databricks consisted of an Envoy ingress gateway making calls to the Ratelimit Service, with a single occasion of Redis backing the service (Determine 1). This was completely appropriate for the present queries per second (QPS) that any cluster of machines inside a area was anticipated to obtain, in addition to for the transient nature of per second counting. However as the corporate expanded its buyer base and added new use instances, it grew to become clear that what had gotten us to that time wouldn’t be enough to satisfy our future wants. With the introduction of real-time mannequin serving and different excessive qps use instances, the place one buyer may very well be sending orders of magnitude extra site visitors than what the Ratelimit Service may at present deal with, just a few issues emerged:

- Excessive tail latency – the tail latency of our service was unacceptably excessive below heavy site visitors, particularly when contemplating there are two community hops concerned and that there was P99 community latency of 10ms-20ms in one of many cloud suppliers.

- Restricted Throughput – at a sure level, including extra machines and doing level optimizations (reminiscent of caching) now not allowed us to deal with extra site visitors.

- Redis as a single level of failure – Our single Redis occasion was our single level of failure, and we needed to do one thing about that. It was time to revamp our service.

Terminology

At Databricks, we now have an idea of a RatelimitGroup (RLG), which is a string identifier that represents a useful resource or set of assets that we have to shield, reminiscent of an API endpoint. These assets can be protected on sure Dimensions, reminiscent of setting limits on the workspace/person/account degree. For instance, a dimension would convey “I need to shield FooBarAPI on workspaceId and the workspaceId for this request is 12345.” A Dimension can be represented like this:

A single shouldRateLimit request may have a number of descriptors, and an instance is perhaps setting limits, for a specific API, on the workspace and on the person degree.

The place the Descriptor schema will seem like this:

Answer

Low Latency Responses

The primary drawback we wished to sort out was to enhance the latency of our Ratelimit Service. Charge Limiting is in the end only a counting drawback, and we knew we ideally wished to maneuver to a mannequin the place we may at all times reply fee restrict requests in-memory, as a result of it’s ultra-fast and most of our fee limits had been primarily based on QPS, which meant that these counts had been transient and didn’t should be resilient to service cases restarting or crashing. Our current setup did a restricted quantity of in-memory counting already through the use of Envoy’s constant hashing to extend cache hit charges, by sending the identical request to the identical machine. Nevertheless, 1) this was not doable to share with non-Envoy companies, 2) the task churn throughout service resizes and restarts meant we nonetheless needed to frequently synchronize with Redis, and three) constant hashing is vulnerable to hotspotting, and when load wasn’t distributed evenly we oftentimes may solely enhance the variety of cases to try to distribute load higher, resulting in suboptimal service utilization.

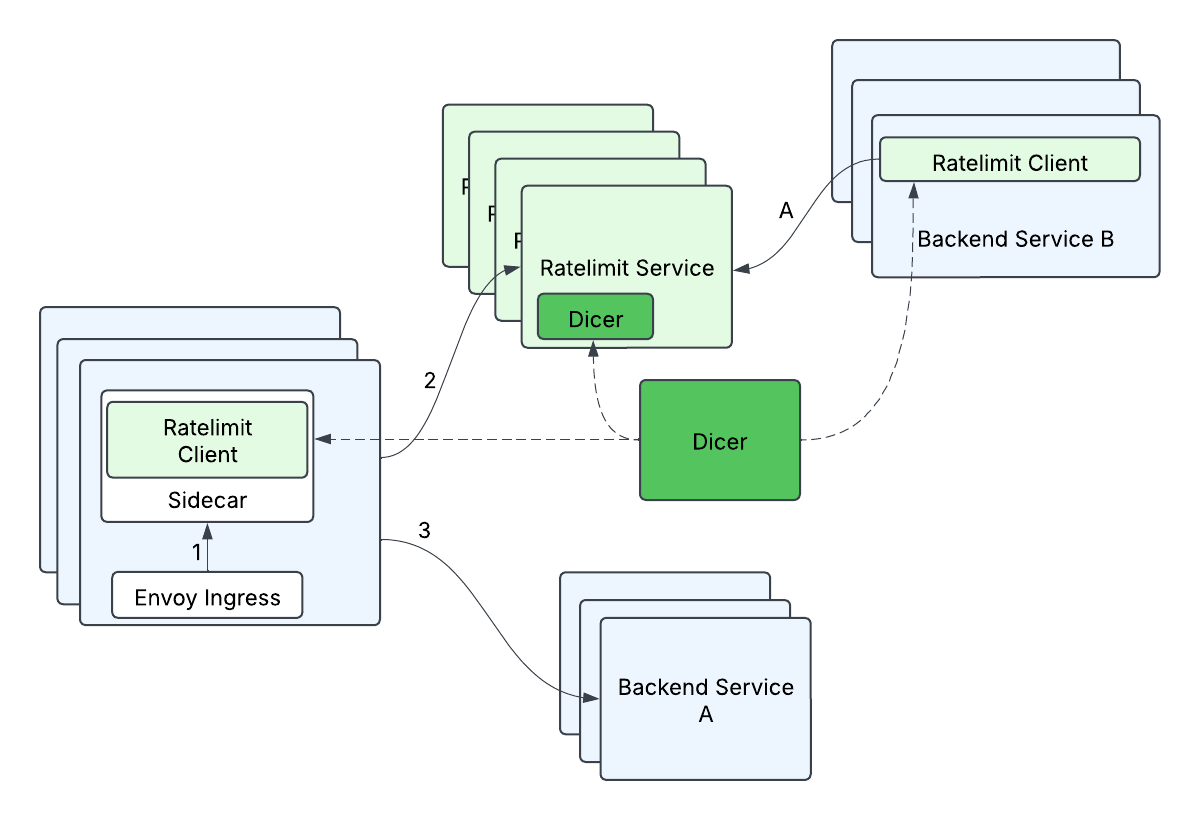

As luck would have it, we had some superior people be a part of Databricks, they usually had been designing Dicer, an autosharding know-how that may make stateful companies simpler to handle, whereas nonetheless preserving all the advantages of a stateless service deployment. This could permit us to tame our server aspect latency by preserving all the fee restrict counting in reminiscence, as a result of the shoppers would be capable to ask Dicer to map a request to a vacation spot server, and the server would be capable to validate with Dicer that it was the correct proprietor of a request. Counting in reminiscence is clearly a lot less complicated and quicker than trying up this data from one other supply, and Dicer enabled us to each enhance our server aspect tail latency and scale horizontally with out worrying a couple of storage answer. i.e this eliminated our single level of failure (Redis) and gave us quicker requests on the identical time!

Scaling Effectively

Although we understood how we may clear up a part of our issues, we nonetheless didn’t have a extremely good method to deal with the anticipated large quantity of requests. We needed to be extra environment friendly and smarter about this, relatively than throwing an enormous variety of servers on the drawback. In the end, we didn’t need one consumer request to translate into one request to the Ratelimit Service, as a result of at scale, tens of millions of requests to the Ratelimit Service can be costly.

What had been our choices? We thought by way of lots of them however a few of the choices we thought of had been

- Prefetching tokens on the consumer and attempting to reply requests regionally.

- Batching up a set of requests, sending, after which ready for a response to let the site visitors by way of.

- Solely sending a fraction of the requests (i.e. Sampling).

None of those choices had been notably enticing; Prefetching (a) has a whole lot of edge instances throughout initialization and when the tokens run out on the consumer or expire. Batching (b) provides pointless delay and reminiscence stress. And Sampling (c) would solely be appropriate for prime qps instances, however not usually, the place we truly may have low fee limits.

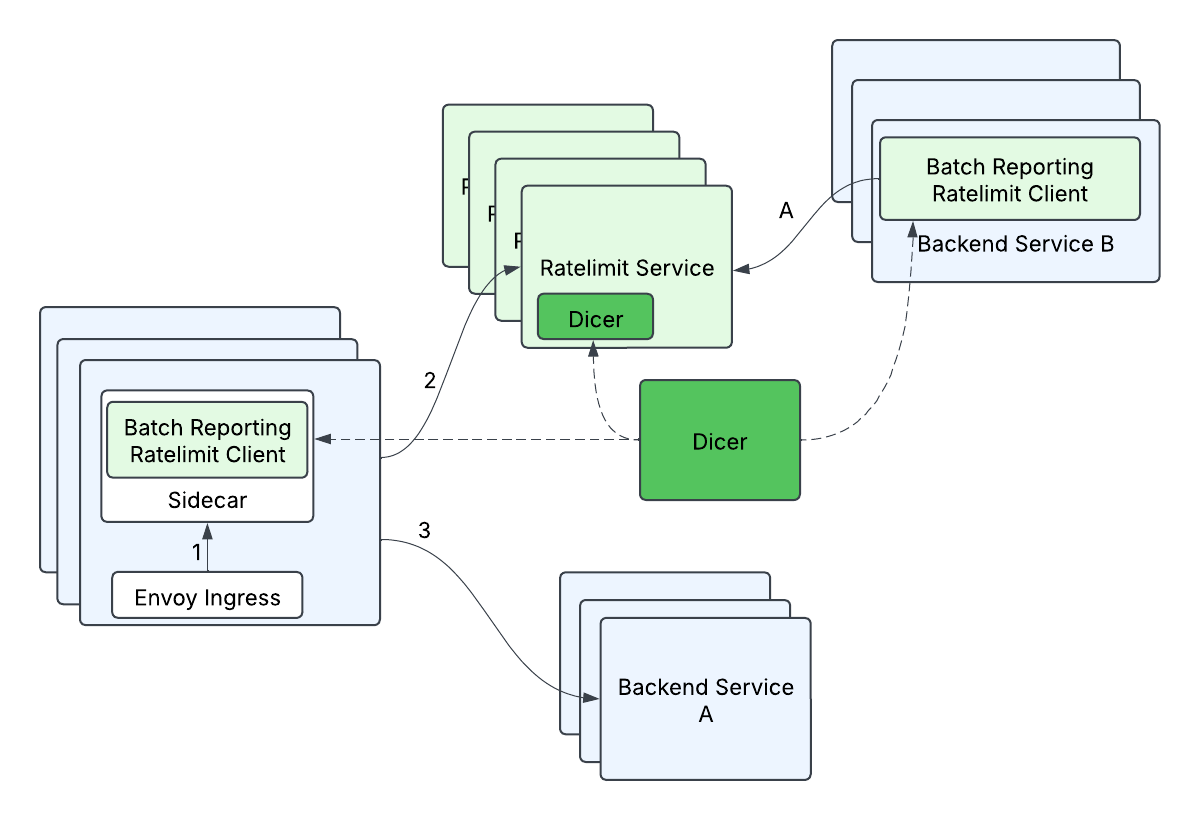

What we ended up designing is a mechanism we name batch-reporting, that mixes two rules: 1) Our shoppers wouldn’t make any distant calls within the vital fee restrict path, and a couple of) our shoppers would carry out optimistic fee limiting, the place by default requests can be let by way of except we already knew we wished to reject these explicit requests. We had been wonderful with not having strict ensures on fee limits as a tradeoff for scalability as a result of backend companies may tolerate some share of overlimit. At a excessive degree, batch-reporting does native relying on the consumer aspect, and periodically (e.g. 100ms) reviews again the counts to the server. The server would inform the consumer whether or not any of the entries wanted to be fee restricted.

The batch-reporting circulate regarded like this:

- The consumer information what number of requests it let by way of (outstandingHits) and what number of requests it rejected (rejectedHits)

- Periodically, a course of on the consumer will report the collected counts to the server.

- E.g.

KeyABC_SubKeyXYZ: outstandingHits=624, rejectedHits=857;KeyQWD_SubKeyJHP: outstandingHits=876, rejectedHits=0

- E.g.

- Server returns an array of responses

- KeyABC_SubKeyXYZ: rejectTilTimestamp=…, rejectionRate=…

KeyQWD_SubKeyJHP: rejectTilTimestamp=…, rejectionRate=…

- KeyABC_SubKeyXYZ: rejectTilTimestamp=…, rejectionRate=…

The advantages of this method had been enormous; we may have virtually zero-latency fee restrict calls, a 10x enchancment when in comparison with some tail latency calls, and switch spiky fee restrict site visitors into (comparatively) fixed qps site visitors! Mixed with the Dicer answer for in-memory fee limiting, it’s all easy crusing from right here, proper?

Satan’s within the Particulars

Although we had a good suggestion of the tip purpose, there was a whole lot of exhausting engineering work to really make it a actuality. Listed below are a few of the challenges we encountered alongside the way in which, and the way we solved them.

Excessive Fanout

As a result of we wanted to shard primarily based on the RateLimitGroup and dimension, this meant {that a} beforehand single RateLimitRequest with N dimensions may flip into N requests, i.e. a typical fanout request. This may very well be particularly problematic when mixed with batch-reporting, since a single batched request may fan-out to many (500+) completely different distant calls. If unaddressed, the client-side tail latency would enhance drastically (from ready on just one distant name to ready on 500+ distant calls), and the server-side load would enhance (from 1 distant request total to 500+ distant requests total). We optimized this by grouping descriptors by their Dicer assignments – descriptors assigned to the identical duplicate had been grouped right into a single fee restrict batch request and despatched to that corresponding vacation spot server. This helped to reduce the rise in client-side tail latencies (some enhance in tail latency is suitable since batched requests should not on the vital path however relatively processed in a background thread), and minimizes the elevated load to the server (every server duplicate will deal with at most 1 distant request from a consumer duplicate per batch cycle).

Enforcement Accuracy

As a result of the batch-reporting algorithm is each asynchronous and makes use of a time-based interval to report the up to date counts to the Ratelimit Service, it was very doable that we may permit too many requests by way of earlier than we may implement the speed restrict. Although we may outline these limits as fuzzy, we nonetheless wished to offer ensures that we’d go X% (e.g. 5%) over the restrict. Going excessively over the restrict may occur due to two essential causes:

- The site visitors throughout one batching window (e.g. 100ms) may exceed the speed restrict coverage.

- A lot of our use instances used the fixed-window algorithm and per-second fee limits. A property of the fixed-window algorithm is that every “window” begins contemporary (i.e. resets and begins from zero), so we may doubtlessly exceed the speed restrict each second, even throughout fixed (however excessive) site visitors!

The best way we fastened this was three-fold:

- We added a rejection-rate within the Ratelimit Service response, in order that we may use previous historical past to foretell when and the way a lot site visitors to reject on the consumer.

rejectionRate=(estimatedQps-rateLimitPolicy)/estimatedQpsThis makes use of the belief that the upcoming second’s site visitors goes to be much like the previous second’s site visitors. - We added defense-in-depth by including a client-side native fee limiter to chop off apparent instances of extreme site visitors instantly.

- As soon as we had autosharding in place, we carried out an in-memory token-bucket ratelimiting algorithm, which got here with some nice advantages:

- We may now permit managed bursts of site visitors

- Extra importantly, token-bucket “remembers” data throughout time intervals as a result of as an alternative of resetting each time interval just like the fixed-window algorithm, it will probably constantly rely, and even go damaging. Thus, if a buyer sends too many requests, we “keep in mind” how a lot over the restrict they went and may reject requests till the bucket refills to no less than zero. We weren’t in a position to help this token bucket in Redis beforehand as a result of token-bucket wanted pretty advanced operations in Redis, which might enhance our Redis latencies. Now, as a result of the token-bucket didn’t endure from amnesia each time interval, we may eliminate the rejection-rate mechanism.

- Token-bucket with out enabling further burst performance can approximate a sliding-window algorithm, which is a greater model of fixed-window that doesn’t endure from the “reset” drawback.

The advantages of the token-bucket method had been so nice that we ended up changing all our ratelimits to token bucket.

Rebuilding a Aircraft In-Flight

We knew the tip state that we wished to get to, however that required making two unbiased main adjustments to a vital service, neither of which had been assured to work nicely on their very own. And rolling these two adjustments out collectively was not an possibility, for each technical and danger administration causes. A number of the fascinating stuff we needed to do alongside the way in which:

- We constructed a localhost sidecar to our envoy ingress in order that we may apply each batch-reporting and auto-sharding, as a result of envoy is third celebration code we can not change.

- Earlier than we had in-memory fee limiting, we needed to batch writes to Redis by way of a Lua script so as to convey down the tail latency of batch-reporting requests, as a result of sending descriptors one after the other to Redis was too sluggish with all of the community round-trips, even when we had switched to batch execution.

- We constructed a site visitors simulation framework with many various site visitors patterns and fee restrict insurance policies, so we may consider our accuracy, efficiency, and scalability all through this transition.

Present State and Future Work

With the profitable rollout of each batch-reporting and in-memory token bucket fee limiting, we noticed drastic tail latency enhancements (as much as 10x in some instances!) with sub-linear progress in server aspect site visitors. Our inner service shoppers are notably comfortable that there’s no distant name after they make fee restrict calls, and that they’ve the liberty to scale independently of the Ratelimit Service.

The group has additionally been engaged on different thrilling areas, reminiscent of service mesh routing and zero-config overload safety, so maintain tuned for extra weblog posts! And Databricks is at all times trying for extra nice engineers who could make a distinction, we’d love so that you can be a part of us!