A protein located in the wrong part of a cell can contribute to several diseases, such as Alzheimer’s, cystic fibrosis, and cancer. But there are about 70,000 different proteins and protein variants in a single human cell, and since scientists can typically only test for a handful in one experiment, it is extremely costly and time-consuming to identify proteins’ locations manually.

A new generation of computational techniques seeks to streamline the process using machine-learning models that often leverage datasets containing thousands of proteins and their locations, measured across multiple cell lines. One of the largest such datasets is the Human Protein Atlas, which catalogs the subcellular behavior of over 13,000 proteins in more than 40 cell lines. But as enormous as it is, the Human Protein Atlas has only explored about 0.25 percent of all possible pairings of all proteins and cell lines within the database.

Now, researchers from MIT, Harvard University, and the Broad Institute of MIT and Harvard have developed a new computational approach that can efficiently explore the remaining uncharted space. Their method can predict the location of any protein in any human cell line, even when both protein and cell have never been tested before.

Their technique goes one step further than many AI-based methods by localizing a protein at the single-cell level, rather than as an averaged estimate across all the cells of a specific type. This single-cell localization could pinpoint a protein’s location in a specific cancer cell after treatment, for instance.

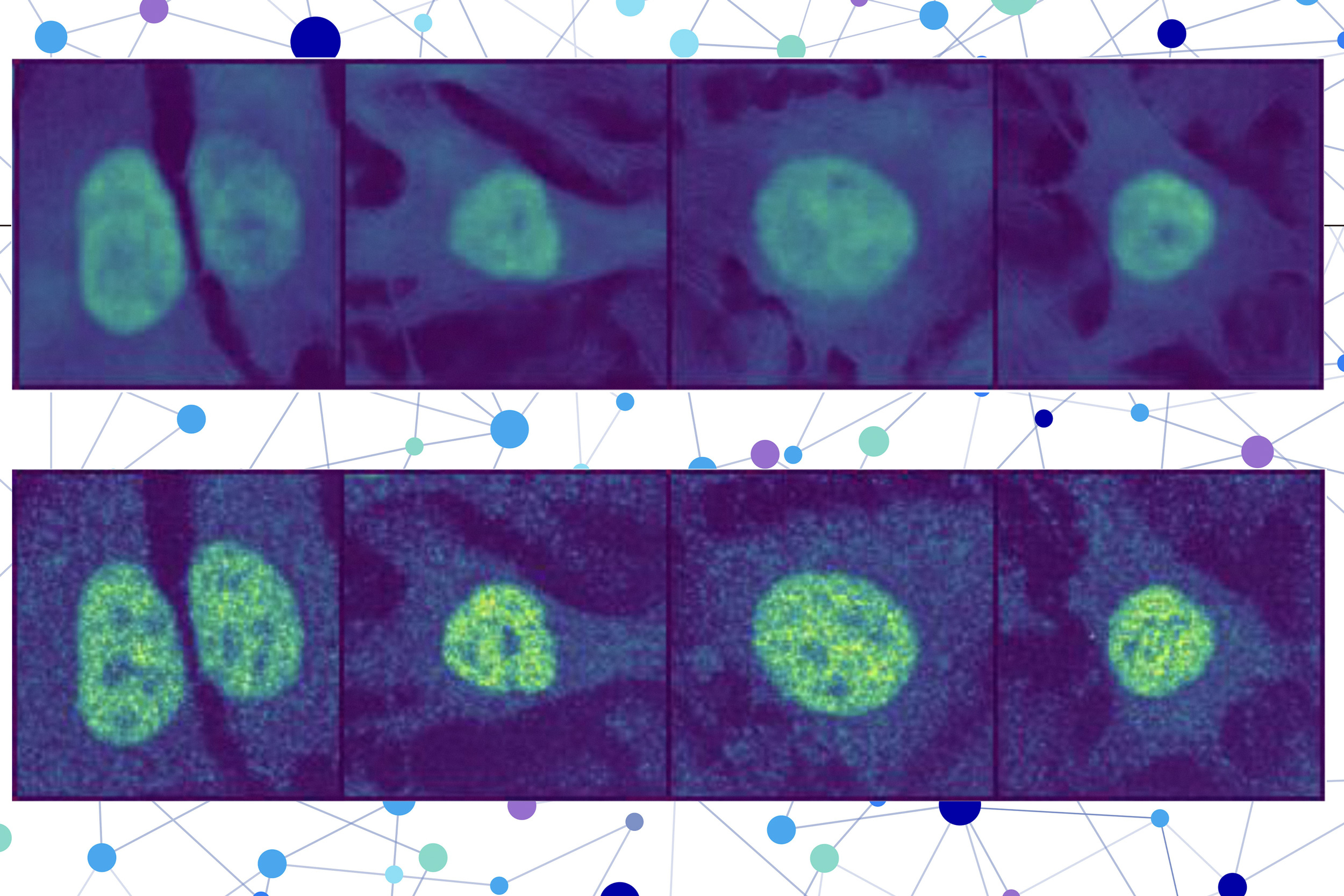

The researchers combined a protein language model with a special type of computer vision model to capture rich details about a protein and cell. In the end, the user receives an image of a cell with a highlighted portion indicating the model’s prediction of where the protein is located. Since a protein’s localization is indicative of its functional status, this technique could help researchers and clinicians more efficiently diagnose diseases or identify drug targets, while also enabling biologists to better understand how complex biological processes are related to protein localization.

“You could do these protein-localization experiments on a computer without having to touch any lab bench, hopefully saving yourself months of effort. While you would still need to verify the prediction, this technique could act like an initial screening of what to test for experimentally,” says Yitong Tseo, a graduate student in MIT’s Computational and Systems Biology program and co-lead author of a paper on this research.

Tseo is joined on the paper by co-lead author Xinyi Zhang, a graduate student in the Department of Electrical Engineering and Computer Science (EECS) and the Eric and Wendy Schmidt Center at the Broad Institute; Yunhao Bai of the Broad Institute; and senior authors Fei Chen, an assistant professor at Harvard and a member of the Broad Institute, and Caroline Uhler, the Andrew and Erna Viterbi Professor of Engineering in EECS and the MIT Institute for Data, Systems, and Society (IDSS), who is also director of the Eric and Wendy Schmidt Center and a researcher at MIT’s Laboratory for Information and Decision Systems (LIDS). The research appears today in Nature Methods.

Collaborating models

Many existing protein prediction models can only make predictions based on the protein and cell data on which they were trained, or are unable to pinpoint a protein’s location within a single cell.

To overcome these limitations, the researchers created a two-part method for prediction of unseen proteins’ subcellular location, called PUPS.

The first part uses a protein sequence model to capture the localization-determining properties of a protein and its 3D structure based on the chain of amino acids that forms it.

The second part incorporates an image inpainting model, which is designed to fill in missing parts of an image. This computer vision model looks at three stained images of a cell to gather information about the state of that cell, such as its type, individual features, and whether it is under stress.

PUPS joins the representations created by each model to predict where the protein is located within a single cell, using an image decoder to output a highlighted image that shows the predicted location.

“Different cells within a cell line exhibit different characteristics, and our model is able to understand that nuance,” Tseo says.

A user inputs the sequence of amino acids that form the protein and three cell stain images: one for the nucleus, one for the microtubules, and one for the endoplasmic reticulum. Then PUPS does the rest.
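The data flow described above (a sequence branch, an image branch, a joined representation, and a decoder that outputs an image) can be sketched in a few lines of Python. This is a toy illustration only, not the researchers’ code. The function names `embed_sequence`, `embed_cell`, `fuse`, `decode`, and `predict_localization` are hypothetical; amino-acid frequencies stand in for the protein language model, per-stain mean intensities stand in for the image encoder, concatenation is an assumed way of joining the two, and the decoder is an arbitrary placeholder that simply returns a map with the same shape as the input stains.

```python
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids
Image = List[List[float]]  # one grayscale stain, pixel intensities in [0, 1]


def embed_sequence(sequence: str) -> List[float]:
    """Toy stand-in for a protein language model: amino-acid frequencies."""
    sequence = sequence.upper()
    if not sequence or any(aa not in AMINO_ACIDS for aa in sequence):
        raise ValueError("sequence must be a non-empty string of standard amino acids")
    return [sequence.count(aa) / len(sequence) for aa in AMINO_ACIDS]


def embed_cell(nucleus: Image, microtubules: Image, er: Image) -> List[float]:
    """Toy stand-in for an image encoder: mean intensity of each stain."""
    stains = (nucleus, microtubules, er)
    shapes = {(len(img), len(img[0])) for img in stains}
    if len(shapes) != 1:
        raise ValueError("all three stain images must have the same shape")
    return [sum(map(sum, img)) / (len(img) * len(img[0])) for img in stains]


def fuse(protein_vec: List[float], cell_vec: List[float]) -> List[float]:
    """Join the two representations by concatenation (an assumed choice)."""
    return protein_vec + cell_vec


def decode(joint: List[float], nucleus: Image, microtubules: Image, er: Image) -> Image:
    """Placeholder decoder: scales the averaged stains by a scalar derived
    from the joint vector. A real decoder would be a trained network."""
    gain = sum(joint) / len(joint)
    return [
        [min(1.0, gain * (n + m + e) / 3.0) for n, m, e in zip(row_n, row_m, row_e)]
        for row_n, row_m, row_e in zip(nucleus, microtubules, er)
    ]


def predict_localization(sequence: str, nucleus: Image,
                         microtubules: Image, er: Image) -> Image:
    """Sequence + three stains in, one per-pixel map out."""
    joint = fuse(embed_sequence(sequence), embed_cell(nucleus, microtubules, er))
    return decode(joint, nucleus, microtubules, er)


# Made-up 2x2 stains and a made-up peptide, purely for illustration.
nucleus = [[0.9, 0.1], [0.2, 0.0]]
microtubules = [[0.1, 0.6], [0.5, 0.1]]
er = [[0.2, 0.3], [0.4, 0.1]]
heatmap = predict_localization("MKTAYIAK", nucleus, microtubules, er)
```

The only point of the sketch is the shape of the interface: one amino-acid string and three same-sized stain images go in, and a single map of the same size comes out.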

A deeper understanding

The researchers employed a few tricks during the training process to teach PUPS how to combine information from each model in such a way that it can make an educated guess on the protein’s location, even if it hasn’t seen that protein before.

For instance, they assign the model a secondary task during training: to explicitly name the compartment of localization, like the cell nucleus. This is done alongside the primary inpainting task to help the model learn more effectively.

A good analogy might be a teacher who asks their students to draw all the parts of a flower in addition to writing their names. This extra step was found to help the model improve its general understanding of the possible cell compartments.
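Training on a primary and a secondary task at once is commonly expressed as a weighted sum of two losses. The sketch below illustrates that general idea under stated assumptions; it is not the loss used in the paper. Mean squared error stands in for the inpainting objective, softmax cross-entropy stands in for the compartment-naming objective, and `aux_weight` is a hypothetical knob for how much the secondary task counts.

```python
import math
from typing import List, Sequence


def pixel_mse(predicted: Sequence[Sequence[float]],
              target: Sequence[Sequence[float]]) -> float:
    """Mean squared error over all pixels (stand-in for the inpainting loss)."""
    total, count = 0.0, 0
    for pred_row, target_row in zip(predicted, target):
        for p, t in zip(pred_row, target_row):
            total += (p - t) ** 2
            count += 1
    return total / count


def cross_entropy(logits: List[float], label_index: int) -> float:
    """Softmax cross-entropy for one example (stand-in for the
    compartment-naming loss), computed with the log-sum-exp trick."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[label_index]


def combined_loss(predicted_image, true_image,
                  compartment_logits: List[float], true_compartment: int,
                  aux_weight: float = 0.5) -> float:
    """Primary image loss plus a weighted secondary classification loss."""
    primary = pixel_mse(predicted_image, true_image)
    secondary = cross_entropy(compartment_logits, true_compartment)
    return primary + aux_weight * secondary
```

Because both terms are driven by the same underlying representation, an error in naming the compartment also pushes on the features used to draw the image, which is one plausible reading of why the extra task helps.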

In addition, the fact that PUPS is trained on proteins and cell lines at the same time helps it develop a deeper understanding of where in a cell image proteins tend to localize.

PUPS can even understand, on its own, how different parts of a protein’s sequence contribute separately to its overall localization.

“Most other methods usually require you to have a stain of the protein first, so you’ve already seen it in your training data. Our approach is unique in that it can generalize across proteins and cell lines at the same time,” Zhang says.

Because PUPS can generalize to unseen proteins, it can capture changes in localization driven by unique protein mutations that aren’t included in the Human Protein Atlas.

The researchers verified that PUPS could predict the subcellular location of new proteins in unseen cell lines by conducting lab experiments and comparing the results. In addition, when compared to a baseline AI method, PUPS exhibited on average less prediction error across the proteins they tested.

In the future, the researchers want to enhance PUPS so the model can understand protein-protein interactions and make localization predictions for multiple proteins within a cell. In the longer term, they want to enable PUPS to make predictions in living human tissue, rather than cultured cells.

This research is funded by the Eric and Wendy Schmidt Center at the Broad Institute, the National Institutes of Health, the National Science Foundation, the Burroughs Wellcome Fund, the Searle Scholars Foundation, the Harvard Stem Cell Institute, the Merkin Institute, the Office of Naval Research, and the Department of Energy.