In AI-driven applications, complex tasks often need to be broken down into multiple subtasks. However, in many real-world scenarios the exact subtasks cannot be determined in advance. For example, in automated code generation, the number of files to be modified and the specific changes needed depend entirely on the given request. Traditional parallelized workflows struggle with this unpredictability because they require tasks to be predefined upfront. This rigidity limits the adaptability of AI systems.

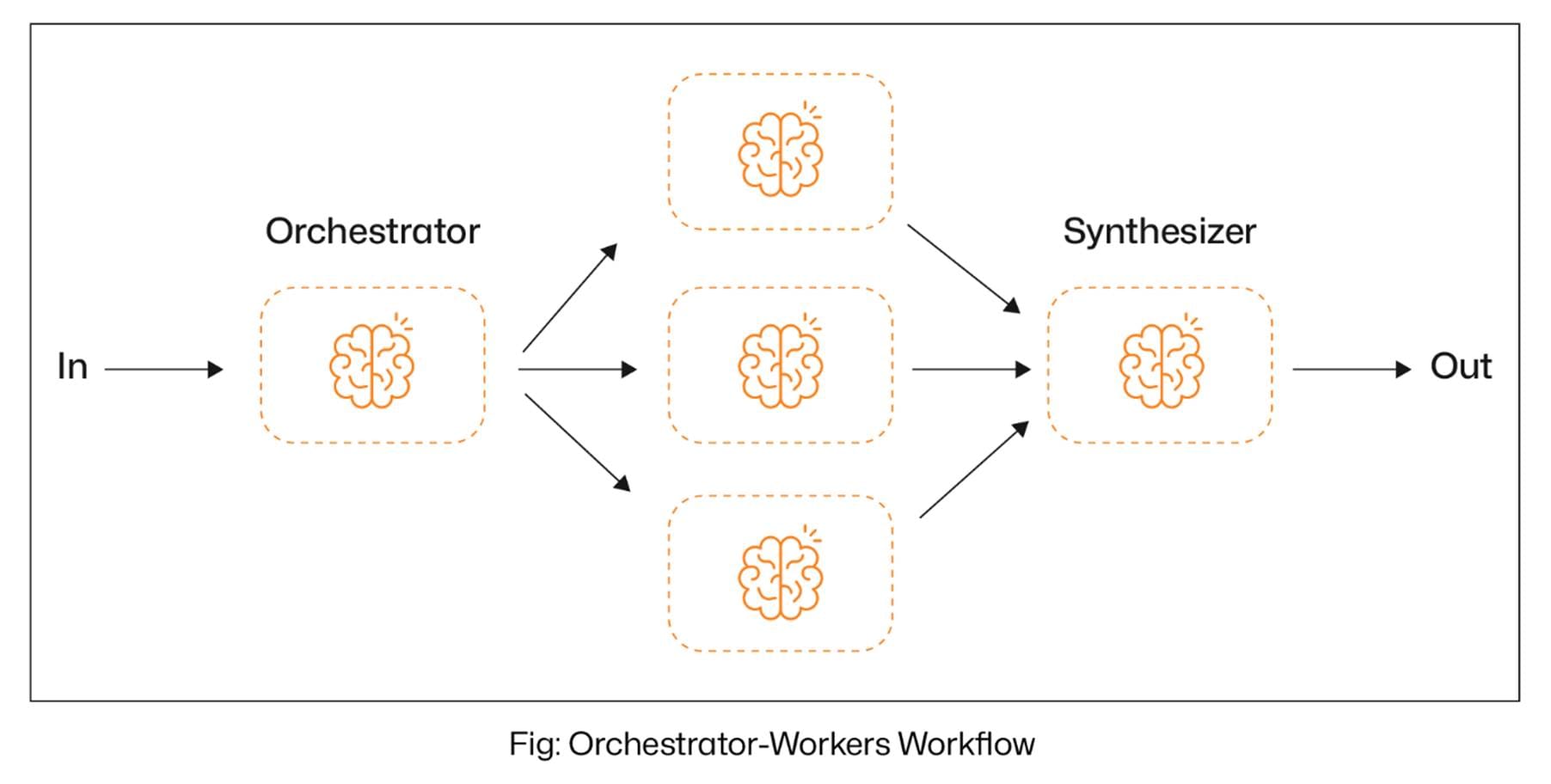

The Orchestrator-Workers Workflow Agents in LangGraph introduce a more flexible and intelligent approach to this challenge. Instead of relying on static task definitions, a central orchestrator LLM dynamically analyzes the input, determines the required subtasks, and delegates them to specialized worker LLMs. The orchestrator then collects and synthesizes the outputs, ensuring a cohesive final result. These Gen AI services enable real-time decision-making, adaptive task management, and higher accuracy, so that complex workflows are handled with greater agility and precision.

With that in mind, let's dive into what the Orchestrator-Workers Workflow Agent in LangGraph is all about.

Inside LangGraph's Orchestrator-Workers Agent: Smarter Task Distribution

The Orchestrator-Workers Workflow Agent in LangGraph is designed for dynamic task delegation. In this setup, a central orchestrator LLM analyzes the input, breaks it down into smaller subtasks, and assigns them to specialized worker LLMs. Once the worker agents complete their tasks, the orchestrator synthesizes their outputs into a cohesive final result.
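
The flow described above can be sketched in plain Python before bringing in any framework. This is a minimal illustration of the pattern, not LangGraph code: the functions `orchestrate`, `work`, `synthesize`, and `run` are hypothetical names, and the "LLM calls" are replaced by simple string stubs so the sketch runs on its own.

```python
from concurrent.futures import ThreadPoolExecutor


def orchestrate(topic: str) -> list[str]:
    """Hypothetical planner: a real orchestrator would ask an LLM for this list."""
    plan = ["Introduction", "Key concepts"]
    # The number of subtasks depends on the input, not on a fixed workflow.
    if "rag" in topic.lower():
        plan.append("Retrieval pipeline")
    plan.append("Conclusion")
    return plan


def work(topic: str, section: str) -> str:
    """Hypothetical worker: a real worker would call an LLM to write the section."""
    return f"## {section}\nDraft text about {topic}."


def synthesize(parts: list[str]) -> str:
    """Combine the worker outputs, in plan order, into one document."""
    return "\n\n".join(parts)


def run(topic: str) -> str:
    plan = orchestrate(topic)
    # One worker per planned subtask; map() returns results in plan order.
    with ThreadPoolExecutor(max_workers=len(plan)) as pool:
        parts = list(pool.map(lambda section: work(topic, section), plan))
    return synthesize(parts)


if __name__ == "__main__":
    print(run("agentic RAG"))
```

The point of the sketch is that the size of the worker pool is derived from the plan at run time, which is the property a predefined parallel workflow lacks.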

The main advantages of using the Orchestrator-Workers workflow agent are:

- Adaptive Task Handling: Subtasks are not predefined but determined dynamically, making the workflow highly flexible.

- Scalability: The orchestrator can efficiently manage and scale multiple worker agents as needed.

- Improved Accuracy: The system produces more precise and context-aware results by dynamically delegating tasks to specialized workers.

- Optimized Efficiency: Tasks are distributed efficiently, preventing bottlenecks and enabling parallel execution where possible.

Let's now look at an example. We will build an orchestrator-worker workflow agent that uses the user's input as a blog topic, such as "write a blog on agentic RAG." The orchestrator analyzes the topic and plans the various sections of the blog, including introduction, concepts and definitions, current applications, technological advancements, challenges and limitations, and more. Based on this plan, specialized worker nodes are dynamically assigned to each section to generate content in parallel. Finally, the synthesizer aggregates the outputs from all workers to deliver a cohesive final result.

First, we import the necessary libraries.

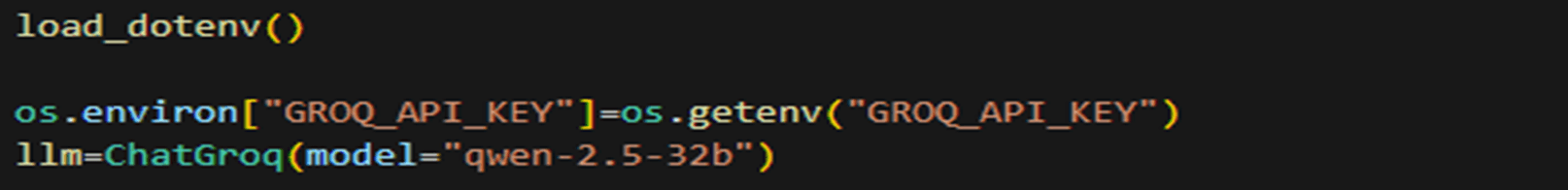

Now we need to load the LLM. For this blog, we will use the qwen2.5-32b model from Groq.

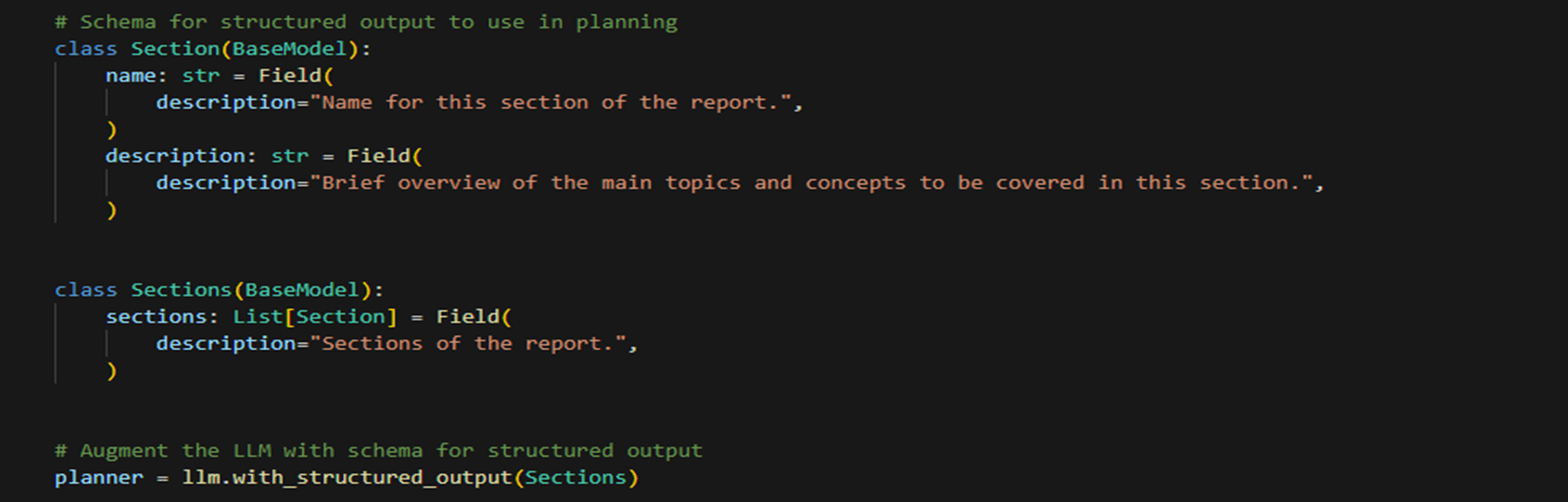

Now, let's build a Pydantic class to ensure that the LLM produces structured output. In the Pydantic class, we specify that the LLM generates a list of sections, each containing the section name and description. These sections will later be handed to workers so they can work on each section in parallel.

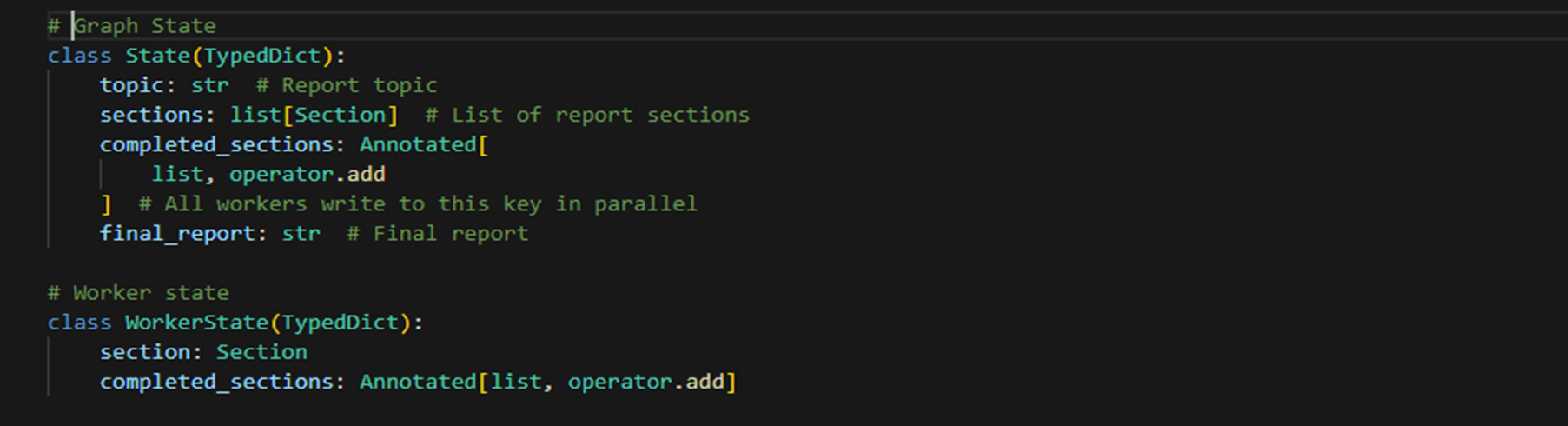
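
To make the shape of that structured output concrete, here is a dependency-free sketch that uses standard-library dataclasses as a stand-in for the Pydantic classes. The names `Section`, `Sections`, and `parse_plan` are assumptions for illustration, and the validation rules are deliberately minimal; Pydantic would perform the equivalent checks declaratively.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Section:
    name: str          # section title
    description: str   # what the section should cover


@dataclass(frozen=True)
class Sections:
    sections: tuple[Section, ...]


def parse_plan(raw: str) -> Sections:
    """Validate a JSON plan shaped like {"sections": [{"name": ..., "description": ...}]}."""
    data = json.loads(raw)
    items = data.get("sections") if isinstance(data, dict) else None
    if not isinstance(items, list) or not items:
        raise ValueError("plan must contain a non-empty 'sections' list")
    parsed = []
    for item in items:
        if not isinstance(item, dict):
            raise ValueError(f"invalid section entry: {item!r}")
        name, description = item.get("name"), item.get("description")
        if not isinstance(name, str) or not isinstance(description, str):
            raise ValueError(f"invalid section entry: {item!r}")
        parsed.append(Section(name=name, description=description))
    return Sections(sections=tuple(parsed))
```

Rejecting a malformed plan at this boundary means every worker downstream can rely on receiving a section with both a name and a description.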

Next, we create the state classes that represent the graph state and hold its shared variables. We'll define two state classes: one for the entire graph state and one for the worker state.
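
As a rough sketch of what such state classes can look like, the block below uses only the standard library. LangGraph state schemas are commonly written as `TypedDict` classes in which a key annotated with a reducer such as `operator.add` is combined with incoming updates instead of being overwritten. The field names (`topic`, `sections`, `completed_sections`, `final_report`) are assumptions, and `apply_update` is a hypothetical helper that only imitates the merge behaviour for illustration; it is not part of LangGraph.

```python
import operator
from typing import Annotated, TypedDict, get_type_hints


class State(TypedDict):
    topic: str                                         # user-supplied blog topic
    sections: list[dict]                               # plan produced by the orchestrator
    completed_sections: Annotated[list, operator.add]  # workers append here
    final_report: str                                  # written by the synthesizer


class WorkerState(TypedDict):
    section: dict                                      # the one section this worker owns
    completed_sections: Annotated[list, operator.add]  # same key, same reducer


def apply_update(state: dict, update: dict, schema: type) -> dict:
    """Illustrative merge: combine keys that carry a reducer, overwrite the rest."""
    hints = get_type_hints(schema, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        reducers = getattr(hints.get(key), "__metadata__", ())
        if reducers and key in merged:
            merged[key] = reducers[0](merged[key], value)
        else:
            merged[key] = value
    return merged
```

Because `completed_sections` carries a reducer in both classes, updates from several workers accumulate in one list rather than replacing each other.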

Now we can define the nodes: the orchestrator node, the worker node, the synthesizer node, and the conditional node.

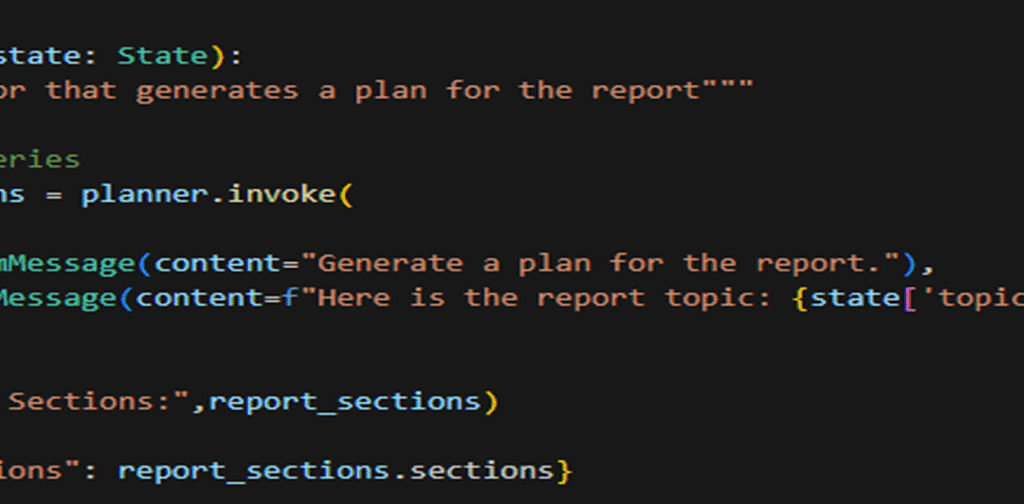

Orchestrator node: This node is responsible for generating the sections of the blog.

Worker node: This node is used by the workers to generate content for the different sections.

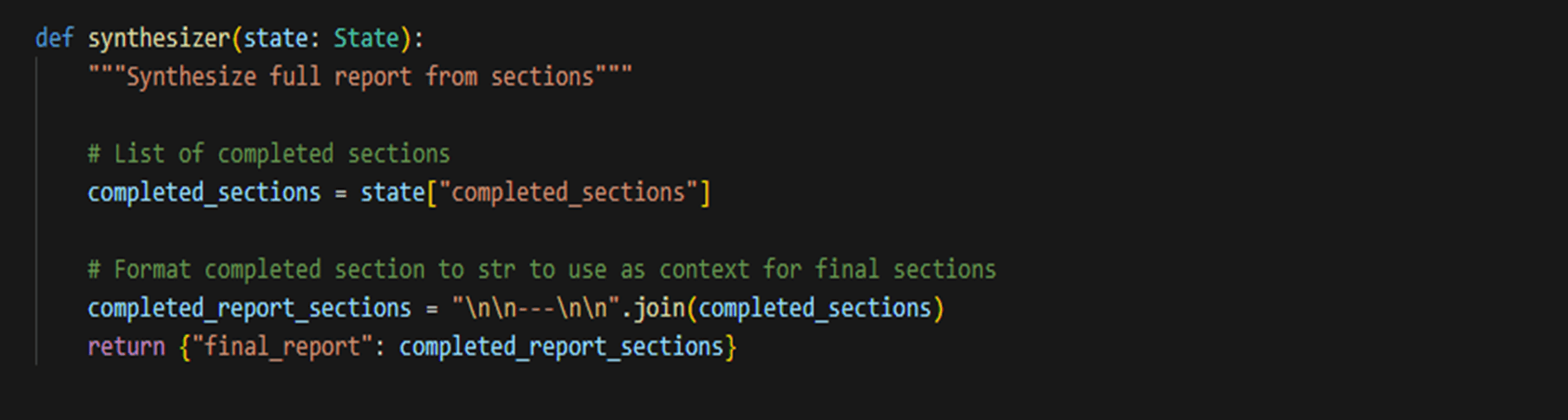

Synthesizer node: This node takes each worker's output and combines them to generate the final output.

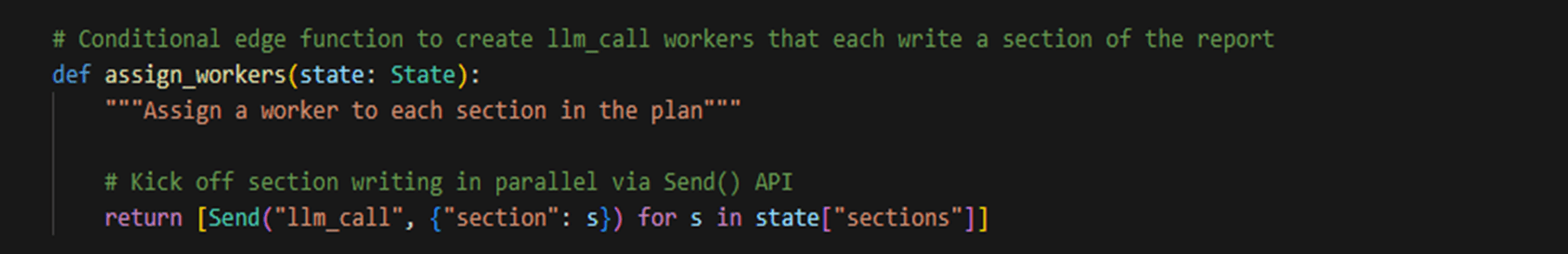

Conditional node to assign workers: This conditional node is responsible for assigning the different sections of the blog to different workers.

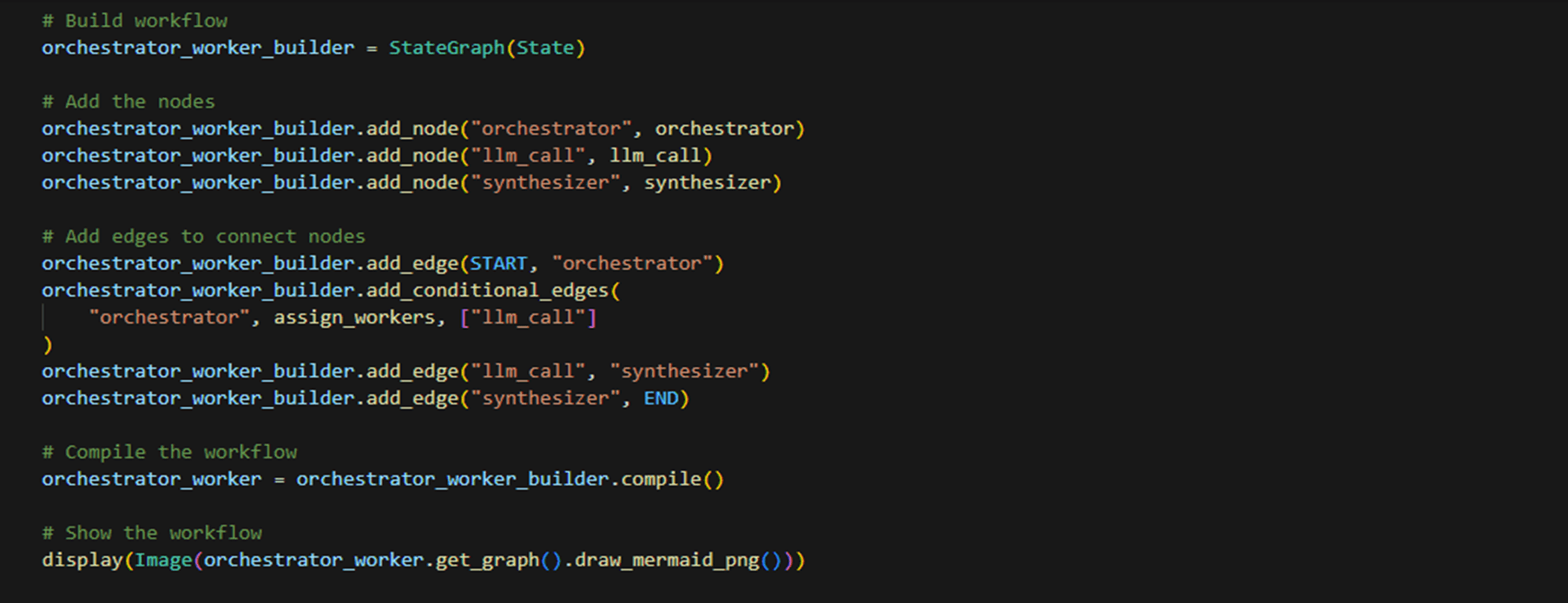
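
The fan-out performed by this conditional step can be sketched without the framework. In LangGraph the dispatch is typically expressed with `Send` objects returned from a conditional edge function; the `Dispatch` class below is only a hypothetical stand-in for that idea, and the node name `"worker"` and the `sections` key are assumptions.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Dispatch:
    """Hypothetical stand-in for a 'send this payload to that node' instruction."""
    node: str
    payload: dict[str, Any]


def assign_workers(state: dict[str, Any]) -> list[Dispatch]:
    """Create one dispatch per planned section, so the worker count follows the plan."""
    return [
        Dispatch(node="worker", payload={"section": section})
        for section in state["sections"]
    ]
```

Each payload carries only the section its worker needs, which keeps the workers independent of one another and of the rest of the graph state.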

Finally, let's build the graph.

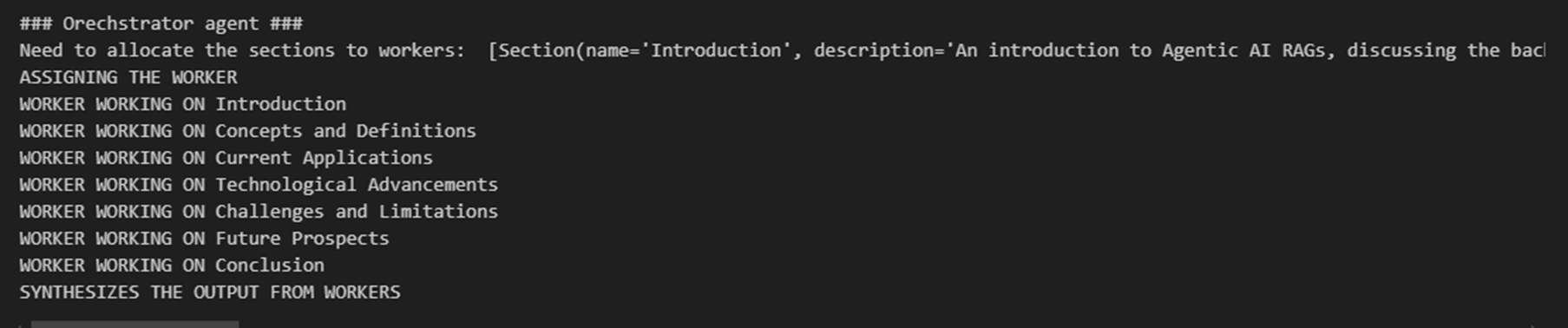

Now, when you invoke the graph with a topic, the orchestrator node breaks it down into sections, and the conditional node evaluates the number of sections and dynamically assigns workers. For example, if there are two sections, two workers are created. Each worker node then generates content for its assigned section in parallel. Finally, the synthesizer node combines the outputs into a cohesive blog, ensuring an efficient and organized content creation process.

There are other use cases that we can solve with the Orchestrator-worker workflow agent as well. Some of them are listed below:

- Automated Test Case Generation – Streamlining unit testing by automatically generating code-based test cases.

- Code Quality Assurance – Ensuring consistent code standards by integrating automated test generation into CI/CD pipelines.

- Software Documentation – Generating UML and sequence diagrams for better project documentation and understanding.

- Legacy Code Refactoring – Assisting in modernizing and testing legacy applications by auto-generating test coverage.

- Accelerating Development Cycles – Reducing manual effort in writing tests, allowing developers to focus on feature development.

The Orchestrator-Workers workflow agent not only boosts efficiency and accuracy but also enhances code maintainability and collaboration across teams.

Final Lines

To conclude, the Orchestrator-Worker Workflow Agent in LangGraph represents a forward-thinking and scalable approach to managing complex, unpredictable tasks. By using a central orchestrator to analyze inputs and dynamically break them into subtasks, the system assigns each task to specialized worker nodes that operate in parallel.

A synthesizer node then integrates these outputs, ensuring a cohesive final result. Its use of state classes for managing shared variables and a conditional node for dynamically assigning workers supports scalability and adaptability.

This flexible architecture not only improves efficiency and accuracy but also adapts to varying workloads by allocating resources where they're needed most. In short, its versatile design paves the way for improved automation across diverse applications, ultimately fostering greater collaboration and accelerating development cycles in today's dynamic technological landscape.