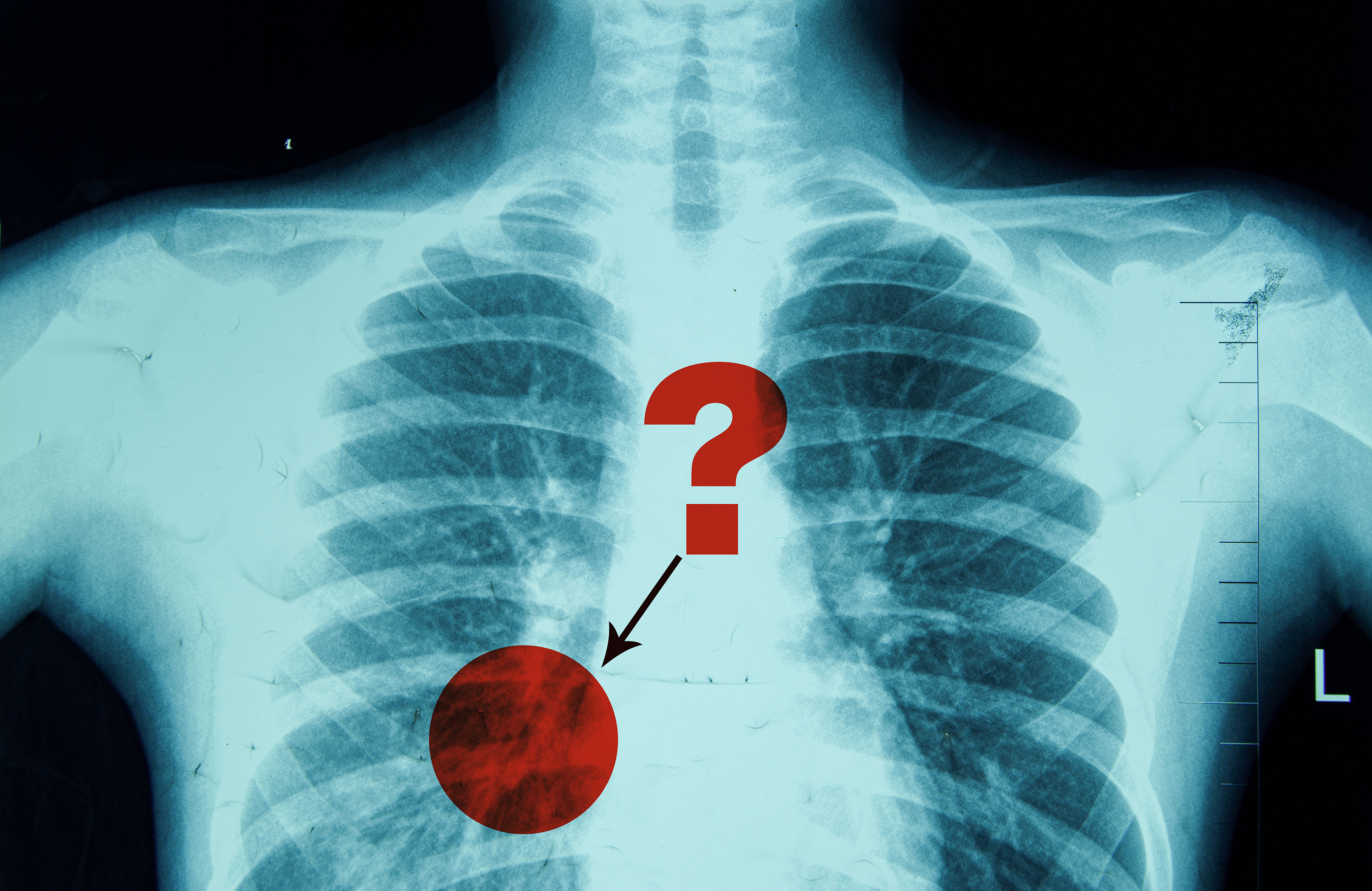

Because of the inherent ambiguity in medical images like X-rays, radiologists often use words like “may” or “likely” when describing the presence of a certain pathology, such as pneumonia.

But do the words radiologists use to express their confidence level accurately reflect how often a particular pathology occurs in patients? A new study shows that when radiologists express confidence about a certain pathology using a phrase like “very likely,” they tend to be overconfident, and vice versa when they express less confidence using a word like “possibly.”

Using clinical data, a multidisciplinary team of MIT researchers, in collaboration with researchers and clinicians at hospitals affiliated with Harvard Medical School, created a framework to quantify how reliable radiologists are when they express certainty using natural language terms.

They used this approach to provide clear suggestions that help radiologists choose certainty phrases that would improve the reliability of their clinical reporting. They also showed that the same technique can effectively measure and improve the calibration of large language models by better aligning the words models use to express confidence with the accuracy of their predictions.

By helping radiologists more accurately describe the likelihood of certain pathologies in medical images, this new framework could improve the reliability of critical clinical information.

“The words radiologists use are important. They affect how doctors intervene, in terms of their decision making for the patient. If these practitioners can be more reliable in their reporting, patients will be the ultimate beneficiaries,” says Peiqi Wang, an MIT graduate student and lead author of a paper on this research.

He is joined on the paper by senior author Polina Golland, a Sunlin and Priscilla Chou Professor of Electrical Engineering and Computer Science (EECS), a principal investigator in the MIT Computer Science and Artificial Intelligence Laboratory (CSAIL), and the leader of the Medical Vision Group; as well as Barbara D. Lam, a clinical fellow at the Beth Israel Deaconess Medical Center; Yingcheng Liu, an MIT graduate student; Ameneh Asgari-Targhi, a research fellow at Massachusetts General Brigham (MGB); Rameswar Panda, a research staff member at the MIT-IBM Watson AI Lab; William M. Wells, a professor of radiology at MGB and a research scientist in CSAIL; and Tina Kapur, an assistant professor of radiology at MGB. The research will be presented at the International Conference on Learning Representations.

Decoding uncertainty in phrases

A radiologist writing a report about a chest X-ray might say the image shows a “possible” pneumonia, which is an infection that inflames the air sacs in the lungs. In that case, a doctor could order a follow-up CT scan to confirm the diagnosis.

However, if the radiologist writes that the X-ray shows a “likely” pneumonia, the doctor might begin treatment immediately, such as by prescribing antibiotics, while still ordering additional tests to assess severity.

Trying to measure the calibration, or reliability, of ambiguous natural language terms like “possibly” and “likely” presents many challenges, Wang says.

Existing calibration methods typically rely on the confidence score provided by an AI model, which represents the model’s estimated likelihood that its prediction is correct.

For instance, a weather app might predict an 83 percent chance of rain tomorrow. That model is well-calibrated if, across all instances where it predicts an 83 percent chance of rain, it rains approximately 83 percent of the time.
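The weather example can be checked mechanically. The following is a minimal sketch, not code from the study: the function name and the toy data are hypothetical, and it simply groups forecasts by their stated probability and compares each group with how often the event actually occurred.

```python
from collections import defaultdict


def observed_frequency_by_forecast(forecasts, outcomes):
    """For each distinct forecast probability, return the fraction of
    events with that forecast that actually occurred."""
    counts = defaultdict(lambda: [0, 0])  # forecast -> [occurred, total]
    for p, happened in zip(forecasts, outcomes):
        counts[p][0] += int(happened)
        counts[p][1] += 1
    return {p: occurred / total for p, (occurred, total) in counts.items()}


# Hypothetical toy data: 100 days with an 83 percent rain forecast,
# of which 83 turned out rainy.
forecasts = [0.83] * 100
outcomes = [True] * 83 + [False] * 17

freq = observed_frequency_by_forecast(forecasts, outcomes)
# Calibration gap for the 0.83 forecast: stated probability vs. observed rate.
gap = abs(freq[0.83] - 0.83)
```

A gap near zero for every forecast value is what "well-calibrated" means in this numeric setting; a forecaster whose 83 percent days were rainy only 60 percent of the time would show a gap of about 0.23.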

“But humans use natural language, and if we map these phrases to a single number, it is not an accurate description of the real world. If a person says an event is ‘likely,’ they aren’t necessarily thinking of the exact probability, such as 75 percent,” Wang says.

Rather than trying to map certainty phrases to a single percentage, the researchers’ approach treats them as probability distributions. A distribution describes the range of possible values and their likelihoods; think of the classic bell curve in statistics.

“This captures more nuances of what each word means,” Wang adds.

Assessing and enhancing calibration

The researchers leveraged prior work that surveyed radiologists to obtain probability distributions that correspond to each diagnostic certainty phrase, ranging from “very likely” to “consistent with.”

For instance, since more radiologists believe the phrase “consistent with” means a pathology is present in a medical image, its probability distribution climbs sharply to a high peak, with most values clustered around the 90 to 100 percent range.

In contrast, the phrase “may represent” conveys greater uncertainty, leading to a broader, bell-shaped distribution centered around 50 percent.
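One way to picture the two shapes described above is with Beta distributions, which live on the 0 to 1 probability range. This is only an illustrative sketch: the Beta family and the parameter values below are assumptions chosen to mimic the described shapes, not the survey-derived distributions the researchers used.

```python
import math


def beta_pdf(x, a, b):
    """Density of a Beta(a, b) distribution at x, for 0 < x < 1."""
    log_norm = math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
    return math.exp(log_norm + (a - 1) * math.log(x) + (b - 1) * math.log(1 - x))


def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)


def beta_std(a, b):
    """Standard deviation of a Beta(a, b) distribution."""
    return math.sqrt(a * b / ((a + b) ** 2 * (a + b + 1)))


# Hypothetical parameters, picked only to resemble the shapes in the text.
PHRASES = {
    "consistent with": (18.0, 2.0),  # sharply peaked near the top of the range
    "may represent": (5.0, 5.0),     # broad and symmetric around 0.5
}

for phrase, (a, b) in PHRASES.items():
    print(
        f"{phrase}: mean={beta_mean(a, b):.2f}, "
        f"std={beta_std(a, b):.2f}, "
        f"density at 0.5={beta_pdf(0.5, a, b):.3f}"
    )
```

Under these assumed parameters, the first phrase has a high mean and a small spread, while the second has a mean of one half and a noticeably larger spread, which is the contrast a single number per phrase would hide.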

Typical methods evaluate calibration by comparing how well a model’s predicted probability scores align with the actual number of positive outcomes.

The researchers’ approach follows the same general framework but extends it to account for the fact that certainty phrases represent probability distributions rather than probabilities.
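To make the idea of comparing a distribution, rather than a single probability, against outcomes more concrete, here is a deliberately simplified sketch. It is not the measure defined in the paper: the `expected_gap` function, the discretized distribution, and the outcome data are all hypothetical, and the score is just the average distance between the observed rate and a probability drawn from the phrase's distribution.

```python
def expected_gap(distribution, observed_rate):
    """Average |p - observed_rate| when p is drawn from a discrete
    distribution given as {probability_value: weight}."""
    total = sum(distribution.values())
    return sum(w * abs(p - observed_rate) for p, w in distribution.items()) / total


# Hypothetical discretized distribution for a single certainty phrase.
likely = {0.6: 0.2, 0.7: 0.5, 0.8: 0.3}

# Hypothetical outcomes for reports that used the phrase
# (1 = pathology later confirmed, 0 = not confirmed).
confirmed = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]
observed_rate = sum(confirmed) / len(confirmed)

gap = expected_gap(likely, observed_rate)
```

Collapsing the phrase to its mean would compare one number with the observed rate; the sketch instead penalizes every part of the distribution that sits away from what actually happened, so a phrase with a wide spread is treated differently from a narrow one with the same mean.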

To improve calibration, the researchers formulated and solved an optimization problem that adjusts how often certain phrases are used, to better align confidence with reality.

They derived a calibration map that suggests certainty terms a radiologist should use to make the reports more accurate for a specific pathology.

“Perhaps, for this dataset, if every time the radiologist said pneumonia was ‘present,’ they changed the phrase to ‘likely present’ instead, then they would become better calibrated,” Wang explains.
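The notion of a calibration map can be illustrated with a toy stand-in. The sketch below does not reproduce the researchers' optimization problem; it uses a much cruder nearest-mean rule, and the phrase means, confirmation rates, and the `suggest_phrase` helper are all hypothetical.

```python
def suggest_phrase(observed_rate, phrase_means):
    """Return the phrase whose assumed mean probability is closest to the
    rate at which the pathology was actually confirmed."""
    return min(phrase_means, key=lambda ph: abs(phrase_means[ph] - observed_rate))


# Hypothetical mean probabilities for three certainty phrases.
phrase_means = {
    "possibly present": 0.40,
    "likely present": 0.75,
    "present": 0.97,
}

# Hypothetical confirmation rates, keyed by the phrase the radiologist used.
observed = {"present": 0.78, "possibly present": 0.35}

# Map each phrase in use to the phrase that better matches the outcomes.
calibration_map = {
    used: suggest_phrase(rate, phrase_means) for used, rate in observed.items()
}
```

With these made-up numbers, reports that said "present" were confirmed far less often than the phrase implies, so the map points to "likely present" instead, while "possibly present" maps to itself.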

When the researchers used their framework to evaluate clinical reports, they found that radiologists were generally underconfident when diagnosing common conditions like atelectasis, but overconfident with more ambiguous conditions like infection.

In addition, the researchers evaluated the reliability of language models using their method, providing a more nuanced representation of confidence than classical methods that rely on confidence scores.

“A lot of times, these models use phrases like ‘certainly.’ But because they are so confident in their answers, it does not encourage people to verify the correctness of the statements themselves,” Wang adds.

In the future, the researchers plan to continue collaborating with clinicians in the hopes of improving diagnoses and treatment. They are working to expand their study to include data from abdominal CT scans.

In addition, they are interested in studying how receptive radiologists are to calibration-improving suggestions and whether they can mentally adjust their use of certainty phrases effectively.

“Expression of diagnostic certainty is a crucial aspect of the radiology report, as it influences significant management decisions. This study takes a novel approach to analyzing and calibrating how radiologists express diagnostic certainty in chest X-ray reports, offering feedback on term usage and associated outcomes,” says Atul B. Shinagare, associate professor of radiology at Harvard Medical School, who was not involved with this work. “This approach has the potential to improve radiologists’ accuracy and communication, which will help improve patient care.”

The work was funded, in part, by a Takeda Fellowship, the MIT-IBM Watson AI Lab, the MIT CSAIL Wistrom Program, and the MIT Jameel Clinic.