Imagine having a personal research assistant who not only understands your question but also intelligently decides how to find answers: diving into your document library for some queries while generating creative responses for others. This is what is possible with an Agentic RAG system built with LlamaIndex TypeScript.

Whether you want to create a literature review system, a technical documentation assistant, or any knowledge-intensive application, the approaches outlined in this blog post provide a practical foundation you can build on. This post takes you on a hands-on journey through building such a system using LlamaIndex TypeScript, from setting up local models to implementing specialized tools that work together to deliver helpful responses.

Learning Objectives

- Understand the fundamentals of Agentic RAG using LlamaIndex TypeScript for building intelligent agents.

- Learn how to set up the development environment and install the necessary dependencies.

- Explore tool creation in LlamaIndex, including addition and division operations.

- Implement a math agent using LlamaIndex TypeScript for executing queries.

- Execute and test the agent to process mathematical operations efficiently.

This article was published as a part of the Data Science Blogathon.

Why Use TypeScript?

TypeScript offers significant advantages for building LLM-based AI applications:

- Type Safety: TypeScript’s static typing catches errors during development rather than at runtime.

- Better IDE Support: Auto-completion and intelligent suggestions make development faster.

- Improved Maintainability: Type definitions make code more readable and self-documenting.

- Seamless JavaScript Integration: TypeScript works with existing JavaScript libraries.

- Scalability: TypeScript’s structure helps manage complexity as your RAG application grows.

- Frameworks: Vite, Next.js, and other well-designed, robust web frameworks connect seamlessly with TypeScript, which makes building AI-based web applications easy and scalable.

Benefits of LlamaIndex

LlamaIndex provides a powerful framework for building LLM-based AI applications.

- Simplified Data Ingestion: Easy methods to load and process documents on the device or in the cloud using LlamaParse.

- Vector Storage: Built-in support for embedding and retrieving semantic information, with integrations for industry-standard databases such as ChromaDB, Milvus, Weaviate, and pgvector.

- Tool Integration: A framework for creating and managing multiple specialized tools.

- Agent Plugging: You can build or plug in third-party agents easily with LlamaIndex.

- Query Engine Flexibility: Customizable query processing for different use cases.

- Persistence Support: Ability to save and load indexes for efficient reuse.

Why LlamaIndex TypeScript?

LlamaIndex is a popular AI framework for connecting custom data sources to large language models. While originally implemented in Python, LlamaIndex now offers a TypeScript version that brings its capabilities to the JavaScript ecosystem. This is particularly valuable for:

- Web applications and Node.js services.

- JavaScript/TypeScript developers who want to stay within their preferred language.

- Projects that need to run in browser environments.

What is Agentic RAG?

Before diving into implementation, let’s clarify what Agentic RAG means.

- RAG (Retrieval-Augmented Generation) is a technique that enhances language model outputs by first retrieving relevant information from a knowledge base, and then using that information to generate more accurate, factual responses.

- Agentic systems involve AI that can decide which actions to take based on user queries, effectively functioning as an intelligent assistant that chooses appropriate tools to fulfill requests.

An Agentic RAG system combines these approaches, creating an AI assistant that can retrieve information from a knowledge base and use other tools when appropriate. Based on the nature of the user’s question, it decides whether to use its built-in knowledge, query the vector database, or call external tools.
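To make the routing idea concrete, here is a minimal sketch in plain TypeScript with no LLM involved. The `ToyTool` type, the two tool names, and the keyword rules are hypothetical stand-ins chosen for illustration; in a real agent the language model makes this choice by reading the tool descriptions.

```typescript
// A toy tool: a name, a description, and a function to run.
type ToyTool = {
  name: string;
  description: string;
  run: (query: string) => string;
};

// Hypothetical stand-ins for a retriever-backed tool and a calculator tool.
const knowledgeBaseTool: ToyTool = {
  name: "knowledge_base",
  description: "Looks up passages in an indexed document collection",
  run: (query) => `retrieved passages for "${query}"`,
};

const calculatorTool: ToyTool = {
  name: "calculator",
  description: "Evaluates simple arithmetic",
  run: (query) => `computed result for "${query}"`,
};

// Naive keyword routing. A real agent lets the LLM pick a tool
// by reading the tool descriptions instead of using fixed rules.
function chooseTool(query: string, tools: ToyTool[]): ToyTool | undefined {
  if (/\d+\s*[-+*\/]\s*\d+/.test(query)) {
    return tools.find((t) => t.name === "calculator");
  }
  if (/essay|document/i.test(query)) {
    return tools.find((t) => t.name === "knowledge_base");
  }
  return undefined; // no tool fits: answer from built-in knowledge
}

function answer(query: string): string {
  const selected = chooseTool(query, [knowledgeBaseTool, calculatorTool]);
  return selected
    ? `[${selected.name}] ${selected.run(query)}`
    : `[llm] answering "${query}" from built-in knowledge`;
}

console.log(answer("What is 5 + 7?"));
console.log(answer("What does the essay say about startups?"));
console.log(answer("Write a haiku about autumn"));
```

The three calls exercise the three branches described above: an external tool, the document store, and the model's own knowledge.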

Setting Up the Development Environment

Install Node on Windows

To install Node on Windows, follow these steps.

# Download and install fnm:
winget install Schniz.fnm

# Download and install Node.js:
fnm install 22

# Verify the Node.js version:
node -v # Should print "v22.14.0".

# Verify npm version:
npm -v # Should print "10.9.2".

For other systems, follow this.

A Simple Math Agent

Let’s create a simple math agent to understand the LlamaIndex TypeScript API.

Step 1: Set Up Work Environment

Create a new directory, navigate into it, initialize a Node.js project, and install the dependencies.

$ md simple-agent
$ cd simple-agent
$ npm init
$ npm install llamaindex @llamaindex/ollama

We will create two tools for the math agent.

- An addition tool that adds two numbers

- A divide tool that divides numbers

Step 2: Import Required Modules

Add the following imports to your script:

import { agent, Settings, tool } from "llamaindex";
import { z } from "zod";
import { Ollama, OllamaEmbedding } from "@llamaindex/ollama";

Step 3: Create an Ollama Model Instance

Instantiate the Llama model:

const llama3 = new Ollama({
  model: "llama3.2:1b",
});

Now, using Settings, you can set the Ollama model as the system’s main model, or use a different model directly on the agent.

Settings.llm = llama3;

Step 4: Create Tools for the Math Agent

Add and divide tools

const addNumbers = tool({
  name: "sumNumbers",
  description: "use this function to sum two numbers",
  parameters: z.object({
    a: z.number().describe("The first number"),
    b: z.number().describe("The second number"),
  }),
  // return the sum as a string so the LLM can read it
  execute: ({ a, b }: { a: number; b: number }) => `${a + b}`,
});

Here we create a tool named addNumbers using the LlamaIndex tool API. The object passed to tool contains four main parameters.

- name: The name of the tool.

- description: The description of the tool, which the LLM uses to understand the tool’s capability.

- parameters: The parameters of the tool, where I have used the Zod library for data validation.

- execute: The function that the tool executes.
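Because execute is an ordinary function, it can check its inputs before returning a result. The sketch below assumes a hypothetical helper named `safeDivide` (not part of LlamaIndex) that a division tool could call from its execute function, so the model receives a readable message instead of "Infinity" or "NaN".

```typescript
// Hypothetical helper, not part of LlamaIndex: divides two numbers and
// returns an error message instead of "Infinity" or "NaN" for bad input.
function safeDivide(a: number, b: number): string {
  if (!Number.isFinite(a) || !Number.isFinite(b)) {
    return "Error: both arguments must be finite numbers";
  }
  if (b === 0) {
    return "Error: division by zero is undefined";
  }
  return `${a / b}`;
}

console.log(safeDivide(12, 2)); // 6
console.log(safeDivide(1, 0));  // Error: division by zero is undefined
```

Returning a string in both cases keeps the return type the same as the template-literal results used by the tools in this article.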

In the same way, we will create the divideNumbers tool.

const divideNumbers = tool({
  name: "divideNumbers",
  description: "use this function to divide two numbers",
  parameters: z.object({
    a: z.number().describe("The dividend a to divide"),
    b: z.number().describe("The divisor b to divide by"),
  }),
  // return the quotient as a string so the LLM can read it
  execute: ({ a, b }: { a: number; b: number }) => `${a / b}`,
});

Step 5: Create the Math Agent

Now, in the main function, we will create a math agent that uses the tools for calculation.

async function main(query: string) {
  const mathAgent = agent({
    tools: [addNumbers, divideNumbers],
    llm: llama3,
    verbose: false,
  });
  const response = await mathAgent.run(query);
  console.log(response.data);
}

// driver code for running the application
const query = "Add two numbers 5 and 7 and divide by 2";

void main(query).then(() => {
  console.log("Done");
});

If you set your LLM directly through Settings, you don’t have to pass the llm parameter to the agent. If you want to use different models for different agents, you must pass the llm parameter explicitly.

After that, response awaits mathAgent.run(query), which runs the query through the LLM and returns the data.
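As a reference point for checking the agent's answer, the arithmetic for this query can be worked by hand. The sketch below uses plain functions that mirror the two execute bodies; it assumes the agent calls the addition tool first and passes that result to the division tool, which a small model will not necessarily do on every run.

```typescript
// Plain functions mirroring the execute bodies of the two tools.
const add = (a: number, b: number): number => a + b;
const divide = (a: number, b: number): number => a / b;

// Expected chain for "Add two numbers 5 and 7 and divide by 2":
const sum = add(5, 7);         // 12
const result = divide(sum, 2); // 6

console.log(result); // 6
```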

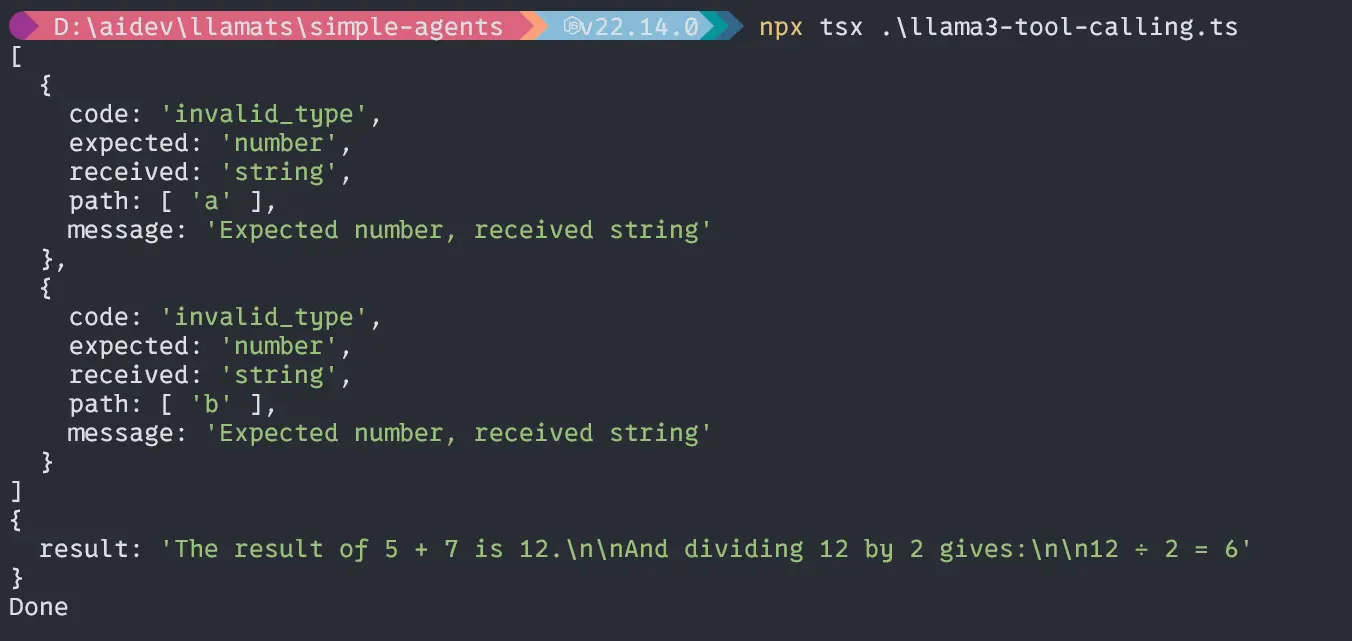

Output

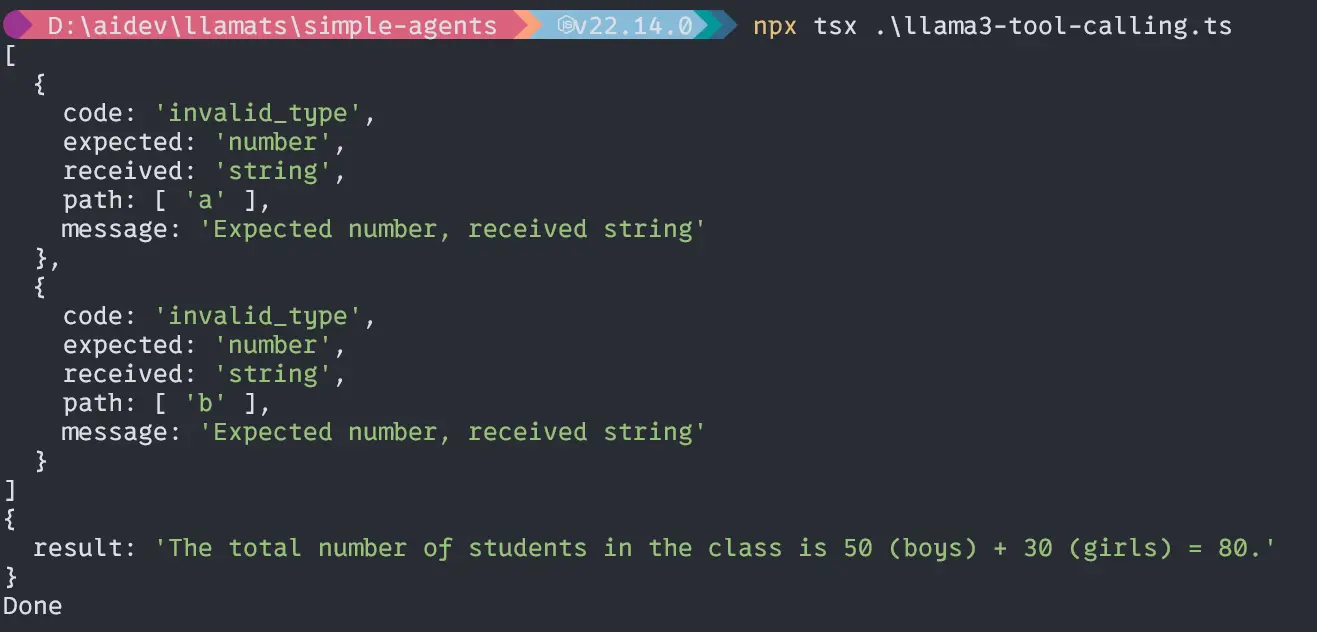

Second query: “If the total number of boys in a class is 50 and girls is 30, what is the total number of students in the class?”

const query =
  "If the total number of boys in a class is 50 and girls is 30, what is the total number of students in the class?";

void main(query).then(() => {
  console.log("Done");
});

Output

Wow, our little Llama3.2 1B model can handle agents well and calculate accurately. Now, let’s dive into the main part of the project.

Start Building the RAG Application

To set up the development environment, follow the instructions below.

Create a folder named agentic-rag-app:

$ md agentic-rag-app
$ cd agentic-rag-app
$ npm init
$ npm install llamaindex @llamaindex/ollama

Also pull the necessary models from Ollama: llama3.2:1b and nomic-embed-text.

In our application, we will have four modules:

- load-index module for loading and indexing the text file

- query-paul module for querying the Paul Graham essay

- constant module for storing reusable constants

- app module for running the application

First, create the constants file and data folder

Create a constant.ts file in the project root.

const constant = {
  STORAGE_DIR: "./storage",
  DATA_FILE: "data/pual-essay.txt",
};

export default constant;

It is an object containing the constants that are used multiple times throughout the application. It is a best practice to keep values like these in a separate place. After that, create a data folder and put the text file in it.

Data source link.

Implementing Load and Indexing Module

Let’s look at the diagram below to understand the code implementation.

Now, create a file named load-index.ts in the project root:

Importing Packages

import { Settings, storageContextFromDefaults } from "llamaindex";
import { Ollama, OllamaEmbedding } from "@llamaindex/ollama";
import { Document, VectorStoreIndex } from "llamaindex";
import fs from "fs/promises";
import constant from "./constant";

Creating Ollama Model Instances

const llama3 = new Ollama({
  model: "llama3.2:1b",
});

const nomic = new OllamaEmbedding({
  model: "nomic-embed-text",
});

Setting the System Models

Settings.llm = llama3;
Settings.embedModel = nomic;

Implementing indexAndStorage Function

async function indexAndStorage() {
  try {
    // set up persistent storage
    const storageContext = await storageContextFromDefaults({
      persistDir: constant.STORAGE_DIR,
    });

    // load docs
    const essay = await fs.readFile(constant.DATA_FILE, "utf-8");
    const document = new Document({
      text: essay,
      id_: "essay",
    });

    // create and persist index
    await VectorStoreIndex.fromDocuments([document], {
      storageContext,
    });

    console.log("index and embeddings stored successfully!");
  } catch (error) {
    console.log("Error during indexing: ", error);
  }
}

The above code creates persistent storage for the index and embedding files. It then reads the text data from the project data directory, creates a document from that text using the Document class from LlamaIndex, and finally builds a vector index from that document using the VectorStoreIndex.fromDocuments method.
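Conceptually, a vector index stores one embedding per piece of text and retrieves the pieces whose embeddings are closest to the query embedding. The sketch below illustrates that idea with hand-written three-dimensional vectors and cosine similarity. The `ToyIndex` class, the sample texts, and the vectors are all made up for illustration; real embeddings come from the embedding model and have many more dimensions, and this is not a description of how VectorStoreIndex works internally.

```typescript
type IndexEntry = { id: string; text: string; vector: number[] };

// Cosine similarity: 1 means same direction, 0 means unrelated.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("vectors must have the same length");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Hypothetical in-memory index used only to illustrate retrieval.
class ToyIndex {
  private entries: IndexEntry[] = [];

  add(entry: IndexEntry): void {
    this.entries.push(entry);
  }

  // Return the topK entries most similar to the query vector.
  search(queryVector: number[], topK = 1): IndexEntry[] {
    return [...this.entries]
      .sort(
        (x, y) =>
          cosineSimilarity(queryVector, y.vector) -
          cosineSimilarity(queryVector, x.vector),
      )
      .slice(0, topK);
  }
}

const index = new ToyIndex();
index.add({ id: "startups", text: "A passage about starting a company", vector: [0.9, 0.1, 0.0] });
index.add({ id: "painting", text: "A passage about learning to paint", vector: [0.1, 0.9, 0.2] });

const [best] = index.search([1, 0, 0]);
console.log(best.id); // startups
```

Persisting the index, as the function above does, avoids recomputing the embeddings each time the data is queried.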

Export the function for use in the other file:

export default indexAndStorage;

Implementing Query Module

A diagram for visual understanding

Now, create a file named query-paul.ts in the project root.

Importing Packages

import {
  Settings,
  storageContextFromDefaults,
  VectorStoreIndex,
} from "llamaindex";
import constant from "./constant";
import { Ollama, OllamaEmbedding } from "@llamaindex/ollama";
import { agent } from "llamaindex";

Creating and setting the models is the same as above.

Implementing Load and Query

Now, implement the loadAndQuery function.

async function loadAndQuery(query: string) {
  try {
    // load the stored index from persistent storage
    const storageContext = await storageContextFromDefaults({
      persistDir: constant.STORAGE_DIR,
    });

    // load the existing index
    const index = await VectorStoreIndex.init({ storageContext });

    // create a retriever and query engine
    const retriever = index.asRetriever();
    const queryEngine = index.asQueryEngine({ retriever });

    // wrap the index in a tool the agent can call
    const tools = [
      index.queryTool({
        metadata: {
          name: "paul_graham_essay_tool",
          description: `This tool can answer detailed questions about the essay by Paul Graham.`,
        },
      }),
    ];
    const ragAgent = agent({ tools });

    // query the stored embeddings directly and through the agent
    const response = await queryEngine.query({ query });
    const toolResponse = await ragAgent.run(query);

    console.log("Response: ", response.message);
    console.log("Tool Response: ", toolResponse);
  } catch (error) {
    console.log("Error during retrieval: ", error);
  }
}

In the above code, we set up the storage context from STORAGE_DIR, then use the VectorStoreIndex.init() method to load the already indexed files from STORAGE_DIR.

After loading, we create a retriever and a query engine from that retriever. Then, as we learned previously, we create a tool that can answer questions from the indexed files, and add that tool to the agent named ragAgent.

Then we query the indexed essay using two methods, one through the query engine and the other through the agent, and log the responses to the terminal.

Exporting the function:

export default loadAndQuery;

It’s time to put all the modules together in a single app file for easy execution.

Implementing App.ts

Create an app.ts file

import indexAndStorage from "./load-index";
import loadAndQuery from "./query-paul";

async function main(query: string) {
  console.log("======================================");
  console.log("Data Indexing....");
  // await each step so the index is persisted before it is queried
  await indexAndStorage();
  console.log("Data Indexing Completed!");
  console.log("Please, Wait to get your response or SUBSCRIBE!");
  await loadAndQuery(query);
}

const query = "What is Life?";

void main(query);

Here, we import all the modules, execute them serially, and run.
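Running the steps serially depends on awaiting each asynchronous call; calling an async function without await only starts it. The sketch below shows the difference using hypothetical stand-ins (`indexStep`, `queryStep`, and a 20 ms timer in place of real embedding work), not the actual modules.

```typescript
// Hypothetical stand-ins for the indexing and querying steps.
const delay = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

async function indexStep(log: string[]): Promise<void> {
  await delay(20); // pretend that embedding takes a while
  log.push("index finished");
}

async function queryStep(log: string[]): Promise<void> {
  log.push("query started");
}

// Awaiting each step guarantees the index is ready before querying.
async function runSerially(): Promise<string[]> {
  const log: string[] = [];
  await indexStep(log);
  await queryStep(log);
  return log;
}

// Without awaiting the first step, the query starts before indexing ends.
async function runWithoutAwait(): Promise<string[]> {
  const log: string[] = [];
  const pending = indexStep(log); // started, but not awaited yet
  await queryStep(log);
  await pending;
  return log;
}

runSerially().then((log) => console.log(log));     // [ 'index finished', 'query started' ]
runWithoutAwait().then((log) => console.log(log)); // [ 'query started', 'index finished' ]
```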

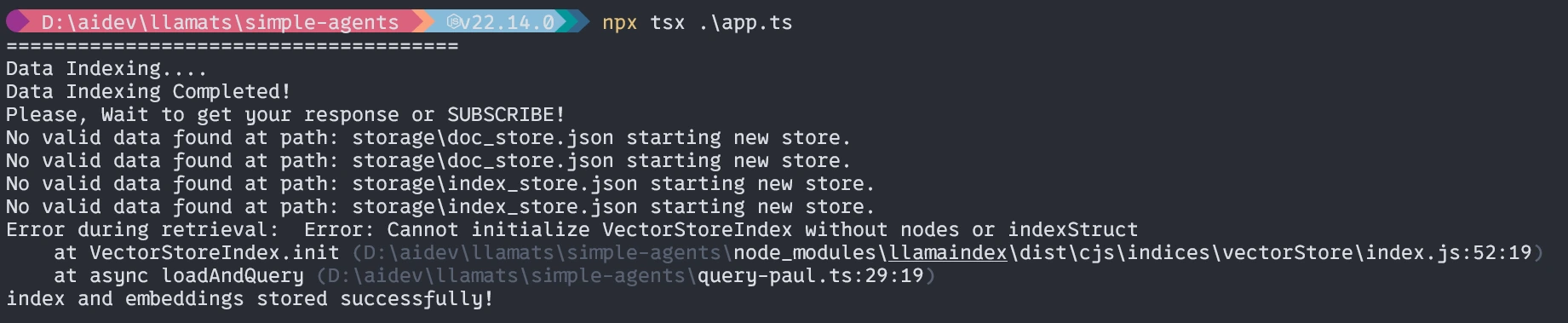

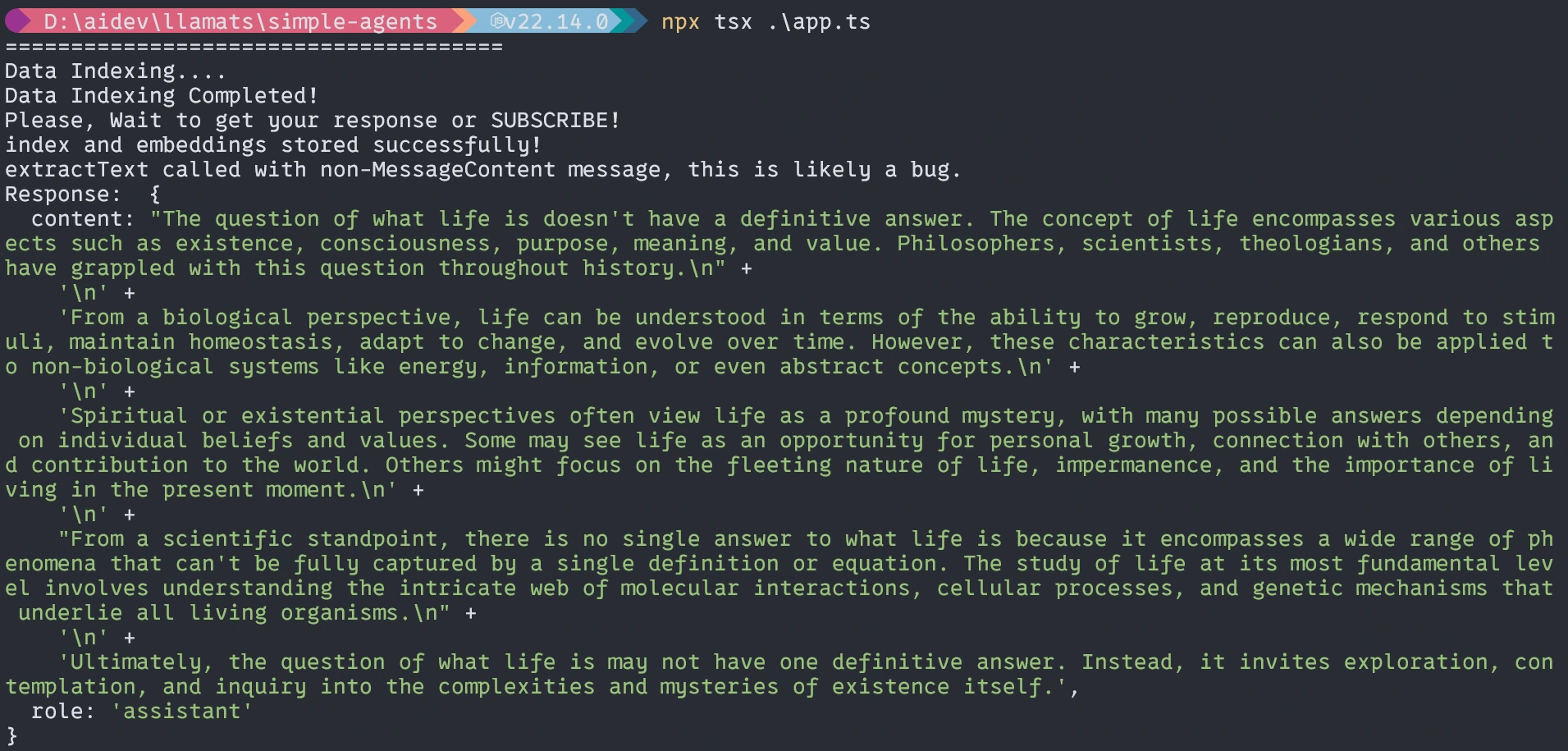

Running the Application

$ npx tsx ./app.ts

When it runs for the first time, three things will happen.

- It will ask to install tsx; please install it.

- It will take time to embed the document, depending on your system (one time).

- Then it will give back the response.
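Note that app.ts calls indexAndStorage on every run. One way to skip that step on later runs is to check whether the storage directory already contains files. The sketch below assumes a hypothetical helper named `hasPersistedIndex`; it only checks that files are present, not that they form a valid index.

```typescript
import { readdir } from "node:fs/promises";

// Hypothetical helper: true when the storage directory exists
// and contains at least one file or folder.
async function hasPersistedIndex(storageDir: string): Promise<boolean> {
  try {
    const files = await readdir(storageDir);
    return files.length > 0;
  } catch {
    return false; // directory is missing or unreadable
  }
}

// Usage sketch inside main() in app.ts:
//   if (!(await hasPersistedIndex(constant.STORAGE_DIR))) {
//     await indexAndStorage();
//   }
```

If the essay file changes, the storage directory would need to be deleted so that the index is rebuilt.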

First time operating (Much like it)

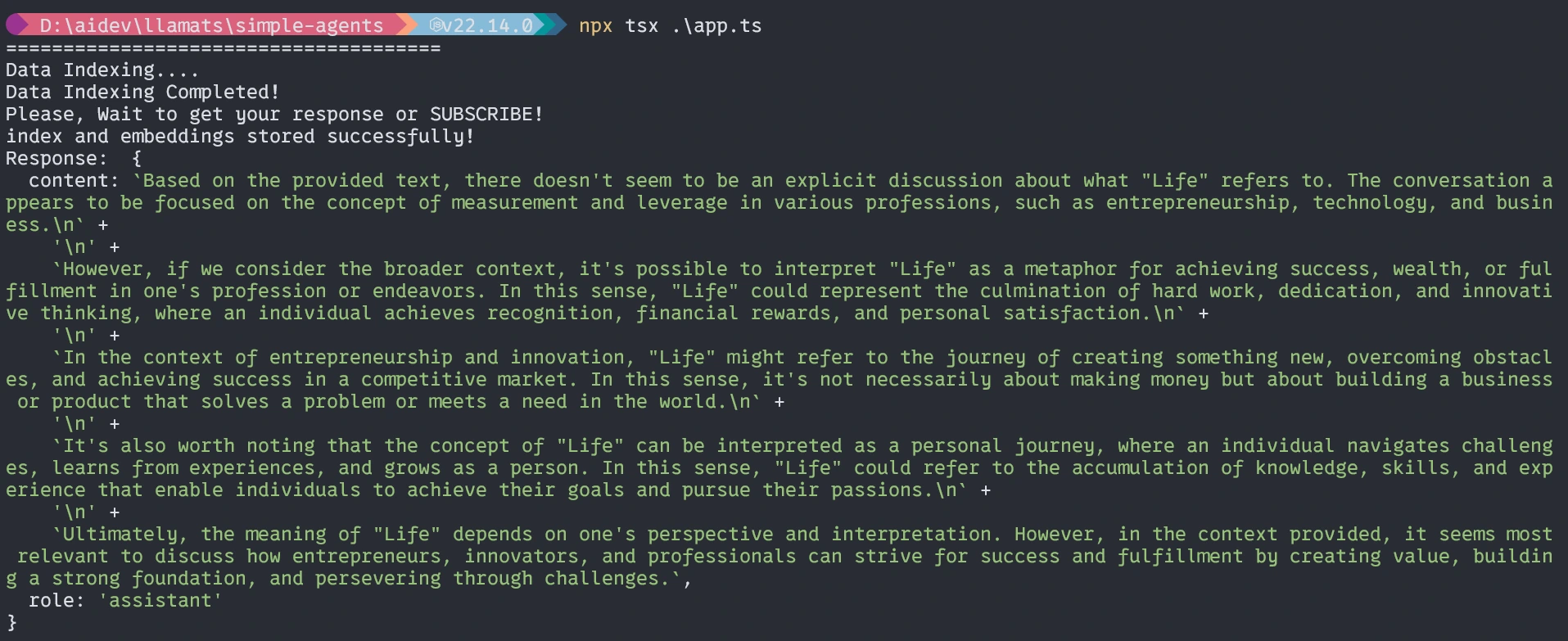

With out the agent, the response shall be just like it not precise.

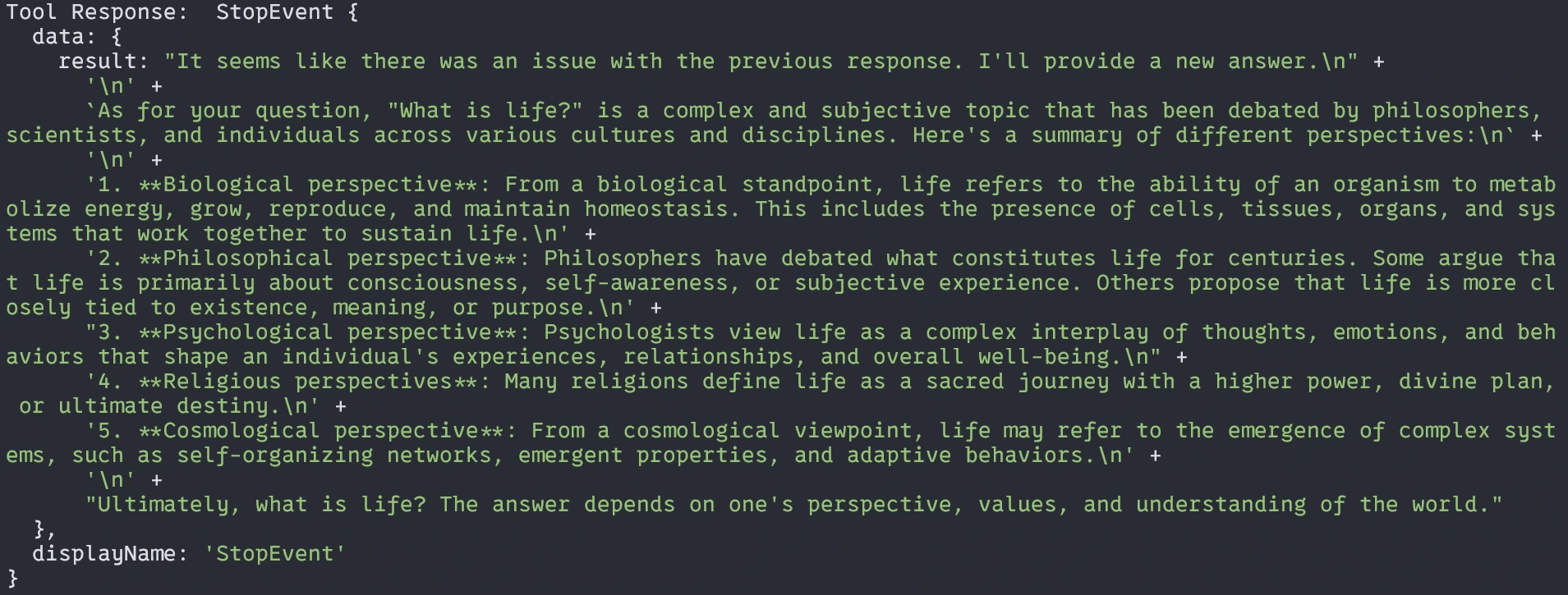

With Brokers

That’s all for right now. I hope this text will assist you be taught and perceive the workflow with TypeScript.

Venture code repository right here.

Conclusion

This is a simple yet functional Agentic RAG system built with LlamaIndex TypeScript. With this article, I want to give you a taste of a language other than Python for building Agentic RAG or any other LLM-based AI application. The Agentic RAG system represents a powerful evolution beyond basic RAG implementations, allowing for more intelligent, flexible responses to user queries. Using LlamaIndex with TypeScript, you can build such a system in a type-safe, maintainable way that integrates well with the web application ecosystem.

Key Takeaways

- TypeScript + LlamaIndex provides a solid foundation for building RAG systems.

- Persistent storage of embeddings improves efficiency for repeated queries.

- Agentic approaches enable more intelligent tool selection based on query content.

- Local model execution with Ollama offers privacy and cost advantages.

- Specialized tools can address different aspects of the domain knowledge.

- Agentic RAG using LlamaIndex TypeScript enhances retrieval-augmented generation by enabling intelligent, dynamic responses.

Frequently Asked Questions

A. You can modify the indexing function to load documents from multiple files or data sources and pass an array of document objects to the VectorStoreIndex.fromDocuments method.
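A minimal sketch of the file-loading half of that change, using only Node's standard library. The `RawDoc` type and the `loadTextFiles` helper are hypothetical names; each returned item could then be turned into a document with new Document({ text, id_ }), as in load-index.ts, and the resulting array passed to fromDocuments.

```typescript
import { readdir, readFile } from "node:fs/promises";
import { join } from "node:path";

// Hypothetical shape: one entry per text file.
type RawDoc = { id: string; text: string };

// Read every .txt file in a directory, sorted by file name.
async function loadTextFiles(dir: string): Promise<RawDoc[]> {
  const names = (await readdir(dir))
    .filter((fileName) => fileName.endsWith(".txt"))
    .sort();
  return Promise.all(
    names.map(async (fileName) => ({
      id: fileName,
      text: await readFile(join(dir, fileName), "utf-8"),
    })),
  );
}

// Usage sketch inside indexAndStorage:
//   const raw = await loadTextFiles("data");
//   const documents = raw.map((d) => new Document({ text: d.text, id_: d.id }));
```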

A. Yes! LlamaIndex supports various LLM providers, including OpenAI, Anthropic, and others. You can replace the Ollama setup with any supported provider.

A. Consider fine-tuning your embedding model on domain-specific data or implementing custom retrieval strategies that prioritize certain document sections based on your specific use case.

A. Direct querying simply retrieves relevant content and generates a response, while the agent approach first decides which tool is most appropriate for the query, potentially combining information from multiple sources or using specialized processing for different query types.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
