The sort of content material that customers may need to create utilizing a generative mannequin similar to Flux or Hunyuan Video will not be at all times be simply out there, even when the content material request is pretty generic, and one may guess that the generator may deal with it.

One instance, illustrated in a brand new paper that we’ll check out on this article, notes that the increasingly-eclipsed OpenAI Sora mannequin has some problem rendering an anatomically right firefly, utilizing the immediate ‘A firefly is glowing on a grass’s leaf on a serene summer season night time’:

OpenAI’s Sora has a barely wonky understanding of firefly anatomy. Supply: https://arxiv.org/pdf/2503.01739

Since I hardly ever take analysis claims at face worth, I examined the identical immediate on Sora in the present day and received a barely higher consequence. Nevertheless, Sora nonetheless didn’t render the glow accurately – relatively than illuminating the tip of the firefly’s tail, the place bioluminescence happens, it misplaced the glow close to the insect’s ft:

My very own check of the researchers’ immediate in Sora produces a consequence that exhibits Sora doesn’t perceive the place a Firefly’s gentle truly comes from.

Mockingly, the Adobe Firefly generative diffusion engine, skilled on the corporate’s copyright-secured inventory images and movies, solely managed a 1-in-3 success price on this regard, once I tried the identical immediate in Photoshop’s generative AI characteristic:

Solely the ultimate of three proposed generations of the researchers’ immediate produces a glow in any respect in Adobe Firefly (March 2025), although not less than the glow is located within the right a part of the insect’s anatomy.

This instance was highlighted by the researchers of the brand new paper for example that the distribution, emphasis and protection in coaching units used to tell fashionable basis fashions could not align with the person’s wants, even when the person is just not asking for something notably difficult – a subject that brings up the challenges concerned in adapting hyperscale coaching datasets to their most effective and performative outcomes as generative fashions.

The authors state:

‘[Sora] fails to seize the idea of a glowing firefly whereas efficiently producing grass and a summer season [night]. From the info perspective, we infer that is primarily as a result of [Sora] has not been skilled on firefly-related subjects, whereas it has been skilled on grass and night time. Moreover, if [Sora had] seen the video proven in [above image], it should perceive what a glowing firefly ought to seem like.’

They introduce a newly curated dataset and counsel that their methodology could possibly be refined in future work to create knowledge collections that higher align with person expectations than many current fashions.

Knowledge for the Folks

Basically their proposal posits a knowledge curation method that falls someplace between the customized knowledge for a model-type similar to a LoRA (and this method is way too particular for basic use); and the broad and comparatively indiscriminate high-volume collections (such because the LAION dataset powering Steady Diffusion) which aren’t particularly aligned with any end-use situation.

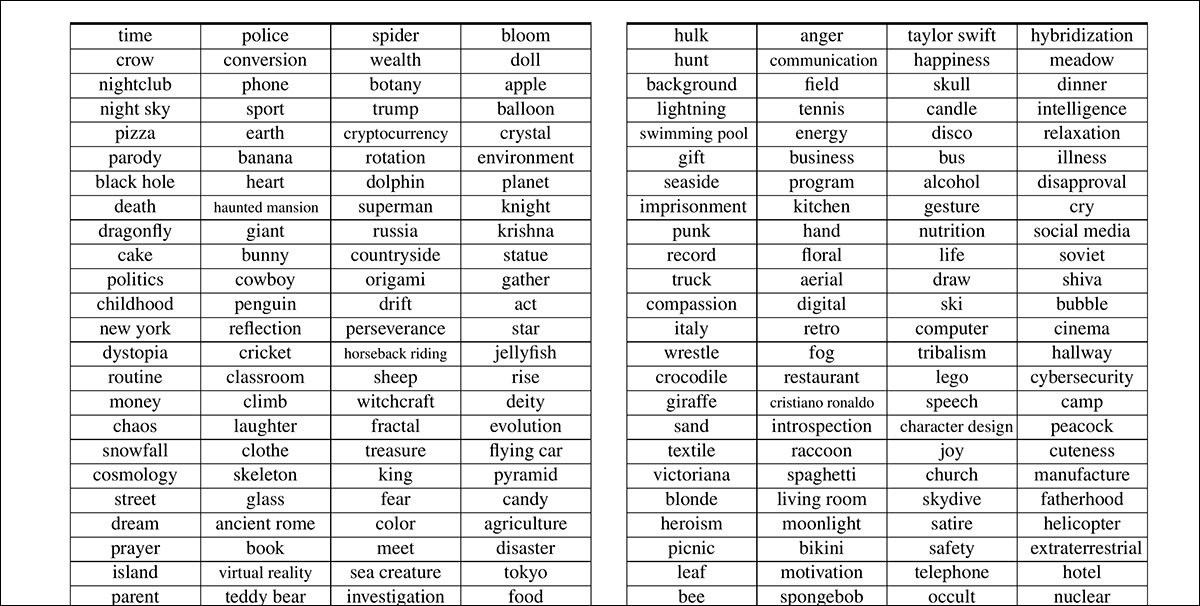

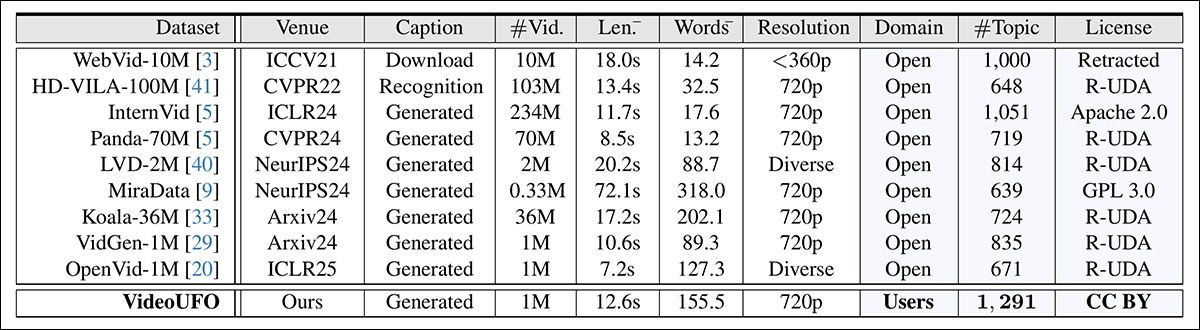

The brand new method, each as methodology and a novel dataset, is (relatively tortuously) named Customers’ FOcus in text-to-video, or VideoUFO. The VideoUFO dataset contains 1.9 million video clips spanning 1291 user-focused subjects. The subjects themselves have been elaborately developed from an current video dataset, and parsed by means of numerous language fashions and Pure Language Processing (NLP) strategies:

Samples of the distilled subjects introduced within the new paper.

The VideoUFO dataset incorporates a excessive quantity of novel movies trawled from YouTube – ‘novel’ within the sense that the movies in query don’t characteristic in video datasets which are presently fashionable within the literature, and subsequently within the many subsets which were curated from them (and lots of the movies have been in actual fact uploaded subsequent to the creation of the older datasets thar the paper mentions).

The truth is, the authors declare that there’s solely 0.29% overlap with current video datasets – a formidable demonstration of novelty.

One cause for this could be that the authors would solely settle for YouTube movies with a Inventive Commons license that might be much less more likely to hamstring customers additional down the road: it is attainable that this class of movies has been much less prioritized in prior sweeps of YouTube and different high-volume platforms.

Secondly, the movies have been requested on the premise of pre-estimated user-need (see picture above), and never indiscriminately trawled. These two elements together may result in such a novel assortment. Moreover, the researchers checked the YouTube IDs of any contributing movies (i.e., movies which will later have been break up up and re-imagined for the VideoUFO assortment) towards these featured in current collections, lending credence to the declare.

Although not every thing within the new paper is kind of as convincing, it is an fascinating learn that emphasizes the extent to which we’re nonetheless relatively on the mercy of uneven distributions in datasets, by way of the obstacles the analysis scene is usually confronted with in dataset curation.

The new work is titled VideoUFO: A Million-Scale Person-Targeted Dataset for Textual content-to-Video Technology, and comes from two researchers, respectively from the College of Expertise Sydney in Australia, and Zhejiang College in China.

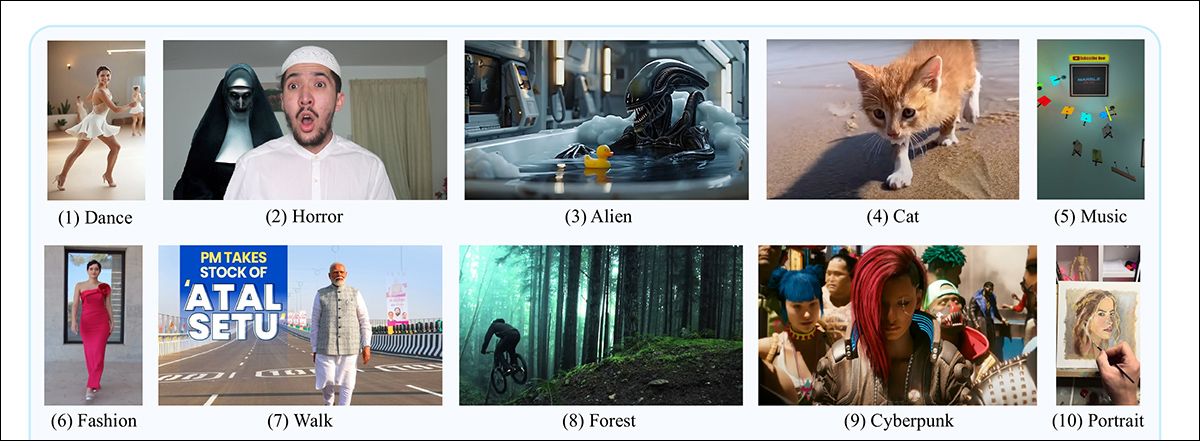

Choose examples from the ultimate obtained dataset.

A ‘Private Shopper’ for AI Knowledge

The subject material and ideas featured within the whole sum of web pictures and movies don’t essentially mirror what the typical finish person could find yourself asking for from a generative system; even the place content material and demand do are likely to collide (as with porn, which is plentifully out there on the web and of nice curiosity to many gen AI customers), this may occasionally not align with the builders’ intent and requirements for a brand new generative system.

Apart from the excessive quantity of NSFW materials uploaded each day, a disproportionate quantity of net-available materials is more likely to be from advertisers and people making an attempt to control search engine optimisation. Business self-interest of this type makes the distribution of subject material removed from neutral; worse, it’s tough to develop AI-based filtering programs that may address the issue, since algorithms and fashions developed from significant hyperscale knowledge could in themselves mirror the supply knowledge’s tendencies and priorities.

Subsequently the authors of the brand new work have approached the issue by reversing the proposition, by means of figuring out what customers are more likely to need, and acquiring movies that align with these wants.

On the floor, this method appears simply as more likely to set off a semantic race to the underside as to realize a balanced, Wikipedia-style neutrality. Calibrating knowledge curation round person demand dangers amplifying the preferences of the lowest-common-denominator whereas marginalizing area of interest customers, since majority pursuits will inevitably carry better weight.

Nonetheless, let’s check out how the paper tackles the problem.

Distilling Ideas with Discretion

The researchers used the 2024 VidProM dataset because the supply for subject evaluation that might later inform the mission’s web-scraping.

This dataset was chosen, the authors state, as a result of it’s the solely publicly-available 1m+ dataset ‘written by actual customers’ – and it needs to be acknowledged that this dataset was itself curated by the 2 authors of the brand new paper.

The paper explains*:

‘First, we embed all 1.67 million prompts from VidProM into 384-dimensional vectors utilizing SentenceTransformers Subsequent, we cluster these vectors with Ok-means. Be aware that right here we preset the variety of clusters to a comparatively giant worth, i.e., 2, 000, and merge comparable clusters within the subsequent step.

‘Lastly, for every cluster, we ask GPT-4o to conclude a subject [one or two words].’

The authors level out that sure ideas are distinct however notably adjoining, similar to church and cathedral. Too granular a standards for instances of this type would result in idea embeddings (for example) for every sort of canine breed, as an alternative of the time period canine; whereas too broad a standards may corral an extreme variety of sub-concepts right into a single over-crowded idea; subsequently the paper notes the balancing act mandatory to judge such instances.

Singular and plural kinds have been merged, and verbs restored to their base (infinitive) kinds. Excessively broad phrases – similar to animation, scene, movie and motion – have been eliminated.

Thus 1,291 subjects have been obtained (with the complete record out there within the supply paper’s supplementary part).

Choose Internet-Scraping

Subsequent, the researchers used the official YouTube API to hunt movies based mostly on the standards distilled from the 2024 dataset, searching for to acquire 500 movies for every subject. Apart from the requisite artistic commons license, every video needed to have a decision of 720p or greater, and needed to be shorter than 4 minutes.

On this method 586,490 movies have been scraped from YouTube.

The authors in contrast the YouTube ID of the downloaded movies to plenty of fashionable datasets: OpenVid-1M; HD-VILA-100M; Intern-Vid; Koala-36M; LVD-2M; MiraData; Panda-70M; VidGen-1M; and WebVid-10M.

They discovered that just one,675 IDs (the aforementioned 0.29%) of the VideoUFO clips featured in these older collections, and it must be conceded that whereas the dataset comparability record is just not exhaustive, it does embody all the most important and most influential gamers within the generative video scene.

Splits and Evaluation

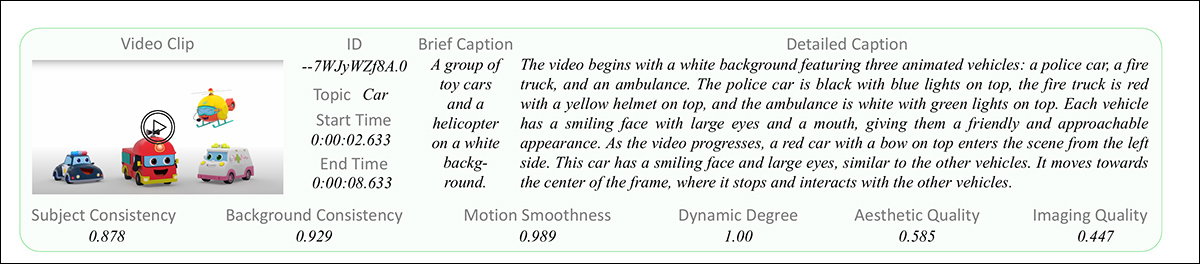

The obtained movies have been subsequently segmented into a number of clips, in response to the methodology outlined within the Panda-70M paper cited above. Shot boundaries have been estimated, assemblies stitched, and the concatenated movies divided into single clips, with temporary and detailed captions offered.

Every knowledge entry within the VideoUFO dataset incorporates a clip, an ID, begin and finish occasions, and a quick and an in depth caption.

The temporary captions have been dealt with by the Panda-70M methodology, and the detailed video captions by Qwen2-VL-7B, alongside the rules established by Open-Sora-Plan. In instances the place clips didn’t efficiently embody the meant goal idea, the detailed captions for every such clip have been fed into GPT-4o mini, so as to confirm whether or not it was actually a match for the subject. Although the authors would have most well-liked analysis through GPT-4o, this may have been too costly for tens of millions of video clips.

Video high quality evaluation was dealt with with six strategies from the VBench mission .

Comparisons

The authors repeated the subject extraction course of on the aforementioned prior datasets. For this, it was essential to semantically-match the derived classes of VideoUFO to the inevitably totally different classes within the different collections; it must be conceded that such processes provide solely approximated equal classes, and subsequently this can be too subjective a course of to vouchsafe empirical comparisons.

Nonetheless, within the picture beneath we see the outcomes the researchers obtained by this methodology:

Comparability of the elemental attributes derived throughout VideoUFO and the prior datasets.

The researchers acknowledge that their evaluation relied on the prevailing captions and descriptions offered in every dataset. They admit that re-captioning older datasets utilizing the identical methodology as VideoUFO may have supplied a extra direct comparability. Nevertheless, given the sheer quantity of knowledge factors, their conclusion that this method could be prohibitively costly appears justified.

Technology

The authors developed a benchmark to judge text-to-video fashions’ efficiency on user-focused ideas, titled BenchUFO. This entailed choosing 791 nouns from the 1,291 distilled person subjects in VideoUFO. For every chosen subject, ten textual content prompts from VidProM have been then randomly chosen.

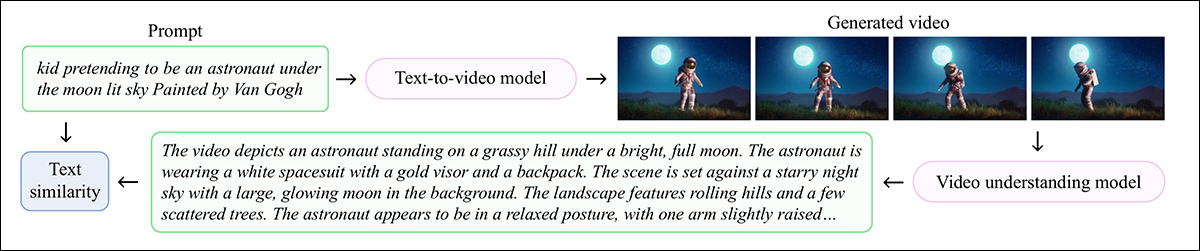

Every immediate was handed by means of to a text-to-video mannequin, with the aforementioned Qwen2-VL-7B captioner used to judge the generated outcomes. With all generated movies thus captioned, SentenceTransformers was used to calculate cosine similarity for each the enter immediate and output (inferred) description in every case.

Schema for the BenchUFO course of.

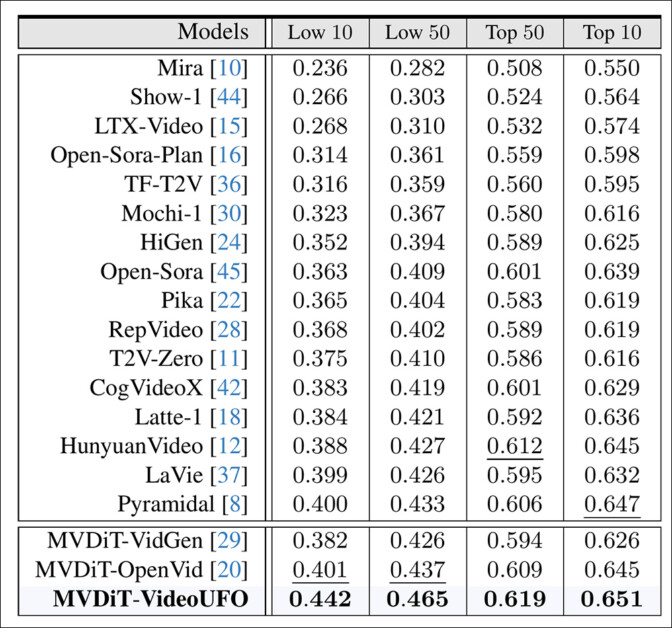

The evaluated generative fashions have been: Mira; Present-1; LTX-Video; Open-Sora-Plan; Open Sora; TF-T2V; Mochi-1; HiGen; Pika; RepVideo; T2V-Zero; CogVideoX; Latte-1; Hunyuan Video; LaVie; and Pyramidal.

Apart from VideoUFO, MVDiT-VidGen and MVDit-OpenVid have been the choice coaching datasets.

The outcomes take into account the Tenth-Fiftieth worst-performing and best-performing subjects throughout the architectures and datasets.

Outcomes for the efficiency of public T2V fashions vs. the authors’ skilled fashions, on BenchUFO.

Right here the authors remark:

‘Present text-to-video fashions don’t constantly carry out effectively throughout all user-focused subjects. Particularly, there’s a rating distinction starting from 0.233 to 0.314 between the top-10 and low-10 subjects. These fashions could not successfully perceive subjects similar to “large squid”, “animal cell”, “Van Gogh”, and “historic Egyptian” attributable to inadequate coaching on such movies.

‘Present text-to-video fashions present a sure diploma of consistency of their best-performing subjects. We uncover that almost all text-to-video fashions excel at producing movies on animal-related subjects, similar to ‘seagull’, ‘panda’, ‘dolphin’, ‘camel’, and ‘owl’. We infer that that is partly attributable to a bias in the direction of animals in present video datasets.’

Conclusion

VideoUFO is an impressive providing if solely from the standpoint of contemporary knowledge. If there was no error in evaluating and eliminating YouTube IDs, and if the dataset accommodates a lot materials that’s new to the analysis scene, it’s a uncommon and probably precious proposition.

The draw back is that one wants to present credence to the core methodology; for those who do not consider that person demand ought to inform web-scraping formulation, you would be shopping for right into a dataset that comes with its personal units of troubling biases.

Additional, the utility of the distilled subjects depends upon each the reliability of the distilling methodology used (which is usually hampered by price range constraints), and in addition the formulation strategies for the 2024 dataset that gives the supply materials.

That mentioned, VideoUFO actually deserves additional investigation – and it’s out there at Hugging Face.

* My substitution of the authors’ citations for hyperlinks.

First revealed Wednesday, March 5, 2025