Unlocking the true value of data is often impeded by data silos. Traditional data management, in which each business unit ingests raw data into separate data lakes or warehouses, hinders visibility and cross-functional analysis. A data mesh framework empowers business units with data ownership and facilitates seamless sharing.

However, integrating datasets from different business units can present several challenges. Each business unit exposes data assets with varying formats and granularity levels, and applies different data validation checks. Unifying these necessitates additional data processing, requiring each business unit to provision and maintain a separate data warehouse. This burdens business units that are focused solely on consuming the curated data for analysis and are not concerned with data management tasks, cleansing, or comprehensive data processing.

In this post, we explore a robust architecture pattern for a data sharing mechanism that bridges the gap between data lake and data warehouse using Amazon DataZone and Amazon Redshift.

Solution overview

Amazon DataZone is a data management service that makes it straightforward for business units to catalog, discover, share, and govern their data assets. Business units can curate and expose their readily available domain-specific data products through Amazon DataZone, providing discoverability and controlled access.

Amazon Redshift is a fast, scalable, and fully managed cloud data warehouse that allows you to process and run your complex SQL analytics workloads on structured and semi-structured data. Thousands of customers use Amazon Redshift data sharing to enable instant, granular, and fast data access across Amazon Redshift provisioned clusters and serverless workgroups. This allows you to scale your read and write workloads to thousands of concurrent users without having to move or copy the data. Amazon DataZone natively supports data sharing for Amazon Redshift data assets. With Amazon Redshift Spectrum, you can query the data in your Amazon Simple Storage Service (Amazon S3) data lake using a central AWS Glue metastore from your Redshift data warehouse. This capability extends your petabyte-scale Redshift data warehouse to unbounded data storage limits, which allows you to scale to exabytes of data cost-effectively.

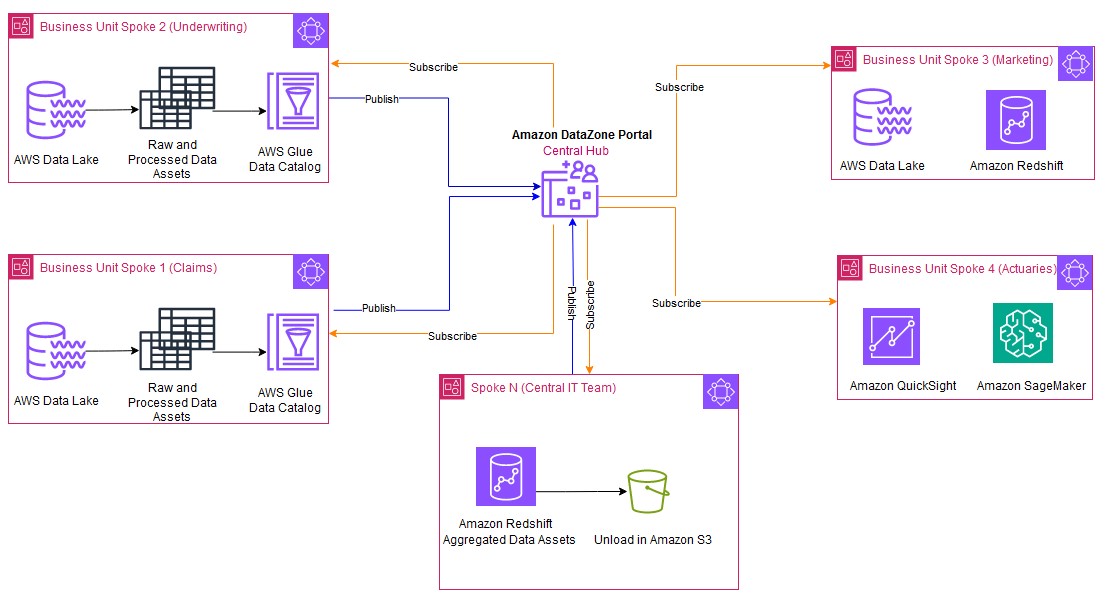

The following figure shows a typical distributed and collaborative architectural pattern implemented using Amazon DataZone. Business units can simply share data and collaborate by publishing and subscribing to the data assets.

The Central IT team (Spoke N) subscribes to the data from individual business units and consumes this data using Redshift Spectrum. The Central IT team applies standardization and performs tasks on the subscribed data such as schema alignment, data validation checks, collating the data, and enrichment by adding additional context or derived attributes to the final data asset. This processed unified data can then persist as a new data asset in Amazon Redshift managed storage to meet the SLA requirements of the business units. The new processed data asset produced by the Central IT team is then published back to Amazon DataZone. With Amazon DataZone, individual business units can discover and directly consume these new data assets, gaining a holistic view of the data (360-degree insights) across the organization.

The Central IT team manages a unified Redshift data warehouse, handling all data integration, processing, and maintenance. Business units access clean, standardized data. To consume the data, they can choose between a provisioned Redshift cluster for consistent high-volume needs or Amazon Redshift Serverless for variable, on-demand analysis. This model lets the units focus on insights, with costs aligned to actual consumption, and derive value from data without the burden of data management tasks.

This streamlined architecture approach offers several advantages:

- Single source of truth – The Central IT team acts as the custodian of the combined and curated data from all business units, thereby providing a unified and consistent dataset. The Central IT team implements data governance practices, providing data quality, security, and compliance with established policies. A centralized data warehouse for processing is often more cost-efficient, and its scalability allows organizations to dynamically adjust their storage needs. Similarly, individual business units produce their own domain-specific data. There are no duplicate data products created by business units or the Central IT team.

- Eliminating dependency on business units – Redshift Spectrum uses a metadata layer to directly query the data residing in S3 data lakes, eliminating the need for data copying or relying on individual business units to initiate the copy jobs. This significantly reduces the risk of errors associated with data transfer or movement and data copies.

- Eliminating stale data – Avoiding duplication of data also eliminates the risk of stale data existing in multiple locations.

- Incremental loading – Because the Central IT team can directly query the data on the data lakes using Redshift Spectrum, they have the flexibility to query only the relevant columns needed for the unified analysis and aggregations. This can be done using mechanisms to detect the incremental data from the data lakes and process only the new or updated data, further optimizing resource utilization.

- Federated governance – Amazon DataZone facilitates centralized governance policies, providing consistent data access and security across all business units. Sharing and access controls remain confined within Amazon DataZone.

- Enhanced cost appropriation and efficiency – This method confines the cost overhead of processing and integrating the data to the Central IT team. Individual business units can provision a Redshift Serverless data warehouse solely to consume the data. This way, each unit can clearly demarcate the consumption costs and impose limits. Additionally, the Central IT team can choose to apply chargeback mechanisms to each of these units.
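
To make the incremental loading idea more concrete, the following Python sketch builds a query that reads only selected columns and only rows newer than a stored watermark. It is an illustration under stated assumptions, not the mechanism used in this post: the table name (example_db.example_table), the column names, and the watermark approach itself are all hypothetical.

```python
from datetime import datetime


def build_incremental_query(source_table: str, columns: list[str],
                            watermark_column: str, last_loaded: datetime) -> str:
    """Return a SELECT that reads only the listed columns and only rows
    whose watermark column is newer than the last successful load."""
    column_list = ", ".join(columns)
    cutoff = last_loaded.strftime("%Y-%m-%d %H:%M:%S")
    # Identifiers are interpolated directly, so this sketch assumes they come
    # from trusted configuration rather than user input.
    return (
        f"SELECT {column_list} "
        f"FROM {source_table} "
        f"WHERE {watermark_column} > TIMESTAMP '{cutoff}'"
    )


query = build_incremental_query(
    source_table="example_db.example_table",  # hypothetical table
    columns=["policy_id", "updated_at"],      # hypothetical columns
    watermark_column="updated_at",            # hypothetical watermark column
    last_loaded=datetime(2024, 1, 31, 0, 0, 0),
)
print(query)
```

After each run, the caller would store the highest watermark value it processed and pass it as last_loaded on the next run.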

In this post, we use a simplified use case, as shown in the following figure, to bridge the gap between data lakes and data warehouses using Redshift Spectrum and Amazon DataZone.

The underwriting business unit curates the data asset using AWS Glue and publishes the data asset Policies in Amazon DataZone. The Central IT team subscribes to the data asset from the underwriting business unit.

We focus on how the Central IT team consumes the subscribed data lake asset from business units using Redshift Spectrum and creates a new unified data asset.

Prerequisites

The following prerequisites must be in place:

- AWS accounts – You should have active AWS accounts before you proceed. If you don’t have one, refer to How do I create and activate a new AWS account? In this post, we use three AWS accounts. If you’re new to Amazon DataZone, refer to Getting started.

- A Redshift data warehouse – You can create a provisioned cluster following the instructions in Create a sample Amazon Redshift cluster, or provision a serverless workgroup following the instructions in Get started with Amazon Redshift Serverless data warehouses.

- Amazon DataZone resources – You need a domain for Amazon DataZone, an Amazon DataZone project, and a new Amazon DataZone environment (with a custom AWS service blueprint).

- Data lake asset – The data lake asset Policies from the business units was already onboarded to Amazon DataZone and subscribed to by the Central IT team. To understand how to associate multiple accounts and consume the subscribed assets using Amazon Athena, refer to Working with associated accounts to publish and consume data.

- Central IT environment – The Central IT team has created an environment called env_central_team and uses an existing AWS Identity and Access Management (IAM) role called custom_role, which grants Amazon DataZone access to AWS services and resources, such as Athena, AWS Glue, and Amazon Redshift, in this environment. To add all the subscribed data assets to a common AWS Glue database, the Central IT team configures a subscription target and uses central_db as the AWS Glue database.

- IAM role – Make sure that the IAM role that you want to enable in the Amazon DataZone environment has the necessary permissions to your AWS services and resources. In particular, the role needs sufficient AWS Lake Formation and AWS Glue permissions to access Redshift Spectrum.
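
A policy along these lines might look like the following sketch, written in Python so the document can be generated and inspected. This is not the policy from the original post. The action list is an assumption about what read-only access to the Data Catalog through Lake Formation typically requires, and the wildcard resource is used only to keep the example short; scope it to your own catalogs, databases, and tables.

```python
import json


def build_spectrum_access_policy() -> dict:
    """Build an IAM policy document that allows reading AWS Glue metadata
    and requesting data access through AWS Lake Formation."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "lakeformation:GetDataAccess",
                    "glue:GetDatabase",
                    "glue:GetDatabases",
                    "glue:GetTable",
                    "glue:GetTables",
                    "glue:GetPartition",
                    "glue:GetPartitions",
                    "glue:SearchTables",
                ],
                # Assumption: "*" keeps the sketch short; restrict this in practice.
                "Resource": "*",
            }
        ],
    }


policy = build_spectrum_access_policy()
print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the IAM console as an inline policy for the environment role after you have reviewed and narrowed it.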

As shown in the following screenshot, the Central IT team has subscribed to the data asset Policies. The data asset is added to the env_central_team environment. Amazon DataZone will assume the custom_role to help federate the environment user (central_user) to the action link in Athena. The subscribed asset Policies is added to the central_db database. This asset is then queried and consumed using Athena.

The goal of the Central IT team is to consume the subscribed data lake asset Policies with Redshift Spectrum. This data is further processed and curated into the central data warehouse using the Amazon Redshift Query Editor v2 and stored as a single source of truth in Amazon Redshift managed storage. In the following sections, we illustrate how to consume the subscribed data lake asset Policies from Redshift Spectrum without copying the data.

Automatically mount access grants to the Amazon DataZone environment role

Amazon Redshift automatically mounts the AWS Glue Data Catalog in the Central IT team account as a database and allows it to query the data lake tables with three-part notation. This is available by default with the Admin role.

To grant the required access to the mounted Data Catalog tables for the environment role (custom_role), complete the following steps:

- Log in to the Amazon Redshift Query Editor v2 using the Amazon DataZone deep link.

- In the Query Editor v2, choose your Redshift Serverless endpoint and choose Edit Connection.

- For Authentication, select Federated user.

- For Database, enter the database you want to connect to.

- Get the current user IAM role as illustrated in the following screenshot.

- Connect to Redshift Query Editor v2 using the database user name and password authentication method. For example, connect to the dev database using the admin user name and password. Then grant usage on the awsdatacatalog database to the environment user role custom_role, using the current user value you copied in the previous step.
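
The grant in the last step can be sketched as follows. The Python helper only assembles the SQL text to run in the Query Editor v2. It assumes that the value you copied for the current user has the form IAMR:custom_role, which is how Amazon Redshift names a federated IAM role; check the value in your own session before using it.

```python
def build_grant_usage(database_user: str, database: str = "awsdatacatalog") -> str:
    """Return a GRANT statement giving a database user usage on the
    automatically mounted Data Catalog database."""
    # The user name contains a colon, so it must be double-quoted in SQL.
    return f'GRANT USAGE ON DATABASE {database} TO "{database_user}";'


# Assumption: this is the value returned for the federated environment role.
statement = build_grant_usage("IAMR:custom_role")
print(statement)
```

Run the resulting statement while connected as the admin user, because the federated role cannot grant this access to itself.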

Query using Redshift Spectrum

Using the federated user authentication method, log in to Amazon Redshift. The Central IT team will be able to query the subscribed data asset Policies (table: policy) that was automatically mounted under awsdatacatalog.
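
As a minimal sketch of what such a query could look like, the following Python snippet assembles the three-part name (catalog, AWS Glue database, table) from the names used in this post and wraps it in a preview query. The LIMIT clause and the helper name are illustrative choices, not part of the original walkthrough.

```python
def qualified_name(database: str, table: str, catalog: str = "awsdatacatalog") -> str:
    """Return a three-part table reference: <catalog>.<database>.<table>."""
    return f"{catalog}.{database}.{table}"


# central_db is the subscription target database and policy is the subscribed table.
preview_query = f"SELECT * FROM {qualified_name('central_db', 'policy')} LIMIT 10;"
print(preview_query)
```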

Aggregate tables and unify products

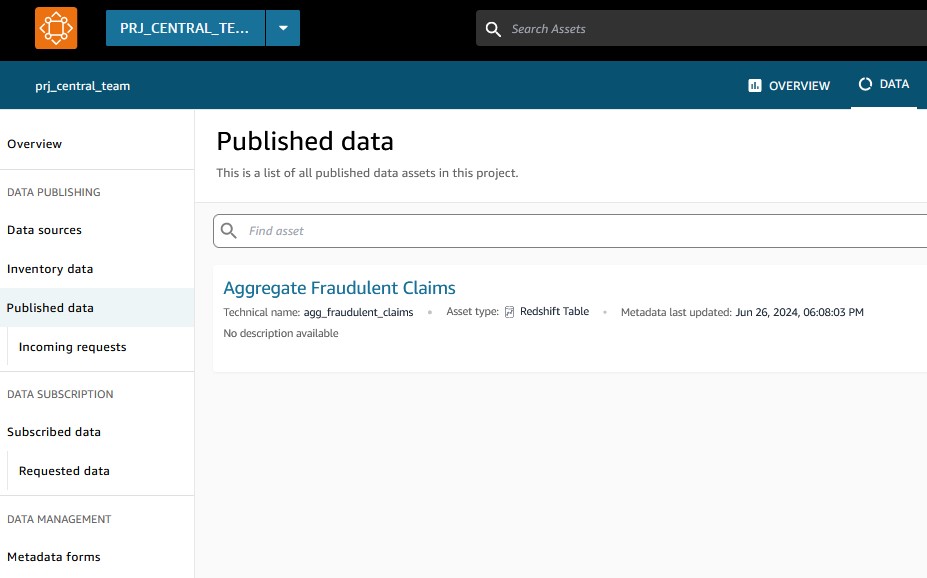

The Central IT team applies the necessary checks and standardization to aggregate and unify the data assets from all business units, bringing them to the same granularity. As shown in the following screenshot, both the Policies and Claims data assets are combined to form a unified aggregate data asset called agg_fraudulent_claims.
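
The shape of such an aggregation can be sketched with an in-memory SQLite database standing in for Amazon Redshift. Everything about the schema here is an assumption made for illustration: the column names (policy_state, claim_amount, is_fraud), the sample rows, and the choice to aggregate per state are hypothetical, and only the table names policy, claims, and agg_fraudulent_claims echo this post.

```python
import sqlite3

# SQLite is used only so the sketch runs locally; it is not Amazon Redshift.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE policy (policy_id TEXT, policy_state TEXT);
CREATE TABLE claims (claim_id TEXT, policy_id TEXT, claim_amount REAL, is_fraud INTEGER);
INSERT INTO policy VALUES ('P1', 'WA'), ('P2', 'WA'), ('P3', 'OR');
INSERT INTO claims VALUES
    ('C1', 'P1', 1000.0, 1),
    ('C2', 'P1',  500.0, 0),
    ('C3', 'P2',  250.0, 1),
    ('C4', 'P3',  750.0, 1);
""")

# Join claims to policies, keep fraudulent claims, and roll both assets up
# to one common granularity (one row per state).
conn.execute("""
CREATE TABLE agg_fraudulent_claims AS
SELECT p.policy_state,
       COUNT(*) AS fraudulent_claims,
       SUM(c.claim_amount) AS fraudulent_amount
FROM claims AS c
JOIN policy AS p ON p.policy_id = c.policy_id
WHERE c.is_fraud = 1
GROUP BY p.policy_state
""")

rows = conn.execute(
    "SELECT policy_state, fraudulent_claims, fraudulent_amount "
    "FROM agg_fraudulent_claims ORDER BY policy_state"
).fetchall()
print(rows)
```

In Amazon Redshift, the source tables would be referenced through the mounted Data Catalog and the result would be stored in Redshift managed storage.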

These unified data assets are then published back to the Amazon DataZone central hub for business units to consume them.

The Central IT team also unloads the data assets to Amazon S3 so that each business unit has the flexibility to use either a Redshift Serverless data warehouse or Athena to consume the data. Each business unit can now isolate and put limits on the consumption costs of their individual data warehouses.
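
The unload step could be expressed with the Amazon Redshift UNLOAD command. The following Python sketch only assembles the statement text. The bucket name, prefix, and role ARN are placeholders, and Parquet is an assumed output format chosen because both Athena and Redshift Spectrum can read it.

```python
def build_unload(select_sql: str, s3_prefix: str, iam_role_arn: str) -> str:
    """Return an UNLOAD statement that writes a query result to Amazon S3 as Parquet."""
    # UNLOAD takes the query as a quoted string, so single quotes inside it are doubled.
    escaped = select_sql.replace("'", "''")
    return (
        f"UNLOAD ('{escaped}') "
        f"TO '{s3_prefix}' "
        f"IAM_ROLE '{iam_role_arn}' "
        "FORMAT AS PARQUET;"
    )


unload_statement = build_unload(
    select_sql="SELECT * FROM agg_fraudulent_claims",
    s3_prefix="s3://example-bucket/agg_fraudulent_claims/",             # placeholder
    iam_role_arn="arn:aws:iam::111122223333:role/example-unload-role",  # placeholder
)
print(unload_statement)
```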

Because the intention of the Central IT team was to consume data lake assets within a data warehouse, the recommended solution would be to use custom AWS service blueprints and deploy them as part of one environment. In this case, we created one environment (env_central_team) to consume the asset using Athena or Amazon Redshift. This accelerates the development of the data sharing process because the same environment role is used to manage the permissions across multiple analytical engines.

Clean up

To clean up your resources, complete the following steps:

- Delete any S3 buckets you created.

- On the Amazon DataZone console, delete the projects used in this post. This will delete most project-related objects like data assets and environments.

- Delete the Amazon DataZone domain.

- On the Lake Formation console, delete the Lake Formation admins registered by Amazon DataZone along with the tables and databases created by Amazon DataZone.

- If you used a provisioned Redshift cluster, delete the cluster. If you used Redshift Serverless, delete any tables created as part of this post.

Conclusion

In this post, we explored a pattern of seamless data sharing with data lakes and data warehouses with Amazon DataZone and Redshift Spectrum. We discussed the challenges associated with traditional data management approaches, data silos, and the burden of maintaining individual data warehouses for business units.

To curb operating and maintenance costs, we proposed a solution that uses Amazon DataZone as a central hub for data discovery and access control, where business units can readily share their domain-specific data. To consolidate and unify the data from these business units and provide a 360-degree insight, the Central IT team uses Redshift Spectrum to directly query and analyze the data residing in their respective data lakes. This eliminates the need for creating separate data copy jobs and duplication of data residing in multiple places.

The team also takes on the responsibility of bringing all the data assets to the same granularity and processing them into a unified data asset. These combined data products can then be shared through Amazon DataZone with these business units. Business units can focus solely on consuming the unified data assets that aren’t specific to their domain. This way, the processing costs can be controlled and tightly monitored across all business units. The Central IT team can also implement chargeback mechanisms based on the consumption of the unified products for each business unit.

To learn more about Amazon DataZone and get started, refer to Getting started. Check out the YouTube playlist for some of the latest demos of Amazon DataZone and more information about the capabilities available.

About the Authors

Lakshmi Nair is a Senior Analytics Specialist Solutions Architect at AWS. She specializes in designing advanced analytics systems across industries. She focuses on crafting cloud-based data platforms, enabling real-time streaming, big data processing, and robust data governance.

Srividya Parthasarathy is a Senior Big Data Architect on the AWS Lake Formation team. She enjoys building analytics and data mesh solutions on AWS and sharing them with the community.