Forecasting fashions are crucial for a lot of companies to foretell future developments, however their accuracy relies upon closely on the standard of the enter information. Poor high quality information can result in inaccurate forecasts that end in suboptimal choices. That is the place Databricks Lakehouse Monitoring is available in – it offers a unified resolution to watch each the standard of knowledge flowing into forecasting fashions in addition to the mannequin efficiency itself.

Monitoring is very essential for forecasting fashions. Forecasting offers with time collection information, the place the temporal part and sequential nature of the info introduce further complexities. Points like information drift, the place the statistical properties of the enter information change over time, can considerably degrade forecast accuracy if not detected and addressed promptly.

Moreover, the efficiency of forecasting fashions is commonly measured by metrics like Imply Absolute Proportion Error (MAPE) that examine predictions to precise values. Nevertheless, floor reality values usually are not instantly obtainable, solely arriving after the forecasted time interval has handed. This delayed suggestions loop makes proactive monitoring of enter information high quality and mannequin outputs much more essential to determine potential points early.

Frequent retraining of statistical forecasting fashions utilizing latest information is frequent, however monitoring stays priceless to detect drift early and keep away from pointless computational prices. For complicated fashions like PatchTST, which use deep studying and require GPUs, retraining could also be much less frequent on account of useful resource constraints, making monitoring much more crucial.

With computerized hyperparameter tuning, you possibly can introduce skew and inconsistent mannequin efficiency throughout runs. Monitoring helps you rapidly determine when a mannequin’s efficiency has degraded and take corrective motion, akin to manually adjusting the hyperparameters or investigating the enter information for anomalies. Moreover, monitoring will help you strike the proper steadiness between mannequin efficiency and computational price. Auto-tuning could be resource-intensive, particularly if it is blindly operating on each retraining cycle. By monitoring the mannequin’s efficiency over time, you possibly can decide if the auto-tuning is definitely yielding vital enhancements or if it is simply including pointless overhead. This perception permits you to optimize your mannequin coaching pipeline.

Databricks Lakehouse Monitoring is constructed to watch the statistical properties and high quality of knowledge throughout all tables, but it surely additionally contains particular capabilities tailor-made for monitoring the efficiency of machine studying fashions through monitoring inference tables containing mannequin inputs and predictions. For forecasting fashions, this permits:

- Monitoring information drift in enter options over time, evaluating to a baseline

- Monitoring prediction drift and distribution of forecasts

- Measuring mannequin efficiency metrics like MAPE, bias, and many others as actuals grow to be obtainable

- Setting alerts if information high quality or mannequin efficiency degrades

Creating Forecasting Fashions on Databricks

Earlier than we focus on monitor forecasting fashions, let’s briefly cowl develop them on the Databricks platform. Databricks offers a unified setting to construct, practice, and deploy machine studying fashions at scale, together with time collection forecasting fashions.

There are a number of standard libraries and methods you should utilize to generate forecasts, akin to:

- Prophet: An open-source library for time collection forecasting that’s straightforward to make use of and tune. It excels at dealing with information with sturdy seasonal results and works properly with messy information by smoothing out outliers. Prophet’s easy, intuitive API makes it accessible to non-experts. You should use PySpark to parallelize Prophet mannequin coaching throughout a cluster to construct hundreds of fashions for every product-store mixture. Weblog

- ARIMA/SARIMA: Basic statistical strategies for time collection forecasting. ARIMA fashions intention to explain the autocorrelations within the information. SARIMA extends ARIMA to mannequin seasonality. In a latest benchmarking research, SARIMA demonstrated sturdy efficiency on retail gross sales information in comparison with different algorithms. Weblog

Along with standard libraries like Prophet and ARIMA/SARIMA for producing forecasts, Databricks additionally provides AutoML for Forecasting. AutoML simplifies the method of making forecasting fashions by routinely dealing with duties like algorithm choice, hyperparameter tuning, and distributed coaching. With AutoML, you possibly can:

- Rapidly generate baseline forecasting fashions and notebooks by way of a user-friendly UI

- Leverage a number of algorithms like Prophet and Auto-ARIMA beneath the hood

- Robotically deal with information preparation, mannequin coaching and tuning, and distributed computation with Spark

You’ll be able to simply combine fashions created utilizing AutoML with MLflow for experiment monitoring and Databricks Mannequin Serving for deployment and monitoring. The generated notebooks present code that may be custom-made and included into manufacturing workflows.

To streamline the mannequin growth workflow, you possibly can leverage MLflow, an open-source platform for the machine studying lifecycle. MLflow helps Prophet, ARIMA and different fashions out-of-the-box to allow experiment monitoring which is right for time collection experiments, permitting for the logging of parameters, metrics, and artifacts. Whereas this simplifies mannequin deployment and promotes reproducibility, utilizing MLflow is elective for Lakehouse Monitoring – you possibly can deploy fashions with out it and Lakehouse Monitoring will nonetheless be capable to observe them.

After getting a educated forecasting mannequin, you could have flexibility in the way you deploy it for inference relying in your use case. For real-time forecasting, you should utilize Databricks Mannequin Serving to deploy the mannequin as a low-latency REST endpoint with just some traces of code. When a mannequin is deployed for real-time inference, Databricks routinely logs the enter options and predictions to a managed Delta desk referred to as an inference log desk. This desk serves as the muse for monitoring the mannequin in manufacturing utilizing Lakehouse Monitoring.

Nevertheless, forecasting fashions are sometimes utilized in batch scoring situations, the place predictions are generated on a schedule (e.g. producing forecasts each evening for the subsequent day). On this case, you possibly can construct a separate pipeline that scores the mannequin on a batch of knowledge and logs the outcomes to a Delta desk. It is more cost effective to load the mannequin straight in your Databricks cluster and rating the batch of knowledge there. This strategy avoids the overhead of deploying the mannequin behind an API and paying for compute assets in a number of locations. The logged desk of batch predictions can nonetheless be monitored utilizing Lakehouse Monitoring in the identical approach as a real-time inference desk.

For those who do require each real-time and batch inference to your forecasting mannequin, you possibly can think about using Mannequin Serving for the real-time use case and loading the mannequin straight on a cluster for batch scoring. This hybrid strategy optimizes prices whereas nonetheless offering the required performance. You’ll be able to leverage Lakeview dashboards to construct interactive visualizations and share insights in your forecasting experiences. You too can arrange electronic mail subscriptions to routinely ship out dashboard snapshots on a schedule.

Whichever strategy you select, by storing the mannequin inputs and outputs within the standardized Delta desk format, it turns into easy to watch information drift, observe prediction adjustments, and measure accuracy over time. This visibility is essential for sustaining a dependable forecasting pipeline in manufacturing.

Now that we have lined construct and deploy time collection forecasting fashions on Databricks, let’s dive into the important thing features of monitoring them with Lakehouse Monitoring.

Monitor Knowledge Drift and Mannequin Efficiency

To make sure your forecasting mannequin continues to carry out properly in manufacturing, it is essential to watch each the enter information and the mannequin predictions for potential points. Databricks Lakehouse Monitoring makes this straightforward by permitting you to create screens in your enter function tables and inference log tables. Lakehouse Monitoring is constructed on prime of Unity Catalog as a unified technique to govern and monitor your information, and requires Unity Catalog to be enabled in your workspace.

Create an Inference Profile Monitor

To observe a forecasting mannequin, create an inference profile monitor on the desk containing the mannequin’s enter options, predictions, and optionally, floor reality labels. You’ll be able to create the monitor utilizing both the Databricks UI or the Python API.

Within the UI, navigate to the inference desk and click on the “High quality” tab. Click on “Get began” and choose “Inference Profile” because the monitor kind. Then configure the next key parameters:

- Drawback Kind: Choose regression for forecasting fashions

- Timestamp Column: The column containing the timestamp of every prediction. It’s the timestamp of the inference, not the timestamp of the info itself.

- Prediction Column: The column containing the mannequin’s forecasted values

- Label Column (elective): The column containing the precise values. This may be populated later as actuals arrive.

- Mannequin ID Column: The column figuring out the mannequin model that made every prediction

- Granularities: The time home windows to mixture metrics over, e.g. each day or weekly

Optionally, you too can specify:

- A baseline desk containing reference information, just like the coaching set, to check information drift in opposition to

- Slicing expressions to outline information subsets to watch, like totally different product classes

- Customized metrics to calculate, outlined by a SQL expression

- Arrange a refresh schedule

Utilizing the Python REST API, you possibly can create an equal monitor with code like:

from databricks.sdk import WorkspaceClient

from databricks.sdk.service.catalog import MonitorInferenceLog,

MonitorInferenceLogProblemType, MonitorMetric, MonitorMetricType

from pyspark.sql import sorts as T

w = WorkspaceClient()

w.quality_monitors.create(

table_name=f"{catalog}.{schema}.{table_name}",

inference_log=MonitorInferenceLog(

timestamp_col="ts",

model_id_col="model_version",

prediction_col="prediction",

label_col="precise",

problem_type=MonitorInferenceLogProblemType.PROBLEM_TYPE_REGRESSION,

granularities=["1 day", "1 week"]

),

output_schema_name=f"{catalog}.{schema}",

assets_dir= f"/Workspace/Customers/{user_email}/databricks_lakehouse

_monitoring/{catalog}.{schema}.{table_name}",

baseline_table="my_training_table",

slicing_exprs=["product_category"],

custom_metrics=[

MonitorMetric(

type=MonitorMetricType.CUSTOM_METRIC_TYPE_AGGREGATE,

name="pct_within_tolerance",

input_columns=[":table"],

definition="100.0 * sum(case when abs(prediction - precise) /

precise <= 0.1 then 1 else 0 finish) / depend(*)"

output_data_type=T.StructField("output", T.DoubleType()).json(),

),

MonitorMetric(

kind=MonitorMetricType.CUSTOM_METRIC_TYPE_AGGREGATE,

identify="weighted_mae",

input_columns=[":table"],

definition="sum(abs(prediction - precise) * product_value) /

sum(product_value)"

output_data_type=T.StructField("output", T.DoubleType()).json(),

),

MonitorMetric(

kind=MonitorMetricType.CUSTOM_METRIC_TYPE_AGGREGATE,

identify="qoq_pct_change",

input_columns=[":table"],

definition="""

(sum(case when extract(quarter from ts) = extract(quarter

from current_timestamp) and extract(12 months from ts) = extract(

12 months from current_timestamp) then precise else 0 finish) /

nullif(sum(case when extract(quarter from ts) = extract(

quarter from current_timestamp) - 1 and extract(12 months from

ts) = extract(12 months from current_timestamp) then precise else

0 finish), 0) - 1) * 100

"""

output_data_type=T.StructField("output", T.DoubleType()).json(),

),

]

)Baseline desk is an elective desk containing a reference dataset, like your mannequin’s coaching information, to check the manufacturing information in opposition to. Once more, for forecasting fashions, they’re often retrained, so the baseline comparability is commonly not vital because the mannequin will often change. It’s possible you’ll do the comparability to a earlier time window and if baseline comparability is desired, solely replace the baseline when there’s a greater replace, like hyperparameter tuning or an replace to the actuals.

Monitoring in forecasting is beneficial even in situations the place the retraining cadence is pre-set, akin to weekly or month-to-month. In these circumstances, you possibly can nonetheless have interaction in exception-based forecast administration when forecast metrics deviate from actuals or when actuals fall out of line with forecasts. This lets you decide if the underlying time collection must be re-diagnosed (the formal forecasting language for retraining, the place developments, seasonality, and cyclicity are individually recognized if utilizing econometric fashions) or if particular person deviations could be remoted as anomalies or outliers. Within the latter case, you would not re-diagnose, however mark the deviation as an outlier and doubtlessly connect a calendar occasion or an exogenous variable to the mannequin sooner or later.

Lakehouse Monitoring will routinely observe statistical properties of the enter options over time and alert if vital drift is detected relative to the baseline or earlier time home windows. This lets you determine information high quality points that might degrade forecast accuracy. For instance:

- Monitor the distribution of key enter options like gross sales quantities. If there’s a sudden shift, it might point out information high quality points which will degrade forecast accuracy.

- Observe the variety of lacking or outlier values. A rise in lacking information for latest time durations might skew forecasts.

Along with the default metrics, you possibly can outline customized metrics utilizing SQL expressions to seize business-specific logic or complicated comparisons. Some examples related to forecasting:

- Evaluating metrics throughout seasons or years, e.g. calculating the p.c distinction in common gross sales between the present quarter and the identical quarter final 12 months

- Weighting errors in another way based mostly on the merchandise being forecasted, e.g. penalizing errors on high-value merchandise extra closely

- Monitoring the proportion of forecasts inside a suitable error tolerance

Customized metrics could be of three sorts:

- Combination metrics calculated from columns within the inference desk

- Derived metrics calculated from different mixture metrics

- Drift metrics evaluating an mixture or derived metric throughout time home windows or to a baseline

Examples of those customized metrics are proven within the Python API instance above. By incorporating customized metrics tailor-made to your particular forecasting use case, you possibly can achieve deeper, extra related insights into your mannequin’s efficiency and information high quality.

The important thing thought is to deliver your mannequin’s enter options, predictions, and floor reality labels collectively in a single inference log desk. Lakehouse Monitoring will then routinely observe information drift, prediction drift, and efficiency metrics over time and by the scale you specify.

In case your forecasting mannequin is served exterior of Databricks, you possibly can ETL the request logs right into a Delta desk after which apply monitoring on it. This lets you centralize monitoring even for exterior fashions.

It is essential to notice that while you first create a time collection or inference profile monitor, it analyzes solely information from the 30 days previous to the monitor’s creation. Attributable to this cutoff, the primary evaluation window may be partial. For instance, if the 30-day restrict falls in the midst of per week or month, the total week or month is not going to be included.

This 30-day lookback limitation solely impacts the preliminary window when the monitor is created. After that, all new information flowing into the inference desk will probably be absolutely processed in line with the required granularities.

Refresh Displays to Replace Metrics

After creating an inference profile monitor to your forecasting mannequin, that you must periodically refresh it to replace the metrics with the most recent information. Refreshing a monitor recalculates the profile and drift metrics tables based mostly on the present contents of the inference log desk. You need to refresh a monitor when:

- New predictions are logged from the mannequin

- Precise values are added for earlier predictions

- The inference desk schema adjustments, akin to including a brand new function column

- You modify the monitor settings, like including further customized metrics

There are two methods to refresh a monitor: on a schedule or manually.

To arrange a refresh schedule, specify the schedule parameter when creating the monitor utilizing the UI, or with the Python API:

from databricks.sdk import WorkspaceClient

from databricks.sdk.service.catalog import MonitorInferenceLog,

MonitorInferenceLogProblemType, MonitorCronSchedule

w = WorkspaceClient()

w.quality_monitors.create(

table_name=f"{catalog}.{schema}.{table_name}",

inference_log=MonitorInferenceLog(...),

schedule=MonitorCronSchedule(quartz_cron_expression="0 0 * * *")

# each day at midnight

skip_builtin_dashboard=False # set to True if dashboard is

already created

output_schema_name=f"{catalog}.{schema}",

assets_dir= f"/Workspace/Customers/{user_email}/databricks_lakehouse

_monitoring/{catalog}.{schema}.{table_name}",

)The `CronSchedule` helps you to present a cron expression to outline the refresh frequency, akin to each day, hourly, and many others. You’ll be able to set `skip_builtin_dashboard` to True, which is able to skip producing a brand new dashboard for the monitor. That is particularly helpful when you could have already constructed a dashboard or have customized charts within the dashboard you need to preserve and do not want a brand new one.

Alternatively, you possibly can manually refresh a monitor utilizing the UI or Python API. Within the Databricks UI, go to the “High quality” tab on the inference desk, choose the monitor, and click on “Refresh metrics”.

Utilizing Python API, you possibly can create a pipeline that refreshes a monitor in order that it is action-driven, for instance, after retraining a mannequin. To refresh a monitor in a pocket book, use the run_refresh operate:

from databricks.sdk import WorkspaceClient

w = WorkspaceClient()

w.quality_monitors.run_refresh(

table_name=f"{catalog}.{schema}.{table_name}"

)This submits a serverless job to replace the monitor metrics tables. You’ll be able to proceed to make use of the pocket book whereas the refresh runs within the background.

After a refresh completes, you possibly can question the up to date profile and drift metrics tables utilizing SQL. Nevertheless, be aware that the generated dashboard is up to date individually, which you are able to do so by clicking on the “Refresh” button within the DBSQL dashboard itself. Equally, while you click on Refresh on the dashboard, it does not set off monitor calculations. As a substitute, it runs the queries over the metric tables that the dashboard makes use of to generate visualizations. To replace the info within the tables used to create the visualizations that seem on the dashboard, you need to refresh the monitor after which refresh the dashboard.

Understanding the Monitoring Output

If you create an inference profile monitor to your forecasting mannequin, Lakehouse Monitoring generates a number of key property that will help you observe information drift, mannequin efficiency, and general well being of your pipeline.

Profile and Drift Metrics Tables

Lakehouse Monitoring creates two main metrics tables:

- Profile metrics desk: Incorporates abstract statistics for every function column and the predictions, grouped by time window and slice. For forecasting fashions, this contains metrics like:

- depend, imply, stddev, min, max for numeric columns

- depend, variety of nulls, variety of distinct values for categorical columns

- depend, imply, stddev, min, max for the prediction column

- Drift metrics desk: Tracks adjustments in information and prediction distributions over time in comparison with a baseline. Key drift metrics for forecasting embody:

- Wasserstein distance for numeric columns, measuring the distinction in distribution form, and for the prediction column, detecting shifts within the forecast distribution

- Jensen-Shannon divergence for categorical columns, quantifying the distinction between chance distributions

You’ll be able to question them straight utilizing SQL to research particular questions, akin to:

- What’s the common prediction and the way has it modified week-over-week?

- Is there a distinction in mannequin accuracy between product classes?

- What number of lacking values have been there in a key enter function yesterday vs the coaching information?

Mannequin Efficiency Dashboard

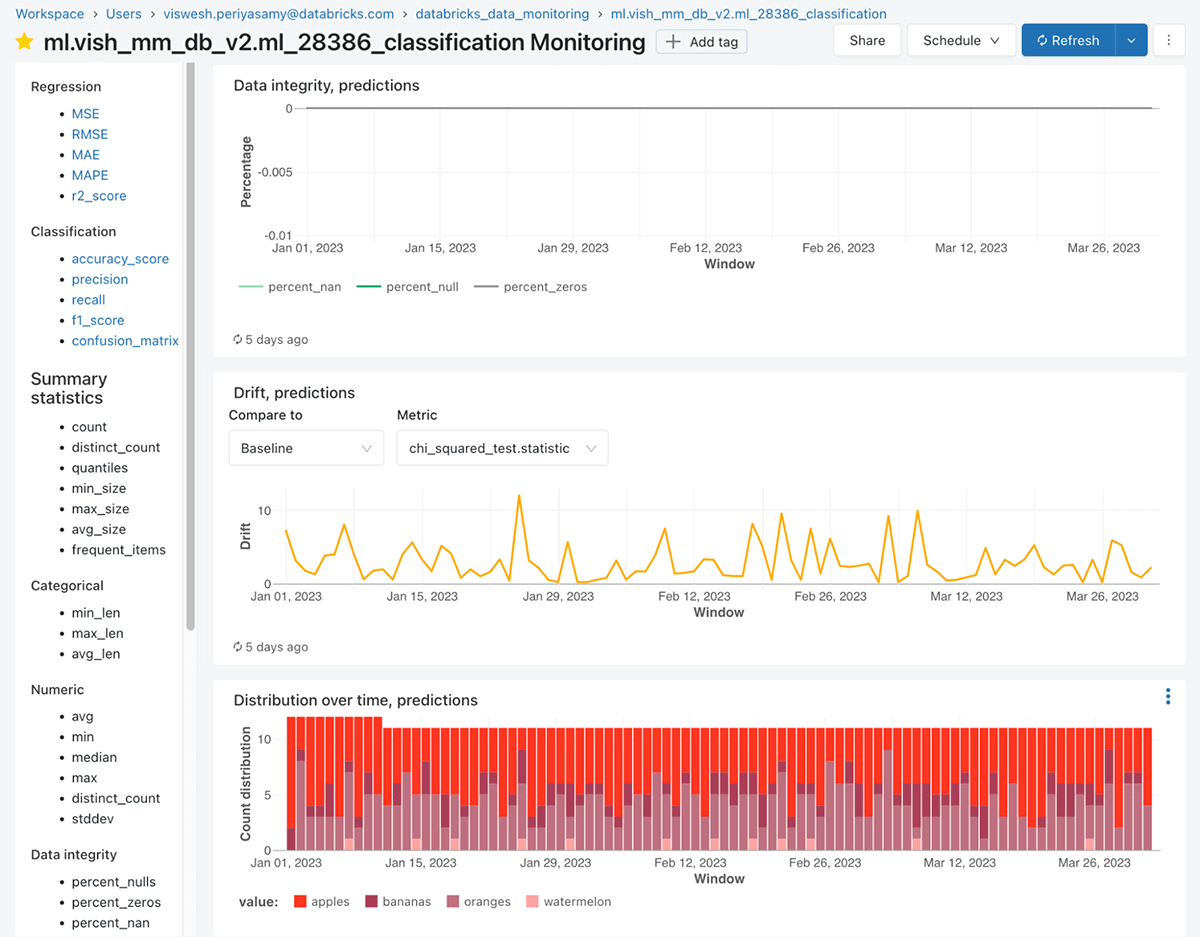

Along with the metrics tables, Lakehouse Monitoring routinely generates an interactive dashboard to visualise your forecasting mannequin’s efficiency over time. The dashboard contains a number of key elements:

- Mannequin Efficiency Panel: Shows key accuracy metrics to your forecasting mannequin, akin to MAPE, RMSE, bias, and many others. These metrics are calculated by evaluating the predictions to the precise values, which could be offered on a delay (e.g. each day actuals for a each day forecast). The panel reveals the metrics over time and by essential slices like product class or area.

- Drift Metrics Panel: Visualizes the drift metrics for chosen options and the prediction column over time.

- Knowledge High quality Panel: Reveals varied metrics akin to % of lacking values, % of nas, varied statistics akin to depend, imply, min and max, and different information anomalies for each numeric options and categorical options over time. This helps you rapidly spot information high quality points that might degrade forecast accuracy.

The dashboard is extremely interactive, permitting you to filter by time vary, choose particular options and slices, and drill down into particular person metrics. The dashboard is commonly custom-made after its creation to incorporate any views or charts your group is used to . Queries which might be used on the dashboard could be custom-made and saved, and you’ll add alerts from any of the views by clicking on “view question” after which clicking on “create alert”. On the time of writing, a custom-made template for the dashboard will not be supported.

It is a priceless instrument for each information scientists to debug mannequin efficiency and enterprise stakeholders to take care of belief within the forecasts.

Leveraging Actuals for Accuracy Monitoring

To calculate mannequin efficiency metrics like MAPE, the monitoring system wants entry to the precise values for every prediction. Nevertheless, with forecasting, actuals are sometimes not obtainable till a while after the prediction is made.

One technique is to arrange a separate pipeline that appends the precise values to the inference log desk after they grow to be obtainable, then refresh the monitor to replace the metrics. For instance, should you generate each day forecasts, you possibly can have a job that runs every evening so as to add the precise values for the day before today’s predictions.

By capturing actuals and refreshing the monitor recurrently, you possibly can observe forecast accuracy over time and determine efficiency degradation early. That is essential for sustaining belief in your forecasting pipeline and making knowledgeable enterprise choices.

Furthermore, monitoring actuals and forecasts individually allows highly effective exception administration capabilities. Exception administration is a well-liked approach in demand planning the place vital deviations from anticipated outcomes are proactively recognized and resolved. By organising alerts on metrics like forecast accuracy or bias, you possibly can rapidly spot when a mannequin’s efficiency has degraded and take corrective motion, akin to adjusting mannequin parameters or investigating enter information anomalies.

Lakehouse Monitoring makes exception administration easy by routinely monitoring key metrics and offering customizable alerting. Planners can focus their consideration on probably the most impactful exceptions moderately than sifting by way of mountains of knowledge. This focused strategy improves effectivity and helps preserve excessive forecast high quality with minimal handbook intervention.

In abstract, Lakehouse Monitoring offers a complete set of instruments for monitoring your forecasting fashions in manufacturing. By leveraging the generated metrics tables and dashboard, you possibly can proactively detect information high quality points, observe mannequin efficiency, diagnose drift, and handle exceptions earlier than they affect your small business. The power to slice and cube the metrics throughout dimensions like product, area, and time lets you rapidly pinpoint the basis reason behind any points and take focused motion to take care of the well being and accuracy of your forecasts.

Set Alerts on Mannequin Metrics

After getting an inference profile monitor arrange to your forecasting mannequin, you possibly can outline alerts on key metrics to proactively determine points earlier than they affect enterprise choices. Databricks Lakehouse Monitoring integrates with Databricks SQL to let you create alerts based mostly on the generated profile and drift metrics tables.

Some frequent situations the place you’ll need to arrange alerts for a forecasting mannequin embody:

- Alert if the rolling 7-day common prediction error (MAPE) exceeds 10%. This might point out the mannequin is now not correct and may have retraining.

- Alert if the variety of lacking values in a key enter function has elevated considerably in comparison with the coaching information. Lacking information might skew predictions.

- Alert if the distribution of a function has drifted past a threshold relative to the baseline. This might sign an information high quality concern or that the mannequin must be up to date for the brand new information patterns.

- Alert if no new predictions have been logged up to now 24 hours. This might imply the inference pipeline has failed and desires consideration.

- Alert if the mannequin bias (imply error) is constantly constructive or unfavorable. This might point out the mannequin is systematically over or beneath forecasting.

There are built-in queries already generated to construct the views of the dashboard. To create an alert, navigate to the SQL question that calculates the metric you need to monitor from the profile or drift metrics desk. Then, within the Databricks SQL question editor, click on “Create Alert” and configure the alert situations, akin to triggering when the MAPE exceeds 0.1. You’ll be able to set the alert to run on a schedule, like hourly or each day, and specify obtain notifications (e.g. electronic mail, Slack, PagerDuty).

Along with alerts on the default metrics, you possibly can write customized SQL queries to calculate bespoke metrics to your particular use case. For instance, possibly you need to alert if the MAPE for high-value merchandise exceeds a special threshold than for low-value merchandise. You would be a part of the profile metrics with a product desk to section the MAPE calculation.

The secret is that each one the function and prediction information is out there in metric tables, so you possibly can flexibly compose SQL to outline customized metrics which might be significant for your small business. You’ll be able to then create alerts on prime of those customized metrics utilizing the identical course of.

By organising focused alerts in your forecasting mannequin metrics, you possibly can preserve a pulse on its efficiency with out handbook monitoring. Alerts let you reply rapidly to anomalies and preserve belief within the mannequin’s predictions. Mixed with the multi-dimensional evaluation enabled by Lakehouse Monitoring, you possibly can effectively diagnose and resolve points to maintain your forecast high quality excessive.

Monitor Lakehouse Monitoring Bills

Whereas not particular to forecasting fashions, it is essential to know observe your utilization and bills for Lakehouse Monitoring itself. When planning to make use of Lakehouse Monitoring, it is essential to know the related prices so you possibly can price range appropriately. Lakehouse Monitoring jobs run on serverless compute infrastructure, so you do not have to handle clusters your self. To estimate your Lakehouse Monitoring prices, observe these steps:

- Decide the quantity and frequency of screens you propose to create. Every monitor will run on a schedule to refresh the metrics.

- Estimate the info quantity and complexity of the SQL expressions to your screens. Bigger information sizes and extra complicated queries will eat extra DBUs.

- Lookup the DBU price for serverless workloads based mostly in your Databricks tier and cloud supplier.

- Multiply your estimated DBUs by the relevant price to get your estimated Lakehouse Monitoring price.

Your precise prices will rely in your particular monitor definitions and information, which might fluctuate over time. Databricks offers two methods to watch your Lakehouse Monitoring prices: utilizing a SQL question or the billing portal. Consult with https://docs.databricks.com/en/lakehouse-monitoring/expense.html for extra data.

Prepared to begin monitoring your forecasting fashions with Databricks Lakehouse Monitoring? Join a free trial to get began. Already a Databricks buyer? Try our documentation to arrange your first inference profile monitor in the present day.